In the realm of digital computation and software engineering, basic mathematical operations are often taken for granted. However, when we look under the hood of modern technology, the simple act of “how to multiply fractions” transforms from a middle-school arithmetic lesson into a complex challenge of algorithmic precision and data structure management. Whether it is a financial application calculating interest rates or an engineering tool modeling structural integrity, the way computers handle rational numbers—fractions—dictates the accuracy and reliability of the entire system.

This article explores the technical methodologies used to multiply fractions within software environments, the importance of precision arithmetic in programming, and how emerging AI tools are revolutionizing the way we approach these foundational calculations.

The Core Logic: Translating Mathematical Rules into Algorithmic Code

To understand how technology handles fraction multiplication, we must first look at the mathematical logic and how it is translated into code. Unlike addition or subtraction, which require a common denominator, the multiplication of fractions is computationally straightforward, but it needs careful handling of data types to prevent integer overflow or precision loss.

The Fundamental Algorithm: Numerator vs. Denominator

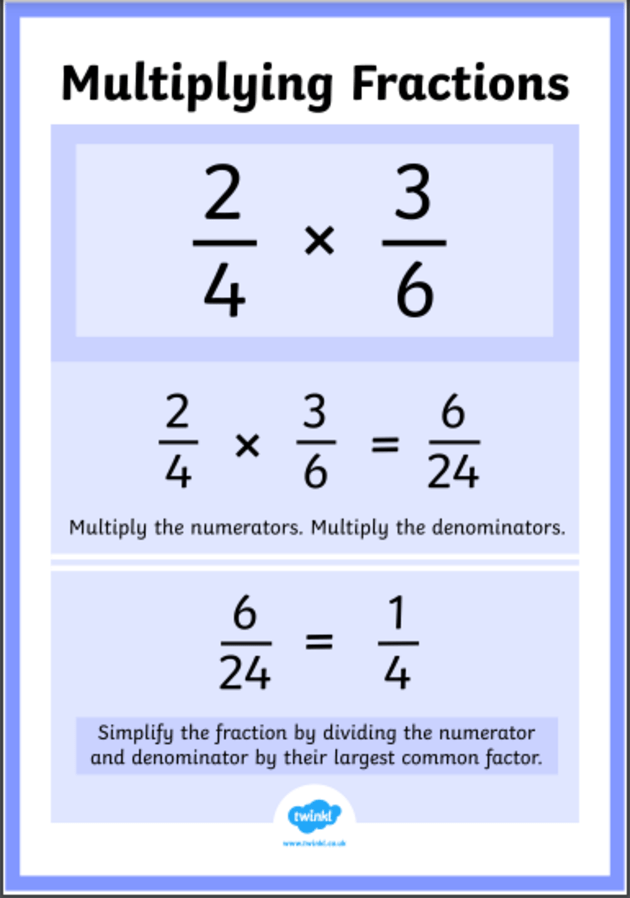

The basic rule is simple: multiply the numerators together and multiply the denominators together. In technical terms, if we represent a fraction as an object or a structure containing two integers, the multiplication function $f(a/b, c/d)$ results in $(a \times c) / (b \times d)$.

In low-level programming, this involves several steps. First, the software must ensure that the denominators ($b$ and $d$) are non-zero, because a fraction with a zero denominator is undefined and can cause a “divide-by-zero” error that crashes an application. Second, the system must handle signs consistently. Integer multiplication already turns a negative times a negative into a positive, but the product can still end up with a negative denominator, so most implementations normalize the result by moving the sign to the numerator. While this seems trivial to a human, a software developer must define these edge cases explicitly to keep the code robust.
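The steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a production implementation; the function name `multiply` and the choice to return a plain `(numerator, denominator)` tuple are assumptions made for the example, and the result is deliberately left unsimplified.

```python
def multiply(a: int, b: int, c: int, d: int) -> tuple[int, int]:
    """Multiply a/b by c/d and return (numerator, denominator), unsimplified."""
    # Step 1: reject zero denominators before doing any arithmetic.
    if b == 0 or d == 0:
        raise ZeroDivisionError("denominator must be non-zero")

    # Step 2: numerator times numerator, denominator times denominator.
    num = a * c
    den = b * d

    # Step 3: normalize the sign so the denominator is always positive.
    if den < 0:
        num, den = -num, -den
    return num, den


print(multiply(1, 2, 2, 3))    # (2, 6)
print(multiply(-1, 2, 3, -4))  # (3, 8)
```

Keeping the sign on the numerator means two equal fractions always have the same stored form once they are simplified.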

Handling Simplification: The Greatest Common Divisor (GCD) Function

A critical component of any fraction-handling software is the simplification process. Multiplying $1/2$ by $2/3$ yields $2/6$, but for the output to be useful in a professional digital environment, it must be simplified to $1/3$.

The technical solution for this is the Euclidean Algorithm, one of the oldest algorithms still in everyday use and an efficient one. It calculates the Greatest Common Divisor (GCD) of the resulting numerator and denominator. By dividing both components by the GCD, the software ensures that the fraction is stored in its “canonical form.” This keeps the stored integers as small as possible and makes equality comparisons between fractional results in a database a simple field-by-field check.
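A minimal sketch of the Euclidean Algorithm and the simplification step follows. The helper names `gcd` and `simplify` are chosen for this example; in real Python code the standard library's `math.gcd` does the same job.

```python
def gcd(a: int, b: int) -> int:
    """Greatest common divisor via the Euclidean Algorithm."""
    a, b = abs(a), abs(b)
    while b:
        # Replace (a, b) with (b, a mod b) until the remainder is zero.
        a, b = b, a % b
    return a


def simplify(num: int, den: int) -> tuple[int, int]:
    """Reduce num/den to canonical form: lowest terms, positive denominator."""
    if den == 0:
        raise ZeroDivisionError("denominator must be non-zero")
    g = gcd(num, den)
    num, den = num // g, den // g
    if den < 0:
        num, den = -num, -den
    return num, den


print(gcd(2, 6))       # 2
print(simplify(2, 6))  # (1, 3)
```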

Integrating Fraction Multiplication in Programming Languages

In the tech world, not all languages handle fractions equally. Most developers default to binary “floating-point” numbers, but floating-point math cannot represent most decimal fractions exactly. For example, $0.1 + 0.2$ in many systems does not equal exactly $0.3$, because neither $0.1$ nor $0.2$ has a finite binary representation. To solve this, many languages provide rational-number libraries that store a fraction as a pair of integers and compute with it exactly.
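The difference is easy to see in Python, where the built-in `float` type is an IEEE 754 double and the standard library's `Fraction` type is an exact rational:

```python
from fractions import Fraction

# Binary floating point: 0.1 and 0.2 are stored as nearby approximations.
float_sum = 0.1 + 0.2
print(float_sum)         # 0.30000000000000004
print(float_sum == 0.3)  # False

# Exact rationals: the same sum compares equal to 3/10.
exact_sum = Fraction(1, 10) + Fraction(2, 10)
print(exact_sum)                      # 3/10
print(exact_sum == Fraction(3, 10))   # True
```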

Python’s fractions Module

Python is widely used in AI and data science because of its readability and powerful libraries. The fractions module allows developers to create Fraction objects. When you multiply two such objects, Python keeps the numerator and denominator as arbitrary-precision integers and reduces the result to lowest terms, ensuring that no precision is lost. This is particularly vital in fintech applications where even a micro-penny of rounding error can lead to massive discrepancies over millions of transactions.
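A short usage example, using only the documented `fractions.Fraction` API. The variable names are illustrative.

```python
from fractions import Fraction

half = Fraction(1, 2)
two_thirds = Fraction(2, 3)

product = half * two_thirds
print(product)                                  # 1/3 (already simplified)
print(product.numerator, product.denominator)   # 1 3

# Fractions can also be built from strings, which avoids passing
# through an inexact float on the way in.
print(Fraction("3/4") * Fraction("1/3"))        # 1/4
print(Fraction("0.1") == Fraction(1, 10))       # True
print(Fraction(0.1) == Fraction(1, 10))         # False: 0.1 the float is not exactly 1/10
```

The last two lines are worth remembering: constructing a `Fraction` from a float preserves the float's binary approximation, so exact inputs should come from integers or strings.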

Implementing Custom Classes in C++ and Java

In systems where performance is the priority, such as high-frequency trading platforms or game engines, developers often write their own fraction classes in C++ or Java. In C++, this allows for manual memory management and the use of “operator overloading.” By overloading the * operator, a developer can make the code fractionA * fractionB work seamlessly, hiding the GCD and multiplication logic behind a clean, user-friendly interface. Java does not support user-defined operator overloading, so the equivalent there is a method call such as fractionA.multiply(fractionB), the same convention its built-in BigInteger and BigDecimal classes use. Either way, this abstraction is a hallmark of high-quality software engineering.
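To keep the examples in this article in one language, here is the same operator-overloading idea sketched in Python, where the `__mul__` method plays the role that `operator*` plays in C++. The class name `Rational` and its attribute names are assumptions for illustration, not a reference design.

```python
from math import gcd


class Rational:
    """A tiny fraction type that always stores itself in canonical form."""

    def __init__(self, num: int, den: int = 1):
        if den == 0:
            raise ZeroDivisionError("denominator must be non-zero")
        if den < 0:
            num, den = -num, -den
        g = gcd(num, den)  # den is non-zero, so g is at least 1
        self.num = num // g
        self.den = den // g

    def __mul__(self, other):
        # Called for `self * other`; the constructor handles simplification.
        if not isinstance(other, Rational):
            return NotImplemented
        return Rational(self.num * other.num, self.den * other.den)

    def __eq__(self, other):
        if not isinstance(other, Rational):
            return NotImplemented
        return (self.num, self.den) == (other.num, other.den)

    def __hash__(self):
        return hash((self.num, self.den))

    def __repr__(self):
        return f"Rational({self.num}, {self.den})"


fraction_a = Rational(1, 2)
fraction_b = Rational(2, 3)
print(fraction_a * fraction_b)  # Rational(1, 3)
```

Because every instance is reduced on construction, equality is a plain comparison of the two stored integers.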

The Role of AI and Machine Learning in Solving Fraction Problems

The “how” of multiplying fractions has evolved with the advent of Large Language Models (LLMs) and specialized AI. We are moving away from simple calculators and toward systems that understand the context of the math.

Natural Language Processing (NLP) for Word Problems

Modern AI tools like ChatGPT or WolframAlpha use NLP to interpret “word problems” involving fractions. When a user asks, “If I have three-quarters of a server’s bandwidth and I use one-third of that for backups, how much am I using?”, the AI identifies the fractional components ($3/4$ and $1/3$) and applies the multiplication logic.

The tech behind this is often described as “symbolic reasoning.” Instead of just calculating a decimal, a system such as WolframAlpha keeps the fraction in exact symbolic form throughout the process, and a language model can be asked to work the same way or to pass the arithmetic to an exact calculation tool. This allows the system to explain the steps to the user, providing an educational tutorial alongside the answer: a blend of software utility and human-centric design.
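As a rough illustration of what "keeping the fraction symbolic and explaining the steps" means, the sketch below produces a step-by-step explanation for the bandwidth question above. The function `explain_product` is a hypothetical helper written for this article; it does not describe how any particular AI product is implemented.

```python
from fractions import Fraction
from math import gcd


def explain_product(a: Fraction, b: Fraction) -> list[str]:
    """Return human-readable steps for multiplying two fractions exactly."""
    num = a.numerator * b.numerator
    den = a.denominator * b.denominator
    g = gcd(num, den)
    return [
        f"Multiply numerators: {a.numerator} x {b.numerator} = {num}",
        f"Multiply denominators: {a.denominator} x {b.denominator} = {den}",
        f"Divide both by their GCD ({g}): {num // g}/{den // g}",
    ]


for step in explain_product(Fraction(3, 4), Fraction(1, 3)):
    print(step)
# Multiply numerators: 3 x 1 = 3
# Multiply denominators: 4 x 3 = 12
# Divide both by their GCD (3): 1/4
```

So one-third of three-quarters of the bandwidth is exactly one quarter of the total.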

Neural Networks and Symbolic Regression

Beyond simple multiplication, advanced AI uses symbolic regression to find fractional relationships in massive datasets. In fields like physics-informed machine learning, AI models look for rational numbers that describe natural laws. Here, the ability to multiply and manipulate fractions within a neural network’s architecture allows the machine to discover formulas that are interpretable by humans, rather than just “black box” numbers.
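Full symbolic regression is well beyond a short example, but one small ingredient, turning a noisy decimal back into a simple rational number, can be shown with the standard library. The sketch below uses `Fraction.limit_denominator`, which returns the closest fraction whose denominator does not exceed a given bound; the "measured" values are made up for illustration.

```python
from fractions import Fraction

# Pretend these decimals came out of a fitted model or a measurement.
measured_a = 0.3333333
measured_b = 0.75

# Recover the nearest fractions with denominators of at most 100.
ratio_a = Fraction(measured_a).limit_denominator(100)
ratio_b = Fraction(measured_b).limit_denominator(100)
print(ratio_a, ratio_b)   # 1/3 3/4

# Once in rational form, further manipulation is exact.
print(ratio_a * ratio_b)  # 1/4
```

An exact `1/3` is far easier for a human to interpret as part of a formula than `0.3333333`.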

Specialized Software Tools and Digital Calculators

While most users interact with a standard calculator app, the tech industry utilizes highly specialized “Computer Algebra Systems” (CAS) for complex fractional math.

Beyond the Standard Calculator: Computer Algebra Systems

Tools like Mathematica, Maple, and the open-source SageMath are built to handle “arbitrary-precision” arithmetic. When multiplying fractions that might have numerators with hundreds of digits, these systems use integer types that grow as needed, so they don’t suffer from the “integer overflow” issues found in fixed-width 32-bit or 64-bit arithmetic. The same kind of big-integer arithmetic underpins cryptography, where keys are built from numbers hundreds of digits long.

Educational Apps and User Experience (UX) Design

From a product perspective, “how to multiply fractions” is a major niche in the EdTech (Education Technology) sector. Apps like Photomath use Computer Vision (CV) to scan handwritten fractions. Once the image is captured, the app must segment the numerator from the denominator, recognize the multiplication symbol, and then run the algorithmic logic we’ve discussed. The UX challenge is presenting these complex technical steps in a way that is intuitive, fast, and visually engaging for students.
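The last stage of that pipeline, going from recognized text to an exact answer, might look like the sketch below. It assumes the vision stage has already produced a plain string such as `"3/4 × 1/3"`; the pattern, the function name `evaluate_recognized`, and the accepted multiplication symbols are all assumptions for illustration and say nothing about how any specific app works.

```python
import re
from fractions import Fraction

# Two simple fractions separated by a multiplication sign (x, ×, or *).
PATTERN = re.compile(
    r"^\s*(-?\d+)\s*/\s*(\d+)\s*[x×*]\s*(-?\d+)\s*/\s*(\d+)\s*$"
)


def evaluate_recognized(text: str) -> Fraction:
    """Parse 'a/b × c/d' from recognized text and return the exact product."""
    match = PATTERN.match(text)
    if match is None:
        raise ValueError(f"could not parse expression: {text!r}")
    a, b, c, d = (int(group) for group in match.groups())
    # Fraction raises ZeroDivisionError itself if b or d is zero.
    return Fraction(a, b) * Fraction(c, d)


print(evaluate_recognized("3/4 × 1/3"))  # 1/4
print(evaluate_recognized("1/2 * 2/3"))  # 1/3
```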

Future Trends: Quantized Computing and Precision Arithmetic

As we look toward the future of technology, the way we handle mathematical operations continues to change. One of the most exciting areas is quantization, sometimes loosely called “quantized computing,” in AI.

Improving AI Efficiency through Fractions

Training and running massive AI models requires enormous amounts of power. Researchers are now looking at “quantization,” which involves using lower-precision numbers (such as 8-bit integers combined with a shared scale factor) instead of 32-bit floating-point values to represent the “weights” in a neural network. Each weight is then effectively a small integer multiple of a fixed fraction. By multiplying these values efficiently at a hardware level (on GPUs and TPUs), engineers can create AI that is faster and more energy-efficient without sacrificing too much accuracy.
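A minimal sketch of one common scheme, symmetric linear quantization, is shown below in plain Python. Real frameworks do this on tensors with hardware support; the function names and sample values here are assumptions chosen to keep the arithmetic easy to follow.

```python
def quantize(values: list[float], num_bits: int = 8) -> tuple[list[int], float]:
    """Map floats to signed integers plus one shared scale factor."""
    qmax = 2 ** (num_bits - 1) - 1          # 127 for 8 bits
    max_abs = max(abs(v) for v in values)
    scale = max_abs / qmax if max_abs else 1.0
    return [round(v / scale) for v in values], scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the integers and the scale."""
    return [x * scale for x in q]


def quantized_dot(qa: list[int], sa: float, qb: list[int], sb: float) -> float:
    """Dot product done in integers, with the two scales applied once at the end."""
    acc = sum(x * y for x, y in zip(qa, qb))  # integer-only accumulation
    return acc * sa * sb


weights = [0.4, -1.0, 0.2]
inputs = [1.0, 0.6, -0.3]

q_w, s_w = quantize(weights)
q_x, s_x = quantize(inputs)
print(q_w)  # [51, -127, 25]

exact = sum(w * x for w, x in zip(weights, inputs))
approx = quantized_dot(q_w, s_w, q_x, s_x)
print(exact, approx)  # close, but not identical
```

The inner loop touches only small integers, which is the part that specialized hardware accelerates; the price is a small, bounded rounding error per weight.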

The Rise of Rational Arithmetic Hardware

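There is ongoing research into hardware that natively understands rational numbers (fractions) rather than converting everything to floating-point values. Such “Rational Arithmetic Units” could theoretically eliminate rounding errors at the hardware level for addition, subtraction, multiplication, and division, since the rationals are closed under those operations. The catch is that numerators and denominators can grow with every operation, so a fixed-width design must still decide what to do when they no longer fit. If that problem is solved, it would be a revolutionary shift in computer architecture, making the question of “how to multiply fractions” a core component of the next generation of silicon chips.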

In conclusion, multiplying fractions is far more than a simple arithmetic operation; it is a fundamental challenge that spans the entire tech stack. From the basic GCD algorithms used in simple scripts to the complex symbolic reasoning of AI and the future of hardware design, the precision of fractional multiplication remains a cornerstone of digital reliability. As we continue to build more complex systems, our ability to handle these rational numbers with software-driven accuracy will define the boundaries of what technology can achieve.
