In the realm of physics, heat is often defined simply as the transfer of energy from one body to another due to a temperature difference. However, in the fast-paced world of technology, heat is much more than a scientific definition; it is the single greatest hurdle to the next generation of computing, the silent killer of hardware longevity, and the primary byproduct of the digital age. To understand what type of energy heat is within a tech context, we must look beyond the molecular vibration and explore how thermal energy dictates the limits of software performance, artificial intelligence, and global infrastructure.

The Physics of Heat: Kinetic Energy in the Digital Circuit

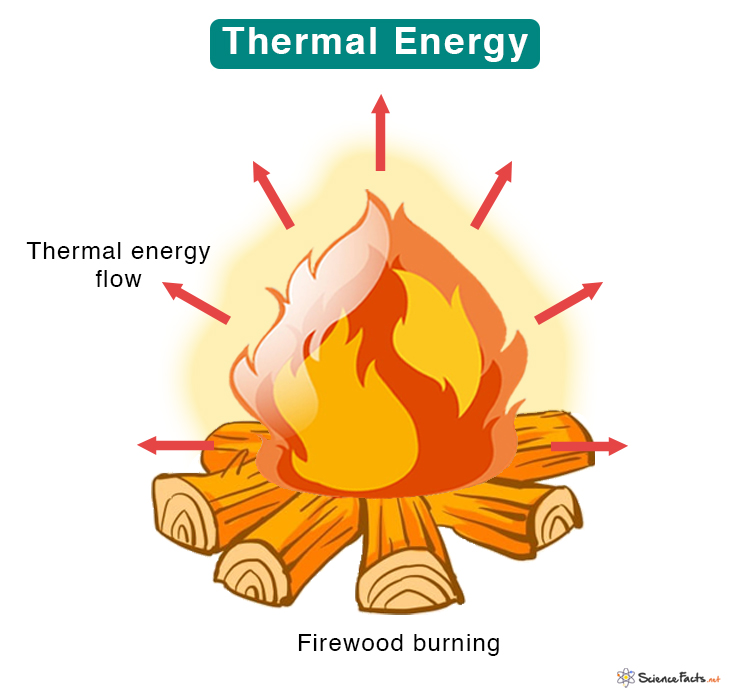

At its most fundamental level, what we call heat inside a device is thermal energy, and thermal energy is largely kinetic. Specifically, it is the internal energy of a system associated with the microscopic, random motion of its constituent atoms and molecules. In the context of a laptop, a smartphone, or a massive server rack, this kinetic energy is the direct result of electrical resistance.

Molecular Motion and Electrical Resistance

When electricity flows through the microscopic pathways of a silicon chip, electrons collide with the atoms of the conductive material. Each collision transfers a small amount of energy to the atom, causing it to vibrate more vigorously. This collective vibration is what we perceive as heat. In the tech industry, this is known as Joule heating. As we demand more processing power—pushing billions of transistors to flip on and off billions of times per second—the frequency of these collisions increases, leading to higher thermal output.
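As a rough illustration of Joule heating, the sketch below computes the power dissipated in a resistive path using P = I²R. The function names and the example current and resistance are illustrative assumptions, not measurements from any real chip.

```python
def joule_heating_watts(current_amps: float, resistance_ohms: float) -> float:
    """Heat dissipated per second in a resistive path: P = I^2 * R."""
    return current_amps ** 2 * resistance_ohms


def heat_energy_joules(current_amps: float, resistance_ohms: float,
                       seconds: float) -> float:
    """Total heat released over a time interval at constant current."""
    return joule_heating_watts(current_amps, resistance_ohms) * seconds


if __name__ == "__main__":
    # Hypothetical values: 2 A through a 0.5 ohm path.
    print(joule_heating_watts(2.0, 0.5))  # 2.0 (watts)
    # Doubling the current quadruples the heat output.
    print(joule_heating_watts(4.0, 0.5))  # 8.0 (watts)
```

The quadratic dependence on current is the point worth noticing: modest increases in current produce disproportionately more heat.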

Entropy and Landauer’s Principle

A critical concept for tech enthusiasts and engineers is Landauer’s Principle, which links information theory to thermodynamics. It states that any logically irreversible manipulation of information, such as erasing a bit of data, must increase entropy and dissipate at least k_B T ln 2 of heat per bit, where k_B is Boltzmann’s constant and T is the temperature of the surroundings. This means that heat is not just a “flaw” in our hardware; some of it is a fundamental physical cost of irreversible computation itself, although today’s chips dissipate far more than this minimum. As we move toward more complex AI models, the “heat cost” of processing data becomes a central focus for software optimization and hardware design.
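The Landauer bound, k_B T ln 2 per erased bit, can be evaluated directly. The sketch below does so in Python; the function names and the 300 K room-temperature default are assumptions chosen for illustration.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant (exact SI value)


def landauer_limit_joules(temperature_kelvin: float) -> float:
    """Minimum heat dissipated when one bit is erased at temperature T."""
    return BOLTZMANN_J_PER_K * temperature_kelvin * math.log(2)


def min_erasure_heat_joules(bits: float, temperature_kelvin: float = 300.0) -> float:
    """Lower bound on the heat released by erasing `bits` bits."""
    return bits * landauer_limit_joules(temperature_kelvin)


if __name__ == "__main__":
    print(landauer_limit_joules(300.0))   # about 2.87e-21 J per bit
    print(min_erasure_heat_joules(8e9))   # erasing one gigabyte at 300 K
```

At room temperature the bound is a few zeptojoules per bit, so it is a theoretical floor rather than a practical constraint on current hardware.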

Thermal Management as the Frontier of Hardware Innovation

As transistors shrink toward the atomic scale, managing the resulting thermal energy has become a primary bottleneck for hardware manufacturers. We can no longer simply keep raising clock speeds, as the industry did through the early 2000s, because the heat generated would exceed what the silicon can safely dissipate. This has shifted the focus of tech innovation from raw speed to thermal efficiency.

The Silicon Limit and Thermal Throttling

Modern CPUs and GPUs are equipped with sophisticated sensors that monitor temperature in real time. When a processor reaches its thermal ceiling, it engages in “thermal throttling”: it deliberately lowers its clock speed to reduce heat generation and prevent permanent physical damage. For developers and gamers, this means that sustained software performance is often dictated not by the code itself, but by the physical ability of the hardware to dissipate heat. This is why “thermal design power” (TDP) is now a primary specification in tech reviews and gadget comparisons.
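The throttling behavior described above can be sketched with a toy simulation. This is a minimal model under stated assumptions, not how any real firmware works: the chip is treated as a single lumped thermal mass, power is assumed to scale linearly with clock speed, and every parameter value (limit, thermal resistance, heat capacity, step sizes) is hypothetical.

```python
def simulate_throttling(steps=600, dt=0.1, ambient_c=25.0, limit_c=95.0,
                        max_ghz=4.0, min_ghz=1.0, watts_per_ghz=30.0,
                        r_theta=0.8, heat_capacity=20.0,
                        throttle_step_ghz=0.1, hysteresis_c=5.0):
    """Return a list of (temperature_c, frequency_ghz) samples.

    r_theta is the chip-to-ambient thermal resistance in C/W and
    heat_capacity is the lumped thermal mass in J/C (both hypothetical).
    """
    temp = ambient_c
    freq = max_ghz
    history = []
    for _ in range(steps):
        power = watts_per_ghz * freq  # assumed linear power model
        # Heat in (power) minus heat out (through the cooler), Euler step.
        temp += dt * (power - (temp - ambient_c) / r_theta) / heat_capacity
        if temp >= limit_c:
            # At the ceiling: step the clock down.
            freq = max(min_ghz, freq - throttle_step_ghz)
        elif temp < limit_c - hysteresis_c:
            # Comfortably below the ceiling: step the clock back up.
            freq = min(max_ghz, freq + throttle_step_ghz)
        history.append((temp, freq))
    return history


if __name__ == "__main__":
    for temp, freq in simulate_throttling()[::50]:
        print(f"{temp:6.1f} C  {freq:.1f} GHz")
```

With these numbers the cooler cannot sustain the maximum clock, so the simulated chip runs at full speed only until it reaches the limit and then oscillates around a lower frequency. That is the same pattern a user sees when a benchmark score drops after the first minute.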

Active vs. Passive Cooling Architectures

The tech industry has responded with increasingly creative ways to manage thermal energy.

- Passive Cooling: Utilizes heat sinks (conductive metal structures) and natural convection to move heat away from components. This is common in tablets and thin laptops where silence and portability are prioritized.

- Active Cooling: Employs fans or mechanical movement to force airflow over heat sinks.

- Vapor Chambers: A high-tech solution found in flagship smartphones where a small amount of liquid evaporates and condenses to move heat away from the processor more efficiently than solid metal.
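One common way to compare cooling options is by thermal resistance: the steady-state chip temperature is roughly the ambient temperature plus power multiplied by the cooler's resistance in °C per watt. The sketch below applies that relation; the resistance values assigned to each option are hypothetical placeholders, not figures for real products.

```python
def junction_temp_c(power_watts: float, ambient_c: float,
                    r_theta_c_per_w: float) -> float:
    """Steady-state chip temperature: T = T_ambient + P * R_theta."""
    return ambient_c + power_watts * r_theta_c_per_w


def max_sustained_power_watts(limit_c: float, ambient_c: float,
                              r_theta_c_per_w: float) -> float:
    """Largest power the cooler can remove without exceeding limit_c."""
    return (limit_c - ambient_c) / r_theta_c_per_w


# Hypothetical thermal resistances in C/W, for illustration only.
COOLING_OPTIONS = {
    "passive heat sink": 4.0,
    "fan plus heat sink": 1.0,
    "vapor chamber plus fan": 0.5,
}

if __name__ == "__main__":
    for name, r_theta in COOLING_OPTIONS.items():
        budget = max_sustained_power_watts(95.0, 25.0, r_theta)
        print(f"{name}: about {budget:.1f} W sustained")
```

A lower thermal resistance translates directly into a larger sustained power budget, which is why a thin fanless tablet and a desktop tower can use similar silicon at very different performance levels.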

Data Centers: Managing Thermal Energy on a Global Scale

While personal gadgets deal with heat on a small scale, the global tech infrastructure (the “Cloud”) faces a thermal challenge of staggering proportions. Data centers are essentially massive converters that turn electricity into heat while processing data as a byproduct. Managing this energy is now a multi-billion dollar sector of infrastructure management.

Liquid Cooling and Immersion Techniques

Traditional air conditioning is becoming insufficient for the high-density server racks required for modern AI training. This has led to the rise of liquid cooling, where chilled water or specialized coolants are piped directly to the heat sources. Even more radical is immersion cooling, in which entire server motherboards are submerged in a dielectric (electrically non-conductive) fluid. This fluid absorbs heat much more effectively than air, allowing for tighter component packing and higher performance with far less risk of overheating.
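The advantage of liquid over air comes down to how much heat a given volume of coolant can carry, which follows from Q = ρ · V · c_p · ΔT. The sketch below estimates the flow needed to remove a given heat load. It uses approximate textbook properties for air and water near room temperature; the 10 kW rack and 10 °C coolant temperature rise are assumptions, and dielectric immersion fluids have different properties from water.

```python
# Approximate properties near room temperature.
AIR_DENSITY = 1.2        # kg/m^3
AIR_CP = 1005.0          # J/(kg*K)
WATER_DENSITY = 997.0    # kg/m^3
WATER_CP = 4180.0        # J/(kg*K)


def required_flow_m3_per_s(heat_watts: float, density: float,
                           specific_heat: float, delta_t: float) -> float:
    """Volumetric coolant flow needed to carry away heat_watts
    when the coolant warms by delta_t degrees on its way through."""
    return heat_watts / (density * specific_heat * delta_t)


if __name__ == "__main__":
    rack_watts = 10_000.0   # hypothetical rack load
    delta_t = 10.0          # assumed coolant temperature rise in K
    air = required_flow_m3_per_s(rack_watts, AIR_DENSITY, AIR_CP, delta_t)
    water = required_flow_m3_per_s(rack_watts, WATER_DENSITY, WATER_CP, delta_t)
    print(f"air:   {air:.3f} m^3/s")
    print(f"water: {water * 1000:.2f} L/s")
    print(f"air needs about {air / water:.0f}x the volume")
```

Under these assumptions air needs a few thousand times the volumetric flow of water for the same heat load, which is why dense racks outgrow fans and air handlers.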

Waste Heat Recovery and Sustainability

In a shift toward “Green Tech,” many companies are no longer viewing the heat generated by data centers as waste, but as a resource. Innovative projects in Northern Europe and North America are now “harvesting” the thermal energy from data centers and piping it into local district heating systems to warm homes and greenhouses. By repurposing the kinetic energy of vibrating atoms in a processor to heat water for a city, the tech industry is closing the loop on energy efficiency and redefining the lifecycle of thermal energy.

The Role of Heat in Emerging Tech: AI and Quantum Computing

As we look toward the future of technology, heat remains the ultimate antagonist. Two of the most significant trends in the tech world—Artificial Intelligence and Quantum Computing—are defined by their relationship with thermal energy.

AI Hardware Demands and the Thermal Wall

The explosion of Large Language Models (LLMs) like GPT-4 has created a desperate need for specialized chips (like NVIDIA’s H100 GPUs). These chips consume massive amounts of power and generate intense heat because of the sheer volume of parallel calculations they perform. The “Thermal Wall” is a very real threat to the scaling of AI; if we cannot find more efficient ways to manage the heat generated by neural network training, the cost of AI development will become environmentally and financially unsustainable. This is driving a new trend in “AI-optimized” hardware that prioritizes low-voltage operations to minimize heat at the source.
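Low-voltage operation matters because the switching power of CMOS logic is commonly approximated as P = α · C · V² · f, so power falls with the square of the supply voltage. The sketch below applies that approximation. It ignores leakage power, and the activity factor, capacitance, voltage, and frequency values are hypothetical.

```python
def dynamic_power_watts(activity_factor: float, capacitance_farads: float,
                        voltage: float, frequency_hz: float) -> float:
    """Approximate CMOS switching power: P = alpha * C * V^2 * f."""
    return activity_factor * capacitance_farads * voltage ** 2 * frequency_hz


if __name__ == "__main__":
    # Hypothetical chip: alpha = 0.2, 100 nF effective capacitance, 2 GHz.
    nominal = dynamic_power_watts(0.2, 1e-7, 1.0, 2e9)
    low_voltage = dynamic_power_watts(0.2, 1e-7, 0.8, 2e9)
    print(f"1.0 V: {nominal:.1f} W")
    print(f"0.8 V: {low_voltage:.1f} W")
    print(f"ratio: {low_voltage / nominal:.2f}")
```

In this model a 20% voltage reduction at the same clock cuts switching power by about 36%, a much larger saving than the same percentage cut in frequency would give.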

Superconductivity and the Quest for Absolute Zero

On the opposite end of the spectrum is Quantum Computing. Whereas classical computers struggle to shed the heat they generate, quantum bits (qubits) are incredibly sensitive to heat from any source. Even a tiny amount of thermal energy can cause “decoherence,” essentially breaking the quantum state and causing errors in calculation. To function, many quantum computers, notably those built on superconducting qubits, must be kept at temperatures a fraction of a degree above absolute zero, colder than outer space. This requires cryogenic dilution refrigerators. Here, the tech trend is not about dissipating heat, but about excluding it almost entirely. The mastery of thermal energy, or the lack thereof, will be a deciding factor in which quantum architecture eventually wins the race.
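One way to see why qubits need such extreme cold is to compare the thermal energy k_B T with the energy of a single quantum of excitation, h·f. The sketch below uses the Bose-Einstein formula to estimate the average number of thermal excitations at a given frequency and temperature. The 5 GHz frequency and 15 mK operating temperature are assumed, illustrative values, not the specification of any particular machine.

```python
import math

BOLTZMANN_J_PER_K = 1.380649e-23  # exact SI value
PLANCK_J_S = 6.62607015e-34       # exact SI value


def thermal_occupation(frequency_hz: float, temperature_kelvin: float) -> float:
    """Average number of thermal excitations in a mode of the given
    frequency: n = 1 / (exp(h*f / (k_B*T)) - 1)."""
    ratio = PLANCK_J_S * frequency_hz / (BOLTZMANN_J_PER_K * temperature_kelvin)
    return 1.0 / math.expm1(ratio)


if __name__ == "__main__":
    qubit_hz = 5e9  # assumed qubit frequency
    print(thermal_occupation(qubit_hz, 300.0))   # room temperature
    print(thermal_occupation(qubit_hz, 0.015))   # assumed fridge temperature
```

At room temperature the mode holds over a thousand thermal excitations on average, which would swamp a single-quantum state. At 15 mK the figure drops to roughly one in ten million.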

Conclusion: Heat as the Metric of Efficiency

In the technology niche, heat is the most honest metric we have. It is the physical manifestation of inefficiency, the boundary of what our hardware can achieve, and a constant reminder of the laws of thermodynamics. Whether it is a developer optimizing code to reduce CPU cycles, a hardware engineer designing a new vapor chamber, or a data center architect implementing liquid immersion, the tech world is in a constant battle with thermal energy.

Understanding that heat is kinetic energy allows us to appreciate the sheer complexity of the devices we use every day. As we push toward more powerful AI, more portable gadgets, and more sustainable infrastructure, our ability to control, dissipate, and repurpose heat will be the defining characteristic of 21st-century innovation. Heat isn’t just a byproduct; it is the physical frontier of the digital age.
