In the rapidly evolving landscape of human-computer interaction (HCI), the industry has long been dominated by two primary senses: sight and sound. We have perfected high-definition displays and spatial audio, yet the most fundamental human connection to the physical world—the sense of touch—remains the “final frontier” of the digital experience. In biological terms, this complex network of sensory systems is known as somatosensation.

Somatosensation is the multifaceted sensory system that allows us to perceive touch, pressure, temperature, pain, and the position of our limbs in space. For the technology sector, replicating somatosensation is no longer a niche pursuit for laboratory scientists; it is the cornerstone of the next generation of augmented reality (AR), virtual reality (VR), advanced prosthetics, and remote robotics. By understanding and digitizing somatosensation, we are moving beyond “using” devices to truly “inhabiting” digital environments.

1. The Biological Blueprint: Understanding Somatosensation for Engineering

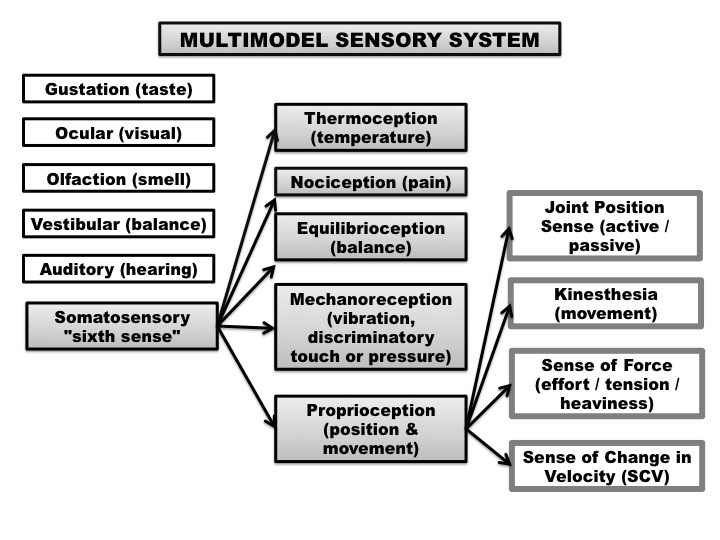

To replicate touch in a digital environment, engineers must first understand the high-resolution biological hardware they are trying to mimic. Somatosensation is not a single sense but a suite of distinct sensory modalities governed by specialized receptors in the skin and muscles.

Mechanoreception and the Precision of Haptics

The most recognizable aspect of somatosensation is mechanoreception—the ability to detect mechanical stimuli such as pressure, vibration, and texture. In the tech world, this is the foundation of haptic feedback. Human skin is embedded with various receptors, like Meissner’s corpuscles for light touch and Pacinian corpuscles for high-frequency vibration.

Tech developers are currently focused on “high-fidelity haptics,” moving away from the simple “buzz” of an old smartphone to localized actuators that can simulate the specific friction of cloth or the distinct click of a virtual button. This requires a sophisticated marriage of materials science and software algorithms to trick the brain into perceiving a digital surface as having physical properties.
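As a rough illustration of the software half of that marriage, the sketch below generates a short, exponentially decaying sine burst, a common way to approximate the crisp transient of a button click on a vibration actuator. The function name `click_waveform` and every parameter value (frequency, decay rate, duration, sample rate) are assumptions chosen for illustration, not figures from any particular device.

```python
import math

def click_waveform(freq_hz=170.0, decay_per_s=60.0, duration_s=0.03, sample_rate=8000):
    """Return samples in [-1, 1] of an exponentially decaying sine burst."""
    n = round(duration_s * sample_rate)
    samples = []
    for i in range(n):
        t = i / sample_rate
        envelope = math.exp(-decay_per_s * t)  # amplitude fades over time
        samples.append(envelope * math.sin(2 * math.pi * freq_hz * t))
    return samples

samples = click_waveform()
print(len(samples), "samples, peak amplitude", round(max(abs(s) for s in samples), 3))
```

A faster decay tends to read as a sharper, more "mechanical" click, while a slower decay feels closer to the lingering buzz of older vibration motors.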

Proprioception: The “Internal GPS” of Hardware

Proprioception is the sense that tells your brain where your limbs are without you having to look at them. In tech, this is critical for the development of “natural” interfaces. When a user wears a VR headset, the system must keep the digital avatar’s movements aligned with what the user’s body reports through proprioception. Any “drift” or lag between what the body feels and what the eyes see can cause motion sickness and breaks immersion. This is why low-latency tracking and inertial measurement units (IMUs), which measure acceleration and rotation, are essential building blocks for any interface that has to agree with the body’s own sense of position.
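One widely used way to limit drift in IMU-based tracking is a complementary filter, which trusts the gyroscope over short intervals and the accelerometer's gravity reading over long ones. The single-axis sketch below is a simplified assumption of how such a filter could look; the function name, the `alpha` weight, and the simulated sensor values are all hypothetical, and production headsets fuse more sensors with more elaborate filters.

```python
import math

def complementary_filter(angle_deg, gyro_rate_dps, accel_x, accel_z, dt, alpha=0.98):
    """Blend an integrated gyro angle with an accelerometer tilt estimate."""
    gyro_angle = angle_deg + gyro_rate_dps * dt                # responsive, but drifts
    accel_angle = math.degrees(math.atan2(accel_x, accel_z))   # noisy, but drift-free
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle

# Simulated stationary sensor tilted 10 degrees, with a gyro bias of 1 deg/s.
true_angle = 10.0
ax = math.sin(math.radians(true_angle))
az = math.cos(math.radians(true_angle))

fused = 0.0
gyro_only = true_angle
for _ in range(2000):  # 20 seconds at 100 Hz
    fused = complementary_filter(fused, 1.0, ax, az, dt=0.01)
    gyro_only += 1.0 * 0.01  # integrating the biased gyro alone

print("fused estimate:", round(fused, 2), "gyro-only estimate:", round(gyro_only, 2))
```

In this simulation the gyro-only estimate wanders far from the true tilt, while the fused estimate stays within about half a degree, which is the kind of stability an avatar needs to stay aligned with the body.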

Thermoreception and the Future of Sensory Realism

While often overlooked, thermoreception, the ability to sense heat and cold, is a vital part of the somatosensory experience. Emerging tech startups are now integrating Peltier elements into wearable “haptic suits.” These thermoelectric modules pump heat in a direction set by the polarity of the current, so the same element can rapidly warm or cool the skin. That lets a user in a digital simulation feel the warmth of a virtual campfire or the chill of a digital winter, adding a layer of realism that vibration alone cannot provide.
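A thermal wearable needs some control logic between the simulation's requested temperature and the element on the skin. The sketch below is a minimal proportional controller under stated assumptions: the function name `peltier_drive`, the gain, and the clamp limits are hypothetical placeholders for illustration only and are not safety guidance for a real device.

```python
def peltier_drive(target_c, measured_c, gain=0.25, safe_min_c=15.0, safe_max_c=42.0):
    """Return a drive level in [-1, 1]: positive heats, negative cools."""
    # Clamp the requested temperature to an assumed skin-safe range first.
    target = max(safe_min_c, min(target_c, safe_max_c))
    error = target - measured_c
    # Proportional response, limited to the driver's full-scale output.
    return max(-1.0, min(gain * error, 1.0))

print(peltier_drive(target_c=60.0, measured_c=30.0))  # request is clamped, drive saturates
print(peltier_drive(target_c=31.0, measured_c=30.0))  # gentle warming
```

Clamping the target before computing the error means that a simulation asking for an extreme temperature can never push the element beyond the configured range.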

2. Haptic Technology: From Buzzes to Bio-Mimicry

The tech industry’s journey into somatosensation began with simple vibration motors, but the current trajectory is focused on “Surface Haptics” and “Wearable Force Feedback.” This evolution is redefining how we interact with professional tools and consumer gadgets.

The Evolution of Tactile Displays

Traditional screens are smooth, flat glass, which tells the fingertip almost nothing about what is displayed. The next generation of tactile displays uses electrovibration or ultrasonic vibration to create “friction maps” on a screen. Electrovibration modulates the electric field between the finger and the glass to increase friction through electrostatic attraction, while ultrasonic displays vibrate the glass itself to reduce friction. By varying either effect as the finger moves, these devices can make a smooth surface feel rough, sticky, or ridged. This has profound implications for digital accessibility, allowing visually impaired users to “feel” digital maps or UI elements.
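Conceptually, a friction map is a grid of friction levels that the display samples at the finger's current position. The sketch below shows one hedged way to express that lookup; the function name `friction_level`, the normalized coordinates, and the tiny example texture are all assumptions, and a real driver would convert the returned level into a device-specific drive signal.

```python
def friction_level(friction_map, x_norm, y_norm):
    """Look up the friction level (0 = slippery, 1 = grippy) under the finger.

    x_norm and y_norm are touch coordinates normalized to the range [0, 1].
    """
    rows = len(friction_map)
    cols = len(friction_map[0])
    row = min(max(int(y_norm * rows), 0), rows - 1)
    col = min(max(int(x_norm * cols), 0), cols - 1)
    return friction_map[row][col]

# Hypothetical texture: a raised ridge down the middle of the top row.
texture = [
    [0.1, 0.9, 0.1],
    [0.5, 0.5, 0.5],
]
print(friction_level(texture, x_norm=0.5, y_norm=0.0))  # finger on the ridge
```

Because the lookup runs on every touch sample, the perceived texture changes as the finger slides, which is what produces the sensation of ridges or roughness.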

Force Feedback and the Industrial Internet of Things (IIoT)

Somatosensation is a critical component of “Teleoperation”—the ability to control robots from a distance. In high-stakes fields like robotic surgery or deep-sea exploration, the operator needs to feel the resistance of the tissue or the weight of an object. Sophisticated haptic gloves now provide “force feedback,” using mechanical exoskeletons or pneumatic bladders to physically stop the user’s hand from closing when their virtual avatar grabs a solid object. This allows for a level of precision that visual feedback alone cannot provide.
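The "stop the hand" behavior is often modeled as a virtual wall: once the tracked finger penetrates a virtual surface, the device pushes back with a spring-like force proportional to penetration depth, plus damping. The one-dimensional sketch below assumes hypothetical stiffness, damping, and force-limit values, and the name `wall_force` is illustrative rather than part of any glove SDK.

```python
def wall_force(finger_pos_m, finger_vel_mps, wall_pos_m=0.0,
               stiffness=800.0, damping=2.0, max_force=5.0):
    """Return the outward force (N) for a virtual wall occupying x < wall_pos_m."""
    penetration = wall_pos_m - finger_pos_m  # positive once the finger is inside
    if penetration <= 0:
        return 0.0  # free space: no resistance
    # Spring pushes outward; damping resists inward motion (negative velocity).
    force = stiffness * penetration - damping * finger_vel_mps
    # Never pull the finger inward, and respect the actuator's force limit.
    return max(0.0, min(force, max_force))

print(wall_force(finger_pos_m=0.01, finger_vel_mps=-0.1))   # outside the wall
print(wall_force(finger_pos_m=-0.002, finger_vel_mps=0.0))  # 2 mm inside the wall
```

Clamping the result at zero keeps the wall from feeling "sticky" when the finger withdraws quickly, and the upper clamp reflects the fact that any real actuator has a maximum force it can deliver.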

The Consumer Wearable Market

Beyond gaming, somatosensory tech is finding a home in health and wellness wearables. Companies are developing devices that use “haptic pacing” (gentle rhythmic pulses on the wrist) intended to help wearers slow their heart rate or ease anxiety, as well as “tactile cues” that provide turn-by-turn navigation. This transforms the smartwatch from a notification hub into a somatosensory peripheral that communicates through the skin rather than the screen.

3. Neural Interfaces: Direct Communication with the Somatosensory Cortex

While haptic gloves and suits act on the skin, the most cutting-edge tech in this niche bypasses the skin entirely. Brain-computer interfaces (BCIs) and neural engineering aim to deliver somatosensory data directly to the brain or the peripheral nervous system.

Bidirectional Neural Prosthetics

For individuals with limb loss, one of the greatest challenges has been the lack of sensory feedback. Modern “smart” prosthetics are now being designed with somatosensation in mind. Sensors embedded in the prosthetic fingertips are linked to the user’s remaining nerves, so that contact with an object is converted into electrical stimulation that travels back to the brain. When the prosthetic hand touches an object, the user can perceive the contact as if it came from their own hand. This bidirectional communication is a landmark achievement in neuro-technology.

The Role of BCIs in Digital Immersion

Companies like Neuralink and Synchron are exploring how BCIs can facilitate a more direct form of somatosensation. By stimulating the somatosensory cortex—the area of the brain responsible for processing touch—researchers believe they can eventually simulate tactile experiences without any external physical stimuli. This would represent the ultimate “Matrix-style” immersion, where a user could feel a digital environment purely through neural data streams.

Software Integration and Sensory Mapping

The software side of neural somatosensation is equally complex. It requires sensory encoders (sometimes loosely called “neural decoders,” although decoding normally refers to reading signals out of the brain) that translate digital sensor data into patterns of electrical impulses the brain recognizes as “soft,” “sharp,” or “hot.” Building them calls for advanced AI and machine learning models that map individual neural patterns, because every human brain processes somatosensory information slightly differently.
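To make the idea of per-user sensory mapping concrete, the sketch below converts a normalized pressure reading into a stimulation pulse rate scaled to one user's calibrated range. It is deliberately simplistic: the `UserCalibration` fields, the function name, and the linear mapping are all assumptions for illustration, whereas the systems described above would learn a far richer mapping from data.

```python
from dataclasses import dataclass

@dataclass
class UserCalibration:
    min_pulse_hz: float  # lowest rate this user reliably perceives (assumed)
    max_pulse_hz: float  # highest rate this user finds comfortable (assumed)

def pressure_to_pulse_hz(pressure_norm, cal):
    """Map a pressure reading in [0, 1] to a stimulation pulse rate in Hz."""
    p = max(0.0, min(pressure_norm, 1.0))
    if p == 0.0:
        return 0.0  # no contact, no stimulation
    return cal.min_pulse_hz + p * (cal.max_pulse_hz - cal.min_pulse_hz)

user = UserCalibration(min_pulse_hz=20.0, max_pulse_hz=100.0)
print(pressure_to_pulse_hz(0.5, user))  # mid-range pressure for this user
```

Keeping the calibration in its own structure reflects the point made above: the same sensor reading may need a different stimulation pattern for each person.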

4. The Impact of Digital Somatosensation on Professional Sectors

The integration of somatosensation into the tech stack is not just about entertainment; it is a transformative force for professional industries, from medicine to remote engineering.

Remote Surgery and Tele-Mentoring

In the medical tech sector, the sense of touch can be the difference between life and death. Robotic surgery platforms are integrating somatosensory feedback with the goal of letting surgeons perform complex procedures from thousands of miles away. By feeling the tension of a suture or the density of an organ through haptic interfaces, surgeons can retain the “surgical intuition” they would otherwise have only when physically present in the operating room (OR).

Digital Twins and Maintenance

In the aerospace and manufacturing sectors, “Digital Twins” (virtual replicas of physical assets) are becoming more sophisticated. Using somatosensory-enabled AR, an engineer can “touch” a virtual engine to feel for vibrations or heat patterns that indicate mechanical failure. This tech allows for “predictive maintenance” through tactile inspection, even if the physical machine is on a different continent.

Education and Skill Acquisition

Somatosensation is essential for “muscle memory.” Tech-based training programs are using haptic feedback to train workers in high-skill trades. For example, a trainee welder can use a haptic-enabled torch that vibrates or resists movement when the angle is incorrect. Because the correction arrives through the same somatosensory channels the skill itself relies on, this kind of training can build motor skills in a way that watching a video tutorial cannot.

5. Challenges, Ethics, and the Future of Digitized Touch

As we move toward a world where somatosensation can be recorded, transmitted, and simulated, the tech industry faces significant technical and ethical hurdles.

The “Latency Gap” and Data Bandwidth

Somatosensation is highly sensitive to timing. If you touch an object and the feedback arrives even 50 milliseconds late, the brain can register the mismatch as an error, leading to “sensory dissonance.” Replicating high-fidelity touch over a network therefore requires substantial bandwidth and very low end-to-end latency, pushing the limits of 5G and of proposed 6G networks. To make somatosensory tech universal, we need an infrastructure that can handle the “Tactile Internet.”
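A quick back-of-the-envelope calculation shows why distance alone eats into that budget. Light in optical fiber travels at roughly 200,000 km/s (about two-thirds of its speed in a vacuum), so every 100 km of fiber adds about 1 ms to a round trip. The sketch below applies that figure to the 50 ms threshold mentioned above; the function names and the `processing_ms` allowance are assumptions, and real networks add routing and queuing delays on top.

```python
FIBER_KM_PER_MS = 200.0  # approximate signal speed in optical fiber

def round_trip_ms(distance_km, processing_ms=0.0):
    """Round-trip delay for a touch signal and its feedback over fiber."""
    return 2 * distance_km / FIBER_KM_PER_MS + processing_ms

def max_distance_km(budget_ms, processing_ms=0.0):
    """Farthest one-way distance that still fits the latency budget."""
    return max(0.0, (budget_ms - processing_ms) * FIBER_KM_PER_MS / 2)

print(round_trip_ms(1000))                       # delay for a 1,000 km link
print(max_distance_km(50))                       # best case, zero processing time
print(max_distance_km(50, processing_ms=10))     # with 10 ms spent on sensing and rendering
```

Even under these idealized assumptions, a 50 ms budget caps the operator-to-robot distance at a few thousand kilometers, which is why tighter latency targets push computation toward the network edge.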

Bio-Privacy and Haptic Security

If a device can “write” sensations to your nervous system, it raises profound security concerns. “Haptic hacking” could theoretically involve sending painful or disorienting sensory input to a user. Furthermore, somatosensory data—how we move, our grip strength, our reaction to stimuli—is highly personal. As tech companies begin to collect this data to refine their haptic algorithms, the industry must establish strict protocols for “Bio-Privacy.”

The Roadmap to Total Immersion

The future of somatosensation in tech lies in the transition from “vibrating gadgets” to “integrated sensory environments.” We are moving toward a “Full-Body Internet,” where our physical and digital sensations are seamlessly blended. Whether it’s through ultra-thin haptic skins, neural implants, or sophisticated force-feedback exoskeletons, the digitization of somatosensation will redefine what it means to be “connected.”

By mastering somatosensation, technology is finally moving beyond the screen. We are entering an era where the digital world will no longer just be something we look at, but something we can reach out and feel. This sensory revolution will close the gap between the virtual and the physical, making our digital interactions as rich, nuanced, and tangible as the world around us.
