The question of “what percent of crimes are committed by Black people” is a deeply sensitive one, often intertwined with complex socio-economic factors and historical contexts. When approached through a technological lens, however, the inquiry shifts from a direct statistical assertion to an examination of how such data is collected, processed, analyzed, and presented. In the digital age, the tools and methods of data science and algorithmic processing play a pivotal role in shaping our understanding of crime and its demographics, and often our misconceptions about them. This article examines the technological infrastructure and processes that underpin crime statistics, showing how data analysis techniques, the potential for algorithmic bias, and the spread of information on digital platforms all shape the conversation.

The Digital Backbone of Crime Data Collection and Analysis

The generation of any statistic, particularly one as consequential as crime demographics, relies heavily on technological systems. From the initial reporting of an incident to the aggregation and analysis of vast datasets, technology forms the invisible framework that supports these figures. Understanding this framework is crucial for critically evaluating the numbers presented.

Data Acquisition and Standardization: The Foundation of Statistical Integrity

The journey of crime data begins with its acquisition. Law enforcement agencies at the local, state, and federal levels use a variety of digital systems to record incidents. These range from Computer-Aided Dispatch (CAD) systems, which log calls for service and initial incident details, to more comprehensive Records Management Systems (RMS), which store incident reports, arrest records, and related case information. The accuracy and completeness of the data entered into these systems are paramount. Human input errors, inconsistent reporting protocols across jurisdictions, and the technical limitations of the software itself can introduce noise and inaccuracies at the very start of the data lifecycle.
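One way such input errors are caught is with automated validation when records are entered or exported. The sketch below is a minimal illustration, assuming a hypothetical, heavily simplified `IncidentRecord` with made-up field names; real RMS schemas vary by vendor and agency and are far richer.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# Hypothetical, simplified incident record (not any real RMS schema).
@dataclass
class IncidentRecord:
    incident_id: str
    offense_code: Optional[str]
    incident_date: Optional[date]
    reporting_agency: Optional[str]

def validate(record: IncidentRecord, today: date) -> List[str]:
    """Return the problems found in one record (an empty list means none)."""
    problems = []
    if not record.offense_code:
        problems.append("missing offense code")
    if record.incident_date is None:
        problems.append("missing incident date")
    elif record.incident_date > today:
        problems.append("incident date is in the future")
    if not record.reporting_agency:
        problems.append("missing reporting agency")
    return problems

# Hypothetical records with typical data-entry mistakes.
records = [
    IncidentRecord("A-001", "ASLT-SMP", date(2023, 5, 2), "Agency 1"),
    IncidentRecord("A-002", None, date(2023, 5, 3), "Agency 1"),
    IncidentRecord("A-003", "BURG", date(2031, 1, 1), ""),
]

for r in records:
    print(r.incident_id, validate(r, today=date(2024, 1, 1)) or "ok")
```

Checks like these only catch structurally invalid entries; a record that is complete but inaccurate, or that reflects a biased decision to stop or arrest, passes validation unchanged.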

Standardizing the data is a further technological challenge. Different agencies may use different crime classification codes, offense definitions, and data fields. The FBI’s Uniform Crime Reporting (UCR) Program addresses this with common definitions, and its National Incident-Based Reporting System (NIBRS), which replaced the older Summary Reporting System as the program’s national standard in 2021, captures far more detail about each incident. However, the transition to NIBRS is technologically demanding and often faces hurdles related to software upgrades, staff training, and the sheer volume of data that must be migrated and reconciled. The effectiveness of these standardization efforts depends directly on participating agencies’ technological capacity and willingness to implement and adhere to them.
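Standardization usually comes down to a crosswalk: a table mapping each agency's local codes onto a shared scheme, with anything unmapped set aside for manual review. The sketch below assumes hypothetical local codes and agency names; the targets use NIBRS-style offense codes (13A aggravated assault, 13B simple assault, 220 burglary/breaking and entering), which should be checked against the FBI's NIBRS documentation before being relied on.

```python
# Hypothetical crosswalk from (agency, local code) to NIBRS-style offense codes.
LOCAL_TO_NIBRS = {
    ("agency_1", "ASLT-AGG"): "13A",  # aggravated assault
    ("agency_1", "ASLT-SMP"): "13B",  # simple assault
    ("agency_2", "AA"): "13A",        # same offense, different local code
    ("agency_2", "BURG"): "220",      # burglary/breaking and entering
}

def standardize(records):
    """Split (agency, local_code) records into mapped and unmapped lists."""
    mapped, unmapped = [], []
    for agency, local_code in records:
        nibrs_code = LOCAL_TO_NIBRS.get((agency, local_code))
        if nibrs_code is None:
            unmapped.append((agency, local_code))  # needs manual review
        else:
            mapped.append((agency, local_code, nibrs_code))
    return mapped, unmapped

incoming = [("agency_1", "ASLT-AGG"), ("agency_2", "AA"), ("agency_2", "ROB-X")]
mapped, unmapped = standardize(incoming)
print(mapped)
print(unmapped)
```

The design choice worth noting is that unmapped codes are returned rather than silently dropped: records that fall out of a crosswalk quietly disappear from any downstream statistic.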

Algorithmic Processing and Statistical Modeling: Extracting Meaning from Raw Data

Once collected, crime data is subjected to various forms of algorithmic processing and statistical modeling to derive meaningful insights. This is where the power of computational analysis comes to the forefront. Sophisticated software packages are used for data cleaning, transformation, and the application of statistical techniques such as regression analysis, correlation studies, and predictive modeling. These tools allow researchers and analysts to identify trends, detect patterns, and attempt to understand the relationships between different variables, including demographic factors.
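Whether a demographic difference survives analysis often depends on what the analysis controls for. The sketch below uses stratification, the simplest form of "controlling for" a variable (regression generalizes the same idea). All counts are hypothetical, the groups are placeholders labeled A and B, and the stratum stands in for an assumed confounder such as local patrol intensity.

```python
from fractions import Fraction

# Hypothetical counts: (group, stratum) -> (arrests, population).
data = {
    ("A", "high_patrol"): (16, 800),
    ("A", "low_patrol"): (1, 200),
    ("B", "high_patrol"): (4, 200),
    ("B", "low_patrol"): (4, 800),
}

def crude_rate(group):
    """Arrests per person for a group, ignoring the stratum."""
    arrests = sum(a for (g, s), (a, p) in data.items() if g == group)
    population = sum(p for (g, s), (a, p) in data.items() if g == group)
    return Fraction(arrests, population)

def stratum_rate(group, stratum):
    """Arrests per person for a group within a single stratum."""
    arrests, population = data[(group, stratum)]
    return Fraction(arrests, population)

print("crude ratio A/B:", crude_rate("A") / crude_rate("B"))
for stratum in ("high_patrol", "low_patrol"):
    print(stratum, "ratio A/B:", stratum_rate("A", stratum) / stratum_rate("B", stratum))
```

In this toy data the crude rate for group A is more than twice that of group B, yet within each stratum the rates are identical: the entire gap comes from how the two groups are distributed across strata.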

The algorithms themselves are designed by humans, and their underlying logic can inadvertently embed biases. For instance, if the historical data used to train an algorithm reflects biased policing or arrest patterns, the algorithm may learn and perpetuate those biases. This is particularly relevant in predictive policing, where algorithms may disproportionately flag certain neighborhoods or demographic groups as higher risk even when underlying crime rates do not differ once other socio-economic factors are controlled for. The “black box” nature of some complex algorithms exacerbates the problem, making it difficult to understand why a particular output was generated.

The Technological Specter of Algorithmic Bias in Crime Statistics

The application of technology to crime statistics is not a neutral act. The very tools designed to illuminate reality can, through inherent design or flawed implementation, cast long shadows of bias, particularly when sensitive demographic information is involved. This section unpacks how technology can contribute to skewed perceptions of crime and its perpetrators.

Pre-existing Biases in Data Input: The Garbage In, Garbage Out Principle

The most significant source of algorithmic bias in crime statistics often originates before the data even enters sophisticated analytical models. This is the “garbage in, garbage out” principle, writ large. Historical policing practices, deeply influenced by systemic racism and socio-economic disparities, have led to disproportionate surveillance, stops, and arrests of individuals from certain racial and ethnic groups, particularly Black communities.
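The effect of uneven surveillance on recorded data can be shown with simple arithmetic. In the sketch below, two placeholder groups commit the same number of offenses, but one is assumed to be policed more intensively, so its offenses are twice as likely to end in a recorded arrest. All numbers are hypothetical.

```python
from fractions import Fraction

# Hypothetical: equal offending, unequal probability of being recorded.
offenses = {"A": 100, "B": 100}
detection_probability = {"A": Fraction(3, 10), "B": Fraction(15, 100)}

recorded = {g: offenses[g] * detection_probability[g] for g in offenses}
total_recorded = sum(recorded.values())
total_offenses = sum(offenses.values())

for g in offenses:
    print(g,
          "share of offenses:", Fraction(offenses[g], total_offenses),
          "share of recorded arrests:", recorded[g] / total_recorded)
```

Group A accounts for half of all offenses but two thirds of recorded arrests. Any model trained on the arrest records sees only the second figure.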

When this historically biased data is fed into algorithms, the algorithms learn these patterns as indicative of criminal propensity. For example, if policing resources have historically been concentrated in predominantly Black neighborhoods, leading to more arrests for minor offenses, an algorithm trained on this data might conclude that these neighborhoods are inherently more “criminal.” This creates a feedback loop: the algorithm directs more resources to these areas, leading to more arrests, which further reinforces the algorithm’s biased output. The technology, in this instance, acts as an amplifier of existing societal biases rather than an objective measure of crime.
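The feedback loop described above can be illustrated with a deliberately simple, deterministic toy model. It is not a model of any deployed predictive policing product, and every number in it is a hypothetical assumption. Two areas have the same true number of incidents each period; a single patrol goes to whichever area has the most *recorded* incidents so far, and a patrolled area records more of its incidents than an unpatrolled one.

```python
# Toy "hot spot" feedback loop; all parameters are hypothetical.
# Both areas have 10 true incidents per period; only the recording differs.
RECORDED_IF_PATROLLED = 5
RECORDED_IF_UNPATROLLED = 1

def simulate(initial_counts, periods):
    """Send the patrol to the area with the most recorded incidents each period."""
    counts = dict(initial_counts)
    for _ in range(periods):
        target = max(counts, key=counts.get)  # current "hot spot"
        for area in counts:
            if area == target:
                counts[area] += RECORDED_IF_PATROLLED
            else:
                counts[area] += RECORDED_IF_UNPATROLLED
    return counts

# A one-incident head start, e.g. from historical over-policing.
counts = simulate({"north": 6, "south": 5}, periods=50)
share_north = counts["north"] / sum(counts.values())
print(counts, round(share_north, 3))
```

A one-incident head start locks the patrol onto the north area permanently, and its share of recorded incidents climbs from about 55% toward 5/6, even though true incidents in the two areas are identical throughout.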

Algorithmic Discrimination and Differential Outcomes

Beyond data input, the design of the algorithms themselves can lead to discriminatory outcomes. Machine learning models, which are increasingly used in criminal justice for tasks such as risk assessment in sentencing or parole decisions, have been shown to exhibit racial bias. Even when race itself is excluded as an input, these models are typically trained on many other factors, and some of them, such as zip code, socio-economic status, or prior arrest history (which can itself reflect biased policing), are highly correlated with race. Such variables act as proxies for race, so a model that includes them can produce racially disparate results without ever being given race directly.
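The proxy effect can be shown with a score that never sees group membership. In the sketch below, the population, the placeholder groups A and B, the zip codes, and the per-zip arrest rates are all hypothetical; the only assumption that matters is that the groups are unevenly distributed across zip codes.

```python
from fractions import Fraction

# Hypothetical population of (group, zip_code) pairs.
people = ([("A", "Z1")] * 8 + [("A", "Z2")] * 2
          + [("B", "Z1")] * 2 + [("B", "Z2")] * 8)

# Hypothetical feature: historical arrest rate recorded in each zip code.
zip_arrest_rate = {"Z1": Fraction(3, 10), "Z2": Fraction(1, 10)}

def score(zip_code):
    """A 'group-blind' risk score that uses only the zip code."""
    return zip_arrest_rate[zip_code]

def mean_score(group):
    """Average score for one group (group is used only for auditing here)."""
    scores = [score(z) for g, z in people if g == group]
    return sum(scores) / len(scores)

print("group A mean score:", mean_score("A"))
print("group B mean score:", mean_score("B"))
```

Group A's average score (13/50) is nearly double group B's (7/50), although `score` has no access to group membership. Dropping the sensitive attribute does not remove its influence when a correlated feature remains.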

For instance, an algorithm designed to predict recidivism might assign higher risk scores to Black individuals compared to white individuals with similar criminal histories and socio-economic backgrounds. This is not necessarily because the algorithm is explicitly programmed to be racist, but because the patterns it has learned from biased data lead it to associate certain characteristics, which are disproportionately present in Black communities due to historical and ongoing societal inequities, with a higher likelihood of reoffending. The technological application, therefore, can legitimize and automate discrimination under the guise of objective analysis.
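One common way to audit a recidivism tool for this kind of disparity is to compare error rates across groups, for example the false positive rate: the share of people who did *not* reoffend but were still flagged as high risk. The audit records and placeholder group labels below are hypothetical.

```python
from collections import defaultdict

# Hypothetical audit records: (group, flagged_high_risk, reoffended).
records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6 + [("A", True, True)] * 5
    + [("B", True, False)] * 2 + [("B", False, False)] * 8 + [("B", True, True)] * 5
)

def false_positive_rates(rows):
    """Per group: flagged non-reoffenders divided by all non-reoffenders."""
    flagged = defaultdict(int)
    negatives = defaultdict(int)
    for group, high_risk, reoffended in rows:
        if not reoffended:
            negatives[group] += 1
            if high_risk:
                flagged[group] += 1
    return {g: flagged[g] / negatives[g] for g in negatives}

print(false_positive_rates(records))
```

In this toy data both groups have the same number of correctly flagged reoffenders, but non-reoffenders in group A are wrongly flagged twice as often (0.4 versus 0.2). An overall accuracy figure alone would not reveal that burden.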

Digital Platforms and the Dissemination of Skewed Narratives

The way crime statistics and demographic breakdowns are presented and consumed in the digital realm significantly influences public perception and discourse. The technology of the internet, social media, and digital media outlets plays a crucial role in shaping narratives, often amplifying sensationalism and contributing to the perpetuation of stereotypes.

The Power of Virality and Sensationalism in Online Discourse

The internet, with its emphasis on engagement and shareability, often favors content that is sensational, provocative, or confirms pre-existing beliefs. When crime statistics are presented without proper context, nuance, or an understanding of their underlying data collection and algorithmic processing, they can easily be misinterpreted and weaponized. Social media algorithms are designed to maximize user interaction, and content that elicits strong emotional responses, including outrage or fear, tends to spread rapidly.
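The dynamic can be sketched with a toy feed ranker. Real platform ranking systems are proprietary and far more complex; the weights, post names, and interaction counts below are hypothetical assumptions, chosen only to show how weighting comments and shares above likes rewards provocative content.

```python
# Toy engagement ranker; weights are hypothetical, not any platform's real values.
WEIGHTS = {"likes": 1.0, "comments": 4.0, "shares": 6.0}

posts = [
    {"id": "context-heavy explainer", "likes": 120, "comments": 10, "shares": 5},
    {"id": "decontextualized statistic", "likes": 80, "comments": 60, "shares": 40},
]

def engagement_score(post):
    """Weighted sum of a post's interaction counts."""
    return sum(weight * post[kind] for kind, weight in WEIGHTS.items())

ranked = sorted(posts, key=engagement_score, reverse=True)
print([p["id"] for p in ranked])
```

The explainer receives more likes, but the statistic that provokes arguments and reshares scores 560 against 190 and is ranked first.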

Articles or posts that highlight crime statistics disproportionately involving Black individuals, without delving into the socio-economic factors, systemic issues, or potential biases in data collection, can go viral. This rapid dissemination can create an impression that these statistics represent a complete and unbiased picture of crime, reinforcing negative stereotypes about Black communities. The technology of social media, in this regard, becomes a powerful engine for spreading misinformation and exacerbating racial prejudices.

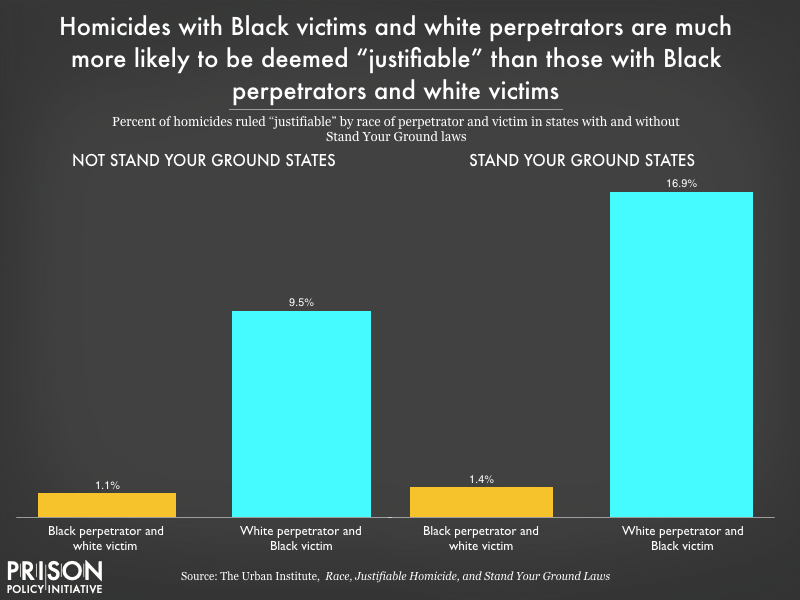

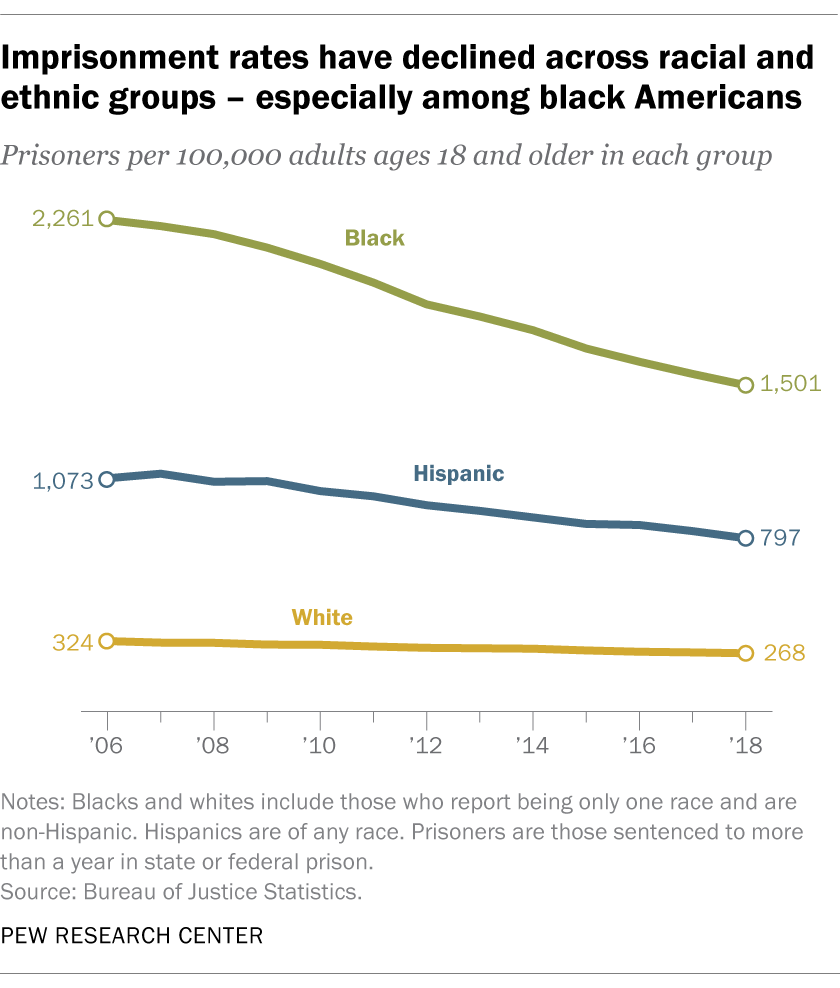

The Role of Data Visualization and Interactive Tools

Data visualization technologies, while extremely useful for making complex data accessible, can also mislead, whether deliberately or inadvertently. The choice of chart type, axis scale, color scheme, and framing can significantly influence how data is interpreted. For example, a bar chart whose vertical axis does not start at zero can exaggerate small differences, and a time-series graph that shows only a selectively chosen window can create a false impression of a trend.
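The truncated-axis effect is simple arithmetic: a bar's drawn height is its value minus the axis baseline. The sketch below uses two hypothetical values that differ by 10% and computes how much taller one bar looks than the other under two baselines.

```python
def apparent_ratio(smaller, larger, axis_baseline=0.0):
    """Ratio of drawn bar heights when the y-axis starts at axis_baseline."""
    return (larger - axis_baseline) / (smaller - axis_baseline)

values = (50, 55)  # hypothetical values, a 10% difference
print(apparent_ratio(*values))                    # axis starts at zero
print(apparent_ratio(*values, axis_baseline=45))  # truncated axis
```

With a zero baseline, the taller bar is 1.1 times the height of the shorter one, matching the data. Starting the axis at 45 makes it look twice as tall, even though the underlying numbers are unchanged.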

Interactive data dashboards and online explorable datasets, while empowering for users who want to delve deeper, also place a burden of interpretation on the end-user. Without a strong understanding of statistical principles and the limitations of the data, users can easily draw incorrect conclusions. The technological accessibility of such tools means that flawed or biased statistical narratives can be easily reproduced and disseminated, often presented with an aura of digital authority that can mask underlying inaccuracies or biases. Ultimately, the technology used for presenting data must be scrutinized as critically as the data itself.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.