In modern software, adding fractions goes well beyond the pencil-and-paper method taught in primary school. The underlying logic is unchanged (find a common denominator, then sum the numerators), but implementing it in software, algorithms, and artificial intelligence sits at the intersection of mathematics and computer science. For developers, data scientists, and tech enthusiasts, knowing how to add fractions programmatically helps maintain precision in financial software, architectural rendering, and scientific simulations, where accumulated floating-point error can produce seriously wrong results.

The Evolution of Calculation: From Abacus to Algorithmic Processing

The journey of mathematical computation has moved from physical tools to abstract digital logic. In the realm of technology, “adding fractions” is not just about finding a sum; it is about data integrity and computational efficiency.

The Logic of Common Denominators in Digital Systems

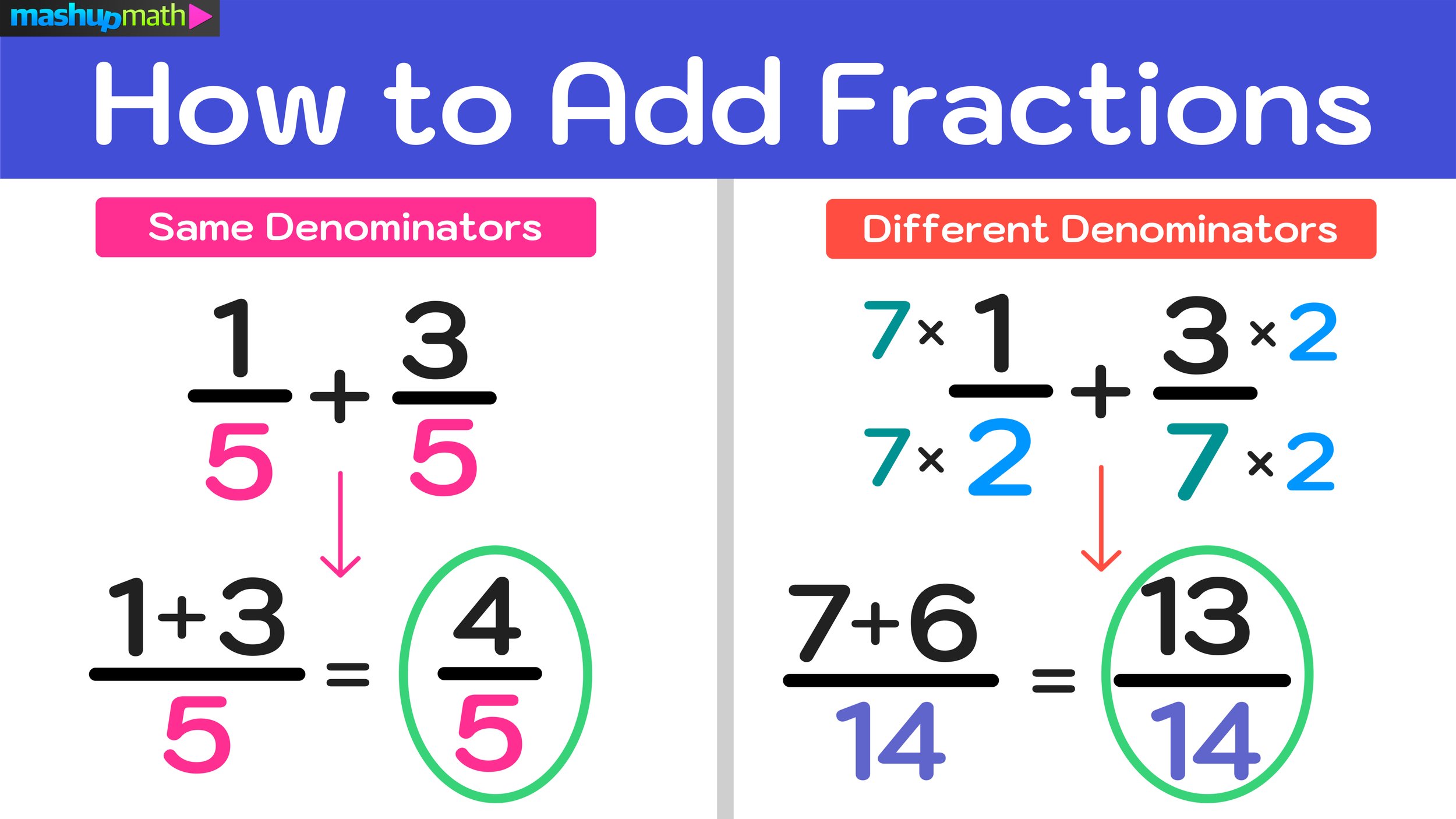

To a computer, an exact fraction is typically represented as a pair of integers: a numerator and a denominator. To add two fractions, the algorithm first rewrites them over a common denominator. Any common multiple of the two denominators works, but the Least Common Multiple (LCM) keeps the intermediate numbers as small as possible; the result is then reduced to lowest terms. This process underlies the symbolic computation engines used in research software, which treat numbers as exact values rather than approximations.
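The LCM-based procedure can be sketched in a few lines of Python. This is a minimal illustration, not a production implementation: the function names `lcm` and `add_fractions` are chosen here for clarity, and the code assumes both denominators are positive integers.

```python
from math import gcd

def lcm(a: int, b: int) -> int:
    """Least common multiple of two positive integers."""
    return a * b // gcd(a, b)

def add_fractions(n1: int, d1: int, n2: int, d2: int) -> tuple[int, int]:
    """Add n1/d1 + n2/d2 and return (numerator, denominator) in lowest terms."""
    common = lcm(d1, d2)
    # Scale each numerator by the factor that brings its denominator up to `common`.
    total = n1 * (common // d1) + n2 * (common // d2)
    # Reduce the result by the greatest common divisor.
    divisor = gcd(total, common)
    return total // divisor, common // divisor

print(add_fractions(1, 3, 1, 6))  # (1, 2)
```

For 1/3 + 1/6 the common denominator is 6, the numerators become 2 and 1, and the sum 3/6 reduces to 1/2.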

Floating Point vs. Precise Fractional Data Types

One of the most common pitfalls in numerical software is floating-point error. In binary floating point, many decimal fractions (0.1 and 0.2, for example) cannot be represented exactly, so 0.1 + 0.2 evaluates to 0.30000000000000004 rather than 0.3. To avoid this, many languages and libraries offer a “Fraction” or “Rational” data type. These types store the numerator and denominator as separate integers, so fractions can be added exactly, with no rounding at any step. This matters in any field where exact results are required or where small rounding errors can accumulate over many operations.
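The difference is easy to see side by side. The short sketch below uses Python's standard-library `Fraction` type as one example of a rational data type; other languages offer comparable types under different names.

```python
from fractions import Fraction

# Binary floating point: 0.1 and 0.2 are stored as nearby approximations.
float_sum = 0.1 + 0.2
print(float_sum)         # 0.30000000000000004
print(float_sum == 0.3)  # False

# Rational type: numerator and denominator are kept as exact integers.
exact_sum = Fraction(1, 10) + Fraction(2, 10)
print(exact_sum)                     # 3/10
print(exact_sum == Fraction(3, 10))  # True
```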

Programming the Solution: Adding Fractions in Popular Languages

For software engineers, adding fractions involves writing clean, reusable code that can handle various inputs while maintaining performance. Different programming environments offer different tools to achieve this.

Implementing Fraction Addition in Python

Python is known for its “batteries included” philosophy, and the fractions module in its standard library is a good example. After importing the Fraction class, a developer can simply write Fraction(1, 3) + Fraction(1, 6). The class finds the common denominator and reduces the result to lowest terms automatically (in this case, 1/2). This abstraction lets developers focus on higher-level logic instead of the individual steps of the calculation.
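In practice, the example from the paragraph above looks like this. The second half shows that `Fraction` also accepts strings such as "3/4", which is convenient when values arrive as text.

```python
from fractions import Fraction

result = Fraction(1, 3) + Fraction(1, 6)
print(result)              # 1/2
print(result.numerator)    # 1
print(result.denominator)  # 2

# Fractions can also be constructed from strings.
print(Fraction("3/4") + Fraction("2/3"))  # 17/12
```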

JavaScript and the Math of User Interfaces

In web development, particularly in EdTech (Educational Technology) apps, adding fractions is a common requirement for interactive learning tools. Since JavaScript has no native fraction type, developers often build custom objects or use a library such as math.js. The core of a custom implementation is a “GCD” (Greatest Common Divisor) function. Dividing the numerator and denominator by their GCD simplifies the fraction after addition, so the user sees “1/2” instead of “4/8” on screen. This improves the user experience (UX) by showing intuitive, human-readable results.
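The simplification step is language-neutral. The sketch below is written in Python only to stay consistent with the other examples in this article; the same loop translates almost line for line to JavaScript. The names `gcd` and `simplify` are illustrative, and the code assumes a nonzero denominator.

```python
def gcd(a: int, b: int) -> int:
    """Euclid's algorithm: repeatedly replace (a, b) with (b, a mod b)."""
    while b:
        a, b = b, a % b
    return abs(a)

def simplify(numerator: int, denominator: int) -> str:
    """Reduce a fraction to lowest terms and format it for display."""
    divisor = gcd(numerator, denominator)
    return f"{numerator // divisor}/{denominator // divisor}"

print(simplify(4, 8))  # 1/2
```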

C++ and Low-Level Memory Efficiency

In high-performance computing, such as graphics engine development, the cost of every operation matters. C++ developers might create a struct holding two integers to represent a fraction, using operator overloading to define the + symbol for these objects. Such a struct can be stored inline in arrays without separate heap allocations, and the overloaded operator compiles to plain integer arithmetic, which is important when a game engine needs to calculate millions of proportional coordinates per second.
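The operator-overloading idea can be shown without C++. The sketch below is a Python analogue, not the C++ struct itself: the class name `Frac` and its fields are hypothetical, `__add__` plays the role that `operator+` would play in C++, and denominators are assumed to be positive.

```python
from dataclasses import dataclass
from math import gcd

@dataclass(frozen=True)
class Frac:
    """Hypothetical minimal fraction type; assumes den > 0."""
    num: int
    den: int

    def __add__(self, other: "Frac") -> "Frac":
        # Cross-multiply to get a common denominator, then reduce.
        num = self.num * other.den + other.num * self.den
        den = self.den * other.den
        divisor = gcd(num, den)
        return Frac(num // divisor, den // divisor)

print(Frac(1, 3) + Frac(1, 6))  # Frac(num=1, den=2)
```

Once `+` is defined on the type, calling code reads like ordinary arithmetic, which is the main appeal of operator overloading in either language.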

AI and Machine Learning: Solving Complex Fractions with Neural Networks

The rise of Artificial Intelligence has transformed how we approach mathematical problem-solving. AI tools are no longer just calculating; they are “reasoning” through the steps of adding fractions.

Natural Language Processing (NLP) and Word Problems

Large Language Models (LLMs) like GPT-4 or specialized math AI utilize Natural Language Processing to interpret word problems involving fractions. When a user asks, “How do I add three-quarters of a cup of flour to two-thirds of a cup?”, the AI identifies the numeric values and the operation required. It translates the linguistic intent into a mathematical formula, executes the addition, and then translates the result back into a natural response. This represents a leap in how humans interact with digital math.
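The "translate, compute, translate back" pipeline can be illustrated with a deliberately tiny example. This is not how an LLM works internally; it is a toy sketch of the three stages, using hypothetical lookup tables (`NUMERATORS`, `DENOMINATORS`) that cover only a handful of words.

```python
from fractions import Fraction

# Hypothetical vocabulary; a real system would need a far richer parser.
NUMERATORS = {"one": 1, "two": 2, "three": 3}
DENOMINATORS = {"half": 2, "halves": 2, "third": 3, "thirds": 3,
                "quarter": 4, "quarters": 4}

def parse_spoken_fraction(phrase: str) -> Fraction:
    """Turn a phrase such as 'three-quarters' into Fraction(3, 4)."""
    top, bottom = phrase.lower().split("-")
    return Fraction(NUMERATORS[top], DENOMINATORS[bottom])

# Stage 1: language to numbers. Stage 2: exact addition.
total = parse_spoken_fraction("three-quarters") + parse_spoken_fraction("two-thirds")

# Stage 3: numbers back to a readable answer (a mixed number).
whole = total // 1
remainder = total - whole
print(total)                            # 17/12
print(f"{whole} and {remainder} cups")  # 1 and 5/12 cups
```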

Computer Vision: Scanning and Adding Handwritten Fractions

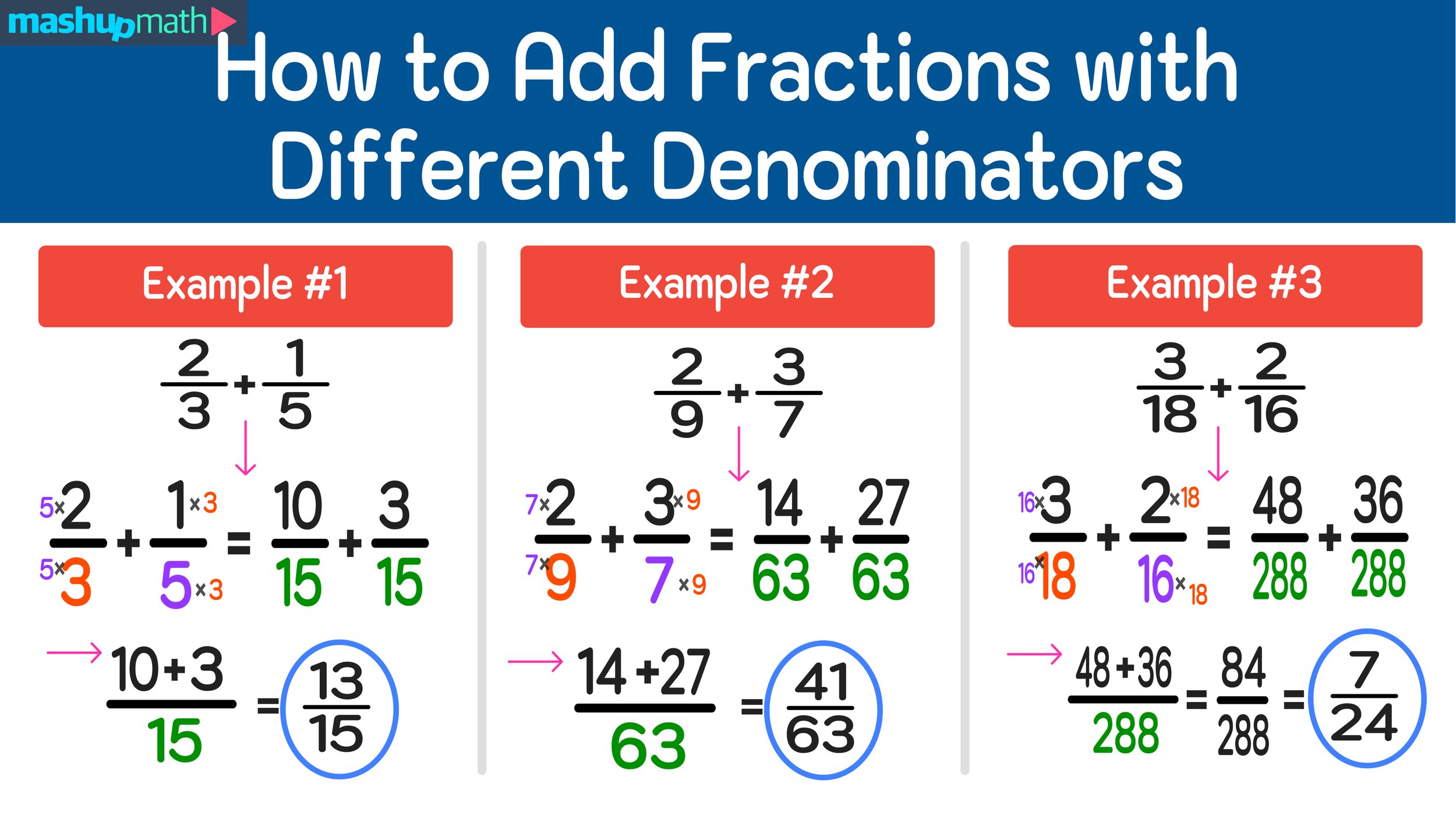

One of the most impressive tech applications is the use of Computer Vision (CV) to solve fractions. Apps like Photomath use neural networks to recognize handwritten fractions through a smartphone camera. The software identifies the boundaries of the numbers, detects the fraction bar, and uses optical character recognition (OCR) to convert the image into digital data. Once digitized, the app applies standard algorithms to add the fractions, often providing a step-by-step digital visualization of the process for the student.

Specialized Software and Tools for Advanced Fraction Management

Beyond custom code, several mainstream tech tools provide robust environments for managing and adding fractions in professional settings.

Spreadsheet Mastery: Excel and Google Sheets Formulas

While most users view spreadsheets as decimal-heavy tools, Excel and Google Sheets support fraction number formats. Setting a cell's format to “Fraction” displays its value as a fraction, although the cell still stores an ordinary decimal number underneath. Advanced users can use MROUND to round a result to a multiple of a given fraction, or TEXT with a fraction format, to force results into specific denominators (e.g., sixteenths of an inch for construction layouts). This bridge between raw data and specialized formatting is a staple of digital business operations.
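The "force a specific denominator" idea can be sketched outside a spreadsheet as well. The Python snippet below is an analogue, not a reimplementation of MROUND or TEXT: the helper name `round_to_denominator` is hypothetical, Python's `round` breaks exact ties toward the even neighbor (which may differ from a spreadsheet's behavior), and `Fraction` always reduces its result, so 8/16 would print as 1/2.

```python
from fractions import Fraction

def round_to_denominator(value: Fraction, denominator: int) -> Fraction:
    """Round value to the nearest multiple of 1/denominator."""
    return Fraction(round(value * denominator), denominator)

# Two measurements in inches, added exactly.
total = Fraction(11, 64) + Fraction(1, 4)
print(total)                            # 27/64

# Snap the sum to the nearest sixteenth of an inch.
print(round_to_denominator(total, 16))  # 7/16
```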

Educational Tech (EdTech) and Interactive Learning Modules

The EdTech sector has gamified the process of adding fractions. Interactive platforms like Khan Academy or Brilliant use “Adaptive Learning Algorithms.” These tools monitor a user’s progress and, if a user struggles with adding fractions with unlike denominators, the software automatically pivots to provide more foundational exercises. This use of data-driven instruction ensures that the technology scales with the user’s proficiency level.

The Future of Computational Mathematics: Quantum Computing and Beyond

As we look toward the future of technology, the way we handle mathematical operations—including the simple act of adding fractions—may undergo another paradigm shift.

Parallel Processing of Large Fractional Datasets

In the era of Big Data, we are often adding millions of fractional values at once (think of weighting probabilities across a massive data set). Modern GPUs (Graphics Processing Units) use parallel processing to perform these calculations, usually on floating-point approximations rather than exact fractions, far faster than a CPU working through them one at a time. This allows for real-time adjustments in complex systems, such as stock market predictors or weather models, where small fractional increments affect the accuracy of a forecast.

Security and Cryptography: The Role of Rational Numbers

In the high-stakes world of digital security, exact arithmetic is just as important. Traditional public-key encryption such as RSA relies on the difficulty of factoring large integers, while many post-quantum proposals rely on hard problems over multi-dimensional lattices. These schemes are generally built on exact integer and modular arithmetic rather than floating point, because a single rounding error would corrupt the result. The same principle applies to the “ledger” in blockchain technology and to secure communication channels: every node in a distributed system must compute exactly the same sum, which is why exact representations are preferred over floating-point approximations.

In conclusion, “how to add fractions” is no longer just a classroom exercise; it is a fundamental technological process. From the precise “Rational” data types in software engineering to the neural networks of AI that can read a student’s handwriting, technology has turned fractional addition into a fast, accurate, and highly scalable operation. Whether you are coding a financial app in Python or using an AI tutor to help with homework, the tech behind the math ensures that every numerator and denominator finds its place in the digital world.
