The question “what year was Hamlet first performed” appears deceptively simple. On the surface, it’s a direct factual query, one that a quick search might seem to resolve. However, beneath this straightforward phrasing lies a profound challenge for information retrieval, historical research, and the very technologies we increasingly rely on to understand our past. It’s a question that, when dissected through a technological lens, illuminates the complexities of historical data, the advancements in AI and digital humanities, and the ongoing quest to bring the distant past into clearer focus for contemporary audiences. This article will delve into how technology grapples with such nuanced historical inquiries, transforming archival dust into actionable data and fragmented narratives into coherent historical understanding.

The Digital Historian’s Challenge: Unraveling the Past with Data

For centuries, historical inquiry was a pursuit primarily confined to physical archives, libraries, and the painstaking work of human scholars sifting through primary sources. The question of “what year was Hamlet first performed” would have necessitated a deep dive into theatrical records, patron accounts, literary critiques, and potentially contradictory manuscripts. Today, while human expertise remains paramount, technology has introduced powerful new tools and formidable challenges in equal measure. The essence of historical research has become, in many ways, a grand data problem, where the past is a vast, often incomplete, dataset waiting to be processed, analyzed, and interpreted by sophisticated algorithms and digital frameworks.

From Parchment to Pixels: Digitizing Archival Records

The first crucial step in leveraging technology for historical questions like Hamlet’s debut is the digitization of primary sources. Millions of historical documents—manuscripts, playbills, letters, financial ledgers, and literary commentaries—are being transformed from fragile physical artifacts into robust digital files. This process is far more complex than simply scanning a page. It involves high-resolution imaging, optical character recognition (OCR) to convert images of text into machine-readable formats, and sophisticated metadata tagging to ensure these digital assets are discoverable and interoperable. For a play like Hamlet, this could mean digitizing early quartos, the First Folio, contemporary diaries mentioning performances, and even archaeological findings related to Elizabethan theatres. The quality and comprehensiveness of this digitization effort directly impact the fidelity of the digital archive, forming the foundational layer for any subsequent technological analysis. Errors in OCR, missing pages, or poor image quality can introduce noise or gaps that propagate through later stages of analysis, underscoring the vital importance of meticulous digital preservation practices.

The Ambiguity of Anomaly: Handling Incomplete Data

Historical records are rarely perfectly preserved or entirely consistent. The past is rife with gaps, contradictions, and ambiguities—a significant hurdle for computational systems designed to work with structured, clean data. When asking “what year was Hamlet first performed,” researchers often encounter indirect evidence: a payment record to actors, a reference in another play, or a posthumous publication date. Rarely does a definitive, universally agreed-upon “opening night” document exist for plays from Shakespeare’s era. This is where the challenge of “incomplete data” becomes critical. Technologies must be designed not just to retrieve facts but to infer, contextualize, and even probabilistically assess the likelihood of certain events. Machine learning models, trained on vast corpora of historical texts, can learn to identify patterns and relationships between disparate pieces of information, helping historians piece together plausible timelines. However, these models also need to be transparent about their uncertainties, providing not just an answer but also an indication of the confidence level in that answer, acknowledging the inherent “ambiguity of anomaly” that characterizes historical research.

AI and Natural Language Processing: Decoding Historical Queries

Once historical documents are digitized and organized, the next technological frontier lies in how we interact with them. Natural Language Processing (NLP) and advanced Artificial Intelligence (AI) are revolutionizing how we formulate and answer complex historical questions. The query “what year was Hamlet first performed” isn’t just a string of words; it’s a semantic request for a specific type of historical event, involving entities (Hamlet, performance), temporal markers (year), and relationships (first, performed). AI systems, particularly Large Language Models (LLMs), are becoming increasingly adept at understanding and processing such nuanced inquiries, moving beyond keyword matching to genuine contextual comprehension.

Semantic Search and Contextual Understanding

Traditional search engines often rely on keywords. Type “Hamlet first performed” and you’ll get pages containing those exact words. Semantic search, powered by AI, goes much further. It understands the meaning and intent behind the query. Instead of just matching keywords, it builds a conceptual understanding: “The user is looking for the initial public presentation date of a play titled Hamlet.” This allows the search to retrieve documents that might use different phrasing (e.g., “the debut of Shakespeare’s tragedy,” “when Burbage’s company staged Hamlet”) but convey the same underlying meaning. AI models learn the intricate relationships between words, concepts, and historical entities. They can distinguish between “Hamlet the play” and “Hamlet the character,” or “performance year” versus “publication year.” This contextual understanding is vital for historical queries where the language itself has evolved over centuries, and direct keyword matches might miss crucial information or retrieve irrelevant data.

Large Language Models as Historical Interpreters

The advent of Large Language Models (LLMs) represents a significant leap in AI’s ability to engage with and interpret historical data. Trained on vast amounts of text data, including historical archives, LLMs can identify patterns, synthesize information, and even generate coherent narratives from disparate sources. When presented with “what year was Hamlet first performed,” an LLM doesn’t just perform a lookup; it acts as a sophisticated interpreter. It can:

- Identify relevant sources: Pinpoint specific historical texts, theatrical records, or scholarly articles that discuss Hamlet’s early performances.

- Extract key entities and dates: Distinguish between various dates mentioned (composition, publication, different performances) and focus on the “first performance.”

- Synthesize conflicting information: If multiple sources offer slightly different dates or theories, an LLM can analyze the evidence, weigh the credibility of sources (if trained to do so), and articulate the prevailing scholarly consensus or the existence of ongoing debate.

- Contextualize the answer: Beyond just a year, an LLM can provide crucial context about the performance conditions, the theatre, the acting company, or the historical period, enriching the answer considerably.

However, it’s critical to note that LLMs are not infallible. They “hallucinate” or generate plausible but incorrect information, especially when dealing with sparse or ambiguous data. Their interpretations are based on the patterns they learned from their training data, which itself can contain biases or inaccuracies. Therefore, human oversight and critical evaluation remain essential when using LLMs as historical interpreters, particularly for establishing definitive facts.

The Architecture of Knowledge: Building Digital Humanities Databases

To effectively answer historical questions with technology, the digitized data and AI models need a robust underlying structure. This is where the field of Digital Humanities intersects with computer science to build sophisticated knowledge architectures. These systems are designed not just to store information but to represent complex historical relationships, contexts, and uncertainties in a machine-readable format. For a question like Hamlet’s first performance, this means building a framework that can not only hold the date but also link it to the specific theatre, the acting company, contemporary reviews, and even geographical information.

Ontologies and Metadata: Structuring Historical Information

At the heart of these knowledge architectures are ontologies and rich metadata. An ontology is a formal representation of knowledge as a set of concepts within a domain and the relationships between those concepts. For historical theatre, an ontology might define concepts like “Play,” “Performance,” “Theatre,” “Actor,” “Date,” “Patron,” and specify relationships such as “PlayperformedatTheatreonDatebyActorcompany.” This structured approach allows computers to understand the semantic meaning of data, not just its literal form.

Metadata (data about data) then populates this ontological framework. For each digitized document related to Hamlet, metadata would include not only basic information (author, date of creation, format) but also structured tags linking it to the play, specific performances, individuals involved, and relevant historical periods. This could include:

event:performanceplay:Hamletdate:circa_1600-1601(with qualifiers likeestimated,most_likely)location:The_Globe_Theatreactor_company:Lord_Chamberlain's_Men

This granular level of tagging and the explicit definition of relationships allow sophisticated queries that transcend simple keyword searches, enabling systems to infer connections and build a comprehensive understanding of historical events, even when information is scattered across hundreds of different sources.

Interoperability: Connecting Disparate Sources

Historical data is inherently fragmented. Records related to Hamlet might reside in a library’s manuscript collection, a university’s digital archive of Elizabethan drama, a museum’s collection of theatre artifacts, or an archaeological database of theatre sites. A major challenge and a key focus of digital humanities are achieving interoperability—the ability of different systems and organizations to exchange and make use of information. This is crucial for answering complex questions like “what year was Hamlet first performed,” which often requires drawing insights from multiple, geographically dispersed, and institutionally siloed datasets.

Interoperability is facilitated through:

- Standardized formats: Using common data formats (like XML, JSON) and exchange protocols.

- Persistent identifiers: Assigning unique, stable identifiers to historical entities (plays, people, places) that can be referenced across different databases.

- APIs (Application Programming Interfaces): Allowing different software applications to communicate and share data seamlessly.

By building interconnected digital ecosystems, researchers and AI systems can synthesize information from a truly global archive, painting a more complete picture of historical events and reducing the chance of overlooking crucial evidence simply because it was stored in an inaccessible silo.

Beyond the Date: Reconstructing Performance with VR/AR

While determining the exact year of Hamlet’s first performance is a fundamental historical question, technology is pushing the boundaries far beyond mere dates. The ultimate goal for many digital humanities projects is not just to know when something happened, but to truly understand how it happened and what it felt like. This leads us into the realm of immersive technologies like Virtual Reality (VR) and Augmented Reality (AR), which promise to bring historical events, including theatrical performances, to vivid, interactive life.

Immersive Re-enactments: Bringing History to Life

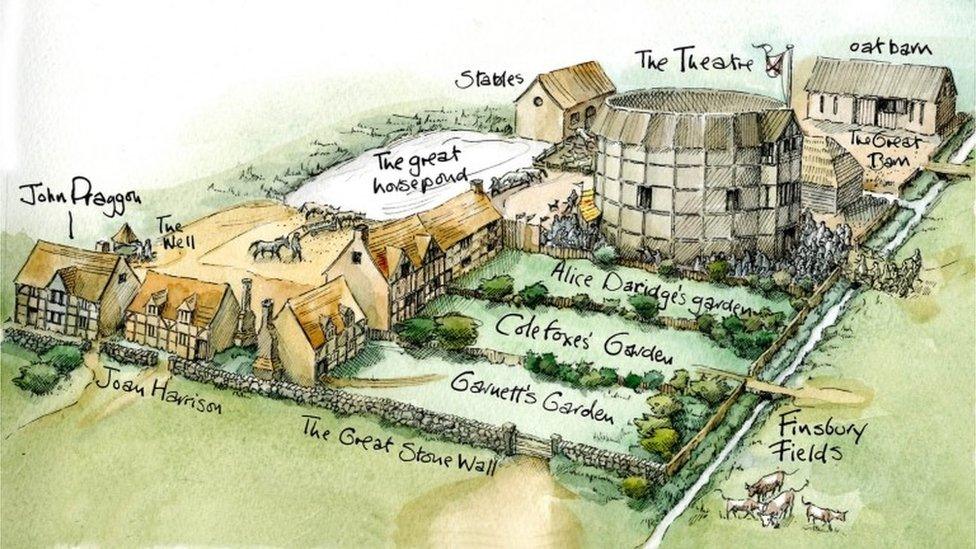

Imagine not just reading about Hamlet’s first performance but being able to “attend” it. VR technology is making this a nascent reality. By combining historical data—architectural plans of the Globe Theatre, descriptions of Elizabethan costumes, phonetic reconstructions of period English, and scholarly interpretations of staging practices—developers can create highly accurate, immersive virtual environments. For Hamlet, this could mean:

- Walking through a historically accurate Globe Theatre: Experiencing the layout, the proximity of actors to the audience, the natural lighting, and the sounds of the bustling crowd.

- Witnessing the performance: Seeing virtual actors, whose movements are based on historical performance theories and digital motion capture, deliver the lines in period-appropriate accents and gestures.

- Understanding the audience experience: Observing how groundlings, gentry, and nobility interacted with the play, and the social dynamics of an Elizabethan theatre.

These immersive re-enactments are not just entertainment; they are powerful tools for historical empathy and understanding. They allow scholars to test theories about staging and acoustics, and they provide an unparalleled educational experience for students and the general public, making abstract historical facts tangible and immediate.

The Ethical AI in Historical Interpretation

As AI and immersive technologies become more sophisticated, the ethical considerations around historical interpretation become increasingly salient. When AI “fills in the blanks” of incomplete historical data or generates virtual representations of the past, whose interpretation is it reflecting? How do we ensure that these technologies do not inadvertently perpetuate historical biases or create a “fake history” that feels real?

The ethical development of AI in historical contexts requires:

- Transparency: Clearly documenting the sources, assumptions, and algorithmic processes used to generate historical interpretations or virtual reconstructions.

- Bias detection and mitigation: Actively working to identify and reduce biases present in training data and algorithmic outputs.

- Attribution and provenance: Ensuring that the origins of historical facts, interpretations, and generated content are always clearly credited.

- Collaboration between disciplines: Fostering ongoing dialogue between historians, computer scientists, ethicists, and artists to ensure that technological advancements serve historical accuracy and responsible representation.

The question “what year was Hamlet first performed” serves as a microcosm for the larger technological journey into our past. It highlights the incredible progress in digitization, AI, and digital humanities in making historical information accessible and understandable. Yet, it also underscores the enduring complexity of historical truth, reminding us that technology, while a powerful ally, is ultimately a tool that must be wielded with critical judgment, ethical awareness, and a profound respect for the nuances of human history. The quest for historical truth is an ongoing dialogue, where technology provides the increasingly sophisticated language for that conversation.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.