The simple query, “What year did Ohio become a state?”, might seem like a straightforward historical question. Yet, beneath its unassuming surface lies a profound story about how humanity interacts with information, a narrative utterly transformed by technology. This question, a staple of trivia nights and school projects, is no longer answered by flipping through encyclopedias or poring over microfiche. Today, it’s a prompt for algorithms, a spark for semantic search, and a testament to the remarkable evolution of digital knowledge retrieval. In an era where information is both abundant and overwhelming, understanding the technological infrastructure that delivers these instant answers is paramount. This article delves into the technological innovations that power our quest for knowledge, examining the systems, algorithms, and emerging tools that make complex historical queries, and countless others, effortlessly accessible.

The Evolution of Knowledge Access: From Archives to Algorithms

The journey from a dimly lit library reference section to an instant search result on a smartphone screen encapsulates centuries of human endeavor in organizing and accessing knowledge. The very nature of a factual query like “What year did Ohio become a state?” has remained constant, but the methods for fulfilling that curiosity have undergone several revolutions, each propelled by technological advancement.

The Analog Predicament: Manual Research and Physical Repositories

For millennia, information was a tangible entity, confined to scrolls, books, and archives. To answer a specific historical question involved a laborious process of physical research. One would visit a library, navigate the Dewey Decimal System or Library of Congress Classification, locate relevant texts, and manually scan their contents. Access was often restricted by geography, time, and the sheer volume of available material. Expertise was highly valued, not just for knowing facts, but for knowing where to find them. Historians, librarians, and archivists were the gatekeepers and navigators of this vast, physical ocean of data. The process was slow, prone to human error, and inherently exclusive, limiting the spread of knowledge to those with the means and access to specialized institutions. This era, while foundational to scholarship, highlighted the urgent need for more efficient means of knowledge dissemination.

The Dawn of Digital: Early Databases and Static Information

The mid-20th century heralded the initial shift towards digital information. Early computers, with their nascent data storage capabilities, offered the first glimpse of a future where information could be organized, searched, and retrieved electronically. Punch cards evolved into magnetic tapes and then hard drives, laying the groundwork for digital databases. These early systems were largely static and siloed, often confined to academic institutions or government agencies. Libraries began digitizing card catalogs, creating early forms of online public access catalogs (OPACs). While still far from the interactive experience we know today, these advancements represented a monumental leap. They allowed for quicker, more precise searches within predefined datasets, reducing the physical burden of manual retrieval. The information itself, however, remained largely a one-way street: users queried, and the system returned pre-indexed data. There was little dynamism, and the sheer scope of interconnected knowledge remained largely unaddressed.

The Internet Revolution: Democratizing Access to Facts

The advent of the World Wide Web in the 1990s marked the true democratization of information access. Suddenly, a question like “What year did Ohio become a state?” could be posed to a nascent search engine, which would crawl interconnected websites to find potential answers. This was a paradigm shift, transforming information from a scarce commodity into an abundant resource. Websites, digital libraries, and online encyclopedias proliferated, creating an unprecedented global knowledge base. Crucially, the internet introduced hyperlinking, allowing users to navigate seamlessly between related pieces of information, mirroring the interconnectedness of human thought. The growth of robust backend infrastructure, including servers, data centers, and global networking protocols, made this instantaneous access possible on a massive scale. This era laid the foundation for the intelligent systems we rely on today, establishing the expectation that answers to virtually any question should be not just available, but immediately at our fingertips.

AI and Search Engines: The Architecture of Instant Answers

The journey from simply finding documents to getting direct, concise answers to questions like “What year did Ohio become a state?” is largely thanks to the sophisticated interplay of Artificial Intelligence (AI) and advanced search engine technologies. These systems have moved beyond mere keyword matching to genuinely understanding intent and context.

Understanding Search Engine Algorithms: Ranking and Relevance

At the heart of modern information retrieval are complex search engine algorithms. When a user types a query, these algorithms perform a multi-faceted analysis. They don’t just look for exact keyword matches; they consider synonyms, latent semantic indexing (LSI) to understand related concepts, and the overall intent behind the query. For a question like “What year did Ohio become a state?”, the algorithm would identify “Ohio,” “state,” and “year” as key entities, then infer the user’s intent to find a specific date. Factors like page authority, freshness of content, user engagement signals, and backlinks play crucial roles in ranking results, ensuring that credible and relevant sources rise to the top. Google’s PageRank algorithm, though evolved considerably, set the precedent for valuing the quality and interconnectedness of information sources, aiming to provide authoritative answers rather than just a list of pages containing keywords. The continuous refinement of these algorithms, often incorporating machine learning, allows search engines to adapt to evolving language patterns and user behaviors, constantly improving the accuracy and utility of their results.

The Rise of Conversational AI: Siri, Alexa, and Google Assistant

The natural progression from typed queries to spoken questions led to the development of conversational AI assistants. Siri, Alexa, Google Assistant, and others have revolutionized how we interact with information. Instead of typing, one can simply ask, “Hey Google, what year did Ohio become a state?” These AI tools leverage natural language processing (NLP) to understand human speech, convert it into a machine-readable query, and then interface with powerful search engines and knowledge bases to retrieve the answer. The challenge here is immense: understanding nuances in pronunciation, accents, and the varied ways humans phrase questions. Once the query is understood, the AI must synthesize information from various sources and present it in a clear, concise, and often spoken format. This capability transforms devices from passive tools into interactive knowledge companions, making information access more intuitive and integrated into daily life, whether in smart homes, cars, or on mobile devices.

Knowledge Graphs and Semantic Search: Beyond Keywords

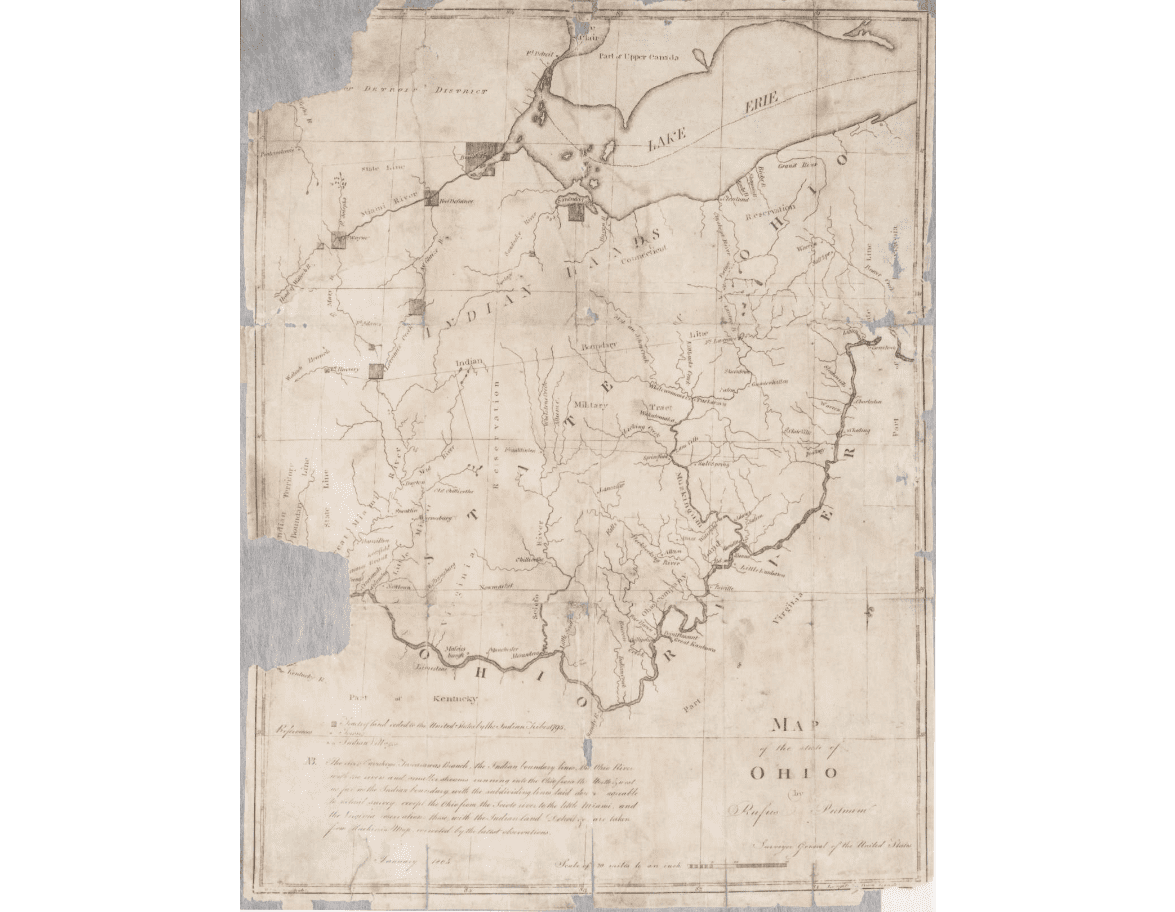

Perhaps the most significant leap in answering specific factual questions directly comes from technologies like knowledge graphs and semantic search. A knowledge graph is a structured network of entities (people, places, things, concepts) and the relationships between them. For instance, a knowledge graph would not just know “Ohio” and “state” as separate terms, but would understand that “Ohio” is a “state,” that “states” have “founding dates,” and that there is a specific “year” associated with Ohio’s statehood.

Semantic search goes beyond matching keywords by attempting to understand the meaning and context of a query. When asked “What year did Ohio become a state?”, a semantic search engine doesn’t just look for pages with those words; it understands the conceptual relationship between the terms and directly accesses its knowledge graph to find the precise piece of data that answers the question. This is why, when you ask Google this question, you often get a direct answer snippet at the top of the search results, often without even needing to click on a link. This shift from document retrieval to answer retrieval represents a profound advancement, driven by sophisticated data modeling, machine learning, and the continuous ingestion and structuring of vast amounts of information.

The Imperative of Data Accuracy and Digital Preservation

While technology has made answering factual questions like “What year did Ohio become a state?” incredibly easy, it also introduces critical challenges concerning data accuracy, reliability, and the long-term preservation of information. The digital age, with its rapid dissemination capabilities, demands a renewed focus on ensuring the integrity of our shared knowledge base.

Combating Misinformation: The Role of AI and Human Oversight

The same technologies that empower instant answers can, unfortunately, also amplify misinformation. Fake news, manipulated historical accounts, and misleading statistics can spread virally, eroding trust in digital information sources. Combating this requires a multi-pronged approach where AI plays a significant, though not exclusive, role. AI-powered algorithms can detect patterns indicative of misinformation, such as unusually high engagement from bot networks, inconsistent narratives across different sources, or the use of emotionally charged language. Fact-checking organizations increasingly leverage AI tools to identify dubious claims and quickly cross-reference them with authoritative sources. However, human oversight remains indispensable. Expert human fact-checkers review AI-flagged content, provide nuanced contextual analysis, and make final determinations, particularly for complex historical or socio-political topics. The challenge lies in balancing automated detection with human verification to maintain both speed and accuracy in a constantly evolving information landscape.

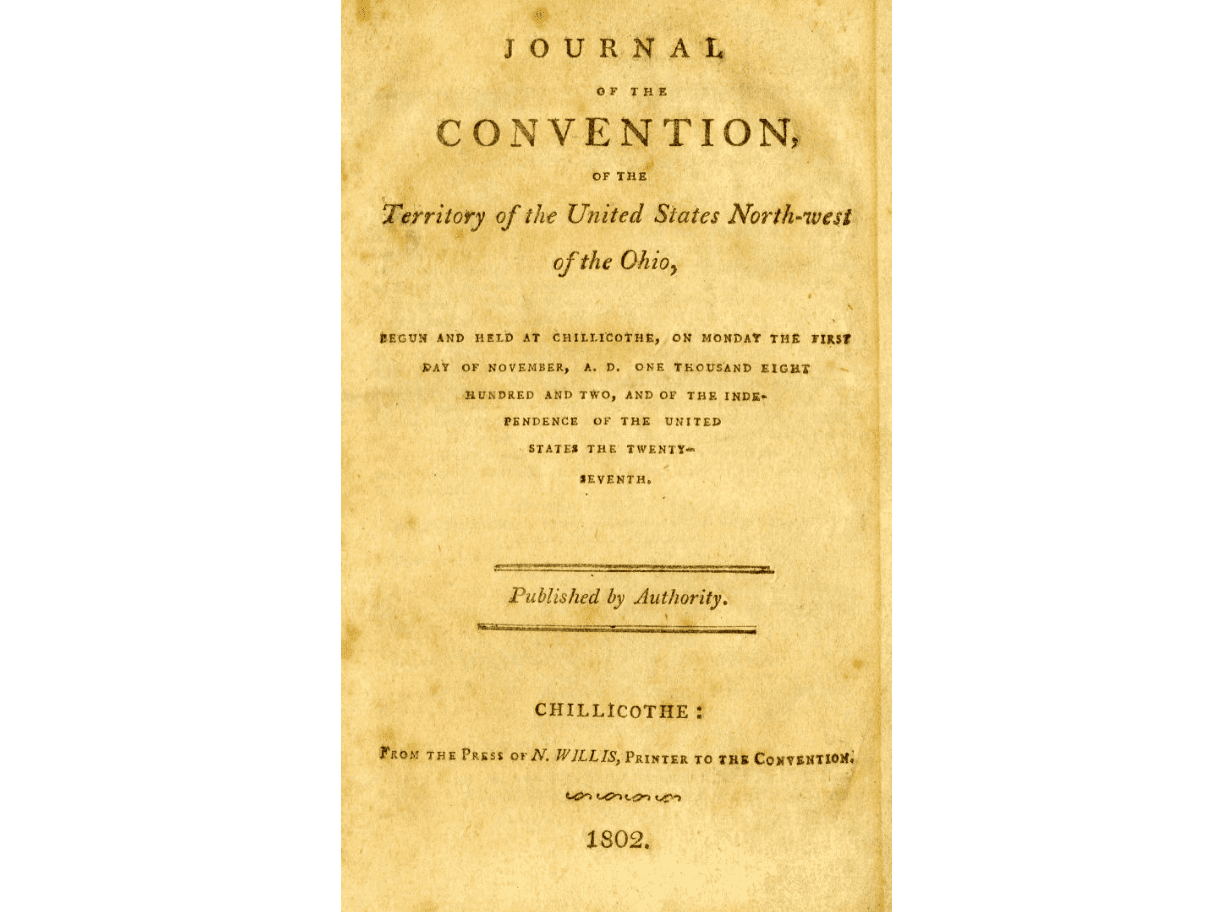

Archiving the Past for Future Generations: Cloud Solutions and Blockchain

Ensuring that factual information, from Ohio’s statehood date to the minutiae of daily events, remains accessible for future generations is a monumental task. Traditional archival methods are insufficient for the sheer volume of digital data generated daily. Cloud computing offers scalable and resilient solutions for digital preservation. Data is stored across multiple geographically dispersed servers, ensuring redundancy and protection against localized disasters. Advanced encryption and access controls safeguard sensitive historical records.

Emerging technologies like blockchain are also being explored for their potential in digital archiving. Blockchain’s immutable ledger could provide a tamper-proof record of historical documents and data, ensuring their authenticity over time. Each piece of information, once recorded, cannot be altered without detection, offering a robust defense against historical revisionism or data corruption. While still in early stages for large-scale historical archiving, the principles of decentralization and immutability offer exciting possibilities for creating highly trustworthy and durable digital archives. This combination of scalable cloud infrastructure and secure ledger technologies is crucial for guaranteeing that answers to future historical inquiries remain reliably available.

Ethical AI in Historical Context: Bias in Data and Algorithms

As AI systems become more sophisticated in retrieving and interpreting historical information, ethical considerations come to the forefront. AI models are trained on vast datasets, and if these datasets contain historical biases (e.g., underrepresentation of certain groups, colonial perspectives, or outdated terminology), the AI may inadvertently perpetuate or even amplify these biases in its responses. For instance, an AI trained predominantly on historical texts from a specific cultural viewpoint might present a skewed or incomplete account of a complex historical event.

Addressing this requires careful curation of training data, active efforts to de-bias algorithms, and transparency in how AI systems arrive at their conclusions. Developers and historians must collaborate to identify and mitigate historical biases within data sources, ensuring that AI-powered information retrieval systems present a more balanced and comprehensive view of the past. The goal is not just to provide an answer to “What year did Ohio become a state?”, but to ensure that the broader historical context provided by AI is equitable, accurate, and reflective of diverse human experiences.

Emerging Technologies Shaping Our Quest for Knowledge

The relentless pace of technological innovation promises even more transformative ways to interact with and understand information. Future tools will not only provide answers but will also contextualize, visualize, and personalize the learning experience, further blurring the lines between information retrieval and immersive education.

Augmented Reality (AR) in Historical Education and Exploration

Augmented Reality (AR) is poised to revolutionize how we experience history and explore factual information. Imagine asking “What year did Ohio become a state?” and not just receiving a text answer, but then pointing your phone at a map of the United States to see an AR overlay that visually highlights Ohio’s territorial evolution, showing its borders as they were in 1803 and how they changed over time. AR could bring historical figures to life in 3D, allow users to virtually walk through historical events, or even overlay historical data onto physical landmarks. Museums are already experimenting with AR to enhance exhibits, providing interactive layers of information that cater to individual visitor interests. This technology transforms passive learning into an engaging, multi-sensory experience, making historical facts more tangible and memorable.

Personalized Learning Paths and Adaptive AI Tutors

Beyond simple factual queries, future AI systems will act as personalized learning companions. Imagine an AI tutor that, upon answering “What year did Ohio become a state?”, could then gauge your interest and prior knowledge, offering follow-up questions, related historical context, or even suggesting a personalized learning module on early American statehood. These adaptive AI tutors would tailor content difficulty, delivery style, and pace to individual learners, identifying gaps in understanding and providing targeted resources. Leveraging machine learning, these systems could track progress, recommend curated content from across the web, and simulate historical scenarios, creating a dynamic and highly effective educational experience that goes far beyond mere information retrieval. This represents a shift from simply providing answers to fostering deep understanding.

Quantum Computing’s Potential for Ultrafast Information Processing

While still in its nascent stages, quantum computing holds the potential for another revolution in information processing and retrieval. Current search engines and AI systems, powerful as they are, operate within the constraints of classical computing. Quantum computers, utilizing principles like superposition and entanglement, could process vast amounts of data and explore complex relationships at speeds unimaginable today. For a question like “What year did Ohio become a state?”, a quantum-enhanced search could potentially sift through the entirety of human-recorded history, cross-referencing disparate sources, identifying subtle connections, and synthesizing information with unprecedented speed and accuracy. This could lead to a new generation of AI that can not only answer specific questions but also uncover previously unseen patterns in historical data, predict future trends based on past events, and solve complex historical puzzles that currently elude even the most powerful classical supercomputers. The future of knowledge access, powered by such advancements, promises to be an era of boundless discovery.

The simple query “What year did Ohio become a state?” serves as a powerful microcosm for understanding the profound technological advancements that have reshaped our access to information. From the laborious days of manual archive research to the instant, AI-driven answers of today, technology has consistently pushed the boundaries of what’s possible. As we look ahead, emerging fields like AR, personalized AI, and quantum computing promise to further transform our quest for knowledge, making it more immersive, personalized, and insightful. Yet, with great power comes great responsibility; ensuring the accuracy, ethical integrity, and long-term preservation of digital information remains a paramount challenge, demanding continuous innovation and human vigilance. The journey to understand our past, present, and future is inextricably linked to the technologies we build to explore them.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.