In the early days of computing, a “large number” might have been the storage capacity of a floppy disk or the clock speed of a primitive processor. Today, our definition of magnitude has shifted into a realm that defies human intuition. When we ask, “What is the biggest number in the world?” we are no longer just asking a mathematical question; we are asking a technological one. In the digital age, the “biggest” numbers represent the limits of our hardware, the complexity of our encryption, and the staggering scale of the data we generate every second.

From the 64-bit architectures that power our laptops to the trillions of parameters within large language models (LLMs), technology is the lens through which we view and manipulate the infinite. Understanding these numbers is essential for grasping how the modern world functions, stays secure, and evolves.

The Computational Evolution of Massive Figures

The way computers perceive numbers is fundamentally different from human cognition. While we use a base-10 system and can theoretically add digits forever on a piece of paper, computers are bound by the physical constraints of their architecture.

From 8-Bit to 64-Bit: How Hardware Defines Numeric Limits

In the world of computer science, the size of a number is often dictated by the “width” of a register. In the 1980s, 8-bit systems were the standard, meaning the largest unsigned integer a processor could handle in a single operation was 255 ($2^8 - 1$). As technology progressed to 16-bit and 32-bit systems, those limits expanded exponentially.

The transition to 64-bit computing represented a monumental leap in our ability to process “big” numbers. A 64-bit register can store an unsigned integer as high as 18,446,744,073,709,551,615 ($2^{64} - 1$). For most applications, including memory addressing, this number is so large that it is effectively infinite. In principle, it allows a computer to address $2^{64}$ bytes of memory, which is 16 exbibytes (roughly 18.4 exabytes), a figure that far exceeds any consumer hardware available today. However, in fields like astrophysics or cryptography, even these massive 64-bit limits are just the starting point.
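The register limits quoted above follow from one formula, $2^n - 1$ for an $n$-bit unsigned integer. Here is a minimal Python sketch of that arithmetic; the helper name `max_unsigned` is made up for illustration, and Python's own integers are not limited to 64 bits, which is what makes the calculation easy to check.

```python
def max_unsigned(bits: int) -> int:
    """Largest value an unsigned register of the given width can hold."""
    return (1 << bits) - 1


for width in (8, 16, 32, 64):
    print(f"{width:>2}-bit max: {max_unsigned(width):,}")

# A 64-bit address can name 2**64 distinct bytes.
# Expressed in exbibytes (1 EiB = 2**60 bytes), that is exactly 16.
address_space_eib = (1 << 64) // (1 << 60)
print(f"64-bit address space: {address_space_eib} EiB")
```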

The Floating-Point Problem: Precision vs. Magnitude

When technology needs to deal with numbers larger than what a standard integer can hold, it turns to “Floating-Point Arithmetic.” This is the computational equivalent of scientific notation. Instead of storing every single digit, the computer stores a significand and an exponent.

The IEEE 754 standard for double-precision floating-point numbers allows for values up to approximately $1.8 \times 10^{308}$. While this lets us represent quantities far larger than the number of atoms in the observable universe, it comes at a cost: precision. A double carries only 53 bits of significand, about 15 to 17 significant decimal digits, so as the magnitude grows, the gap between neighboring representable values grows with it and the computer loses the ability to track the smallest increments. In high-level software development, the “biggest number” is therefore often a trade-off. This technical ceiling is a constant hurdle for developers building simulations of the cosmos or high-frequency trading algorithms where both scale and precision are paramount.
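The trade-off between magnitude and precision is easy to observe directly. The sketch below assumes a standard Python build (3.9 or later, for `math.ulp`), where `float` is an IEEE 754 double; the variable names are only illustrative.

```python
import math
import sys

# The largest finite double, about 1.8e308.
largest = sys.float_info.max
print(largest)

# Above 2**53, not every whole number can be represented any more.
big = float(2**53)
print(big + 1 == big)  # the +1 is lost to rounding

# math.ulp(x) is the gap between x and the next representable float.
print(math.ulp(1.0))    # tiny gap near 1
print(math.ulp(1e300))  # enormous gap near 1e300

# Going past the maximum overflows to infinity.
print(largest * 2)
```

The gap near $10^{300}$ is many orders of magnitude larger than 1, which is why adding a small increment to a very large double can silently do nothing.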

Big Data and the Architecture of the Infinite

If we look away from pure values and toward the quantity of information, the “biggest numbers” in technology are found in the global datasphere. We are no longer measuring information in gigabytes or terabytes; we have entered the era of the Zettabyte.

Exabytes and Zettabytes: Mapping the Global Datasphere

A zettabyte is one sextillion bytes ($10^{21}$). To put that in perspective, it is a billion terabytes: spread evenly across the roughly 8 billion people on Earth, a single zettabyte would give each person about 125 gigabytes. According to market intelligence firms like IDC, the “Global DataSphere” (the amount of data created, captured, copied, and consumed worldwide) is expected to exceed 180 zettabytes by 2025.

Managing these numbers requires a fundamental shift in software architecture. In practice, data at this scale is processed by distributed systems, often running on cloud computing platforms like AWS, Google Cloud, and Azure. By breaking down massive datasets into smaller chunks distributed across thousands of physical servers, and then combining the partial results, technology allows us to calculate trends and insights from numbers that would be impossible for any single machine to handle.
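The chunk-and-combine idea can be sketched on a single machine. In the toy Python example below, worker threads stand in for separate servers, which is a simplifying assumption; the function names `chunked`, `partial_sum`, and `distributed_sum` are hypothetical and not part of any cloud platform's API.

```python
from concurrent.futures import ThreadPoolExecutor


def chunked(data, size):
    """Yield consecutive slices of `data`, each at most `size` items long."""
    for start in range(0, len(data), size):
        yield data[start:start + size]


def partial_sum(chunk):
    """Work done independently on one chunk (one 'server' in this sketch)."""
    return sum(chunk)


def distributed_sum(data, chunk_size=1000, workers=4):
    """Split the data, sum each chunk in a worker, then combine the results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return sum(pool.map(partial_sum, chunked(data, chunk_size)))


total = distributed_sum(list(range(10_000)))
print(total)
```

Real systems add the hard parts this sketch leaves out, such as moving data between machines and recovering from failed workers.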

Distributed Computing and the Search for Prime Giants

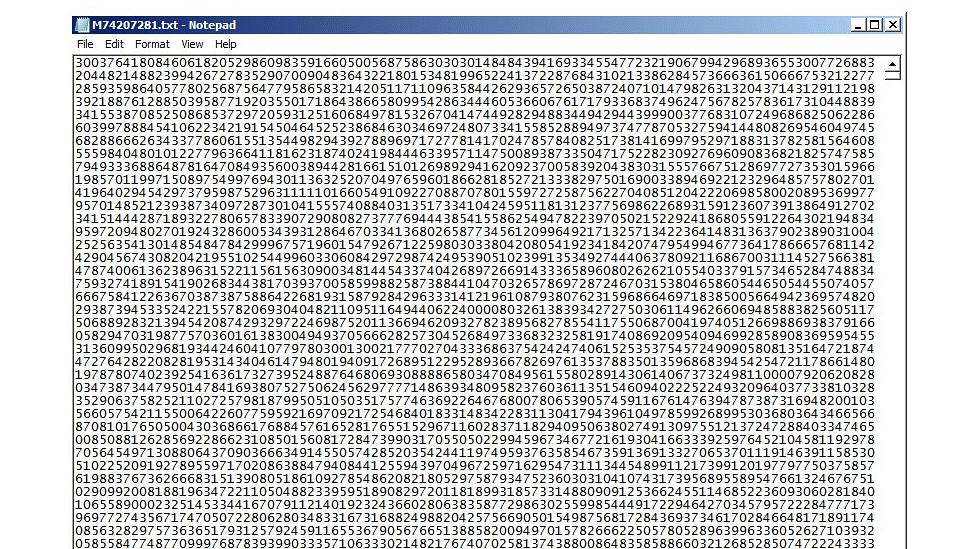

Technology also uses massive numbers as a benchmark for performance. One of the most famous examples is the Great Internet Mersenne Prime Search (GIMPS). This project utilizes the spare processing power of thousands of computers worldwide to find the largest prime numbers.

For several years the record-holder was $2^{82,589,933} - 1$, a Mersenne prime with nearly 25 million digits found in 2018; in October 2024, GIMPS announced a larger one, $2^{136,279,841} - 1$, with more than 41 million digits. These are not just “numbers”; they are the results of enormous amounts of CPU time. Finding these giants requires specialized software that can perform “BigInt” (arbitrary-precision) calculations, which are operations on numbers so large they must be stored across many machine words rather than in a single register. This pursuit pushes the boundaries of hardware stability and algorithmic efficiency, proving that in tech, the biggest number is often a moving target.
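To make the idea concrete, here is a small Python sketch of the Lucas-Lehmer test, the classic primality test for Mersenne numbers of the form $2^p - 1$. It leans on Python's built-in arbitrary-precision integers and is only practical for small exponents; the actual search software relies on far faster multiplication techniques, so treat this as an illustration of the mathematics rather than of how GIMPS is implemented.

```python
def is_mersenne_prime(p: int) -> bool:
    """Lucas-Lehmer test: is 2**p - 1 prime? Assumes p itself is prime."""
    if p == 2:
        return True  # 2**2 - 1 = 3 is prime; the loop below needs odd p
    m = (1 << p) - 1
    s = 4
    for _ in range(p - 2):
        s = (s * s - 2) % m
    return s == 0


candidates = (2, 3, 5, 7, 11, 13, 17, 19, 23, 31)
exponents = [p for p in candidates if is_mersenne_prime(p)]
print(exponents)

# Digit count of the Mersenne prime for p = 127, a 39-digit number.
print(len(str((1 << 127) - 1)))
```

Each loop iteration squares a number as large as the candidate itself, so the cost climbs steeply as the exponent grows into the tens of millions.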

Cryptography and the Security of Large Primes

In the realm of digital security, the biggest numbers serve a very practical purpose: they are the locks that protect our global financial systems, private communications, and national secrets. Modern public-key encryption such as RSA relies on the fact that while it is easy to multiply two large primes together, it is computationally “impossible” in practice, with any known classical algorithm, to factor the result back into the original primes if those primes are sufficiently large.
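The asymmetry between multiplying and factoring shows up even at toy scale. The Python sketch below multiplies two well-known primes in a single step, then recovers them by trial division, the most naive factoring method there is. The helper `trial_factor` is hypothetical, and real attacks use much better algorithms, but the work still grows explosively with the size of the primes.

```python
def trial_factor(n: int) -> tuple[int, int]:
    """Factor an odd semiprime by trial division (only viable for small n)."""
    f = 3
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 2
    raise ValueError("no odd factor found")


p = 65_537     # a Fermat prime, 2**16 + 1
q = 2**31 - 1  # a Mersenne prime

n = p * q  # multiplying is a single cheap operation
print(n)

# Factoring needs tens of thousands of trial divisions even here.
print(trial_factor(n))
```

With the roughly 1024-bit primes used in a 2048-bit RSA key, the same loop would not finish in any realistic amount of time.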

The RSA Algorithm: Why 2048-Bit Numbers Keep the World Safe

When you visit a secure website, the connection is often secured with the help of RSA. This technology typically relies on 2048-bit numbers. A 2048-bit number is 617 decimal digits long. To give you an idea of its size, the number of atoms in the observable universe is estimated to be around $10^{80}$. The largest 2048-bit number is approximately $3.2 \times 10^{616}$.
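The digit count is simple to check with Python's arbitrary-precision integers. This short sketch only measures the size of the largest 2048-bit value; it does not generate a real RSA key.

```python
# The largest value that fits in 2048 bits.
largest_2048 = (1 << 2048) - 1

digits = len(str(largest_2048))
print(digits)

# For comparison: the estimated number of atoms in the observable universe.
atoms = 10**80
print(len(str(atoms)))

# How many decimal orders of magnitude separate the two figures.
print(digits - len(str(atoms)))
```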

The “biggest number” in this context is the “work factor”—the amount of time it would take a supercomputer to crack the code. For a standard 2048-bit key, it is estimated that it would take trillions of years using current classical computing technology. In tech, these massive numbers are the foundation of trust. We rely on the sheer magnitude of these figures to ensure that even the most powerful government agencies cannot brute-force their way into encrypted data.

Quantum Computing: The Threat to Large Number Encryption

The relationship between technology and big numbers is about to change with the advent of quantum computing. While classical computers struggle to factor the product of two large primes, Shor’s algorithm, a quantum algorithm, could in theory do so in polynomial time on a sufficiently large, error-corrected quantum computer, turning a task of trillions of years into a practical one.
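Shor's algorithm reduces factoring to finding the period of $a^x \bmod N$; the quantum computer is needed only for that period-finding step. The Python sketch below performs the same reduction classically, with brute-force period finding that works only for tiny numbers. The function names are hypothetical, and nothing here is quantum: it illustrates the number theory, not a quantum speedup.

```python
from math import gcd
from typing import Optional, Tuple


def find_period(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1 (brute force; requires gcd(a, n) == 1)."""
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_via_period(n: int, a: int) -> Optional[Tuple[int, int]]:
    """Try to split n using the period of a modulo n; None if this a fails."""
    g = gcd(a, n)
    if g != 1:
        return g, n // g  # lucky guess: a already shares a factor with n
    r = find_period(a, n)
    if r % 2 == 1:
        return None  # odd period: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: pick another a
    p = gcd(half - 1, n)
    return p, n // p


print(find_period(7, 15))
print(factor_via_period(15, 7))
```

For $N = 15$ and $a = 7$ the period is 4, and $\gcd(7^2 - 1, 15) = 3$ reveals the factors 3 and 5.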

As a result, the tech industry is moving toward “Post-Quantum Cryptography” (PQC). Rather than simply making the numbers larger, this means building on different mathematical problems that are believed to resist quantum attacks, such as those based on lattices, often at the cost of larger keys and signatures. The “biggest number” in security keeps shifting as we race to stay ahead of the processing power of the next generation of hardware.

AI and the Scale of Parameters

In the current tech landscape, the most discussed “biggest numbers” aren’t about encryption or storage, but about the architecture of Artificial Intelligence. When we talk about the power of models like GPT-4, we are talking about their parameter count.

Large Language Models: Trillions of Parameters and Counting

In machine learning, a parameter is essentially a “weight” or a variable that the model learns during training. The first iterations of neural networks had thousands of parameters. By the time GPT-3 arrived, that number had grown to 175 billion. Current industry estimates suggest that the latest frontier models operate on numbers in the trillions.

These numbers represent a new kind of magnitude: computational complexity. To train a model with a trillion parameters, tech companies must coordinate thousands of GPUs (Graphics Processing Units) in parallel, performing quadrillions of floating-point operations per second (FLOPS). The scale of these numbers strongly influences the capability of the AI. In general, the larger the number of parameters, the more nuance, logic, and information the model can encapsulate, provided it is trained on enough data.
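A quick back-of-the-envelope calculation shows why parameter counts translate into hardware. The Python sketch below assumes each parameter is stored as a 16-bit (2-byte) value, a common but not universal choice, and counts only the weights themselves; training needs considerably more memory for gradients and optimizer state. The function name `weights_size_gb` is made up for illustration.

```python
def weights_size_gb(params: int, bytes_per_param: int = 2) -> float:
    """Storage for the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return params * bytes_per_param / 1e9


gpt3_gb = weights_size_gb(175_000_000_000)        # 175 billion parameters
trillion_gb = weights_size_gb(1_000_000_000_000)  # one trillion parameters

print(f"175B parameters: {gpt3_gb:,.0f} GB")
print(f"  1T parameters: {trillion_gb:,.0f} GB")
```

Under these assumptions, a trillion-parameter model needs about 2,000 GB for its weights alone, far more than a single GPU holds, which is one reason the work has to be spread across many devices.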

The Energy Cost of Processing Massive Datasets

There is a physical reality to these massive numbers. Every time a technology stack processes a trillion-parameter model or searches for a 25-million-digit prime, it consumes electricity. The “biggest number” in AI is often paired with another massive figure: the kilowatt-hours required to sustain the data centers.

As we push toward artificial general intelligence (AGI), the tech industry is running up against the practical consequences of scaling laws: to make the numbers bigger (more parameters, more data), we need more power. This has led to a surge in tech-driven energy solutions, from liquid-cooling systems for servers to tech giants investing in small modular nuclear reactors. The pursuit of the “biggest number” in AI is literally reshaping the world’s energy infrastructure.

Conclusion: The Infinite Horizon of Technology

So, what is the biggest number in the world? In mathematics, there is no end. But in technology, the “biggest number” is the one that sits at the very edge of our current ability to compute, store, and secure.

It is the 64-bit limit that defined a generation of hardware. It is the 2048-bit key that secures our bank accounts. It is the zettabyte of data that maps our collective human experience. And today, it is the trillions of parameters that allow a machine to converse with us.

As we move toward quantum computing and more advanced AI, these numbers will only continue to grow. We are no longer limited by what we can write down; we are only limited by the speed of light, the density of silicon, and the ingenuity of our algorithms. In the world of technology, the search for the biggest number is actually a search for the limits of human potential. As long as we continue to innovate, that number will never stop growing.
