In a world increasingly shaped by algorithms, advanced hardware, and digital innovation, the term “scientific method” might conjure images of white lab coats and beakers, far removed from the bustling tech industry. Yet to understand the pace of technological progress, the reliability of our software, or the capabilities of our AI systems, one must grasp the role the scientific method plays. Far from being an arcane academic pursuit, it underlies much of how code is written, how hardware is iterated, and how discoveries are made across the tech landscape. Its purpose, in essence, is to provide a systematic framework for understanding, predicting, and ultimately controlling phenomena, enabling us to build more robust, efficient, and intelligent technologies.

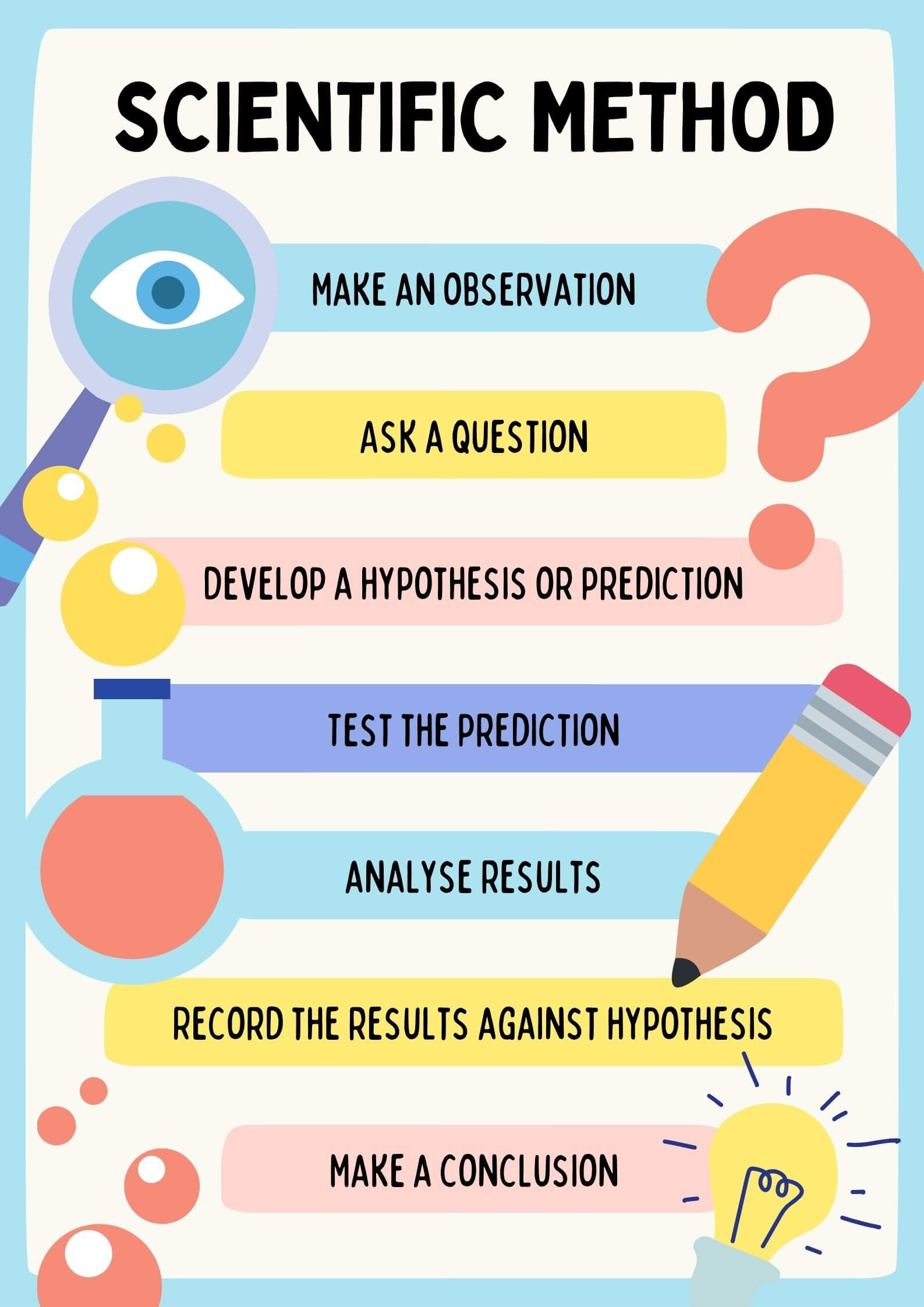

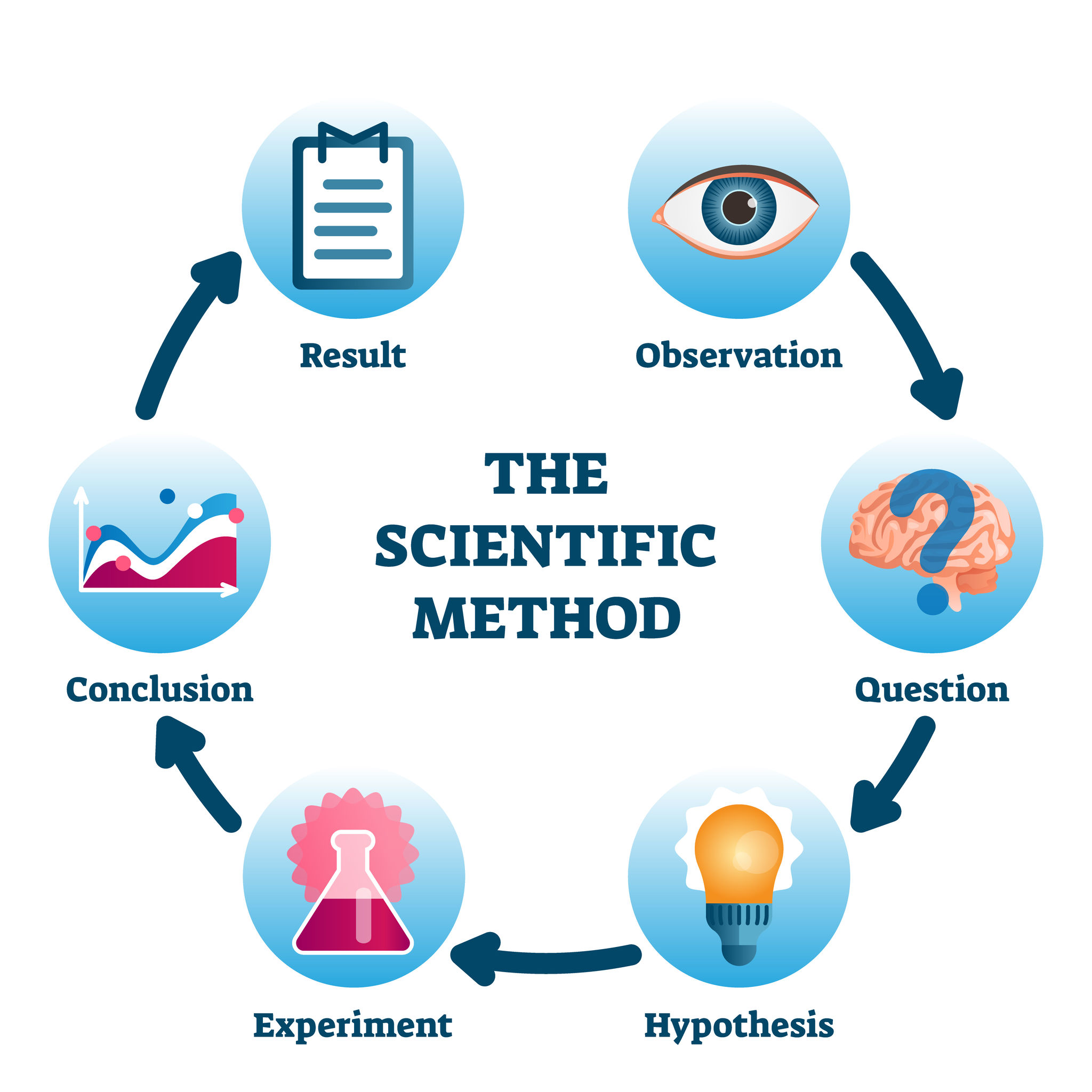

The scientific method is not a rigid, linear set of steps but rather an iterative process of observation, hypothesis formulation, experimentation, data analysis, and conclusion. It’s a commitment to empirical evidence and logical reasoning, rejecting dogma in favor of verifiable results. Within the technology sector, this methodology translates into a powerful mechanism for problem-solving, innovation, and validation, forming the bedrock upon which the entire industry is built.

The Foundational Pillar of Technological Advancement

At its core, technology is applied science. Whether we’re talking about quantum computing, advanced robotics, or the latest smartphone, each marvel of engineering stems from a deep understanding of natural laws and principles. The scientific method serves as the essential bridge connecting theoretical scientific discovery with practical technological implementation.

From Basic Research to Applied Innovation

Many technological breakthroughs, from the transistor to the internet, trace back to fundamental scientific inquiry. Researchers, guided by the scientific method, observed phenomena, proposed hypotheses about how things work, designed experiments to test these hypotheses, and rigorously analyzed the results. This iterative process of discovery builds a robust body of knowledge that engineers and developers then leverage. For instance, the understanding of semiconductor physics (a product of scientific methodology) made possible the transistor and, later, the microprocessor, which is the brain of nearly every modern computing device. Without the systematic approach of the scientific method in basic research, many of the conceptual frameworks required for applied technology would not exist. It provides the evidence and confidence needed to move from a theoretical concept to a viable, implementable solution.

Bridging Theory and Practicality in Tech

The chasm between a theoretical concept and a working product is often vast. The scientific method provides the tools to navigate this complexity. When a tech company aims to develop a new feature, a more efficient algorithm, or a groundbreaking gadget, they are essentially engaging in a series of scientific experiments. They hypothesize that a particular design or approach will yield desired results (e.g., “this new compression algorithm will reduce file size by 20% without noticeable quality loss”). They then design and execute experiments (write code, build prototypes, conduct A/B tests), collect data, and analyze whether their hypothesis holds true. This systematic validation process ensures that technological solutions are not just innovative but also functional, reliable, and performant in real-world applications. It’s the difference between a brilliant idea and a product that actually works and delivers value.
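The compression hypothesis above can be turned into a small, checkable experiment. The sketch below is illustrative only: it uses Python's lossless `zlib` as a stand-in for whatever algorithm a team is actually evaluating, so "no quality loss" reduces to a round-trip equality check. The 20% target, the sample inputs, and the helper names `size_reduction` and `hypothesis_holds` are assumptions made for this example.

```python
import os
import zlib


def size_reduction(data: bytes, level: int = 9) -> float:
    """Fraction by which zlib shrinks `data` (negative if the output grows)."""
    compressed = zlib.compress(data, level)
    return 1.0 - len(compressed) / len(data)


def hypothesis_holds(data: bytes, target: float = 0.20) -> bool:
    """True if the round trip is lossless and the reduction meets `target`."""
    compressed = zlib.compress(data, 9)
    lossless = zlib.decompress(compressed) == data
    return lossless and size_reduction(data) >= target


# Two hypothetical inputs: repetitive text and incompressible random bytes.
repetitive = b"the quick brown fox jumps over the lazy dog " * 200
random_bytes = os.urandom(4096)

for name, payload in [("repetitive", repetitive), ("random", random_bytes)]:
    print(name, f"{size_reduction(payload):.1%}", hypothesis_holds(payload))
```

The hypothesis is supported for the repetitive text and refuted for the random bytes, which shows that a claim like "20% smaller" only means something relative to a stated class of inputs.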

Driving Software Development and Engineering Excellence

The intricacies of software development and engineering, often perceived as a blend of art and logic, are profoundly rooted in the principles of the scientific method. From the initial ideation phase to deployment and maintenance, a systematic, evidence-based approach is paramount for creating reliable, efficient, and secure software.

Iterative Design and Agile Methodologies

Modern software development paradigms, particularly Agile and DevOps, intrinsically embody the scientific method. In Agile sprints, teams observe current system behavior or user needs, hypothesize solutions (e.g., “adding this button will improve user engagement”), develop a minimum viable product (MVP) or feature increment (experiment), collect user feedback and performance metrics (data analysis), and then draw conclusions to inform the next iteration. This continuous cycle of “build, measure, learn” is a direct application of the scientific method, allowing developers to adapt quickly, validate assumptions, and refine products based on empirical evidence rather than conjecture. It reduces risk and keeps development efforts aligned with real-world requirements and performance goals.
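The "measure" step of the button hypothesis above could look something like the following. This is a minimal sketch, assuming engagement is recorded as a yes/no outcome per user and that a two-proportion z-test is an acceptable analysis; the function name and all counts are invented for illustration.

```python
import math


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference between two conversion rates.

    Returns (z, p_value), where z > 0 means variant B converted better than A.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Two-sided tail probability of the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts: A is the current page, B adds the new button.
z, p_value = two_proportion_z_test(conv_a=200, n_a=2000, conv_b=260, n_b=2000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

With these made-up counts, z is roughly 2.97 and the p-value is about 0.003, so the team would treat the hypothesis as supported and carry the button into the next iteration. A p-value near 1 would send them back to form a new hypothesis.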

Debugging as a Mini-Scientific Experiment

Debugging, a ubiquitous task for any software developer, is a perfect microcosm of the scientific method in action. When a bug appears, the developer observes the anomalous behavior, forms a hypothesis about the root cause (e.g., “this crash is due to an unhandled null pointer exception in module X”), designs an experiment to test this hypothesis (e.g., setting a breakpoint, stepping through code, or adding logging statements), collects data (observing variable states, call stacks), and then draws a conclusion to either confirm or refute the hypothesis. If refuted, a new hypothesis is formed, and the cycle continues. This systematic approach ensures that problems are diagnosed accurately and fixed effectively, preventing the costly and time-consuming “shotgun debugging” where changes are made randomly without a clear understanding of the problem.
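One way to make the hypothesize, test, refine loop concrete is bisection, the idea behind tools such as `git bisect`. The text above does not describe this technique; it is offered here as one example of systematic debugging. Each step tests the hypothesis "the bug was already present at this version" and discards half of the remaining candidates. The version numbers and the `is_bad` check below are hypothetical, and the sketch assumes the last version is known to be bad and that every version after the first bad one is also bad.

```python
def first_bad(versions, is_bad):
    """Return (first bad version, number of tests run).

    Assumes `versions` is ordered oldest to newest, the last one is bad,
    and every version after the first bad one is also bad.
    """
    lo, hi = 0, len(versions) - 1
    tests_run = 0
    while lo < hi:
        mid = (lo + hi) // 2
        tests_run += 1
        if is_bad(versions[mid]):
            hi = mid        # hypothesis confirmed: bug is at mid or earlier
        else:
            lo = mid + 1    # hypothesis refuted: bug came in after mid
    return versions[lo], tests_run


# Hypothetical history of 16 versions where the bug appeared in version 11.
versions = list(range(1, 17))
culprit, tests_run = first_bad(versions, is_bad=lambda v: v >= 11)
print(culprit, tests_run)  # 11 4
```

Four targeted experiments locate the culprit among sixteen candidates, whereas random edits give no such guarantee.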

Ensuring Software Reliability and Performance

Beyond fixing immediate issues, the scientific method is crucial for proactively ensuring the overall reliability, scalability, and performance of software systems. Performance testing, load testing, and security audits are all structured experiments designed to test specific hypotheses about a system’s resilience under various conditions. Developers hypothesize that a system can handle ‘X’ concurrent users or that a specific encryption protocol is robust against ‘Y’ type of attack. They then run controlled experiments, measure key metrics (latency, throughput, error rates, vulnerability scores), analyze the data, and refine the software accordingly. This rigorous, evidence-based approach is indispensable for building software that can withstand the demands of production environments and meet user expectations for stability and speed.
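A performance hypothesis of this kind is only testable once it is stated in numbers. The sketch below assumes a hypothesis of the form "95th-percentile latency stays under a budget and the error rate stays under a threshold"; the budgets, the measurements, and the function names are all invented for illustration, and the percentile uses the simple nearest-rank definition.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of `samples` for an integer `pct` in 1..100."""
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def supports_hypothesis(latencies_ms, failed, p95_budget_ms, max_error_rate):
    """Compare measured p95 latency and error rate against the stated budgets."""
    total_requests = len(latencies_ms) + failed
    error_rate = failed / total_requests
    return percentile(latencies_ms, 95) <= p95_budget_ms and error_rate <= max_error_rate


# Made-up latencies of successful requests from one hypothetical load-test run.
latencies_ms = [20] * 90 + [50] * 8 + [400] * 2
failed_requests = 1

print("p95:", percentile(latencies_ms, 95), "ms")  # p95: 50 ms
print("p99:", percentile(latencies_ms, 99), "ms")  # p99: 400 ms
print("supported:", supports_hypothesis(latencies_ms, failed_requests,
                                        p95_budget_ms=100, max_error_rate=0.02))
```

Note how the p95 figure looks healthy while p99 exposes a slow tail. Which percentile the hypothesis names is part of the experimental design, not an afterthought.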

The Engine Behind Artificial Intelligence and Data Science

Perhaps nowhere is the scientific method more explicitly foundational than in the fields of Artificial Intelligence (AI) and Data Science. These disciplines are inherently experimental, relying on systematic inquiry to develop, validate, and improve intelligent systems.

Hypothesis Testing in Machine Learning

Most machine learning projects begin with a hypothesis, whether or not it is stated explicitly. For example, “this convolutional neural network architecture will classify images with X% accuracy on unseen data,” or “these features, when fed into a logistic regression model, will predict customer churn with precision Y.” Data scientists then design experiments: they select datasets, preprocess data, train models, tune hyperparameters, and evaluate performance using specific metrics. The results of these experiments either support or refute the initial hypothesis, guiding subsequent iterations of model development. This iterative, hypothesis-driven approach is critical for optimizing model performance, detecting overfitting, and building AI solutions that are robust and reliable.

Validating AI Models and Algorithms

The scientific method is central to the rigorous validation of AI models. It’s not enough for an AI model to perform well on training data; its true utility lies in its ability to generalize to new, unseen data. This requires carefully designed validation experiments, often involving splitting data into training, validation, and test sets, and employing cross-validation techniques. Data scientists hypothesize that a model will perform consistently across different data subsets. Through systematic testing and statistical analysis of metrics like accuracy, precision, recall, F1-score, or AUC, they gather empirical evidence to validate the model’s generalization capabilities. This systematic validation is crucial for deploying trustworthy AI systems in critical applications like autonomous vehicles, medical diagnostics, or financial fraud detection.
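The two building blocks mentioned above, splitting data into folds and scoring predictions, can be sketched in plain Python. This is a simplified illustration rather than a substitute for a library implementation: the folds are contiguous and unshuffled, the labels are binary with 1 as the positive class, and the function names and sample labels are assumptions made for this example.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, validation_indices) for k contiguous folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        validation = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n_samples))
        yield train, validation
        start += size


def precision_recall_f1(y_true, y_pred):
    """Precision, recall, and F1 for binary labels (1 = positive class)."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Every sample lands in exactly one validation fold.
for train, validation in k_fold_indices(n_samples=10, k=3):
    print("validate on", validation)

# Hypothetical ground truth and predictions for one fold.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
print(precision_recall_f1(y_true, y_pred))
```

In a real experiment, a model would be trained on each `train` split and scored on the matching `validation` split. Consistent scores across folds are evidence for the generalization hypothesis, while a wide spread is evidence against it.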

Reproducibility and Explainability in AI

A core tenet of the scientific method is reproducibility – the ability for other researchers to replicate an experiment and obtain similar results. In AI, this translates to documenting code, data preprocessing steps, model architectures, and training procedures with sufficient detail so that others can independently verify findings. Moreover, as AI systems become more complex, the need for explainability (understanding why an AI makes certain decisions) grows. The scientific method encourages systematic investigation into model behavior, using techniques like SHAP values or LIME to hypothesize about feature importance or decision paths, and then testing these hypotheses with various inputs. This commitment to reproducibility and explainability, driven by scientific rigor, fosters trust in AI systems and advances the collective understanding of artificial intelligence.
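Two habits cover much of the reproducibility part in practice: fixing every source of randomness from a recorded seed, and recording exactly which configuration produced a result. The sketch below assumes a toy "experiment" that just averages random draws. The config keys, `run_experiment`, and `config_fingerprint` are hypothetical names, and real training pipelines have further sources of nondeterminism that this does not address.

```python
import hashlib
import json
import random


def run_experiment(config):
    """Toy experiment: the mean of seeded Gaussian draws stands in for a metric."""
    rng = random.Random(config["seed"])  # local generator, no hidden global state
    draws = [rng.gauss(0.0, 1.0) for _ in range(config["n_samples"])]
    return sum(draws) / len(draws)


def config_fingerprint(config):
    """Stable SHA-256 hash of the configuration, independent of key order."""
    canonical = json.dumps(config, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


config = {"seed": 42, "n_samples": 1000, "model": "baseline"}

first = run_experiment(config)
second = run_experiment(config)
print("identical reruns:", first == second)
print("fingerprint:", config_fingerprint(config)[:12])
```

Storing the fingerprint next to each reported metric lets someone else confirm they are rerunning the same experiment rather than a near copy.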

Fostering Innovation and Problem-Solving in Hardware and Gadgets

The tangible world of hardware and consumer electronics, from microchips to smart devices, is also a testament to the continuous application of the scientific method. Developing physical products involves a cycle of design, testing, and refinement that mirrors scientific experimentation.

Prototyping, Testing, and Refinement

When engineers design a new gadget – perhaps a more energy-efficient processor or a durable smartphone casing – they begin with a hypothesis about how a particular material, design, or component will perform. They then build prototypes (the experimental setup), conduct a battery of tests (the experiment itself), ranging from thermal stress tests to electromagnetic compatibility checks, and meticulously collect data on performance, durability, and failure points. This empirical data is then analyzed to draw conclusions, which inform the next iteration of the design. This iterative prototyping and testing cycle, a direct application of the scientific method, is indispensable for overcoming engineering challenges and bringing robust, high-performance hardware to market. It ensures that products are not only functional but also reliable and safe.

Optimizing User Experience Through Systematic Inquiry

Even the subjective realm of user experience (UX) design in hardware benefits immensely from the scientific method. When a company develops a new user interface for a smart device or designs the ergonomic contours of a VR headset, they don’t rely solely on intuition. They hypothesize about what design elements will lead to the most intuitive or comfortable user experience. They then conduct user studies, A/B testing of different interface layouts, or gather biometric data to quantify user interaction (the experiment). The data collected (e.g., task completion times, error rates, eye-tracking data) is then analyzed to objectively assess which designs perform best and why. This systematic, data-driven approach allows hardware manufacturers to optimize for usability and user satisfaction, transforming subjective preferences into empirically validated design decisions.
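For small user studies like those described above, an exact permutation test is one simple way to ask whether a difference in task completion times could plausibly be chance. The sketch below is an assumption-laden illustration: the timings are invented, the two layouts are hypothetical, and enumerating every regrouping is only practical for small samples like these.

```python
from itertools import combinations
from statistics import mean


def permutation_test(group_a, group_b):
    """Exact two-sided permutation test on the difference in means.

    Returns the fraction of all regroupings whose absolute mean difference
    is at least as large as the one actually observed.
    """
    pooled = group_a + group_b
    n_a, n_b = len(group_a), len(group_b)
    total = sum(pooled)
    observed = abs(mean(group_a) - mean(group_b))
    extreme = 0
    count = 0
    for indices in combinations(range(len(pooled)), n_a):
        sum_a = sum(pooled[i] for i in indices)
        diff = abs(sum_a / n_a - (total - sum_a) / n_b)
        if diff >= observed - 1e-12:  # tolerance for floating-point rounding
            extreme += 1
        count += 1
    return extreme / count


# Hypothetical task completion times in seconds for two interface layouts.
layout_a = [12.1, 11.8, 13.0, 12.5, 12.9, 11.6]
layout_b = [10.2, 9.8, 10.9, 10.1, 10.5, 9.9]

p_value = permutation_test(layout_a, layout_b)
print(f"p = {p_value:.4f}")
```

Because every participant using layout B finished faster than every participant using layout A, only 2 of the 924 possible regroupings are as extreme as the observed one. That gives the smallest p-value this sample size allows, about 0.002.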

In conclusion, the purpose of the scientific method within the tech industry is multifaceted and indispensable. It is the framework that enables us to move from conjecture to evidence, from problem to solution, and from idea to innovation. It underpins the reliability of our software, the intelligence of our AI, the robustness of our hardware, and the security of our digital lives. By fostering a culture of systematic inquiry, experimentation, and empirical validation, the scientific method helps ensure that technological progress is not merely rapid but also robust, reliable, and beneficial to humanity. It is the compass guiding the exploration of new frontiers in technology, keeping each step forward grounded in verifiable evidence.
