The question “what is the percentage of school shootings in america” stands as a poignant example of the urgent, complex, and often emotionally charged queries we pose to our digital systems daily. While the immediate impulse might be to seek a definitive numerical answer, this title, when viewed through the lens of technology, transforms into a profound prompt for exploring the intricate world of data collection, algorithmic interpretation, information dissemination, and the ethical responsibilities inherent in our interconnected digital age. It’s a query that doesn’t just demand a statistic; it implicitly challenges the technological infrastructure that attempts to provide one, highlighting the need for robust systems that prioritize accuracy, context, and ethical considerations.

In an era where information is abundant yet reliable insights can be scarce, understanding the “percentage” of anything, especially sensitive social phenomena, requires an appreciation for the technological underpinnings of data science. From the vast datasets that need to be aggregated and analyzed, to the artificial intelligence algorithms that sift through information, and the user interfaces that present findings, technology shapes every step of our quest for truth. This article delves into how technology addresses such complex data inquiries, focusing not on the specific numerical answer to the original title, but on the technological journey and challenges involved in arriving at any such sensitive statistical conclusion. It examines the digital tools and frameworks that enable us to ask, process, and ultimately comprehend the percentages that define our world, underscoring the vital role of tech in shaping public understanding and discourse.

The Digital Quest for Definitive Data: Beyond Simple Percentages

When a user types a question like “what is the percentage of school shootings in america” into a search engine or data analytics platform, they initiate a complex technological process far beyond a simple database lookup. This query triggers a sophisticated interplay of algorithms, data aggregation techniques, and information retrieval systems designed to distill vast oceans of raw data into a digestible insight. However, the path to a reliable percentage is fraught with technological challenges, ranging from the sheer volume and varied nature of data sources to the inherent biases that can creep into both collection and analysis. Understanding these complexities is crucial for anyone seeking accurate and contextually rich statistical information online.

Navigating Data Sources and Veracity in the Age of Information Overload

The first technological hurdle in answering any statistical question lies in identifying and evaluating data sources. For a query concerning a sensitive topic like the one in our title, relevant data might be scattered across various governmental databases, academic research papers, non-profit organization reports, news archives, and even social media feeds. Each source comes with its own collection methodologies, reporting standards, and potential biases. Tech solutions, such as web scraping tools, natural language processing (NLP) algorithms, and sophisticated database management systems, are essential for aggregating this disparate information.

NLP, for instance, can be used to extract structured data from unstructured text documents, identifying key events, dates, and locations. Machine learning models can then be deployed to de-duplicate records, resolve ambiguities, and normalize data formats, transforming a chaotic collection of information into a cohesive dataset. However, these tools are only as effective as the quality of the input. Verifying the veracity of each source remains a critical human and technological challenge, often requiring algorithmic checks for consistency, cross-referencing with trusted sources, and even blockchain technologies in some emerging applications to establish an immutable audit trail for data provenance. The technological infrastructure for source validation is continuously evolving to combat the pervasive problem of misinformation, which can profoundly skew any reported percentage.
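The normalization and de-duplication step described above can be sketched in a few lines. This is a minimal illustration rather than a production pipeline: the `Record` type, the source labels, the place names, and the three date formats are all hypothetical, and real systems would typically need fuzzy matching instead of an exact key.

```python
from dataclasses import dataclass
from datetime import date, datetime
import re


@dataclass(frozen=True)
class Record:
    source: str      # hypothetical source label
    event_date: str  # date exactly as the source wrote it
    location: str    # free-text place name


# Assumed set of formats; real sources would need a longer list.
DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%B %d, %Y")


def normalize_date(raw: str) -> date:
    """Try each known format until one parses."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date format: {raw!r}")


def normalize_location(raw: str) -> str:
    """Lowercase, replace punctuation with spaces, collapse whitespace."""
    cleaned = re.sub(r"[^\w\s]", " ", raw.lower())
    return " ".join(cleaned.split())


def deduplicate(records):
    """Group records sharing a normalized (date, location) key."""
    merged = {}
    for rec in records:
        key = (normalize_date(rec.event_date), normalize_location(rec.location))
        merged.setdefault(key, []).append(rec.source)
    return merged


# Made-up records: three sources describe the same event differently.
records = [
    Record("agency_db", "2020-03-05", "Example City, XX"),
    Record("news_archive", "03/05/2020", "example city xx"),
    Record("ngo_report", "March 5, 2020", "Example City,  XX"),
    Record("news_archive", "2021-01-10", "Sampletown, YY"),
]
events = deduplicate(records)
print(len(events))  # number of distinct events after merging
```

Keeping the list of contributing sources for each merged event preserves provenance, which is what later cross-referencing and auditing depend on.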

The Algorithmic Lens: How Search Engines Shape Our Understanding

Once data is gathered and somewhat standardized, search engines and AI-powered analytical platforms play a pivotal role in filtering, ranking, and presenting information. When a user asks “what is the percentage of school shootings in america,” the underlying algorithms don’t just pull a number; they interpret the query’s intent, identify relevant keywords, and then scan vast indices of web pages, databases, and cached information. These algorithms decide what information is most authoritative, recent, and relevant, effectively shaping the user’s perception of the statistic.

However, this algorithmic lens is not neutral. Search algorithms are designed with various objectives, including relevance, authority, freshness, and user engagement, and the way those objectives are weighted can inadvertently introduce biases. For instance, an algorithm might prioritize highly publicized incidents or data from sources that frequently update their content, potentially overshadowing less reported but equally valid data. Furthermore, machine learning models trained on historical data can perpetuate existing societal biases if not carefully audited and mitigated. Search engine optimization (SEO) also means that certain information may be more visible not because of its inherent truth, but because of its digital packaging. Ethical AI development and transparent algorithmic design are paramount to ensure that the “percentage” presented reflects the most accurate and unbiased representation available, rather than a curated or inadvertently skewed result.
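To make the point about ranking objectives concrete, here is a toy sketch of a weighted scoring function. It is not how any real search engine works: the `Doc` fields, the two documents, and both weight sets are invented for illustration, and actual ranking systems use far more signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Doc:
    name: str
    relevance: float   # all signals are hypothetical scores in [0, 1]
    authority: float
    freshness: float
    engagement: float


def score(doc: Doc, weights: dict) -> float:
    """Weighted sum of the signals named in `weights`."""
    return sum(w * getattr(doc, signal) for signal, w in weights.items())


def rank(docs, weights):
    """Return document names ordered from highest to lowest score."""
    ordered = sorted(docs, key=lambda d: score(d, weights), reverse=True)
    return [d.name for d in ordered]


docs = [
    Doc("official_report", relevance=0.9, authority=0.9, freshness=0.2, engagement=0.1),
    Doc("viral_post", relevance=0.6, authority=0.2, freshness=0.9, engagement=0.9),
]

# Two made-up weightings of the same four objectives.
authority_first = {"relevance": 0.4, "authority": 0.4, "freshness": 0.1, "engagement": 0.1}
engagement_first = {"relevance": 0.2, "authority": 0.1, "freshness": 0.3, "engagement": 0.4}

print(rank(docs, authority_first))
print(rank(docs, engagement_first))
```

The same two documents swap places depending only on the weights, which is why the choice of objectives matters as much as the underlying data.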

The Technological Infrastructure of Statistical Reporting and Analysis

Beyond the initial query and information retrieval, the accurate derivation and presentation of complex statistics depend heavily on advanced technological infrastructures. From the granular processing of raw numbers to their visualization in intuitive dashboards, technology underpins every stage of transforming data into meaningful insights. For sensitive subjects like the one in our title, this infrastructure must not only be powerful but also designed with precision and a deep understanding of its societal impact.

From Raw Data to Actionable Insights: The Role of Data Science and AI

Data science forms the backbone of translating raw figures into actionable insights. It involves a sophisticated interplay of statistical modeling, machine learning algorithms, and high-performance computing. To calculate a percentage related to a specific type of event, data scientists employ various techniques to identify discrete occurrences, categorize them consistently, and then aggregate them over defined periods and geographical areas. A percentage also needs an explicitly defined denominator (for example, all recorded incidents in a period, or all institutions in a region), and different choices yield different figures. This often requires advanced classification algorithms that can distinguish between different types of incidents from textual descriptions or other unstructured data.
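As a rough sketch of the aggregation step, the snippet below counts classified records within a defined scope and converts the count to a percentage against two different denominators. Every value is a placeholder: the records, the category labels, and the figure of 200 sites are invented solely to show the arithmetic and do not describe any real dataset.

```python
def percentage(numerator: int, denominator: int) -> float:
    """Return numerator as a percentage of denominator."""
    if denominator <= 0:
        raise ValueError("denominator must be a positive count")
    return 100.0 * numerator / denominator


def count_matching(records, *, year, category, region=None):
    """Count records in the given year and category, optionally in one region."""
    return sum(
        1
        for (rec_year, rec_region, rec_category) in records
        if rec_year == year
        and rec_category == category
        and (region is None or rec_region == region)
    )


# Hypothetical, already-classified records: (year, region, category).
records = [
    (2021, "north", "type_a"),
    (2021, "north", "type_b"),
    (2021, "south", "type_a"),
    (2022, "north", "type_a"),
]

in_scope = count_matching(records, year=2021, category="type_a")
all_2021 = sum(1 for (rec_year, _, _) in records if rec_year == 2021)
total_sites = 200  # assumed denominator, purely illustrative

share_of_records = percentage(in_scope, all_2021)   # share of that year's records
share_of_sites = percentage(in_scope, total_sites)  # share of all sites

print(round(share_of_records, 2), round(share_of_sites, 2))
```

The numerator is identical in both calls; only the denominator changes, which is why a reported percentage is hard to interpret unless both are stated.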

Furthermore, AI-driven analytics can identify trends, correlations, and anomalies that might not be apparent to human observers. Predictive analytics, for instance, uses historical data and machine learning models to forecast future probabilities or identify contributing factors. While the immediate question is about a current percentage, the underlying technological capacity often extends to understanding why that percentage exists and what factors might influence it. However, the application of AI in such sensitive contexts necessitates rigorous validation and interpretability. “Explainable AI” (XAI) technologies are emerging to help data scientists and users understand how an AI arrived at its conclusions, ensuring transparency and building trust, especially when the statistics have significant societal implications. The responsible deployment of AI ensures that derived percentages are not just numbers, but insights grounded in robust and auditable methodologies.

Visualizing Complex Truths: Tools for Transparent Data Presentation

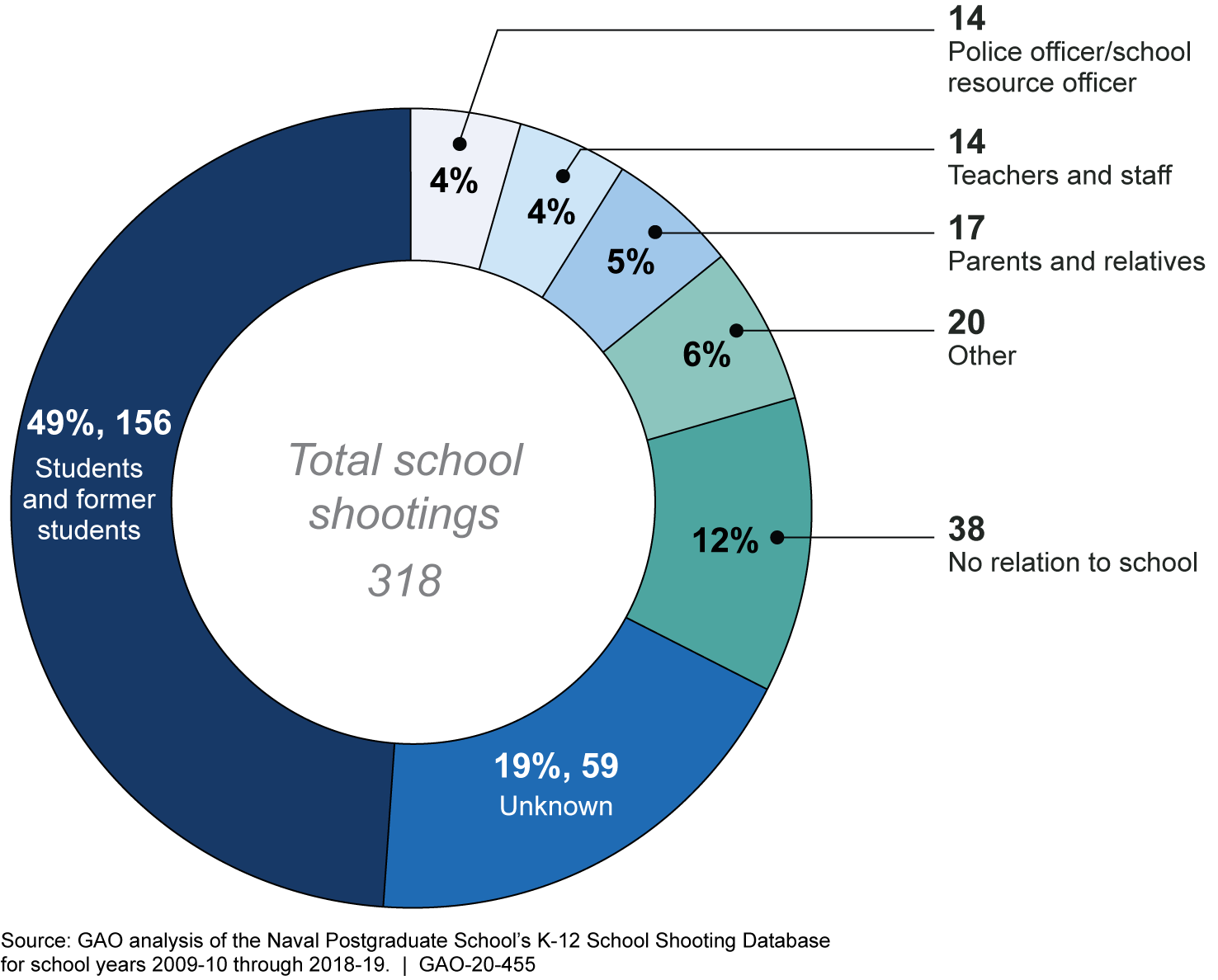

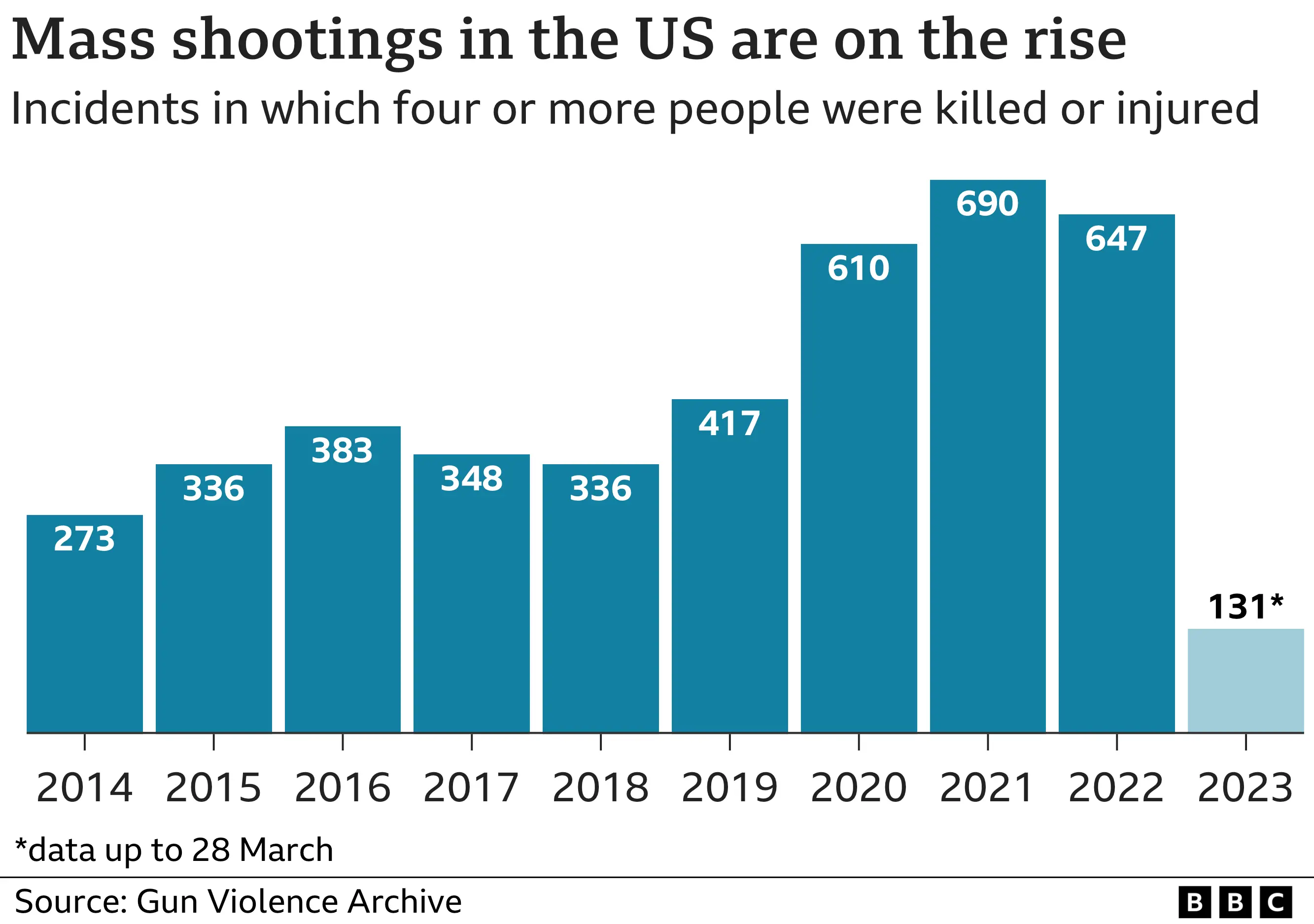

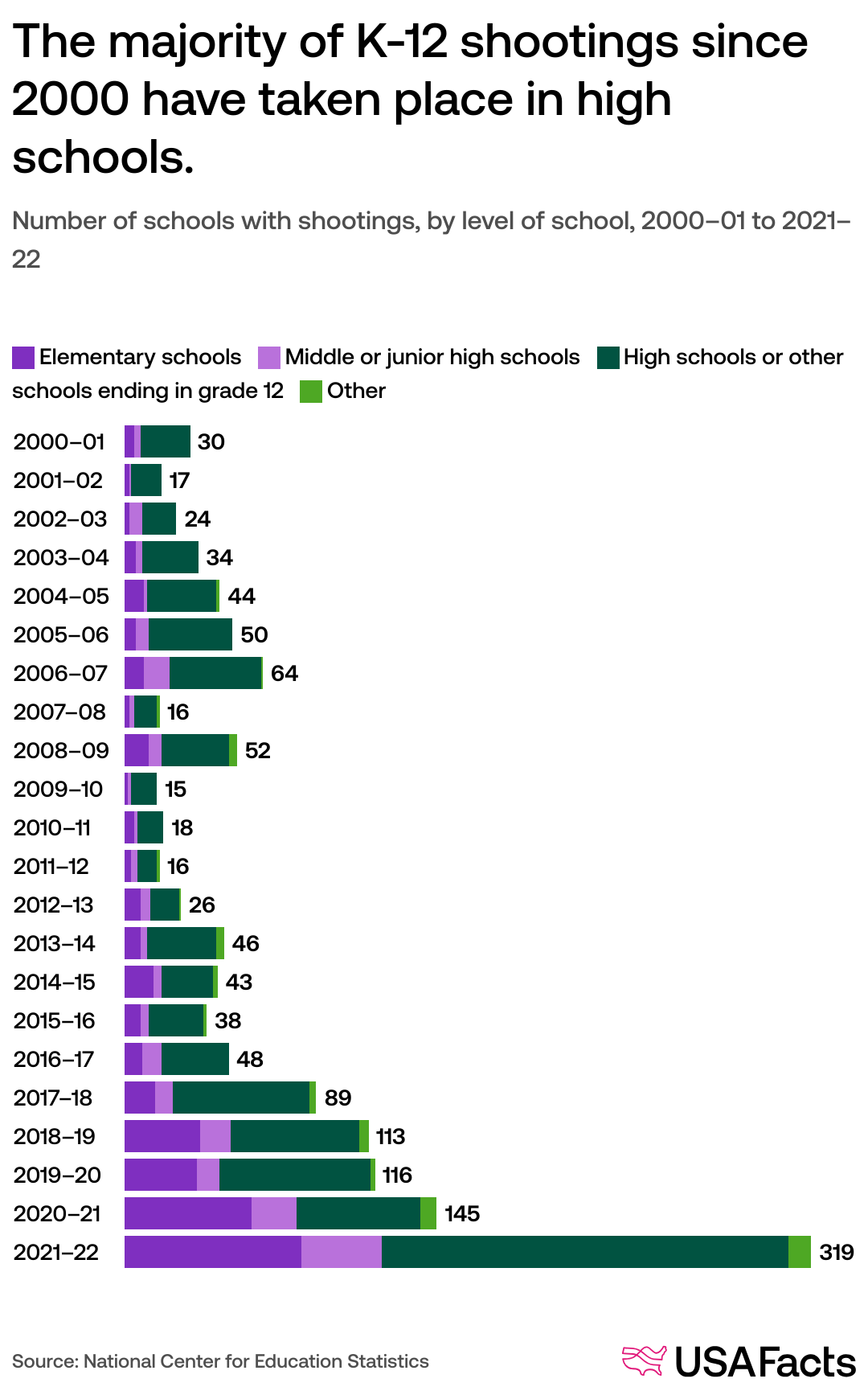

Once a percentage is derived, its effective communication is paramount. Data visualization tools and platforms transform complex datasets into understandable graphics, charts, and interactive dashboards. For a question like “what is the percentage of school shootings in america,” simply presenting a raw number might be insufficient or even misleading without proper context. Visualization tools allow for the addition of crucial details: trends over time, geographical distribution, demographic breakdowns, and comparisons with other relevant data points.

Interactive dashboards, built with technologies like D3.js, Tableau, or Power BI, empower users to explore the data themselves, applying filters, drilling down into specific sub-categories, and customizing their view of the statistics. This technological capability democratizes access to data analysis, moving beyond static reports to dynamic, user-driven exploration. Moreover, these tools are increasingly incorporating accessibility features to ensure that insights are available to individuals with diverse needs. Ethical data visualization emphasizes clarity, avoids misleading graphical representations, and provides clear citations for data sources and methodologies. The goal is to use technology to not just present a number, but to facilitate a comprehensive and transparent understanding of the underlying phenomenon.

Digital Ethics and the Responsibility of Information Dissemination

The pursuit and presentation of statistics, particularly those pertaining to sensitive social issues, carry significant ethical weight. In the digital age, where information spreads globally in an instant, the responsibility of technology platforms and content creators to ensure accuracy and context is paramount. The question “what is the percentage of school shootings in america” underscores the need for robust ethical frameworks in data science and information technology to counter misinformation and foster informed public discourse.

Combating Misinformation and Disinformation in Sensitive Statistical Contexts

The digital landscape is a fertile ground for both genuine inquiry and deliberate falsehoods. When sensitive statistics are involved, misinformation (unintentional inaccuracy) and disinformation (deliberate falsehoods) can have devastating real-world consequences, eroding public trust and hindering effective policy-making. Technology plays a dual role here: it is both a vector for the rapid spread of false information and a crucial tool in its detection and mitigation.

Advanced AI models, including natural language processing and machine learning, are now being deployed to identify patterns indicative of misinformation. These systems can analyze text for sensationalism, inconsistencies, lack of credible sources, and even detect deepfakes or AI-generated content designed to mislead. Fact-checking organizations increasingly leverage tech tools to automate parts of their verification process, cross-referencing claims with trusted databases and official reports at speeds impossible for human analysts alone. Social media platforms, while often criticized for facilitating the spread of misinformation, are also investing heavily in AI-powered content moderation and labeling systems to flag unverified or false statistics. However, these technological solutions require continuous refinement and human oversight to avoid false positives and adapt to the ever-evolving tactics of those who spread disinformation.

Fostering Digital Literacy: Empowering Users to Critically Evaluate Data

While technological solutions for identifying and combating misinformation are vital, the ultimate defense lies in empowering users with digital literacy. This refers to the ability to locate, evaluate, synthesize, and communicate information using digital technologies, and, crucially, to critically assess the reliability of sources and the validity of statistics encountered online. For complex questions like “what is the percentage of school shootings in america,” a digitally literate individual would not simply accept the first number presented.

Technology plays a role in fostering this literacy through educational tools, online courses, and interactive platforms that teach critical thinking skills. Search engines can be designed to provide context clues about the reliability of sources, offer multiple perspectives, and highlight methodological transparency. Browser extensions and apps can assist users in identifying bias or verifying facts in real-time. By understanding how algorithms work, how data can be manipulated, and the importance of source verification, users can become more discerning consumers of information. The goal is to move beyond passive consumption to active, critical engagement with the data, ensuring that “percentages” are understood not just as numbers, but as complex summaries derived from specific methodologies, potentially carrying inherent limitations.

The Future of Data Intelligence: Predictive Analytics and Proactive Tech Solutions

The effort to understand percentages doesn’t end with current figures; it extends to using technology to anticipate future trends and inform proactive strategies. The field of data intelligence is rapidly advancing, moving beyond descriptive statistics to predictive modeling, offering a glimpse into how technology might help societies respond more effectively to complex challenges.

AI in Early Warning Systems: Leveraging Data for Prevention (Ethically)

The sophisticated data collection and analytical capabilities discussed throughout this article open doors to the development of early warning systems. While directly predicting specific events remains ethically fraught and technically challenging, AI can be responsibly deployed to identify broader risk factors, patterns, and indicators across vast datasets. For example, machine learning models could analyze anonymized public data (such as socio-economic indicators, community engagement levels, public health statistics, and online sentiment data) to identify geographic areas or demographic cohorts that might be experiencing increased stress or exhibiting certain patterns associated with a range of social challenges.

Such systems would not pinpoint individuals but rather highlight systemic vulnerabilities, allowing communities and policymakers to allocate resources more effectively towards preventative measures and support systems. This approach emphasizes privacy-preserving analytics, aggregation of non-identifiable data, and a focus on broad societal health rather than individual surveillance. The ethical development of AI in this context involves rigorous oversight, transparency in methodology, and a commitment to using technology for societal good by informing proactive, rather than purely reactive, strategies. The aim is to leverage the power of data intelligence not just to measure problems, but to contribute to their mitigation, fostering a safer and more informed future.
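One simple way to picture the aggregation of non-identifiable data is small-cell suppression: report counts only at group level and withhold any group too small to hide an individual. The sketch below assumes a threshold of 5 and uses made-up region labels. Suppression alone is not a complete privacy guarantee, and real deployments would combine it with other safeguards.

```python
from collections import Counter


def aggregate_with_suppression(region_labels, min_cell_size=5):
    """Count rows per region; replace counts below the threshold with None.

    `min_cell_size=5` is an assumed threshold chosen for illustration.
    """
    counts = Counter(region_labels)
    return {
        region: (n if n >= min_cell_size else None)
        for region, n in counts.items()
    }


# Hypothetical input: one region label per anonymized row.
rows = ["region_a"] * 12 + ["region_b"] * 3 + ["region_c"] * 7

summary = aggregate_with_suppression(rows)
print(summary)
```

Because only region-level counts leave the function, downstream analysis works with systemic patterns rather than individual rows.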

The question “what is the percentage of school shootings in america” transcends a simple search query; it encapsulates a multifaceted technological challenge. It forces us to confront the complexities of data acquisition, the biases of algorithmic processing, the responsibilities of information dissemination, and the ethical imperatives of leveraging advanced tech for societal good. The quest for this “percentage,” or any other sensitive statistic, is less about finding a single definitive number and more about understanding the sophisticated digital ecosystem that governs how such numbers are generated, accessed, interpreted, and ultimately acted upon. As technology continues to evolve, so too must our commitment to developing ethical, transparent, and robust systems that empower us to critically engage with, and wisely apply, the percentages that define our world.
