Much of modern technology runs on data and algorithms, and both rest on basic numerical ideas. Fractions are among the most basic of these, and they shape how information is processed, transmitted, and stored. This article looks at the numerator, the top number of a fraction, and at where the idea shows up in technology: digital signal processing, data compression, network protocols, and software engineering. Understanding the numerator is not merely an academic exercise; it helps explain the fine-grained mechanisms behind many digital systems.

The Foundational Element: Defining the Numerator and Denominator

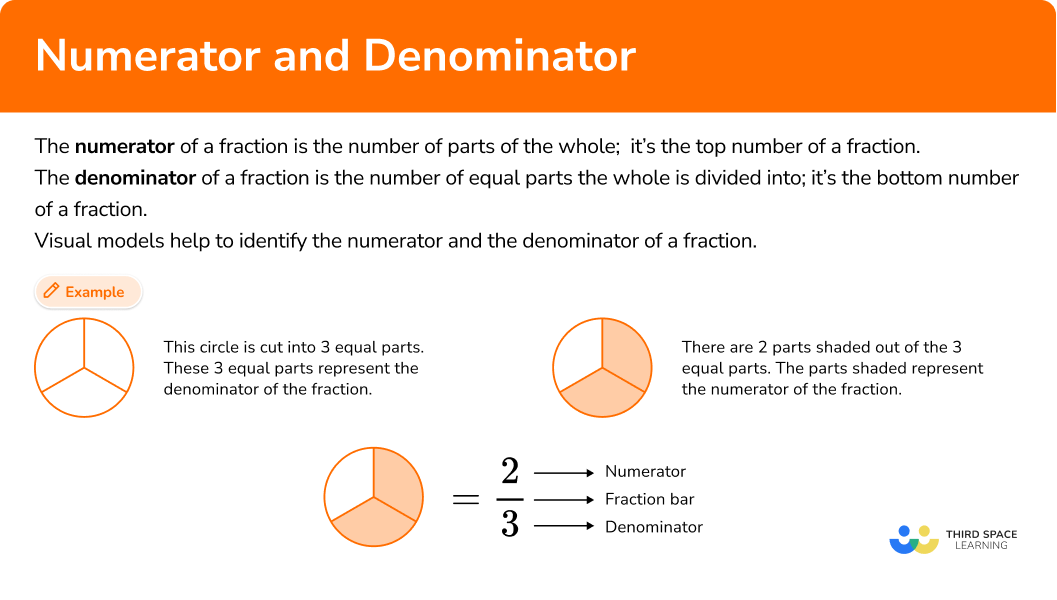

At its heart, a fraction is a representation of a part of a whole. It’s a concise way to express division or a ratio. Every fraction is composed of two distinct parts, each carrying vital information.

The Upper Half: The Numerator’s Identity

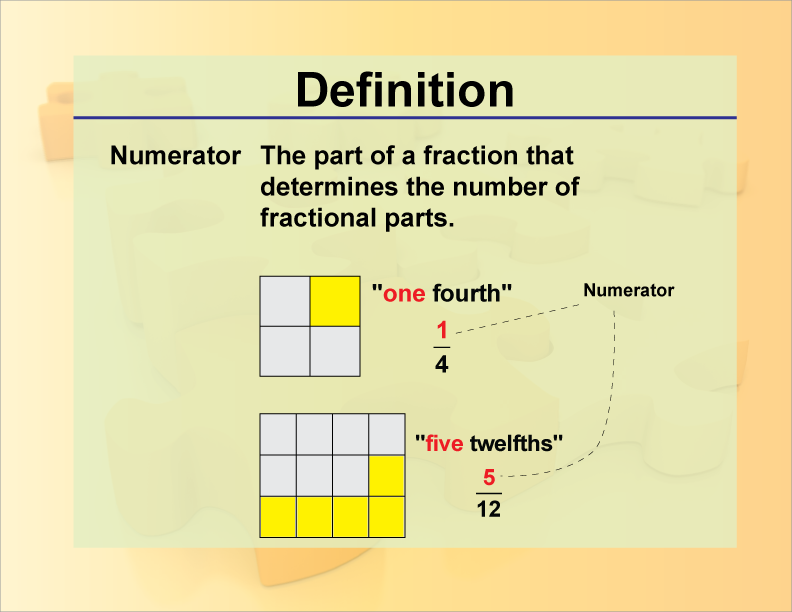

The numerator, positioned above the fraction bar, represents the number of parts we are considering. It answers the question, “How many of these parts do we have?” In essence, it’s the quantity that is being counted or measured. For instance, in the fraction 3/4, the numerator is 3, indicating that we are concerned with three out of the four equal parts that constitute the whole. Its value dictates the magnitude of the portion being represented. A larger numerator, with a constant denominator, signifies a greater portion of the whole. Conversely, a smaller numerator indicates a smaller portion. This inherent characteristic makes the numerator a primary driver in determining the overall value and proportion that a fraction signifies.

The Lower Half: The Denominator’s Role

Beneath the fraction bar lies the denominator. Its fundamental role is to define the total number of equal parts into which the whole has been divided. It answers the question, “How many equal parts make up the whole?” In our 3/4 example, the denominator is 4, signifying that the whole has been divided into four equal segments. The denominator sets the scale or unit of division. It’s crucial to understand that fractions only make sense when the parts are of equal size. A change in the denominator alters the size of each individual part. For example, 1/2 represents a larger portion than 1/4 because the whole is divided into fewer, and therefore larger, pieces. The denominator is thus the bedrock upon which the numerator’s count is based, providing the necessary context for interpretation.
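The two roles described above can be checked directly with Python's standard `fractions` module. This is only a small illustration; the variable name `three_quarters` is chosen here for the example and does not come from any particular system.

```python
from fractions import Fraction

# 3/4: three parts considered, out of four equal parts in the whole.
three_quarters = Fraction(3, 4)
print(three_quarters.numerator)    # 3
print(three_quarters.denominator)  # 4

# Same denominator: the larger numerator is the larger portion.
assert Fraction(3, 4) > Fraction(1, 4)

# Same numerator: the larger denominator means smaller parts.
assert Fraction(1, 2) > Fraction(1, 4)

# Fraction stores values in lowest terms, so 6/8 reports numerator 3.
print(Fraction(6, 8).numerator)  # 3
```

Note that `Fraction` reduces automatically, so the numerator it reports is the numerator of the simplified fraction, not necessarily the one that was passed in.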

Numerators in the Digital Realm: Beyond Mathematical Abstraction

While fractions are a fundamental mathematical concept, their practical application extends far beyond textbooks and chalkboards. In the realm of technology, the principles of numerators and denominators are embedded in numerous systems and processes, often in ways that are not immediately apparent to the end-user. Their ability to represent proportions and ratios makes them ideal for encoding and interpreting digital information.

Digital Signal Processing: Quantifying Waveforms

In digital signal processing (DSP), which is fundamental to everything from audio and video playback to telecommunications and sensor data analysis, fractions are implicitly at play. Analog signals, which are continuous, must be converted into discrete digital values. This process, known as sampling, involves taking measurements of the signal at regular intervals. The quality and fidelity of this conversion are directly related to how precisely these sampled values can represent the original analog waveform.

Consider quantization. Here, the continuous range of possible analog amplitudes is divided into a finite number of discrete levels, and each sample is mapped to the nearest one. The number of bits per sample determines the number of levels: b bits give 2^b levels, so 8 bits give 256 and 16 bits give 65,536. More levels mean finer steps and a more precise digital representation. Fractions are rarely written out in the code, but the reasoning is fractional: a quantized sample is a level index (the numerator) out of the highest available level (the denominator), which expresses it as a fraction of the total amplitude range.
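A minimal sketch of uniform quantization follows, assuming the signal has already been scaled to the range 0.0 to 1.0. The function name `quantize` and its return shape are illustrative choices, not part of any DSP library.

```python
def quantize(amplitude: float, bits: int) -> tuple[int, int]:
    """Map an amplitude in [0.0, 1.0] to the nearest of 2**bits levels.

    Returns (level, max_level); level / max_level is the fraction of
    full scale that the stored sample represents.
    """
    if not 0.0 <= amplitude <= 1.0:
        raise ValueError("amplitude must be within [0.0, 1.0]")
    max_level = 2 ** bits - 1          # highest level index, e.g. 255 for 8 bits
    level = round(amplitude * max_level)
    return level, max_level


# The same amplitude at two bit depths: more bits, finer steps.
for bits in (3, 8):
    level, max_level = quantize(0.6, bits)
    print(f"{bits} bits: {level}/{max_level} = {level / max_level:.4f}")
```

With 3 bits the amplitude 0.6 lands on level 4 of 7 (about 0.571), while 8 bits places it on level 153 of 255 (exactly 0.6), which shows how a larger denominator reduces the rounding error.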

Data Compression: Efficient Encoding of Information

Data compression algorithms, essential for reducing file sizes and optimizing data transmission over networks, heavily rely on the efficient representation of information, often leveraging fractional concepts. Techniques like lossy compression, used in image and audio formats like JPEG and MP3, work by discarding information that is deemed less perceptible to human senses. This discarding is done in a calculated manner, essentially representing the “important” parts of the data as a fraction of the original.

For example, in image compression, certain high-frequency details might be removed. The degree of compression can be expressed as a ratio: the size of the compressed data divided by the size of the original data. The numerator is the compressed size and the denominator is the original size, so any value below 1 means the file got smaller, and a value of 1/4 means it shrank to a quarter of its original size. (Some tools quote the inverse, original size to compressed size, so it is worth checking which convention is in use.) The algorithm decides what to keep by analyzing statistical redundancy and perceptual importance. In this sense the numerator of the ratio measures what is retained for storage and transmission.
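The ratio can be measured directly. The sketch below uses lossless `zlib` compression from the Python standard library as a stand-in, because lossy codecs such as JPEG and MP3 are not in the standard library; the sample data is made up and deliberately repetitive so that it compresses well.

```python
import zlib
from fractions import Fraction

# Illustrative, highly redundant sample data (an assumption for the demo).
original = b"numerator/denominator " * 200
compressed = zlib.compress(original)

# Compressed size over original size: below 1 means the data shrank.
ratio = Fraction(len(compressed), len(original))
print(f"{len(compressed)} / {len(original)} bytes = {float(ratio):.3f}")

assert ratio < 1
# Lossless: decompressing restores every byte of the original.
assert zlib.decompress(compressed) == original
```

A lossy codec would fail the final round-trip check by design, since it discards detail to push the numerator lower.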

Advanced Applications: Where Numerators Drive Innovation

The significance of the numerator and its underlying fractional principles extends to more complex technological domains, impacting the very architecture of our digital infrastructure and the intelligence we derive from data.

Network Protocols: Packet Size and Efficiency

In computer networking, data is transmitted in small units called packets. The design of network protocols, such as TCP/IP, involves intricate management of these packets to ensure reliable and efficient data transfer. The size of these packets is a critical parameter, and it’s often determined by factors that involve proportional considerations.

Packet headers contain no field called a numerator, but the arithmetic is there. The Maximum Transmission Unit (MTU) of a network link defines the largest packet that can be sent without fragmentation. When data larger than the MTU needs to be sent, it is split into smaller fragments. The number of fragments comes from a division: the total data size is the numerator and the data capacity of one fragment (the MTU minus the header size) is the denominator, with the result rounded up to a whole number. A second fraction measures efficiency: the useful payload (numerator) over the total bytes sent, payload plus headers (denominator). Efficient packet sizing pushes that fraction toward 1, which improves network throughput.
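Both divisions can be sketched in a few lines. The defaults below (a 1500-byte MTU and a 20-byte header, typical of Ethernet and a minimal IPv4 header) are assumptions for illustration, and the model is simplified: it ignores transport-layer headers and the IPv4 rule that fragment offsets fall on 8-byte boundaries. The function names are hypothetical.

```python
from fractions import Fraction


def fragment_count(data_bytes: int, mtu: int = 1500, header_bytes: int = 20) -> int:
    """Number of fragments needed when each one carries its own header."""
    per_fragment = mtu - header_bytes      # data capacity of one fragment
    if per_fragment <= 0:
        raise ValueError("MTU must be larger than the header")
    # Ceiling division: a partly filled last fragment still counts as one.
    return -(-data_bytes // per_fragment)


def payload_efficiency(payload_bytes: int, header_bytes: int = 20) -> Fraction:
    """Useful payload as a fraction of all bytes sent in one packet."""
    return Fraction(payload_bytes, payload_bytes + header_bytes)


print(fragment_count(4000))             # 3 fragments: 1480 + 1480 + 1040
print(payload_efficiency(1480))         # 74/75, a full-size packet
print(float(payload_efficiency(100)))   # small packets waste more on headers
```

The comparison between a 1480-byte and a 100-byte payload shows why larger packets are more efficient: the header cost is fixed, so it takes up a smaller share of the total.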

Algorithmic Efficiency: Big O Notation and Resource Allocation

In computer science, Big O notation is a mathematical notation used to describe the limiting behavior of a function when the argument tends towards a particular value or infinity. It’s primarily used in the context of algorithmic complexity to classify algorithms according to how their run time or space requirements (memory usage) grow as the input size grows. While often expressed with variables, the underlying concept is about ratios and proportions of resource usage relative to input.

For example, an algorithm with O(n) complexity has a running time that grows at most linearly with the input size n, and an algorithm with O(n^2) complexity has a running time that grows at most quadratically. One loose way to connect this to fractions is to treat the work done as the numerator and the input size as the denominator. For a linear algorithm that ratio stays roughly constant as n grows; for a quadratic algorithm the ratio itself grows in proportion to n. Big O notation ignores constant factors, so it describes how the numerator grows rather than its exact value at a given input size. An algorithm whose numerator grows more slowly uses fewer resources on large inputs, which affects the performance of software applications, the feasibility of processing large datasets, and the speed of complex computations. The numerator in this context represents the computational effort or memory required.
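The work-per-input ratio can be observed by counting loop iterations instead of measuring time. The two functions below are toy stand-ins written for this illustration, one linear and one quadratic; they do no useful work beyond counting their own steps.

```python
def linear_steps(n: int) -> int:
    """Count the iterations of a single pass over n items."""
    steps = 0
    for _ in range(n):
        steps += 1
    return steps


def quadratic_steps(n: int) -> int:
    """Count the iterations of a nested pass, as in comparing every pair."""
    steps = 0
    for _ in range(n):
        for _ in range(n):
            steps += 1
    return steps


# Work (numerator) divided by input size (denominator).
for n in (10, 100, 1000):
    print(n, linear_steps(n) / n, quadratic_steps(n) / n)
```

For the linear function the ratio stays at 1.0 for every n, while for the quadratic function the ratio equals n itself, so it grows as the input grows.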

Machine Learning and AI: Feature Representation and Model Parameters

In machine learning and artificial intelligence, data is represented as numerical features. These features are often normalized or scaled to fall within a specific range, a process that inherently involves fractions. Min-max normalization, for instance, scales features to the range 0 to 1 by subtracting the minimum value and dividing by the range (maximum minus minimum). In that fraction the numerator is the value's distance above the minimum and the denominator is the full range, so each scaled value states how far along the original range the feature lies.
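A minimal sketch of min-max scaling in plain Python is shown below. The function name `min_max_scale` is chosen for this example, and returning zeros for a constant feature is one possible convention among several, not a standard.

```python
def min_max_scale(values: list[float]) -> list[float]:
    """Scale values to [0, 1] using (value - min) / (max - min)."""
    lo, hi = min(values), max(values)
    span = hi - lo                      # denominator: the full range
    if span == 0:
        # Constant feature: there is no range to divide by.
        return [0.0 for _ in values]
    # Numerator: how far each value sits above the minimum.
    return [(v - lo) / span for v in values]


print(min_max_scale([10, 15, 20, 30]))  # [0.0, 0.25, 0.5, 1.0]
```

The value 15 becomes 0.25 because it sits 5 units above the minimum on a range of 20 units.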

Furthermore, the parameters within machine learning models, such as weights in neural networks, are adjusted repeatedly during training. These adjustments, driven by optimization algorithms, aim to minimize an error or loss function. In basic gradient descent, each update moves a parameter against the gradient of the loss, scaled by the learning rate. The learning rate is usually a small fraction such as 1/100, so each step applies only a fraction of the raw gradient; adaptive optimizers go further and divide the gradient by a running scale estimate, which makes the fraction explicit. Controlling the size of these fractional adjustments is critical for training accurate and robust AI systems.
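One common concrete form of such an update is the plain gradient descent step, sketched here on a toy one-parameter loss, (w - 3)^2, whose minimum is at w = 3. The loss, the starting point, and the learning rate of 1/10 are all assumptions chosen for the example, and exact `Fraction` arithmetic is used only to keep the fractional step sizes visible.

```python
from fractions import Fraction


def gradient_step(w: Fraction, learning_rate: Fraction) -> Fraction:
    """One gradient descent update for the toy loss (w - 3) ** 2."""
    gradient = 2 * (w - 3)              # derivative of the loss at w
    # Apply only a fraction (the learning rate) of the raw gradient.
    return w - learning_rate * gradient


w = Fraction(0)
learning_rate = Fraction(1, 10)
for step in range(1, 4):
    w = gradient_step(w, learning_rate)
    print(step, w, float(w))
```

After three steps w has moved from 0 to 183/125 (1.464), closing part of the gap to 3 each time. A larger learning rate would take bigger steps and could overshoot, while a smaller one would converge more slowly.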

Conclusion: The Ubiquitous Significance of the Numerator

From the fundamental representation of parts of a whole to the intricate workings of digital systems, the numerator, as a core component of fractions, demonstrates a pervasive and profound significance in the technological domain. It’s the quantifiable element that tells us “how much” of something we are concerned with, whether it’s a portion of a digital signal, a compression ratio, a packet payload, or an algorithmic resource requirement. As technology continues to advance and become more deeply integrated into our lives, a solid grasp of these foundational mathematical concepts, particularly the role of the numerator, becomes an indispensable tool for understanding the underlying mechanisms that power our digital world and for innovating within it. Recognizing its ubiquity allows us to appreciate the elegance and power of simple mathematical ideas in shaping complex technological realities.
