For decades, the way we consumed digital audio was largely confined to a two-dimensional plane. We moved from mono to stereo, and eventually to surround sound, which added a sense of breadth and depth. Now we are witnessing what may be one of the most significant shifts in consumer audio in decades: the rise of spatial sound.

Spatial sound, often referred to as 3D audio or spatial audio, is a suite of technologies designed to simulate a three-dimensional acoustic environment. Unlike traditional audio formats that push sound toward you from fixed channels, spatial sound places individual “audio objects” in a virtual sphere around your head. This allows listeners to perceive sound coming from above, below, behind, and at varying distances, mimicking the way we hear sound in the physical world. As we dive deeper into the hardware and software driving this revolution, it becomes clear that spatial sound is not just a gimmick—it is the future of human-computer interaction.

Understanding the Mechanics: How Spatial Sound Works

To understand spatial sound, one must first understand the limitations of its predecessors. Traditional stereo audio uses two channels (left and right). Surround sound expands this to 5.1 or 7.1 configurations, adding speakers behind and to the side of the listener. While immersive, these systems are “channel-based,” meaning the sound is baked into specific speakers. If you don’t have a speaker physically located behind your left shoulder, you won’t hear a distinct sound from that exact coordinate.

The Shift from Channel-Based to Object-Based Audio

Spatial sound breaks free from the “channel” constraint by utilizing object-based audio. In this model, sound engineers don’t assign a sound to a speaker; they assign it to a coordinate in 3D space. Metadata attached to the audio tells the playback device, whether it’s a pair of headphones or a high-end soundbar, exactly where that sound should appear to originate. The device’s processor then “renders” the sound in real time, adapting the output to the specific hardware in use.
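As a rough illustration of the object-based idea (not any vendor's actual format or renderer), an audio object can be modeled as a sound plus position metadata, with the renderer deciding per device how to distribute it. The Python sketch below uses hypothetical names (`AudioObject`, `render_gains`) and a deliberately simple rule: each speaker's gain depends on how closely its direction matches the object's direction, and the gains are normalized for constant power.

```python
import math
from dataclasses import dataclass


@dataclass
class AudioObject:
    """Hypothetical audio object: a label plus a position in listener space."""
    name: str
    x: float  # right (+) / left (-)
    y: float  # front (+) / back (-)
    z: float  # up (+) / down (-)


def _unit(v):
    """Scale a 3D vector to length 1."""
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)


def render_gains(obj, speakers):
    """Return {speaker_name: gain} for one object on a given speaker layout.

    `speakers` maps a name to an (x, y, z) position. Each raw gain is the
    cosine of the angle between object and speaker directions, clamped at 0.
    Gains are then normalized so the squared gains sum to 1.
    """
    direction = _unit((obj.x, obj.y, obj.z))
    raw = {}
    for name, pos in speakers.items():
        cos_angle = sum(a * b for a, b in zip(direction, _unit(pos)))
        raw[name] = max(cos_angle, 0.0)
    norm = math.sqrt(sum(g * g for g in raw.values()))
    if norm == 0.0:
        # No speaker faces the object: fall back to equal gains.
        equal = 1.0 / math.sqrt(len(raw))
        return {name: equal for name in raw}
    return {name: g / norm for name, g in raw.items()}


# The same object rendered on two illustrative layouts.
stereo = {"L": (-1.0, 1.0, 0.0), "R": (1.0, 1.0, 0.0)}
with_height = dict(stereo, TopL=(-1.0, 1.0, 1.0), TopR=(1.0, 1.0, 1.0))
helicopter = AudioObject("helicopter", x=-0.5, y=1.0, z=1.0)
print(render_gains(helicopter, stereo))
print(render_gains(helicopter, with_height))
```

The point of the sketch is that the object's metadata never changes; only the layout passed to the renderer does. Production renderers use far more sophisticated panning laws than this clamped-cosine rule.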

Psychoacoustics and Head-Related Transfer Functions (HRTF)

The magic of spatial sound, especially in headphones, relies on a branch of science called psychoacoustics. Humans determine the location of a sound based on subtle cues: the time difference between the sound hitting the left vs. right ear (Interaural Time Difference), the difference in volume (Interaural Intensity Difference), and how the shape of our outer ear (the pinna) filters frequencies.
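To give a sense of scale for the Interaural Time Difference, the sketch below uses the classic Woodworth spherical-head approximation, ITD = (r / c) · (θ + sin θ). The head radius and speed of sound are assumed round values, and real heads are not spheres, so treat the result as an order-of-magnitude estimate.

```python
import math

HEAD_RADIUS_M = 0.0875    # assumed average head radius
SPEED_OF_SOUND = 343.0    # m/s in air at roughly room temperature


def itd_seconds(azimuth_deg):
    """Woodworth estimate of the interaural time difference.

    azimuth_deg: 0 = straight ahead, 90 = directly to one side.
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("this approximation is defined for 0 to 90 degrees")
    theta = math.radians(azimuth_deg)
    return HEAD_RADIUS_M / SPEED_OF_SOUND * (theta + math.sin(theta))


for angle in (0, 30, 60, 90):
    print(angle, "deg ->", round(itd_seconds(angle) * 1e6), "microseconds")
```

Even for a sound directly to one side, the estimate stays well under a millisecond, which is why the timing of spatial rendering has to be so precise.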

Engineers use Head-Related Transfer Functions (HRTF) to map these interactions mathematically. By applying these digital filters to a standard audio signal, software can trick your brain into thinking a sound is coming from ten feet above your head, even though the source is a tiny driver tucked inside your ear canal.
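In practice, applying an HRTF usually means convolving the signal with a pair of impulse responses (one per ear). The sketch below shows that step in plain Python. The two impulse responses are made-up toy values, chosen only so that the far ear receives the sound later and quieter; real HRTF data comes from measurements and is far longer.

```python
def convolve(signal, impulse_response):
    """Direct-form convolution of two lists of samples."""
    out = [0.0] * (len(signal) + len(impulse_response) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(impulse_response):
            out[i + j] += s * h
    return out


def binauralize(mono, hrir_left, hrir_right):
    """Filter one mono signal into a (left, right) pair of ear signals."""
    return convolve(mono, hrir_left), convolve(mono, hrir_right)


# Toy impulse responses for a source on the listener's left (NOT measured data):
# the left ear hears it immediately, the right ear three samples later and quieter.
TOY_HRIR_LEFT = [1.0, 0.3, 0.1]
TOY_HRIR_RIGHT = [0.0, 0.0, 0.0, 0.5, 0.2]

click = [1.0, 0.0, 0.0, 0.0]
left, right = binauralize(click, TOY_HRIR_LEFT, TOY_HRIR_RIGHT)
print("left ear: ", left)
print("right ear:", right)
```

Real-time renderers typically perform this filtering in the frequency domain for efficiency, but the operation is the same.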

Key Technologies and Standards in the Industry

As spatial sound has moved into the mainstream, several companies have developed their own formats, some proprietary and some built on open standards, to define how this 3D metadata is handled and delivered.

Dolby Atmos: The Pioneer of Object-Based Audio

Originally developed for cinema, Dolby Atmos is now widely treated as the gold standard for spatial sound in the home and on mobile devices. In its cinema form, it supports up to 128 simultaneous audio tracks, combining fixed channel “beds” with freely positioned audio objects. In a home theater setup, this usually involves “height channels” (speakers on the ceiling or upward-firing drivers). On mobile devices, Dolby Atmos uses virtualization to create a similar sense of space through standard stereo headphones.

DTS:X and Sony 360 Reality Audio

While Dolby leads the market, competitors like DTS:X offer similar object-based experiences without requiring specific speaker layouts. Sony has also carved out a niche with “360 Reality Audio,” which uses the MPEG-H 3D Audio standard. Sony’s focus is primarily on the music industry, working with artists to remaster tracks so that instruments feel like they are positioned in a sphere around the listener.

Apple’s Personalized Spatial Audio and Dynamic Head Tracking

Apple revolutionized the consumer perception of this technology by integrating “Spatial Audio” across the iOS and macOS ecosystems. What sets Apple apart is the integration of hardware and software. By using the accelerometers and gyroscopes in AirPods and Beats headphones, Apple implements “Dynamic Head Tracking.” If you are watching a movie on an iPad and turn your head to the right, the audio shifts so that the dialogue still sounds like it is coming from the screen’s location. This anchors the sound to the device, greatly enhancing the sense of realism.
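The core of head tracking can be sketched in a few lines: the renderer subtracts the head's rotation from the source's position, so the source stays fixed in the room rather than on the head. This is a simplified illustration that handles yaw only, not Apple's implementation; the function names and the sign convention (positive angles to the listener's right) are assumptions.

```python
def wrap_degrees(angle):
    """Wrap an angle into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def head_relative_azimuth(source_azimuth_deg, head_yaw_deg):
    """Direction of a room-fixed source as seen from the rotated head.

    Both angles are measured from the original straight-ahead direction,
    positive to the right.
    """
    return wrap_degrees(source_azimuth_deg - head_yaw_deg)


# The screen sits straight ahead (0 degrees). The listener turns 30 degrees right,
# so the dialogue should now be rendered 30 degrees to their left.
print(head_relative_azimuth(0.0, 30.0))
```

A full implementation would also track pitch and roll and would update this angle many times per second from the sensor data.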

The Hardware Ecosystem: Bringing Immersive Sound to Life

The transition to spatial sound requires more than just clever software; it demands a robust hardware ecosystem capable of processing complex algorithms with minimal latency.

Headphones and Earbuds with Built-in Inertial Sensors

Modern high-end earbuds are no longer just speakers; they are miniature computers. To support effective spatial audio with head tracking, devices must contain Inertial Measurement Units (IMUs). These sensors track the wearer’s movements at a high frequency (often 100 times per second or more) to ensure the virtual soundstage remains stable. The processing power required to calculate HRTF filters in real-time while maintaining Bluetooth connectivity is a significant feat of semiconductor engineering.

The Role of Mobile Devices and Dedicated Sound Processors

The heavy lifting of spatial rendering often happens on the host device (the smartphone or PC), though some processing also takes place in the headphones themselves. Modern audio chips and platforms, such as Apple’s H2 chip (found in recent AirPods) or Qualcomm’s Snapdragon Sound platforms, are built around dedicated digital signal processing. This hardware is optimized for the mathematical calculations required for binaural rendering: the process of converting 3D object data into a two-channel signal that our ears perceive as 3D.

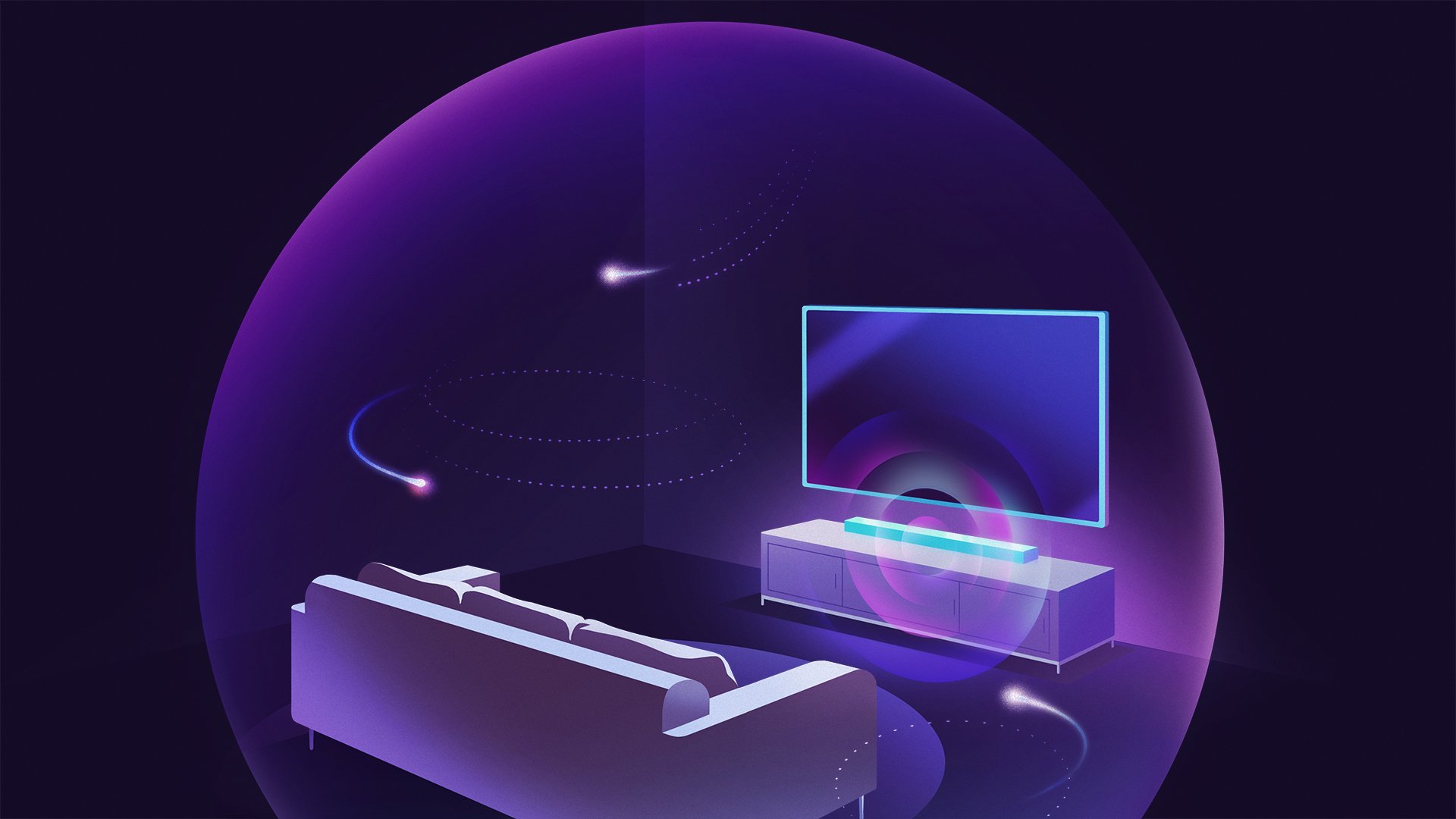

Soundbars and “Phased Array” Technology

In the living room, spatial sound is achieved through beamforming and phased arrays. High-end soundbars use multiple drivers angled in specific directions. By precisely controlling the timing of the sound waves (constructive and destructive interference), the soundbar can “bounce” audio off your walls and ceiling. This creates “phantom speakers” in locations where no physical hardware exists, effectively enveloping the room in sound.
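The timing control described above can be illustrated with textbook delay-and-sum steering for a row of evenly spaced drivers. The sketch computes how long each driver should wait so that the combined wavefront leaves the bar at a chosen angle. Driver count, spacing, and the function name are illustrative assumptions; commercial soundbars use proprietary and more elaborate processing.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed


def steering_delays(num_drivers, spacing_m, steer_deg):
    """Per-driver delays (seconds) that steer a linear array.

    steer_deg is measured from straight ahead; positive angles point
    toward the higher-numbered drivers. Delays are shifted so the
    earliest driver fires at time 0.
    """
    theta = math.radians(steer_deg)
    raw = [n * spacing_m * math.sin(theta) / SPEED_OF_SOUND
           for n in range(num_drivers)]
    earliest = min(raw)
    return [d - earliest for d in raw]


for angle in (0, 30, -30):
    delays_us = [round(d * 1e6, 1) for d in steering_delays(4, 0.05, angle)]
    print(angle, "deg ->", delays_us, "microseconds")
```

Drivers on the side the beam points toward fire last, so their wavefronts line up with those from the farther drivers along the steered direction.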

Applications Beyond Entertainment: The Future of Spatial Sound

While movies and music are the most obvious beneficiaries, spatial sound technology is expanding into fields that will redefine our digital lives.

Virtual Reality (VR), Augmented Reality (AR), and the Metaverse

In VR and AR, spatial sound is not optional; it is foundational. For a virtual environment to feel “real,” the audio must match the visual perspective. If a virtual character speaks to you from behind, the sound must originate from behind. Without spatial audio, the “immersion” of the Metaverse would break immediately. Advanced spatial engines now account for “room acoustics,” meaning the software calculates how sound would bounce off virtual marble walls versus virtual carpeted floors.
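One classical way to see why marble and carpet sound different is Sabine's reverberation formula, RT60 ≈ 0.161 · V / A, where V is the room volume in cubic meters and A is the total absorption (each surface area times its absorption coefficient). The sketch below is only that textbook estimate, not how any particular spatial engine works, and the absorption coefficients are illustrative ballpark values rather than measured data.

```python
def sabine_rt60(volume_m3, surfaces):
    """Estimate reverberation time (seconds) with Sabine's formula.

    surfaces: list of (area_m2, absorption_coefficient) pairs.
    """
    total_absorption = sum(area * alpha for area, alpha in surfaces)
    if total_absorption <= 0:
        raise ValueError("total absorption must be positive")
    return 0.161 * volume_m3 / total_absorption


# A hypothetical 5 m x 4 m x 3 m virtual room.
VOLUME = 5.0 * 4.0 * 3.0
FLOOR_AREA = 5.0 * 4.0
WALLS_AND_CEILING = (2 * 5.0 * 3.0 + 2 * 4.0 * 3.0 + 5.0 * 4.0, 0.05)  # assumed coefficient

MARBLE = 0.01  # illustrative: hard, reflective surface
CARPET = 0.30  # illustrative: soft, absorbent surface

rt_marble = sabine_rt60(VOLUME, [(FLOOR_AREA, MARBLE), WALLS_AND_CEILING])
rt_carpet = sabine_rt60(VOLUME, [(FLOOR_AREA, CARPET), WALLS_AND_CEILING])
print("marble floor:", round(rt_marble, 2), "s")
print("carpet floor:", round(rt_carpet, 2), "s")
```

Under these assumptions, swapping the floor material alone changes the reverberation tail substantially, which is the kind of cue a virtual environment needs to reproduce.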

Gaming: The Competitive Advantage

The gaming industry was an early adopter of spatial audio. In competitive first-person shooters, the ability to hear the exact floorboard a rival steps on provides a tangible advantage. Technologies like Windows Sonic and PlayStation 5’s Tempest 3D AudioTech provide gamers with 360-degree situational awareness, allowing them to react to threats before they even appear on the screen.

Enhancing Remote Collaboration and Video Conferencing

One of the most exhausting aspects of video conferencing is “Zoom fatigue,” caused in part by multiple voices coming from a single monaural source. Spatial sound can ease this by “spacing out” participants in a virtual room. If the person on the left side of your screen speaks, their voice comes from the left. This mimics natural social settings, making it easier for the brain to distinguish between speakers and decreasing cognitive load during long digital meetings.
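As a minimal sketch of this “spacing out” idea, the snippet below maps each participant's horizontal position on screen to left and right gains using constant-power panning. This is a much simpler stand-in for true binaural rendering, and the function name and the 0-to-1 screen coordinate are assumptions made for illustration.

```python
import math


def pan_gains(screen_x):
    """Constant-power stereo gains for a voice at a horizontal screen position.

    screen_x: 0.0 = left edge of the screen, 1.0 = right edge.
    Returns (left_gain, right_gain); the squared gains always sum to 1.
    """
    x = min(max(screen_x, 0.0), 1.0)
    angle = x * math.pi / 2
    return math.cos(angle), math.sin(angle)


# Hypothetical participants and where their video tiles sit on screen.
participants = {"Ana": 0.1, "Ben": 0.5, "Chloe": 0.9}
for name, x in participants.items():
    left, right = pan_gains(x)
    print(name, "left:", round(left, 2), "right:", round(right, 2))
```

Each voice keeps the same overall loudness while landing at a different point between the ears, which is the separation cue the paragraph above describes.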

How to Experience Spatial Sound Today: A Setup Guide

If you are looking to dive into the world of spatial audio, the barrier to entry has never been lower. Most modern tech stacks already have the necessary components; they simply need to be activated.

Content Platforms and Streaming Services

To hear spatial sound, you need content encoded with the right metadata.

- Video: Netflix, Disney+, and Apple TV+ offer extensive libraries in Dolby Atmos.

- Music: Apple Music, Tidal, and Amazon Music HD feature “Spatial Audio” or “360 Reality Audio” sections where tracks are specifically mixed for 3D environments.

Optimizing Your Software Settings

For PC users, Windows 10 and 11 offer “Windows Sonic for Headphones” as a free built-in spatial sound solution. For a more premium experience, users can download the Dolby Access or DTS Sound Unbound apps from the Microsoft Store. These apps allow for deeper customization and calibration based on your specific headphone model. On mobile, ensure that your OS is updated, as both Android and iOS include spatial audio toggles, typically found in the sound settings or in the settings for the connected headphones, depending on the OS version and device.

As we look toward the future, the spatial sound revolution is set to become even more personalized. Some platforms already let us scan or photograph our ears to create a custom HRTF profile, so that the 3D audio we hear is tuned to our unique anatomy. Whether through a pair of earbuds or a sophisticated home theater, spatial sound is steadily narrowing the gap between digital media and reality.
