In technology circles, the term “jihadist” now carries a meaning beyond its socio-political origins. For digital security experts, software developers, and artificial intelligence researchers, it denotes a category of cyber-actor and a body of digital content marked by extremist ideology, radicalization efforts, and coordinated cyber-attacks. Understanding what counts as “jihadist” activity online is no longer the sole purview of intelligence agencies; it is a core part of digital security, platform moderation, and the development of ethical AI.

The intersection of extremist ideology and advanced technology has created a complex battlefield. From end-to-end encrypted messaging to automated propaganda distribution, the digital footprint of these groups poses a distinct challenge to the tech industry. This article explores the technical dimensions of the threat, the AI tools used to combat it, and the infrastructure required to secure the global digital ecosystem.

Defining the Digital Footprint: What is Jihadist Cyber Activity?

In the tech industry, identifying “jihadist” activity involves more than just monitoring keywords. It requires a sophisticated understanding of digital behavior, metadata analysis, and the evolution of online communication. This category of activity is characterized by highly decentralized networks that leverage the same tools used by legitimate businesses to spread influence and execute technical disruptions.

The Shift from Physical to Virtual Recruitment

Historically, extremist recruitment was a localized, in-person process. Widespread social media has moved much of it online. Modern groups borrow techniques from “Social Media Intelligence” (SOCMINT), the practice of gathering intelligence from public social platforms, to identify vulnerable targets. Technically, this involves automated bots and “sock puppet” accounts built to evade platform filters. These accounts may apply sentiment analysis, sometimes with rudimentary AI of their own, to find individuals expressing specific grievances, thereby automating the top of the “radicalization funnel.”

Identifying Malicious Code and Propaganda Streams

Jihadist cyber activity is not limited to recruitment; it also involves the distribution of what is sometimes called “terror-ware.” This includes instructional software, encrypted communication apps modified for anonymity, and propaganda hosted on the InterPlanetary File System (IPFS), a peer-to-peer network whose content-addressed files are difficult to take down once other nodes hold copies. For digital security professionals, the challenge lies in distinguishing legitimate encrypted traffic from traffic used to coordinate extremist activity. The threat, in short, is a blend of media manipulation and decentralized web hosting.

The Role of Artificial Intelligence in Threat Detection

To cope with the volume of content extremist groups generate, the tech industry has turned to Artificial Intelligence. AI tools now serve as a first line of defense in identifying, flagging, and removing jihadist content before it reaches a mass audience. This work draws on Machine Learning (ML) techniques from both natural language processing and computer vision.

Natural Language Processing (NLP) and Sentiment Analysis

Standard keyword filters are easily bypassed with coded language or “leetspeak,” in which letters are swapped for look-alike digits and symbols. Advanced NLP models, such as BERT or GPT-based classifiers, are trained to take context, nuance, and cultural subtext into account. Deployed at scale, these tools estimate the likely intent of a message rather than just matching its vocabulary. By recognizing patterns in linguistic structure, they can flag content that promotes violence or radicalization even when the specific “prohibited” words are missing. This layer is essential for keeping large social platforms safe.
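
To make the keyword-bypass problem concrete, here is a minimal Python sketch of why a plain substring filter misses obfuscated text, and how a normalization pass recovers some of it. The substitution table, the placeholder term "forbidden", and the function names are all assumptions made for illustration; this is not how any particular platform works, and it is far simpler than the contextual models described above.

```python
import re
import unicodedata

# Hypothetical substitution table; real-world obfuscation is far more varied.
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})

def normalize(text: str) -> str:
    """Lowercase, strip accents, undo common character swaps, drop separators."""
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = text.lower().translate(LEET_MAP)
    # Remove punctuation wedged between word characters, e.g. "f.o.r.b.i.d.d.e.n".
    return re.sub(r"(?<=\w)[.\-_*](?=\w)", "", text)

def naive_match(text: str, blocklist: set[str]) -> bool:
    """Plain substring check, as a basic keyword filter would do."""
    return any(term in text.lower() for term in blocklist)

def normalized_match(text: str, blocklist: set[str]) -> bool:
    """Same check, but against the normalized text."""
    return any(term in normalize(text) for term in blocklist)

BLOCKLIST = {"forbidden"}  # neutral placeholder term
sample = "this is f0rb1dd3n content"
print(naive_match(sample, BLOCKLIST), normalized_match(sample, BLOCKLIST))
```

Normalization of this kind is brittle: it rewrites legitimate digits as letters and can therefore produce false positives, and it does nothing against coded vocabulary. That limitation is part of why context-aware classifiers are used instead of longer blocklists.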

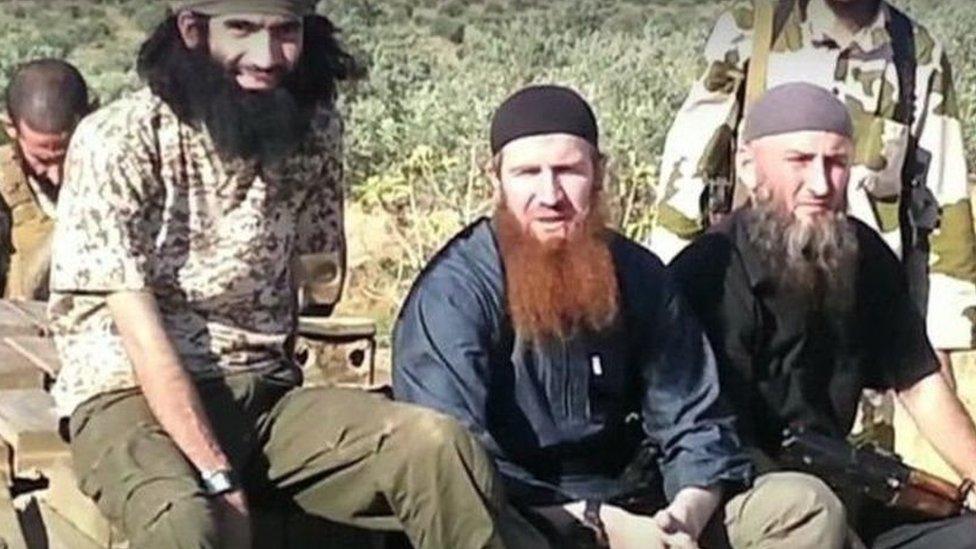

Image Recognition and Flagging Extremist Symbols

A significant portion of extremist content is visual. AI-driven computer vision tools are trained to recognize specific flags, insignias, and even the distinctive visual style of propaganda videos. Using perceptual hashing, tech companies can create a digital fingerprint of a known extremist image that still matches after minor edits such as resizing or re-compression. Once an image is hashed, the fingerprint can be shared so that re-uploads are blocked across multiple platforms. This collaborative effort, often coordinated through shared industry hash databases, means that once a piece of “jihadist” media is identified, its ability to go viral is sharply reduced, though heavily altered copies can still slip through.
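
The fingerprinting idea can be sketched with an "average hash," one of the simplest perceptual hashing schemes. This is an illustrative stand-in, not necessarily the algorithm any platform uses; production systems rely on more robust variants. The sketch assumes images arrive as 2D lists of grayscale values whose dimensions are divisible by the hash size, and the `max_distance` threshold is an arbitrary assumption.

```python
def average_hash(pixels, hash_size=8):
    """Return a hash_size*hash_size-bit integer fingerprint of a grayscale image.

    pixels: 2D list of ints (0-255); height and width must be divisible by hash_size.
    """
    height, width = len(pixels), len(pixels[0])
    block_h, block_w = height // hash_size, width // hash_size
    cells = []
    for by in range(hash_size):
        for bx in range(hash_size):
            block = [pixels[y][x]
                     for y in range(by * block_h, (by + 1) * block_h)
                     for x in range(bx * block_w, (bx + 1) * block_w)]
            cells.append(sum(block) / len(block))  # downsample by block averaging
    mean = sum(cells) / len(cells)
    bits = 0
    for cell in cells:
        bits = (bits << 1) | (1 if cell > mean else 0)  # 1 = brighter than average
    return bits

def hamming(a, b):
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")

def is_known(candidate_hash, known_hashes, max_distance=5):
    """True if the candidate is within max_distance bits of any known fingerprint."""
    return any(hamming(candidate_hash, k) <= max_distance for k in known_hashes)

# Synthetic 16x16 test images: left-dark/right-bright, a brightened copy,
# and an unrelated top-dark/bottom-bright image.
original = [[20 if x < 8 else 220 for x in range(16)] for y in range(16)]
brighter = [[value + 10 for value in row] for row in original]
different = [[20 if y < 8 else 220 for x in range(16)] for y in range(16)]

known = {average_hash(original)}
print(is_known(average_hash(brighter), known))   # near-duplicate of a known image
print(is_known(average_hash(different), known))  # unrelated image
```

Because the hash encodes only which regions are brighter than the image average, a uniform brightness change leaves it untouched, while a structurally different image lands many bits away. A cryptographic hash such as SHA-256 would change completely after the same brightness edit, which is why it is unsuitable for this purpose.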

Digital Security Measures for Infrastructure Protection

Beyond content moderation, the “jihadist” threat extends to the security of physical and digital infrastructure. Ideologically motivated hackers, often referred to as “hacktivists” or cyber-terrorists, target government databases, power grids, and corporate networks.

Defending Against Ideologically Motivated Ransomware

While many ransomware attacks are financially motivated, a subset of attacks is designed purely for disruption and psychological impact. These often use “wiper” malware, which may pose as ransomware but destroys data outright rather than encrypting it for ransom, causing maximum operational damage. Digital security teams must maintain tested, offline backups and “air-gapped” systems to protect critical infrastructure from these politically or ideologically motivated breaches.

The Importance of Zero Trust Architecture

To mitigate insider threats and the sophisticated phishing attempts used by extremist groups, many tech firms are adopting a “Zero Trust” architecture. This security model operates on the principle of “never trust, always verify.” By requiring strict identity verification for every person and device requesting access to a resource, regardless of where on the network the request originates, organizations can limit the lateral movement of an attacker who has gained initial access through a radicalized insider or a compromised credential.
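
The “never trust, always verify” principle can be illustrated with a small Python sketch in which every request is evaluated on its own merits. The `AccessRequest` fields, the `ALLOWED` policy table, and the user and resource names are hypothetical; a real deployment would delegate these checks to an identity provider and a device-management service.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AccessRequest:
    user_id: str
    mfa_verified: bool       # did the user pass multi-factor authentication?
    device_compliant: bool   # does the device meet the posture policy?
    resource: str
    source_network: str      # recorded, but deliberately ignored by the policy

# Hypothetical least-privilege table: which users may reach which resources.
ALLOWED = {
    "alice": {"payroll-db", "wiki"},
    "bob": {"wiki"},
}

def authorize(req: AccessRequest) -> bool:
    """Evaluate each request independently; network location grants nothing."""
    if not req.mfa_verified:
        return False
    if not req.device_compliant:
        return False
    return req.resource in ALLOWED.get(req.user_id, set())

# A stolen "bob" credential used from inside the network still cannot reach payroll.
lateral = AccessRequest("bob", True, True, "payroll-db", "internal")
normal = AccessRequest("alice", True, True, "payroll-db", "external")
no_mfa = AccessRequest("alice", False, True, "payroll-db", "internal")
print(authorize(lateral), authorize(normal), authorize(no_mfa))
```

The design choice worth noting is that `source_network` never appears in `authorize`: being "inside" the perimeter confers no access, which is what restricts lateral movement after an initial compromise.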

Ethics and Privacy in Digital Surveillance

As we develop more powerful tech tools to identify “jihadist” activity, a significant debate arises regarding the balance between security and privacy. The technology used to track extremists is, fundamentally, the same technology that could be used for mass surveillance of civilian populations.

Balancing Public Safety with Data Privacy

The tech industry faces a constant dilemma: how much access should intelligence agencies have to encrypted data? While “backdoors” in encryption could help identify extremist coordination, they also weaken the overall security of the internet, leaving all users more exposed to hackers and authoritarian regimes. A widely held view in the security community is that end-to-end encryption should be preserved, and that threat detection should happen at the “edges” of the network, through metadata and user reporting, rather than by compromising the encryption itself.

The Risk of Algorithmic Bias in Flagging Content

One of the most significant technical challenges in identifying “jihadist” content is the risk of algorithmic bias. A model trained on poor or unrepresentative data may flag legitimate religious or political discourse as “extremist.” This leads to unfair censorship and also creates a false sense of security, because actual threats are missed while the model attends to the wrong signals. Developers are therefore turning to “Explainable AI” (XAI), so that when a piece of content is flagged, the system can give a clear reason for the decision and a human reviewer can verify it.
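
One simple way to picture an explainable flag is a linear scoring model whose per-feature contributions double as the explanation. The sketch below is a toy under stated assumptions: the feature names, weights, bias, and review threshold are invented for illustration and do not come from any trained model or real moderation system.

```python
import math

# Hypothetical weights for illustration only; not a trained model.
WEIGHTS = {
    "explicit_threat_phrase": 2.5,
    "matches_known_media_hash": 3.0,
    "religious_vocabulary": 0.0,      # zero on purpose: faith terms alone carry no signal
    "account_age_under_7_days": 0.8,
}
BIAS = -3.0

def score_with_explanation(features):
    """Return (probability, reasons), where reasons lists non-zero contributions."""
    contributions = {
        name: WEIGHTS.get(name, 0.0) * value for name, value in features.items()
    }
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    reasons = sorted(
        ((name, c) for name, c in contributions.items() if c != 0),
        key=lambda item: -abs(item[1]),  # largest contribution first
    )
    return probability, reasons

def route(probability, review_threshold=0.5):
    """The model never removes content itself; it only queues items for a person."""
    return "human_review" if probability >= review_threshold else "no_action"

sermon = {"religious_vocabulary": 1.0}
threat = {"explicit_threat_phrase": 1.0, "matches_known_media_hash": 1.0}

for label, features in (("sermon", sermon), ("threat", threat)):
    probability, reasons = score_with_explanation(features)
    print(label, route(probability), reasons)
```

A reviewer who sees the `reasons` list can check whether the flag rests on a defensible signal or on a proxy for religion or politics. Real classifiers are rarely this transparent, so practical XAI work usually approximates such contributions after the fact.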

The Future of the Tech-Extremism Conflict

The definition of “jihadist” in a tech context will continue to shift as new technologies emerge. We are already seeing the early stages of “Deepfake” propaganda, where AI is used to create realistic videos of leaders or events to incite violence. Furthermore, the rise of decentralized finance (DeFi) and privacy coins presents new challenges for tracking the funding of these digital operations.

The tech industry’s response must be one of constant innovation. This involves not only better code and faster processors but also a more profound commitment to ethical software development. By building resilient systems, fostering international cooperation between tech giants, and refining AI to be both precise and fair, the digital world can defend itself against the evolving threat of extremism while preserving the open, secure nature of the internet.

In conclusion, “jihadist” activity represents a high-stakes challenge for the technology sector. It is a catalyst for the development of more sophisticated AI, more robust security protocols, and a deeper conversation about the ethics of the digital age. As developers and security experts, the goal is to create a digital environment where technology serves as a bridge for connection, rather than a tool for radicalization and destruction.
