Latent variables are a fundamental concept in many areas of science and statistics, but their true power and applicability are perhaps most evident within the realm of technology and data science. They are the unseen forces, the underlying constructs that we infer from observable data, allowing us to build more sophisticated models, understand complex phenomena, and develop more intelligent systems. In the context of technology, latent variables are crucial for everything from understanding user behavior to improving the accuracy of machine learning algorithms and designing more intuitive digital experiences.

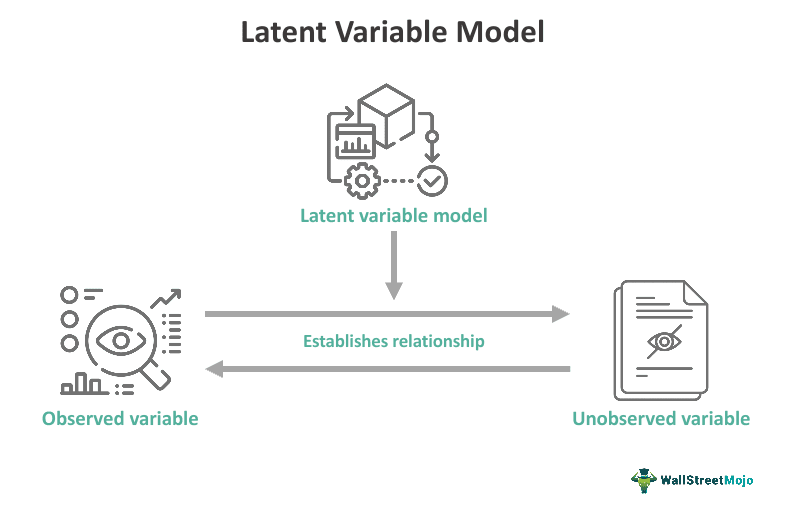

The term “latent” itself suggests something hidden or concealed. In statistical modeling and data analysis, a latent variable is a variable that cannot be directly measured or observed. Instead, its presence and influence are inferred from other variables that can be observed, often referred to as manifest variables or indicators. Think of it like a doctor diagnosing an illness. The illness itself is the latent variable – it’s not directly seen. However, the doctor observes symptoms like fever, cough, and fatigue (the manifest variables) to infer the underlying condition. Similarly, in technology, we might infer a user’s “interest in a particular product category” (latent variable) from their browsing history, search queries, and past purchases (manifest variables).

The power of latent variables lies in their ability to simplify complex datasets and uncover deeper patterns. By representing multiple observable indicators with a single, underlying construct, we can reduce dimensionality, make models more parsimonious, and gain a more generalized understanding of the phenomena we are studying. This is particularly valuable in the age of big data, where the sheer volume and complexity of information can be overwhelming.

The Ubiquity of Latent Variables in Tech

From understanding the nuances of human interaction with digital interfaces to powering the sophisticated predictions of artificial intelligence, latent variables are woven into the fabric of modern technology. They provide a bridge between the raw, observable data we collect and the abstract concepts that drive intelligent systems.

Understanding User Behavior and Preferences

One of the most significant applications of latent variables in technology is in understanding user behavior and preferences. Companies collect vast amounts of data on how users interact with their products and services – website clicks, app usage patterns, social media engagement, and purchase histories. These observable actions are often the indicators of underlying, unobservable user characteristics.

Inferring User Intent and Engagement

Consider an e-commerce platform. A user’s decision to spend a long time browsing a specific category, adding items to their wishlist, and reading product reviews can be indicators of a latent variable like “purchase intent” or “interest level.” By modeling these relationships, the platform can infer which users are most likely to buy, allowing for targeted marketing campaigns, personalized recommendations, and optimized website layouts. Similarly, a user’s consistent engagement with certain types of content on a social media platform might indicate a latent interest in a particular topic, enabling the platform to surface more relevant posts and advertisements.
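The inference step described above can be sketched with Bayes' rule. This is a minimal illustration, not how any particular platform does it: the signal names, the prior, and every likelihood below are hypothetical numbers chosen for the example, and the signals are assumed to be conditionally independent given the latent intent (the naive Bayes assumption).

```python
# Hypothetical numbers throughout: the prior and likelihoods are illustrative
# assumptions, not estimates from any real platform.
PRIOR_HIGH_INTENT = 0.10  # assumed P(intent = high) before seeing any behavior

# Assumed P(signal is observed | latent intent) for three manifest variables.
LIKELIHOODS = {
    "long_category_browse": {"high": 0.70, "low": 0.20},
    "added_to_wishlist": {"high": 0.50, "low": 0.05},
    "read_reviews": {"high": 0.60, "low": 0.15},
}


def posterior_high_intent(observed: dict[str, bool]) -> float:
    """Return P(intent = high | observed signals) using Bayes' rule."""
    weight_high = PRIOR_HIGH_INTENT
    weight_low = 1.0 - PRIOR_HIGH_INTENT
    for signal, present in observed.items():
        p_high = LIKELIHOODS[signal]["high"]
        p_low = LIKELIHOODS[signal]["low"]
        # Multiply in the probability of what was actually seen (or not seen).
        weight_high *= p_high if present else 1.0 - p_high
        weight_low *= p_low if present else 1.0 - p_low
    return weight_high / (weight_high + weight_low)


engaged = posterior_high_intent(
    {"long_category_browse": True, "added_to_wishlist": True, "read_reviews": True}
)
passive = posterior_high_intent(
    {"long_category_browse": False, "added_to_wishlist": False, "read_reviews": False}
)
print(f"engaged user: {engaged:.3f}, passive user: {passive:.3f}")
```

The latent variable never appears in the data; only its posterior probability is computed, and that probability is what a platform would act on.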

Psychometric Modeling for User Profiling

Beyond immediate purchase intent, latent variables are used in more sophisticated user profiling. For instance, psychometric models can infer latent traits like “innovativeness,” “risk aversion,” or “digital literacy” from a series of survey responses or observed behaviors. These latent traits can then inform the design of adaptive user interfaces, the development of personalized learning experiences, or the tailoring of educational content to different learning styles. Understanding these underlying user characteristics allows technology developers to create more engaging, effective, and user-centric digital products.

Enhancing Machine Learning and AI Models

Latent variables play a pivotal role in advancing the capabilities of machine learning and artificial intelligence, enabling models to learn more effectively from data and make more accurate predictions.

Feature Engineering and Dimensionality Reduction

In many machine learning tasks, the raw observable features can be numerous and highly correlated, leading to computational inefficiencies and the “curse of dimensionality.” Latent variables offer a powerful solution through dimensionality reduction. Factor Analysis explicitly posits a small number of latent factors that generate the correlations among the observed variables, while Principal Component Analysis (PCA) finds orthogonal directions of maximal variance, which are often used in the same way as latent factors. These low-dimensional representations can then be used as new, more compact features for subsequent machine learning models, often leading to faster training and sometimes to improved performance. For example, instead of feeding a model hundreds of pixel values from an image, a latent variable model might extract a few key latent features representing shapes, textures, or object components.
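As a rough illustration of dimensionality reduction, the sketch below recovers the top principal component of a small synthetic dataset using power iteration on the sample covariance matrix. The data, the `LOADINGS` values, and the function name are assumptions made up for this example: three observed features are generated from one hidden factor plus noise, so a single component should explain most of the variance. In practice a library routine would normally be used instead of hand-written power iteration.

```python
import math
import random


def top_principal_component(rows, iterations=200):
    """Return (direction, scores, explained_ratio) for the first principal component."""
    n, d = len(rows), len(rows[0])
    means = [sum(row[j] for row in rows) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in rows]
    # Sample covariance matrix of the centered data.
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    # Power iteration: repeatedly apply the covariance matrix and renormalize.
    direction = [1.0 / math.sqrt(d)] * d
    for _ in range(iterations):
        product = [sum(cov[i][j] * direction[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in product))
        direction = [x / norm for x in product]
    eigenvalue = sum(direction[i] * cov[i][j] * direction[j]
                     for i in range(d) for j in range(d))
    explained_ratio = eigenvalue / sum(cov[i][i] for i in range(d))
    # One number per row: the projection onto the component.
    scores = [sum(c[j] * direction[j] for j in range(d)) for c in centered]
    return direction, scores, explained_ratio


# Synthetic data (hypothetical): one hidden factor drives three observed features.
rng = random.Random(0)
LOADINGS = [1.0, 0.8, 0.6]
hidden = [rng.gauss(0.0, 1.0) for _ in range(500)]
observed = [[w * z + rng.gauss(0.0, 0.1) for w in LOADINGS] for z in hidden]

direction, scores, explained_ratio = top_principal_component(observed)
print("direction:", [round(x, 3) for x in direction])
print("share of variance explained:", round(explained_ratio, 3))
```

Each row of three features is replaced by a single score, which is the compact feature a downstream model would consume.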

Latent Dirichlet Allocation (LDA) for Text Analysis

A prime example of latent variable modeling in AI is Latent Dirichlet Allocation (LDA). LDA is a generative probabilistic model that uncovers the underlying thematic structure within a collection of documents. It assumes that each document is a mixture of topics, and each topic is a probability distribution over words. The “topics” are the latent variables, which are not explicitly defined but are inferred from the co-occurrence patterns of words across the corpus. For instance, in a collection of news articles, LDA might identify latent topics that a reader would label “politics,” “sports,” or “technology” after inspecting their most probable words, even though no such labels are ever provided to the model. This is valuable for tasks like document summarization, information retrieval, and content recommendation.
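The generative story that LDA assumes can be sketched in a few lines. This shows only how documents are assumed to arise from latent topics, not the inference procedure that recovers topics from real text. The vocabulary, the number of topics, and the concentration values `ALPHA` and `BETA` are hypothetical choices for illustration.

```python
import random

rng = random.Random(0)

VOCAB = ["election", "senate", "goal", "league", "chip", "software"]
NUM_TOPICS = 3
ALPHA = 0.5  # assumed Dirichlet concentration for per-document topic mixtures
BETA = 0.5   # assumed Dirichlet concentration for per-topic word distributions


def sample_dirichlet(concentration, size):
    """Draw from a symmetric Dirichlet by normalizing independent gamma draws."""
    draws = [rng.gammavariate(concentration, 1.0) for _ in range(size)]
    total = sum(draws)
    return [x / total for x in draws]


# Each topic is a probability distribution over the vocabulary.
topics = [sample_dirichlet(BETA, len(VOCAB)) for _ in range(NUM_TOPICS)]


def generate_document(length):
    """Generate one document: pick a topic mixture, then a topic and word per position."""
    theta = sample_dirichlet(ALPHA, NUM_TOPICS)  # latent topic mixture of the document
    assignments, words = [], []
    for _ in range(length):
        z = rng.choices(range(NUM_TOPICS), weights=theta)[0]  # latent topic of this word
        w = rng.choices(VOCAB, weights=topics[z])[0]          # observed word
        assignments.append(z)
        words.append(w)
    return theta, assignments, words


theta, assignments, words = generate_document(20)
print("topic mixture:", [round(p, 2) for p in theta])
print("words:", " ".join(words))
```

Only `words` would be visible in a real corpus; `theta`, `assignments`, and `topics` are the latent quantities that LDA inference tries to recover.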

Recommender Systems and Collaborative Filtering

Recommender systems, a cornerstone of modern online platforms, rely heavily on latent variables to predict user preferences. Collaborative filtering techniques, for example, often assume that users and items can be represented as vectors in a shared latent space. In this view, a user’s rating for an item is explained by how well the user’s latent vector aligns with the item’s latent vector, typically measured by their inner product, so users with similar tastes and items with similar characteristics end up close together. Matrix factorization techniques (for example, approaches based on Singular Value Decomposition or Non-negative Matrix Factorization) decompose the user-item interaction matrix into lower-dimensional latent factor matrices, capturing underlying user tastes and item attributes. This allows for personalized recommendations of movies, products, music, and more.
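A minimal sketch of matrix factorization for collaborative filtering follows, trained with stochastic gradient descent on the observed ratings only. The ratings, the rank, and the hyperparameters are hypothetical values chosen for the example. Plain SGD with L2 regularization is one common way to fit such a model, used here for brevity rather than as the method any specific system uses.

```python
import math
import random

# Hypothetical (user, item, rating) triples. Users 0-1 like items 0-1,
# users 2-3 like items 2-3; four user-item pairs are left unobserved.
RATINGS = [
    (0, 0, 5), (0, 1, 4), (0, 2, 1),
    (1, 0, 4), (1, 1, 5), (1, 3, 1),
    (2, 0, 1), (2, 2, 5), (2, 3, 4),
    (3, 1, 1), (3, 2, 4), (3, 3, 5),
]


def factorize(ratings, num_users, num_items, rank=2, epochs=500,
              learning_rate=0.02, reg=0.02, seed=0):
    """Learn latent user and item vectors whose inner products approximate the ratings."""
    rng = random.Random(seed)
    users = [[rng.gauss(0.0, 0.1) for _ in range(rank)] for _ in range(num_users)]
    items = [[rng.gauss(0.0, 0.1) for _ in range(rank)] for _ in range(num_items)]
    for _ in range(epochs):
        for u, i, r in ratings:
            error = r - sum(users[u][k] * items[i][k] for k in range(rank))
            for k in range(rank):
                pu, qi = users[u][k], items[i][k]
                # Gradient step on squared error with L2 regularization.
                users[u][k] += learning_rate * (error * qi - reg * pu)
                items[i][k] += learning_rate * (error * pu - reg * qi)
    return users, items


def predict(users, items, u, i):
    """Predicted rating: inner product of the user and item latent vectors."""
    return sum(a * b for a, b in zip(users[u], items[i]))


users, items = factorize(RATINGS, num_users=4, num_items=4)
train_rmse = math.sqrt(
    sum((r - predict(users, items, u, i)) ** 2 for u, i, r in RATINGS) / len(RATINGS)
)
print("training RMSE:", round(train_rmse, 3))
print("user 0, unseen item 3:", round(predict(users, items, 0, 3), 2))
```

The prediction for an unobserved pair such as user 0 and item 3 comes entirely from the learned latent vectors, which is what makes recommendations for unseen items possible.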

Exploring Advanced Applications in AI and Data Science

The conceptual elegance of latent variables extends to highly sophisticated applications that push the boundaries of what’s possible in AI and data science, enabling more nuanced understanding and generation of complex data.

Generative Models and Latent Space Representation

Generative models, a rapidly evolving area of AI, often leverage latent variables to create new data that mimics the characteristics of the training data. The “latent space” in these models represents a compressed, abstract space where meaningful variations in the data can be encoded.

Variational Autoencoders (VAEs)

Variational Autoencoders (VAEs) are a powerful class of generative models that use latent variables to learn a probabilistic mapping from input data to a lower-dimensional latent space and then back to the data space. The encoder maps each input to a distribution over the latent space (typically a Gaussian described by a mean and a variance), a latent vector is sampled from that distribution, and the decoder maps the sampled vector back to the data space to reconstruct the input. Training also keeps the encoder’s distributions close to a simple prior, usually a standard normal. As a result, VAEs can generate novel data samples by drawing latent vectors from the prior and passing them through the decoder. This is instrumental in generating images, music, and even synthetic datasets for training other models. The latent variables in a VAE capture the underlying factors of variation in the data, allowing for smooth interpolations and meaningful transformations within the generated content.
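Two small pieces of the VAE machinery can be written down without a neural network: sampling a latent vector from the encoder's Gaussian (the reparameterization trick) and the KL divergence that pulls that Gaussian toward a standard normal prior. The sketch assumes a diagonal Gaussian encoder output; the `mu` and `log_var` values are hypothetical stand-ins for what a trained encoder would produce.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1), where sigma = exp(0.5 * log_var)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
            for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """KL divergence from N(mu, diag(exp(log_var))) to the standard normal N(0, I)."""
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv
                     for m, lv in zip(mu, log_var))


rng = random.Random(0)

# Hypothetical encoder output for one input: a 2-dimensional latent Gaussian.
mu = [0.5, -1.0]
log_var = [-0.2, 0.1]

z = reparameterize(mu, log_var, rng)
print("sampled latent vector:", [round(x, 3) for x in z])
print("KL to prior:", round(kl_to_standard_normal(mu, log_var), 4))
```

Writing the sample as `mu + sigma * eps` keeps the randomness in `eps`, so gradients can flow through `mu` and `log_var` during training; the KL term is zero only when the encoder's distribution matches the prior exactly.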

Generative Adversarial Networks (GANs)

Generative Adversarial Networks (GANs) also rely on a latent space. A GAN consists of two neural networks: a generator and a discriminator. The generator takes a random noise vector drawn from a latent space as input and tries to produce synthetic data that resembles real data. The discriminator, in turn, tries to distinguish between real and generated data. Through adversarial training, the generator learns to produce increasingly realistic data by mapping latent vectors to convincing outputs. The latent space can also offer some control over the generated output: by moving the latent input along suitable directions, one can generate variations of the synthetic data, such as changing the expression on a generated face or altering the style of an image, although those directions usually have to be discovered after training.

Causal Inference and Latent Confounders

While often used for prediction and pattern discovery, latent variables are also increasingly important in the field of causal inference, helping researchers understand not just correlations but true cause-and-effect relationships.

The Challenge of Unobserved Confounders

In observational studies, identifying causal relationships can be challenging due to the presence of unobserved confounding variables. A confounder is a variable that influences both the presumed cause and the presumed effect, creating a spurious association. For instance, if we observe that ice cream sales and drowning incidents both increase in the summer, we might mistakenly infer a causal link. However, warm weather is the true confounder, influencing both ice cream consumption and swimming activity. If temperature is not recorded in the dataset, it acts as an unobserved (latent) confounder, and the association between the two measured variables cannot be taken at face value.
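A small simulation makes the ice cream example concrete. All coefficients and noise levels below are invented for illustration, and the outcome is a made-up "drowning risk" index rather than real incident counts. Temperature drives both variables and neither affects the other. The simulation has access to temperature, which is exactly what a real analysis with a latent confounder lacks, so the adjusted figure shows what would be seen if the confounder could be measured.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def residuals(ys, xs):
    """Residuals of a simple least-squares regression of ys on xs."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    return [y - (mean_y + slope * (x - mean_x)) for x, y in zip(xs, ys)]


rng = random.Random(0)

# Hypothetical data-generating process: temperature is the only common cause.
temperature = [rng.uniform(5.0, 35.0) for _ in range(2000)]
ice_cream_sales = [20.0 + 3.0 * t + rng.gauss(0.0, 10.0) for t in temperature]
drowning_risk = [0.5 + 0.1 * t + rng.gauss(0.0, 0.5) for t in temperature]

raw_correlation = pearson(ice_cream_sales, drowning_risk)
adjusted_correlation = pearson(
    residuals(ice_cream_sales, temperature),
    residuals(drowning_risk, temperature),
)
print("correlation ignoring temperature:", round(raw_correlation, 3))
print("correlation after adjusting for temperature:", round(adjusted_correlation, 3))
```

The raw correlation is strong even though there is no causal link, and it largely disappears once the shared dependence on temperature is removed from both variables.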

Advanced Modeling Techniques for Latent Confounders

Techniques like Structural Equation Modeling (SEM) and Bayesian networks are often employed to model latent variables, including latent confounders. By explicitly incorporating latent variables into these models, researchers can attempt to control for their influence and better isolate the causal effects of interest. This is critical in fields like digital health, where understanding the causal impact of interventions or behaviors on outcomes requires careful consideration of unmeasured factors. For example, in analyzing the impact of a new app feature on user well-being, latent variables might represent unobserved user personality traits or external life stressors that could influence both app usage and perceived well-being.

The Future of Latent Variables in Technology

As data becomes more abundant and computational power continues to grow, the role of latent variables in technology is set to expand dramatically. They are not merely statistical tools but foundational elements for building more intelligent, adaptive, and human-aligned technological systems.

Towards More Interpretable and Explainable AI

The “black box” nature of many complex AI models is a significant challenge. Latent variables offer a promising avenue towards more interpretable and explainable AI. By identifying and understanding the latent factors that drive a model’s decisions, we can gain insights into its reasoning process. This is crucial for building trust in AI systems, particularly in critical applications like healthcare and autonomous systems. Researchers are actively developing methods to visualize and analyze latent spaces, making the underlying mechanisms of AI more transparent.

Personalized and Adaptive Experiences at Scale

The ability to infer latent user characteristics allows for an unprecedented level of personalization. Future technologies will likely feature even more sophisticated latent variable models that dynamically adapt user interfaces, content delivery, and even product features based on an individual’s inferred needs, preferences, and cognitive states. This moves beyond simple rule-based personalization to truly dynamic and context-aware digital experiences.

Discovering Novel Insights and Innovations

Latent variable models are powerful tools for discovery. By uncovering hidden relationships and underlying structures in data that are not immediately apparent, they can lead to the identification of novel user segments, emerging trends, and unexpected patterns. This ability to extract deeper meaning from vast datasets will be instrumental in driving innovation across all sectors of the technology industry, from scientific research and product development to marketing and user experience design. The ongoing development of more efficient and robust latent variable modeling techniques promises to unlock even greater potential for understanding and shaping the digital world.
