In the modern landscape of digital entertainment and software engineering, the user experience is often defined by what we don’t see. When a player swings a sword in an action game, or a user taps a notification on a smartphone, the seamlessness of that interaction is governed by a fundamental concept in computational geometry: the hitbox. While the term originated in the early days of arcade gaming, it has evolved into a sophisticated pillar of software development, influencing everything from competitive eSports to the ergonomics of mobile application design.

A hitbox is essentially an invisible shape—or a collection of shapes—used by a computer program to detect real-time collisions. In the context of technology and software architecture, hitboxes serve as the bridge between the visual representation of an object and the mathematical logic that dictates how that object interacts with its environment. Without the precision of well-engineered hitboxes, the digital worlds we inhabit would feel unresponsive, chaotic, and fundamentally broken.

The Engineering Behind the Hitbox: How Software Defines Interaction

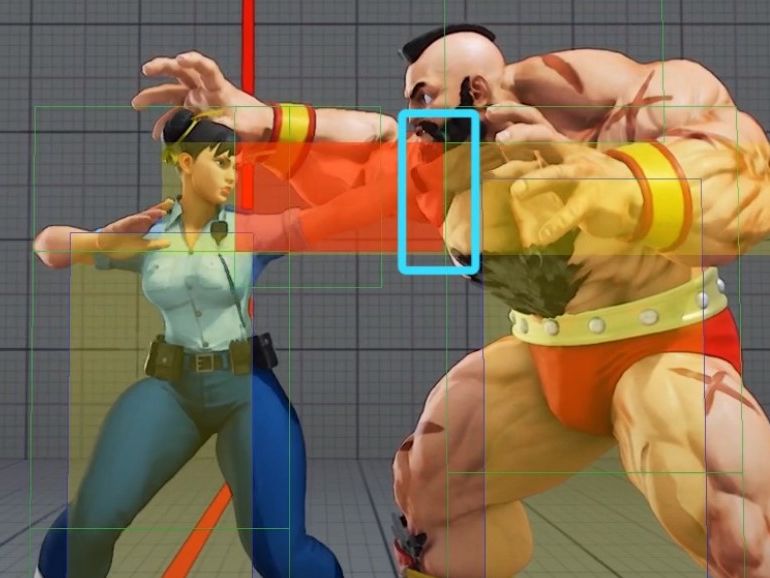

At its core, a hitbox is a data structure designed to simplify the complex geometry of a 3D or 2D model for the sake of computational efficiency. Modern character models in video games can consist of hundreds of thousands of polygons or more; testing every individual triangle for collisions in real time, for every object and every frame, would quickly exhaust the CPU time a game has available. To solve this, developers wrap these complex models in simple invisible shapes that the physics engine can test much faster.

Geometric Foundations: Spheres, Capsules, and Polygons

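Software engineers utilize several geometric primitives to create hitboxes. The simplest form is the Axis-Aligned Bounding Box (AABB): a rectangle in 2D, or a box in 3D, whose edges stay aligned with the coordinate axes. AABBs are incredibly “cheap” to test, since an overlap check needs only a few comparisons per axis, but they are imprecise because they do not rotate with the object; a rotated object needs a larger box that also encloses empty space around it.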
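As a minimal sketch of why AABB tests are so cheap, the overlap check below uses a 2D box stored as minimum and maximum corners. The `AABB` class, the `aabb_overlap` function, and the example boxes are illustrative names, not taken from any particular engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AABB:
    """Axis-aligned box stored as min/max corners (2D for brevity)."""
    min_x: float
    min_y: float
    max_x: float
    max_y: float


def aabb_overlap(a: AABB, b: AABB) -> bool:
    # Two boxes overlap only if their extents overlap on every axis.
    return (a.min_x <= b.max_x and b.min_x <= a.max_x and
            a.min_y <= b.max_y and b.min_y <= a.max_y)


# Hypothetical example boxes.
player = AABB(0.0, 0.0, 2.0, 2.0)
sword = AABB(1.5, 1.0, 3.0, 1.5)
arrow = AABB(5.0, 5.0, 6.0, 6.0)

print(aabb_overlap(player, sword))  # True
print(aabb_overlap(player, arrow))  # False
```

The whole test is four comparisons, which is why AABBs are a common first filter before more precise shapes are checked.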

To combat this, more advanced software uses Oriented Bounding Boxes (OBB), which can rotate with the object, or Capsules, which are often used for human characters. Capsules are particularly favored because their rounded surface prevents “snagging” on edges in the environment, allowing for more fluid movement in 3D spaces. Where maximum accuracy is required, some games use “pixel-perfect” (2D) or per-polygon (3D) collision detection, where the hitbox mimics the visual sprite or mesh almost exactly, though this comes at a significant cost to processing power.
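A capsule can be treated as a line segment with a radius, which keeps the test simple. The sketch below checks a capsule against a sphere by finding the point on the segment closest to the sphere's center; the function names and the example values are assumptions made for illustration.

```python
import math

Vec3 = tuple[float, float, float]


def closest_point_on_segment(p: Vec3, a: Vec3, b: Vec3) -> Vec3:
    """Return the point on segment a-b that is closest to p."""
    ab = tuple(bi - ai for ai, bi in zip(a, b))
    ap = tuple(pi - ai for ai, pi in zip(a, p))
    length_sq = sum(c * c for c in ab)
    if length_sq == 0.0:
        return a  # Degenerate capsule: the segment is a single point.
    # Project p onto the segment and clamp to its endpoints.
    t = sum(x * y for x, y in zip(ap, ab)) / length_sq
    t = max(0.0, min(1.0, t))
    return tuple(ai + t * c for ai, c in zip(a, ab))


def capsule_hits_sphere(seg_a: Vec3, seg_b: Vec3, capsule_radius: float,
                        center: Vec3, sphere_radius: float) -> bool:
    closest = closest_point_on_segment(center, seg_a, seg_b)
    return math.dist(closest, center) <= capsule_radius + sphere_radius


# Hypothetical character capsule: 2 units tall, radius 0.5.
feet, head = (0.0, 0.0, 0.0), (0.0, 2.0, 0.0)
print(capsule_hits_sphere(feet, head, 0.5, (0.8, 1.0, 0.0), 0.4))  # True
print(capsule_hits_sphere(feet, head, 0.5, (2.0, 1.0, 0.0), 0.4))  # False
```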

Collision Detection Algorithms

The “magic” of a hitbox lies in the algorithm that checks for overlaps. A busy scene can require thousands of overlap checks every frame, and testing every object against every other object grows quadratically with the object count. Most modern engines therefore use a two-phase approach:

- The Broad Phase: The system quickly identifies which objects are even remotely close to each other using spatial partitioning (like Octrees or Grids).

- The Narrow Phase: Once the system knows two objects are near, it performs the expensive, precise calculation to see if their hitboxes have actually intersected.

This tiered logic is a cornerstone of software optimization, ensuring that resources are only spent on calculations that matter to the user’s current experience.
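The two phases can be sketched with a uniform grid as the spatial partition. This is a simplified illustration under stated assumptions: boxes are 2D tuples, the cell size is arbitrary, and the names `broad_phase` and `find_collisions` are hypothetical rather than any engine's API.

```python
from collections import defaultdict
from itertools import combinations

Box = tuple[float, float, float, float]  # (min_x, min_y, max_x, max_y)


def overlaps(a: Box, b: Box) -> bool:
    """Narrow-phase test: exact AABB overlap."""
    return a[0] <= b[2] and b[0] <= a[2] and a[1] <= b[3] and b[1] <= a[3]


def broad_phase(boxes: dict[str, Box], cell_size: float) -> set[tuple[str, str]]:
    """Return candidate pairs that share at least one grid cell."""
    grid = defaultdict(list)
    for name, (x0, y0, x1, y1) in boxes.items():
        # Register the box in every cell it touches.
        for cx in range(int(x0 // cell_size), int(x1 // cell_size) + 1):
            for cy in range(int(y0 // cell_size), int(y1 // cell_size) + 1):
                grid[(cx, cy)].append(name)
    pairs = set()
    for names in grid.values():
        for a, b in combinations(sorted(names), 2):
            pairs.add((a, b))
    return pairs


def find_collisions(boxes: dict[str, Box], cell_size: float = 4.0) -> list[tuple[str, str]]:
    candidates = broad_phase(boxes, cell_size)
    # Only the candidate pairs reach the precise test.
    return sorted(p for p in candidates if overlaps(boxes[p[0]], boxes[p[1]]))


boxes = {
    "player": (0.0, 0.0, 1.0, 1.0),
    "enemy": (0.5, 0.5, 1.5, 1.5),
    "crate": (3.0, 3.0, 3.5, 3.5),
    "tower": (40.0, 40.0, 41.0, 41.0),
}
print(find_collisions(boxes))  # [('enemy', 'player')]
```

Here the distant tower never reaches the narrow phase at all, while the crate is tested and rejected because it shares a cell with the player and enemy but does not touch them.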

Precision vs. Performance: The Balancing Act in Game Development

One of the greatest challenges in technology development is the trade-off between accuracy and speed. In the world of “hitboxes,” this is often the difference between a game feeling “fair” and a game feeling “clunky.” If a hitbox is larger than the visual model, players experience “phantom hits”—being struck when they clearly dodged. Conversely, if the hitbox is too small, their attacks may pass right through an enemy, leading to frustration.

Computational Costs of Complex Hitboxes

As we push toward high-refresh-rate displays (144Hz and beyond), the window of time a CPU has to finish all of its per-frame work, collision detection included, shrinks to a few milliseconds: roughly 6.9 ms per frame at 144Hz, compared with 16.7 ms at 60Hz. If a developer chooses to use highly complex hitboxes that perfectly wrap around a character’s fingers, ears, and equipment, they risk “frame drops.”

Engineers must decide where to spend their “computational budget.” For example, in a massively multiplayer online (MMO) game where hundreds of characters are on screen, hitboxes are often simplified into basic cylinders or capsules. In a one-on-one fighting game like Street Fighter or Tekken, each character can carry many finely tuned hitboxes that change from one animation frame to the next, because the CPU only needs to track two entities. This strategic allocation of processing power is a hallmark of professional software optimization.
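A quick calculation shows why entity count drives this budget. Assuming a naive scheme in which every entity is tested against every other one (no spatial partitioning), the number of pair checks per frame is n(n-1)/2; the helper name below is illustrative.

```python
from math import comb


def pairwise_checks(entity_count: int) -> int:
    """Number of unique entity pairs in a naive all-pairs collision pass."""
    return comb(entity_count, 2)


for n in (2, 200, 500):
    print(n, pairwise_checks(n))
# 2 1
# 200 19900
# 500 124750
```

With two fighters there is a single pair to examine, so each can afford many detailed hitboxes; with hundreds of characters, every extra shape per character is multiplied across thousands of pairs.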

Netcode and Latency: The “Favor the Shooter” Paradigm

In online software, hitboxes are further complicated by latency (lag). Because data takes time to travel from a player’s computer to a server, what one player sees is often tens of milliseconds behind the actual state of the game.

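To solve this, developers use techniques such as Lag Compensation and Rollback Netcode. With lag compensation, the server essentially “rewinds” the hitbox positions to match the moment a player took an action. This ensures that if you clicked on a target on your screen, the software validates that hit, even if the target has since moved on the server’s clock; this is the “favor the shooter” approach. Rollback netcode, common in fighting games, applies a related idea: each client predicts remote inputs and, when the real inputs arrive, rewinds and re-simulates the affected frames. This intersection of networking and geometry is one of the most complex areas of modern software engineering.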
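The server-side “rewind” can be sketched as a history buffer of past hitbox positions that is queried at the time the shot was actually fired. Everything here is a simplifying assumption: positions are one-dimensional, the latency is known exactly, and `HitboxHistory` and `validate_shot` are hypothetical names.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass
class HitboxHistory:
    """Hypothetical per-entity buffer of (timestamp_ms, x_position) snapshots."""
    times: list[int] = field(default_factory=list)
    positions: list[float] = field(default_factory=list)

    def record(self, time_ms: int, x: float) -> None:
        # Snapshots are assumed to arrive in increasing time order.
        self.times.append(time_ms)
        self.positions.append(x)

    def position_at(self, time_ms: int) -> float:
        # Latest snapshot at or before the requested time
        # (falls back to the oldest snapshot for earlier times).
        i = bisect_right(self.times, time_ms) - 1
        return self.positions[max(i, 0)]


def validate_shot(history: HitboxHistory, shot_x: float, half_width: float,
                  server_now_ms: int, latency_ms: int) -> bool:
    # Rewind to the moment the shooter actually saw and clicked.
    fired_at = server_now_ms - latency_ms
    target_x = history.position_at(fired_at)
    return abs(shot_x - target_x) <= half_width


target = HitboxHistory()
for t, x in [(0, 0.0), (50, 1.0), (100, 2.0), (150, 3.0)]:
    target.record(t, x)

# The shooter aimed at x=1.2 with 100 ms of latency.
print(validate_shot(target, 1.2, 0.5, server_now_ms=150, latency_ms=100))  # True
# Without rewinding, the target has already moved to x=3.0.
print(validate_shot(target, 1.2, 0.5, server_now_ms=150, latency_ms=0))    # False
```

A production system would also interpolate between snapshots and cap how far back it is willing to rewind; both are omitted here for brevity.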

Beyond Combat: Hitboxes in User Interface and Digital Navigation

While the term is synonymous with gaming, the logic of the hitbox is ubiquitous in all forms of technology, particularly in User Interface (UI) and User Experience (UX) design. Every button on your smartphone, every link on a website, and every icon in a professional software suite like Adobe Photoshop has an invisible “hitbox”—often referred to in web development as a “hit area” or “bounding box.”

The “Fitts’s Law” Connection

In the tech world, UI designers rely on Fitts’s Law, a predictive model of human movement which states that the time required to move to a target grows with the distance to the target and shrinks as the target gets larger. In practical software terms, this means that the “hitbox” for a critical button (like “Save” or “Submit”) can usefully be larger than its visual icon.
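One widely used form of the law (the Shannon formulation) is MT = a + b · log2(D / W + 1), where D is the distance to the target and W is its width. The sketch below uses that form; the constants `a` and `b` are placeholders, since real values have to be fitted from measurements for a given device and user group.

```python
import math


def fitts_movement_time(distance: float, width: float,
                        a: float = 0.1, b: float = 0.15) -> float:
    """Predicted movement time in seconds (a and b are placeholder constants)."""
    index_of_difficulty = math.log2(distance / width + 1)
    return a + b * index_of_difficulty


# Same distance, two hit-area widths: the larger target is predicted to be faster.
small = fitts_movement_time(distance=300, width=20)
large = fitts_movement_time(distance=300, width=44)
print(large < small)  # True
```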

A professional app developer might make the touchable area of a 20×20 point icon actually 44×44 points, the minimum touch target size recommended in Apple’s Human Interface Guidelines. This invisible padding ensures that users with different finger sizes or those using the app in a moving vehicle can still interact with the software reliably. This is a direct application of hitbox theory to improve digital accessibility.
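The padding idea can be sketched as a rectangle that is grown around the icon's center before hit testing. The `Rect` class and its methods are illustrative only; real UI toolkits expose their own mechanisms for enlarging touch targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rect:
    x: float
    y: float
    width: float
    height: float

    def contains(self, px: float, py: float) -> bool:
        return (self.x <= px <= self.x + self.width and
                self.y <= py <= self.y + self.height)

    def expanded_to(self, min_size: float) -> "Rect":
        """Grow symmetrically around the center to at least min_size per side."""
        new_w = max(self.width, min_size)
        new_h = max(self.height, min_size)
        return Rect(self.x - (new_w - self.width) / 2,
                    self.y - (new_h - self.height) / 2,
                    new_w, new_h)


icon = Rect(100, 100, 20, 20)        # What the user sees.
touch_target = icon.expanded_to(44)  # What the user can actually hit.

tap = (92, 110)  # Slightly left of the visible icon.
print(icon.contains(*tap))          # False
print(touch_target.contains(*tap))  # True
```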

Improving Accessibility Through Intelligent Hitbox Design

For users with motor impairments, the precision required to click small targets can be a barrier. One way to address this is with what might be called “Dynamic Hitboxes.” If a user’s cursor is moving rapidly toward a menu, the software can temporarily expand the “invisible” hit area of that menu to catch the click, even if the user is slightly off-target. This use of proactive collision logic can turn a frustrating software experience into an intuitive one.
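One possible way to approximate this behavior is to add padding that scales with cursor speed, but only while the cursor is heading toward the target. This is a speculative sketch: the `dynamic_margin` function, the `gain` factor, and the `max_margin` cap are all invented for illustration and do not describe any specific operating system.

```python
import math


def dynamic_margin(cursor: tuple[float, float],
                   velocity: tuple[float, float],
                   target_center: tuple[float, float],
                   gain: float = 0.02,
                   max_margin: float = 24.0) -> float:
    """Extra hit-area padding in pixels; zero unless moving toward the target."""
    to_target = (target_center[0] - cursor[0], target_center[1] - cursor[1])
    # A positive dot product means the velocity points toward the target's side.
    approaching = velocity[0] * to_target[0] + velocity[1] * to_target[1] > 0
    if not approaching:
        return 0.0
    speed = math.hypot(*velocity)  # Pixels per second.
    return min(max_margin, gain * speed)


print(dynamic_margin((0, 0), (-800, 0), (400, 0)))  # 0.0 (moving away)
print(dynamic_margin((0, 0), (5000, 0), (400, 0)))  # 24.0 (capped)
```

Capping the margin matters in practice, since an unbounded hit area would start stealing clicks intended for neighboring controls.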

The Future of Hitboxes: AI and Real-Time Physics Simulations

As we look toward the future of technology, hitboxes are evolving from static shapes into dynamic, intelligent systems. The rise of Artificial Intelligence (AI) and Machine Learning (ML) is beginning to change how software perceives “touch” and “impact.”

Machine Learning in Collision Mapping

Traditional hitboxes are hand-authored by artists and designers or generated by simple algorithms. Automated tools can also analyze a 3D mesh and generate a compact set of hitboxes to represent that shape, typically through “convex decomposition,” which breaks a complex shape into a series of simpler convex parts. By using ML to guide and optimize this process, developers can create games and simulations that are more realistic without sacrificing performance on mobile or lower-end hardware.

The Role of Ray Tracing in Dynamic Hitbox Calculation

Ray tracing is often discussed in the context of lighting and reflections, but its underlying operation, calculating the intersection of a ray with a surface, is itself a collision test; games have long cast simple rays against hitboxes to resolve instant-hit weapons. As hardware becomes more capable of real-time ray tracing, we may be moving toward a world where the “hitbox” is no longer a separate, simplified shape. Instead, the software can check for collisions against the actual high-resolution geometry of the object in real time.
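Testing a ray against real geometry ultimately comes down to ray-triangle intersection. The sketch below uses the Möller–Trumbore algorithm, a standard choice for this test, written in plain Python for readability; it is not meant to reflect how any particular engine or GPU implements it, and the helper names are illustrative.

```python
Vec3 = tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def ray_hits_triangle(origin: Vec3, direction: Vec3,
                      v0: Vec3, v1: Vec3, v2: Vec3, eps: float = 1e-9):
    """Return the distance along the ray to the hit point, or None on a miss."""
    edge1, edge2 = sub(v1, v0), sub(v2, v0)
    pvec = cross(direction, edge2)
    det = dot(edge1, pvec)
    if abs(det) < eps:
        return None  # Ray is parallel to the triangle's plane.
    inv_det = 1.0 / det
    tvec = sub(origin, v0)
    u = dot(tvec, pvec) * inv_det  # First barycentric coordinate.
    if u < 0.0 or u > 1.0:
        return None
    qvec = cross(tvec, edge1)
    v = dot(direction, qvec) * inv_det  # Second barycentric coordinate.
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(edge2, qvec) * inv_det
    return t if t > eps else None  # Ignore hits behind the ray origin.


tri = ((0.0, 0.0, 5.0), (2.0, 0.0, 5.0), (0.0, 2.0, 5.0))
print(ray_hits_triangle((0.5, 0.5, 0.0), (0.0, 0.0, 1.0), *tri))  # 5.0
print(ray_hits_triangle((3.0, 3.0, 0.0), (0.0, 0.0, 1.0), *tri))  # None
```

A mesh with many triangles would still need an acceleration structure, such as a bounding volume hierarchy, so that each ray is tested against only a small subset of them.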

This shift will be particularly revolutionary for Virtual Reality (VR) and Augmented Reality (AR). In a VR environment, the “hitboxes” of your virtual hands must be incredibly precise to mimic the feeling of picking up a pen or turning a doorknob. The integration of haptic feedback with these advanced hitboxes will define the next generation of immersive tech.

Conclusion

The hitbox is a perfect example of the “hidden” technology that makes our digital lives possible. It is a bridge between the abstract world of mathematics and the visceral world of human interaction. Whether it is ensuring a fair match in a professional eSports tournament or making sure a “Buy Now” button is easy to tap on a mobile screen, the hitbox is a silent guardian of the user experience.

As software continues to grow in complexity and hardware becomes more capable, the hitbox will continue to evolve. From the simple rectangles of the 1980s to the AI-optimized, ray-traced volumes of tomorrow, this invisible architecture remains one of the most vital tools in the software developer’s arsenal. To understand what a hitbox is, and how it functions, is to understand the very DNA of interactive technology.
