At first glance, the question “what is 3 times 2 3?” appears disarmingly simple, a basic arithmetic problem posed perhaps in an elementary school classroom. Read as a chain of multiplications, the answer is eighteen (3 * 2 * 3 = 18). Yet in the vast and rapidly evolving landscape of technology, this seemingly trivial query serves as a useful touchstone, an example of the fundamental building blocks upon which the most sophisticated computational systems are constructed. It encapsulates the essence of processing information, executing algorithms, and providing instant, accurate answers: a core function that underpins everything from the simplest calculator app to the most advanced artificial intelligence.

This article delves beyond the elementary solution, exploring how such a basic mathematical operation illuminates critical aspects of technology: the evolution of computational devices, the sophisticated mechanisms of AI that interpret and solve such queries, the architectural principles of software and algorithms, and the exciting future where even fundamental operations are being re-imagined. The journey from manually calculating “3 times 2 3” to having a digital assistant provide the answer in milliseconds is a testament to humanity’s relentless pursuit of computational efficiency and intelligence.

From Manual Computation to Automated Answers: A Brief History

The human desire to solve “3 times 2 3” and countless other arithmetic problems efficiently dates back millennia. Early civilizations devised ingenious tools and methods, each epoch pushing the boundaries of what was considered fast and accurate. The trajectory from these rudimentary beginnings to today’s instantaneous digital solutions forms the bedrock of our modern technological prowess.

The Dawn of Calculation: Abacus, Slide Rules, Early Mechanical Calculators

Long before silicon chips and gigahertz processors, the need for reliable calculation led to the invention of tools like the abacus, used for thousands of years to perform arithmetic operations with surprising speed and accuracy. Later, the 17th century brought two significant leaps: the slide rule, and the mechanical calculators built by pioneers such as Blaise Pascal and Gottfried Wilhelm Leibniz. The mechanical calculators, intricate arrangements of gears and levers, could automate basic operations, relieving humans of tedious, error-prone manual calculations. For a problem like “3 times 2 3,” these tools represented the cutting edge, offering a structured, albeit still largely manual, way to arrive at eighteen. They were precursors, demonstrating the value of mechanizing computation.

The Digital Revolution: Electronic Computers and the Speed of Thought

The 20th century ushered in the true digital revolution with the advent of electronic computers. Machines like ENIAC and UNIVAC, massive and power-hungry, transformed computation from a mechanical process into an electronic one. The ability to represent numbers and operations as electrical signals, processed at incredible speeds, meant that “3 times 2 3” could be solved almost instantaneously. The shift from mechanical to electronic digital computation not only vastly increased processing speed but also made programmability practical, allowing machines to perform a sequence of operations automatically. This era laid the groundwork for the modern digital devices we take for granted, where basic arithmetic is handled with such ease that we rarely give it a second thought.

Software’s Role: Abstracting Complexity, Making Math Accessible

While hardware provides the raw computational power, it is software that truly unlocks its potential. Programming languages and operating systems emerged to translate human-readable instructions into machine code, making complex calculations accessible to a wider audience. Today, software applications, from basic calculators on smartphones to sophisticated spreadsheets and statistical packages, abstract away the underlying hardware complexities. When you type “3 * 2 * 3” into a search bar or a programming interpreter, software interprets your query, sends it to the processor, and displays the result – eighteen – in a blink. This abstraction allows users to focus on the problem at hand, rather than the intricate dance of electrons and logic gates beneath the surface. Software is the friendly interface that makes the immense power of computation approachable and practical for everyday use.
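The interpretation step described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular calculator or search bar works: the function name `evaluate` and the choice to support only the four basic operators are assumptions made for the example.

```python
import ast
import operator

# Map syntax-tree operator types to the arithmetic functions they stand for.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

def evaluate(expression: str):
    """Evaluate a string containing only numbers and the + - * / operators."""
    def walk(node):
        # A literal number evaluates to itself.
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        # A binary operation evaluates both sides, then applies the operator.
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](walk(node.left), walk(node.right))
        raise ValueError("unsupported expression")

    # Parse the text into a syntax tree, then reduce the tree to a value.
    return walk(ast.parse(expression, mode="eval").body)

print(evaluate("3 * 2 * 3"))  # prints 18
```

The text is first turned into a tree (`(3 * 2) * 3`) and only then reduced to a number, which is the same parse-then-execute split that larger interpreters follow.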

AI and the Interpretation of Basic Queries

The simple query “what is 3 times 2 3?” is not just a test of a machine’s mathematical ability; it’s a window into the sophisticated world of Artificial Intelligence (AI), particularly its capacity to understand, process, and respond to human language. Modern AI systems, from voice assistants to search engines, leverage advanced techniques to interpret such queries, demonstrating an intelligence that goes beyond mere calculation.

Natural Language Processing (NLP): Understanding “What is…”

At the heart of an AI’s ability to answer “what is 3 times 2 3?” lies Natural Language Processing (NLP). NLP is a branch of AI that enables computers to understand, interpret, and generate human language. When a user asks a question in plain English, an NLP model first parses the sentence, identifying keywords (“what,” “is,” “times”) and numerical entities (“3,” “2,” “3”). It disambiguates the intent, recognizing that “times” in this context signifies multiplication, not temporal duration or repetition. This process involves complex algorithms that have been trained on vast datasets of human speech and text, allowing the AI to grasp the semantic meaning and convert it into a structured query that its computational core can execute. The seemingly straightforward understanding of “what is 3 times 2 3” is actually a triumph of linguistic AI.
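To make the parsing step concrete, here is a deliberately tiny, rule-based sketch. Production NLP systems rely on trained statistical models rather than hand-written rules, so this only illustrates the idea of turning a sentence into a structured query. The function names are hypothetical, and treating every number in the sentence as a factor is an assumption that follows this article's reading of the question.

```python
import re
from math import prod

def parse_times_query(query: str) -> list[int]:
    """Return the integer operands of a 'what is A times B C' style query."""
    text = query.lower()
    # Intent detection: the keyword "times" is taken to signal multiplication.
    if "times" not in text:
        raise ValueError("no multiplication intent found")
    # Entity extraction: pull out every run of digits as an operand.
    return [int(token) for token in re.findall(r"\d+", text)]

def answer(query: str) -> int:
    """Convert the query into operands, then multiply them together."""
    return prod(parse_times_query(query))

print(parse_times_query("what is 3 times 2 3?"))  # prints [3, 2, 3]
print(answer("what is 3 times 2 3?"))             # prints 18
```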

Algorithmic Efficiency: The Instant Result

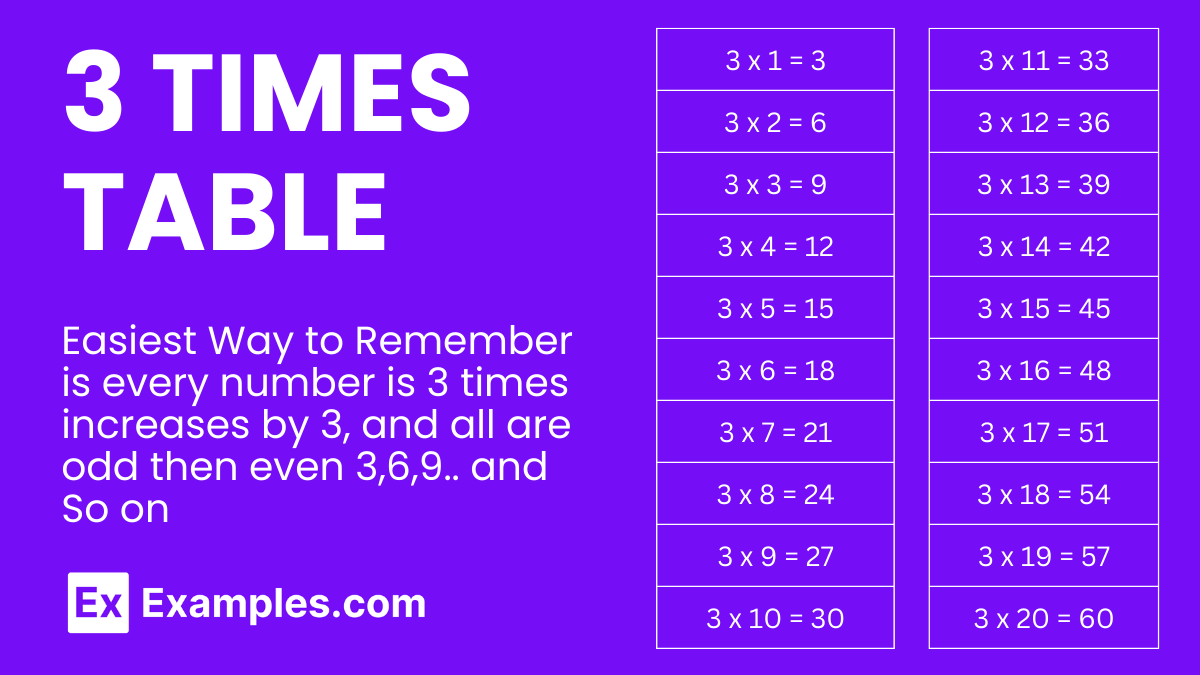

Once the NLP component has successfully translated the human query into a machine-executable instruction (e.g., calculate(3 * 2 * 3)), the task falls to the core algorithmic engine. Modern processors are incredibly efficient at performing arithmetic operations. For a basic multiplication problem, the computation is near-instantaneous, often measured in nanoseconds. The challenge isn’t the arithmetic itself, but the efficiency of the algorithm that retrieves, executes, and presents the result. Search engines, for instance, have highly optimized algorithms designed to identify mathematical expressions within queries, perform the calculation directly, and display the answer prominently, often before even showing search results. This seamless, rapid delivery of the answer “18” is a testament to decades of optimization in both hardware and software.
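A rough way to see how cheap the arithmetic itself is: the sketch below uses Python's standard `timeit` module to average the cost of one evaluation over many runs. The helper name `nanoseconds_per_product` is made up for this example, and the figure it reports includes interpreter overhead, so it overstates what the hardware multiplication alone costs and will vary from machine to machine.

```python
import timeit

def nanoseconds_per_product(runs: int = 1_000_000) -> float:
    """Average time for one `a * b * c` evaluation, in nanoseconds."""
    total_seconds = timeit.timeit(
        "a * b * c",
        setup="a, b, c = 3, 2, 3",  # variables, so the product is computed on every run
        number=runs,
    )
    return total_seconds / runs * 1e9

print(f"{nanoseconds_per_product():.1f} ns per evaluation")
```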

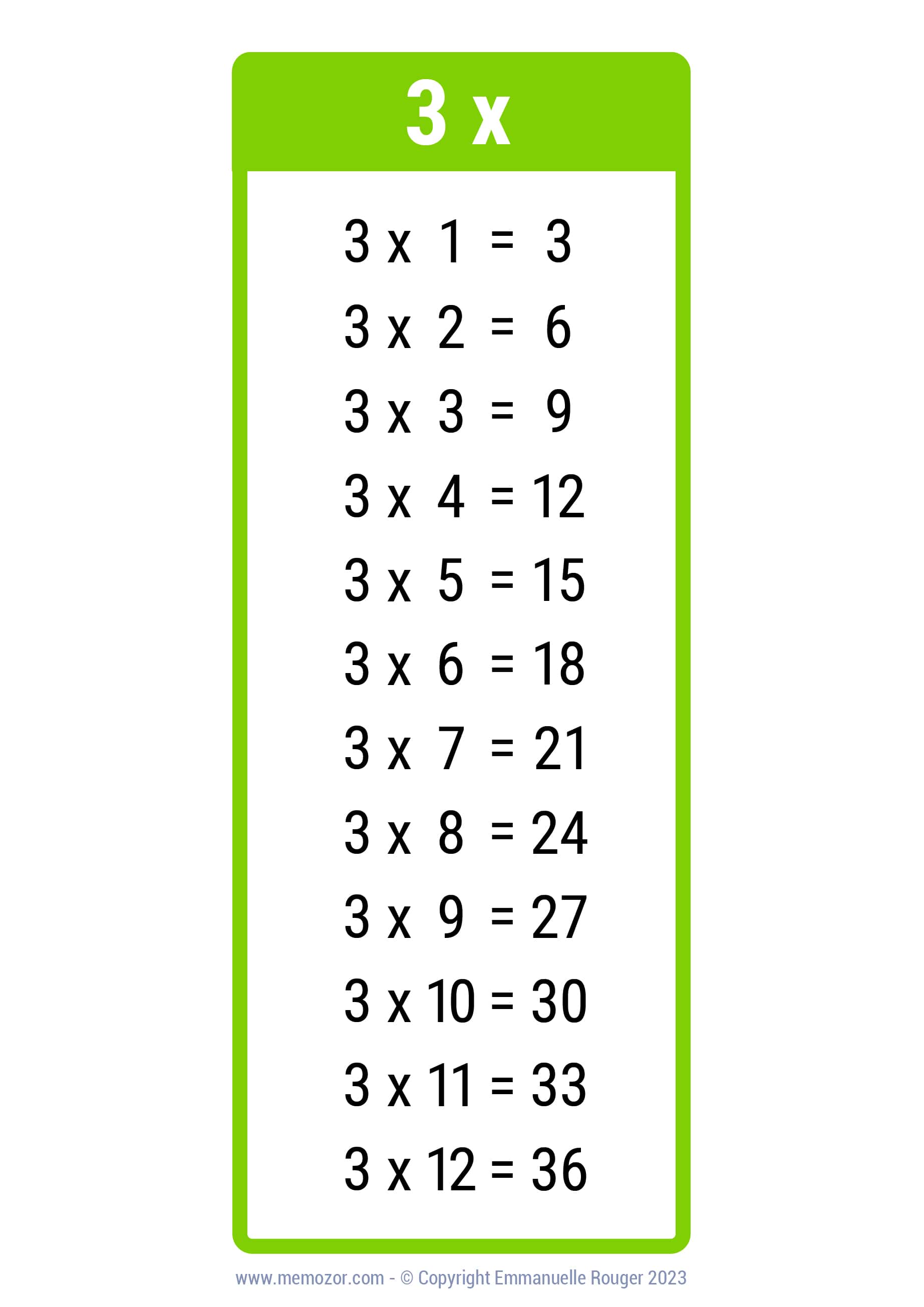

Beyond Simple Math: Contextualizing and Expanding Queries

While providing the direct answer “18” is a primary function, advanced AI goes a step further by contextualizing and sometimes expanding upon such simple queries. If a user frequently asks mathematical questions, the AI might infer an interest in educational content or specific mathematical tools. Some AI assistants might even offer related information, such as “Do you want to know about multiplication tables?” or “Should I convert this into another unit?” This ability to move beyond a literal answer and engage with the user’s potential underlying intent transforms a mere calculator into an intelligent assistant. It highlights AI’s growing capacity for inferential reasoning, even from the most basic input, demonstrating its potential to be a proactive problem-solver rather than just a reactive responder.

The Building Blocks of Software and Algorithms

The answer to “what is 3 times 2 3?” might be simple, but the principles that allow software and algorithms to arrive at it are fundamental to all computational tasks. Every complex application, from gaming engines to financial modeling software, is built upon a myriad of these primitive operations, meticulously arranged and optimized.

Primitive Operations: The “3 Times 2 3” of Code

At the lowest level of computing, everything breaks down into primitive operations: addition, subtraction, multiplication, division, comparisons, logical operations (AND, OR, NOT), and data movement. “3 times 2 3” uses one such primitive operation, multiplication, applied twice in sequence. Just as atoms are the basic building blocks of matter, these primitive operations are the fundamental units of computation. Every line of code in any programming language, no matter how high-level, eventually translates into a sequence of these basic operations that the computer’s central processing unit (CPU) can execute. Understanding this foundational layer is crucial for software developers, as the efficiency of a complex program often hinges on how effectively these primitive operations are sequenced and optimized.
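As an illustration of how one operation can be composed from simpler ones, the sketch below multiplies using only additions, bit shifts, and bit tests (the classic shift-and-add method). Modern CPUs have dedicated multiplier circuits, so this is not a description of what your processor does for 3 * 2 * 3; the function name `multiply` is chosen for the example.

```python
def multiply(a: int, b: int) -> int:
    """Multiply two non-negative integers using only shifts, bit tests, and adds."""
    if a < 0 or b < 0:
        raise ValueError("this sketch handles non-negative integers only")
    result = 0
    while b:
        if b & 1:      # lowest bit of b is set, so the current multiple of a contributes
            result += a
        a <<= 1        # double a for the next bit position
        b >>= 1        # move on to the next bit of b
    return result

print(multiply(multiply(3, 2), 3))  # prints 18
```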

Data Structures and Efficiency: Optimizing for Speed

While primitive operations define what a computer can do, data structures dictate how information is organized and accessed. Efficient data structures are crucial for optimizing the performance of algorithms, especially when dealing with vast amounts of data. For “3 times 2 3,” the operands 3 and 2 are simple scalar values, easily held in processor registers. However, for more complex calculations involving arrays, lists, or databases, the choice of data structure significantly impacts how quickly the computer can retrieve operands and store results. A well-designed algorithm, combined with appropriate data structures, can perform millions of operations per second, making the processing of “3 times 2 3” (or much larger problems) virtually instantaneous. Without efficient data management, even basic computations would grind to a halt when scaled up.
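The effect of data structure choice can be shown with a hypothetical table of precomputed products stored two ways. Both lookups return the same answer, but the list version may have to scan every entry while the dictionary version jumps to the entry by hashing the key. The table contents and function names are invented for this sketch.

```python
# Hypothetical table of precomputed products for operands 0..99.
pairs = [((a, b), a * b) for a in range(100) for b in range(100)]

as_list = pairs        # a list of (key, value) tuples
as_dict = dict(pairs)  # the same data indexed by key

def lookup_list(key):
    """Linear scan: cost grows with the number of entries."""
    for k, v in as_list:
        if k == key:
            return v
    raise KeyError(key)

def lookup_dict(key):
    """Hash lookup: cost stays roughly constant as the table grows."""
    return as_dict[key]

print(lookup_list((6, 3)), lookup_dict((6, 3)))  # prints 18 18
```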

Scalability: Handling Millions of “3 Times 2 3” Simultaneously

Modern computing is not just about solving one “3 times 2 3” problem; it’s about solving millions, billions, or even trillions of similar (or far more complex) problems simultaneously and continuously. This capability is known as scalability. Cloud computing platforms, for example, distribute computational tasks across vast networks of servers, allowing thousands of users to perform calculations, stream content, or run applications concurrently. The principles that make a single multiplication efficient are extended to parallel processing, distributed computing, and multi-threading, enabling systems to handle immense workloads. Whether it’s processing real-time financial transactions, rendering complex 3D graphics, or running large-scale scientific simulations, the ability to scale up basic operations like “3 times 2 3” is what defines the power and reach of contemporary technology.
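The pattern of spreading many small problems across workers can be sketched with Python's standard `concurrent.futures` module. This is a single-machine toy, not a model of a cloud platform: the names `solve` and `solve_many` and the worker count are assumptions. In standard CPython builds the global interpreter lock keeps threads from speeding up pure arithmetic, so the example shows how work is distributed and collected rather than a real performance gain.

```python
from concurrent.futures import ThreadPoolExecutor
from math import prod

def solve(factors):
    """Solve one problem: multiply its factors together."""
    return prod(factors)

def solve_many(problems, workers=4):
    """Hand the problems to a pool of workers and collect results in order."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(solve, problems))

problems = [(3, 2, 3)] * 1000  # a thousand copies of the same question
results = solve_many(problems)
print(results[0], len(results))  # prints 18 1000
```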

The Future of Computational Simplicity and Sophistication

The seemingly simple query “what is 3 times 2 3?” continues to resonate even as we look towards the horizon of technological advancement. Future innovations, from quantum computing to pervasive AI, promise to further redefine how we perform and perceive even the most fundamental operations.

Quantum Computing’s Promise: Redefining “Times”

Quantum computing represents a paradigm shift that could change how certain calculations are performed. Unlike classical computers that use bits (0 or 1), quantum computers use qubits, which can exist in a combination of states simultaneously (superposition) and can be correlated with one another in ways that have no classical counterpart (entanglement). While current quantum computers are still in their infancy, they hold the potential to solve certain types of problems far faster than classical computers, in some cases exponentially so, particularly in areas such as complex simulations, optimization, and cryptography. For a basic operation like “3 times 2 3,” classical computers are already more than fast enough, and quantum hardware offers no practical advantage. However, in the context of vastly more complex problems where multiplication is one step in an otherwise intractable computation, quantum algorithms could open up entirely new avenues, pushing the boundaries of what is possible.

Everyday AI Integration: Seamless Problem Solving

In the near future, the ability of AI to answer queries like “what is 3 times 2 3?” will become even more seamless and integrated into our daily lives. AI will move beyond dedicated devices like smartphones and smart speakers, becoming embedded in our environments, from smart homes to autonomous vehicles. Imagine asking a question aloud in your kitchen, and an integrated AI system instantly displays the answer on a smart screen or speaks it back to you. This pervasive integration will not only make basic computation more convenient but also empower users to tackle more complex problems by leveraging AI’s ability to combine multiple primitive operations with contextual understanding and access to vast datasets. The intelligence to interpret and solve simple queries will be a silent, ubiquitous force, enhancing our productivity and knowledge.

Digital Literacy: Understanding the “How” Behind the “What”

As technology becomes more powerful and opaque, understanding the “how” behind the “what” for even simple queries like “3 times 2 3” becomes increasingly important. Digital literacy in the future will involve not just knowing how to use technology, but also having a foundational grasp of the computational principles that govern it. This understanding fosters critical thinking about AI’s capabilities and limitations, promotes ethical considerations in software development, and empowers individuals to be creators, not just consumers, of technology. Appreciating that an instant answer of “18” is the culmination of centuries of innovation, sophisticated algorithms, and immense processing power helps to demystify technology and build a more informed society.

Conclusion

The seemingly straightforward query “what is 3 times 2 3?” belies a profound story of human ingenuity, technological evolution, and the relentless pursuit of computational intelligence. From the abacus to quantum bits, from simple mechanical gears to sophisticated AI algorithms, the journey to solve this basic math problem efficiently illuminates the very foundations of modern technology. It underscores the critical role of primitive operations, the power of software abstraction, the marvels of natural language processing, and the vision for a future where computation is both incredibly powerful and seamlessly integrated. As technology continues to advance, the ability to understand, execute, and scale fundamental operations will remain the bedrock upon which new paradigms of innovation are built, making the simple answer of “eighteen” a symbol of boundless computational potential.
