At first glance, the question “what is 2/1 as a decimal” seems like an elementary math problem. The mathematical answer is straightforward: 2 divided by 1 is 2, and expressed as a decimal, it is 2.0. However, in the realm of technology, software engineering, and computational logic, the transition from a fraction to a decimal representation is a foundational concept that governs how machines process data, how algorithms make decisions, and how modern software architectures maintain numerical integrity.

In the digital landscape, every number—whether a simple integer like 2 or a decimal like 2.0—undergoes a complex journey of translation. This article explores the technical nuances of numerical representation, the architectural implications of floating-point arithmetic, and why understanding the “decimal” side of simple fractions is critical for developers and tech professionals.

The Fundamentals of Numerical Representation in Computing

When we ask what 2/1 is as a decimal, we are essentially performing a type conversion. In mathematics, 2 and 2.0 are identical in value. In the world of technology and programming, however, they are often treated as two entirely different entities: the Integer and the Floating-Point number.

From Fractions to Decimals: The Logic of Division

In computer science, division is more than just a mathematical operation: its result depends on the data types of its operands. When a compiler or interpreter encounters $2/1$, it must determine the context of the operation. In many statically typed languages (like C or Java), dividing two integers performs “integer division,” which discards the fractional part. If you divide 5 by 2 in integer division, the result is 2, not 2.5.
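A minimal sketch of the distinction, written in Python purely for illustration. Python's `//` operator floors the result, which matches the truncating integer division of C and Java for non-negative operands; the variable names are illustrative.

```python
# Floor division keeps only the whole-number part of the quotient.
integer_result = 5 // 2

# True division always produces a float, even when the result is whole.
float_result = 5 / 2
two_over_one = 2 / 1

print(integer_result)               # 2
print(float_result)                 # 2.5
print(two_over_one)                 # 2.0
print(type(two_over_one).__name__)  # float
```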

However, when we explicitly ask for the decimal representation (2.0), we are signaling the system to use “floating-point” logic. This ensures that the precision of the result is preserved. For a value like $2/1$, the system recognizes that the denominator is 1, meaning the magnitude of the numerator is unchanged, but the type of data shifts to accommodate potential fractional values in the future.

Integer vs. Floating-Point: How Computers Store 2.0

To a human, 2 and 2.0 are the same. To a computer’s memory, they look very different. An integer (2) is stored as a simple binary string (e.g., 00000010 in an 8-bit system). A floating-point number (2.0) is stored according to the IEEE 754 standard, which divides the bits into a sign bit, an exponent, and a mantissa (or significand).
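The two layouts can be inspected directly. The sketch below assumes a 64-bit IEEE 754 double (the format behind Python's `float` on mainstream platforms) and uses the standard `struct` module to expose the raw bits; the variable names are illustrative.

```python
import struct

# The integer 2 as an 8-bit binary string.
int_bits = format(2, "08b")

# Pack 2.0 as a big-endian IEEE 754 double and view its 64 bits.
raw = struct.pack(">d", 2.0)
float_bits = format(int.from_bytes(raw, "big"), "064b")

sign = float_bits[0]          # 1 sign bit
exponent = float_bits[1:12]   # 11 exponent bits, biased by 1023
mantissa = float_bits[12:]    # 52 fraction bits

print(int_bits)                          # 00000010
print(sign, exponent, int(mantissa, 2))  # 0 10000000000 0
```

The exponent field holds 1024, which is 1 after removing the bias of 1023, and the fraction bits are all zero, so the stored value is exactly 1.0 × 2^1 = 2.0.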

Understanding this distinction matters for software performance. On hardware without a dedicated floating-point unit, such as many small microcontrollers, integer operations are considerably faster and consume less power than floating-point operations. However, the floating-point form (2.0) is necessary for applications that work with fractional values or a very wide range of magnitudes, such as graphics rendering engines, GPS navigation, and machine learning models.

Why Precision Matters in Modern Software Development

The transition from a fraction to a decimal is where many technical bugs are born. While $2/1$ results in a clean $2.0$, other fractions do not translate as cleanly into the binary systems used by modern gadgets and software.

The Pitfalls of Binary Rounding Errors

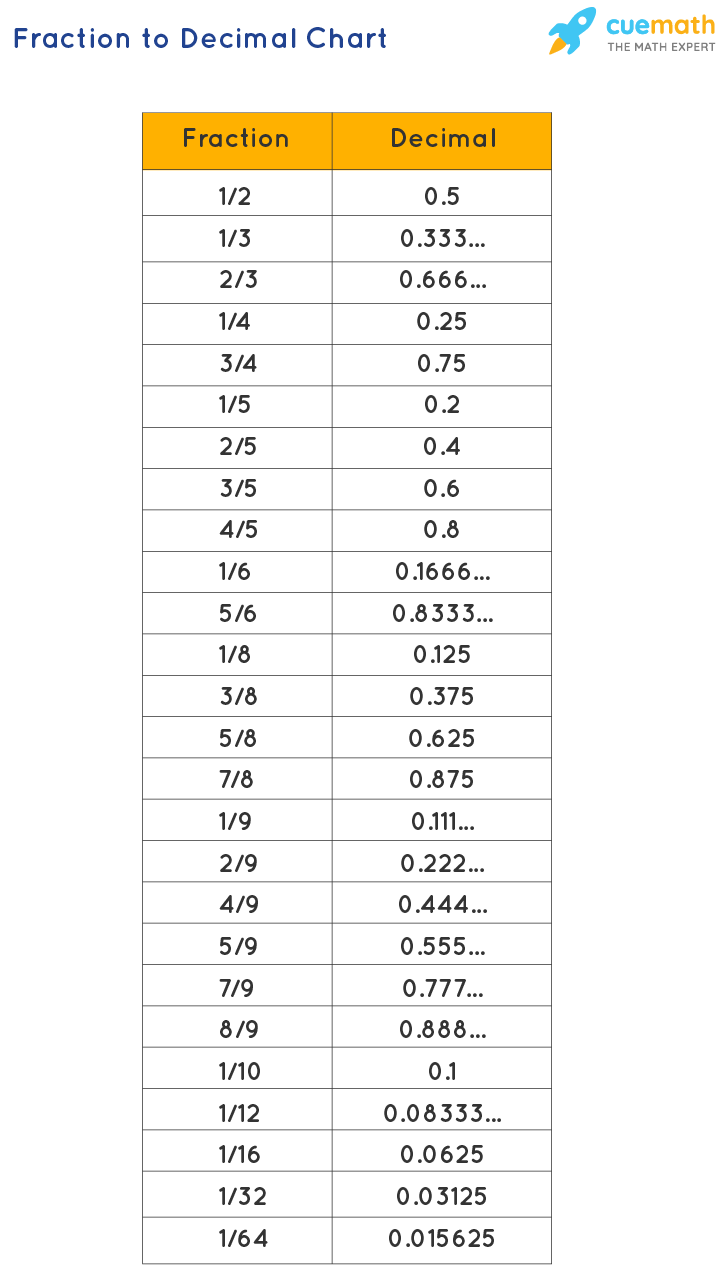

Computers use base-2 (binary), while humans use base-10 (decimal). This leads to a fascinating technical challenge. Just as $1/3$ becomes a repeating decimal in base-10 ($0.333…$), certain simple decimals in base-10 become repeating fractions in binary. For example, $0.1$ cannot be represented exactly in binary floating point, so the computer stores the nearest value it can.
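One way to see this, sketched in Python: constructing a `Decimal` from a float reveals the exact value the float actually stores. The variable names are illustrative.

```python
from decimal import Decimal

# Decimal(float) converts the stored binary value exactly, with no rounding.
stored_two = Decimal(2.0)
stored_tenth = Decimal(0.1)

print(stored_two)                      # 2
print(stored_tenth == Decimal("0.1"))  # False
print(0.1 + 0.2 == 0.3)                # False
print((2.0).as_integer_ratio())        # (2, 1)
```

The value 2.0 survives the round trip untouched, while 0.1 is stored as a nearby binary fraction rather than as one tenth.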

While $2/1$ as a decimal is a “clean” $2.0$ in both systems, the methodology of handling that conversion is the same one used for more complex fractions. If a developer assumes that decimal conversions are always perfect, they may encounter “rounding errors” that accumulate over millions of calculations. In high-frequency trading apps or aerospace software, even a tiny rounding error, repeated often enough, can lead to serious failures.
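A small sketch of accumulation, assuming a plain running total. Adding 0.1 ten times already misses 1.0, whereas `math.fsum` from the standard library tracks the lost low-order bits and returns the correctly rounded sum.

```python
import math

values = [0.1] * 10

# A plain running total rounds after every addition.
running_total = 0.0
for value in values:
    running_total += value

# math.fsum compensates for the intermediate rounding.
compensated_total = math.fsum(values)

print(running_total == 1.0)      # False
print(compensated_total == 1.0)  # True
```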

Handling Decimals in Fintech and Enterprise Software

In Financial Technology (FinTech), the question of how to represent numbers like $2.0$ is a matter of correctness and compliance. Because standard binary floating-point values can be imprecise for decimal amounts, many financial systems avoid them entirely. Instead, they use “Decimal” or “BigDecimal” classes, or store amounts as whole numbers of the smallest currency unit.

These tools store numbers as exact base-10 values (typically an integer plus a scale) to ensure that 2.0 stays exactly 2.0, without any hidden “noise” (like $2.0000000000004$) that might creep in during complex interest calculations. This ensures that when a user sees a balance or a ratio, they are seeing an exact decimal representation of their assets.
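As an illustration, here is a minimal sketch using Python's `decimal` module. The balance, the 3.5% rate, and the half-up rounding rule are hypothetical values chosen for the example, not a recommendation for any particular financial system.

```python
from decimal import Decimal, ROUND_HALF_UP

# Build Decimals from strings so no binary float is ever involved.
balance = Decimal("1000.00")
annual_rate = Decimal("0.035")  # hypothetical 3.5% rate

# Round the interest to whole cents with an explicit rounding rule.
interest = (balance * annual_rate).quantize(
    Decimal("0.01"), rounding=ROUND_HALF_UP
)
ratio = Decimal(2) / Decimal(1)

print(interest)  # 35.00
print(ratio)     # 2
print(Decimal("0.10") + Decimal("0.20") == Decimal("0.30"))  # True
```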

AI and Advanced Calculation Tools

The rise of Artificial Intelligence (AI) and Large Language Models (LLMs) has changed how we interact with simple questions like “what is 2/1 as a decimal.” We are moving from “calculating” numbers to “reasoning” about them.

Large Language Models and Mathematical Reasoning

When you ask an AI tool for the decimal version of $2/1$, it doesn’t just look up a table. It uses natural language processing to understand the intent of the question. Modern AI models, such as GPT-4 or specialized mathematical models like Minerva, are trained to recognize mathematical notation and to work through such problems step by step.

However, a known challenge in AI development is “hallucination” in arithmetic. Early models sometimes struggled with basic math because they were predicting the next most likely word rather than performing a calculation. Today, tech leaders are integrating AI with computational engines (like WolframAlpha) so that when a user asks for a decimal conversion, the AI can hand the task to a dedicated calculation tool, which makes the arithmetic itself far more reliable.
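The hand-off idea can be sketched as a tiny deterministic tool that a model could call instead of guessing. Everything here is hypothetical: the function name `answer_decimal_question`, the regular expression, and the choice to return `None` when no fraction is found are assumptions for illustration, not how any particular AI product works.

```python
import re
from fractions import Fraction
from typing import Optional

# Matches a simple integer fraction such as "2/1" or "3 / 2".
FRACTION_PATTERN = re.compile(r"(-?\d+)\s*/\s*(-?\d+)")

def answer_decimal_question(question: str) -> Optional[str]:
    """Return the decimal form of the first fraction in the question, if any."""
    match = FRACTION_PATTERN.search(question)
    if match is None:
        return None
    numerator, denominator = int(match.group(1)), int(match.group(2))
    if denominator == 0:
        return None  # division by zero has no decimal form
    return str(float(Fraction(numerator, denominator)))

print(answer_decimal_question("what is 2/1 as a decimal"))  # 2.0
print(answer_decimal_question("what is 3/2 as a decimal"))  # 1.5
```

The design point is that the arithmetic is done by ordinary code, so the answer does not depend on word prediction.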

The Future of Computational Intelligence

As we look toward the future of tech, the way we handle numerical data is becoming more abstract. We are entering an era of “Edge Computing,” where data is processed locally on smart gadgets rather than in the cloud. In these environments, optimizing how numbers like $2/1$ are stored is crucial for saving battery life and reducing latency.

Whether it’s a smart watch calculating your heart rate ratio or an autonomous vehicle calculating distance, the ability to flip between fractional logic and decimal precision instantaneously is a hallmark of modern computational intelligence.

Tools and Resources for Developers and Tech Enthusiasts

For those working in the tech industry, managing decimal conversions and numerical data types is a daily task. Understanding the tools available can help maintain code quality and system stability.

Best Libraries for High-Precision Arithmetic

If you are developing an app and need to handle more than just $2/1$, you need robust libraries.

- In Python: The `decimal` module is the standard-library choice for decimal (base-10) floating-point arithmetic.
- In JavaScript: Libraries such as Big.js help avoid the infamous “0.1 + 0.2 !== 0.3” problem, while the built-in `BigInt` type handles arbitrarily large integers.
- In C#: The `decimal` keyword provides a 128-bit data type with much higher precision than the standard `double`.

These tools are the “industrial-strength” versions of the simple conversion we performed at the start of this article. They ensure that $2/1$ remains $2.0$ across all platforms and hardware architectures.
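A brief sketch of the Python option, assuming a hypothetical helper named `fraction_to_decimal`. It uses `decimal.localcontext` so the chosen precision applies only inside the function, and `fractions.Fraction` to show exact rational arithmetic alongside it.

```python
from decimal import Decimal, localcontext
from fractions import Fraction

def fraction_to_decimal(numerator: int, denominator: int, digits: int = 28) -> Decimal:
    """Divide two integers as Decimals using the given number of significant digits."""
    with localcontext() as ctx:
        ctx.prec = digits
        return Decimal(numerator) / Decimal(denominator)

print(fraction_to_decimal(2, 1))             # 2
print(fraction_to_decimal(1, 3, digits=10))  # 0.3333333333

# Fractions stay exact, so the 0.1 + 0.2 surprise does not occur.
print(Fraction(1, 10) + Fraction(2, 10) == Fraction(3, 10))  # True
```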

Debugging Logic Errors in Unit Conversion

One of the most common exercises for junior developers involves unit conversion: converting a fraction (like an aspect ratio of 2/1) into a decimal for UI scaling. If a developer uses integer division by mistake, the decimal portion is truncated (cut off).

For example, if you were calculating a more complex ratio like $3/2$ and the system used integer logic, the result would be $1$ instead of $1.5$. This is why “type casting” (telling the computer to treat one of the operands as a float) is a fundamental skill in software development. It prevents the loss of data and ensures the user interface is scaled accurately.
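The bug can be reproduced in a few lines. The function names and the 300-pixel width below are hypothetical; Python's `//` stands in for the integer division that C or Java would apply to two integers.

```python
def scaled_height_buggy(width: int, ratio_w: int, ratio_h: int) -> int:
    # Integer division truncates 3/2 to 1 before it is ever used.
    ratio = ratio_w // ratio_h
    return width // ratio

def scaled_height(width: int, ratio_w: int, ratio_h: int) -> float:
    # Casting one operand to float keeps the fractional part of the ratio.
    ratio = float(ratio_w) / ratio_h
    return width / ratio

print(scaled_height_buggy(300, 3, 2))  # 300
print(scaled_height(300, 3, 2))        # 200.0
print(scaled_height(300, 2, 1))        # 150.0
```

With a ratio of 2/1 both versions agree, which is exactly why this kind of bug can go unnoticed until a ratio with a fractional part appears.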

Conclusion: More Than Just a Number

The question “what is 2/1 as a decimal” serves as a perfect entry point into the sophisticated world of modern technology. While the mathematical answer is a simple 2.0, the technical reality involves a complex interplay of data types, memory management, and algorithmic precision.

In an age where AI, FinTech, and high-performance software drive our global economy, the way we represent numbers matters. From the way an integer is stored in a processor’s registers to the way an AI interprets a user’s query, the journey of $2/1$ to $2.0$ is a testament to the precision and logic that define our digital world. For tech professionals and curious users alike, understanding these fundamentals is the key to mastering the tools that shape our future.
