In the era of high-definition displays, augmented reality, and precision-engineered optical sensors, the definition of “bad eyesight” has evolved beyond simple Snellen chart measurements. Historically, vision was assessed primarily by one’s ability to read distant letters in a doctor’s office. Today, however, our interaction with the digital world has created a new set of benchmarks. Whether we are discussing the pixel density of a smartphone or the refresh rate of a VR headset, what we consider “bad” vision is increasingly defined by our ability to interface with modern technology.

Visual acuity is no longer just a biological metric; it is a critical component of the user experience (UX) in the tech ecosystem. As we push the boundaries of what displays can do, understanding the threshold of poor vision becomes essential for hardware developers, software engineers, and digital consumers alike.

Understanding Visual Acuity: From 20/20 to Technological Benchmarks

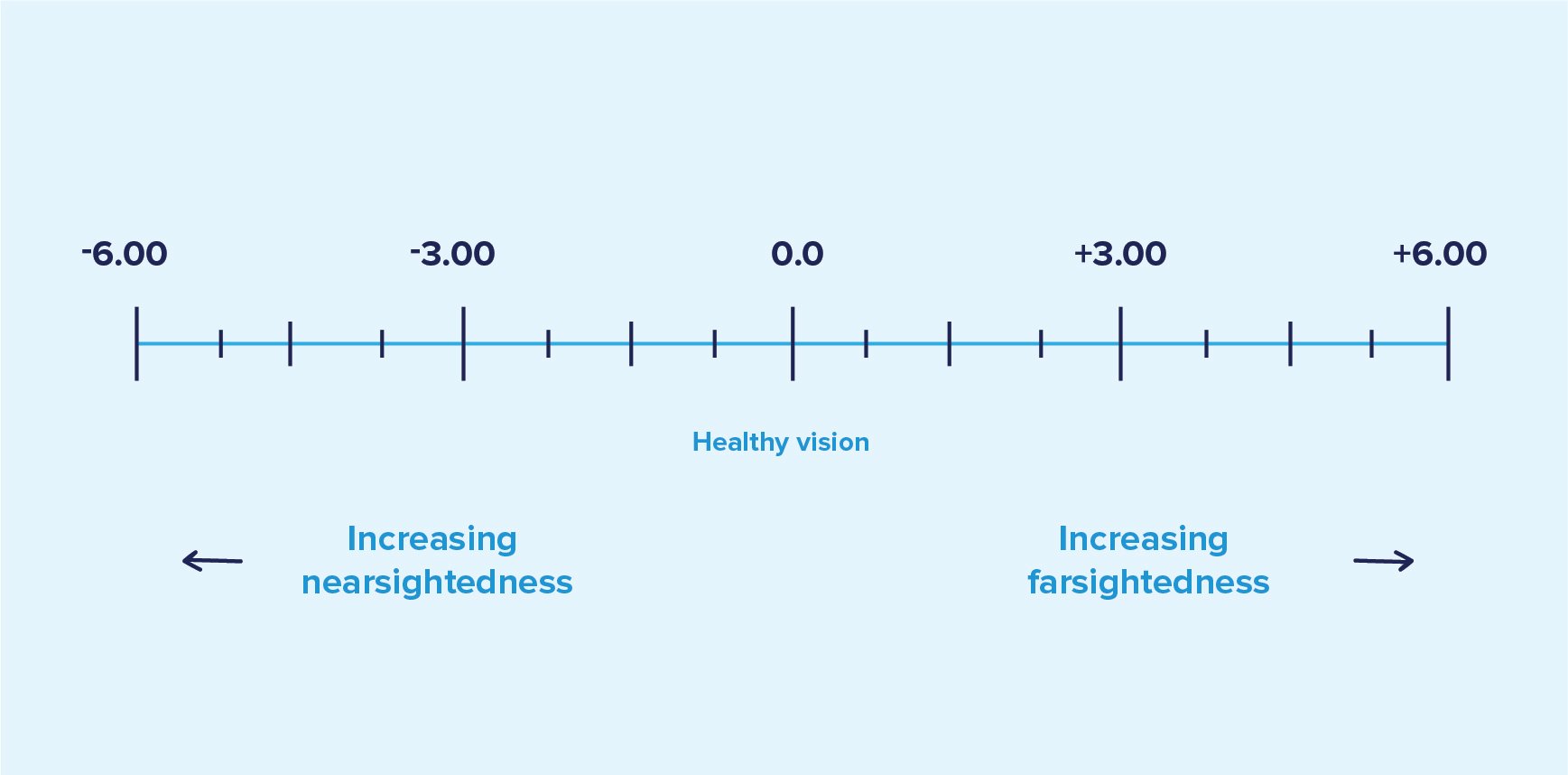

To understand what eyesight is considered “bad,” we must first look at the clinical baseline: 20/20 vision. The notation comes from the Snellen chart, introduced in the mid-19th century, and describes a person who can read at 20 feet what a person with normal acuity is expected to read at that distance. In the tech sector, this translates to how we perceive resolution. A person with 20/40 vision reads at 20 feet what a person with normal acuity reads at 40 feet; in other words, they must be about twice as close to an object to see it with the same clarity, which is why 20/40 is often treated as “bad” eyesight for tasks requiring precision.
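The relationship between Snellen fractions can be made concrete with a small conversion. The sketch below is illustrative only: the function name `snellen_to_metrics` is hypothetical, and it relies on the common convention that 20/20 corresponds to resolving detail of about 1 arcminute.

```python
import math


def snellen_to_metrics(test_distance: float, letter_distance: float) -> dict:
    """Convert a Snellen fraction such as 20/40 into common acuity measures.

    Assumes the usual convention that 20/20 means resolving 1 arcminute.
    """
    if test_distance <= 0 or letter_distance <= 0:
        raise ValueError("both Snellen terms must be positive")
    decimal = test_distance / letter_distance     # 20/40 gives 0.5
    mar_arcmin = letter_distance / test_distance  # minimum angle of resolution
    logmar = math.log10(mar_arcmin)               # 0.0 for 20/20, higher is worse
    return {"decimal": decimal, "mar_arcmin": mar_arcmin, "logmar": logmar}


for denominator in (20, 40, 60, 200):
    m = snellen_to_metrics(20, denominator)
    print(f"20/{denominator}: decimal={m['decimal']:.2f}, "
          f"MAR={m['mar_arcmin']:.0f} arcmin, logMAR={m['logmar']:.2f}")
```

A 20/40 result therefore means the smallest resolvable detail is about twice as large, in angular terms, as it is for a 20/20 viewer.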

The Science of Pixels and Retinal Clarity

From a technological standpoint, the “Retina Display” concept introduced by Apple was one of the first major attempts to align hardware specs with human visual limits. A display reaches “retina” status when the pixel density is so high, typically over 300 pixels per inch (PPI) for a handheld device, that the human eye with 20/20 vision can no longer discern individual pixels at a typical handheld viewing distance of roughly 10 to 12 inches.

If a user has “bad” eyesight—for example, 20/60 or worse—the high-resolution benefits of a 4K monitor or an 8K television are largely lost. For these users, the bottleneck isn’t the GPU or the display panel; it is the biological receiver. In this context, bad eyesight is defined as any vision impairment that prevents the user from perceiving the intended fidelity of modern digital hardware.
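The retina threshold and the 20/60 case can both be estimated with basic trigonometry. The following is a rough sketch, not a vendor formula: it assumes a 20/20 eye resolves about 1 arcminute, that the limit scales linearly with the Snellen denominator, and the function names and viewing distances are chosen purely for illustration.

```python
import math

ARCMIN_IN_RADIANS = math.radians(1 / 60)


def max_resolvable_ppi(viewing_distance_in: float,
                       snellen_denominator: float = 20.0) -> float:
    """Pixel density above which adjacent pixels can no longer be separated.

    Assumption: a 20/20 eye resolves 1 arcminute, a 20/60 eye 3 arcminutes.
    """
    if viewing_distance_in <= 0 or snellen_denominator <= 0:
        raise ValueError("distance and Snellen denominator must be positive")
    resolvable_angle = (snellen_denominator / 20.0) * ARCMIN_IN_RADIANS
    # Size of the smallest detail (in inches) that subtends that angle.
    smallest_detail_in = 2 * viewing_distance_in * math.tan(resolvable_angle / 2)
    return 1.0 / smallest_detail_in


def display_ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixel density of a panel from its resolution and diagonal size."""
    return math.hypot(width_px, height_px) / diagonal_in


# Handheld device at an assumed 12 inches, 20/20 viewer.
print(f"20/20 at 12 in: {max_resolvable_ppi(12):.0f} PPI")

# Assumed desk setup: 27-inch 4K monitor viewed from 24 inches.
monitor = display_ppi(3840, 2160, 27)
print(f"27-inch 4K panel: {monitor:.0f} PPI")
print(f"20/20 at 24 in: {max_resolvable_ppi(24):.0f} PPI")
print(f"20/60 at 24 in: {max_resolvable_ppi(24, 60):.0f} PPI")
```

Under these assumptions the 20/60 viewer's limit falls far below the panel's native density, which is the sense in which the eye, not the hardware, becomes the bottleneck.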

When “Bad” Vision Interferes with Modern Interfaces

In the software world, “bad” eyesight is often categorized by the point at which standard UI/UX design becomes unusable. Most operating systems are designed with the assumption of near-perfect corrected vision. When vision drops below the 20/40 threshold, standard 12-point fonts and small navigation icons become a challenge. This has led to the rise of “Accessibility Tech,” where software must compensate for biological limitations through “Zoom” features, high-contrast modes, and screen readers. In the tech industry, vision is considered bad when it requires these assistive interventions to navigate a standard digital environment.
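High-contrast modes are usually judged by a measurable contrast ratio. The article does not name a standard, so the sketch below borrows the relative-luminance and contrast-ratio definitions from WCAG 2.x as an assumption; the function names are illustrative.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple) -> float:
    """Relative luminance of an sRGB color given as (R, G, B) in 0..255."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground: tuple, background: tuple) -> float:
    """Contrast ratio between two colors, from 1:1 up to 21:1."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


black, white, mid_gray = (0, 0, 0), (255, 255, 255), (119, 119, 119)
print(f"black on white: {contrast_ratio(black, white):.2f}:1")
print(f"#777777 on white: {contrast_ratio(mid_gray, white):.2f}:1")
```

WCAG 2.x asks for at least 4.5:1 for normal body text at level AA, so a mid-gray such as `#777777` on white falls just short while black on white sits at the maximum.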

Digital Eye Strain: The New Metric for Poor Visual Performance

In the modern workplace, “bad eyesight” isn’t always a permanent refractive error like myopia or hyperopia. Often, it is a temporary but debilitating degradation of vision known as Computer Vision Syndrome (CVS). As we spend upwards of eight to ten hours a day staring at backlit LED screens, our visual performance fluctuates.

Computer Vision Syndrome (CVS) and Productivity

CVS is a tech-induced form of “bad” vision. Symptoms include blurred vision, double vision, and an inability to focus on distant objects after long periods of screen use (a phenomenon known as accommodative spasm). For tech professionals—developers, data analysts, and designers—this temporary decline in visual acuity can lead to a significant drop in productivity.

From a tech perspective, vision is considered “bad” when the eye’s focusing mechanism becomes fatigued to the point of error. This has prompted the development of “eye-tracking” software that monitors blink rates and suggests “micro-breaks” to prevent the degradation of visual performance.
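A blink-rate monitor of the kind described above could be sketched as follows. This is a hypothetical design, not the behavior of any particular product: the class name, the 60-second window, and the 10-blinks-per-minute floor are all assumptions for illustration, and blink detection itself is assumed to happen elsewhere.

```python
from collections import deque


class BlinkMonitor:
    """Flags a low blink rate over a sliding time window.

    All thresholds are illustrative assumptions, not clinical values.
    """

    def __init__(self, start_time: float, window_s: float = 60.0,
                 min_blinks_per_min: float = 10.0):
        self.start_time = start_time
        self.window_s = window_s
        self.min_blinks_per_min = min_blinks_per_min
        self._blinks = deque()

    def record_blink(self, timestamp: float) -> None:
        """Store the time (in seconds) at which a blink was detected."""
        self._blinks.append(timestamp)

    def blinks_per_minute(self, now: float) -> float:
        """Blink rate over the most recent window, scaled to one minute."""
        cutoff = now - self.window_s
        while self._blinks and self._blinks[0] <= cutoff:
            self._blinks.popleft()
        return len(self._blinks) * 60.0 / self.window_s

    def should_suggest_break(self, now: float) -> bool:
        """True once a full window has elapsed and the rate is below the floor."""
        if now - self.start_time < self.window_s:
            return False
        return self.blinks_per_minute(now) < self.min_blinks_per_min


monitor = BlinkMonitor(start_time=0.0)
for t in range(0, 60, 4):       # a blink every 4 s during the first minute
    monitor.record_blink(float(t))
print(monitor.should_suggest_break(now=60.0))

for t in range(60, 120, 10):    # a blink every 10 s during the second minute
    monitor.record_blink(float(t))
print(monitor.should_suggest_break(now=120.0))
```

Waiting for a full window before raising a suggestion avoids a false alarm at startup, when too few blinks have been recorded to estimate a rate.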

Blue Light and Hardware Solutions

The technology industry has responded to the epidemic of digital eye strain with both hardware and software solutions. The “Night Shift” or “Blue Light Filter” modes found on most modern smartphones reduce the amount of high-energy visible (HEV) blue light the screen emits. Blue light is often cited as a contributor to digital eye strain, although the clinical evidence for that link is limited.

Furthermore, the rise of E-ink technology (used in devices like the Kindle) represents a hardware-level solution for those with sensitive vision. Because an E-ink panel reflects ambient light instead of shining an emissive backlight at the reader, it mimics the experience of reading paper and lowers the visual effort that long reading sessions demand. When we ask “what eyesight is considered bad” in the context of e-readers, we are often looking at the contrast ratio: if the eye cannot distinguish the text from the background without significant added lighting, the hardware is failing the user’s biological needs.

The Role of AI and Machine Learning in Measuring Optical Health

We are moving away from an era in which the manual eye exam was the only option. Artificial Intelligence (AI) and Machine Learning (ML) are increasingly used to help determine when a person’s vision has crossed into the “bad” category. This shift from subjective reporting to objective data is transforming the optical tech landscape.

Automated Refraction and Deep Learning Diagnosis

Modern clinics now use wavefront aberrometers, devices that map how light travels through the eye. This creates a “digital fingerprint” of the eye’s refractive errors. AI algorithms can then analyze these maps, alongside corneal and retinal imaging, to help detect conditions like keratoconus or early-stage macular degeneration before a patient realizes their vision is “bad.”

In this tech-driven environment, “bad eyesight” is redefined as any deviation from a mathematically perfect optical wavefront. Machine learning models trained on large sets of retinal images have, in some published studies, matched or exceeded human practitioners on specific screening tasks, spotting minute changes in the retinal vasculature that indicate systemic health issues like diabetes or hypertension.
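One common way to put a number on “deviation from a perfect wavefront” is the root-mean-square (RMS) wavefront error. The article does not say how aberrometer data is summarized, so the sketch below makes an assumption: the aberration map is expressed as Zernike coefficients in the orthonormal (OSA/ANSI) convention, in which case the RMS error is the square root of the sum of squared coefficients. The coefficient values are made up for illustration.

```python
import math


def rms_wavefront_error(zernike_coeffs_um: dict, min_order: int = 2) -> float:
    """RMS wavefront error in micrometers.

    Keys are (n, m) pairs: radial order and angular frequency.
    Assumes orthonormal Zernike coefficients, so the RMS is the square
    root of the sum of squares. Orders below min_order (piston, tilt)
    are skipped because they do not blur the image.
    """
    total = sum(c * c for (n, _m), c in zernike_coeffs_um.items()
                if n >= min_order)
    return math.sqrt(total)


# Hypothetical measurement, coefficients in micrometers.
example_eye = {
    (1, 1): 1.00,   # tilt, ignored by default
    (2, 0): 0.30,   # defocus
    (2, 2): 0.40,   # astigmatism
    (3, 1): 0.12,   # coma (a higher-order aberration)
}

print(f"total RMS: {rms_wavefront_error(example_eye):.3f} um")
print(f"higher-order RMS: {rms_wavefront_error(example_eye, min_order=3):.3f} um")
```

A perfect wavefront would give an RMS of zero; glasses and contact lenses mainly correct the second-order terms, which is why higher-order RMS is often reported separately.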

Smart Wearables: Tracking Eye Health in Real-Time

The next frontier in defining visual health is wearable tech. Smart glasses, equipped with inward-facing cameras and sensors, can monitor a user’s pupil dilation and tracking speed. If the software detects that the user is squinting or that their eye movements have become sluggish, it can alert them that their visual performance has dropped below an acceptable baseline.

For high-stakes tech environments, such as pilots using HUDs (Heads-Up Displays) or surgeons using AR-assisted tools, “bad” vision is a dynamic state. It is measured in milliseconds of lag in focus or a few degrees of peripheral loss, all tracked by real-time diagnostic software.

Future-Proofing Vision: Assistive Technologies and Beyond

As we look toward the future, the tech industry is not just measuring bad eyesight; it is actively working to eliminate the concept altogether. Through a combination of sophisticated hardware standards and neural interfaces, the gap between “good” and “bad” vision is narrowing.

High-Refresh Rates and Eye Comfort Display Standards

Gaming monitors and high-end smartphones are now pushing 120Hz, 144Hz, and even 240Hz refresh rates. While the primary marketing focus is on “smoothness,” the claimed health benefit is a reduction in motion blur and flickering, both of which can contribute to visual fatigue. Organizations like TÜV Rheinland now issue “Eye Comfort” certifications for displays. In this professional tech context, an inability to perceive the difference between 60Hz and 120Hz is sometimes read as a sign of “bad” eyesight, on the theory that it may point to reduced visual processing speed or sensitivity, although this varies widely between individuals and is not a clinical measure of vision.
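The practical difference between these refresh rates is easiest to see as time per frame, which is simply 1000 ms divided by the refresh rate. A minimal sketch (the function name is illustrative):

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Time each frame stays on screen, in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


for hz in (60, 120, 144, 240):
    print(f"{hz:>3} Hz -> {frame_time_ms(hz):.2f} ms per frame")
```

Going from 60Hz to 120Hz cuts the frame time from about 16.67 ms to about 8.33 ms, while the step from 144Hz to 240Hz saves under 3 ms, which helps explain why higher rates show diminishing returns.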

Bionic Vision and Neural Interfaces

The ultimate technological answer to bad eyesight may lie in neural bypass technology. Projects like Elon Musk’s Neuralink and other Brain-Computer Interfaces (BCI) aim to feed visual data directly into the visual cortex. For individuals with “bad” eyesight due to optic nerve damage or retinal degeneration, these technologies offer a future where vision is no longer dependent on the biological eye.

In a world where we can augment our sight with digital overlays, the traditional definition of “bad eyesight” (not being able to read a chart) becomes obsolete. Instead, we move toward a definition centered on “bandwidth”—how much visual information can the brain-computer interface transmit and process?

Conclusion: The Convergence of Biology and Bitrate

What eyesight is considered “bad” is a question that no longer has a simple answer. In a strictly medical sense, and using United States conventions, corrected vision worse than 20/40 is a common threshold for daily restrictions such as an unrestricted driver’s license, and best-corrected acuity of 20/200 or worse in the better eye is the marker for legal blindness. However, in the realm of technology, “bad” vision is any state where the human eye becomes the limiting factor in the digital experience.

As our devices reach higher resolutions and our lives become more integrated with digital interfaces, the tech industry will continue to lead the charge in redefining, measuring, and correcting our visual limitations. Whether through AI-driven diagnostics, blue-light-filtering hardware, or the promise of neural implants, technology is recasting “bad eyesight” as a technical challenge waiting for a solution. Understanding this intersection is vital for anyone navigating the modern digital landscape, as our vision is the primary gateway through which we consume the innovations of the future.
