The concept of a “watt” is fundamental to understanding how our modern world, powered by an ever-increasing array of electronic devices and sophisticated systems, functions. While the term is ubiquitous, from the labels on light bulbs to the specifications of high-performance computers, its precise meaning can often be a bit nebulous for the average consumer. In the context of technology, understanding watts is not just about knowing how much electricity something uses; it’s about grasping the very essence of its operational capability, efficiency, and its place within the broader technological ecosystem.

This exploration delves into the core of what a watt measures, focusing specifically on its implications within the realm of technology. We will dissect its definition, explore its practical applications in everyday gadgets and complex systems, and discuss how understanding wattage plays a crucial role in everything from selecting the right hardware to optimizing energy consumption in a digitally driven life. By demystifying the watt, we empower ourselves to make more informed decisions about the technology we rely on daily.

The Fundamental Unit: Defining the Watt

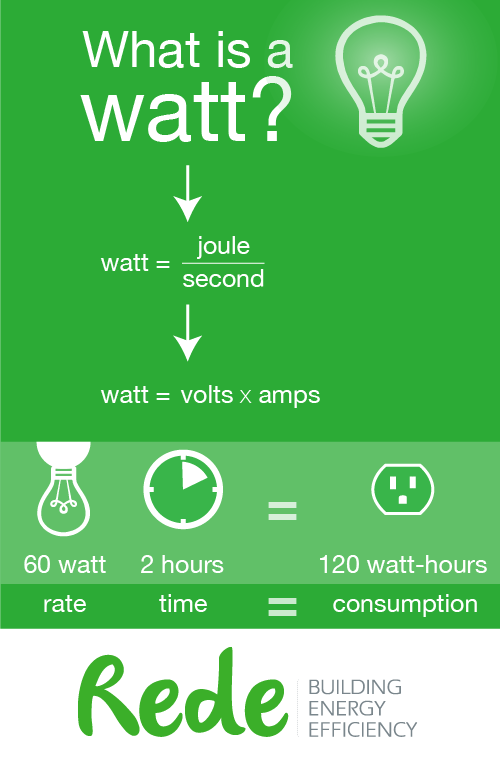

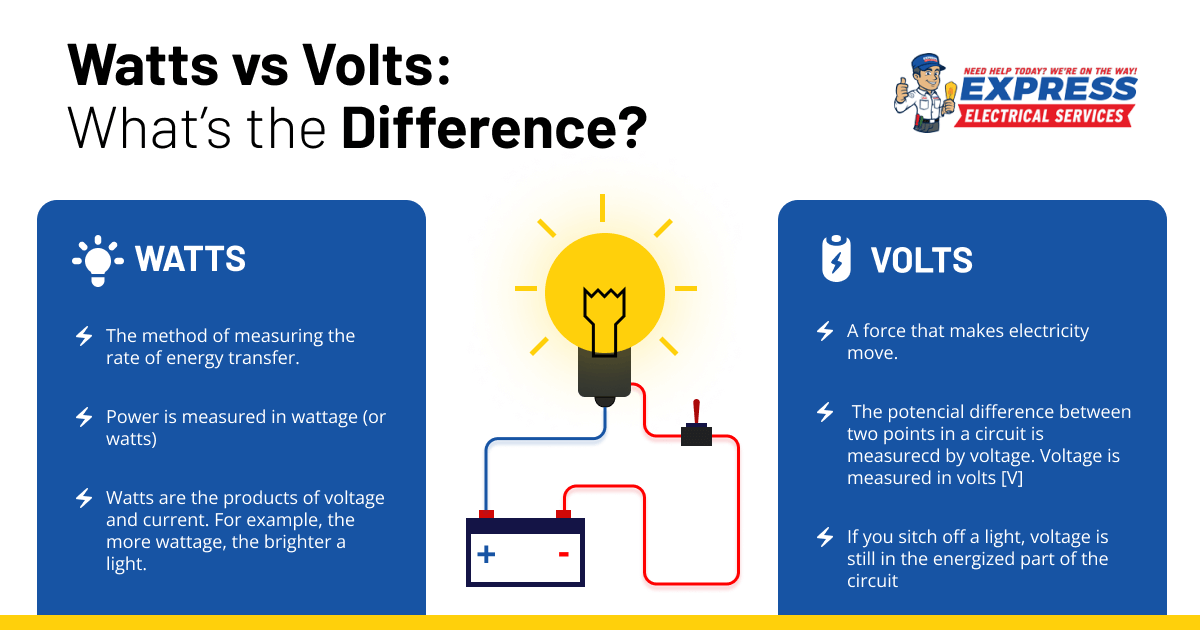

At its heart, the watt is a unit of power. This might seem simple, but the implications of power within a technological context are profound. Power, in physics, is the rate at which energy is transferred or converted. The watt, therefore, quantifies this rate. Understanding this foundational definition is the first step to appreciating its significance in the technological landscape.

Energy vs. Power: A Crucial Distinction

It’s vital to differentiate between energy and power. Energy is the capacity to do work. Think of it as the total amount of “stuff” available. Electricity, for example, is a form of energy. Power, on the other hand, is how quickly that energy is being used or delivered. A watt measures this rate. To draw an analogy, imagine a water tank. The total amount of water in the tank represents energy. The rate at which water flows out of a tap is the power. A wider tap (higher wattage) will empty the tank (deplete energy) much faster than a narrower tap (lower wattage).

The Joule and the Second: Building Blocks of the Watt

Scientifically, one watt is defined as one joule of energy transferred or converted per second (1 W = 1 J/s). The joule is the standard (SI) unit of energy. Because a watt is a rate, a wattage figure describes how intensely energy is flowing at a given moment, not how much has been used in total. In the context of electronics, a device drawing 100 watts is using energy at a rate of 100 joules every second; run it for an hour and it will have used 360,000 joules, or 0.1 kilowatt-hours. That continuous flow of energy is what enables devices to perform their functions, from illuminating a room to processing complex data.
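The relationship between power and energy can be made concrete with a little arithmetic. The following is a minimal sketch, assuming a constant power draw; the function names are illustrative and not part of any library.

```python
def energy_joules(power_watts: float, seconds: float) -> float:
    """Energy transferred at a constant power over a time interval (E = P * t)."""
    return power_watts * seconds


def joules_to_kwh(joules: float) -> float:
    """Convert joules to kilowatt-hours (1 kWh = 3,600,000 J)."""
    return joules / 3_600_000


# A 100 W device running for one hour (3,600 seconds).
one_hour = energy_joules(100, 3600)
print(f"{one_hour} J is {joules_to_kwh(one_hour)} kWh")
```

Electricity bills are typically expressed in kilowatt-hours, which is why the conversion is included alongside the joule figure.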

Introducing the Kilowatt and Megawatt: Scaling Up

While watts are useful for smaller devices, the scale of power consumption in larger technological systems necessitates larger units. A kilowatt (kW) is equal to 1,000 watts, commonly used for appliances like ovens, air conditioners, and electric vehicles. A megawatt (MW), equal to 1,000 kilowatts or one million watts, is used to describe the power output of power plants, industrial machinery, and large data centers. Understanding these prefixes allows us to comprehend power demands across the entire spectrum of technological applications, from the personal to the industrial.
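As a rough illustration of how the prefixes scale, the sketch below formats a raw wattage using the most readable unit. The helper name `format_power` and the sample values are assumptions chosen for illustration only.

```python
def format_power(watts: float) -> str:
    """Express a power value in W, kW, or MW, whichever reads most naturally."""
    if watts >= 1_000_000:
        return f"{watts / 1_000_000:g} MW"
    if watts >= 1_000:
        return f"{watts / 1_000:g} kW"
    return f"{watts:g} W"


# Illustrative values: a laptop charger, a home EV charger, a large data center.
for sample in (65, 7_200, 50_000_000):
    print(format_power(sample))
```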

Watts in Action: Powering Our Digital Lives

The practical manifestation of watts is evident in almost every piece of technology we interact with. From the smallest sensor to the most powerful supercomputer, wattage specifications provide critical insights into a device’s performance, its energy demands, and its compatibility with our existing power infrastructure.

Gadgets and Consumer Electronics: From Phones to Laptops

When we purchase a smartphone, laptop, or smart TV, we often encounter wattage figures, even if they aren’t always prominently displayed. For chargers, the wattage indicates the maximum power they can supply, which sets an upper limit on how quickly they can replenish a device’s battery. A 5W charger will take longer to charge a phone than a 20W or 65W charger, provided the phone can accept the higher power. Similarly, the power supply unit (PSU) in a computer is rated in watts, indicating the maximum amount of power it can deliver to the system’s components. Gamers and professionals who push their systems with powerful graphics cards and processors will need PSUs with higher wattage ratings to ensure stable operation.

Power Adapters and Charging Speeds

The wattage of a power adapter is the maximum power it can deliver, and so sets a ceiling on charging speed. Higher wattage means more energy can be transferred to the battery per unit of time, but only up to the rate the device itself is designed to accept; a phone limited to 20W will not charge faster on a 65W adapter. This is particularly relevant for portable devices like smartphones, tablets, and laptops, where quick charging can be a significant convenience. When choosing a replacement charger or an accessory, understanding its wattage and comparing it to the device’s recommended charging specifications is crucial for optimal performance and battery health.
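A back-of-the-envelope estimate shows how adapter wattage and the device's own limit interact. This is a simplified sketch: it assumes a constant charging rate (real devices slow down as the battery fills) and a hypothetical 85% charging efficiency, and the battery size and wattages are made-up example values.

```python
def estimated_charge_hours(
    battery_wh: float,
    adapter_watts: float,
    device_max_watts: float,
    efficiency: float = 0.85,  # assumed conversion efficiency, not a measured figure
) -> float:
    """Rough time to charge from empty, ignoring the slowdown near full charge."""
    # The device only draws what it supports, whatever the adapter could supply.
    effective_watts = min(adapter_watts, device_max_watts) * efficiency
    return battery_wh / effective_watts


# Hypothetical phone: 15 Wh battery that accepts at most 20 W.
for adapter in (5, 20, 65):
    hours = estimated_charge_hours(15, adapter, device_max_watts=20)
    print(f"{adapter} W adapter: about {hours:.1f} h")
```

In this example the 65 W adapter gives the same estimate as the 20 W one, because the phone's own limit is the bottleneck.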

Display Brightness and Consumption

The screens of our devices, from monitors to televisions, also consume power, measured in watts. Higher brightness levels and larger screen sizes generally require more wattage. For instance, a large, ultra-high-definition television will consume more power than a smaller, lower-resolution display. Manufacturers often provide energy consumption ratings in watts on their products, allowing consumers to make more informed choices based on their energy efficiency preferences.

Computing Hardware: The Heartbeat of the Digital Realm

In the demanding world of computing, wattage is a critical specification that dictates performance, stability, and even the physical requirements of a system. This is especially true for high-performance components like CPUs and GPUs, which are the engines of modern computing.

Central Processing Units (CPUs) and Thermal Design Power (TDP)

The CPU is the brain of any computer, and its power consumption is closely tied to its performance. Actual power draw fluctuates with workload, so manufacturers publish a Thermal Design Power (TDP): the amount of heat, in watts, that the cooling system is expected to dissipate when the CPU runs a sustained, demanding workload. TDP is a useful guide to power consumption rather than an exact measurement, since definitions vary between manufacturers and many processors can briefly draw more than their TDP when boosting. A higher TDP generally suggests a more powerful processor, but also a greater need for robust cooling solutions and a capable power supply. Understanding TDP helps enthusiasts and professionals select CPUs that fit their performance goals and their system’s power and cooling capabilities.

Graphics Processing Units (GPUs) and Gaming Performance

GPUs are the powerhouses behind modern gaming, video editing, and artificial intelligence. Their wattage requirements are often significantly higher than those of CPUs, especially for high-end discrete graphics cards. The recommended power supply wattage for a gaming PC is heavily influenced by the GPU’s power draw. A GPU drawing 250W, for example, needs a power supply unit that can deliver that 250W on top of what the CPU and other components require, with some headroom to spare.
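The sizing logic described above can be sketched as a simple sum plus a margin. The component figures and the 30% headroom below are assumptions for illustration, not a manufacturer recommendation; real builds should follow the guidance published for the specific parts.

```python
from typing import Mapping


def recommended_psu_watts(component_watts: Mapping[str, float], headroom: float = 0.3) -> float:
    """Sum the estimated peak draw of each component and add a safety margin."""
    total = sum(component_watts.values())
    return total * (1 + headroom)


# Hypothetical build: these numbers are illustrative, not measurements.
build = {"gpu": 250, "cpu": 125, "motherboard_drives_fans": 75}
print(f"Suggested PSU rating: about {recommended_psu_watts(build):.0f} W")
```

In practice the result would be rounded up to the next commonly sold PSU size.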

Servers and Data Centers: The Backbone of the Internet

The massive infrastructure that powers the internet and cloud computing, made up of servers and data centers, is an immense consumer of electrical power, with large facilities measured in megawatts. The efficiency of these facilities is often quantified by Power Usage Effectiveness (PUE), the ratio of a facility’s total power draw to the power delivered to its IT equipment; it directly impacts operational costs and environmental footprint. Optimizing wattage consumption in data centers is a continuous technological endeavor, involving advanced cooling systems, efficient power distribution, and the deployment of low-power server hardware.
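PUE is defined as total facility power divided by the power delivered to IT equipment, so a value closer to 1.0 means less overhead for cooling and power distribution. The sketch below applies that definition; the facility figures are invented for illustration.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


# Hypothetical facility: 1,000 kW of IT load, 1,500 kW drawn in total.
value = pue(total_facility_kw=1500, it_equipment_kw=1000)
overhead_kw = 1500 - 1000  # power spent on cooling, distribution losses, lighting, etc.
print(f"PUE = {value:.2f}, overhead = {overhead_kw} kW")
```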

Beyond Consumption: Watts and Efficiency

Understanding watts is not solely about how much power a device uses, but also how efficiently it uses that power. In the technological landscape, efficiency is paramount, driving innovation and influencing design choices across the board.

Power Supply Units (PSUs) and Efficiency Ratings

Power supply units are the critical intermediaries between the wall outlet and a computer’s components. They convert AC power from the grid to DC power usable by electronics. PSUs are rated not only by their maximum wattage output but also by their efficiency, often indicated by certifications like 80 PLUS Bronze, Silver, Gold, Platinum, and Titanium. Each tier certifies a minimum efficiency at specified load levels, where efficiency is the fraction of the power drawn from the wall that is actually delivered to the components. A higher efficiency rating means less energy is wasted as heat, leading to lower electricity bills and a reduced environmental impact. For example, an 80 PLUS Gold certified PSU operating at 50% load might be about 90% efficient, meaning only about 10% of the power drawn from the wall is lost.
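Because efficiency is the ratio of delivered power to power drawn from the wall, the wall draw for a given load can be estimated by dividing by the efficiency. The following is a minimal sketch using the 90% figure from the example above; the 450 W load is an assumed value.

```python
def wall_draw_watts(dc_load_watts: float, efficiency: float) -> float:
    """Power pulled from the outlet to deliver a given DC load."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in the range (0, 1]")
    return dc_load_watts / efficiency


def wasted_watts(dc_load_watts: float, efficiency: float) -> float:
    """Power lost as heat inside the PSU."""
    return wall_draw_watts(dc_load_watts, efficiency) - dc_load_watts


# Assumed example: components drawing 450 W from a PSU running at 90% efficiency.
print(f"Wall draw: {wall_draw_watts(450, 0.90):.0f} W")
print(f"Lost as heat: {wasted_watts(450, 0.90):.0f} W")
```

Note that the loss is 10% of the wall draw, not 10% of the load delivered to the components.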

Energy-Efficient Technology: The Driving Force

The constant push for more powerful and feature-rich devices has been paralleled by an equally strong drive for energy efficiency. This is crucial for battery-powered devices, where maximizing runtime is essential, and for large-scale computing, where energy costs can be substantial. Advancements in semiconductor design, power management techniques, and the development of more efficient architectures are all aimed at reducing the wattage required to achieve a given level of performance.

Battery Life and Power Management

For portable electronics, power draw is inversely related to battery life: a battery stores a fixed amount of energy, so a device that consumes fewer watts runs longer on a single charge. Modern operating systems and hardware employ sophisticated power management strategies, dynamically adjusting clock speeds, turning off unused components, and optimizing performance to minimize wattage draw when full power isn’t needed. This allows for a balance between responsiveness and energy conservation.
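The link between power draw and runtime can be estimated by dividing the battery's stored energy (in watt-hours) by the average draw (in watts). This is a simplified sketch: the 60 Wh capacity and the draw figures are assumed values, and real runtime also depends on battery age, temperature, and how the load varies.

```python
def runtime_hours(battery_wh: float, average_draw_watts: float) -> float:
    """Approximate runtime on a full charge at a steady average power draw."""
    if average_draw_watts <= 0:
        raise ValueError("average draw must be positive")
    return battery_wh / average_draw_watts


# Hypothetical laptop with a 60 Wh battery under two usage patterns.
for label, watts in (("light browsing", 10), ("heavy workload", 30)):
    print(f"{label}: about {runtime_hours(60, watts):.1f} h")
```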

Green Computing and Sustainability

In the realm of large-scale computing, the concept of “green computing” emphasizes reducing the energy footprint of data centers and IT infrastructure. This involves deploying energy-efficient hardware, optimizing server utilization, and implementing advanced cooling technologies to minimize the wattage consumed per unit of computation. The overall wattage of a data center is a significant factor in its environmental impact, making efficiency a key performance indicator and a driver of technological innovation.

Conclusion: Watts as a Measure of Technological Capability

The humble watt, a fundamental unit of power, plays a far more significant role in our technological lives than is often appreciated. It’s not merely a number on a label; it’s a metric that speaks to a device’s capability, its performance potential, its efficiency, and its impact on our energy consumption. From the power adapter charging our smartphones to the massive infrastructure powering the internet, understanding wattage empowers us to make informed decisions.

As technology continues to evolve at an unprecedented pace, with devices becoming more powerful and interconnected, the importance of grasping the concept of power – and how it’s measured in watts – will only grow. Whether you are a consumer choosing a new gadget, a gamer building a high-performance rig, or a professional working with complex IT systems, a solid understanding of watts is an essential component of navigating the modern technological landscape. It allows us to appreciate the engineering behind our devices, optimize their performance, and contribute to a more sustainable digital future.
