The history of technology is replete with innovations that promised to solve complex problems, often with unforeseen and profound consequences. While the term “technology” today conjures images of silicon chips and advanced algorithms, its roots lie in human attempts to manipulate and improve the world around us. In this vein, early neurological interventions such as the lobotomy can be viewed through a historical technological lens: as attempts to “engineer” human consciousness and behavior, albeit with rudimentary tools and a nascent understanding of the human brain. This article delves into the historical context and impact of lobotomies, examining them not as purely medical procedures but as primitive technological interventions with significant implications for the individuals subjected to them and for the broader societal discourse on human enhancement and control.

The Precursors to Neurological Engineering: Identifying the “Bugs” in the System

The development of lobotomy was not an isolated event; it emerged from a period of burgeoning scientific inquiry into the human mind and a growing societal desire to manage mental illness and behavioral deviance. In the early to mid-20th century, mental health institutions were often overwhelmed, and the available treatments for severe psychological distress were limited and frequently ineffective or even harmful. Understanding of mental illness was fragmented, with little consensus on its underlying causes or mechanisms. This created fertile ground for radical, and ultimately misguided, solutions.

The “Machine” of the Brain: Early Analogies and Misconceptions

At the time, the brain was often conceptualized as a complex, albeit mechanical, system. Neurologists and psychologists, influenced by early engineering principles and emergent understandings of electrical impulses in the nervous system, began to hypothesize that certain psychological disorders might stem from overactive or “faulty” neural pathways. This mechanistic view of the brain, while simplistic by today’s standards, paved the way for interventions aimed at physically altering these perceived problematic circuits. The focus was on identifying a “bottleneck” or a malfunctioning component within the brain’s intricate network and attempting to isolate or sever it.

The Need for “System Reset”: Societal Pressures and Institutional Challenges

The societal context of the era also played a crucial role. Mental institutions were frequently overcrowded, understaffed, and struggling to manage patients who exhibited behaviors deemed disruptive or dangerous. There was a strong impetus to find methods that could effectively “calm” or “pacify” individuals, making them more manageable within institutional settings. The perceived success of early surgical interventions in other areas of medicine may have also lent credibility to the idea of surgical solutions for mental ailments. The lobotomy, in essence, was seen by some as a way to perform a “system reset” on individuals whose internal “programming” was believed to be malfunctioning, thereby restoring a semblance of order to both the individual and the institution.

The “Intervention”: The Lobotomy Procedure as a Crude Technological Fix

The lobotomy procedure itself, in its various forms, represented a direct physical intervention aimed at altering the brain’s functional architecture. While the specific techniques varied, the underlying principle was to sever the connections between the prefrontal cortex, the area of the brain associated with higher-level cognitive functions, personality, and social behavior, and the rest of the brain. This was a blunt instrument, a drastic measure undertaken with the belief that it would alleviate suffering and restore functionality, akin to a programmer attempting to fix a complex software bug by deleting large sections of code without fully understanding their interdependencies.

Techniques: From Drills to Ice Picks – The Evolution of “Hardware Modification”

The development of lobotomy techniques mirrored the iterative process often seen in technological development, though not every iteration was a refinement. The earliest procedure, the prefrontal leucotomy introduced by Egas Moniz in 1935, involved drilling holes into the skull to reach and sever neural pathways in the frontal lobes. The transorbital lobotomy, developed by Walter Freeman in the 1940s and famously performed with an instrument resembling an ice pick, came later: the instrument was inserted through the eye socket to sever connections in the frontal lobe, which made the procedure faster and easier to perform outside an operating room. Each version of the technique still operated on a fundamental misunderstanding of the brain’s interconnectedness. These were akin to early attempts at hardware modification, where components were physically removed or altered with limited foresight into the system-wide impact.

The Promise and the Peril: Intended vs. Actual Outcomes

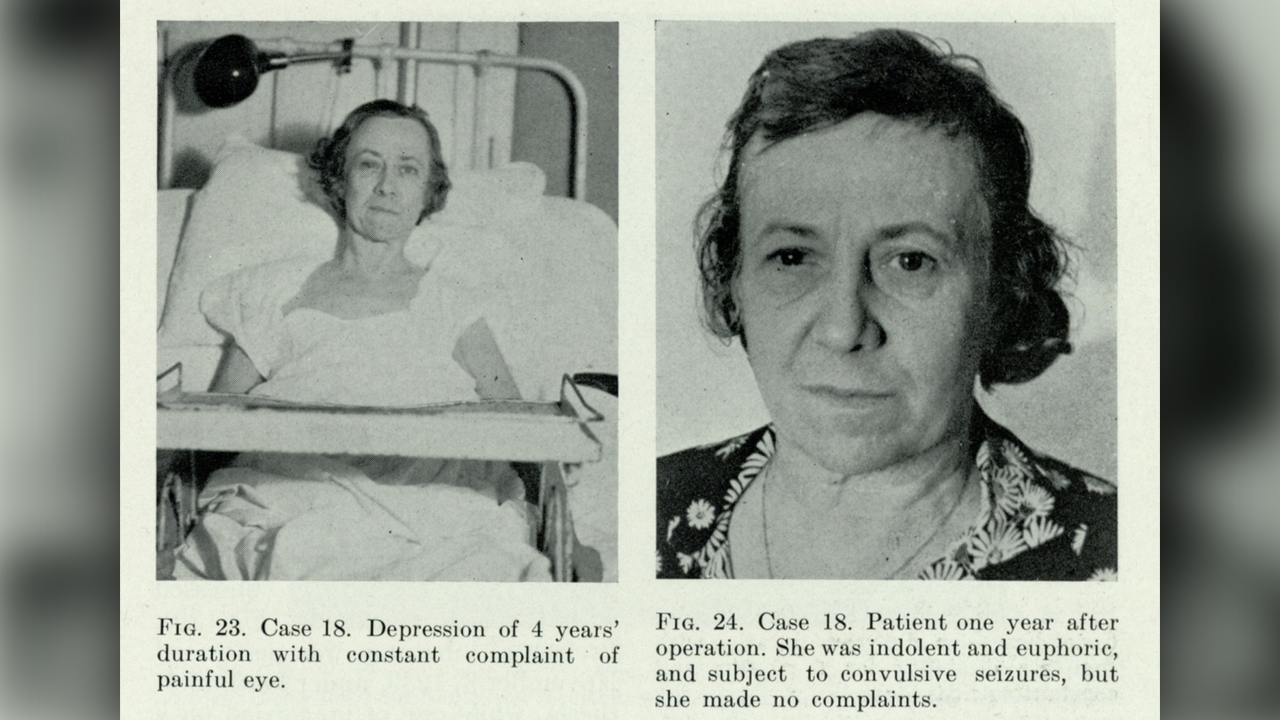

The stated aims of lobotomy were often to alleviate symptoms of severe mental illness such as schizophrenia, severe depression, and anxiety, as well as to manage intractable behavioral problems. Proponents argued that it could reduce agitation, eliminate delusional thinking, and make patients more compliant and manageable. However, the reality of the outcomes was far more complex and often devastating. While some individuals did experience a reduction in their most distressing symptoms, this often came at the cost of profound personality changes, emotional blunting, apathy, loss of initiative, and intellectual impairment. The “fixes” were often worse than the original “malfunctions,” leading to a population of individuals who were no longer suffering, but were also no longer fully themselves.

The “Systemic Impact”: Societal Repercussions and the Evolution of Mental Healthcare Technology

The widespread use and eventual discontinuation of lobotomies had a profound and lasting impact on how society viewed mental illness, treatment, and the ethical boundaries of technological intervention. The legacy of lobotomy serves as a critical case study in the history of human attempts to “fix” perceived problems with radical, yet poorly understood, technological solutions. It highlighted the dangers of unchecked enthusiasm for novel interventions without rigorous scientific validation and a deep consideration of ethical implications.

The “Decommissioning” of a Flawed Technology: The Rise of New Approaches

As the negative consequences of lobotomies became undeniable, and as understanding of neurobiology and psychology advanced, the procedure began to fall out of favor. The introduction of psychotropic medications in the 1950s, beginning with antipsychotics such as chlorpromazine, offered less invasive and often more effective alternatives for managing a range of mental health conditions. This marked a significant shift in the “technology” of mental healthcare, moving from physical intervention to pharmacological and psychotherapeutic approaches. The widespread failure of lobotomy led to a critical re-evaluation of the underlying assumptions and a move towards more nuanced, evidence-based treatments.

Ethical Ramifications: The “Right to Repair” and Human Dignity in the Technological Age

The story of lobotomy also carries significant ethical weight, particularly in our current era of rapidly advancing technologies, including those that interface with the human mind and body. It serves as a stark reminder of the imperative to consider the full spectrum of potential consequences when developing and deploying any technology that has the potential to alter fundamental aspects of human experience. The debate around lobotomy underscores the importance of informed consent, patient autonomy, and the recognition of inherent human dignity, principles that are increasingly relevant as we navigate the ethical landscapes of artificial intelligence, genetic engineering, and advanced neuro-technologies. The “right to repair” oneself, or to not be “repaired” against one’s will or without full understanding, is a concept deeply rooted in the lessons learned from this chapter of medical and technological history.

Lessons for the Future: Navigating the Interface of Humanity and Technology

The lessons learned from the era of lobotomies are invaluable as we continue to develop increasingly sophisticated technologies that interact with the human condition. They emphasize the need for a cautious, ethical, and evidence-based approach. Just as early engineers learned from the unintended consequences of crude mechanical solutions, we must approach advancements in neurotechnology, AI, and other fields with a deep sense of responsibility. The question “What did lobotomies do?” is not merely a historical inquiry; it is a vital prompt for reflection on the potential pitfalls and profound responsibilities that accompany our relentless pursuit of technological solutions, particularly when those solutions venture into the intricate and delicate landscape of the human mind. The history of lobotomy serves as a cautionary tale, urging us to prioritize understanding, ethics, and human well-being above the allure of a seemingly simple, yet ultimately destructive, technological fix.
