Engineers and data scientists often look to the natural world for ideas in optics, surveillance, and artificial intelligence. Human vision has long been the reference point for cameras and displays, but the eyes of other species, the rabbit among them, offer a useful blueprint for specialized applications. To ask “what colors can bunnies see” is to look into dichromatic vision, a topic that informs how sensors are designed for low-light environments and wide-angle surveillance.

The intersection of leporine biology and digital innovation offers profound insights into how we might transcend human visual limitations. By analyzing the spectral sensitivity and field of vision of rabbits, the tech industry is discovering new ways to optimize machine learning algorithms and hardware design.

The Optical Hardware: Understanding Rabbit Photoreceptors and Spectral Mapping

To understand how a rabbit perceives the world, we must first look at the hardware of their eyes. Unlike humans, who are typically trichromatic (having three types of color-detecting cone cells), rabbits are dichromatic. This means their visual system is built around two primary spectral peaks. From a technological standpoint, this is an exercise in efficiency—filtering the vast electromagnetic spectrum into the most critical data points for survival.

Dichromacy vs. Trichromacy in Computational Modeling

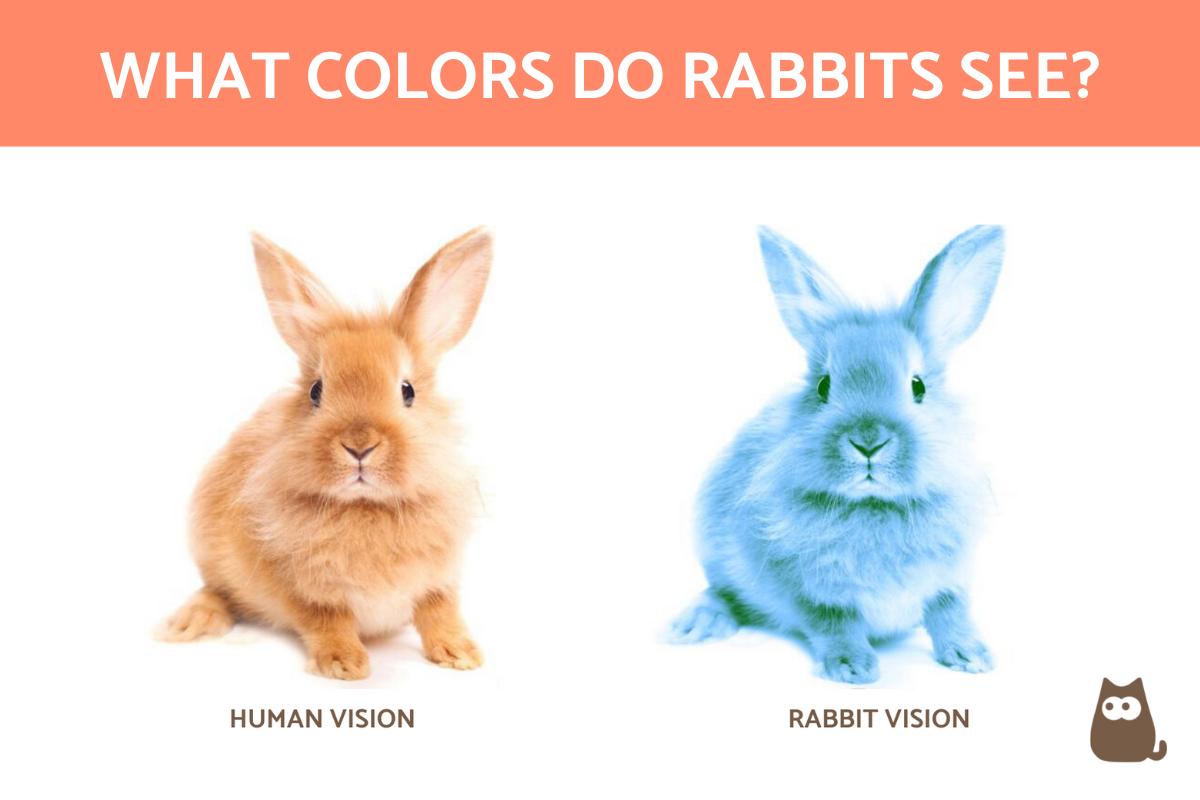

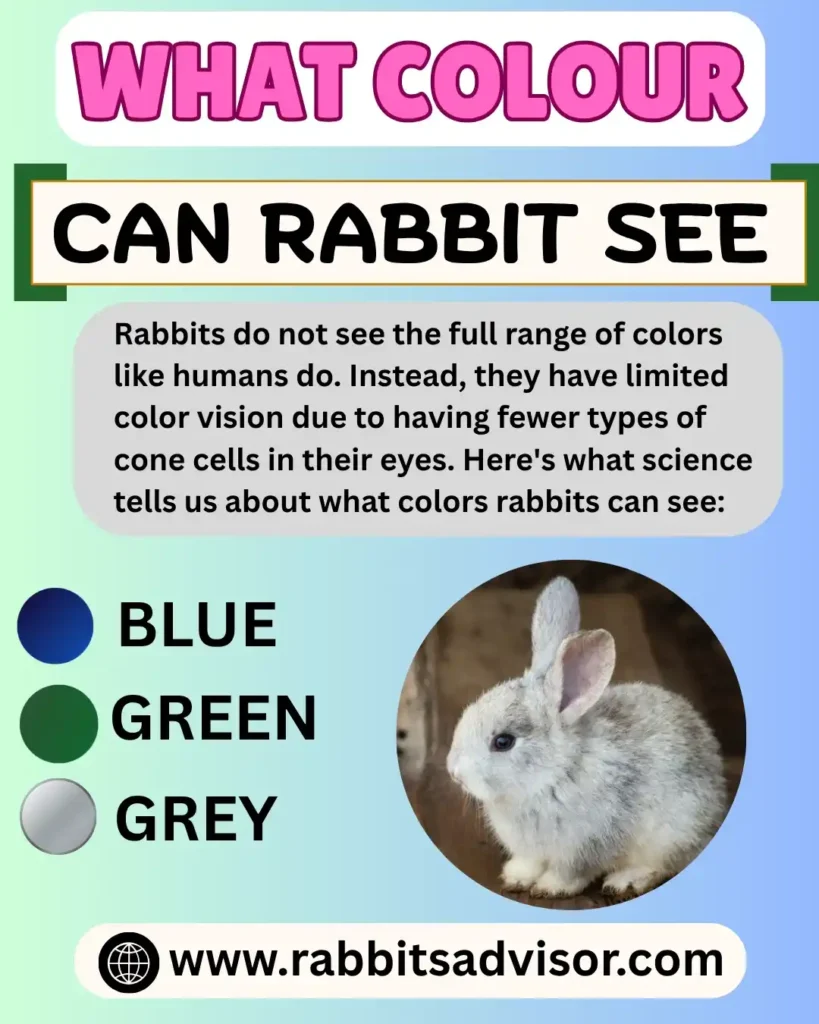

In human-centric tech, we rely on the RGB (Red, Green, Blue) model. Rabbits, however, lack the cone type tuned to long-wavelength light, which we perceive as red, so their color vision is limited mainly to blues and greens. When developers build simulation software or augmented reality (AR) filters to mimic animal vision, they use computational color mapping to attenuate the red channel and shift the histogram toward shorter wavelengths.
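The red-channel attenuation described above can be sketched in a few lines of Python. This is a rough illustration only, not a physiologically accurate model of rabbit vision: the function names and the `red_keep` parameter are hypothetical, and pixels are assumed to be 8-bit `(r, g, b)` tuples.

```python
def suppress_red(pixel, red_keep=0.2):
    """Scale down the red channel of one (r, g, b) pixel; green and blue pass through.

    red_keep is a hypothetical tuning knob: 0.0 removes red entirely, 1.0 leaves it unchanged.
    """
    r, g, b = pixel
    return (round(r * red_keep), g, b)


def apply_to_image(image, red_keep=0.2):
    """Apply suppress_red to every pixel of an image stored as rows of (r, g, b) tuples."""
    return [[suppress_red(p, red_keep) for p in row] for row in image]


if __name__ == "__main__":
    tiny_image = [[(200, 100, 50), (255, 0, 0)]]
    print(apply_to_image(tiny_image, red_keep=0.5))
```

A production filter would more likely work in a cone-response color space than directly on RGB values, but the channel-scaling idea is the same.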

This simplified color processing is being studied for “edge computing” applications. By reducing the number of color channels a machine must process to identify movement or shapes, developers can cut the processing power needed for AI to “see” in real time, prioritizing speed and motion detection over high-fidelity color accuracy.
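As a minimal sketch of that trade-off, the snippet below drops the red channel and then scores motion by simple frame differencing on the two remaining channels. Frame differencing is a stand-in chosen for illustration, not a method named above, and the function names are hypothetical. With 8-bit channels, going from three channels to two reduces the raw data per pixel by one third.

```python
def to_two_channel(frame):
    """Drop the red channel: each (r, g, b) pixel becomes (g, b)."""
    return [[(g, b) for (_r, g, b) in row] for row in frame]


def motion_score(prev, curr):
    """Mean absolute per-channel difference between two frames of the same shape."""
    total = 0
    count = 0
    for row_prev, row_curr in zip(prev, curr):
        for pixel_prev, pixel_curr in zip(row_prev, row_curr):
            for a, b in zip(pixel_prev, pixel_curr):
                total += abs(a - b)
                count += 1
    return total / count if count else 0.0


if __name__ == "__main__":
    frame_a = [[(10, 20, 30), (10, 20, 30)]]
    frame_b = [[(10, 20, 30), (10, 60, 30)]]  # one pixel's green value changed
    print(motion_score(to_two_channel(frame_a), to_two_channel(frame_b)))
```

A higher score means more change between frames; a real system would compare it against a threshold tuned for the scene.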

Blue and Green Sensitivities: The Spectral Range

Rabbits are highly sensitive to the blue-green part of the spectrum. This is not a limitation but an evolutionary optimization for crepuscular activity (being active at dawn and dusk). In the tech world, this mirrors the development of specialized “blue-light” sensors used in marine technology and deep-sea exploration, where red light is the first to be filtered out by the environment. By studying the rabbit’s blue-green sensitivity, engineers are refining the “quantum efficiency” of CMOS sensors used in low-visibility hardware.

Bio-Mimicry: Translating Leporine Vision into Sensor Design

The most significant contribution of rabbit vision to modern technology lies in the realm of bio-mimicry. We aren’t just interested in the colors they see, but the way they see them. A rabbit’s eye placement and retinal density offer a masterclass in peripheral awareness and motion detection—two pillars of modern autonomous vehicle technology.

Low-Light Sensitivity and CMOS Technology

Rabbits have a higher density of rod cells than humans, which lets them see well in dim light. Modern camera manufacturers are looking at this ratio of “rods to cones” for ideas on improving the Signal-to-Noise Ratio (SNR) of digital sensors. By mimicking the rabbit’s ability to maximize light capture through a larger pupil and a more sensitive retinal surface, the tech industry is producing “Ultra-Low Light” (ULL) cameras. These devices are essential for security tech and wildlife monitoring, where artificial illumination is not an option.
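To make the SNR idea concrete, here is a minimal sketch of pixel binning, one common sensor technique for trading resolution for light sensitivity. Binning is not named in the discussion above; it is used here only as an illustration. The sketch assumes a shot-noise-limited sensor, where SNR equals the square root of the collected photon count, and it ignores read noise and dark current. The function names are hypothetical.

```python
import math


def shot_noise_snr(photons):
    """SNR of a shot-noise-limited measurement: signal N over noise sqrt(N)."""
    return math.sqrt(photons)


def binned_snr(photons_per_pixel, bin_size):
    """SNR after summing a bin_size x bin_size block of pixels (shot noise only)."""
    return shot_noise_snr(photons_per_pixel * bin_size * bin_size)


if __name__ == "__main__":
    print(shot_noise_snr(100))   # single pixel collecting 100 photons
    print(binned_snr(100, 2))    # 2x2 block of such pixels summed together
```

Under these assumptions, summing a 2x2 block collects four times the light and doubles the SNR, at the cost of halving the resolution along each axis.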

360-Degree Surveillance and Wide-Angle Optics

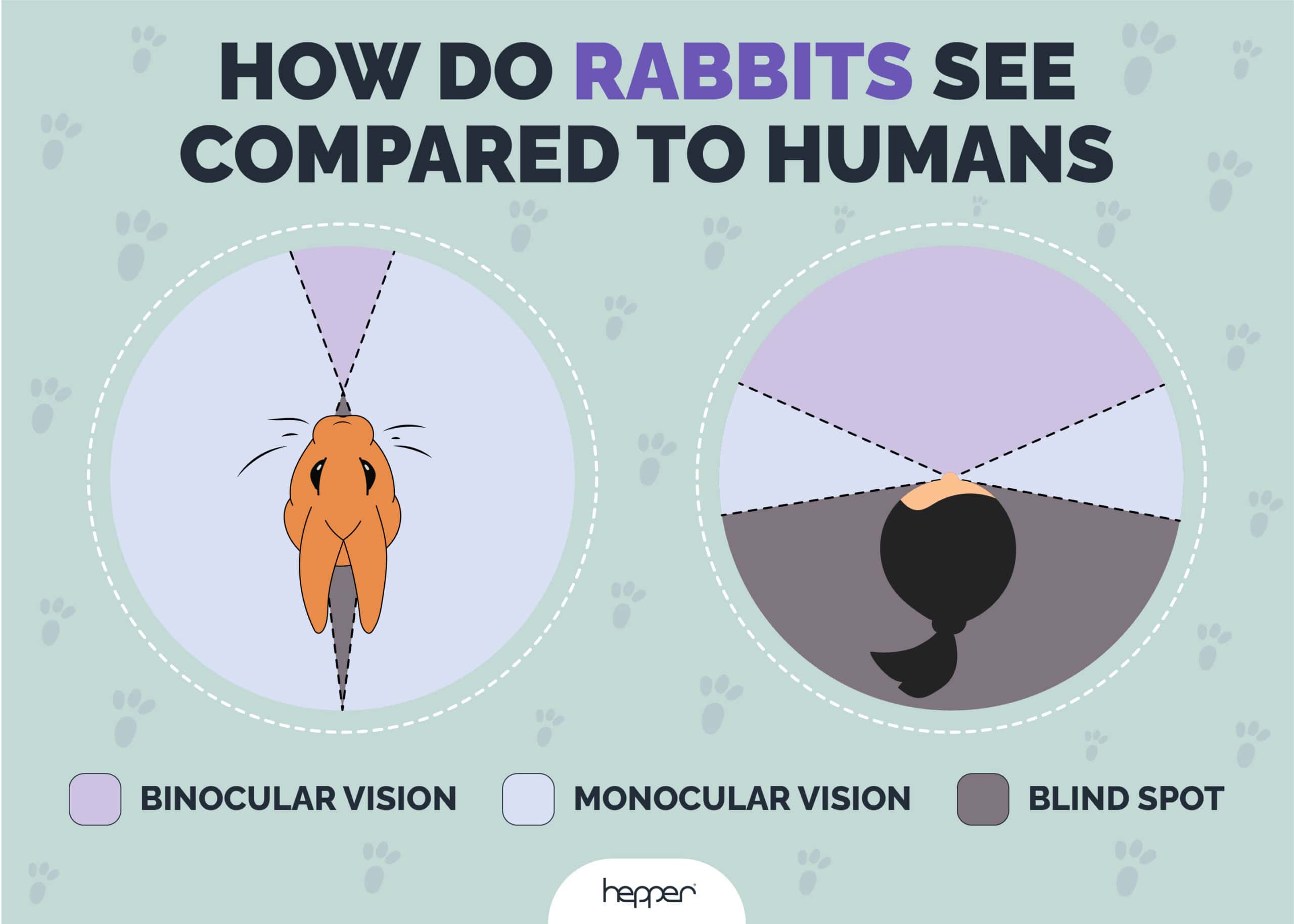

A rabbit’s eyes are positioned on the sides of its head, providing a nearly 360-degree field of vision with small blind spots directly in front of the nose and behind the head. This “panoramic” visual capability serves as a model for modern omnidirectional camera systems.

In the development of “Smart City” infrastructure, engineers use lenses that mimic this lateral placement. Instead of several cameras each focused on a single point, one sensor with a specialized wide-angle lens, inspired by the rabbit’s ocular geometry, can monitor an entire intersection. The remaining challenge, which AI-based software is being applied to, is the “distortion correction” needed to flatten these panoramic views into a usable data stream for human monitors.
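The geometry behind distortion correction can be sketched with two standard lens models. The example assumes an ideal equidistant fisheye lens (image radius = f·θ) and maps each point to where an ideal rectilinear lens would place it (image radius = f·tan θ). Real cameras need a calibrated distortion model, so treat the function names and the `focal_px` parameter as hypothetical.

```python
import math


def fisheye_to_rectilinear_radius(r_fisheye, focal_px):
    """Map a radius in an equidistant fisheye image to the rectilinear radius."""
    theta = r_fisheye / focal_px  # angle from the optical axis, in radians
    if theta >= math.pi / 2:
        raise ValueError("angles of 90 degrees or more cannot be projected onto a flat image")
    return focal_px * math.tan(theta)


def undistort_point(x, y, cx, cy, focal_px):
    """Move one pixel (x, y) radially about the image center (cx, cy)."""
    dx, dy = x - cx, y - cy
    r = math.hypot(dx, dy)
    if r == 0:
        return (x, y)  # the center pixel does not move
    scale = fisheye_to_rectilinear_radius(r, focal_px) / r
    return (cx + dx * scale, cy + dy * scale)


if __name__ == "__main__":
    print(undistort_point(400.0, 100.0, 100.0, 100.0, 300.0))
```

The error raised at 90 degrees reflects a real limit: a single flat image cannot hold a full panoramic view, which is why very wide views are usually split into several flattened tiles.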

Simulation and AI: Rendering the World Through a Rabbit’s Eyes

As we move further into the era of the Metaverse and spatial computing, the ability to simulate non-human perspectives has become a vital tool for researchers, conservationists, and even game developers. Creating a digital twin of a rabbit’s visual experience requires sophisticated algorithmic processing.

Algorithmic Color Correction and Virtual Reality

In VR development, “What colors can bunnies see?” becomes a question of shader programming. To create a realistic rabbit-eye perspective, developers must implement custom “Color Lookup Tables” (LUTs) that strip out the red spectrum and enhance the blue and green vibrancy.
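A lookup table of this kind can be sketched in Python as follows. This is a simplified per-channel (1D) version; shader pipelines typically use 3D LUT textures, and the gain values here are hypothetical placeholders rather than measured rabbit data.

```python
def build_channel_lut(gain):
    """Build a 256-entry table mapping each 8-bit level to a scaled, clamped level."""
    return [min(255, max(0, round(level * gain))) for level in range(256)]


# Hypothetical gains: remove red entirely, boost green and blue by 25%.
RED_LUT = build_channel_lut(0.0)
GREEN_LUT = build_channel_lut(1.25)
BLUE_LUT = build_channel_lut(1.25)


def apply_luts(pixel):
    """Look up each channel of an (r, g, b) pixel in its precomputed table."""
    r, g, b = pixel
    return (RED_LUT[r], GREEN_LUT[g], BLUE_LUT[b])


if __name__ == "__main__":
    print(apply_luts((200, 100, 250)))
```

The point of a LUT is that the arithmetic runs once when the table is built. Each pixel then costs only three table reads, which matters when a headset must render every frame on a tight time budget.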

Motion perception matters as well. Flicker fusion frequency is the rate above which a flickering light appears steady, and a rabbit’s threshold may differ from a human’s. High-refresh-rate displays (120Hz and above) are used in research labs to test how rabbits interact with digital interfaces. If the refresh rate falls below the animal’s fusion threshold, the rabbit perceives the screen as a series of flashing images rather than continuous video. This research helps tech companies understand the limits of display technology and how to create smoother, more “organic” visual output for all species.
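The relationship between a display’s refresh rate and a viewer’s flicker fusion threshold comes down to a simple comparison, sketched below. The threshold values in the example are hypothetical placeholders, not measured rabbit data, and the sketch assumes the visible flicker of a display follows its refresh rate, which is a simplification.

```python
def frame_interval_ms(refresh_hz):
    """Time between successive frames, in milliseconds."""
    return 1000.0 / refresh_hz


def appears_continuous(refresh_hz, fusion_threshold_hz):
    """True if the display refreshes at or above the viewer's fusion threshold."""
    return refresh_hz >= fusion_threshold_hz


if __name__ == "__main__":
    hypothetical_threshold_hz = 90.0  # placeholder value for illustration only
    for hz in (60.0, 120.0):
        print(hz, frame_interval_ms(hz), appears_continuous(hz, hypothetical_threshold_hz))
```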

Machine Learning in Animal Ethology Apps

Apps designed for pet owners and farmers are now integrating AI to analyze environments based on animal vision. By pointing a smartphone camera at a field, an AI-powered app can use a “rabbit-vision filter” to show where a rabbit might see a predator or find the best clover. This involves real-time image processing, where the AI identifies objects and re-renders them using the rabbit’s dichromatic constraints and high-contrast sensitivity. This technology isn’t just a novelty; it’s a powerful tool for improving animal welfare through data-driven environmental design.

The Future of Cross-Species Interfaces and Digital Interaction

The ultimate frontier of this technology is the development of Human-Machine-Animal Interfaces. As we integrate tech more deeply into our natural world, understanding the visual hardware of the creatures around us is essential for ethical and functional design.

HMI (Human-Machine Interface) for Animal Welfare

Automated farming and pet-care tech are increasingly using “vision-compatible” interfaces. For instance, if a smart feeder uses a red light to indicate it is empty, a rabbit may struggle to distinguish that signal from the surrounding shadows. Tech companies are now pivoting to “inclusive design” for animals, using blue and yellow LEDs—colors that are highly visible to dichromatic species. This ensures that the technology we build to care for animals is actually perceptible to them.

Ethical Considerations in Sensory Simulation Tech

As we gain the ability to simulate what a bunny sees with increasing fidelity, we encounter new ethical questions in the tech space. If we can use AI to manipulate the visual environment of an animal, how do we ensure this tech is used for enrichment rather than exploitation? High-tech conservation efforts use these simulations to design better habitats, but the same technology could be used to create “invisible” barriers that capitalize on an animal’s visual blind spots. The tech industry must establish protocols for “Sensory Ethics” to ensure that as our sensors become more like those found in nature, we use that knowledge responsibly.

Conclusion: The Convergence of Biology and Bitrate

Understanding what colors bunnies can see is more than a biological curiosity; it is a gateway to more efficient, powerful, and inclusive technology. From the development of dichromatic AI sensors that save energy by ignoring redundant color data, to the creation of 360-degree surveillance systems that mimic the rabbit’s panoramic view, the “bunny perspective” is deeply embedded in the future of optics.

As we continue to refine our digital world, the lessons learned from the rabbit’s eye—its speed, its sensitivity to the dim light of dawn, and its panoramic reach—will continue to guide the next generation of gadgets, apps, and artificial intelligence. By looking through the eyes of another species, we aren’t just seeing fewer colors; we are seeing new possibilities for innovation.
