In the rapidly evolving landscape of technology, the ability to ask the right questions is often more valuable than having immediate answers. As we move further into the era of Big Data, Artificial Intelligence, and Evidence-Based Software Engineering (EBSE), the methodology behind inquiry has become a cornerstone of technical success. One of the most effective frameworks for structuring these inquiries is the PICO process.

Originally developed for evidence-based medicine, the PICO framework has transitioned into a vital tool for data scientists, software engineers, and AI prompt engineers. It provides a structured format that transforms vague curiosities into precise, searchable, and actionable technical queries. Understanding what PICO questions are and how to apply them to modern technology is essential for any professional looking to cut through the digital noise and derive meaningful insights.

The Anatomy of a PICO Question in the Tech Landscape

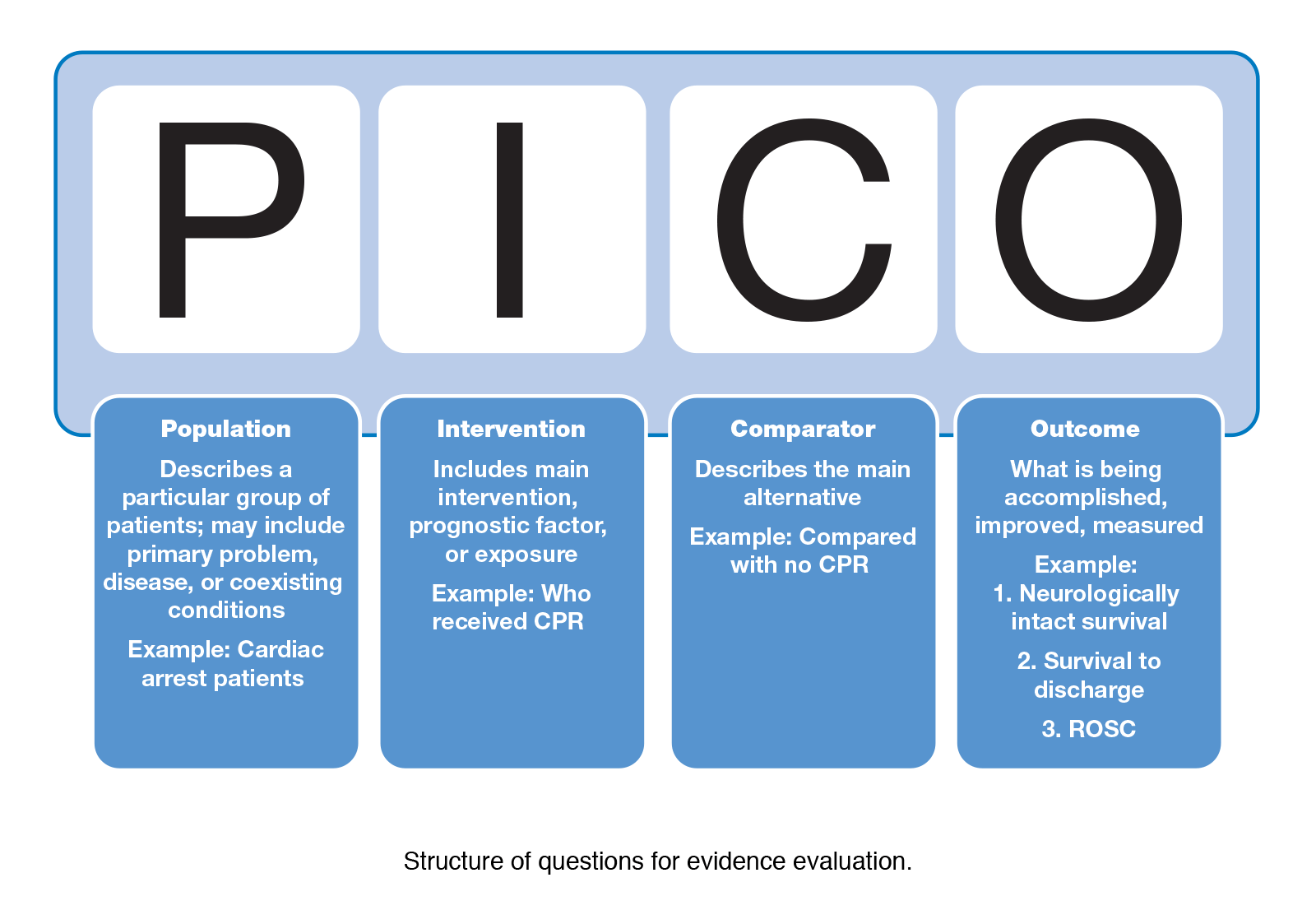

At its core, PICO is an acronym representing four key components: Population, Intervention, Comparison, and Outcome. In a technical context, these components serve as the building blocks for research designs, software testing protocols, and algorithmic evaluations. By breaking down a problem into these four segments, tech professionals can ensure they are considering every variable that might influence a system’s performance.
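To make the structure concrete, here is a minimal Python sketch that holds the four components in one object and renders them as a single question. Python is only our choice for illustration, since the framework prescribes no language. The class name `PicoQuestion`, its field names, the question template, and the example values (which echo the Redis caching example in this article) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PicoQuestion:
    """A technical question broken into its four PICO components."""

    population: str    # P: environment, hardware, users, or dataset under study
    intervention: str  # I: the change, tool, or variable being introduced
    comparison: str    # C: the baseline or status quo it is measured against
    outcome: str       # O: the measurable result that defines success

    def as_question(self) -> str:
        """Render the four components as one answerable question."""
        return (
            f"In {self.population}, does {self.intervention}, "
            f"compared with {self.comparison}, improve {self.outcome}?"
        )


# Hypothetical example values.
question = PicoQuestion(
    population="a read-heavy web API backed by PostgreSQL",
    intervention="adding a Redis caching layer",
    comparison="querying the database directly",
    outcome="p95 read latency (ms)",
)
print(question.as_question())
```

Keeping the components as separate fields, rather than one free-form string, makes it easy to check that none of them has been left blank before an experiment starts.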

Population (P): Defining the User Base or Dataset

In the tech sector, “Population” rarely refers to a group of patients. Instead, it defines the specific environment, hardware, user demographic, or dataset being studied. For a software engineer, the population might be “users running iOS 17 on iPhone 13 devices.” For a data scientist, it might be “unstructured log data from cloud-based microservices.”

Defining the population with precision is the first step toward technical accuracy. Without a clear “P,” your research or testing remains too broad, leading to “noisy” data that fails to provide specific solutions.
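In practice, a precise "P" often becomes a filter applied before any metric is computed. The sketch below encodes the "users running iOS 17 on iPhone 13 devices" population as a predicate. The `SessionRecord` schema and the sample rows are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionRecord:
    """One telemetry row (hypothetical schema)."""
    os_name: str
    os_version: str
    device_model: str
    load_time_ms: float


def in_population(record: SessionRecord) -> bool:
    """P: users running iOS 17 (any minor or patch release) on iPhone 13 devices."""
    return (
        record.os_name == "iOS"
        and record.os_version.split(".")[0] == "17"
        and record.device_model == "iPhone 13"
    )


# Made-up sample rows for illustration only.
records = [
    SessionRecord("iOS", "17.2", "iPhone 13", 820.0),
    SessionRecord("iOS", "16.7", "iPhone 13", 910.0),
    SessionRecord("Android", "14", "Pixel 8", 700.0),
    SessionRecord("iOS", "17.0.1", "iPhone 13", 780.0),
]

population = [r for r in records if in_population(r)]
print(len(population))  # 2
```

Every later number (latency, error rate, retention) is then computed only over `population`, which keeps results from other devices or OS versions from leaking in.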

Intervention (I): Introducing the New Software or Tool

The “Intervention” represents the specific change, tool, or variable you are testing. In technology, this is often a new software update, a different coding framework, a specific AI model (such as GPT-4 or Claude 3), or a cybersecurity protocol.

If you are investigating how to improve database latency, your intervention might be “implementing a Redis caching layer.” This is the active element you are introducing to see if it causes a measurable shift in the environment defined in the Population phase.

Comparison (C): Benchmarking Against the Status Quo

One of the most common failures in technical research is the lack of a control group or a baseline. The “Comparison” component forces you to ask: “What are we doing now, and how does it compare to the new intervention?”

The comparison might be the current industry standard, an older version of your software, or the absence of the intervention entirely. For instance, if you are testing a new AI-driven code completion tool, your comparison would be unassisted manual coding or the legacy IDE plugin your team already uses.
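A comparison is only fair if both arms are measured the same way on the same workload. Here is a minimal timing harness built on that assumption. `baseline_lookup` (C) and `intervention_lookup` (I) are hypothetical stand-ins, loosely analogous to recomputing a result on every request versus serving it from a cache. A real benchmark would substitute the actual code paths being compared.

```python
import statistics
import time
from typing import Callable


def benchmark(fn: Callable[[], object], runs: int = 100) -> list[float]:
    """Call `fn` `runs` times and return each call's duration in milliseconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        fn()
        samples.append((time.perf_counter() - start) * 1000)
    return samples


def baseline_lookup() -> int:
    """C (hypothetical status quo): rebuild the lookup table on every call."""
    table = {i: i * i for i in range(10_000)}
    return table[9_999]


_CACHED_TABLE = {i: i * i for i in range(10_000)}


def intervention_lookup() -> int:
    """I (hypothetical intervention): reuse a table built once up front."""
    return _CACHED_TABLE[9_999]


# Both arms must return the same answer, or the comparison is meaningless.
assert baseline_lookup() == intervention_lookup()

c_ms = benchmark(baseline_lookup)
i_ms = benchmark(intervention_lookup)
print(f"C median: {statistics.median(c_ms):.3f} ms")
print(f"I median: {statistics.median(i_ms):.3f} ms")
```

Reporting the median rather than the mean keeps a few slow outliers, such as garbage-collection pauses, from dominating the comparison.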

Outcome (O): Measuring Technical Success

Finally, the “Outcome” defines what success looks like. It must be measurable and objective. In tech, outcomes are usually expressed through Key Performance Indicators (KPIs) such as latency (ms), throughput (requests per second), error rates, user retention percentages, or CPU utilization.
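As an illustration, the sketch below turns raw request data from one test window into several of the KPIs listed above. It makes three assumptions: latencies are recorded per request, `errors` counts failures among those same requests, and a percentile uses the nearest-rank definition. Monitoring tools may use interpolated definitions that give slightly different values. The sample latencies are made up.

```python
import math


def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile (pct between 0 and 100) of a non-empty sample."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(latencies_ms: list[float], errors: int, window_s: float) -> dict[str, float]:
    """Compute outcome KPIs for one measurement window."""
    total = len(latencies_ms)
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        "throughput_rps": total / window_s,  # requests completed per second
        "error_rate": errors / total,        # fraction of requests that failed
    }


# Made-up sample: 10 requests observed over a 2-second window, 1 of them failed.
kpis = summarize(
    [12.0, 15.0, 11.0, 40.0, 13.0, 14.0, 12.5, 16.0, 90.0, 13.5],
    errors=1,
    window_s=2.0,
)
print(kpis)
# {'p50_ms': 13.5, 'p95_ms': 90.0, 'throughput_rps': 5.0, 'error_rate': 0.1}
```

With only ten requests, the p95 is simply the slowest request. That is why real tests need far more samples before a tail-latency outcome means much.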

A well-defined outcome prevents “scope creep” and ensures that your PICO question can be answered with a clear “yes” or “no,” judged against a success threshold agreed before the test begins.

PICO as a Framework for Advanced Prompt Engineering

As Large Language Models (LLMs) become integrated into daily workflows, the field of prompt engineering has emerged as a critical technical skill. A major challenge with LLMs is their tendency to produce “hallucinations” or overly generic content when given vague instructions. Applying the PICO framework to AI prompting transforms how developers and researchers interact with these models.

From Vague Queries to Structured Instructions

A typical vague prompt might look like this: “Tell me how to make my website faster.” This lacks the specificity required for a high-quality AI response. By applying PICO logic, the prompt becomes significantly more effective:

- Population: “E-commerce websites built on React with over 50,000 monthly visitors.”

- Intervention: “Implementing Next.js Image Optimization.”

- Comparison: “Standard `<img>` tags with manual compression.”

- Outcome: “Reduction in Largest Contentful Paint (LCP) and improved SEO rankings.”
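The four bullets above map directly onto a prompt template. Here is a minimal, model-agnostic sketch that only assembles the text. Sending it to a particular LLM is left out, and the opening role line and closing instruction are our own wording, not a recommended standard.

```python
def build_pico_prompt(population: str, intervention: str,
                      comparison: str, outcome: str) -> str:
    """Assemble a structured prompt, one labeled line per PICO component."""
    return "\n".join([
        "You are advising on web performance.",
        f"Population: {population}",
        f"Intervention: {intervention}",
        f"Comparison: {comparison}",
        f"Outcome: {outcome}",
        "Explain how the intervention is expected to change the outcome "
        "relative to the comparison, and state any assumptions.",
    ])


prompt = build_pico_prompt(
    population="E-commerce websites built on React with over 50,000 monthly visitors",
    intervention="Implementing Next.js Image Optimization",
    comparison="Standard <img> tags with manual compression",
    outcome="Reduction in Largest Contentful Paint (LCP) and improved SEO rankings",
)
print(prompt)
```

Labeling each line keeps the constraints easy for the model to locate and easy for a developer to adjust one component at a time.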

When these components are fed into an AI, the resulting output is not just a general list of tips, but a technical roadmap tailored to the specific architecture and goals of the project.

Enhancing LLM Precision through Component-Based Logic

Using PICO in AI interactions also helps with “few-shot” and “chain-of-thought” prompting. By instructing the model to organize its reasoning under P, I, C, and O headings, the developer helps it keep the original constraints in view across a long conversation. This reduces the risk of the model losing track of those constraints and keeps the technical advice grounded in the specified comparison and outcome metrics.
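Asking for PICO headings is only useful if the reply actually keeps them. The sketch below pairs an instruction with a simple check that flags any heading the model dropped. The heading format (`Label:` at the start of a line) and the instruction wording are assumptions. The check does not verify the order of the headings.

```python
import re

SECTION_LABELS = ("Population", "Intervention", "Comparison", "Outcome")

# Instruction appended to the prompt (wording is an assumption, not a standard).
INSTRUCTION = (
    "Organize your answer under these headings, in order, each on its own "
    "line and followed by a colon: " + ", ".join(SECTION_LABELS) + "."
)


def missing_sections(response: str) -> list[str]:
    """Return the PICO headings that do not begin any line of the reply."""
    return [
        label
        for label in SECTION_LABELS
        if not re.search(rf"^\s*{label}:", response, flags=re.MULTILINE)
    ]


reply = (
    "Population: React storefronts\n"
    "Intervention: Next.js Image Optimization\n"
    "Outcome: lower LCP"
)
print(missing_sections(reply))  # ['Comparison']
```

If the list is non-empty, the application can resend the prompt with a reminder about the missing component. That is safer than accepting an answer that has quietly dropped part of the original constraints.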

Applying PICO to Product Development and A/B Testing

In the world of SaaS (Software as a Service) and app development, data-driven decision-making is king. PICO questions provide a rigorous structure for A/B testing and Product-Led Growth (PLG) strategies, ensuring that every experiment yields actionable data.

Structuring Experiments for Data Scientists

Data scientists often face the challenge of proving that a specific change in an algorithm actually drove a change in user behavior. PICO allows them to frame their hypotheses with scientific rigor.

For example: “In mobile gamers using Android 12 (P), does implementing a machine-learning-based matchmaking algorithm (I) compared to random matchmaking (C) result in a 15% increase in session duration (O)?”
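Once the experiment has run, the O in that question can be checked numerically. The sketch below assumes session durations have been collected per arm (C = random matchmaking, I = ML-based matchmaking). `compare_arms` is a hypothetical helper that reports two things: the relative lift against the 15% target, and a two-sided p-value from a large-sample normal approximation. A production analysis would more likely use a proper t-test or a dedicated experimentation library. It might also test against the 15% threshold directly rather than against zero.

```python
import math
from statistics import NormalDist, mean, variance


def compare_arms(control: list[float], treatment: list[float],
                 target_lift: float = 0.15) -> dict:
    """Relative lift in mean session duration (treatment vs. control) and a
    two-sided p-value from a normal approximation to Welch's t-test.

    The approximation is only reasonable with large arms (hundreds of
    sessions or more per arm).
    """
    m_c, m_t = mean(control), mean(treatment)
    # Standard error of the difference in means, allowing unequal variances.
    se = math.sqrt(variance(control) / len(control)
                   + variance(treatment) / len(treatment))
    z = (m_t - m_c) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    lift = (m_t - m_c) / m_c
    return {"lift": lift, "p_value": p_value, "meets_target": lift >= target_lift}
```

A lift above 0.15 with a small p-value would support the hypothesis. A lift above 0.15 with a large p-value would not, because the difference could plausibly be noise.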

This structure allows the data science team to isolate variables and ensure that the “Outcome” is directly attributable to the “Intervention.” It also makes it easier to communicate findings to stakeholders who may not understand the underlying math but can understand the PICO logic.
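Attributing the outcome to the intervention usually depends on assigning users to C and I at random, so that no other difference between the groups explains the result. One common technique is to hash a stable user ID together with an experiment name. The sketch below shows that technique; PICO itself does not prescribe it, and the experiment name `ml-matchmaking` and the 50/50 split are assumptions.

```python
import hashlib


def assign_arm(user_id: str, experiment: str, treatment_share: float = 0.5) -> str:
    """Deterministically bucket a user into 'control' (C) or 'treatment' (I).

    Hashing experiment name + user ID yields a stable, effectively random
    split: a user always lands in the same arm, and the arm does not depend
    on device, region, or any other user attribute.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # 48 bits of the hash mapped to [0, 1); 48 bits keep the division exact in a float.
    bucket = int.from_bytes(digest[:6], "big") / 2**48
    return "treatment" if bucket < treatment_share else "control"


arms = [assign_arm(f"user-{n}", "ml-matchmaking") for n in range(10_000)]
print(arms.count("treatment") / len(arms))
```

The printed share should land close to 0.5. Changing the experiment name reshuffles users, so a later experiment does not inherit this one's groups.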

Reducing Bias in Tech Evaluation

Technologists often fall victim to “Shiny Object Syndrome”—the desire to adopt a new tool simply because it is trending. PICO acts as a filter for objective evaluation. By forcing a “Comparison” and a specific “Outcome,” it prevents teams from adopting expensive new tech stacks that don’t actually offer a measurable improvement over their current systems. If a new AI tool (I) cannot be proven to outperform the current workflow (C) in a specific metric like “hours saved per sprint (O),” the PICO analysis suggests the investment may not be justified.
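The "hours saved per sprint" filter can be written down before a trial starts, which keeps enthusiasm from swaying the decision afterward. Here is a minimal sketch under two assumptions: the team logs hours spent on the same kind of work per sprint under each workflow, and it agrees on a minimum saving in advance. The function names and example numbers are hypothetical.

```python
from statistics import mean


def hours_saved_per_sprint(current_hours: list[float], new_hours: list[float]) -> float:
    """O: average hours per sprint under C minus average hours under I."""
    return mean(current_hours) - mean(new_hours)


def adoption_justified(current_hours: list[float], new_hours: list[float],
                       min_saving_hours: float) -> bool:
    """Adopt I only if it beats C by at least the margin agreed in advance."""
    return hours_saved_per_sprint(current_hours, new_hours) >= min_saving_hours


# Hypothetical pilot: three sprints on the current workflow, three with the new tool.
current = [40.0, 42.0, 38.0]
trial = [36.0, 35.0, 37.0]
print(hours_saved_per_sprint(current, trial))    # 4.0
print(adoption_justified(current, trial, 6.0))   # False
```

In this made-up case, a 4-hour average saving falls short of a 6-hour bar. That bar might be chosen, say, to cover licensing and onboarding costs, so the analysis would argue against the purchase.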

The Role of PICO in the HealthTech and BioTech Sectors

While PICO is useful across all tech disciplines, it is absolutely essential in HealthTech and BioTech. These sectors sit at the intersection of high-stakes medicine and high-speed technology. Here, a PICO question isn’t just a research tool—it’s a safety protocol.

Accelerating Literature Reviews with Automated Tools

HealthTech firms are developing AI tools that scan large volumes of medical research papers to identify the most effective treatments. Many of these tools rely on PICO extraction: by identifying the P, I, C, and O in each study, the algorithms can group and aggregate comparable findings much faster than a human researcher could. This allows for the rapid development of evidence-based software that assists doctors in real time.
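Production systems generally extract these spans with trained NLP models, because real abstracts phrase PICO elements in countless ways. The toy below only illustrates the idea of turning a sentence into structured P, I, C, and O fields that can then be grouped and aggregated. Its single regular expression and the example sentences are assumptions, and it would miss most real text.

```python
import re
from typing import Optional

# Toy pattern (an assumption) for one sentence shape:
# "In <population>, <intervention> compared with <comparison> <verb> <outcome>."
PICO_SENTENCE = re.compile(
    r"^In (?P<population>[^,]+), (?P<intervention>.+?) compared with "
    r"(?P<comparison>.+?) (?:improved|reduced|increased) (?P<outcome>.+?)\.$"
)


def extract_pico(sentence: str) -> Optional[dict[str, str]]:
    """Return P, I, C, O spans for sentences matching the toy pattern, else None."""
    match = PICO_SENTENCE.match(sentence.strip())
    return match.groupdict() if match else None


# Made-up sentences for illustration only.
abstracts = [
    "In adults with type 2 diabetes, drug A compared with placebo reduced HbA1c levels.",
    "We surveyed 200 clinicians about documentation habits.",
]
for sentence in abstracts:
    print(extract_pico(sentence))
```

Sentences that do not fit the pattern return `None`, so they are skipped rather than mislabeled.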

Integrating PICO into Clinical Decision Support Systems (CDSS)

Modern Clinical Decision Support Systems are software platforms that provide clinicians with evidence-based information at the point of care. For these systems to function, they must be programmed to recognize the “PICO” of a patient’s situation.

If a doctor enters a patient’s symptoms into a tablet, the backend software uses PICO logic to search its database: “For this demographic (P), is this medication (I) more effective than the standard protocol (C) for achieving remission (O)?” The tech stack behind this interaction must be robust, using natural language processing (NLP) to map the doctor’s input into the PICO framework fast enough to be useful at the point of care.
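The retrieval step can be sketched once the input has already been mapped to PICO fields; the NLP mapping itself is out of scope here. This hypothetical example assumes the evidence store and the query use the same normalized vocabulary, so a simple case-insensitive match suffices. Real systems would map terms to controlled vocabularies such as SNOMED CT or MeSH and rank partial matches rather than require exact equality.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Evidence:
    """A study summary pre-annotated with PICO fields (hypothetical schema)."""
    population: str
    intervention: str
    comparison: str
    outcome: str
    source_id: str


def _key(p: str, i: str, c: str, o: str) -> tuple[str, ...]:
    """Normalize the four fields for case-insensitive comparison."""
    return tuple(s.strip().casefold() for s in (p, i, c, o))


def find_evidence(store: list[Evidence], p: str, i: str, c: str, o: str) -> list[Evidence]:
    """Return every stored study whose P, I, C and O all match the query."""
    wanted = _key(p, i, c, o)
    return [e for e in store
            if _key(e.population, e.intervention, e.comparison, e.outcome) == wanted]


# Placeholder records for illustration only.
store = [
    Evidence("adults over 65", "medication X", "standard protocol", "remission", "study-001"),
    Evidence("adults over 65", "medication Y", "standard protocol", "remission", "study-002"),
]
hits = find_evidence(store, "Adults over 65", "Medication X", "standard protocol", "remission")
print([e.source_id for e in hits])  # ['study-001']
```

Returning the full records rather than a yes/no lets the interface show clinicians which studies support the answer.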

Future Trends: AI-Driven Research Synthesis and Structured Inquiry

Looking forward, the importance of structured questioning frameworks like PICO will only grow as we transition toward autonomous agents and automated research pipelines. In a future where AI “agents” perform much of the heavy lifting in software debugging and system optimization, the human role will shift from “the person who does the work” to “the person who asks the right PICO question.”

We are already seeing the emergence of “Auto-GPT”-style agents that can perform multi-step tasks. These agents tend to perform better when given a clearly scoped objective, and a PICO-style objective is one way to provide it. By mastering this framework, tech professionals are essentially learning the language of the future, a language where precision in inquiry leads to precision in execution.

In conclusion, PICO questions are far more than a legacy medical tool. They are a universal blueprint for technical clarity. Whether you are optimizing a cloud infrastructure, prompting a generative AI, or designing a new app, the PICO framework ensures that your technical efforts are focused, measurable, and, ultimately, successful. As the complexity of our digital tools increases, the simplicity and rigor of PICO offer a reliable path toward evidence-based innovation.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.