The term “scientific investigation” often evokes images of lab coats, microscopes, and chemical beakers. However, in the rapidly evolving landscape of the 21st century, many of the most consequential scientific investigations are happening within the realms of silicon, code, and data. In the technology sector, scientific investigation is the systematic process of discovery, experimentation, and validation that allows us to build more efficient software, more powerful hardware, and more capable artificial intelligence.

At its core, scientific investigation in tech is about moving from “I think this will work” to “I have proven this works through empirical evidence.” This transition is what separates a hobbyist project from a scalable, enterprise-grade technological solution. By applying the rigorous standards of the scientific method to technical problems, engineers and developers can minimize risk, optimize performance, and push the boundaries of what is digitally possible.

The Foundations of Tech-Driven Scientific Investigation

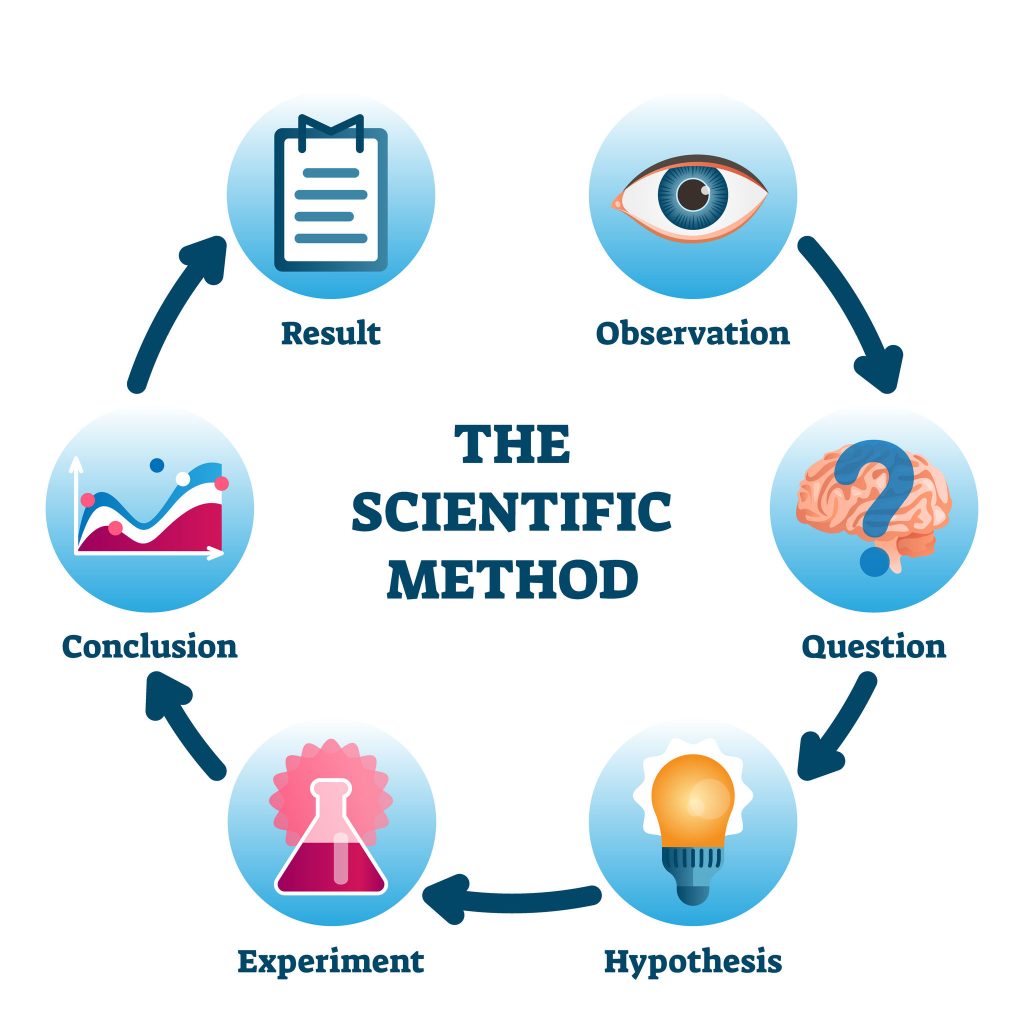

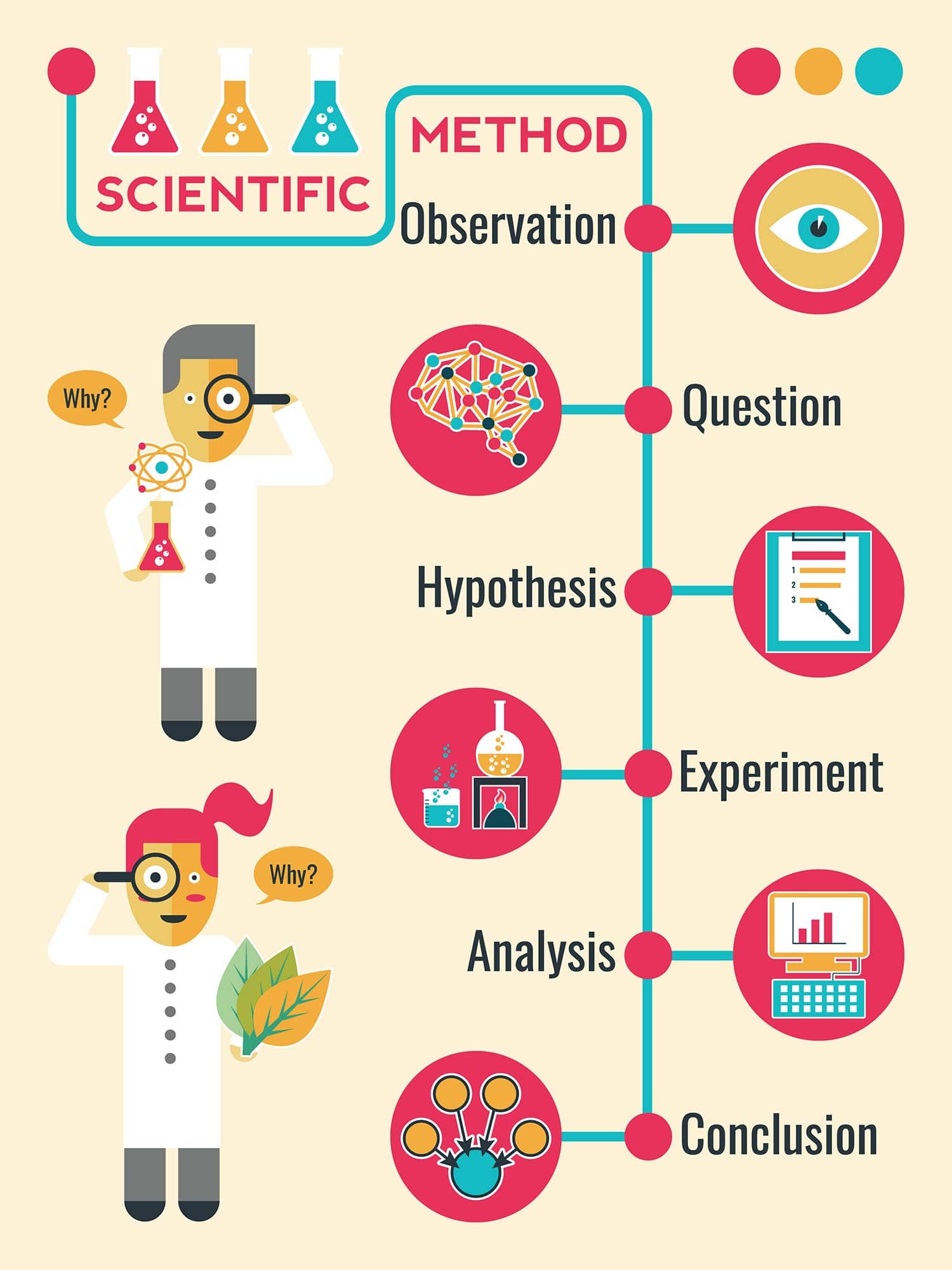

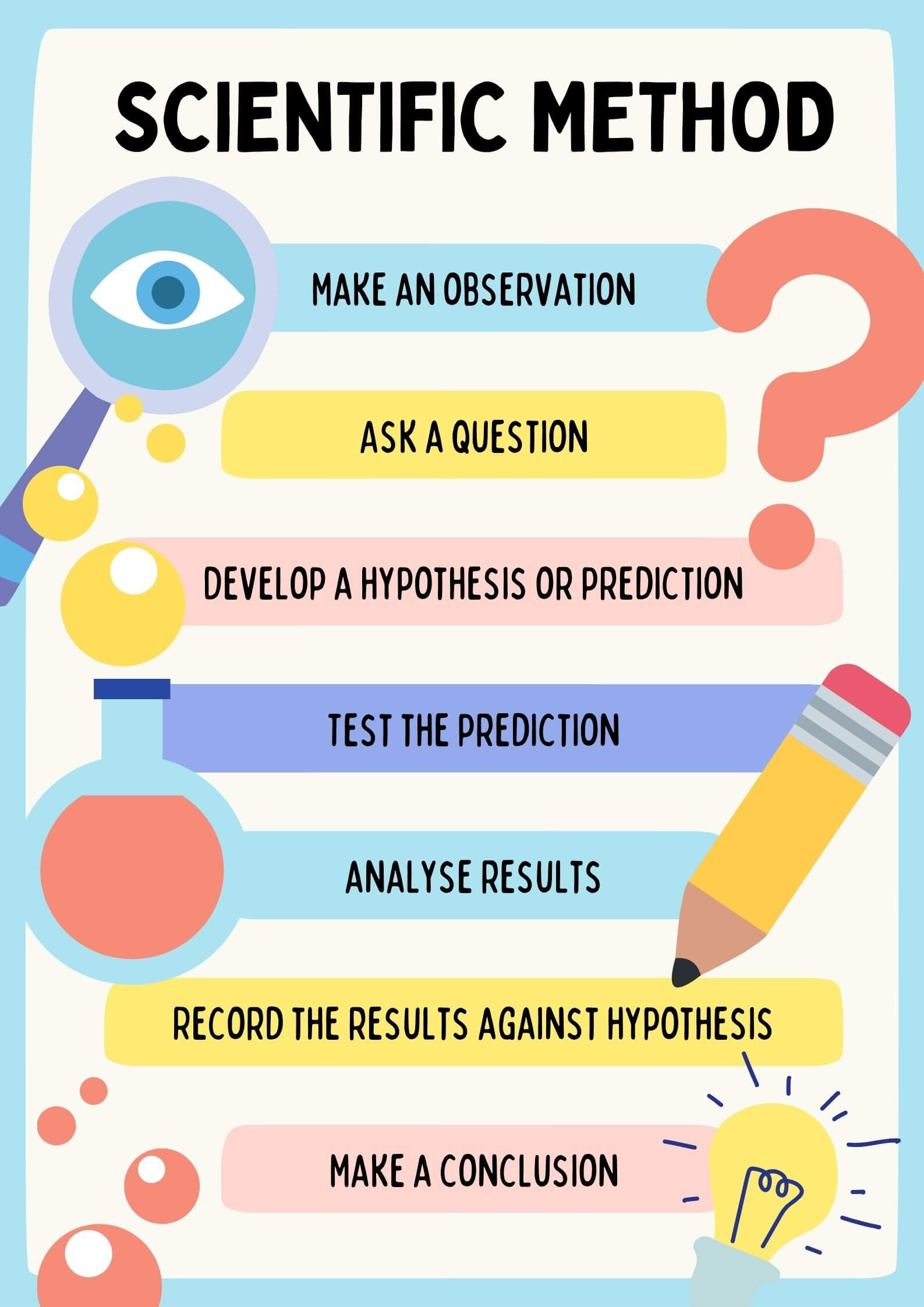

The application of the scientific method to technology begins with the shift from anecdotal decision-making to data-driven strategy. In the tech industry, a scientific investigation follows a structured path: observation, hypothesis formation, experimentation, analysis, and iteration. This cycle is not a one-time event but a continuous loop that defines the lifecycle of modern software and hardware development.

Formulating Hypotheses in Software Engineering

Every major feature update or system architecture change begins with a hypothesis. In software engineering, this might look like: “If we migrate our database from a relational structure to a NoSQL data store, we will reduce latency by 20% under peak load.” This is a testable, measurable statement: it names the change, the metric, the expected effect, and the conditions. The investigation then centers on creating a controlled environment, often a staging server or a sandbox, to test this specific variable while keeping the others (hardware, dataset, traffic pattern) constant. This rigor ensures that developers are not chasing “ghost” improvements but are making changes based on verifiable performance gains.
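As a rough illustration, the latency hypothesis above could be checked with a few lines of Python. This is only a sketch under stated assumptions: the function names are hypothetical, and the choice of the 95th percentile as the latency metric is an assumption, since the hypothesis itself does not say which statistic to use.

```python
from statistics import quantiles

def p95(samples_ms):
    """Return the 95th percentile of a list of latency samples (milliseconds)."""
    # quantiles(n=100) returns 99 cut points; index 94 is the 95th percentile.
    return quantiles(samples_ms, n=100)[94]

def latency_reduction(baseline_ms, candidate_ms):
    """Fractional reduction in p95 latency from the baseline to the candidate."""
    base = p95(baseline_ms)
    cand = p95(candidate_ms)
    return (base - cand) / base

def hypothesis_supported(baseline_ms, candidate_ms, target=0.20):
    """True if the candidate meets the hypothesized reduction (20% by default)."""
    return latency_reduction(baseline_ms, candidate_ms) >= target
```

Both sample sets would need to come from the same controlled load test for the comparison to mean anything; a real investigation would also check whether the difference is larger than run-to-run noise.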

The Role of Empirical Data in Product Design

Technology is no longer built on gut feelings. Modern product design utilizes scientific investigation to understand how humans interact with interfaces. By collecting empirical data through telemetry and user monitoring, tech companies can observe patterns that users themselves might not be able to articulate. Whether it is tracking the millisecond delays in a checkout process or the heatmaps of where a user’s eyes linger on a screen, this data serves as the “raw material” for the investigation, leading to designs that are objectively more intuitive and effective.

Data Science as the Modern Laboratory

If the scientific method is the engine, then Data Science is the laboratory where technological investigation takes place. Today’s tech giants and startups alike rely on data scientists to perform complex investigations into massive datasets to find “the signal within the noise.”

Big Data and the Observational Stage

Scientific investigation always begins with observation. In the tech world, this involves the ingestion of “Big Data.” By observing the vast streams of information generated by IoT devices, social media interactions, or server logs, investigators can identify anomalies or trends. For instance, a cybersecurity firm might observe a minute but consistent increase in outbound traffic from a specific port. This observation triggers a formal investigation to determine if the pattern represents a new form of malware or a benign system update.
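One way to catch a “minute but consistent” increase like the one described above is a one-sided CUSUM (cumulative sum) detector, which accumulates small deviations until they add up to something notable. The sketch below is one common choice rather than a method any particular firm is known to use, and the parameter names `target`, `slack`, and `threshold` are illustrative assumptions.

```python
def cusum_alarm(values, target, slack, threshold):
    """Return the index at which the one-sided CUSUM first exceeds
    `threshold`, or None if it never does.

    target:    expected level of the metric (e.g. normal outbound traffic)
    slack:     deviation above target that is tolerated as ordinary noise
    threshold: accumulated excess that triggers an investigation
    """
    s = 0.0
    for i, v in enumerate(values):
        # Accumulate only the excess above target + slack; never go below zero.
        s = max(0.0, s + (v - target - slack))
        if s > threshold:
            return i
    return None
```

A single large spike and a long run of small excesses can both raise the alarm, which is why this kind of detector suits slow drifts that a simple per-sample threshold would miss.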

Machine Learning: Testing Predictive Models

The development of Machine Learning (ML) models is perhaps the purest form of scientific investigation in modern tech. An ML engineer builds a model (the experiment), trains it on one portion of the data, and then checks its predictions against held-out data the model has never seen. The “investigation” involves tuning hyperparameters, the settings chosen before training (such as learning rate or model depth) rather than the values the model learns, to see which configuration yields the highest accuracy and the lowest error rate on that held-out data. This is a highly iterative process that mirrors traditional experimental physics, where one experimental condition is varied at a time to observe its effect on the final result.
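To make the tuning loop concrete, here is a minimal grid search in plain Python. It is a sketch under stated assumptions: the model is a toy k-nearest-neighbors classifier on one-dimensional data, `k` stands in for the hyperparameter, and all function names are hypothetical.

```python
def knn_predict(train, x, k):
    """Majority label (0 or 1) among the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > k else 0

def accuracy(train, validation, k):
    """Fraction of held-out validation points predicted correctly."""
    hits = sum(knn_predict(train, x, k) == y for x, y in validation)
    return hits / len(validation)

def grid_search(train, validation, candidate_ks):
    """Score every candidate k on the validation set and return the best."""
    scores = {k: accuracy(train, validation, k) for k in candidate_ks}
    best_k = max(scores, key=scores.get)
    return best_k, scores
```

Scoring on a validation set that is separate from the training data is the controlled part of the experiment: it measures how well each configuration generalizes instead of how well it memorizes.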

User Experience (UX) and Behavioral Scientific Research

Technology does not exist in a vacuum; it exists to be used by people. Therefore, scientific investigation in the tech sector must include a deep study of human behavior. This branch of tech research borrows heavily from cognitive psychology and sociology to ensure that technology serves human needs efficiently.

Quantitative vs. Qualitative UX Testing

A robust scientific investigation in UX uses both quantitative and qualitative methods. Quantitative research includes A/B testing, where two versions of a digital product are shown to randomly assigned user groups to see which performs better according to specific metrics (like click-through rates or time-on-task). Qualitative research, on the other hand, involves deep-dive interviews and usability testing to understand the “why” behind the numbers. Combining these two approaches allows tech companies to form a complete scientific picture of the user journey, leading to more “sticky” and user-friendly applications.
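For the quantitative side, a two-proportion z-test is one standard way to judge whether the difference between two variants is larger than chance would explain. The sketch below uses only the standard library; the function name and argument names are hypothetical, and it assumes samples large enough for the normal approximation to hold.

```python
from math import erf, sqrt

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of variants A and B.

    Returns (z, p_value), where p_value is two-sided.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return z, 2 * (1 - cdf)
```

For example, `two_proportion_z_test(100, 1000, 150, 1000)` compares a 10% click-through rate against a 15% one; a small p-value suggests the gap is unlikely to be noise, though it says nothing about why users preferred one version.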

Iterative Prototyping: The “Trial and Error” of Tech

The concept of the “Minimum Viable Product” (MVP) is essentially a scientific experiment. By releasing a basic version of a tool, developers are testing the hypothesis that there is a market need for that specific solution. Each subsequent version of the software is an iteration based on the results of the previous “test.” This scientific approach to product-market fit reduces the financial risk of developing complex technologies that no one actually wants to use.

Cybersecurity and the Scientific Approach to Vulnerability

In the world of digital security, scientific investigation is the primary defense against increasingly sophisticated threats. Security researchers, often referred to as “White Hat” hackers, use investigative techniques to find and patch vulnerabilities before they can be exploited by malicious actors.

Threat Modeling and Hypothesis Testing

Cybersecurity professionals use threat modeling to hypothesize about potential attack vectors. They ask, “If an attacker gained access to this specific API, what lateral movements could they make within our network?” They then perform “penetration testing”—a controlled experiment where they attempt to breach their own systems using the hypothesized methods. The results of these tests provide the scientific evidence needed to harden systems and update security protocols.
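The lateral-movement question above can be framed as graph reachability: treat each system as a node and each permitted connection as an edge, then ask what is reachable from the compromised entry point. The sketch below uses a breadth-first search; the network map and its node names are purely hypothetical, and a real threat model would also account for credentials and privileges.

```python
from collections import deque

def reachable_from(graph, start):
    """Return the set of nodes an attacker could reach from `start`
    by following allowed connections (excluding `start` itself)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(start)
    return seen

# Hypothetical network map: each key can open connections to the listed systems.
network = {
    "public-api": ["app-server"],
    "app-server": ["database", "cache"],
    "database": [],
    "cache": [],
    "hr-system": ["payroll-db"],
}
```

Here `reachable_from(network, "public-api")` yields the app server, database, and cache, while the HR systems stay out of reach. That result is the hypothesis a penetration test would then try to confirm or refute.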

Forensic Analysis: Investigating Post-Breach Data

When a security breach does occur, the scientific investigation shifts to digital forensics. Much like a crime scene investigator, a digital forensic expert examines logs, file timestamps, and memory dumps to reconstruct the sequence of events. This investigation aims to identify the “patient zero” of the infection and understand the methodology of the attacker. This knowledge is then fed back into the development cycle to ensure that the same vulnerability cannot be exploited in the future.
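Reconstructing the sequence of events is, at its simplest, a matter of merging records from different sources into one timeline and finding the earliest suspicious entry. The sketch below assumes a simplified, hypothetical event format (a dict with `time`, `host`, and `suspicious` keys, with ISO 8601 timestamps); real forensic data is far messier and timestamps may themselves be tampered with.

```python
from datetime import datetime

def build_timeline(events):
    """Return events sorted by timestamp, oldest first."""
    return sorted(events, key=lambda e: datetime.fromisoformat(e["time"]))

def first_compromised_host(events):
    """Return the host of the earliest suspicious event, or None if there is none."""
    for event in build_timeline(events):
        if event["suspicious"]:
            return event["host"]
    return None
```

The host returned here is only a candidate for “patient zero”; an investigator would still need to confirm it against memory dumps and other evidence.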

The Ethical Implications of Technological Investigation

As our ability to investigate and manipulate data grows, so too does the need for an ethical framework. Scientific investigation in tech is not just about what can be done, but what should be done, particularly concerning privacy, bias, and the long-term impact of automation.

Balancing Innovation with Algorithmic Transparency

One of the most pressing areas of investigation today is algorithmic bias. When an AI makes a decision, such as who gets a loan or who is flagged by a facial recognition system, that decision reflects patterns in its training data as well as the design choices of the people who built it. Tech companies are now conducting scientific audits of their own algorithms to investigate whether they are inadvertently perpetuating social biases. This requires a high level of transparency and a willingness to dismantle and rebuild systems that fail the ethical “test.”
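A basic audit of this kind often starts by comparing approval rates across groups. The sketch below computes per-group selection rates and their ratio (sometimes called the disparate impact ratio). The function names and the input format are hypothetical, and this is only one of several fairness measures, which can disagree with one another.

```python
def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs.
    Returns the approval rate for each group."""
    totals, approved = {}, {}
    for group, ok in decisions:
        totals[group] = totals.get(group, 0) + 1
        approved[group] = approved.get(group, 0) + (1 if ok else 0)
    return {g: approved[g] / totals[g] for g in totals}

def disparate_impact_ratio(decisions):
    """Lowest group approval rate divided by the highest.
    Returns None if no group was ever approved."""
    rates = selection_rates(decisions)
    highest = max(rates.values())
    if highest == 0:
        return None
    return min(rates.values()) / highest
```

A ratio well below 1.0 signals that one group is approved much less often than another. A commonly cited rule of thumb treats values under 0.8 as a flag for closer review, though a low ratio alone does not establish the cause.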

Future Frontiers: Quantum Computing and Deep Tech Research

Looking forward, the nature of scientific investigation in technology is set to change with the advent of quantum computing. Quantum tech is still at an early stage, where researchers are investigating how to maintain “qubit” stability and reduce decoherence. These deep tech investigations are moving us closer to a world where, for certain classes of problems, we could find solutions in seconds that would take current supercomputers millennia to process. The investigation into quantum mechanics as a computational tool represents the ultimate marriage of theoretical physics and applied technology.

Conclusion

Scientific investigation is the heartbeat of the technology industry. It is the process that turns a raw idea into a functional tool and a functional tool into a global platform. By embracing the rigor of the scientific method—through data analysis, hypothesis testing, and iterative design—the tech world ensures that innovation is not left to chance.

Whether it is a developer debugging a script, a data scientist refining a neural network, or a security expert modeling a threat, they are all participating in a grand scientific endeavor. As we move deeper into the age of AI and quantum discovery, the principles of scientific investigation will remain our most reliable compass, guiding us through the complexities of the digital frontier and ensuring that the technology we build is effective, secure, and ethically sound.
