In the traditional medical landscape, the stethoscope has long been the universal symbol of the physician. For over two centuries, clinicians have relied on their ears to detect the subtle popping sounds known as rales (or crackles) when diagnosing conditions like pneumonia, heart failure, and pulmonary fibrosis. However, as we move deeper into the era of digital transformation, the way we identify and interpret rales is undergoing a radical technological shift.

The integration of Artificial Intelligence (AI), high-fidelity digital sensors, and the Internet of Medical Things (IoMT) is turning the act of listening into a data-driven science. No longer limited by the subjective sensitivity of human hearing, modern diagnostic tech is providing a level of precision that was previously unimaginable.

Decoding the Sound: How AI and Signal Processing Identify Rales

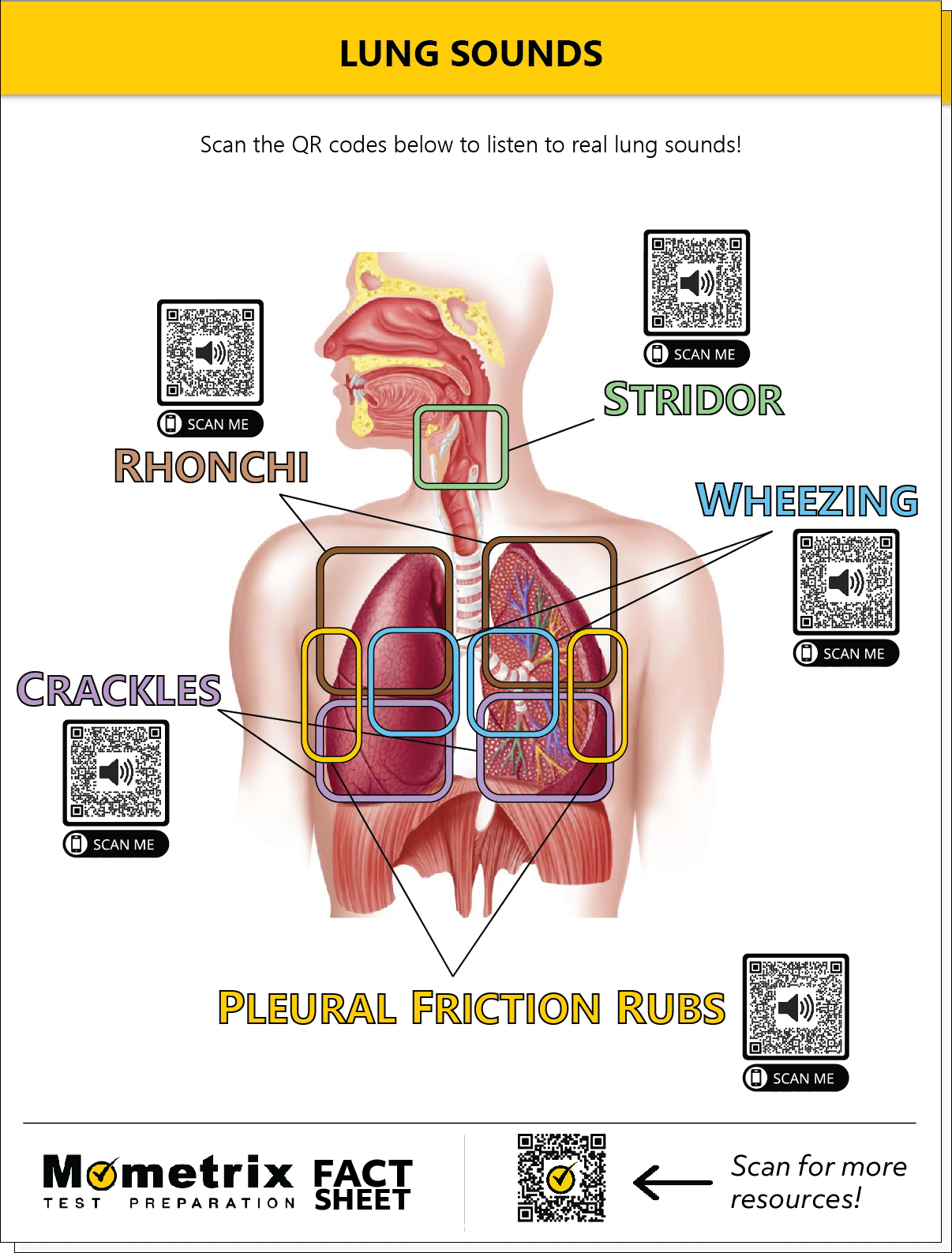

The primary challenge with rales is their subtlety. These are discontinuous, clicking, or rattling sounds heard during inhalation, caused by the snapping open of small airways and alveoli that had been collapsed by fluid or exudate. To a student, they can be difficult to distinguish from rhonchi or wheezes. To a machine, however, they are short bursts with distinct signatures in both the time and frequency domains.

The Transition from Analog to Digital Stethoscopes

The analog stethoscope, while iconic, is fundamentally limited by acoustics. Sound waves lose energy as they travel through the tubing, and ambient noise can easily obscure faint rales. Digital stethoscopes address this with transducers that convert acoustic sound into electronic signals. These signals can be amplified up to 40 times, allowing for the detection of “fine” rales that might be missed by the unaided ear. More importantly, many digital stethoscopes use Active Noise Cancellation (ANC), the same technology found in high-end consumer headphones, to suppress the hum of a busy hospital ward and leave a cleaner respiratory signal.
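To make the amplification and noise-cancellation steps concrete, here is a minimal sketch in plain Python. It assumes a two-microphone setup (a chest-facing `primary` channel and an ambient `reference` channel) and uses a textbook LMS adaptive filter. The function names, tap count, and step size are illustrative assumptions, not the design of any particular stethoscope.

```python
def lms_cancel(primary, reference, taps=8, mu=0.05):
    """Remove the part of `primary` that can be predicted from `reference`.

    primary   -- chest microphone samples (breath sound plus ambient noise)
    reference -- ambient microphone samples (noise only, by assumption)
    Returns the error signal, which approximates the breath sound.
    """
    weights = [0.0] * taps
    history = [0.0] * taps  # most recent reference samples, newest first
    cleaned = []
    for p, r in zip(primary, reference):
        history = [r] + history[:-1]
        noise_estimate = sum(w * h for w, h in zip(weights, history))
        error = p - noise_estimate  # what the reference cannot explain
        # LMS update: nudge each weight in the direction that shrinks the error
        weights = [w + mu * error * h for w, h in zip(weights, history)]
        cleaned.append(error)
    return cleaned


def apply_gain(samples, gain=40.0, limit=1.0):
    """Amplify samples by `gain`, clipping to +/- `limit` to avoid overflow."""
    return [max(-limit, min(limit, s * gain)) for s in samples]
```

In this sketch the filter runs before the gain stage, so that the ambient hum is not amplified along with the faint crackles.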

Machine Learning Algorithms in Respiratory Pattern Recognition

Once a lung sound is digitized, it becomes a data set. This is where Artificial Intelligence takes center stage. Machine learning models, specifically Deep Neural Networks (DNNs), are trained on large databases containing thousands of labeled lung sound recordings. These algorithms can identify the specific “transient” waveforms that characterize rales.

While a human might describe rales as sounding like “Velcro being pulled apart,” an AI identifies them by their duration (usually less than 20 milliseconds), their frequency peaks, and their timing within the respiratory cycle. By automating the classification of these sounds, software platforms can provide a “second opinion” that reduces diagnostic error and standardizes care across different clinical settings.
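The duration criterion described above can be illustrated without any machine learning. The following is a rough rule-based sketch, not a trained model: it flags bursts that rise well above the background level and last no longer than 20 milliseconds. The function name, the threshold ratio, and the merge gap are all assumptions chosen for illustration.

```python
def find_crackle_candidates(samples, sample_rate, max_ms=20.0,
                            ratio=5.0, merge_ms=3.0):
    """Return a list of (start_seconds, duration_ms) for short loud bursts.

    A burst is a run of samples whose magnitude exceeds `ratio` times the
    median magnitude. Dips shorter than `merge_ms` (for example at zero
    crossings) do not end a burst. Bursts longer than `max_ms` are dropped,
    since sustained sounds such as wheezes are not crackles.
    """
    magnitudes = [abs(s) for s in samples]
    baseline = sorted(magnitudes)[len(magnitudes) // 2]  # median level
    threshold = ratio * max(baseline, 1e-9)
    gap = int(merge_ms * sample_rate / 1000)

    bursts = []
    start = last = None
    for i, m in enumerate(magnitudes):
        if m >= threshold:
            if start is None:
                start = i
            last = i
        elif start is not None and i - last > gap:
            bursts.append((start, last))
            start = None
    if start is not None:
        bursts.append((start, last))

    candidates = []
    for first, final in bursts:
        duration_ms = (final - first + 1) * 1000.0 / sample_rate
        if duration_ms <= max_ms:
            candidates.append((first / sample_rate, duration_ms))
    return candidates
```

A real classifier would also weigh the frequency content and the timing within the breath cycle; this sketch covers only the duration test.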

Smart Stethoscopes and the Internet of Medical Things (IoMT)

The hardware used to detect rales is no longer a standalone tool; it is now a node in a vast digital network. The rise of Smart Stethoscopes has bridged the gap between physical examination and digital health records, creating a seamless flow of diagnostic information.

Real-time Data Visualization and Waveform Analysis

Modern diagnostic tech allows clinicians to do more than just listen; they can see the sound. Through Bluetooth-connected applications, the audio from a chest exam is converted into a phonopneumogram (a waveform of the breath sounds over time) or a spectrogram in real time.

When a clinician is looking for rales, the visual representation shows distinct spikes in the waveform. This visualization serves two tech-forward purposes: it acts as a teaching tool for medical residents and provides a permanent digital record of the patient’s lung status. If a patient with congestive heart failure shows a “crackling” waveform on Monday, and a clearer waveform on Wednesday after treatment, the tech provides objective, visual proof of improvement that an audio memory simply cannot match.
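For readers curious how audio becomes a spectrogram, here is a minimal sketch using only the Python standard library. It slides a Hann window along the recording and takes a naive discrete Fourier transform of each frame. The function names, window length, and hop size are assumptions for illustration; a production app would more likely use an optimized FFT library.

```python
import cmath
import math


def spectrogram(samples, window=64, hop=32):
    """Return a list of frames; each frame is a list of bin magnitudes.

    Frame f covers samples[f*hop : f*hop + window]. Bin k corresponds to
    the frequency k * sample_rate / window (see bin_to_hz).
    """
    hann = [0.5 - 0.5 * math.cos(2 * math.pi * n / (window - 1))
            for n in range(window)]
    frames = []
    for start in range(0, len(samples) - window + 1, hop):
        chunk = [samples[start + n] * hann[n] for n in range(window)]
        row = []
        for k in range(window // 2 + 1):  # non-negative frequencies only
            acc = sum(chunk[n] * cmath.exp(-2j * math.pi * k * n / window)
                      for n in range(window))
            row.append(abs(acc))
        frames.append(row)
    return frames


def bin_to_hz(k, sample_rate, window):
    """Convert a spectrogram bin index to its center frequency in hertz."""
    return k * sample_rate / window
```

Plotting the frames as columns of an image, with brightness proportional to magnitude, gives the familiar spectrogram view in which a crackle appears as a narrow vertical streak.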

Remote Monitoring: Bringing Diagnostic Accuracy to Telehealth

Perhaps the most significant tech trend in respiratory care is the expansion of telehealth. Historically, a “lung check” required an in-person visit. Today, consumer-grade digital stethoscopes allow patients to record their own lung sounds at home and upload them to a secure cloud.

The software then analyzes the recording for the presence of rales and flags abnormal results for a physician’s review. This use of IoMT is crucial for chronic disease management, particularly for patients with COPD or interstitial lung disease, where the sudden appearance of rales can signal an exacerbation that requires immediate intervention. By moving the diagnostic tech into the home, we are witnessing a shift from reactive to proactive healthcare.

Software Solutions for Respiratory Health Tracking

Beyond the hardware, the software ecosystem surrounding lung sound analysis is becoming increasingly sophisticated. Software-as-a-Service (SaaS) models are entering the clinical space, offering hospitals powerful tools for longitudinal patient tracking.

Mobile Apps for Sound Classification

There is a growing market for mobile applications designed specifically for sound classification. These apps use the built-in microphones of smartphones (often augmented by a plug-in sensor) to perform basic auscultation. While not yet a replacement for clinical-grade hardware, these apps utilize edge computing—processing data locally on the device—to provide instant feedback on respiratory health. For researchers, these apps serve as a gateway to crowdsourcing data, helping to build even more robust AI models for detecting rare lung pathologies.

Cloud-Based Libraries and Collaborative Diagnosis

Digital auscultation allows for “asynchronous consultation.” When a primary care physician hears an ambiguous sound that might be rales, they can instantly share the digital audio file with a pulmonologist halfway across the world.

Cloud-based platforms host libraries of these sounds, categorized by pathology, age group, and comorbidities. This collaborative tech environment ensures that a patient in a rural clinic has access to the same diagnostic expertise as a patient in a major urban teaching hospital. The “democratization of sound” is a direct result of cloud computing and high-speed data transmission.

The Future of Clinical Tech: Predictive Analytics and Wearable Sensors

As we look toward the next decade, the technology for identifying rales will move away from intermittent checks and toward continuous monitoring. The goal is to move from “What are rales?” to “When will rales appear?”

Continuous Monitoring via Wearable Acoustic Sensors

We are currently seeing the emergence of wearable “smart patches.” These are small, adhesive sensors equipped with MEMS (Micro-Electro-Mechanical Systems) microphones. They can be worn on a patient’s back or chest for 24 hours a day, continuously monitoring for the onset of rales.

For a patient recovering from surgery or a high-risk cardiac patient, these wearables act as an early warning system. If the sensor detects the specific acoustic signature of fluid entering the lungs, it can trigger an automated alert to the medical team hours—or even days—before the patient becomes symptomatic. This is the pinnacle of predictive maintenance for the human body.
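The early warning logic can be sketched as a sliding window over recent breaths. The class below is a hypothetical illustration: it assumes some upstream detector already reports a crackle count per breath, and the window size and alert threshold are arbitrary example values, not clinically validated settings.

```python
from collections import deque


class CrackleAlertMonitor:
    """Raise an alert when crackles appear in most of the recent breaths."""

    def __init__(self, window_breaths=10, min_positive_breaths=6):
        self.window_breaths = window_breaths
        self.min_positive_breaths = min_positive_breaths
        # Each entry records whether a breath contained at least one crackle.
        self.recent = deque(maxlen=window_breaths)

    def update(self, crackles_in_breath):
        """Record one breath and return True if an alert should fire."""
        self.recent.append(crackles_in_breath > 0)
        window_full = len(self.recent) == self.window_breaths
        return window_full and sum(self.recent) >= self.min_positive_breaths
```

Requiring a full window before alerting is a deliberate choice in this sketch: it trades a slower first alert for fewer false alarms from a single noisy breath.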

Enhancing Diagnostic Reliability through Big Data

The ultimate power of this technology lies in the aggregation of data. As thousands of digital stethoscopes and wearables collect data on rales across global populations, we are building a “Big Data” repository of respiratory health.

This data allows developers to refine AI models to account for variables like chest wall thickness, age, and even environmental background noise. The result is a diagnostic tool that becomes smarter with every use. In the near future, the question of “what are rales” will be answered not just by a doctor’s experience, but by a global database of billions of acoustic events, analyzed in milliseconds to provide a diagnosis with nearly 100% accuracy.

Conclusion: The New Sound of Medicine

The journey from the wooden tube of René Laennec to the AI-powered sensors of today is a testament to the power of technology to enhance human capability. While the fundamental nature of rales as a clinical sign hasn’t changed, our ability to detect, analyze, and act upon those sounds has been revolutionized.

By leveraging digital signal processing, AI-driven diagnostics, and the IoMT, the medical technology sector is ensuring that respiratory conditions are caught earlier, treated more effectively, and monitored with unprecedented precision. For the modern healthcare provider, the stethoscope is no longer just a listening device—it is a powerful data entry point into a sophisticated digital health ecosystem. As software continues to eat the world, it is now learning to listen to the very breath of life, turning the subtle “pop” of a rale into a clear, actionable signal for recovery.
