In the traditional sense, scientific laws are descriptive accounts of how the universe behaves under specific conditions. They are the constants—like gravity or thermodynamics—that provide a framework for understanding the physical world. However, as we have transitioned into the information age, a new subset of “laws” has emerged within the realm of technology. These are not always immutable physical constants, but rather empirical observations and algorithmic certainties that govern the evolution of hardware, software, and artificial intelligence.

Understanding what laws are in the context of digital science is essential for anyone navigating the current tech landscape. These principles dictate how fast our processors become, how valuable our networks grow, and how complex our software systems can get before they collapse under their own weight. This exploration delves into the foundational laws of technology that mirror scientific laws in their reliability and impact.

The Nature of Heuristic Laws in Technological Science

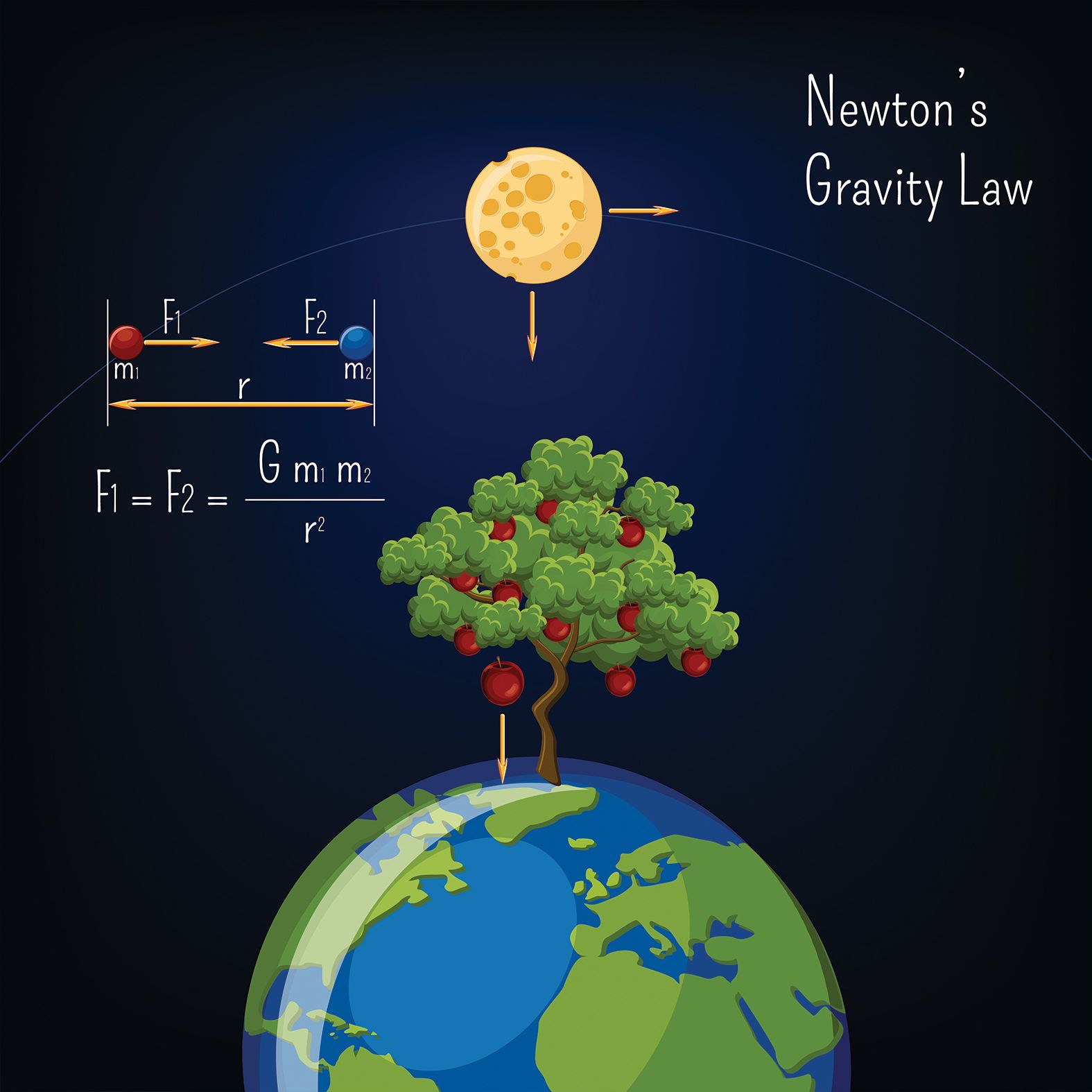

In physics, a law like Newton’s Second Law of Motion ($F=ma$) describes a universal truth. In technology, laws are often “heuristic”—meaning they are based on empirical observations that have held true over decades of rapid innovation. While a physical law describes what must happen, a technological law often describes what is happening as a result of engineering efficiency and economic incentives.

The Transition from Physical to Digital Constants

Technology is the application of scientific knowledge for practical purposes. Therefore, the laws of technology are often rooted in the physics of semiconductors and the mathematics of information theory. For instance, the way electrons move through silicon determines the limits of processing speed. As we shrink transistors to the atomic scale, we move from the classical laws of physics into the realm of quantum mechanics, which creates new “laws” for quantum computing.

Why Tech Laws Matter for Developers and Engineers

For software developers and hardware engineers, these laws mark out the practical boundaries of possibility and act as a roadmap for innovation. If an engineer expects data storage costs to keep dropping along a roughly predictable curve, they can design software today that will be affordable to run tomorrow. Understanding these principles helps tech leaders make high-stakes decisions about infrastructure, digital security, and product scalability on the basis of quantitative estimates rather than guesswork.

Scaling Laws and the Evolution of Computing Hardware

The most famous “laws” in science and technology are those that describe growth. These laws have dictated the pace of the digital revolution, turning room-sized computers into smartphones that fit in our pockets.

Moore’s Law: The Engine of the Silicon Age

First stated by Gordon Moore in 1965 and revised by him in 1975, Moore’s Law is the observation that the number of transistors on a microchip doubles approximately every two years. (Moore’s original 1965 estimate was a doubling every year.) A commonly cited corollary is that the cost per transistor falls as density rises. Although it is more of an industrial observation than a law of nature, it functioned as a self-fulfilling prophecy for the tech industry for over half a century.

This law is the reason why software has become more powerful and complex. As hardware became exponentially faster, developers were freed from the constraints of limited memory and processing power, leading to the rise of high-definition graphics, real-time data processing, and complex AI models. Today, as we reach the physical limits of silicon, Moore’s Law is evolving into new forms, such as 3D chip stacking and specialized AI accelerators.
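The doubling described above can be turned into a rough projection. The following is a minimal sketch, assuming a fixed two-year doubling period; the function name `projected_transistors` and the starting count are hypothetical and chosen only for illustration.

```python
def projected_transistors(initial_count: float, years: float,
                          doubling_period: float = 2.0) -> float:
    """Project a transistor count forward, assuming it doubles once
    every `doubling_period` years (an idealized reading of Moore's Law)."""
    return initial_count * 2 ** (years / doubling_period)


if __name__ == "__main__":
    start = 1_000_000  # hypothetical starting transistor count
    for years in (0, 2, 10, 20):
        print(years, round(projected_transistors(start, years)))
```

Under this assumption, twenty years corresponds to ten doublings, a factor of 1,024. Real chips deviate from the idealized curve, so the output is best read as an order-of-magnitude guide.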

Koomey’s Law and the Science of Energy Efficiency

While Moore’s Law focuses on transistor density, Koomey’s Law focuses on efficiency. It observes that the number of computations per joule of energy has historically doubled roughly every 1.5 years (Koomey’s original estimate was about 1.57 years), which is equivalent to the energy needed per computation halving over the same period. Later analyses suggest the pace has slowed since around 2000. This trend is a large part of why mobile devices can stay powered for a full day despite their immense processing demands. In the world of “Green Tech” and sustainable software engineering, Koomey’s Law is a widely cited benchmark. It informs how data centers are built and how mobile hardware is designed so that the digital revolution doesn’t outpace our planet’s energy capacity.
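The efficiency trend can be sketched the same way as a halving curve. This is an illustrative model only, assuming the 1.5-year halving period quoted above holds constant; `energy_per_operation` and the starting energy figure are hypothetical.

```python
def energy_per_operation(initial_joules: float, years: float,
                         halving_period: float = 1.5) -> float:
    """Energy needed per computation after `years`, assuming it halves
    once every `halving_period` years (an idealized Koomey-style trend)."""
    return initial_joules * 0.5 ** (years / halving_period)


if __name__ == "__main__":
    start = 1e-9  # hypothetical energy per operation, in joules
    for years in (0, 1.5, 3, 15):
        print(years, energy_per_operation(start, years))
```

Passing a longer `halving_period` shows how sensitive long-range projections are to the assumed pace.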

Network Laws and the Science of Connectivity

Technology is rarely about a single machine; it is about how machines interact. The “laws” governing networks are perhaps the most influential in the modern era of social media, cloud computing, and the Internet of Things (IoT).

Metcalfe’s Law: The Value of the Connected User

Metcalfe’s Law states that the value of a telecommunications network is proportional to the square of the number of connected users of the system ($n^2$). It is a heuristic rather than a proven theorem, but it helps explain why platforms like WhatsApp, LinkedIn, or Ethereum become disproportionately more valuable as their user base grows: the growth is quadratic, not merely linear.

From a tech perspective, this law explains “network effects.” A single phone is useless; two phones are slightly useful; a billion phones create a global infrastructure. This law guides the growth strategies of almost every major software platform today, emphasizing that connectivity is the ultimate multiplier of technological value.
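The $n^2$ relationship comes from counting the possible connections between users. Below is a minimal sketch; the function names and the proportionality constant `k` are assumptions made for illustration, not part of any standard formulation.

```python
def pairwise_links(n: int) -> int:
    """Number of distinct user-to-user connections among n users."""
    return n * (n - 1) // 2


def metcalfe_value(n: int, k: float = 1.0) -> float:
    """Network value under Metcalfe's Law, proportional to n squared.
    `k` is a hypothetical constant converting connections into value."""
    return k * n * n


if __name__ == "__main__":
    for users in (1, 2, 10, 100):
        print(users, pairwise_links(users), metcalfe_value(users))
```

One phone yields zero links and two phones yield one, which matches the intuition in the paragraph above. Doubling the user count quadruples the modeled value.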

Reed’s Law and the Power of Digital Communities

Going a step further than Metcalfe, David Reed proposed that the utility of large networks can scale even faster—exponentially—if the network allows for the formation of sub-groups or communities. While Metcalfe’s Law looks at individual connections, Reed’s Law ($2^n$) looks at the potential for group formation. This is the scientific basis for the modern social web and collaborative tools like Slack or GitHub. It suggests that the true “tech science” of the future lies not just in connecting individuals, but in facilitating complex, overlapping digital ecosystems.
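To see where the $2^n$ term comes from, one can count the subgroups that $n$ members could form. The sketch below assumes a "group" means any subset of at least two members; `possible_groups` is a hypothetical helper name.

```python
def possible_groups(n: int) -> int:
    """Count subsets of n members that contain at least two people:
    all 2**n subsets, minus the empty set and the n single-member sets."""
    return 2 ** n - n - 1


if __name__ == "__main__":
    for members in (2, 3, 10, 30):
        print(members, possible_groups(members))
```

With three members there are four possible groups (three pairs and one trio), while thirty members already allow more than a billion, which is why the $2^n$ term dominates for large networks.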

Algorithmic Laws and the Science of Software Management

Software development is often seen as an art, but it is governed by rigorous scientific laws that determine how systems perform and how teams function.

Amdahl’s Law and the Limits of Parallel Processing

In the quest for faster technology, we often try to do many things at once through parallel processing. Amdahl’s Law is a formula used to find the maximum theoretical improvement of an entire system when only a part of the system is improved. It provides a sobering scientific reality: the speedup of a program using multiple processors is limited by the time needed for the sequential fraction of the program.

For software architects, Amdahl’s Law is a warning against “throwing more hardware” at a problem. It teaches that shrinking the sequential portion of a program often matters more than adding processors, because the sequential portion sets a hard ceiling on the achievable speedup. This law is foundational in the design of high-frequency trading platforms and large-scale cloud architectures where every microsecond of latency counts.
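The limit can be made concrete with the usual statement of the formula, $S(N) = 1 / ((1 - p) + p/N)$, where $p$ is the fraction of the work that can run in parallel and $N$ is the number of processors. The following is a minimal sketch; the function name and the sample values are illustrative.

```python
def amdahl_speedup(parallel_fraction: float, processors: int) -> float:
    """Theoretical speedup when `parallel_fraction` of the work is spread
    across `processors` and the remainder runs sequentially."""
    if not 0.0 <= parallel_fraction <= 1.0:
        raise ValueError("parallel_fraction must be between 0 and 1")
    if processors < 1:
        raise ValueError("processors must be at least 1")
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / processors)


if __name__ == "__main__":
    # With 90% of the work parallelizable, speedup approaches but never reaches 10x.
    for n in (1, 2, 8, 64, 1024):
        print(n, round(amdahl_speedup(0.9, n), 2))
```

However many processors are added, the speedup stays below $1 / (1 - p)$, so a program that is 10% sequential can never run more than ten times faster.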

Brooks’s Law: The Human Element in Software Science

In his seminal work The Mythical Man-Month, Fred Brooks proposed a law that remains a cornerstone of software engineering: “Adding manpower to a late software project makes it later.” This law highlights the cost of “communication overhead.” New team members need time to ramp up, and as more people are added to a tech project, the number of potential communication channels grows quadratically, as $n(n-1)/2$ for a team of $n$ people, so coordination consumes a growing share of the available time. This principle is often cited in support of the small, autonomous teams favored by Agile methodologies such as Scrum.
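A quick calculation shows how fast the coordination burden grows. This is a rough sketch that assumes every pair of team members forms one potential communication channel; both function names are hypothetical.

```python
def communication_channels(team_size: int) -> int:
    """Potential one-to-one communication channels in a team."""
    return team_size * (team_size - 1) // 2


def added_channels(current_size: int, new_hires: int) -> int:
    """Extra channels created when `new_hires` people join the team."""
    return (communication_channels(current_size + new_hires)
            - communication_channels(current_size))


if __name__ == "__main__":
    print(communication_channels(5))  # channels in a five-person team
    print(added_channels(5, 3))       # extra channels after adding three people
```

Growing a team from five to eight people raises the channel count from 10 to 28, so fewer than twice as many people have to maintain nearly three times as many relationships.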

The Future of Technology Laws in the Era of AI

As we enter the age of Artificial Intelligence, new scientific laws are being discovered that describe how large language models (LLMs) and neural networks behave.

Neural Scaling Laws: Predicting AI Performance

One of the most significant recent discoveries in AI research is the existence of “scaling laws.” Researchers have found that the performance of an AI model (its “loss” or error rate) follows a predictable power-law relationship with three variables: the number of parameters in the model, the amount of compute used for training, and the size of the dataset.

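These laws are empirical fits rather than derivations from first principles, so they are not as rigorous as the laws of thermodynamics, but they have held across several orders of magnitude of scale. They allow companies like OpenAI or Google to estimate the loss a future model is likely to reach before they begin the months-long process of training it, although loss does not translate directly into specific capabilities. This “science of scaling” is currently one of the most influential ideas in the tech industry, as it shapes where billions of dollars in R&D are allocated.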
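A power law appears as a straight line on a log-log plot, which is what makes extrapolation possible. The sketch below fits $L(N) = a \cdot N^{-\alpha}$ to a handful of points and extrapolates to a larger model. The data are synthetic and generated from made-up constants, and the function names are hypothetical; this is not a reproduction of any published fit.

```python
import math


def fit_power_law(xs, ys):
    """Fit y = a * x**(-alpha) by ordinary least squares on (log x, log y).
    Returns the pair (a, alpha)."""
    log_x = [math.log(x) for x in xs]
    log_y = [math.log(y) for y in ys]
    n = len(log_x)
    mean_x = sum(log_x) / n
    mean_y = sum(log_y) / n
    slope = (sum((u - mean_x) * (v - mean_y) for u, v in zip(log_x, log_y))
             / sum((u - mean_x) ** 2 for u in log_x))
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


def predict_loss(a: float, alpha: float, x: float) -> float:
    """Evaluate the fitted power law at scale x."""
    return a * x ** (-alpha)


if __name__ == "__main__":
    # Synthetic "parameter count vs. loss" points (illustrative values only).
    params = [1e6, 1e7, 1e8, 1e9]
    losses = [5.0 * p ** -0.1 for p in params]
    a, alpha = fit_power_law(params, losses)
    print(a, alpha, predict_loss(a, alpha, 1e10))
```

Real training runs are noisy and the fitted exponent depends on the data and architecture, so extrapolations of this kind are estimates with error bars rather than guarantees.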

The Role of Digital Security as a Scientific Constraint

Finally, we must consider the laws of digital security and cryptography. Much of the security of the modern internet rests on the presumed mathematical difficulty of certain problems, such as factoring the product of two large prime numbers. This is an assumption of computational complexity rather than a proven law: no efficient classical algorithm is known, but none has been ruled out. Shor’s algorithm shows that a sufficiently large quantum computer could factor such numbers efficiently, which would make widely used public-key encryption methods such as RSA obsolete. This necessitates the development of Post-Quantum Cryptography, a new field driven by the principle that technology must evolve its defenses at least as fast as its threats.
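The asymmetry behind this kind of cryptography can be seen in miniature: multiplying two primes is a single step, while recovering them by brute force takes work that grows with the size of the factors. The following is a toy sketch using naive trial division and deliberately tiny primes. Real systems use primes hundreds of digits long, and real attacks use far better algorithms than this one.

```python
def smallest_factor(n: int) -> int:
    """Return the smallest prime factor of n using trial division.
    The loop runs on the order of sqrt(n) times in the worst case."""
    if n < 2:
        raise ValueError("n must be at least 2")
    if n % 2 == 0:
        return 2
    divisor = 3
    while divisor * divisor <= n:
        if n % divisor == 0:
            return divisor
        divisor += 2
    return n  # no divisor found, so n itself is prime


if __name__ == "__main__":
    p, q = 1009, 1013          # two small primes, for illustration only
    modulus = p * q            # the easy direction: one multiplication
    recovered = smallest_factor(modulus)  # the hard direction: a search
    print(modulus, recovered, modulus // recovered)
```

Each extra digit in the primes multiplies the trial-division search by roughly ten, which gives a feel for why large enough keys are considered out of reach for classical brute force.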

In conclusion, “laws in science” within the technology sector are the invisible pillars that support our digital world. From the transistor-doubling cadence of Moore’s Law to the group-forming power of Reed’s Law, these principles provide the predictability and structure required for innovation. By understanding these laws, we gain a deeper insight into why technology evolves the way it does and where it is likely to take us next.
