For decades, the digital world operated under a relatively simple social contract: while the internet contained misinformation, the tools required to convincingly fake reality were restricted to Hollywood studios and state-level intelligence agencies. Today, that contract has been shredded. We have entered an era where “What a Hoax” is no longer just a reaction to a suspicious headline, but a necessary baseline for interacting with any digital medium. From deepfake audio that can drain corporate bank accounts to the “Dead Internet Theory” suggesting a web populated primarily by bots, the technological landscape is currently defined by a crisis of authenticity.

The Rise of Synthetic Reality: Understanding the Deepfake Phenomenon

The most visceral manifestation of the modern digital hoax is the deepfake. While the term was once relegated to niche forums, the underlying technology, including Generative Adversarial Networks (GANs) and, more recently, diffusion models, has put hyper-realistic video and audio synthesis within reach of almost anyone. This is no longer about poorly photoshopped images; it is about the wholesale synthesis of human identity.

The Technology Behind the Illusion: GANs and Diffusion Models

To understand why these hoaxes are so effective, one must understand the “adversarial” nature of their creation. A GAN consists of two neural networks: the Generator and the Discriminator. The Generator creates an image or audio clip, while the Discriminator attempts to determine if it is real or fake. They are pitted against each other in a constant loop. As the Discriminator gets better at spotting flaws, the Generator gets better at hiding them.
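The tug-of-war between the two networks can be made concrete by looking at the losses each side tries to minimize. What follows is a minimal sketch, not a full training loop: it assumes the Discriminator outputs a probability that a sample is real, uses the common binary cross-entropy formulation, and feeds in made-up scores (`real_scores`, `early_fakes`, `late_fakes` are hypothetical values chosen for illustration).

```python
import math

def discriminator_loss(d_real, d_fake):
    """Cross-entropy loss: low when real samples score high and fakes score low."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

def generator_loss(d_fake):
    """Non-saturating generator loss: low when fakes are scored as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)

# Hypothetical Discriminator outputs: probability that each sample is real.
real_scores = [0.9, 0.8, 0.95]
early_fakes = [0.1, 0.2, 0.05]  # early in training, fakes are easily caught
late_fakes = [0.6, 0.7, 0.55]   # later, fakes fool the Discriminator more often

print("generator loss:", generator_loss(early_fakes), "->", generator_loss(late_fakes))
print("discriminator loss:",
      discriminator_loss(real_scores, early_fakes), "->",
      discriminator_loss(real_scores, late_fakes))
```

As the fakes improve, the Generator's loss falls while the Discriminator's loss rises, which is the pressure that pushes the Discriminator to sharpen its judgment in the next round.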

In recent years, Diffusion Models have furthered this capability, allowing for the generation of high-fidelity imagery from simple text prompts. The speed at which these models iterate means that the “uncanny valley”—that slight sense of wrongness in digital recreations—is shrinking rapidly. When a digital entity looks, moves, and sounds like a specific human being, the traditional human “hoax detector” fails.

From Entertainment to Exploitation: The Evolution of Manipulation

Initially, synthetic media was viewed through the lens of entertainment—bringing deceased actors back to the screen or creating viral parodies. However, the pivot toward “hoax-as-a-service” has created significant security vulnerabilities. “Vishing” (voice phishing) has seen a massive upgrade. Attackers now use AI to clone the voice of a CEO or a family member to authorize fraudulent wire transfers or extract sensitive data. These are not just pranks; they are sophisticated technological heists that leverage the human brain’s inherent trust in familiar voices.

The “Dead Internet Theory” and the Automation of Engagement

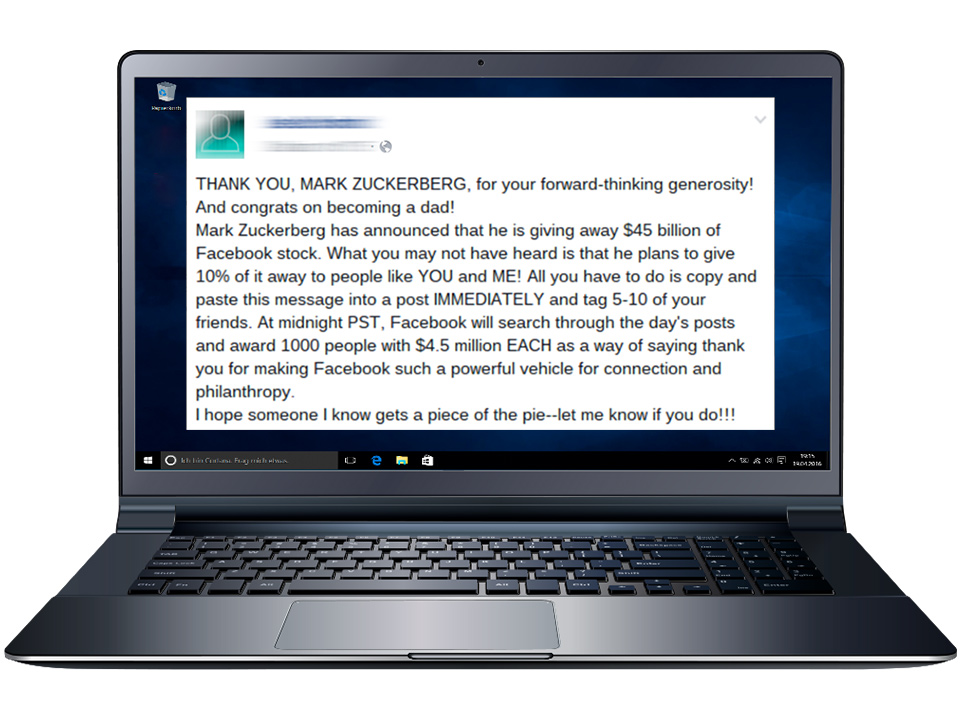

Beyond individual deceptions lies a broader, systemic hoax: the possibility that the social internet we interact with daily is largely artificial. The “Dead Internet Theory” posits that the majority of internet traffic, content, and engagement is no longer generated by humans, but by bots designed to manipulate algorithms, sell products, or sway public opinion.

Bot Farms and Algorithmic Echo Chambers

We often think of bots as clumsy scripts that post repetitive spam. However, modern Large Language Models (LLMs) have enabled the creation of bots that can engage in nuanced debate, write convincing product reviews, and maintain long-term digital personas. “Hoax” in this context refers to the illusion of popularity or consensus.

When a topic trends on social media, it is often impossible for a standard user to discern if the momentum is organic or the result of a coordinated bot swarm. This “artificial engagement” creates a feedback loop; algorithms see the bot activity, assume the topic is valuable, and then push it to actual human users. The result is a digital environment where human perception is steered by non-human actors, making the entire experience of “public opinion” a potential hoax.
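The feedback loop described here can be illustrated with a toy simulation. This is a deliberately simplified sketch, not a model of any real platform: the function name `simulate_trend` and the parameters `reach_per_engagement` and `human_engage_rate` are assumptions invented for the example, and the numbers are arbitrary.

```python
def simulate_trend(bot_posts_per_round, rounds=5,
                   reach_per_engagement=10, human_engage_rate=0.05):
    """Return human engagement per round for a topic seeded by bot activity."""
    human_engagement = []
    humans_last_round = 0.0
    for _ in range(rounds):
        # The ranking system cannot tell bot signals from human ones, so it sums both.
        total_signal = bot_posts_per_round + humans_last_round
        # Exposure to real users scales with the engagement the algorithm observed.
        humans_reached = total_signal * reach_per_engagement
        # A fixed fraction of the humans who see the topic interact with it.
        humans_last_round = humans_reached * human_engage_rate
        human_engagement.append(humans_last_round)
    return human_engagement

print("no bots: ", simulate_trend(0))
print("100 bots:", simulate_trend(100))
```

Under these assumptions, a topic with no bot activity never gains human engagement, while the same topic seeded by bots draws a growing number of real users each round, even though nothing about the topic itself has changed.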

The Impact on Human Connection and Discourse

The technological saturation of the web with automated content has led to a “degradation of the commons.” When users suspect that the person they are arguing with or the review they are reading is a bot, trust evaporates. This skepticism, while protective, also isolates users. The irony of the most connected era in human history is that our primary tools for connection—social platforms—are becoming minefields of automated deception, leading many to retreat from digital discourse altogether.

Vaporware and the High-Tech Hype Cycle

In the tech industry, the term “hoax” frequently applies to the products themselves. The pressure to secure venture capital and dominate market headlines has led to a resurgence of “Vaporware”—software or hardware that is heavily promoted but never actually exists or functions as promised.

Overpromising as a Business Model

The Silicon Valley ethos of “move fast and break things” has, in some sectors, morphed into “fake it until you make it.” We see this most clearly in the race for Autonomous Driving (AD) and Artificial General Intelligence (AGI). Companies often release demo videos that are meticulously edited or performed in “sandbox” environments that do not reflect real-world capabilities.

When a company showcases a humanoid robot performing complex tasks, only for it to be revealed later that the robot was being remotely operated by a human, it crosses the line from marketing to a technological hoax. This creates a distorted market where companies delivering incremental, honest progress are overshadowed by those selling impossible dreams.

Case Studies in Technological Exaggeration

The history of tech is littered with high-profile hoaxes that cost investors billions. From blood-testing devices that couldn’t perform the promised tests to “AI-powered” apps that actually utilized low-wage human workers behind the scenes to perform data entry, these examples highlight a systemic issue. The complexity of modern software makes it easy to hide the “man behind the curtain.” For the average consumer or investor, the black box of proprietary code is indistinguishable from magic, making them highly susceptible to well-funded deceptions.

Cybersecurity in a Post-Truth World

As the tools for creating hoaxes become more accessible, the burden of defense shifts to the individual and the enterprise. We are moving toward a “Zero Trust” model, not just for network security, but for information consumption.

Protecting Personal and Corporate Identity

In an era of deepfakes, authentication that leans on face or voice biometrics is no longer sufficient on its own. If an attacker can spoof your face and voice, they can bypass many biometric security measures. The tech industry is currently scrambling to develop “liveness detection” technologies: systems that can distinguish between a 2D/3D digital projection and a living, breathing human. This involves analyzing micro-expressions, subtle skin-color changes caused by blood flow (remote photoplethysmography), and ambient light reflections that AI currently struggles to replicate.
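To give a feel for the blood-flow signal, here is a rough sketch of one ingredient of such a check: looking for a periodic component in a plausible heart-rate range. It assumes a hypothetical upstream step has already reduced each video frame to the mean green-channel brightness of the face region; the function name `dominant_pulse_bpm`, the 0.7 to 4 Hz band, and the synthetic signals are all illustrative assumptions. A production liveness system would combine many more cues.

```python
import math

def dominant_pulse_bpm(green_means, fps, low_hz=0.7, high_hz=4.0):
    """Estimate the strongest periodic component within a heart-rate band."""
    n = len(green_means)
    mean = sum(green_means) / n
    centered = [g - mean for g in green_means]  # remove the constant brightness level
    best_freq, best_power = None, 0.0
    for k in range(1, n // 2):
        freq = k * fps / n  # frequency of the k-th DFT bin in Hz
        if not (low_hz <= freq <= high_hz):
            continue
        re = sum(c * math.cos(2 * math.pi * k * i / n) for i, c in enumerate(centered))
        im = sum(c * math.sin(2 * math.pi * k * i / n) for i, c in enumerate(centered))
        power = re * re + im * im
        if power > best_power:
            best_freq, best_power = freq, power
    return None if best_freq is None else best_freq * 60.0

fps = 30.0
# Synthetic "live" face: a faint 1.2 Hz brightness ripple over 10 seconds of video.
live_signal = [100.0 + 0.5 * math.sin(2 * math.pi * 1.2 * i / fps) for i in range(300)]
# Synthetic "static photo": no variation at all.
static_signal = [100.0] * 300

print(dominant_pulse_bpm(live_signal, fps))    # about 72 beats per minute
print(dominant_pulse_bpm(static_signal, fps))  # None: no periodic signal found
```

A flat result does not prove a fake, and a pulse-like ripple does not prove a live person; the point is only that a living face carries a measurable rhythm that a naive projection lacks.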

The Need for New Verification Standards: Blockchain and Watermarking

To combat the hoax economy, we need a technological “paper trail.” One proposed solution is adoption of the C2PA (Coalition for Content Provenance and Authenticity) standard. This involves attaching cryptographically signed provenance metadata to a file at the moment of creation. If a photo is taken on a supporting smartphone, the device signs it using a digital certificate. If that photo is later altered without the edit being recorded and re-signed, the signature no longer matches the content, signaling to the viewer that the media's provenance can no longer be verified.
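The sign-then-verify idea can be sketched in a few lines. This is emphatically not the C2PA format or API: the real standard relies on certificate-based asymmetric signatures and structured manifests, whereas this example substitutes a shared-secret HMAC from Python's standard library purely to show why any change to the bytes invalidates the signature. `DEVICE_KEY`, `sign_capture`, and `verify_capture` are hypothetical names.

```python
import hashlib
import hmac

# Stand-in for a device's private signing key (an assumption for this sketch).
DEVICE_KEY = b"hypothetical-device-secret"

def sign_capture(image_bytes, key=DEVICE_KEY):
    """Bind a signature to the exact bytes recorded at capture time."""
    digest = hashlib.sha256(image_bytes).digest()
    return hmac.new(key, digest, hashlib.sha256).hexdigest()

def verify_capture(image_bytes, signature, key=DEVICE_KEY):
    """Return True only if the bytes still match what was signed."""
    expected = sign_capture(image_bytes, key)
    return hmac.compare_digest(expected, signature)

original = b"raw sensor data for a photo"
signature = sign_capture(original)
edited = original + b" (modified)"

print(verify_capture(original, signature))  # True
print(verify_capture(edited, signature))    # False
```

Even a one-byte edit changes the hash, so the stored signature stops validating and a viewer can flag the file as unverified.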

Furthermore, some developers are looking toward blockchain technology as a decentralized ledger for truth. By anchoring “original” pieces of content to a blockchain, creators can provide a verifiable history of an asset, making it significantly harder for a hoax version to gain traction as the original.
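The core mechanism behind such a ledger is a chain of hashes, which can be sketched without any blockchain network at all. The example below is a single-machine toy under stated assumptions: there is no consensus, no distribution, and no real blockchain library involved, and the names `make_entry` and `chain_is_valid` are invented for illustration.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry

def make_entry(content_bytes, creator, prev_hash):
    """Create a ledger entry that commits to the content and to the prior entry."""
    record = {
        "content_hash": hashlib.sha256(content_bytes).hexdigest(),
        "creator": creator,
        "prev_hash": prev_hash,
    }
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["entry_hash"] = hashlib.sha256(payload).hexdigest()
    return record

def chain_is_valid(ledger):
    """Recompute every hash and check that each entry points at its predecessor."""
    prev = GENESIS_HASH
    for entry in ledger:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        payload = json.dumps(body, sort_keys=True).encode("utf-8")
        if entry["prev_hash"] != prev:
            return False
        if entry["entry_hash"] != hashlib.sha256(payload).hexdigest():
            return False
        prev = entry["entry_hash"]
    return True

ledger = []
first = make_entry(b"original video bytes", "studio-a", GENESIS_HASH)
ledger.append(first)
ledger.append(make_entry(b"original photo bytes", "photographer-b", first["entry_hash"]))

print(chain_is_valid(ledger))  # True

# Swapping in a hoax version of the first asset breaks the recorded history.
ledger[0]["content_hash"] = hashlib.sha256(b"hoax video bytes").hexdigest()
print(chain_is_valid(ledger))  # False
```

Because each entry commits to the one before it, quietly rewriting an old record invalidates that record's hash, and recomputing the hash to hide the change breaks the link from the entry that follows it.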

Conclusion: The Future of Truth in Technology

The headline “What a Hoax” will likely be the defining sentiment of the next decade of technological development. As AI continues to blur the lines between the synthetic and the organic, our reliance on software to verify reality will only increase. We are in the midst of an arms race: on one side, tools that make deception effortless and cheap; on the other, tools that attempt to reclaim the concept of a verifiable fact.

Navigating this landscape requires more than just better software; it requires a shift in digital literacy. We must move away from the passive consumption of digital media and toward an active, skeptical engagement with the screens in front of us. Technology gave us the ability to create these hoaxes, and ultimately, it must be technology—paired with human discernment—that provides the solution. The “hoax” isn’t just a single fake video or a bot; it is the illusion that our digital world is an unmediated reflection of reality. Breaking that illusion is the first step toward building a more secure and authentic digital future.
