In the contemporary digital landscape, the phrase “telling someone what to do” has migrated from the realm of interpersonal relationships into the core architecture of our technology. When we ask, “is telling someone what to do grooming?” within a technological context, we are not discussing traditional psychological manipulation, but rather the sophisticated, data-driven process of Digital Grooming. This refers to the way software, algorithms, and user interfaces (UI) subtly mold user behavior, dictate choices, and erode individual autonomy over time.

As we spend more of our lives mediated by screens, the line between a helpful recommendation and coercive direction becomes increasingly blurred. This article explores the ethics of digital direction, the mechanics of algorithmic influence, and whether the tech industry’s penchant for “nudging” users has crossed the line into a systemic form of behavioral grooming.

Defining Digital Grooming in the Age of Big Tech

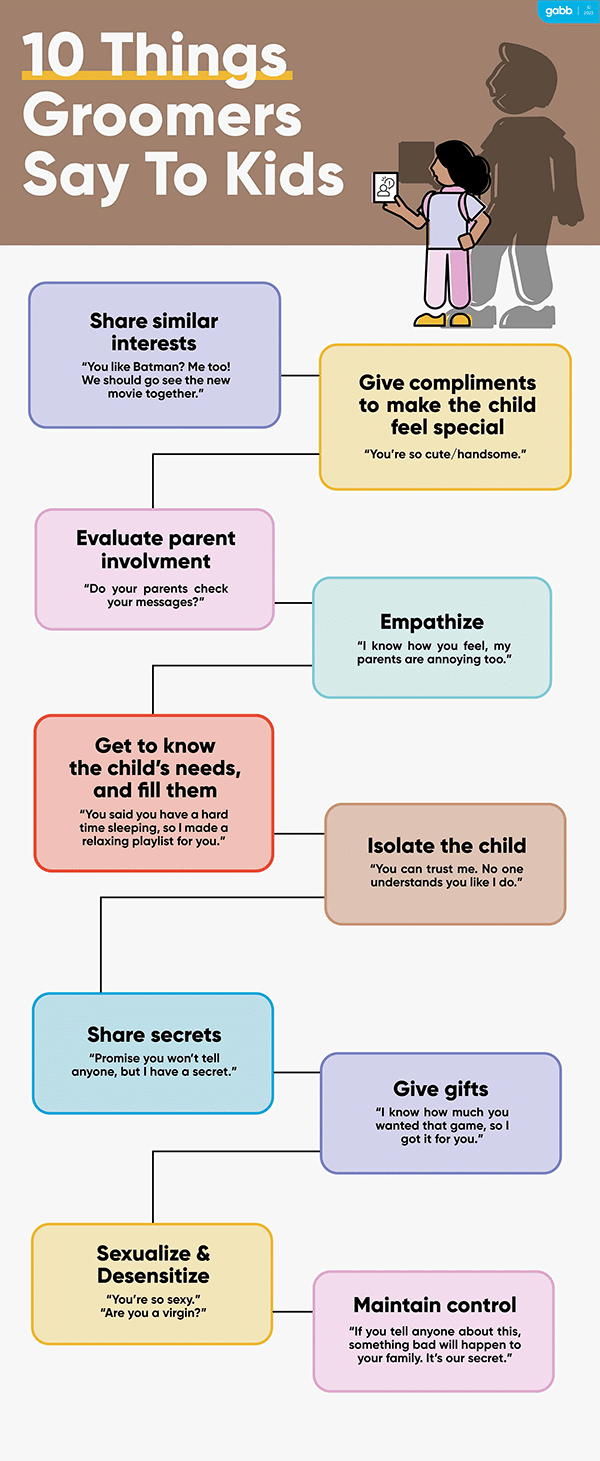

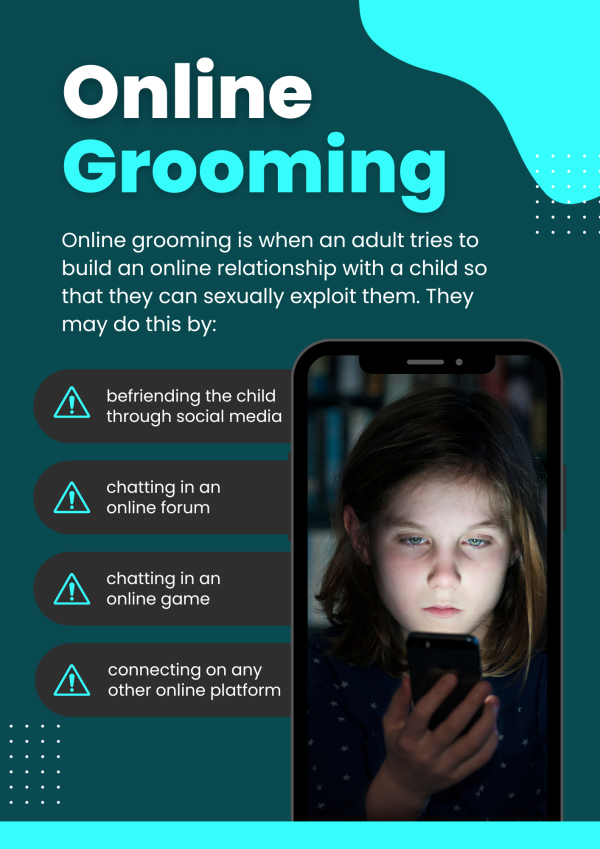

As used in this article, “grooming” describes a process in which an entity, usually a platform or an AI, systematically builds a relationship with a user to lower their defenses and direct their future actions. While the term is heavy with emotional weight, the pattern it names is a routine feature of software development and data science in the attention economy.

The Shift from Recommendation to Dictation

Early internet technology functioned primarily as a directory. Search engines and websites provided options, and users exercised agency to choose among them. However, modern technology has shifted toward a “proactive” model. Today, algorithms do not wait for a command; they anticipate it. By predicting what a user wants to see, buy, or believe, platforms effectively “tell” the user what their next step should be. This proactive direction is the first stage of digital grooming, where the user is conditioned to rely on the machine for decision-making.

Identifying Behavioral Manipulation

Digital grooming relies on a feedback loop. Every time a user follows a suggested path—clicking a “Recommended for You” video or accepting an automated calendar invite—the system reinforces that behavior. This isn’t merely a convenience; it is a method of narrowing a user’s world. When an algorithm consistently directs a user toward specific content while filtering out alternatives, it is effectively telling them what to think. This systemic narrowing of choice is a hallmark of grooming, as it removes the user’s ability to provide informed consent for their digital journey.
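The narrowing effect of such a feedback loop can be illustrated with a toy simulation. This is only a sketch under stated assumptions, not a model of any real platform: the function name `simulate_feedback_loop`, the topic list, and the update rule (each topic's weight grows in proportion to its current share of exposure) are all hypothetical choices made for illustration.

```python
def simulate_feedback_loop(topics, rounds, head_start=0.1):
    """Return the per-round exposure shares for each topic (toy model)."""
    # Every topic starts with equal weight; the first topic gets a small
    # head start, standing in for one early click.
    weights = {topic: 1.0 for topic in topics}
    weights[topics[0]] += head_start

    history = []
    for _ in range(rounds):
        total = sum(weights.values())
        shares = {topic: weight / total for topic, weight in weights.items()}
        history.append(shares)
        # Assumption: expected clicks are proportional to exposure, and the
        # system boosts each topic's weight in proportion to those clicks.
        for topic in topics:
            weights[topic] *= 1.0 + shares[topic]
    return history


topics = ["news", "sports", "music", "science"]
history = simulate_feedback_loop(topics, rounds=20)
first, last = history[0], history[-1]
print(f"share of '{topics[0]}' in round 1:  {first[topics[0]]:.2f}")
print(f"share of '{topics[0]}' in round 20: {last[topics[0]]:.2f}")
```

Under these assumptions, a small initial preference compounds: the topic that starts slightly ahead takes a larger share of the feed every round, while the alternatives fade without the user ever choosing to drop them.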

The Ethics of UI/UX: Nudging vs. Controlling

User Experience (UX) design is the art of guiding a user through a digital product. However, there is a fine line between a seamless experience and a coercive one. The tech industry often uses the term “nudging” to describe subtle design choices that encourage specific behaviors, but at what point does a nudge become a command?

Dark Patterns and Forced User Journeys

“Dark patterns” are UI elements designed to trick users into doing things they didn’t intend to do, such as signing up for a recurring subscription or sharing more personal data than necessary. If an app makes it nearly impossible to find the “Delete Account” button while highlighting the “Upgrade Now” button in bright colors, it is telling the user what to do through visual dominance. This is a form of design-based grooming, where the environment is rigged to ensure the user follows the platform’s goals rather than their own.

The Illusion of Choice in App Ecosystems

Many modern apps create an “illusion of choice.” They provide a menu of options, but all options lead to the same data-harvesting outcome. When software dictates the flow of information—for instance, by automatically playing the next video or using infinite scroll—it removes the natural “stop points” in human cognition. By eliminating the moment where a user would normally decide what to do next, the technology takes over the role of the decision-maker.

Artificial Intelligence and the Engineering of Consent

The most potent tool in the digital grooming arsenal is Artificial Intelligence. Unlike static, rule-based code, a machine learning model updates as it collects data, allowing it to tailor its “instructions” to the specific psychological profile of an individual user.

Predictive Analytics as a Tool for Behavior Modification

Predictive analytics uses historical data to forecast future behavior. In a vacuum, this is a powerful business tool. In practice, however, it allows platforms to intervene before a user has even formed an intention. If an AI predicts a user is likely to feel lonely or vulnerable, it may surface specific types of content to exploit that state. By preemptively “telling” the user how to feel or what to consume through targeted notifications, AI performs a highly sophisticated form of psychological grooming that is nearly invisible to the user.
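To make "forecasting from historical data" concrete, here is a minimal sketch of one simple approach: counting which action tends to follow which, then predicting the most frequent successor. Real systems are far more elaborate. The event names and the helpers `build_transitions` and `predict_next` are hypothetical, chosen only for this example.

```python
from collections import Counter, defaultdict


def build_transitions(history):
    """Count how often each action is followed by each other action."""
    transitions = defaultdict(Counter)
    for current, following in zip(history, history[1:]):
        transitions[current][following] += 1
    return transitions


def predict_next(transitions, current):
    """Return the most frequent follow-up to `current`, or None if unseen."""
    if current not in transitions:
        return None
    return transitions[current].most_common(1)[0][0]


# Hypothetical activity log for a single user.
history = [
    "open_app", "scroll_feed", "watch_video",
    "scroll_feed", "watch_video",
    "open_app", "scroll_feed", "buy",
]
transitions = build_transitions(history)
print(predict_next(transitions, "scroll_feed"))
```

Even this crude counter can guess a likely next step from a handful of events, which gives a sense of how a platform with far richer data could time a notification before the user has consciously decided anything.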

The Feedback Loop: How AI Refines Its Control

Many engagement-driven AI systems are tuned by a process that resembles reinforcement learning. When an algorithm “tells” a user to engage with a post and the user complies, the system registers a success and becomes more likely to repeat that kind of prompt. Over millions of iterations, it becomes highly effective at bypassing a user’s critical thinking. This creates a relationship of dependency where the user no longer questions the “suggestions” provided by the device. This engineering of consent, in which the user feels they chose a path that an algorithm had in fact pre-determined, is the pinnacle of tech-driven grooming.

Digital Security and the Risk of Social Engineering

The concept of grooming is perhaps most dangerous when applied to digital security. Hackers and malicious actors use the same principles of “telling someone what to do” to execute social engineering attacks, such as phishing or “vishing” (voice phishing).

The Psychology of Command in Cyberattacks

In a social engineering context, telling someone what to do is the primary weapon. Attackers create a sense of urgency (“Your account is compromised, click here immediately”) to bypass the user’s rational thought. By mimicking the authoritative “voice” of a trusted platform (like a bank or a tech support team), the attacker grooms the victim into a state of compliance. The victim is told exactly what to do, and the “grooming” lies in the false sense of trust established by the attacker.
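The urgency cues described above are regular enough that even a crude filter can flag some of them. The sketch below is a simple keyword heuristic, not a real phishing detector: the pattern list, the threshold, and the function names `urgency_signals` and `looks_pressuring` are assumptions made for illustration, and a determined attacker could easily evade them.

```python
import re

# Hypothetical list of pressure phrases; a real filter would need far more.
URGENCY_PATTERNS = [
    r"\bimmediately\b",
    r"\burgent\b",
    r"\bact now\b",
    r"\bcompromised\b",
    r"\bsuspended\b",
    r"\bclick here\b",
    r"\bverify your\b",
]


def urgency_signals(message):
    """Return the patterns from URGENCY_PATTERNS that appear in the message."""
    text = message.lower()
    return [pattern for pattern in URGENCY_PATTERNS if re.search(pattern, text)]


def looks_pressuring(message, threshold=2):
    """Flag a message that contains at least `threshold` pressure phrases."""
    return len(urgency_signals(message)) >= threshold


print(looks_pressuring("Your account is compromised, click here immediately"))
print(looks_pressuring("Lunch at noon tomorrow?"))
```

The point is less the filter itself than what it reveals: commands wrapped in urgency follow a recognizable template, and learning to spot that template is part of resisting it.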

Automated Phishing and AI Spoofing

With the advent of Large Language Models (LLMs), malicious actors can now automate the grooming process. AI can generate highly personalized, persuasive messages that tell users to download malware or reveal passwords. These systems can maintain conversations, build rapport, and slowly direct the user toward a catastrophic security breach. This represents the ultimate convergence of tech and grooming: a machine designed specifically to manipulate human behavior through direct instruction.

Protecting Digital Sovereignty in a Directed World

If “telling someone what to do” in the tech world has become a form of grooming, how do we reclaim our autonomy? The solution lies in a combination of technological literacy, better design ethics, and robust regulation.

Strategies for Algorithmic Literacy

To combat digital grooming, users must develop “algorithmic literacy.” This involves understanding that every recommendation, notification, and “default” setting is a choice made by a developer, not a neutral fact.

- Audit Your Feed: Regularly clear your cache and reset your advertising ID to disrupt the algorithm’s profile of you.

- Turn Off Autoplay: Reclaim the “stop points” in your digital consumption.

- Seek Out Friction: Choose tools that require intentionality rather than those that offer frictionless, automated experiences.

The Role of Regulation in Digital Privacy

The responsibility should not fall solely on the user. Legislative frameworks like the GDPR in the European Union and the CCPA in California, which regulate how personal data is collected and used, are early attempts to limit how much “grooming” a platform can do. Future regulations must focus on “algorithmic transparency,” requiring companies to disclose why a user is being shown specific content and giving users the legal right to opt out of behavioral manipulation. By limiting the ability of tech to “tell us what to do” through hidden data metrics, we can begin to restore the balance of power between humans and their devices.

Conclusion

Is telling someone what to do grooming? In the context of technology, the answer is a nuanced yes. When platforms use algorithms to bypass our critical thinking, employ dark patterns to force our hands, and use AI to engineer our consent, they are engaging in a form of digital grooming. This process erodes our autonomy and turns the user from a participant into a product.

As we move further into an era defined by AI and hyper-personalization, the tech industry must confront the ethics of influence. True technological progress should empower users to make their own decisions, not groom them into a state of predictable compliance. Reclaiming our digital lives starts with recognizing when we are being guided—and having the tools to say no.
