The shift from monolithic architectures to microservices has changed how software is built, deployed, and managed. It also introduced a new layer of complexity: network communication. Once an application is broken down into hundreds or even thousands of independent services, managing the traffic between them becomes a major operational challenge. This is where Envoy enters the frame.

Envoy is an open-source edge and service proxy designed for cloud-native applications. Originally developed by the engineering team at Lyft and later donated to the Cloud Native Computing Foundation (CNCF), it has become one of the most widely adopted proxies for the “service mesh,” the web of communication between microservices. This article explores how Envoy works, how it is architected, and why it underpins so much modern infrastructure.

The Genesis and Architecture of Envoy Proxy

To understand what Envoy is, one must first understand the problem it was built to solve. In a traditional architecture, a single large application handles everything. In a microservice architecture, services need to talk to each other over a network. Networks are inherently unreliable, prone to latency, and difficult to monitor. Envoy was created to make the network transparent to applications.

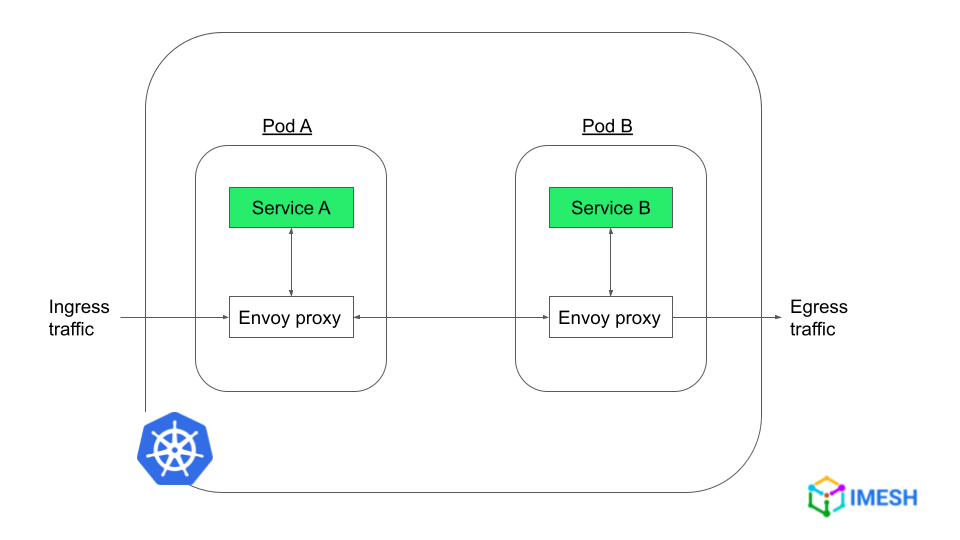

The Sidecar Pattern

The most defining characteristic of Envoy is its implementation via the Sidecar Pattern. Instead of forcing developers to bake networking logic—like retries, timeouts, and monitoring—into every single service, Envoy runs alongside the application as a separate process. This “sidecar” intercepts all incoming and outgoing traffic. Because it is out-of-process, Envoy can be updated, configured, and managed without touching the application code itself, regardless of whether that application is written in Go, Java, Python, or C++.

L4 and L7 Proxying

Envoy operates at both Layer 4 (TCP/UDP) and Layer 7 (HTTP, gRPC, MongoDB, etc.) of the OSI model.

- Layer 4 (Transport Layer): At this level, Envoy makes decisions based on IP addresses and ports. It handles connection pooling and raw byte-stream filtering.

- Layer 7 (Application Layer): This is where Envoy truly shines. It can “inspect” the traffic. It understands HTTP headers, URLs, and gRPC methods. This allows for advanced routing, such as sending 10% of traffic to a “Beta” version of a service, or routing only those users who carry a specific cookie.
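The Layer 7 routing decision described above can be sketched in a few lines of Python. This is an illustration of the idea, not Envoy's implementation or configuration format: the function name, the `beta` cookie, and the cluster names `service-beta` and `service-stable` are all hypothetical.

```python
import hashlib


def pick_upstream(cookies, user_id, beta_percent=10):
    """Return the (hypothetical) upstream cluster name for one request."""
    # Rule 1: an explicit opt-in cookie always routes to the beta version.
    if cookies.get("beta") == "1":
        return "service-beta"
    # Rule 2: otherwise hash the user id into one of 100 buckets, so the
    # same user consistently lands on the same version across requests.
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100
    return "service-beta" if bucket < beta_percent else "service-stable"
```

Hashing the user id rather than drawing a random number per request is a design choice in this sketch: it keeps each user pinned to one version while still splitting the population roughly 10/90.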

The Filter Chain Design

Envoy’s internal architecture is built on a highly modular filter chain. When a connection or request enters Envoy, it passes through a series of filters. “Listener Filters” handle initial connection metadata, “Network Filters” process the Layer 4 byte stream, and “HTTP Filters” operate on individual Layer 7 requests and responses. This extensibility allows developers to plug in custom logic for authentication, rate-limiting, or logging without altering the core proxy engine.
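As a rough mental model, a filter chain is an ordered list of functions, each of which may either pass the request along or stop it. The sketch below assumes a request is a plain dictionary and that a filter returns a rejection string to stop the chain; these conventions, and every name in the code, are hypothetical and chosen only for illustration.

```python
def auth_filter(request):
    """Reject requests that carry no authorization header."""
    if "authorization" not in request.get("headers", {}):
        return "401 missing credentials"
    return None  # None means: continue to the next filter


def make_rate_limit_filter(limit):
    """Build a filter that allows at most `limit` requests per client."""
    seen = {}

    def rate_limit_filter(request):
        client = request.get("client", "unknown")
        seen[client] = seen.get(client, 0) + 1
        if seen[client] > limit:
            return "429 rate limited"
        return None

    return rate_limit_filter


def run_chain(filters, request):
    """Run filters in order; the first rejection short-circuits the chain."""
    for current_filter in filters:
        rejection = current_filter(request)
        if rejection is not None:
            return rejection
    return "200 forwarded upstream"
```

Because each filter only sees the request and returns a verdict, adding or reordering filters does not require touching `run_chain`, which mirrors the point about leaving the core engine unchanged.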

Core Capabilities: Resilience, Observability, and Security

Envoy is often described as a “universal data plane.” Its primary job is to ensure that requests get where they need to go efficiently and securely, while providing deep insights into what is happening on the wire.

Network Resilience and Traffic Management

In a distributed system, failure is inevitable. A single slow service can cause a cascading failure across the entire system. Envoy mitigates this through several sophisticated mechanisms:

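- Retries and Timeouts: Envoy can automatically retry a failed request, typically against another instance of the service, and it enforces timeouts so that a slow upstream cannot hold a caller indefinitely.

- Circuit Breaking: Envoy enforces per-cluster limits on concurrent connections, pending requests, active requests, and retries. When a limit is reached, it fails additional requests fast instead of queueing them, so a struggling service is not overwhelmed further. Hosts that fail consistently are temporarily removed from the load-balancing pool by a related feature, outlier detection, which gives them time to recover.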

- Load Balancing: Envoy supports advanced load-balancing algorithms, including “Least Request” and “Zone Aware Routing,” ensuring that traffic is distributed to the healthiest and closest available instances.
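Two of these mechanisms are easy to sketch together: least-request load balancing using the “power of two choices” technique (sample two hosts, keep the less busy one) and a bounded retry loop. The code is a simplified model under stated assumptions, not Envoy's code: `Host`, `pick_least_request`, and `call_with_retries` are hypothetical names, and a failure is assumed to surface as a `ConnectionError`.

```python
import random


class Host:
    """One upstream instance and its count of in-flight requests."""

    def __init__(self, name):
        self.name = name
        self.active_requests = 0


def pick_least_request(hosts, rng=random):
    """Power of two choices: sample two hosts, keep the less loaded one."""
    if len(hosts) == 1:
        return hosts[0]
    first, second = rng.sample(hosts, 2)
    return first if first.active_requests <= second.active_requests else second


def call_with_retries(hosts, send, max_retries=2, rng=random):
    """Attempt the request up to max_retries + 1 times.

    `send(host)` is a caller-supplied function that returns a response
    or raises ConnectionError on failure.
    """
    last_error = None
    for _ in range(max_retries + 1):
        host = pick_least_request(hosts, rng)
        host.active_requests += 1
        try:
            return send(host)
        except ConnectionError as err:
            last_error = err  # remember the failure and try again
        finally:
            host.active_requests -= 1  # always release the in-flight slot
    raise last_error
```

Note that each retry re-runs host selection, so a retry may land on a different instance but is not guaranteed to.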

Deep Observability

“If you can’t measure it, you can’t manage it.” This is a mantra in DevOps, and Envoy is built with observability as a first-class citizen. Because it sits in the middle of every request, it can generate incredibly detailed statistics. It provides:

- L7 Metrics: Detailed counts of HTTP response codes (200s, 404s, 500s).

- Distributed Tracing: Integration with tools like Jaeger or Zipkin to track the path of a single request as it moves through twenty different services.

- Access Logging: Customizable logs that capture every detail of a transaction for later analysis or security auditing.
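To make the L7 metrics concrete, here is a minimal sketch of how a proxy might bucket response codes into counters. The counter names follow the style of Envoy's cluster statistics (`upstream_rq_total`, `upstream_rq_2xx`, and so on); the `record` and `error_rate` helpers are hypothetical and exist only for this illustration.

```python
from collections import Counter


def record(stats, status_code):
    """Count one response, both in total and by status class (2xx, 4xx, ...)."""
    stats["upstream_rq_total"] += 1
    stats[f"upstream_rq_{status_code // 100}xx"] += 1


def error_rate(stats):
    """Fraction of responses that were server errors (5xx)."""
    total = stats["upstream_rq_total"]
    return stats["upstream_rq_5xx"] / total if total else 0.0


stats = Counter()
for code in (200, 200, 404, 503):
    record(stats, code)
```

With the four sample responses above, `error_rate(stats)` evaluates to 0.25, the kind of signal an alerting system would watch.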

Security and mTLS

Envoy plays a critical role in the “Zero Trust” security model. It can handle Mutual TLS (mTLS) termination and origination. This means that even if the application code doesn’t support encryption, Envoy can encrypt the traffic between services, ensuring that data in transit is secure and that only authorized services can communicate with one another.
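What makes TLS “mutual” is that the server also demands and verifies a certificate from the client. The sketch below shows that one difference using Python's standard `ssl` module rather than Envoy itself; `make_server_context` is a hypothetical helper, and the certificate loading calls are left as comments because the file paths would be deployment-specific assumptions.

```python
import ssl


def make_server_context(require_client_cert=True):
    """Build a server-side TLS context; with mTLS, clients must present a cert."""
    context = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    if require_client_cert:
        # Plain TLS leaves this at CERT_NONE; mTLS rejects clients
        # that do not present a certificate the server trusts.
        context.verify_mode = ssl.CERT_REQUIRED
    # In a real deployment you would also load credentials, for example:
    #   context.load_cert_chain(certfile=..., keyfile=...)  # server identity
    #   context.load_verify_locations(cafile=...)           # CA that signs clients
    return context
```

A sidecar performing mTLS does the equivalent of this on behalf of the application, which is why the application code itself does not need to support encryption.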

Envoy in the Service Mesh Ecosystem: Istio and Beyond

While Envoy is a powerful tool on its own, it is rarely managed manually in large-scale environments. In a system with thousands of Envoys, you need a way to coordinate them. This is the difference between the “Data Plane” and the “Control Plane.”

The Data Plane vs. Control Plane

Envoy is the Data Plane—it handles the actual data packets. The Control Plane is the “brain” that tells the Envoys what to do. The most famous control plane for Envoy is Istio. Istio provides a centralized interface where administrators can define policies, which are then pushed out to all the Envoy sidecars in the cluster. Other notable control planes include Gloo Mesh and Consul.

Dynamic Configuration (xDS APIs)

A key reason Envoy gained ground on older proxies like NGINX or HAProxy in cloud-native circles is its “API-first” configuration. Traditional proxies are typically driven by a configuration file and need a reload or restart to pick up changes. Envoy, however, uses the xDS APIs. These allow the control plane to stream updates to Envoy at runtime, generally without dropping existing connections. Whether it’s a new set of service endpoints (EDS), a new route (RDS), a new cluster (CDS), or a new listener (LDS), Envoy updates its internal state on the fly.
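The update flow can be modeled as a versioned swap: the proxy validates each pushed update, applies it if valid (an ACK in xDS terms), and otherwise keeps serving with the previous configuration (a NACK). The following is a toy model under those assumptions, not the real xDS protocol or any Envoy API; `ProxyConfig` and its validation rule are hypothetical.

```python
class ProxyConfig:
    """Toy model of a proxy's dynamically updated configuration."""

    def __init__(self):
        self.versions = {}   # resource type -> last applied version
        self.resources = {}  # resource type -> {resource name: resource}

    @staticmethod
    def _is_valid(resource):
        # Hypothetical validation rule: a resource must be a non-empty dict.
        return isinstance(resource, dict) and bool(resource)

    def apply(self, type_name, version, resources):
        """Apply one update. Return True to ACK it, False to NACK it."""
        if not all(self._is_valid(r) for r in resources.values()):
            return False  # reject; the previous config stays in effect
        self.resources[type_name] = dict(resources)
        self.versions[type_name] = version
        return True
```

For example, `apply("EDS", "1", {"payments": {"endpoints": ["10.0.0.1:8080"]}})` would be accepted, while a later update containing an empty resource would be rejected and version "1" would remain active.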

Comparison with NGINX and HAProxy

While NGINX and HAProxy are legendary tools, they were designed in an era of static servers. Envoy was built from the ground up for the “ephemeral” nature of containers and Kubernetes. While NGINX has added features to compete, Envoy’s native support for gRPC, its advanced observability, and its deeply integrated API-driven configuration give it a distinct advantage in complex, high-velocity environments.

The Future of Networking: WASM and Edge Computing

Envoy is not a static project; it continues to define the cutting edge of networking technology. Two areas, in particular, are shaping its future: WebAssembly (WASM) and the move toward the Edge.

Extending Envoy with WebAssembly

Traditionally, if you wanted to add custom logic to Envoy, you had to write it in C++ and compile it into the binary. This was slow and risky. With the introduction of WASM support, developers can now write custom filters in languages like Rust, Go, or AssemblyScript and load them into Envoy dynamically. The filters run in a sandbox inside the proxy, which isolates faults at some performance cost compared with native C++ filters. This enables companies to build bespoke security or data-transformation filters without maintaining a custom Envoy build.

Envoy at the Edge

While Envoy is best known as a service-to-service sidecar proxy, it is increasingly being used as an API Gateway or “Edge Proxy.” In this role, it sits at the very edge of a network, handling traffic from the public internet. Its ability to handle high throughput, combined with rate-limiting and WAF (Web Application Firewall) integrations, makes it a strong candidate for protecting and routing external traffic into a Kubernetes cluster.

Conclusion: Why Every Tech Professional Should Know Envoy

In the modern world of software engineering, the “network” is no longer just a pipe; it is a programmable, intelligent layer of the application stack. Envoy has successfully decoupled the complexity of the network from the business logic of the application, allowing developers to focus on building features while infrastructure engineers ensure those features are resilient, observable, and secure.

Whether you are a DevOps engineer managing a massive Kubernetes cluster, a backend developer wondering why your service calls are failing, or a CTO planning a migration to the cloud, understanding Envoy is essential. It is more than just a proxy; it is the fundamental building block that makes the scale and reliability of modern giants like Lyft, Netflix, and Google possible in a distributed world. As we move toward more decentralized and edge-based computing, Envoy’s role as the “universal translator” of the digital world is only set to grow.
