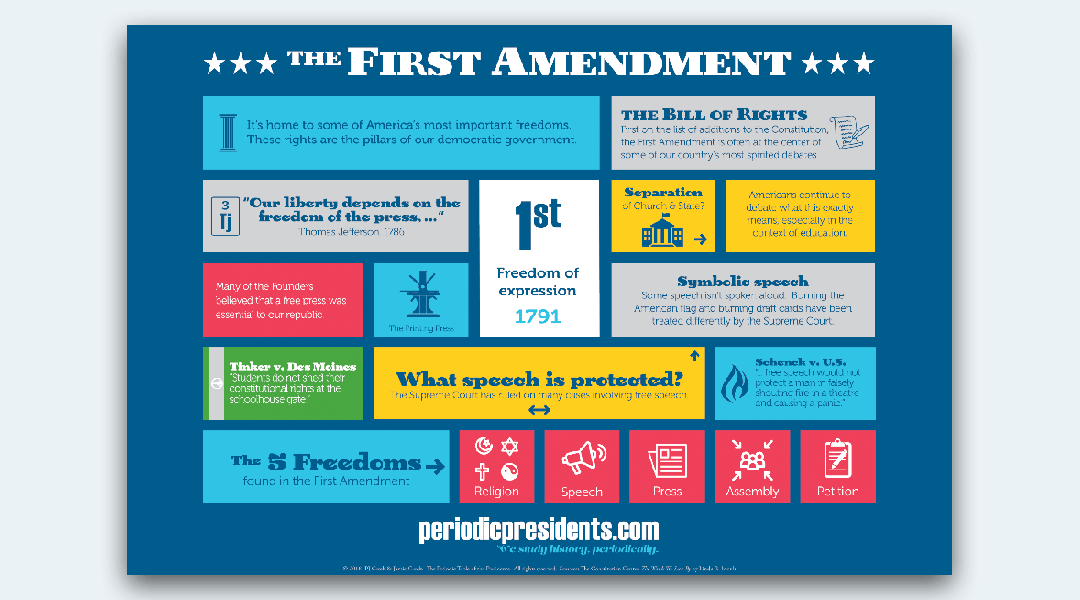

The First Amendment of the United States Constitution—protecting the freedoms of speech, press, assembly, petition, and religion—was drafted in an era of town squares, printing presses, and hand-delivered letters. Today, the “town square” is hosted on centralized servers in Silicon Valley, the “press” consists of millions of independent bloggers and social media influencers, and “assembly” often takes place in encrypted chat rooms or virtual reality. As technology continues to outpace legislation, understanding our First Amendment rights requires a deep dive into the intersection of constitutional law and digital innovation.

In the tech sector, the First Amendment is not just a legal doctrine; it is the foundation upon which the modern internet was built. However, the transition from physical to digital has created complex gray areas regarding who is bound by these rights and how they are enforced in a world governed by algorithms and code.

The Evolution of Speech: From Paper to Pixels

When we discuss First Amendment rights within the context of technology, the most significant shift is the medium of delivery. Historically, the government was the primary entity capable of suppressing speech. Today, technological intermediaries—social media platforms, internet service providers (ISPs), and search engines—hold unprecedented power over what we see and say.

Does the First Amendment Apply to Private Tech Platforms?

One of the most persistent misconceptions in the tech world is that private companies like Meta, X (formerly Twitter), or Google are legally required to uphold First Amendment standards. In reality, the First Amendment restrains the government, not private corporations, from abridging speech.

From a tech perspective, these platforms are private property. Just as a homeowner can ask a guest to leave for being rude, a social media platform can ban a user for violating Terms of Service. This distinction is crucial for tech developers and users alike: digital platforms have their own First Amendment right to curate content as they see fit, a concept often referred to as “editorial discretion.”

The Concept of the Digital Public Square

Despite being private entities, the sheer scale of these platforms has led many to argue that they function as a “digital public square.” When a single software ecosystem hosts the majority of a nation’s political discourse, the line between private property and public forum blurs. Courts are currently grappling with whether certain digital spaces have become “quasi-public,” which would fundamentally change how developers design moderation algorithms and how users exercise their rights.

Section 230 and the Legal Shield of the Internet

No discussion of First Amendment rights in tech is complete without mentioning Section 230 of the Communications Decency Act. Often called “the twenty-six words that created the internet,” Section 230 provides a safe harbor for tech companies, stating that “no provider or user of an interactive computer service shall be treated as the publisher or speaker of any information provided by another information content provider.”

Platform vs. Publisher: The Tech Distinction

Section 230 allows tech companies to host user-generated content without being held legally liable for every post, tweet, or video. Without this protection, the risk of litigation would be so high that platforms would either have to censor almost everything or stop hosting user content altogether. This legal framework has been the catalyst for the growth of the modern Web 2.0, enabling the rise of platforms that thrive on open participation.

The Impact of Content Moderation on User Expression

While Section 230 protects platforms from liability for what their users post, it also shields them when they engage in good-faith “Good Samaritan” blocking of content they consider objectionable. In the tech industry, this has sparked a massive surge in AI-driven moderation tools. Developers are tasked with creating software that can separate content a platform is willing to host from content it must or chooses to remove (such as incitement to violence or child safety violations). The challenge lies in the “black box” of AI: when an algorithm flags speech, it can inadvertently suppress legitimate expression, raising concerns about algorithmic bias and digital censorship.
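One common way to limit that kind of over-removal is to let the software act on its own only when it is very confident, and to send everything in the gray zone to a person. The sketch below is a minimal illustration of that idea, not any platform's actual pipeline. The threshold values, the `Decision` type, and the `route` function are all hypothetical, and the harm score is assumed to come from some upstream classifier that is not shown.

```python
from dataclasses import dataclass

# Hypothetical thresholds; a real system would tune these against audited data.
REMOVE_THRESHOLD = 0.95
REVIEW_THRESHOLD = 0.60


@dataclass
class Decision:
    action: str   # "allow", "human_review", or "remove"
    score: float  # the classifier score that led to the action
    reason: str   # short explanation, kept so the decision can be audited


def route(score: float) -> Decision:
    """Map an assumed classifier harm score (0.0 to 1.0) to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0.0 and 1.0")
    if score >= REMOVE_THRESHOLD:
        return Decision("remove", score, "score at or above removal threshold")
    if score >= REVIEW_THRESHOLD:
        return Decision("human_review", score, "uncertain, escalate to a person")
    return Decision("allow", score, "score below review threshold")


# Example: three posts with different scores take three different paths.
decisions = [route(s) for s in (0.10, 0.72, 0.99)]
```

Recording a reason with every decision is a small design choice, but it is what makes later appeals and bias audits possible.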

AI, Algorithms, and the Future of Protected Speech

The rise of Artificial Intelligence (AI) has introduced a radical new question to the First Amendment debate: Is code speech? If a software engineer writes an algorithm that “expresses” an opinion or generates a poem, does that output receive constitutional protection?

Is Code Speech? The Constitutional Status of Software

In Bernstein v. United States Department of Justice, a Ninth Circuit panel held in 1999 that source code is a form of expression protected by the First Amendment, although that opinion was later withdrawn when the court agreed to rehear the case. The reasoning remains influential in the tech industry: it supports the view that writing a program can itself be an act of speaking. As we move into an era of Generative AI and Large Language Models (LLMs), developers point to this line of cases to defend their right to create tools that process and generate a wide array of information, even if that information is controversial.

AI-Generated Content and Intellectual Property Rights

As AI models like ChatGPT or Midjourney generate text and art, the tech world is forced to reconcile First Amendment rights with copyright and ethics. If an AI “speaks,” does it have its own rights, or do those rights belong to the user who prompted it? For now, First Amendment analysis generally centers on the humans who build and use these tools rather than on the software itself. However, as AI becomes more autonomous, tech companies are advocating for clear guidelines that protect the freedom to innovate without being held responsible for the unpredictable “speech” of an autonomous agent.

Digital Surveillance and the Right to Peaceably Assemble

The First Amendment also protects the right to peaceably assemble and to petition the government for a redress of grievances. In the 21st century, this assembly often happens through digital tools, making digital security a cornerstone of constitutional rights.

Virtual Protests and Online Activism

From the Arab Spring to modern social justice movements, technology has enabled “virtual assembly.” However, this right is increasingly threatened by sophisticated surveillance tech, facial recognition, and metadata tracking. For the tech community, protecting First Amendment rights means building tools that prevent “chilling effects”—where individuals refrain from speaking or assembling because they fear they are being watched by the state.

Encryption as a First Amendment Right

Encryption is one of the most vital tech tools for preserving First Amendment freedoms. By ensuring that digital “speech” remains private between the sender and the receiver, encryption protects journalists, activists, and ordinary citizens. Many tech advocates argue that any government attempt to mandate “backdoors” into encrypted software is a violation of the First Amendment, as it compels tech companies to weaken the security of their own expressive “code” and undermines the privacy necessary for free assembly.
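To make the idea of speech that stays private between sender and receiver concrete, here is a toy sketch of a one-time pad, where a message is XORed with a random key of the same length. This is a teaching illustration only and not how real messaging apps work, since they rely on vetted cryptographic libraries and key-exchange protocols. The function names `generate_key` and `xor_bytes` and the sample message are invented for the example.

```python
import secrets


def generate_key(length: int) -> bytes:
    """Return `length` cryptographically random bytes to use as a one-time key."""
    return secrets.token_bytes(length)


def xor_bytes(data: bytes, key: bytes) -> bytes:
    """XOR data with a key of equal length. The same call encrypts and decrypts."""
    if len(key) != len(data):
        raise ValueError("key must be exactly as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


# Toy example: only someone holding `key` can turn the ciphertext back into the message.
message = "meet at noon".encode("utf-8")
key = generate_key(len(message))       # must be shared secretly and never reused
ciphertext = xor_bytes(message, key)   # what an eavesdropper would see
recovered = xor_bytes(ciphertext, key)
```

A mandated “backdoor” amounts to a requirement that some third party can also obtain the key or the plaintext, which is why the debate centers on who else is able to read the message.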

Navigating the Global Impact of Tech-Driven Speech Laws

While the First Amendment is a U.S. legal doctrine, the technology companies that operate under its protection are global. This creates a friction point between American free speech ideals and the varying regulations of other nations, such as the EU’s Digital Services Act or various “Right to be Forgotten” laws.

Transnational Tech and Conflicting Legal Frameworks

Tech giants face a daunting task: maintaining a unified software experience while complying with local laws that may restrict speech protected by the First Amendment. For example, a post that is protected in the U.S. might be illegal in Germany or India. Developers are forced to implement “geofencing” and region-specific moderation, which fragments the digital experience and challenges the universal nature of the internet.
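In practice, region-specific moderation often comes down to a lookup: given a post and the viewer's location, is the post withheld there? The sketch below shows that pattern in its simplest form. The rule table, the category names, and the functions `is_visible` and `visible_posts` are placeholders made up for illustration, and they say nothing about what any particular country actually restricts.

```python
# Hypothetical rule table: content category -> region codes where it is withheld.
WITHHELD_IN = {
    "category_a": {"DE"},
    "category_b": {"DE", "IN"},
}


def is_visible(category: str, viewer_region: str) -> bool:
    """Return True if a post in `category` may be shown to a viewer in `viewer_region`."""
    return viewer_region.upper() not in WITHHELD_IN.get(category, set())


def visible_posts(posts: list, viewer_region: str) -> list:
    """Filter a feed down to the posts that are not withheld in the viewer's region."""
    return [p for p in posts if is_visible(p["category"], viewer_region)]


# The same feed yields different results depending on where the viewer is.
sample_posts = [
    {"id": 1, "category": "category_a"},
    {"id": 2, "category": "category_b"},
    {"id": 3, "category": "news"},
]
us_feed = visible_posts(sample_posts, "US")
de_feed = visible_posts(sample_posts, "DE")
```

The post itself is never deleted here. It is simply hidden for some viewers, which is exactly the fragmentation the paragraph above describes.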

The Role of Decentralization in Protecting Future Rights

As a response to the perceived overreach of both governments and “Big Tech” platforms, a new movement in technology is emerging: the decentralized web (Web3). By using blockchain technology and peer-to-peer protocols, developers are building platforms where no single entity has the power to delete content or ban users. In this tech-driven vision of the future, First Amendment rights are protected not by the courts, but by the immutable nature of the code itself.
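The “immutability” in that vision usually rests on hash chaining: each entry stores a hash of the entry before it, so changing or removing old content breaks every hash that follows. Below is a minimal single-machine sketch of that mechanism. The function names `entry_hash`, `append`, and `verify` are illustrative, and real decentralized systems add replication across many peers, consensus, and signatures, none of which is shown here.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, content: str) -> str:
    """Hash an entry's content together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "content": content}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, content: str) -> None:
    """Add a new entry that is linked to the current end of the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"prev": prev_hash, "content": content,
                "hash": entry_hash(prev_hash, content)})


def verify(log: list) -> bool:
    """Recompute every link and report whether the log is still intact."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["content"]):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append(log, "first post")
append(log, "second post")
intact_before = verify(log)          # the untouched log checks out
log[0]["content"] = "edited post"    # someone quietly rewrites history
intact_after = verify(log)           # the change is detected
```

On its own this only makes tampering detectable. The resistance to deletion comes from many independent peers each holding and checking a copy.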

Conclusion: The Coder’s Responsibility

The First Amendment is no longer just the domain of lawyers and judges; it is the domain of software engineers, UX designers, and data scientists. Every line of code that determines what content is boosted or what user is flagged is a decision that impacts the landscape of free expression.

As technology continues to evolve, our First Amendment rights will increasingly depend on “Digital Constitutionalism.” This means building transparency into algorithms, prioritizing user privacy through encryption, and ensuring that the tools of the future remain open and accessible to all voices. In the digital age, the price of liberty is not just eternal vigilance—it is also superior engineering.
