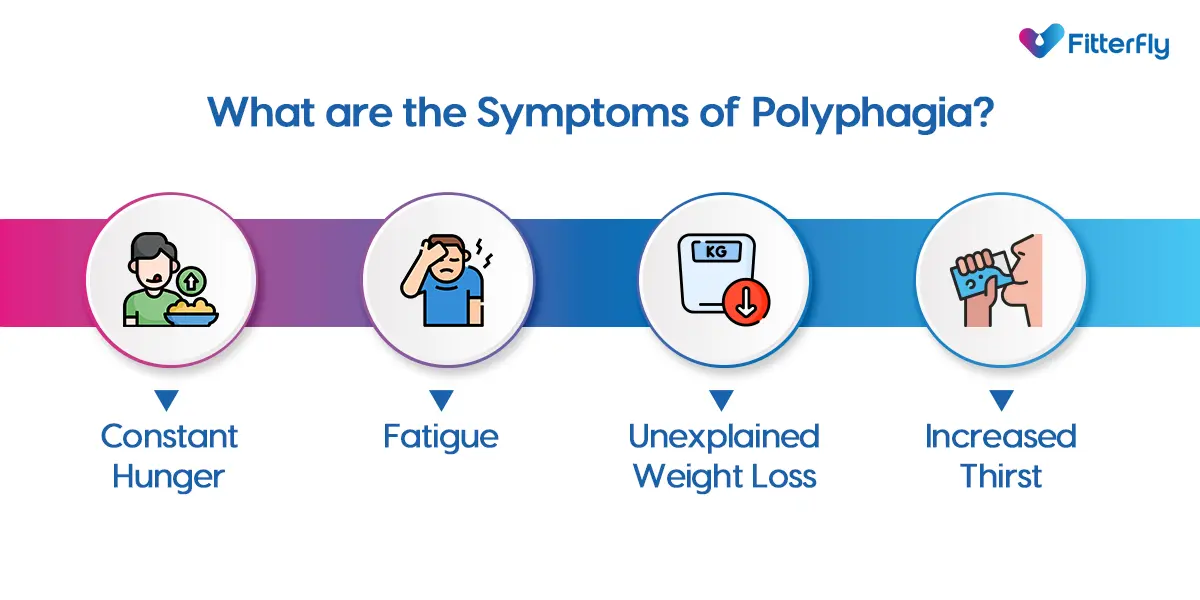

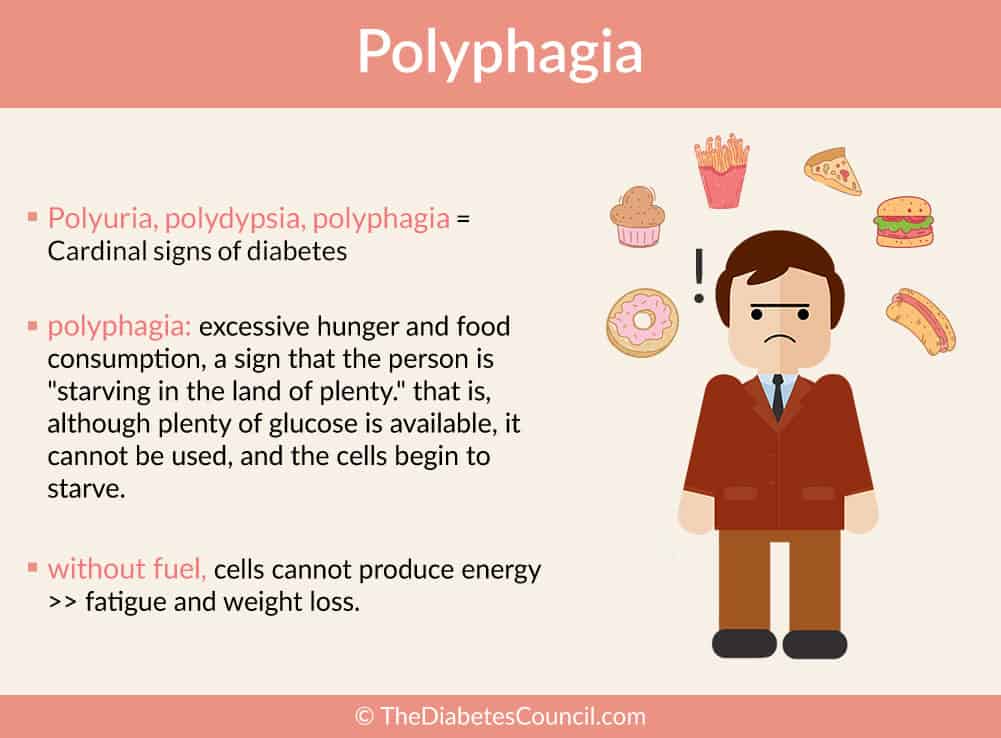

In the medical world, polyphagia describes an excessive, insatiable hunger: a physiological state in which, no matter how much one eats, the body signals for more. In the landscape of 21st-century technology, we are witnessing a digital equivalent of this phenomenon. “Digital Polyphagia” is the relentless, ever-growing demand for data, compute power, and energy required to sustain the current evolution of artificial intelligence, cloud infrastructure, and the Internet of Things (IoT).

As we transition from the era of simple software to the era of generative intelligence, our systems are exhibiting a biological-like craving for resources. This article explores the technical dimensions of this hunger, the infrastructure required to feed it, and the innovations emerging to manage the “appetite” of our digital ecosystem.

The Architecture of Digital Polyphagia: Why AI Never Feels Full

The primary driver of today’s technological hunger is the shift from deterministic programming to probabilistic machine learning. In the past, software followed a set of rigid rules. Today, software “learns” from patterns, and the quality of that learning depends heavily on the volume of data it consumes.

The Shift from Logic to Learning

Traditional software was efficient because it was lean. A banking application or a word processor required relatively few resources to execute specific commands. However, the rise of Large Language Models (LLMs) and neural networks has changed the fundamental “metabolism” of technology. These systems do not follow hand-written, step-by-step instructions; they perform massively parallel arithmetic across billions of learned parameters. This shift has created a baseline hunger for “training data” that spans much of the digitized human experience, from books and code repositories to social media interactions.
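To make the idea of “metabolism” concrete, here is a minimal Python sketch of a single fully connected layer, a basic building block of many neural networks. It is a simplified illustration, not how production frameworks implement layers (they call optimized matrix libraries on GPUs), and the weights are arbitrary made-up values. The point is that every parameter takes part in one multiply-accumulate for every input, so compute cost rises in step with parameter count.

```python
# Simplified dense layer: y = W x + b, written out loop by loop.
# Weight values below are arbitrary, for illustration only.

def dense_forward(weights, bias, x):
    """Return (outputs, number of multiply-accumulate operations).

    weights: one row of len(x) weights per output neuron
    bias:    one bias value per output neuron
    """
    outputs = []
    macs = 0
    for row, b in zip(weights, bias):
        # Each output depends only on its own row, so the rows can be
        # computed independently, which is what parallel hardware exploits.
        acc = b
        for w, xi in zip(row, x):
            acc += w * xi
            macs += 1
        outputs.append(acc)
    return outputs, macs


def parameter_count(n_in, n_out):
    """Weights plus biases for a dense layer."""
    return n_in * n_out + n_out


W = [[0.5, -1.0, 2.0],
     [1.5, 0.0, -0.5]]
b = [0.25, -0.5]
y, macs = dense_forward(W, b, [1.0, 2.0, 3.0])
print(y, macs)                # [4.75, -0.5] 6
print(parameter_count(3, 2))  # 8
```

Scale the same loop up to billions of weights and the appetite described above follows directly: every forward pass touches every parameter.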

Large Language Models and the Hunger for Parameters

When we discuss the “size” of an AI model, we talk about parameters. GPT-2 had 1.5 billion parameters; GPT-3 jumped to 175 billion; and current frontier models are rumored to operate in the trillions. Each parameter represents a computational weight that requires memory and processing cycles during both training and inference. This exponential growth is the definition of polyphagia: as the models get smarter, their “stomach” for compute power expands at a rate that threatens to outpace our current manufacturing and energy capabilities.
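Some rough arithmetic shows why parameter counts translate so directly into hardware demand. The sketch below assumes weights stored at 16-bit precision (2 bytes each) and uses the widely quoted rule of thumb that training a dense model costs about 6 × parameters × training tokens floating-point operations. Both are simplifying assumptions: real systems need considerably more memory for activations, optimizer state, and caches.

```python
# Back-of-the-envelope sizing for a dense model.
# Assumptions (illustrative, not vendor figures):
#   * bytes per weight depend only on the numeric format
#   * training compute ~= 6 * parameters * training tokens

BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

def weight_memory_gb(n_params, dtype="fp16"):
    """Memory needed just to hold the weights, in gigabytes (1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9

def training_flops(n_params, n_tokens):
    """Rough training cost using the ~6*N*D approximation."""
    return 6 * n_params * n_tokens

for name, n in [("GPT-2", 1.5e9), ("GPT-3", 175e9)]:
    print(f"{name}: {weight_memory_gb(n):,.0f} GB of fp16 weights")
# GPT-2: 3 GB of fp16 weights
# GPT-3: 350 GB of fp16 weights

# Assumed 300 billion training tokens (the figure reported for GPT-3).
print(f"GPT-3 training: ~{training_flops(175e9, 300e9):.2e} FLOPs")
```

Even before training begins, holding GPT-3’s weights alone exceeds the memory of any single GPU, which is why such models are split across many accelerators.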

The Infrastructure of Consumption: Hardware and Energy

To feed the insatiable hunger of modern algorithms, the hardware industry has had to undergo a radical transformation. We are no longer in the era of general-purpose computing; we are in the era of specialized acceleration.

The GPU Arms Race

Central Processing Units (CPUs) were the workhorses of the 20th century, built around a handful of powerful cores optimized for running a wide variety of tasks largely one after another. However, they are poorly suited to the massively parallel processing demands of modern AI. This has led to the dominance of Graphics Processing Units (GPUs) and Tensor Processing Units (TPUs, Google’s custom AI accelerators). Companies like NVIDIA have become the “farmers” of the digital age, producing the high-calorie silicon chips required to feed data-hungry models. These chips are designed specifically to handle the massive mathematical throughput required for deep learning, yet even with major advances in architecture (such as NVIDIA’s Hopper-based H100 and its Blackwell successors), demand consistently exceeds supply.

The Sustainability Paradox: Energy as the Ultimate Constraint

Digital polyphagia has a physical footprint. Data centers are the “stomachs” of the internet, and their caloric intake is measured in terawatt-hours. By widely cited estimates, a single query to a generative AI model can consume roughly ten times the electricity of a standard Google search. This creates a sustainability paradox: while we use technology to optimize energy grids and discover new carbon-capture materials, the technology itself is consuming record-breaking amounts of electricity. Large tech firms are now among the world’s largest investors in nuclear and renewable energy, not just for corporate social responsibility, but out of a pressing need to keep their “hungry” servers running.
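The per-query gap matters because it is multiplied by enormous traffic. The sketch below uses purely illustrative inputs, not measured figures: an assumed 0.3 Wh per conventional search, the ten-times ratio cited above, and a hypothetical volume of one billion queries per day.

```python
# Illustrative inputs only; not measurements of any real service.
WH_PER_SEARCH = 0.3               # assumed energy per conventional web search (Wh)
AI_MULTIPLIER = 10                # the "ten times" ratio from the text
QUERIES_PER_DAY = 1_000_000_000   # hypothetical daily query volume

def annual_energy_gwh(queries_per_day, wh_per_query, days=365):
    """Convert per-query energy (Wh) into yearly energy (GWh; 1 GWh = 1e9 Wh)."""
    return queries_per_day * wh_per_query * days / 1e9

search_gwh = annual_energy_gwh(QUERIES_PER_DAY, WH_PER_SEARCH)
ai_gwh = annual_energy_gwh(QUERIES_PER_DAY, WH_PER_SEARCH * AI_MULTIPLIER)
print(f"search: {search_gwh:.1f} GWh/year, generative AI: {ai_gwh:.1f} GWh/year")
```

Under these assumptions the same traffic grows from roughly 110 GWh to roughly 1.1 TWh per year, which is how small per-query differences add up to the terawatt-hour scale.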

Data as the Primary Nutrient: Quality vs. Quantity

If compute power is the metabolism, data is the food. But just as in biological systems, not all “food” is nutritious. The tech industry is currently facing a “data wall,” where the supply of high-quality, human-generated data is beginning to run dry.

Synthetic Data vs. Organic Data

As AI models consume the bulk of the public internet, developers are turning to “synthetic data”—data generated by other AI models. This creates a fascinating feedback loop. If a model consumes too much of its own output without “fresh” organic data, it risks “model collapse,” a technical state where the AI becomes repetitive and loses its grasp on reality. This highlights the nuance of digital polyphagia: it isn’t just about consuming more; it’s about consuming the right diversity of information to maintain system health.
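Model collapse can be illustrated with a deliberately tiny toy. In the Python sketch below, the “model” is nothing more than the frequency of tokens in its training data (a strong simplifying assumption), and each generation is trained only on samples drawn from the previous generation’s output. Because rare tokens can fail to be sampled, diversity can only shrink: once a token disappears, no later generation can produce it again.

```python
import random

def collapse_demo(vocab_size=50, samples=50, generations=30, seed=0):
    """Toy model collapse: each generation learns only from the previous
    generation's output. Returns the number of distinct tokens per generation.

    Assumption for illustration: "training" = copying the empirical token
    distribution, and "generating" = sampling from it with replacement.
    """
    rng = random.Random(seed)
    data = list(range(vocab_size))   # generation 0: every token appears once
    distinct = [len(set(data))]
    for _ in range(generations):
        # Sample new "synthetic" data from the current model's output only.
        data = rng.choices(data, k=samples)
        distinct.append(len(set(data)))
    return distinct

history = collapse_demo()
print(history[0], history[-1])
```

Real model collapse involves far richer dynamics, but this one-way loss of a distribution’s rare “tails” is one of the central concerns in research on the effect, and it is why fresh organic data acts as a corrective.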

The Quality-Quantity Dilemma and Data Provenance

To satisfy the hunger for data, tech companies are deploying more sophisticated scraping tools and crawlers. This has led to a technical and legal arms race regarding “data provenance.” How do we track what a model has eaten? The industry is moving toward “curated consumption,” where the focus shifts from the volume of data (petabytes) to the density of information (high-quality, peer-reviewed, and verified datasets). In this sense, the “diet” of the AI is becoming as important as the size of its “appetite.”
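One simple building block for tracking “what a model has eaten” is a provenance manifest: a content fingerprint recorded alongside each document’s source and license at ingestion time. The Python sketch below is hypothetical (the record fields and the input format are assumptions, not any particular industry standard); it uses SHA-256 hashes from the standard library both as fingerprints and to drop exact duplicates.

```python
import hashlib
from dataclasses import dataclass

@dataclass(frozen=True)
class ProvenanceRecord:
    sha256: str    # content fingerprint of the document
    source: str    # where it came from (URL, dataset name, ...)
    license: str   # usage terms recorded at ingestion time

def build_manifest(documents):
    """documents: iterable of (text, source, license) tuples (hypothetical schema).

    Returns one record per unique text; exact duplicates are skipped so the
    same content is not ingested twice. Near-duplicates are NOT detected here.
    """
    seen = set()
    manifest = []
    for text, source, license_ in documents:
        digest = hashlib.sha256(text.encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        manifest.append(ProvenanceRecord(digest, source, license_))
    return manifest

docs = [
    ("The quick brown fox.", "https://example.com/a", "CC-BY-4.0"),
    ("A peer-reviewed abstract.", "https://example.com/journal", "proprietary"),
    ("The quick brown fox.", "https://example.org/mirror", "unknown"),
]
manifest = build_manifest(docs)
for record in manifest:
    print(record.source, record.license, record.sha256[:12])
```

Exact hashing only catches identical copies; catching lightly edited near-duplicates requires fuzzier techniques, which is part of why curation at scale is hard.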

Mitigating the Hunger: Efficiency, Optimization, and Edge Computing

The industry realizes that perpetual growth in consumption is unsustainable. Engineers are now looking for ways to make technology more “metabolically efficient”—performing the same high-level tasks with a fraction of the resources.

Algorithmic Pruning and Quantization

One of the most promising areas of tech research is “model compression.” Techniques like pruning (removing parameters that contribute little to the output) and quantization (reducing the numerical precision used in calculations, for example from 32-bit floating point to 8-bit integers) allow models to run on smaller, less power-hungry hardware. By making the models “leaner,” we can satisfy the demand for intelligence without requiring a massive data center for every interaction. This is the digital equivalent of increasing metabolic efficiency.
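Both techniques can be sketched in a few lines of plain Python. This is a simplified illustration assuming unstructured magnitude pruning (zeroing the smallest weights) and symmetric per-tensor 8-bit quantization; real toolchains use more sophisticated variants, and the weight values here are made up.

```python
def magnitude_prune(weights, fraction):
    """Zero out the given fraction of weights with the smallest magnitude."""
    k = int(len(weights) * fraction)
    if k == 0:
        return list(weights)
    # Indices of the k smallest-magnitude weights.
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]

def quantize_int8(weights):
    """Symmetric per-tensor int8 quantization: w ~= scale * q, q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale

def dequantize(q, scale):
    """Map integer codes back to approximate real-valued weights."""
    return [scale * v for v in q]

w = [0.8, -0.05, 0.3, -1.27, 0.01, 0.6]
pruned = magnitude_prune(w, 0.5)   # drops 0.01, -0.05 and 0.3
q, s = quantize_int8(pruned)
print(pruned)  # [0.8, 0.0, 0.0, -1.27, 0.0, 0.6]
print(q)       # [80, 0, 0, -127, 0, 60]
```

Stored as 8-bit integers plus one scale factor, the weights take a quarter of the memory of 32-bit floats, and the pruned zeros can be skipped or compressed further. The cost is a rounding error of at most half the scale factor per weight.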

The Rise of Edge Computing

Instead of sending every request to a massive, power-hungry cloud server (centralized polyphagia), the industry is moving toward “Edge AI.” This involves processing data locally on devices like smartphones, laptops, and IoT sensors. By “decentralizing the appetite,” we ease the strain on the global digital infrastructure and cut latency, since data no longer has to travel to a distant server and back. However, this requires a new generation of low-power chips capable of performing complex reasoning without draining a battery in minutes.

The Future of Resource-Hungry Tech: Toward Neuromorphic Computing

As we look toward the next decade, the goal is to move away from the “brute force” consumption of current silicon-based systems and toward more elegant architectures inspired by the most efficient “computer” we know: the human brain.

Neuromorphic Engineering and Biomimicry

The human brain can perform complex reasoning on about 20 watts of power, roughly the amount needed to light a dim bulb. In contrast, a single high-end AI server can draw several thousand watts, and a full rack many times that. Neuromorphic computing seeks to mimic the “spiking” nature of biological neurons, which communicate through brief, discrete pulses and do most of their work only when they fire. This “event-driven” architecture could ease the problem of digital polyphagia by ensuring that the system only “eats” when it is actually working, rather than staying in a high-consumption state constantly.
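The “event-driven” idea can be illustrated with the leaky integrate-and-fire neuron, a standard textbook abstraction of a spiking neuron. The Python sketch below is a simplified discrete-time version with arbitrary parameter values (the threshold, leak factor, and input are assumptions); it is not how any specific neuromorphic chip is programmed.

```python
def lif_neuron(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Discrete-time leaky integrate-and-fire neuron.

    Each step the membrane potential v decays by `leak`, adds the input,
    and if it reaches `threshold` the neuron spikes and v resets.
    Returns the time steps at which spikes occurred.
    """
    v = reset
    spikes = []
    for t, i_t in enumerate(input_current):
        v = leak * v + i_t
        if v >= threshold:
            spikes.append(t)
            v = reset
    return spikes

# Mostly silent input with a short burst of activity in the middle.
inputs = [0.0] * 10 + [0.6] * 5 + [0.0] * 10
spike_times = lif_neuron(inputs)
print(spike_times, len(spike_times), len(inputs))  # [11, 13] 2 25
```

Only 2 of the 25 time steps produce a spike. In an event-driven design, downstream computation is triggered only by those spikes, which is where the potential energy savings come from.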

Balancing Innovation with Scarcity

The ultimate challenge of the next era of technology will be managing the tension between our limitless ambition and our finite resources. Polyphagia in a biological sense is often a symptom of an underlying condition; in the tech world, it is a symptom of our transition into a truly intelligent digital age. Whether we can continue to feed this hunger through better hardware, more efficient algorithms, or entirely new computing paradigms will define the trajectory of the 21st century.

In conclusion, polyphagia in a tech context is the defining characteristic of modern AI and cloud systems: an insatiable demand for the nutrients of the information age, data and electricity. While this hunger drives unprecedented innovation, the future of the industry depends on our ability to move from “gluttonous” growth to sustainable, efficient evolution. The goal is no longer just to build the biggest, hungriest model, but to build the smartest, most efficient one.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.