For centuries, the question of “what planets can we see tonight” was answered by consulting paper almanacs, studying complex orbital mechanics, or simply possessing an encyclopedic knowledge of the night sky. Today, that question has been transformed by a technological renaissance. We no longer rely solely on our naked eyes or rudimentary glass lenses; instead, we leverage a sophisticated ecosystem of mobile applications, artificial intelligence, automated hardware, and digital signal processing to peel back the veil of the cosmos.

The intersection of astronomy and consumer technology has democratized the heavens. What was once the exclusive domain of professional observatories is now accessible to anyone with a smartphone and a basic understanding of modern digital tools. This article explores the technological landscape that allows us to identify, track, and visualize the planets in our solar system with unprecedented precision.

The Digital Sky: Mobile Ecosystems and AR Integration

The most immediate answer to finding planets tonight sits in most people’s pockets: the smartphone. The evolution of mobile hardware, specifically the integration of magnetometers, gyroscopes, and high-speed processors, has birthed a generation of sky-guide applications that have fundamentally changed how we interact with the celestial sphere.

Real-Time Sky Mapping and AI Recognition

Modern astronomy apps utilize Augmented Reality (AR) to overlay a digital map of the universe onto the live feed from a smartphone camera. When you point your device at a bright point in the sky, the software combines your GPS coordinates, the current time, and the device’s orientation with celestial catalogs and planetary ephemerides to work out which object lies in that direction. This positional lookup, rather than any analysis of the light itself, is how most apps tell a planet from a star, although the old visual rule still helps: stars tend to flicker (scintillation), while planets usually shine with a steadier glow.
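As a rough illustration of that lookup, the sketch below converts a catalog position (right ascension and declination) into the altitude and azimuth that an app could compare against the phone’s orientation. It is a simplified sketch under stated assumptions, not any particular app’s method: the function names are hypothetical, the sidereal-time formula is a low-precision approximation, and a real app would first obtain the planet’s coordinates from an ephemeris.

```python
import math
from datetime import datetime, timezone

# Reference epoch J2000.0, treated here as 2000-01-01 12:00 UTC (approximation).
J2000 = datetime(2000, 1, 1, 12, 0, 0, tzinfo=timezone.utc)

def local_sidereal_time_deg(when_utc, lon_deg):
    """Approximate local sidereal time in degrees (east longitude positive)."""
    days = (when_utc - J2000).total_seconds() / 86400.0
    gmst = 280.46061837 + 360.98564736629 * days  # low-precision GMST
    return (gmst + lon_deg) % 360.0

def alt_az(ra_deg, dec_deg, lat_deg, lon_deg, when_utc):
    """Return (altitude, azimuth) in degrees; azimuth runs from north through east."""
    lst = local_sidereal_time_deg(when_utc, lon_deg)
    ha = math.radians((lst - ra_deg) % 360.0)  # hour angle of the object
    dec, lat = math.radians(dec_deg), math.radians(lat_deg)
    sin_alt = (math.sin(dec) * math.sin(lat)
               + math.cos(dec) * math.cos(lat) * math.cos(ha))
    alt = math.asin(max(-1.0, min(1.0, sin_alt)))  # clamp against rounding
    az = math.atan2(
        -math.cos(dec) * math.sin(ha),
        math.sin(dec) * math.cos(lat) - math.cos(dec) * math.sin(lat) * math.cos(ha),
    )
    return math.degrees(alt), math.degrees(az) % 360.0

def is_above_horizon(ra_deg, dec_deg, lat_deg, lon_deg, when_utc):
    """True when the object's geometric altitude is positive (refraction ignored)."""
    return alt_az(ra_deg, dec_deg, lat_deg, lon_deg, when_utc)[0] > 0.0
```

An object whose computed altitude is positive is above the local horizon, and the altitude and azimuth pair tells the app where on the screen to draw its label.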

This technological leap solves the primary barrier for beginners: identification. By utilizing computer vision, some advanced apps can even “plate solve” a photo taken by the user, identifying not just the planets but distant nebulae and star clusters within seconds.

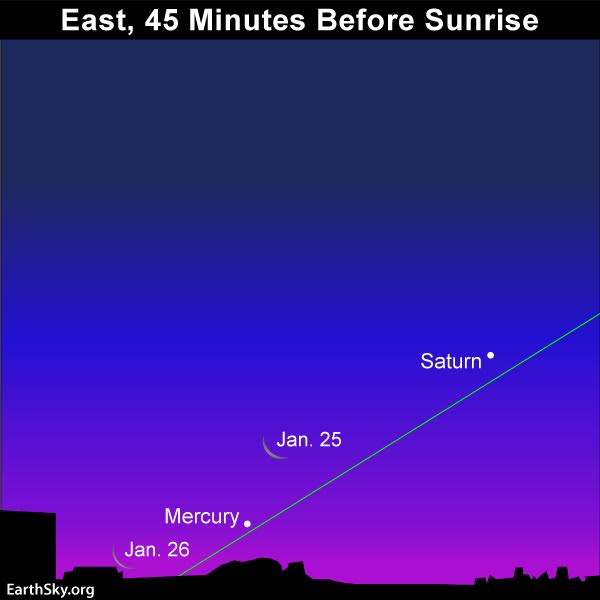

Integration with IoT and Wearable Tech

The tech stack for planetary observation has expanded into the Internet of Things (IoT). Smartwatches now provide haptic feedback and real-time alerts for “conjunctions”—events where two or more planets appear close together in the sky. These wearables pull data from APIs (Application Programming Interfaces) hosted by organizations like NASA’s Jet Propulsion Laboratory (JPL), ensuring that the user is notified the moment Jupiter or Saturn becomes visible above their local horizon. This seamless integration ensures that technology acts as a silent assistant, constantly monitoring orbital mechanics so the user doesn’t have to.
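The core check behind a conjunction alert can be sketched in a few lines. The example below computes the angular separation between two sky positions and compares it to a threshold. The function names and the 5-degree threshold are assumptions made for illustration; the planets’ coordinates would come from an ephemeris service, which is not shown here.

```python
import math

def angular_separation_deg(ra1, dec1, ra2, dec2):
    """Great-circle separation in degrees between two (RA, Dec) positions in degrees."""
    ra1, dec1, ra2, dec2 = map(math.radians, (ra1, dec1, ra2, dec2))
    # Haversine form, which stays numerically stable for small separations.
    hav = (math.sin((dec2 - dec1) / 2) ** 2
           + math.cos(dec1) * math.cos(dec2) * math.sin((ra2 - ra1) / 2) ** 2)
    return math.degrees(2 * math.asin(min(1.0, math.sqrt(hav))))

def should_alert(planet_a, planet_b, threshold_deg=5.0):
    """planet_a and planet_b are (ra_deg, dec_deg) tuples; threshold is a hypothetical choice."""
    return angular_separation_deg(*planet_a, *planet_b) <= threshold_deg
```

A wearable app might run a check like this on each data refresh and trigger a notification only when the result changes from False to True.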

Smart Hardware: The Rise of the Automated Telescope

The traditional telescope, once a purely mechanical instrument requiring manual alignment and “star hopping,” has undergone a digital transformation. The “Smart Telescope” is perhaps the most significant gadget trend in the hobbyist space over the last decade.

Automated Alignment and Plate Solving

Newer devices such as the Unistellar eVscope or the ZWO Seestar use “plate solving.” In this process, the telescope’s internal camera takes a picture of the star field, compares it to an onboard database of millions of stars, and calculates its exact orientation. Within minutes of being turned on, these devices can autonomously orient themselves. If a user wants to see Mars, they simply select “Mars” on a connected tablet, and the telescope’s robotic motors (controlled by precise firmware) slew the optics to the exact coordinates.
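The matching idea behind plate solving can be sketched with a toy example. The code below identifies three detected stars by comparing the shape of the triangle they form against every triangle in a small catalog, because side-length ratios stay the same however the image is rotated, scaled, or shifted. This is a minimal sketch under strong assumptions (a flat sky, exactly three stars, a tiny catalog, hypothetical function names) and not the algorithm used by any specific telescope.

```python
import math
from itertools import combinations

def triangle_signature(p, q, r):
    """Return (ratios, order): side-length ratios plus vertex indices
    sorted by the length of the side opposite each vertex."""
    pts = [p, q, r]
    opposite = []
    for i in range(3):
        a, b = pts[(i + 1) % 3], pts[(i + 2) % 3]
        opposite.append((math.dist(a, b), i))
    opposite.sort()
    longest = opposite[2][0]
    ratios = (opposite[0][0] / longest, opposite[1][0] / longest)
    return ratios, [idx for _, idx in opposite]

def solve(image_stars, catalog, tol=1e-3):
    """Map each image star index to a catalog name, or return None.
    catalog is a dict of name -> (x, y). Mirror images are not rejected."""
    img_ratios, img_order = triangle_signature(*image_stars)
    best = None
    for names in combinations(catalog, 3):
        cat_ratios, cat_order = triangle_signature(*(catalog[n] for n in names))
        err = math.dist(img_ratios, cat_ratios)
        if err < tol and (best is None or err < best[0]):
            mapping = {img_order[k]: names[cat_order[k]] for k in range(3)}
            best = (err, mapping)
    return None if best is None else best[1]
```

Once the stars are identified, the known catalog positions give the image’s pointing, scale, and rotation, which is what lets the mount work out where it is aimed.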

Enhancement Through Digital Sensors

Unlike traditional telescopes where the human eye is the primary sensor, smart telescopes use highly sensitive CMOS (Complementary Metal-Oxide-Semiconductor) sensors. These sensors collect photons across many short exposures that the software aligns and combines over several minutes, a process known as “live stacking.” Where the human eye might see only a faint, featureless dot when looking at a distant planet like Neptune, a stacked digital image can bring out its blue color, and on brighter targets such as Jupiter it can reveal cloud bands and moons, all processed in near real time. This technological shift has moved astronomy from a passive viewing experience to a high-tech data acquisition process.
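The accumulation step of live stacking can be illustrated with a running average. The sketch below keeps a per-pixel mean that is updated as each new frame arrives, so the displayed image improves without storing every exposure. The class name is hypothetical, frames are plain nested lists, and the alignment that real devices perform before combining frames is deliberately left out.

```python
class LiveStack:
    """Running per-pixel mean of incoming frames (alignment not included)."""

    def __init__(self):
        self.count = 0
        self.mean = None

    def add(self, frame):
        """Fold one frame (a list of rows of pixel values) into the stack."""
        self.count += 1
        if self.mean is None:
            self.mean = [[float(v) for v in row] for row in frame]
        else:
            for mean_row, row in zip(self.mean, frame):
                for x, value in enumerate(row):
                    # Incremental mean: shift the old mean toward the new sample.
                    mean_row[x] += (value - mean_row[x]) / self.count
        return self.mean
```

For uncorrelated noise, averaging N frames reduces the noise by roughly the square root of N, which is why the picture keeps getting cleaner the longer the stack runs.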

Digital Signal Processing and Astrophotography Software

The quest to see “what planets are visible” often leads to the desire to capture them. However, the Earth’s atmosphere acts like a turbulent liquid, distorting the light from distant planets. This is where specialized software and high-speed digital sensors become essential.

Lucky Imaging and Frame Stacking Algorithms

To see the planets clearly, tech-savvy observers use a technique called “lucky imaging.” Instead of taking a single photo, a high-speed planetary camera records video, often at tens to hundreds of frames per second, until it has collected thousands of frames. Specialized software like AutoStakkert! or RegiStax then analyzes every single frame.

The software’s algorithms grade each frame by sharpness, which reflects the “seeing” (atmospheric stability) at that instant, then discard the blurriest frames (often around 90%) and “stack” the sharpest remainder. This digital reconstruction allows an amateur using a 4-inch telescope to produce images of Saturn’s rings that rival the views from professional observatories of the 1970s. It is a triumph of software engineering over physical limitations.
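The grade-and-keep step can be sketched as follows. The example scores each frame with a simple gradient-energy measure, keeps the sharpest fraction, and averages the survivors. The metric, the function names, and the default fraction are assumptions chosen for illustration; tools like AutoStakkert! use their own quality estimators and also align frames before stacking, which this sketch omits.

```python
def sharpness(frame):
    """Gradient energy: sum of squared differences between neighboring pixels."""
    h, w = len(frame), len(frame[0])
    total = 0.0
    for y in range(h):
        for x in range(w):
            if x + 1 < w:
                total += (frame[y][x + 1] - frame[y][x]) ** 2
            if y + 1 < h:
                total += (frame[y + 1][x] - frame[y][x]) ** 2
    return total

def lucky_stack(frames, keep_fraction=0.1):
    """Average the sharpest keep_fraction of frames (at least one frame)."""
    n_keep = max(1, int(len(frames) * keep_fraction))
    best = sorted(frames, key=sharpness, reverse=True)[:n_keep]
    h, w = len(best[0]), len(best[0][0])
    return [[sum(f[y][x] for f in best) / n_keep for x in range(w)]
            for y in range(h)]
```

Blurred frames have softer edges and therefore lower gradient energy, so ranking by this score pushes the steadiest moments of the atmosphere to the front of the list.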

Post-Processing and AI-Driven Clarification

Once a planetary image is stacked, the data undergoes rigorous post-processing. Wavelet processing, a mathematical tool from signal processing, splits the image into layers of detail at different scales so that each layer can be boosted separately while keeping noise amplification in check. Recently, AI-driven sharpening tools (such as those from Topaz Labs or specialized astronomical AI plugins) have been introduced. Some of the astronomy-specific tools are reportedly trained on high-resolution images from the Hubble Space Telescope and the James Webb Space Telescope (JWST), allowing them to intelligently sharpen details or remove digital artifacts, creating a crisp, clear representation of what is visible in the sky tonight.
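To make the idea of detail layers concrete, here is a one-dimensional sketch of the “à trous” wavelet scheme often associated with this kind of sharpening. It repeatedly smooths the signal, records the difference at each scale as a detail layer, and rebuilds the signal with a gain applied to each layer. The function names and gain values are illustrative assumptions, and the code does not claim to reproduce what RegiStax or any other program does internally.

```python
def smooth(signal, step):
    """B3-spline smoothing with gaps of `step` samples; edges are clamped."""
    taps = ((-2, 1 / 16), (-1, 4 / 16), (0, 6 / 16), (1, 4 / 16), (2, 1 / 16))
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for offset, weight in taps:
            j = min(max(i + offset * step, 0), n - 1)
            acc += weight * signal[j]
        out.append(acc)
    return out

def wavelet_sharpen(signal, gains):
    """Rebuild the signal with gains[k] applied to detail layer k (finest first)."""
    current = [float(v) for v in signal]
    boosted = [0.0] * len(current)
    for level, gain in enumerate(gains):
        smoothed = smooth(current, 2 ** level)
        for i in range(len(current)):
            boosted[i] += gain * (current[i] - smoothed[i])  # detail at this scale
        current = smoothed
    # Add the boosted details back onto the remaining coarse residual.
    return [b + c for b, c in zip(boosted, current)]
```

With every gain set to 1 the original signal comes back unchanged; raising the gain on the finest layers steepens edges, and lowering it on a noisy layer suppresses grain at that scale.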

Networked Astronomy and Citizen Science

Technology has not only improved how we see planets but also how we share that data. We are currently living in the era of networked astronomy, where individual data points contribute to a global understanding of our solar system.

Global Telescope Networks and Remote Access

The “Tech” of tonight’s sky isn’t limited to what you have in your backyard. Cloud-based platforms now allow users to rent time on massive research-grade telescopes located in dark-sky sites like the Atacama Desert in Chile or the outback of Australia. Through a web browser, a user in London can control a telescope in the Southern Hemisphere to see planets that sit too low in their own sky, or below their horizon at that hour, to observe well. This “Astronomy as a Service” model uses low-latency networking and secure remote-control software to bring the entire sky to a single screen.
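The advantage of a remote site can be estimated with one line of arithmetic: the highest altitude an object reaches is 90 degrees minus the difference between the observer’s latitude and the object’s declination. The sketch below applies that formula; the function name is hypothetical, the site latitudes are rounded approximations, and atmospheric refraction is ignored.

```python
def max_altitude_deg(lat_deg, dec_deg):
    """Altitude at upper culmination; a negative value means the object never rises."""
    return 90.0 - abs(lat_deg - dec_deg)

# Approximate latitudes, rounded for illustration.
SITES = {"London": 51.5, "Atacama": -24.0}

def compare_sites(dec_deg):
    """Return the peak altitude of an object at each example site."""
    return {name: max_altitude_deg(lat, dec_deg) for name, lat in SITES.items()}
```

For a planet at a declination of -25 degrees, this gives a peak of only 13.5 degrees above the horizon in London but 89 degrees, nearly overhead, from the Atacama.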

Contributing Data to Professional Space Agencies

The software used by amateurs often integrates directly with professional databases. When a hobbyist captures an image of a storm on Jupiter using their digital setup, they can upload that data to NASA’s JunoCam website. The mission team has used such citizen-provided images to help choose imaging targets for the camera aboard the Juno spacecraft at Jupiter. This creates a feedback loop where consumer-grade gadgets and software become valuable contributors to professional space exploration.

The Future of Planetary Observation Gadgets

As we look toward the next decade, the technology used to answer “what planets can we see tonight” is poised for another leap. We are moving beyond 2D screens and toward immersive, data-rich environments.

VR Space Exploration and Real-Time Telemetry

Virtual Reality (VR) and Mixed Reality (MR) headsets are beginning to incorporate real-time astronomical data. Imagine wearing a headset that labels the planets in your actual sky and then allows you to “zoom” in, switching from your local camera view to a high-resolution 3D model powered by the latest telemetry from NASA probes. This blend of real-world observation and digital simulation represents the next frontier in educational technology.

Quantum Sensing and Enhanced Optics

On the hardware side, the integration of quantum sensors and adaptive optics, technologies previously reserved for billion-dollar projects like the Extremely Large Telescope (ELT), is beginning to trickle down to the high-end consumer market. Adaptive optics use deformable mirrors to counteract atmospheric turbulence in real time, largely canceling out the twinkling of stars and the blurring of planets. As the cost of these micro-actuators and high-speed processing units drops, the view from a backyard in a suburban neighborhood could come much closer to the clarity of space-based observatories.

The question of “what planets can we see tonight” has evolved from a simple observation into a sophisticated technological exercise. Through the synergy of mobile apps, AI-driven hardware, advanced signal processing, and global networks, we have turned our devices into windows that look billions of miles into the void. Technology has not only made the planets visible; it has made them intimate, detailed, and accessible to all. Whether through an AR interface on a phone or an automated smart telescope, the night sky is no longer a mystery to be solved, but a digital landscape to be explored.
