For millennia, spoken language was defined as the primary mode of human communication, a system of acoustic signals used to convey meaning, emotion, and social intent. However, in the modern technological landscape, the definition of “spoken language” has undergone a radical transformation. It is no longer a strictly biological phenomenon. Today, spoken language represents the ultimate frontier in Human-Computer Interaction (HCI), serving as a complex data set that engineers and data scientists are decoding to build more intuitive, responsive, and “human” artificial intelligence.

In the tech sector, spoken language is viewed through the lens of Natural Language Processing (NLP) and Speech-to-Text (STT) technologies. It is the bridge between human thought and machine execution. As we transition from a screen-first to a voice-first digital world, understanding what spoken language is—and how technology replicates it—is essential for understanding the future of software, hardware, and AI tools.

The Digital Evolution of Human Speech

To a technologist, spoken language is more than just words; it is a stream of unstructured data. Historically, computers were designed to understand structured data—binary code, spreadsheets, and rigid programming languages. The evolution of tech has been a journey toward teaching machines to interpret the messy, fluid, and context-dependent nature of human speech.

From Acoustic Waves to Data Packets

The technical process of handling spoken language begins with signal processing. When a user speaks into a device, the microphone captures acoustic pressure waves and converts them into an analog electrical signal, which is then sampled and digitized. The resulting audio is split into short, usually overlapping segments called frames, typically 20 to 30 milliseconds long.
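As a rough illustration of the framing step, the sketch below slices a list of samples into overlapping frames. The function name `frame_signal`, the 16 kHz sample rate, and the 25 ms frame with a 10 ms hop are assumptions chosen for the example, not values taken from any particular product.

```python
def frame_signal(samples, sample_rate, frame_ms=25.0, hop_ms=10.0):
    """Split a sequence of audio samples into overlapping frames."""
    frame_len = int(sample_rate * frame_ms / 1000)  # samples per frame
    hop_len = int(sample_rate * hop_ms / 1000)      # samples between frame starts
    frames = []
    start = 0
    # Keep only complete frames; a trailing partial frame is dropped.
    while start + frame_len <= len(samples):
        frames.append(samples[start:start + frame_len])
        start += hop_len
    return frames


# One second of silence at 16 kHz stands in for real microphone input.
audio = [0.0] * 16000
frames = frame_signal(audio, sample_rate=16000)
print(len(frames), len(frames[0]))  # prints "98 400"
```

Overlapping the frames means a sound that straddles a frame boundary still appears whole in a neighboring frame.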

Modern tech tools use Digital Signal Processing (DSP) to suppress background noise and emphasize the frequency range of the human voice. This is the foundation of every smart assistant and transcription tool we use today. By converting each frame of audio into a numerical feature vector, such as a frequency spectrum or a set of mel-frequency cepstral coefficients (MFCCs), developers can create systems that “hear” with increasing precision, laying the groundwork for more complex analysis.
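One common way to turn a frame of audio into a vector is to compute its magnitude spectrum. The sketch below does this with a Hann window and a naive discrete Fourier transform in plain Python. The name `magnitude_spectrum` and the synthetic sine-wave input are assumptions for illustration, and production systems would use an optimized FFT library instead.

```python
import cmath
import math


def magnitude_spectrum(frame):
    """Return the magnitudes of the non-negative frequency bins of one frame."""
    n = len(frame)
    # A Hann window tapers the frame edges to reduce spectral leakage.
    windowed = [
        x * (0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)))
        for i, x in enumerate(frame)
    ]
    spectrum = []
    for k in range(n // 2 + 1):
        # Naive O(n^2) DFT: correlate the frame with a complex sinusoid at bin k.
        total = sum(
            windowed[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n)
        )
        spectrum.append(abs(total))
    return spectrum


# Synthetic frame: a pure tone completing exactly 8 cycles in 64 samples.
n = 64
frame = [math.sin(2 * math.pi * 8 * t / n) for t in range(n)]
spec = magnitude_spectrum(frame)
peak_bin = max(range(len(spec)), key=spec.__getitem__)
print(peak_bin)  # the strongest bin is the tone's frequency bin
```

Each frame becomes a list of numbers describing how much energy sits at each frequency, which is the kind of vector later stages consume.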

The Role of Natural Language Processing (NLP)

Once the audio is digitized, the tech moves from the realm of physics into the realm of linguistics-based computation. This is where NLP takes over. NLP is the subfield of AI that focuses on the interaction between computers and human language.

In the context of spoken language, NLP involves several layers:

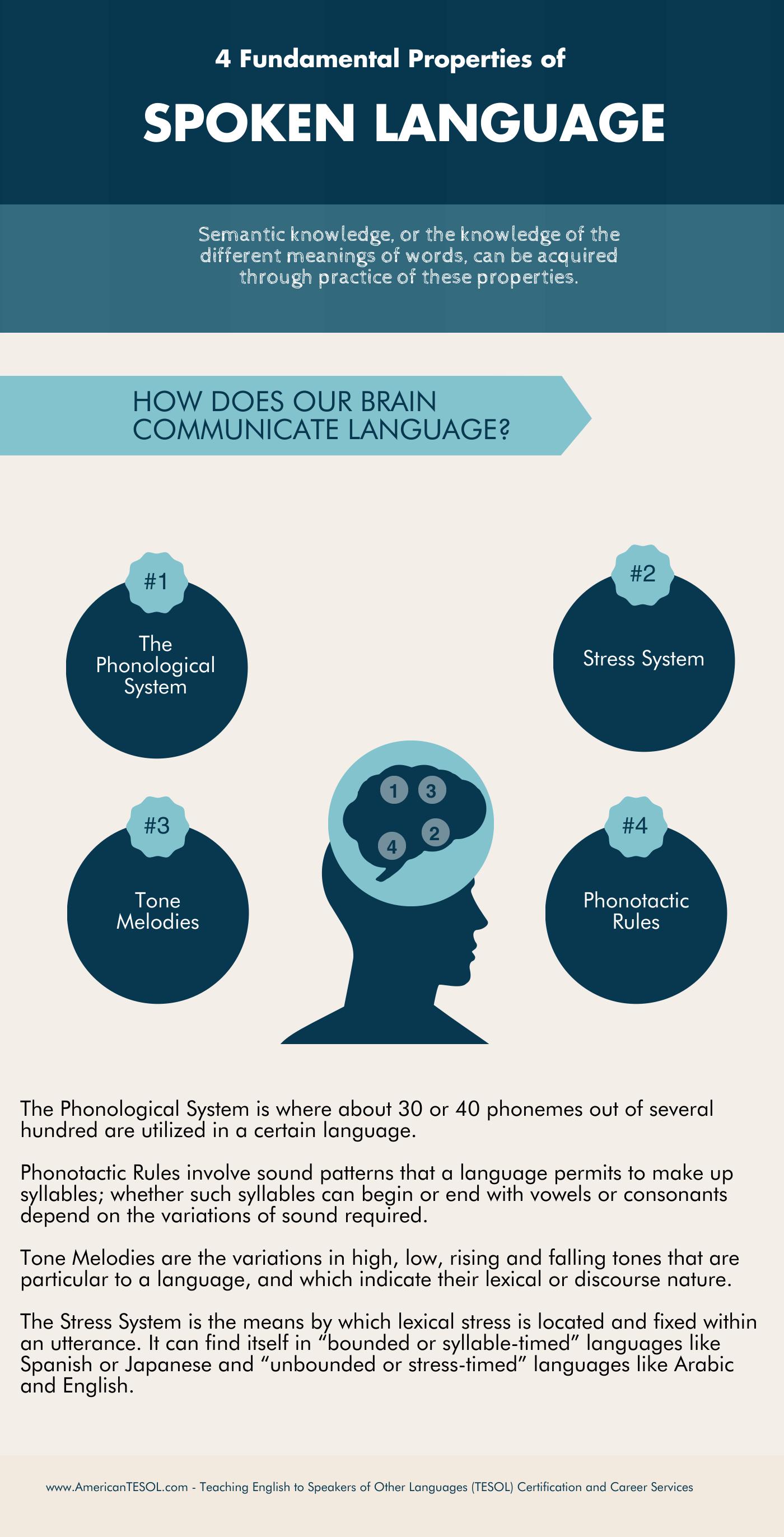

- Phonology: Recognizing the distinct sounds (phonemes) that make up a language.

- Morphology: Understanding how the smallest meaningful units (morphemes, such as roots, prefixes, and suffixes) combine to form words.

- Syntax: Analyzing the arrangement of words to create meaningful sentences.

- Semantics: Determining the literal meaning of those sentences.

Deep learning models now let software perform these analyses quickly enough for real-time interaction that feels natural to the human user.

Large Language Models (LLMs) and the Simulation of Spoken Intent

The most significant breakthrough in the technology of spoken language has been the rise of Large Language Models (LLMs), such as GPT-4 and its successors. These models have shifted the focus from mere transcription to the simulation of intent and conversation.

Understanding Context and Pragmatics in AI

Spoken language is notoriously difficult for machines because it relies heavily on “pragmatics”—the social context that changes the meaning of words. For example, the phrase “That’s great” can be an expression of genuine joy or biting sarcasm depending on tone and context.

Modern AI tools are trained on massive datasets to recognize these patterns. Transformer architectures use a mechanism called “attention” to weigh every part of a conversation against every other part at once. This allows the AI to maintain the thread of a spoken dialogue, drawing on what was said minutes ago (as long as it still fits within the model's context window) and adjusting its responses accordingly. This ability to handle context is what transforms a simple voice command into a true “spoken language” exchange.
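The attention idea can be sketched in a few lines. Below is a minimal, single-head version of scaled dot-product attention in plain Python. The tiny two-dimensional vectors are made-up values for illustration, and real models add learned projections, multiple heads, and much larger dimensions.

```python
import math


def softmax(xs):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)
        # Output is the weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Hypothetical example: the query resembles the first key far more than the second.
keys = [[1.0, 0.0], [0.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0]]
result = attention([[5.0, 0.0]], keys, values)
print(result)  # the output is dominated by the first value vector
```

Because every position is compared with every other position in one pass, an earlier remark in a dialogue can directly influence how a later one is interpreted.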

The Shift from Rule-Based to Neural Synthesis

In the early days of voice tech, systems were rule-based. Developers had to program specific “if-then” scenarios for every possible spoken phrase. This was incredibly limiting. Today, we use neural synthesis.

Neural networks allow machines to learn the statistical likelihood of word sequences. When an AI “speaks” back to us, it isn’t just playing back recorded clips; it is generating speech using neural Text-to-Speech (TTS) engines that mimic human prosody—the rhythm, stress, and intonation of speech. This technological leap has made spoken language the primary interface for everything from customer service bots to sophisticated AI companions.
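To make “statistical likelihood of word sequences” concrete, here is a deliberately simple stand-in: a bigram model that counts which word follows which. This is not a neural network. Neural models learn similar probabilities with trained parameters rather than a count table, and the three-sentence corpus below is invented for the example.

```python
from collections import Counter, defaultdict


def train_bigram(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def next_word_prob(counts, prev, nxt):
    """Estimate P(nxt | prev) from the counts; 0.0 if prev was never seen."""
    total = sum(counts[prev].values())
    return counts[prev][nxt] / total if total else 0.0


# Hypothetical voice commands used as training text.
corpus = ["turn on the lights", "turn off the lights", "turn on the radio"]
model = train_bigram(corpus)
print(next_word_prob(model, "turn", "on"))   # 2 of 3 sentences continue with "on"
print(next_word_prob(model, "the", "radio"))  # 1 of 3
```

A rule-based system would need an explicit entry for every phrase, whereas a statistical model assigns a probability to any sequence, including ones it has never seen verbatim.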

Voice Technology: Bridging the Gap Between Human and Machine

The hardware and software ecosystem surrounding spoken language has exploded into a multibillion-dollar industry. This niche is defined by two primary technological pillars: Speech-to-Text (STT) and Text-to-Speech (TTS).

Speech-to-Text (STT) and Semantic Understanding

STT is the “ear” of the machine. The latest generation of STT tools, such as OpenAI’s Whisper, uses large-scale weak supervision, meaning training on very large amounts of imperfectly labeled audio, to approach human-level accuracy on English and to generalize across many languages and accents. The technical challenge here is “robustness.” A robust STT engine must be able to understand a user speaking in a crowded cafe, a user with a heavy regional dialect, or a user who mumbles.
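Accuracy claims for STT are usually expressed as word error rate (WER): the number of word substitutions, deletions, and insertions needed to turn the system's output into the reference transcript, divided by the reference length. The article does not name a metric, so treating WER as the yardstick is an assumption here, and the sample sentences are invented.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # dp[i][j] = edit distance between the first i ref words and first j hyp words
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert all j hypothesis words
    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # match or substitution
            )
    return dp[len(ref)][len(hyp)] / len(ref)


# One dropped word ("the") and one substitution ("lights" -> "light") in 5 words.
wer = word_error_rate("turn on the kitchen lights", "turn on kitchen light")
print(wer)  # prints 0.4
```

Measuring WER separately on noisy recordings, accented speech, and quiet studio audio is one way to quantify the robustness described above.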

Technological progress in this area is driven by “End-to-End” (E2E) deep learning models. Older systems chained together separately built components, typically an acoustic model, a pronunciation dictionary, and a language model. E2E models instead map input audio directly to character or word-piece sequences with a single trained network. This simplifies the software architecture and can reduce latency, which helps make real-time spoken interaction possible.
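One widely used way for an E2E model to go from audio frames to characters is Connectionist Temporal Classification (CTC): the network emits a probability distribution over characters plus a special blank symbol for every frame, and decoding collapses repeats and removes blanks. The sketch below shows only that greedy decoding step on made-up probabilities. CTC is just one option, and encoder-decoder models such as Whisper generate text differently.

```python
BLANK = "_"  # placeholder symbol meaning "no character emitted in this frame"


def ctc_greedy_decode(frame_probs, alphabet):
    """Pick the most likely symbol per frame, merge repeats, drop blanks."""
    best = [
        alphabet[max(range(len(probs)), key=probs.__getitem__)]
        for probs in frame_probs
    ]
    decoded = []
    prev = None
    for symbol in best:
        # A symbol counts only when it differs from the previous frame's symbol.
        if symbol != prev and symbol != BLANK:
            decoded.append(symbol)
        prev = symbol
    return "".join(decoded)


# Hypothetical per-frame probabilities over the alphabet [blank, "h", "i"].
alphabet = [BLANK, "h", "i"]
frame_probs = [
    [0.10, 0.80, 0.10],  # h
    [0.20, 0.70, 0.10],  # h (repeat, merged)
    [0.90, 0.05, 0.05],  # blank
    [0.10, 0.10, 0.80],  # i
    [0.10, 0.20, 0.70],  # i (repeat, merged)
]
text = ctc_greedy_decode(frame_probs, alphabet)
print(text)  # prints "hi"
```

The blank symbol is what lets the model spell doubled letters: "l, blank, l" decodes to "ll", while "l, l" alone collapses to a single "l".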

Text-to-Speech (TTS) and the Quest for Emotional Resonance

If STT is the ear, TTS is the “voice.” The goal of modern TTS technology is to move past the robotic, “uncanny valley” voices of the past. Companies like ElevenLabs and Google are utilizing Generative AI to create voices that possess emotional depth.

Technically, this involves “Prosody Modeling.” By analyzing thousands of hours of human speech, AI can learn where a human would naturally pause for breath, which words they would emphasize for effect, and how their pitch would rise at the end of a question. This makes the “spoken language” of the machine increasingly difficult to distinguish from that of a human, opening up new possibilities in accessibility, entertainment, and digital media.

The Future of Conversational Interfaces

As we look toward the next decade of technology, spoken language is poised to replace the “Graphical User Interface” (GUI) as the dominant way we interact with the digital world. We are moving toward a “Zero-UI” future where our environment responds to our voice.

Multimodal AI: Language Beyond Text

The future of spoken language in tech is multimodal. This means AI will not just process the audio of what we say, but also the visual data of our facial expressions and the situational data of our environment. Imagine an AI that realizes you are frustrated not just by the words you speak, but by the increased volume of your voice and the frantic nature of your speech patterns.

Technically, this requires the integration of diverse neural networks. The “Vision” model, the “Audio” model, and the “Language” model must work in sync to provide a holistic understanding of the human user. This is the pinnacle of spoken language technology: an interface that understands the speaker’s state of mind as well as their literal commands.
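One simple way to combine separate models is “late fusion”: each model makes its own prediction, and the predictions are merged afterward. The sketch below averages per-modality probability estimates with weights. The emotion labels, the probabilities, and the equal weights are all invented for illustration, and real multimodal systems often fuse learned internal representations instead.

```python
def late_fusion(modality_probs, weights):
    """Weighted average of per-modality probability distributions."""
    labels = list(next(iter(modality_probs.values())).keys())
    total_weight = sum(weights[name] for name in modality_probs)
    return {
        label: sum(
            weights[name] * probs[label] for name, probs in modality_probs.items()
        ) / total_weight
        for label in labels
    }


# Hypothetical outputs of three separate models for the same moment in time.
predictions = {
    "audio": {"calm": 0.2, "frustrated": 0.8},     # raised volume, fast speech
    "vision": {"calm": 0.4, "frustrated": 0.6},    # tense facial expression
    "language": {"calm": 0.7, "frustrated": 0.3},  # the words alone sound neutral
}
weights = {"audio": 1.0, "vision": 1.0, "language": 1.0}
fused = late_fusion(predictions, weights)
print(max(fused, key=fused.get))  # prints "frustrated"
```

In this made-up case the words alone suggest a calm speaker, but the audio and visual cues tip the combined estimate toward frustration.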

Real-Time Translation and the End of Language Barriers

Perhaps the most exciting tech trend involving spoken language is real-time, low-latency translation. We are seeing the emergence of “Universal Translators” that were once the stuff of science fiction. Using a combination of STT, Machine Translation (MT), and TTS, some systems can now listen to one language and output another, in certain cases in a synthetic version of the speaker’s own voice, with a delay of around a second.

This involves a massive computational feat. The system cannot wait for a sentence to finish. It must begin translating while the speaker is still talking, sometimes anticipating words that have not yet been said, a technique known as “simultaneous neural machine translation.” This technology is democratizing global communication, allowing for seamless collaboration across borders and cultures.
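A well-known scheduling policy from the simultaneous translation literature is “wait-k”: the translator stays k words behind the speaker, emitting one target word for each new source word once the first k have arrived. The sketch below produces only that read/write schedule and does no actual translation. The sentence lengths and the choice of k = 2 are assumptions for the example.

```python
def wait_k_schedule(source_len, target_len, k):
    """Return the READ/WRITE action sequence of a wait-k policy."""
    actions = []
    read = 0
    for t in range(target_len):
        # Before writing target word t (0-indexed), k + t source words must
        # have been read, or the whole source if it is shorter than that.
        needed = min(k + t, source_len)
        while read < needed:
            actions.append("READ")
            read += 1
        actions.append("WRITE")
    return actions


# Hypothetical 4-word source sentence translated into 4 target words, lag k = 2.
schedule = wait_k_schedule(source_len=4, target_len=4, k=2)
print(schedule)
# ['READ', 'READ', 'WRITE', 'READ', 'WRITE', 'READ', 'WRITE', 'WRITE']
```

A smaller k lowers the delay but forces the translator to commit with less context, which is where anticipating unspoken words becomes necessary.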

Ethical Considerations in Digital Spoken Language

With the power to decode and replicate spoken language comes significant technological and ethical responsibility. As developers and users of these AI tools, we must address the risks inherent in digitizing the most personal form of human expression.

The Challenge of Deepfakes and Voice Cloning

As TTS technology becomes more advanced, “Voice Cloning” has become a major security concern. With only a few seconds of audio, an AI can create a convincing digital replica of a person’s voice. This has made “Vishing” (Voice Phishing) attacks far more dangerous: attackers can use cloned voices to try to bypass voice-based biometric security or trick employees into transferring funds.

The tech industry is responding with “Audio Watermarking” and “Liveness Detection.” Watermarking embeds a signal in AI-generated speech that is inaudible to the human ear but detectable by software, while liveness detection looks for evidence that a voice comes from a live human speaker rather than a recording or a synthesizer. The battle over the authenticity of spoken language is the new frontline of digital security.

Accessibility and Inclusivity in Voice Tech

Finally, the technology of spoken language must be inclusive. Historically, speech recognition software was trained on limited datasets, leading to poor performance for non-native speakers, people with speech impediments, or those with non-standard accents.

Modern AI research is focusing on “Inclusive Voice AI.” By diversifying training data and implementing “transfer learning,” developers are ensuring that spoken language interfaces work for everyone. Tech is not just about power; it is about empowerment. By refining the way machines understand spoken language, we are creating a more accessible world for those who cannot use traditional keyboards or screens, ensuring that the future of technology is as diverse as the human voice itself.
