In the rapidly evolving landscape of software development and digital product design, the difference between a successful application and a forgotten one often comes down to User Experience (UX). As tech stacks become more complex and user expectations skyrocket, developers and product managers need reliable, efficient methods to ensure their interfaces are intuitive. This is where Heuristic Evaluation comes into play.

A heuristic evaluation is a systematic inspection of a product’s user interface, intended to uncover usability issues that may hinder the user journey. Unlike broad market research, it is a focused expert review: evaluators measure an interface against a set of established “rules of thumb,” or heuristics. By identifying friction points early in the development lifecycle, tech teams can avoid costly redesigns and improve user retention.

Understanding Heuristic Evaluation in the Modern Tech Stack

To appreciate the value of heuristic evaluation, one must first understand its position within the broader field of Human-Computer Interaction (HCI). While automated testing tools can find broken links or slow load times, they often fail to identify why a user feels confused by a navigation menu or why a specific workflow feels “clunky.”

Defining the Methodology

Heuristic evaluation was popularized in the early 1990s by Jakob Nielsen and Rolf Molich. At its core, it is an “inspection method” for computer software that helps identify usability problems in the user interface (UI) design. Evaluators—typically UX experts or seasoned developers—systematically go through the interface and compare it against a set of predefined principles.

In the modern tech ecosystem, this process fits naturally into Agile and DevOps workflows. Because it does not require recruiting external participants (unlike traditional user testing), it can be performed quickly and iteratively, which makes it popular with fast-moving software startups and enterprise-level tech firms alike.

The Difference Between Heuristic Evaluation and User Testing

A common misconception in tech circles is that heuristic evaluation is a replacement for user testing. In reality, they are two sides of the same coin. User testing involves observing real people as they interact with a product to see where they struggle. Heuristic evaluation, however, relies on expert knowledge to predict where those struggles will occur based on decades of psychological and design research.

While user testing is “behavioral,” heuristic evaluation is “diagnostic.” It allows tech teams to “clean up” the obvious interface violations before putting the product in front of actual users, ensuring that the user testing phase can focus on deeper, more nuanced issues rather than basic navigation errors.

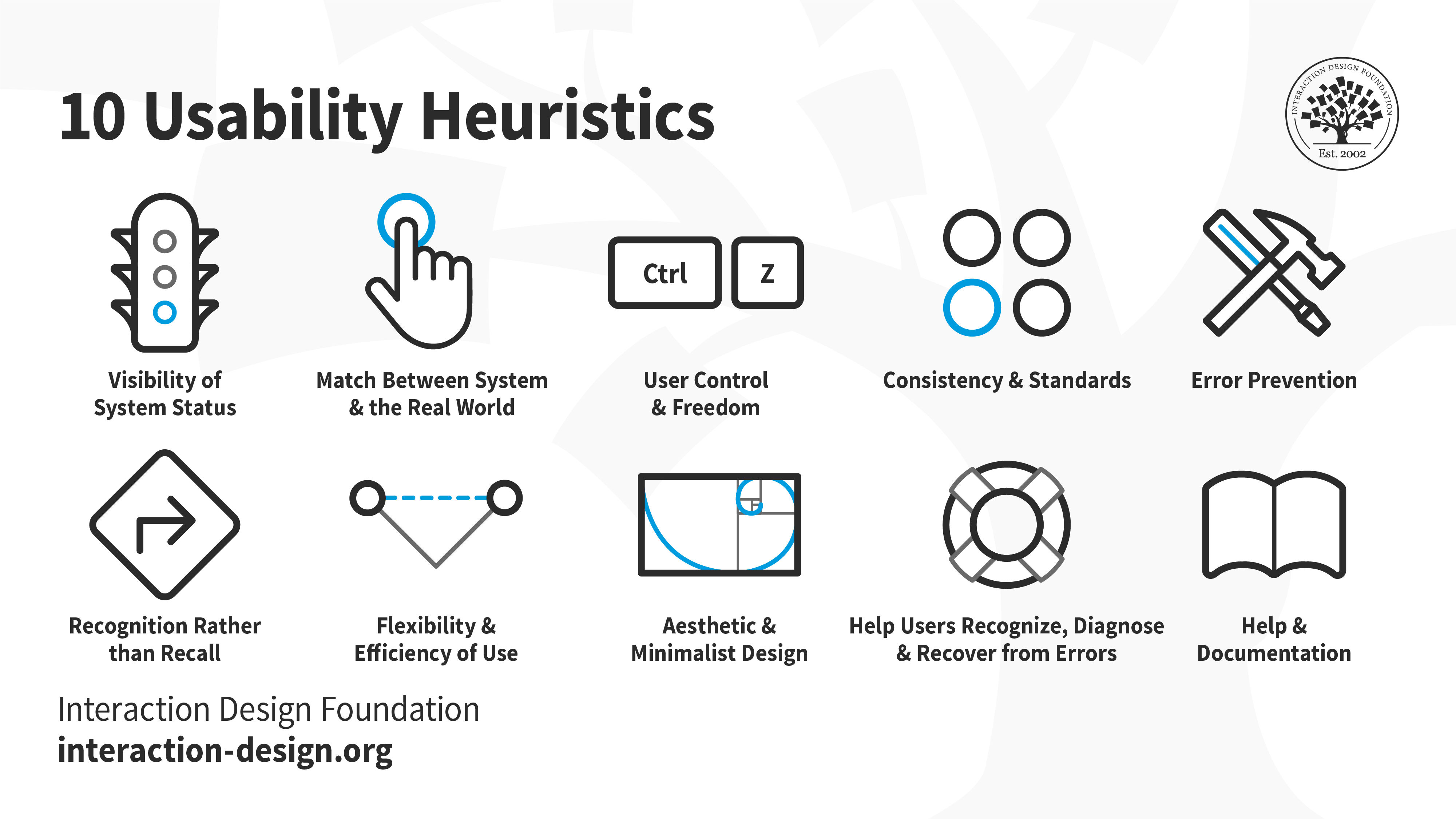

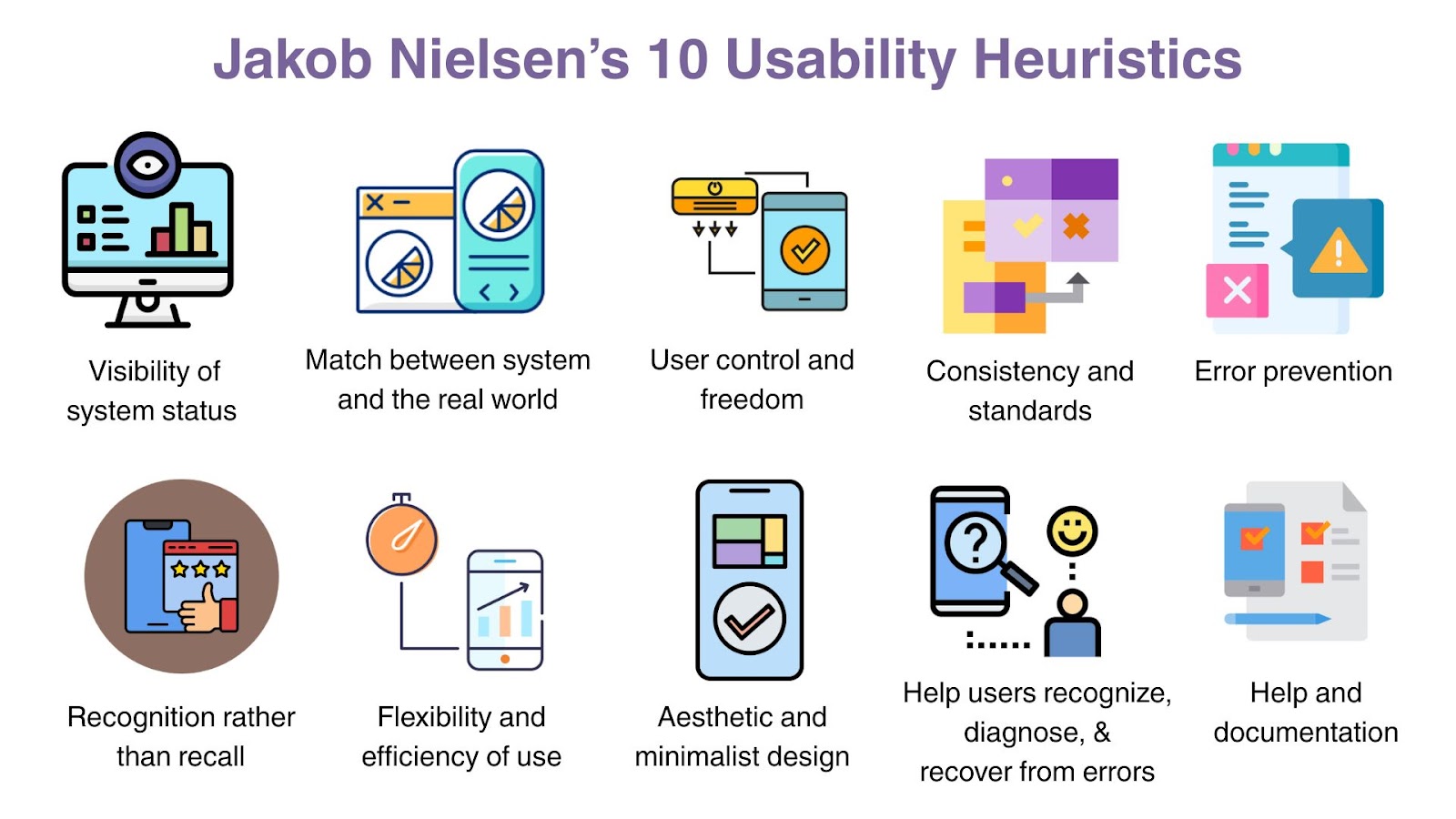

The Core Framework: Nielsen’s 10 Usability Heuristics

While various frameworks exist, Jakob Nielsen’s 10 Usability Heuristics remain the most widely used benchmark for professional evaluations.

Visibility of System Status and Match Between System and Real World

The first principle, Visibility of System Status, dictates that the system should always keep users informed about what is going on through appropriate feedback within a reasonable time. In a mobile app, this might be a loading spinner or a progress bar. In a cloud-based SaaS platform, it could be a “Saved” notification that appears after an edit. Without this, users feel a sense of digital anxiety, wondering if their last action was successful.
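As a minimal sketch of this heuristic in code, the Python below wraps a save operation so that the user is told when it starts, when it succeeds, and when it fails. The `save_document` function, the `persist` storage callback, and the `notify` status callback are hypothetical names; in a real app, `notify` would drive a spinner, toast, or status bar.

```python
from typing import Callable

# Hypothetical callback types: `persist` stores the content somewhere,
# `notify` shows a short status message to the user.
Persist = Callable[[str], None]
Notify = Callable[[str], None]


def save_document(content: str, persist: Persist, notify: Notify) -> bool:
    """Save `content`, keeping the user informed at every stage."""
    notify("Saving...")  # immediate feedback that the action has started
    try:
        persist(content)
    except OSError as exc:
        # Report the failure instead of failing silently.
        notify(f"Save failed: {exc}. Your changes are still on screen.")
        return False
    notify("Saved")  # confirm completion so the user is not left guessing
    return True


# Usage: collect the messages a status bar would display.
messages: list[str] = []
save_document("draft text", persist=lambda text: None, notify=messages.append)
print(messages)  # ['Saving...', 'Saved']
```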

The Match Between System and Real World suggests that the system should speak the users’ language with words, phrases, and concepts familiar to them, rather than system-oriented terms. For example, a “Trash” icon is a real-world metaphor that every user understands. Using technical jargon like “Execute Directory Purge” instead of “Delete” is a violation of this heuristic that can alienate non-technical users.

User Control, Consistency, and Error Prevention

User Control and Freedom is critical for digital security and confidence. Users often perform actions by mistake and need a clearly marked “emergency exit” to leave the unwanted state without having to go through an extended dialogue. The “Undo” and “Redo” functions are the ultimate examples of this principle in action.
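Undo and redo are commonly built on two stacks of reversible commands (the command pattern). The Python below is a minimal, assumed design rather than any particular framework’s API; `Command` and `History` are illustrative names.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Command:
    """A reversible user action: how to apply it and how to revert it."""
    do: Callable[[], None]
    undo: Callable[[], None]


@dataclass
class History:
    """Undo/redo stacks that give users an 'emergency exit' from mistakes."""
    _undo: list[Command] = field(default_factory=list)
    _redo: list[Command] = field(default_factory=list)

    def execute(self, cmd: Command) -> None:
        cmd.do()
        self._undo.append(cmd)
        self._redo.clear()  # a new action invalidates the redo branch

    def undo(self) -> bool:
        if not self._undo:
            return False  # nothing to undo; the UI can disable the button
        cmd = self._undo.pop()
        cmd.undo()
        self._redo.append(cmd)
        return True

    def redo(self) -> bool:
        if not self._redo:
            return False
        cmd = self._redo.pop()
        cmd.do()
        self._undo.append(cmd)
        return True


# Usage: delete an item by mistake, then undo the deletion.
items = ["a", "b", "c"]
history = History()
history.execute(Command(do=lambda: items.remove("b"),
                        undo=lambda: items.insert(1, "b")))
print(items)  # ['a', 'c']
history.undo()
print(items)  # ['a', 'b', 'c']
```

Clearing the redo stack on every new action keeps the history linear, which is the behavior most users expect from text editors and design tools.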

Consistency and Standards ensure that users do not have to wonder whether different words, situations, or actions mean the same thing. In tech, this means following platform conventions, such as placing the search bar at the top right or using a magnifying glass icon for search. Error Prevention goes a step further by designing the interface so that errors cannot occur in the first place. A classic example is graying out a “Submit” button until all required fields in a software form are filled in correctly.
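The graying-out example can be sketched as a predicate that the UI binds to the Submit button’s disabled state. The field names and the deliberately loose email pattern below are hypothetical; a disabled button is a usability aid, not a substitute for server-side validation.

```python
import re

# Deliberately simple email check, for illustration only.
EMAIL = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")

# Hypothetical form schema: required field name -> validator.
REQUIRED_FIELDS = {
    "name": lambda value: bool(value.strip()),
    "email": lambda value: bool(EMAIL.match(value)),
}


def submit_enabled(form: dict[str, str]) -> bool:
    """True only when every required field is present and valid.

    Binding this to the button's disabled state means an incomplete
    form cannot be submitted in the first place.
    """
    return all(check(form.get(name, "")) for name, check in REQUIRED_FIELDS.items())


print(submit_enabled({"name": "Ada"}))                              # False
print(submit_enabled({"name": "Ada", "email": "ada@example.com"}))  # True
```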

Recognition, Flexibility, and Aesthetic Design

Recognition Rather Than Recall minimizes the user’s memory load by making objects, actions, and options visible. The user should not have to remember information from one part of the interface to another. Flexibility and Efficiency of Use caters to both inexperienced and experienced users. For instance, keyboard shortcuts in a coding IDE are “accelerators” that allow power users to move faster without distracting the novice user who prefers using the mouse.
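One common way to serve both audiences is to register every action once and expose it through a visible menu (supporting recognition) and an optional keyboard shortcut (an accelerator). The `CommandRegistry` class and the key bindings below are hypothetical and do not correspond to any specific IDE.

```python
from typing import Callable, Optional


class CommandRegistry:
    """Each action is reachable from a visible menu and, optionally, a shortcut."""

    def __init__(self) -> None:
        self._actions: dict[str, Callable[[], str]] = {}
        self._shortcut_for: dict[str, str] = {}  # label -> key binding
        self._label_for: dict[str, str] = {}     # key binding -> label

    def register(self, label: str, action: Callable[[], str],
                 shortcut: Optional[str] = None) -> None:
        self._actions[label] = action
        if shortcut:
            self._shortcut_for[label] = shortcut
            self._label_for[shortcut] = label

    def menu(self) -> list[str]:
        # Novices browse this list instead of memorizing commands; showing the
        # shortcut next to each item lets them discover accelerators over time.
        return [f"{label} ({self._shortcut_for[label]})" if label in self._shortcut_for
                else label for label in self._actions]

    def run_from_menu(self, label: str) -> str:
        return self._actions[label]()

    def run_from_shortcut(self, key: str) -> Optional[str]:
        label = self._label_for.get(key)
        return self._actions[label]() if label is not None else None


registry = CommandRegistry()
registry.register("Format Document", lambda: "formatted", shortcut="Ctrl+Shift+I")
registry.register("Open Settings", lambda: "settings opened")
print(registry.menu())  # ['Format Document (Ctrl+Shift+I)', 'Open Settings']
print(registry.run_from_shortcut("Ctrl+Shift+I"))  # formatted
```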

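Aesthetic and Minimalist Design is not just about beauty; it’s about signal-to-noise ratio. Every extra unit of information in an interface competes with the relevant units of information and diminishes their relative visibility. Modern tech design favors “clean” interfaces that highlight the core functionality of the app.

The final two heuristics round out the set. Help Users Recognize, Diagnose, and Recover from Errors asks that error messages be written in plain language, state the problem precisely, and suggest a solution. Help and Documentation acknowledges that, although a system ideally needs no explanation, any documentation it does need should be easy to search, focused on the user’s task, and list concrete steps.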

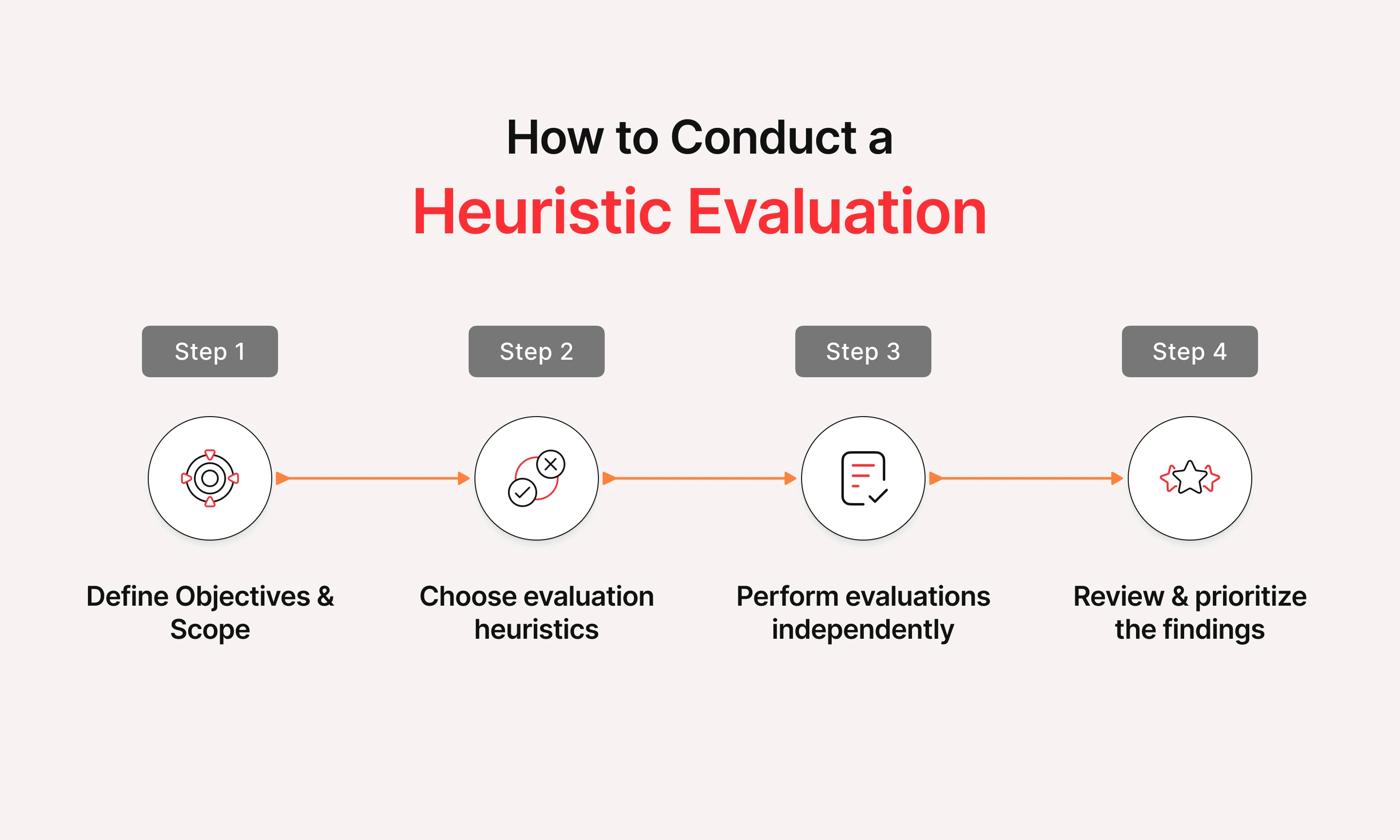

How to Conduct a Professional Heuristic Evaluation

Performing a heuristic evaluation requires more than just looking at a screen and taking notes. To yield actionable data for a tech team, the process must be structured and rigorous.

Phase 1: Planning and Selection of Evaluators

The first step is defining the scope. Are you evaluating the entire software suite or just the onboarding flow? Once the scope is set, you must select your evaluators. Research suggests that a single evaluator will find only about 35% of usability problems. However, using three to five evaluators typically uncovers around 75% to 80% of issues. These evaluators should be familiar with the 10 heuristics and, ideally, have some domain knowledge of the specific software niche (e.g., Fintech, EdTech, or Cybersecurity).
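A simple way to reason about these numbers is an independence model: if each evaluator finds any given problem with probability λ, a problem is missed only when all n evaluators miss it, so the expected share found is 1 - (1 - λ)^n. The Python sketch below assumes λ = 0.35, taken from the single-evaluator figure above. It predicts roughly 73% for three evaluators and 88% for five, somewhat above the 75% to 80% reported in practice. Real problems differ in how hard they are to spot, and that variation flattens the curve, so treat the model as a rough planning aid rather than a prediction.

```python
def expected_coverage(detection_rate: float, evaluators: int) -> float:
    """Expected fraction of problems found by at least one evaluator.

    Idealized model: every evaluator independently finds each problem with
    the same probability `detection_rate`.
    """
    if not 0.0 <= detection_rate <= 1.0:
        raise ValueError("detection_rate must be between 0 and 1")
    if evaluators < 0:
        raise ValueError("evaluators must be non-negative")
    # A problem is missed only if every evaluator misses it.
    return 1.0 - (1.0 - detection_rate) ** evaluators


for n in (1, 3, 5):
    print(n, round(expected_coverage(0.35, n), 2))
# 1 0.35
# 3 0.73
# 5 0.88
```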

Phase 2: The Inspection Walkthrough

During this phase, each evaluator works independently. This independence is crucial to ensure that findings are not biased by the opinions of others. The evaluators go through the interface multiple times, examining the various elements and comparing them against the list of heuristics. They document every “violation,” noting exactly where it occurred, which heuristic it violated, and why. For example: “The ‘Back’ button in the checkout flow is hidden behind the keyboard—violates User Control and Freedom.”
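A structured record keeps notes comparable across evaluators. The dataclass below is a hypothetical format, not a standard one: it captures the three items the walkthrough calls for (where, which heuristic, and why) plus an evaluator ID, so that independent reports can be merged later. The enum names all ten of Nielsen’s heuristics.

```python
from dataclasses import dataclass
from enum import Enum


class Heuristic(Enum):
    VISIBILITY_OF_SYSTEM_STATUS = "Visibility of System Status"
    MATCH_WITH_REAL_WORLD = "Match Between System and Real World"
    USER_CONTROL_AND_FREEDOM = "User Control and Freedom"
    CONSISTENCY_AND_STANDARDS = "Consistency and Standards"
    ERROR_PREVENTION = "Error Prevention"
    RECOGNITION_RATHER_THAN_RECALL = "Recognition Rather Than Recall"
    FLEXIBILITY_AND_EFFICIENCY = "Flexibility and Efficiency of Use"
    AESTHETIC_AND_MINIMALIST = "Aesthetic and Minimalist Design"
    ERROR_RECOVERY = "Help Users Recognize, Diagnose, and Recover from Errors"
    HELP_AND_DOCUMENTATION = "Help and Documentation"


@dataclass(frozen=True)
class Finding:
    evaluator: str         # who reported it (keeps inspections independent)
    location: str          # where the problem occurred
    heuristic: Heuristic   # which heuristic it violates
    rationale: str         # why it is a problem

    def summary(self) -> str:
        return f"{self.location}: {self.rationale} (violates {self.heuristic.value})"


note = Finding(
    evaluator="E1",
    location="Checkout > Back button",
    heuristic=Heuristic.USER_CONTROL_AND_FREEDOM,
    rationale="hidden behind the on-screen keyboard",
)
print(note.summary())
```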

Phase 3: Synthesis and Severity Rating

After the individual inspections are complete, the evaluators meet to aggregate their findings. They remove duplicates and then assign a Severity Rating to each problem. The standard scale is:

- 0: Not a usability problem at all.

- 1: Cosmetic problem only (needs fixing only if time permits).

- 2: Minor usability problem (low priority).

- 3: Major usability problem (high priority).

- 4: Usability catastrophe (imperative to fix before release).
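The synthesis step can be sketched as grouping duplicate reports under a shared problem ID and ranking problems by their mean severity on the 0 to 4 scale above. Averaging is one simple way to combine independent ratings, assumed here rather than prescribed; the problem IDs in the usage example are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from enum import IntEnum
from statistics import mean


class Severity(IntEnum):
    NOT_A_PROBLEM = 0
    COSMETIC = 1
    MINOR = 2
    MAJOR = 3
    CATASTROPHE = 4


@dataclass(frozen=True)
class Rating:
    evaluator: str
    problem_id: str      # hypothetical key assigned when merging duplicates
    severity: Severity


def prioritize(ratings: list[Rating]) -> list[tuple[str, float]]:
    """Merge duplicate reports and rank problems by mean severity, highest first."""
    by_problem: dict[str, list[int]] = defaultdict(list)
    for r in ratings:
        by_problem[r.problem_id].append(int(r.severity))
    ranked = [(pid, mean(scores)) for pid, scores in by_problem.items()]
    # Drop problems with a mean of 0 (every evaluator said "not a problem").
    ranked = [item for item in ranked if item[1] > 0]
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked


ratings = [
    Rating("E1", "checkout-back-hidden", Severity.CATASTROPHE),
    Rating("E2", "checkout-back-hidden", Severity.MAJOR),
    Rating("E1", "jargon-purge-label", Severity.MINOR),
    Rating("E3", "icon-spacing", Severity.NOT_A_PROBLEM),
]
print(prioritize(ratings))
```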

This prioritized list becomes the roadmap for the development team, allowing them to tackle the most severe usability problems first.

Integrating Heuristics into the Software Development Life Cycle (SDLC)

In the world of high-stakes technology, heuristic evaluation is not just a “nice-to-have” design exercise; it is a strategic business tool that integrates directly into the SDLC.

Reducing Development Costs Through Early Detection

One of the most compelling arguments for heuristic evaluation is return on investment. An often-cited rule of thumb in software engineering, the “1-10-100 Rule,” holds that a problem costing $1 to fix during the design phase costs $10 during development and $100 after the product has been released to the public. By conducting a heuristic audit during the wireframing or prototyping stage, tech companies can catch workflow flaws and UI inconsistencies before a single line of production code is written. This reduces “code churn” and lets engineers focus on building features rather than patching avoidable UI bugs.
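The rule is a ratio rather than a precise price list, but a quick calculation shows why timing matters. This sketch assumes a hypothetical audit that finds 12 issues and treats the rule’s $1 as a unit cost: the same 12 fixes cost 12 units at design time, 120 during development, and 1,200 after release.

```python
# Relative cost multipliers from the "1-10-100" rule of thumb.
# Illustrative ratios only, not measured costs for any real project.
COST_MULTIPLIER = {"design": 1, "development": 10, "release": 100}


def fix_cost(issues: int, phase: str, unit_cost: float = 1.0) -> float:
    """Estimated cost of fixing `issues` problems that are found in `phase`."""
    if phase not in COST_MULTIPLIER:
        raise ValueError(f"unknown phase: {phase!r}")
    return issues * COST_MULTIPLIER[phase] * unit_cost


# Hypothetical audit that surfaces 12 issues, with a $1 unit cost.
for phase in COST_MULTIPLIER:
    print(f"{phase:<12} ${fix_cost(12, phase):,.0f}")
```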

Enhancing Accessibility and Digital Security

Modern tech standards demand that software be accessible to everyone, including people with visual or motor impairments. Heuristic evaluation can be adapted to include accessibility-specific heuristics, such as those derived from the Web Content Accessibility Guidelines (WCAG). Usability is also closely linked to security: some security incidents stem from “user error” induced by confusing interfaces. By ensuring that security status is clearly displayed (Visibility of System Status) and that critical settings are hard to change by accident (Error Prevention), heuristic evaluation acts as a secondary layer of digital defense.
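Some accessibility heuristics can be checked mechanically. Color contrast is a good example: WCAG 2.x defines a relative-luminance formula and a contrast ratio, and Level AA requires at least 4.5:1 for normal-size text. The Python below is a minimal sketch of that calculation for 8-bit sRGB colors; it does not cover the separate thresholds for large text or non-text elements.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel (0-255) to linear light.

    Uses the 0.03928 threshold as written in WCAG 2.x; the sRGB standard's
    0.04045 gives identical results for 8-bit values.
    """
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: tuple[int, int, int]) -> float:
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> float:
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_normal_text(fg: tuple[int, int, int], bg: tuple[int, int, int]) -> bool:
    # WCAG 2.x Level AA: at least 4.5:1 for normal-size text.
    return contrast_ratio(fg, bg) >= 4.5


print(round(contrast_ratio((0, 0, 0), (255, 255, 255)), 2))       # 21.0
print(passes_aa_normal_text((119, 119, 119), (255, 255, 255)))    # False (about 4.48:1)
```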

The Future of Heuristic Evaluation: AI and Automated Audits

As we look toward the future of technology, the way we conduct heuristic evaluations is changing. Artificial Intelligence is beginning to play a significant role in how we audit digital products.

Leveraging AI Tools for Instant UX Feedback

New AI-driven tools are emerging that can scan a UI screenshot or a Figma file and automatically flag potential heuristic violations. These tools typically use machine learning models trained on large collections of interfaces to predict heatmaps of user attention and identify areas of high cognitive load. While they cannot replace the nuanced judgment of a human expert, they can give developers near-instant feedback during the coding process, acting as a linter for UX.
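To make the “linter for UX” idea concrete, here is a deliberately simple rule-based sketch. It is not machine learning and does not reflect any specific product: it walks a hypothetical list of UI elements described as dictionaries and flags a few violations that can be detected mechanically. The element fields (`type`, `label`, `icon`, `destructive`, `confirm`, `undoable`) and the jargon word list are assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumed word list for illustration; a real team would maintain its own.
JARGON = {"execute", "purge", "null", "exception", "payload"}


@dataclass(frozen=True)
class LintWarning:
    index: int        # position of the element in the screen description
    heuristic: str    # heuristic the element appears to violate
    message: str


def lint(elements: list[dict]) -> list[LintWarning]:
    warnings: list[LintWarning] = []
    for i, el in enumerate(elements):
        label = el.get("label", "")
        # Icon-only buttons force users to recall what the icon means.
        if el.get("type") == "button" and el.get("icon") and not label:
            warnings.append(LintWarning(i, "Recognition Rather Than Recall",
                                        "icon-only button has no text label"))
        # System-oriented vocabulary in user-facing text.
        words = {w.strip(".,:;!?").lower() for w in label.split()}
        jargon = sorted(words & JARGON)
        if jargon:
            warnings.append(LintWarning(i, "Match Between System and Real World",
                                        "system jargon in label: " + ", ".join(jargon)))
        # Destructive actions should be confirmable or reversible.
        if el.get("destructive") and not (el.get("confirm") or el.get("undoable")):
            warnings.append(LintWarning(i, "Error Prevention",
                                        "destructive action has no confirmation or undo"))
    return warnings


screen = [
    {"type": "button", "icon": "trash"},
    {"type": "button", "label": "Execute Directory Purge", "destructive": True},
    {"type": "button", "label": "Save"},
]
for warning in lint(screen):
    print(warning.index, warning.heuristic, "-", warning.message)
```

Rules like these catch only surface-level issues, which is consistent with the hybrid approach described next: automated passes for basic consistency, human judgment for everything else.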

The Human Element in a Data-Driven World

Despite the rise of AI, the human element remains paramount. Tech is ultimately built for humans, and a “perfect” score from an AI tool doesn’t always translate to a delightful user experience. The future of heuristic evaluation lies in a hybrid approach: using AI for rapid, preliminary audits of basic consistency and standards, followed by expert human evaluation to tackle complex workflows and emotional engagement.

In conclusion, heuristic evaluation is a vital pillar of the tech industry. It bridges the gap between raw engineering and human psychology, ensuring that the powerful tools we build are actually usable by the people they were intended for. Whether you are a solo developer or a lead at a global tech firm, mastering the heuristic audit is a dependable way to elevate your product in a competitive digital market.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.