In the realm of digital music production, the “bass clef” is more than a symbol on a staff; it represents the foundational frequency spectrum of modern sound design. For software engineers, MIDI programmers, and digital audio workstation (DAW) enthusiasts, understanding what the bass clef notes are—and how they translate into data—is essential for building immersive sonic landscapes. In the digital age, these notes serve as the coordinates for sub-oscillators, the input for artificial intelligence transcription tools, and the architectural bedrock of high-fidelity audio engineering.

The Digital Architecture of the Bass Clef: MIDI and Frequency Mapping

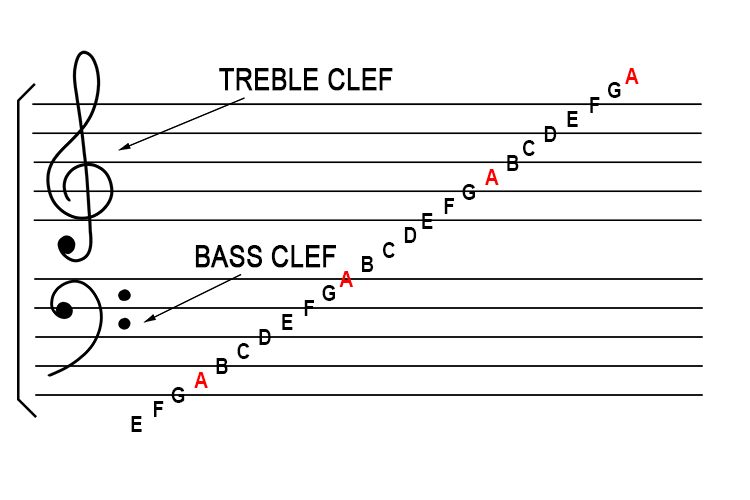

To understand the bass clef from a technological standpoint, one must look past the ink and toward the data. In traditional music theory, the bass clef (or F-clef) designates notes generally below Middle C. However, in the tech world, these notes are defined by their MIDI (Musical Instrument Digital Interface) values and their corresponding frequencies in Hertz (Hz).

Understanding MIDI Mapping for Lower Registers

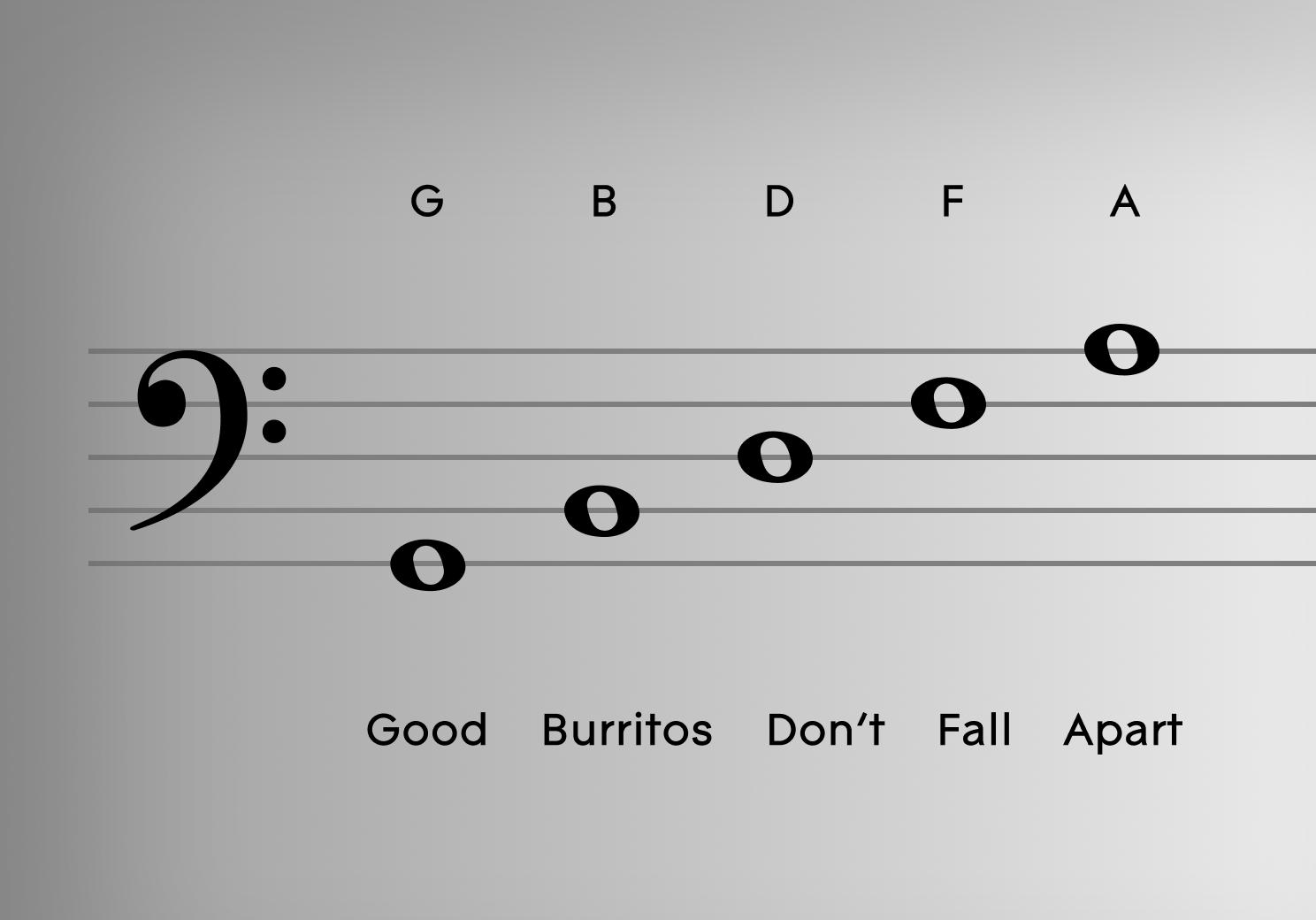

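MIDI is the universal language of music technology. Each note on the bass clef corresponds to a specific MIDI note number. For instance, the “F” line that the bass clef symbol's two dots surround is F3, which carries a MIDI value of 53 under the common convention that Middle C is C4 (MIDI 60). Some manufacturers label Middle C as C3 instead, so the octave number shown in a given DAW may differ by one even though the MIDI value is the same. Understanding this numerical mapping is crucial for developers building music software or synthesizers.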
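The name-to-number mapping can be sketched in a few lines. This is a minimal illustration, assuming the C4 = MIDI 60 convention; the function name `note_to_midi` is hypothetical and not part of any MIDI library.

```python
# Semitone offsets of the natural notes within one octave, counted from C.
NOTE_OFFSETS = {"C": 0, "D": 2, "E": 4, "F": 5, "G": 7, "A": 9, "B": 11}


def note_to_midi(name):
    """Convert a note name such as 'F3' or 'Bb2' to a MIDI note number.

    Assumes the convention in which Middle C is C4 = MIDI 60.
    """
    letter = name[0].upper()
    rest = name[1:]
    accidental = 0
    # Each '#' raises the note by a semitone, each 'b' lowers it.
    while rest and rest[0] in "#b":
        accidental += 1 if rest[0] == "#" else -1
        rest = rest[1:]
    octave = int(rest)
    return 12 * (octave + 1) + NOTE_OFFSETS[letter] + accidental


print(note_to_midi("F3"))  # 53, the line marked by the bass clef's two dots
```

A DAW that labels Middle C as C3 would need `12 * (octave + 2)` in place of `12 * (octave + 1)`.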

The five lines and four spaces of the bass clef staff cover the range from G2 (MIDI 43) up to A3 (MIDI 57). Ledger lines extend that range in both directions, and in a digital environment it can reach much lower, into the “sub-bass” regions of C0 or C1. When a producer inputs a note on a digital piano roll, the software isn’t “thinking” in musical notation; it is processing a note-on message whose note number the instrument maps to a specific frequency. Knowing the bass clef notes by number lets a technician program basslines that sit exactly on the digital grid, with timing precision that human hands often cannot achieve.
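Staff positions can be converted to note numbers by counting diatonic steps from the bottom line. The sketch below assumes C4 = MIDI 60 and natural notes only (no key signature or accidentals); `bass_staff_step_to_midi` is a hypothetical helper.

```python
SCALE = [0, 2, 4, 5, 7, 9, 11]  # semitone offsets of C D E F G A B
NAMES = "CDEFGAB"


def bass_staff_step_to_midi(step):
    """Map a bass-staff position to (note name, MIDI number).

    step 0 is the bottom line (G2); each step moves one line-or-space up.
    Negative steps are positions below the staff.
    """
    # Absolute diatonic index counted from C0: G2 is octave 2, degree 4.
    diatonic = 2 * 7 + 4 + step
    octave, degree = divmod(diatonic, 7)
    midi = 12 * (octave + 1) + SCALE[degree]
    return NAMES[degree] + str(octave), midi


# The five staff lines sit on the even steps 0, 2, 4, 6, 8.
for step in range(0, 9, 2):
    print(step, bass_staff_step_to_midi(step))
```

Step 10 is the first ledger line above the staff (Middle C) and step -2 is the first ledger line below it (E2).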

Frequency Response and the Physics of Low-End Audio

From an engineering perspective, bass clef notes represent the “heavy lifting” of the sound spectrum. The low E string on a standard four-string bass guitar (E1) vibrates at approximately 41.2 Hz. As notes move up the bass clef staff, the frequency increases: in equal temperament each semitone multiplies it by about 1.0595, so it doubles with every octave.
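The relationship between note number and frequency follows the standard equal-temperament formula. The sketch below assumes the usual A4 = 440 Hz tuning reference; `midi_to_hz` is an illustrative name.

```python
def midi_to_hz(note, a4_hz=440.0):
    """Equal-temperament frequency of a MIDI note (A4 = MIDI 69)."""
    return a4_hz * 2.0 ** ((note - 69) / 12)


# Low E of a bass guitar, then three notes from the bass staff.
for name, note in [("E1", 28), ("G2", 43), ("F3", 53), ("A3", 57)]:
    print(f"{name} (MIDI {note}): {midi_to_hz(note):.1f} Hz")
```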

Tech-savvy producers use this knowledge to manage “spectral masking,” in which sounds occupying the same frequency band obscure one another. Because low-frequency content typically carries a large share of a mix's energy, it can easily clutter a digital mix. By identifying exactly which bass clef notes are being used (e.g., G1 at 49 Hz versus C2 at 65.4 Hz), engineers can use high-pass filters and surgical EQ software to carve out space for the kick drum. This intersection of music theory and signal processing is what separates amateur bedroom recordings from professional-grade digital masters.
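To make the filtering idea concrete, here is a minimal first-order high-pass filter and a measurement of how much it attenuates the two example notes. The 60 Hz cutoff is an arbitrary assumption for illustration; real EQ plugins use steeper, more configurable filters.

```python
import math


def high_pass(samples, cutoff_hz, sample_rate):
    """First-order (6 dB/octave) high-pass filter, RC-style discretization."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    dt = 1.0 / sample_rate
    alpha = rc / (rc + dt)
    out = []
    prev_in = prev_out = 0.0
    for x in samples:
        y = alpha * (prev_out + x - prev_in)
        out.append(y)
        prev_in, prev_out = x, y
    return out


def rms(values):
    return math.sqrt(sum(v * v for v in values) / len(values))


def gain_at(freq_hz, cutoff_hz=60.0, sample_rate=44100):
    """Measure the filter's gain for a one-second sine at freq_hz."""
    tone = [math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(sample_rate)]
    filtered = high_pass(tone, cutoff_hz, sample_rate)
    half = sample_rate // 2  # skip the start-up transient
    return rms(filtered[half:]) / rms(tone[half:])


print(f"G1 (49.0 Hz) gain: {gain_at(49.0):.2f}")
print(f"C2 (65.4 Hz) gain: {gain_at(65.4):.2f}")
```

The note below the cutoff is attenuated more than the note above it, which is the effect an engineer relies on when deciding where to place the filter relative to the bassline.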

AI and Machine Learning in Bass Note Recognition

The evolution of music technology has led to the rise of Optical Music Recognition (OMR) and automated transcription. Bass clef notes are a specific challenge for audio transcription in particular, because low-frequency instruments like the cello, tuba, or double bass produce strong harmonic overtones that an algorithm can mistake for the fundamental.

Optical Music Recognition (OMR) Algorithms

OMR is the “OCR” (Optical Character Recognition) of the music world. When you scan a piece of sheet music into software like SmartScore or PhotoScore, the algorithm must distinguish between the Treble and Bass clefs. The “F-clef” acts as a geometric anchor. The software looks for the two dots surrounding the fourth line from the bottom of the staff to establish the coordinate system for all subsequent notes.

Modern machine learning models are trained on large collections of scanned scores so they can identify these notes even in degraded or handwritten files. For developers, the “bass clef” is a set of features in a convolutional neural network. The technology must also account for “ledger lines,” the short extra lines drawn above or below the staff; those below the bass staff represent the deepest notes. Accurately digitizing these notes allows for the archival of historical compositions and the instant transposition of music into different keys via software.
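Once the F line has been located, turning a notehead's vertical position into a pitch is simple geometry. The sketch below is not how any particular OMR product works; it assumes the detector already supplies the F line's y-coordinate, the staff line spacing, and the notehead's centre, and it ignores accidentals and key signatures. The function name is hypothetical.

```python
NAMES = "CDEFGAB"


def notehead_to_pitch(y_note, y_f_line, line_spacing):
    """Name the natural note at image height y_note on a bass staff.

    y_f_line is the y-coordinate of the line between the clef's two dots.
    Image y grows downward, so a smaller y means a higher pitch.
    """
    # Half a line spacing is one diatonic step (line -> space -> line).
    steps_above_f3 = round((y_f_line - y_note) / (line_spacing / 2))
    # Absolute diatonic index counted from C0: F3 is octave 3, degree 3.
    diatonic = 3 * 7 + 3 + steps_above_f3
    octave, degree = divmod(diatonic, 7)
    return NAMES[degree] + str(octave)


# Staff with 10 px between lines and the F line at y = 100.
print(notehead_to_pitch(130, 100, 10))  # bottom line
print(notehead_to_pitch(140, 100, 10))  # first ledger line below the staff
```

Because the calculation is relative to the anchor line, ledger-line notes need no special case: they are simply larger offsets in either direction.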

Automated Audio-to-MIDI Transcription

One of the most impressive feats of modern music tech is the ability to take an audio recording (.wav or .mp3) and convert it into MIDI data. Software like Melodyne or Ableton Live’s audio-to-MIDI conversion commands uses pitch-detection algorithms to identify bass clef notes in recorded audio. These tools analyze a clip after it has been captured rather than in real time.
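Once a pitch detector has produced a frequency, mapping it to a note is a rounding step. The sketch below inverts the equal-temperament formula, assuming A4 = 440 Hz; `hz_to_midi` is an illustrative name, and the cents value shows how far the input sits from the nearest note.

```python
import math


def hz_to_midi(freq_hz, a4_hz=440.0):
    """Return (nearest MIDI note, deviation in cents) for a frequency."""
    exact = 69 + 12 * math.log2(freq_hz / a4_hz)
    nearest = round(exact)
    cents = 100 * (exact - nearest)
    return nearest, cents


for freq in (41.2, 49.0, 65.4):
    note, cents = hz_to_midi(freq)
    print(f"{freq} Hz -> MIDI {note} ({cents:+.1f} cents)")
```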

Low-frequency notes are notoriously difficult for pitch detectors to track because each cycle of the waveform takes longer. One period of a 41.2 Hz low E lasts about 24 ms, and a detector generally needs to observe at least one or two full periods before it can commit to a pitch. This results in “latency,” a delay between the sound and the digital recognition. Some DSP (Digital Signal Processing) approaches reduce the delay by estimating the intended bass note from the harmonic series, since the higher harmonics complete their cycles sooner. That brings transcription closer to real time, which matters for producers who want to hum a bassline into a microphone and have the computer translate it into a digital bass clef score.
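The link between low pitch and latency shows up directly in a time-domain detector. The sketch below uses a squared-difference function (the first step of YIN-style detectors), which is an assumption for illustration and not the method used by any product named here. The analysis window must be longer than the longest period being searched for, which is why a low `f_min` forces a long window.

```python
import math


def detect_pitch(samples, sample_rate, f_min=30.0, f_max=200.0):
    """Estimate the fundamental by finding the lag at which the signal
    best matches a shifted copy of itself."""
    min_lag = int(sample_rate / f_max)
    max_lag = int(sample_rate / f_min)
    span = len(samples) - max_lag  # samples compared at every lag
    if span <= 0:
        raise ValueError("window is shorter than one period of f_min")
    best_lag, best_score = min_lag, float("inf")
    for lag in range(min_lag, max_lag + 1):
        score = sum((samples[n] - samples[n + lag]) ** 2 for n in range(span))
        if score < best_score:
            best_lag, best_score = lag, score
    return sample_rate / best_lag


sample_rate = 8000  # low rate keeps the pure-Python loop fast
window = [math.sin(2 * math.pi * 41.2 * n / sample_rate) for n in range(800)]
print(f"estimate: {detect_pitch(window, sample_rate):.1f} Hz")
print(f"one period of 41.2 Hz: {1000 / 41.2:.1f} ms")
```

With `f_min=30.0` the detector needs more than 33 ms of audio before it can return anything, whereas a detector that only had to cover notes above 200 Hz could work with a window of about 5 ms.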

Essential Software Tools for Managing Bass Frequencies

For those working in the “low-end,” a specific suite of software tools is required to manipulate bass clef notes. These range from Digital Audio Workstations (DAWs) to specialized Virtual Studio Technology (VST) plugins.

DAWs and Low-End Signal Processing

Whether using FL Studio, Logic Pro, or Ableton Live, the “Piano Roll” is the primary interface for bass clef interaction. This digital interface replaces the traditional staff with a vertical keyboard. Here, the bass notes are visualized as blocks.

Technicians use “Side-chain Compression” technology to ensure the bass notes don’t collide with the drum kit. This is a technical process where the volume of a bass note is automatically ducked the millisecond a kick drum triggers. Without a firm grasp of where the bass clef notes sit in the frequency spectrum, a producer cannot effectively set the thresholds for these automated tools, resulting in a “muddy” or distorted sound.
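The ducking behaviour can be sketched as a gain curve driven by kick timings. This is a simplification: a real side-chain compressor follows the kick's signal level against a threshold, whereas this example simply assumes the kick onset times are known. The function name and the `depth` and `release` values are hypothetical.

```python
def ducking_gain(t, kick_times, depth=0.3, release=0.15):
    """Gain (0..1) applied to the bass at time t, in seconds.

    At each kick the gain drops to `depth`, then ramps linearly back
    to 1.0 over `release` seconds.
    """
    previous = [k for k in kick_times if k <= t]
    if not previous:
        return 1.0  # no kick has happened yet
    elapsed = t - max(previous)
    if elapsed >= release:
        return 1.0  # fully recovered
    return depth + (1.0 - depth) * (elapsed / release)


kicks = [0.0, 0.5, 1.0, 1.5]  # four-on-the-floor at 120 BPM
for t in (0.5, 0.575, 0.7):
    print(f"t={t:.3f}s gain={ducking_gain(t, kicks):.2f}")
```

Multiplying each bass sample by this gain leaves the kick's low-frequency transient unobstructed and restores the bass before the next beat.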

Virtual Synthesis and Wavetable Technology

In the world of synthesis, bass notes are often generated through oscillators. VSTs like Xfer Records’ Serum or Native Instruments’ Massive allow users to manipulate the very shape of the sound wave.

When a user triggers a “C” on the bass clef, the software can generate a sine, saw, or square wave. Tech-focused sound designers often stack these notes. For example, they might play a standard MIDI note in the bass clef range but add a “sub-octave” (a note one octave lower) using a secondary oscillator. This creates the “chest-thumping” bass found in modern cinema and electronic music. The technology allows for precise control over the “envelope” of the note—how fast it starts (attack) and how long it lingers (release)—which is essential for defining the rhythm of a digital track.
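The layering described above can be sketched with two oscillators and a simple attack/release envelope. This is an illustration under stated assumptions, not how Serum or Massive generate sound: the sawtooth is naive (it aliases at high pitches), the envelope is linear, and all names and default values are hypothetical.

```python
import math


def midi_to_hz(note):
    return 440.0 * 2.0 ** ((note - 69) / 12)


def saw(phase):
    """Naive sawtooth in [-1, 1); phase is measured in cycles."""
    return 2.0 * (phase % 1.0) - 1.0


def envelope(t, duration, attack, release):
    """Linear attack, full level while held, linear release afterwards."""
    if t < attack:
        return t / attack
    if t > duration:
        return max(0.0, 1.0 - (t - duration) / release)
    return 1.0


def render_note(note, duration, sample_rate=44100,
                sub_level=0.5, attack=0.01, release=0.2):
    """Render a saw at the played pitch plus a sine one octave below."""
    freq = midi_to_hz(note)
    sub_freq = freq / 2.0  # the sub-octave
    total = int((duration + release) * sample_rate)
    out = []
    for n in range(total):
        t = n / sample_rate
        main = saw(freq * t)
        sub = math.sin(2 * math.pi * sub_freq * t)
        mix = (main + sub_level * sub) / (1.0 + sub_level)  # keep within [-1, 1]
        out.append(envelope(t, duration, attack, release) * mix)
    return out


samples = render_note(36, 0.5)  # C2 with a sub at C1
print(len(samples), max(abs(s) for s in samples))
```

Shortening `attack` makes the note punchier, and shortening `release` tightens the gap before the next note, which is how the envelope shapes the rhythm of a bassline.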

The Future of Bass Clef Interaction: VR, Haptics, and Beyond

As we look toward the future, the way we interact with bass clef notes is moving beyond the screen and into the physical and virtual worlds.

Immersive Learning via Augmented Reality (AR)

The next generation of music education technology uses AR to teach the bass clef. Apps running on headsets like the Apple Vision Pro or Meta Quest can overlay digital notes directly onto a physical bass guitar or piano. By identifying the “F-line” in a 3D space, the software can guide a student’s hands to the correct position. This spatial computing approach turns the abstract concept of “bass clef notes” into a tangible, interactive UI (User Interface).

Haptic Feedback and the “Physicality” of Digital Notes

Because bass frequencies are felt as much as they are heard, tech companies are developing haptic vests and wearable devices (like the SubPac). These devices translate the low-frequency signal produced by bass clef notes into physical vibrations.

For a deaf musician or a high-end gamer, this technology allows for the perception of low-frequency notes through bone conduction and tactile response. This represents the ultimate convergence of music theory and wearable tech: the conversion of a note on a staff into a physical sensation, powered by real-time data processing.

Conclusion: The Digital Foundation

What are the bass clef notes? To a musician, they are the foundation of harmony. To a technologist, they are a specific range of frequencies and data points that require precise handling. From the MIDI protocols that define their existence in a DAW to the AI algorithms that transcribe them from raw audio, bass clef notes are a vital component of the modern tech landscape.

As software continues to evolve, our ability to manipulate, visualize, and feel these lower registers will only grow. Whether you are coding a new music app, engineering a global hit, or designing a virtual instrument, mastering the technology of the bass clef is the key to mastering the sound of the future.
