In the study of human psychometrics, the concept of “Average IQ” has long served as the benchmark for cognitive capability. The Intelligence Quotient (IQ) is a score derived from standardized tests designed to assess human intelligence, and the average is set at 100 by convention. However, as we navigate the third decade of the 21st century, the definition of intelligence is undergoing a seismic shift. Intelligence is no longer confined to biological neural networks: the rise of Artificial Intelligence (AI), machine learning, and digital augmentation is redefining what it means to be “average” and how we quantify cognitive power in a tech-driven world.

Understanding the average IQ is no longer just a psychological endeavor; it is also a technological one. As software becomes more sophisticated and AI models begin to outperform humans on standardized tests, we must look at the intersection of technology and cognition to understand the future of intelligence.

1. Defining the Baseline: Human Intelligence in a Digital Context

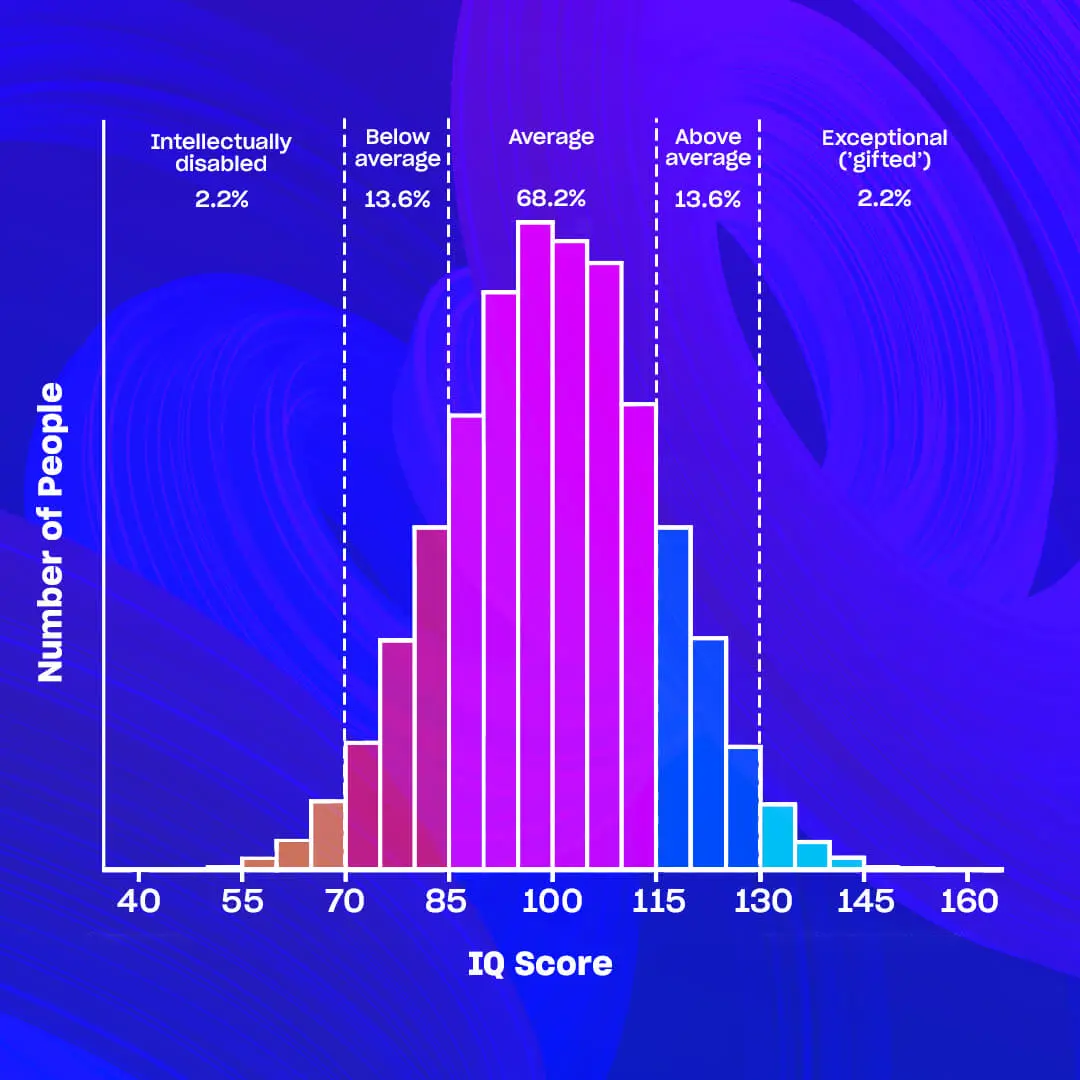

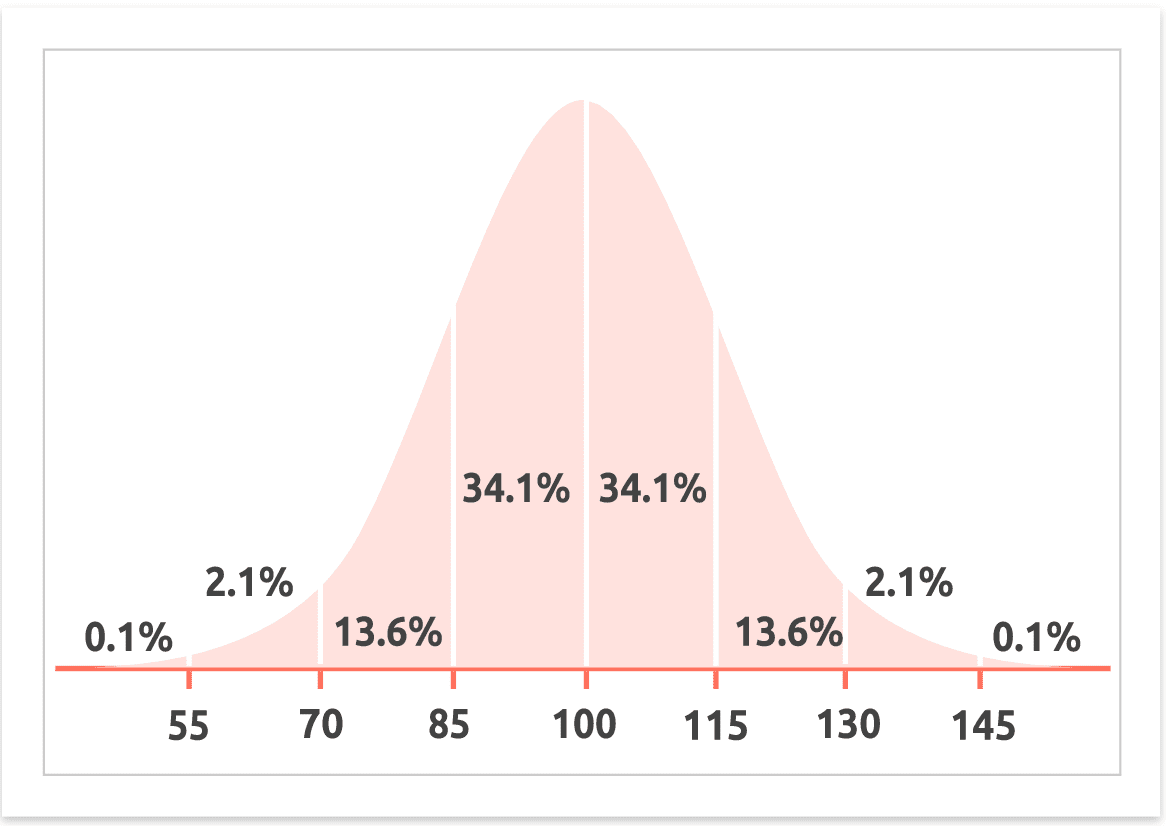

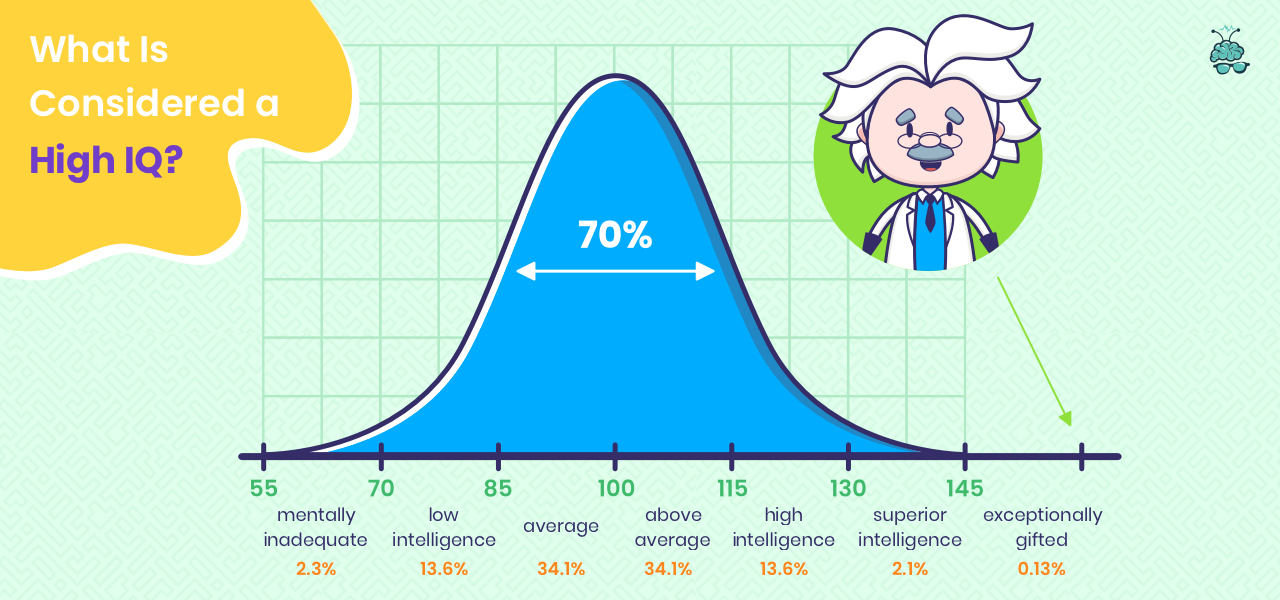

To understand the technological implications of IQ, we must first establish the baseline. The average IQ is statistically set at 100, with a standard deviation of 15. On this bell curve, about 68% of the population falls between a score of 85 and 115, and about 95% falls between 70 and 130.
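The share of the population inside any score band follows directly from the normal distribution with mean 100 and standard deviation 15. The snippet below is a minimal sketch using Python's standard library; the helper name `share_between` is ours, chosen for illustration.

```python
from statistics import NormalDist

# IQ scale as stated in the text: mean 100, standard deviation 15.
iq = NormalDist(mu=100, sigma=15)

def share_between(low: float, high: float) -> float:
    """Fraction of the population expected to score between low and high."""
    return iq.cdf(high) - iq.cdf(low)

within_one_sd = share_between(85, 115)
within_two_sd = share_between(70, 130)

print(f"85-115: {within_one_sd:.1%}")  # 68.3%
print(f"70-130: {within_two_sd:.1%}")  # 95.4%
```

The same function works for any band, for example `share_between(130, 200)` for the upper tail.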

The Digitization of Psychometrics

Traditionally, IQ tests were administered via pen and paper or through direct clinical observation. Today, many of these assessments are delivered digitally. Software can provide adaptive testing, where the difficulty of the questions adjusts in real time based on the user’s performance. Because each question is chosen to be informative about the test-taker’s current estimated ability, this can yield a more nuanced “heat map” of cognitive strengths and weaknesses than a fixed test of the same length.
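To make the idea of adaptive testing concrete, here is a deliberately simple sketch: a staircase rule that raises the difficulty after a correct answer and lowers it after a miss, with a shrinking step size. This is an illustration only; production adaptive tests typically rely on item response theory rather than a plain staircase, and the callback `answer_fn`, the 1 to 10 difficulty scale, and all default values are assumptions made for the example.

```python
def run_adaptive_test(answer_fn, n_items=10, start=5.0, step=2.0,
                      min_level=1.0, max_level=10.0):
    """Simple staircase: harder after a correct answer, easier after a miss.

    answer_fn(difficulty) -> bool is a hypothetical callback that presents
    an item of the given difficulty and reports whether it was solved.
    """
    level = start
    history = []
    for _ in range(n_items):
        correct = answer_fn(level)
        history.append((level, correct))
        level += step if correct else -step
        level = max(min_level, min(max_level, level))  # stay on the scale
        step = max(step / 2, 0.25)  # shrink the step to home in on ability
    return level, history

# Simulated test-taker who reliably solves items up to difficulty 7.
estimate, log = run_adaptive_test(lambda difficulty: difficulty <= 7.0)
print(estimate)  # settles within one step of 7
```

The final level oscillates around the point where the simulated test-taker starts failing, which is the quantity an adaptive test is trying to locate.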

The Flynn Effect and Technological Acceleration

The “Flynn Effect” refers to the observed substantial and sustained increase in IQ scores across much of the world over the 20th century. Many technologists argue that this rise is linked to the proliferation of technology, although the causes remain debated. On this view, exposure to complex visual media, the requirement for digital literacy, and the constant interaction with sophisticated software interfaces have “trained” the human brain to process abstract information more efficiently, effectively raising the functional baseline of the average person.

2. Artificial Intelligence vs. Human IQ: The Great Convergence

One of the most pressing questions in the tech sector today is how the “IQ” of Large Language Models (LLMs) compares to the human average. While AI does not possess biological consciousness, its ability to solve problems, recognize patterns, and process language allows for a direct comparison with human cognitive metrics.

Benchmarking Large Language Models (LLMs)

In recent years, models like GPT-4, Claude, and Gemini have been given standard human IQ tests. While these models do not “think” in the traditional sense, their performance on verbal reasoning and pattern recognition tasks has been striking. Some reported evaluations suggest that the most advanced AI models now show a “Silicon IQ” above the human average of 100, often scoring in the 120–140 range on specific verbal and logic-based subtests. These figures should be read with caution, since the tests were normed on human test-takers.

The Gap in Fluid Intelligence

Despite high scores in crystallized intelligence (knowledge and verbal skills), technology still struggles with “Fluid Intelligence”—the ability to solve novel problems without prior knowledge. While an average human with an IQ of 100 can navigate a physical environment and adapt to unexpected social cues, AI is still refining its ability to generalize knowledge across disparate domains. Tech developers are currently focused on closing this gap through “few-shot learning” and reinforcement learning from human feedback (RLHF).

Computational IQ vs. Biological IQ

In tech circles, the conversation is shifting from “What is the average IQ?” to “What is the computational capacity?” While a human brain runs on roughly 20 watts of power, a large AI cluster can draw megawatts. The tech industry is striving for “algorithmic efficiency,” aiming to achieve human-level problem-solving (IQ 100) with a fraction of the current energy and data requirements.
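The scale of that energy gap is easy to work out. The arithmetic below uses the 20-watt brain figure from the text and, purely as an assumption for illustration, a cluster drawing exactly 1 megawatt; real deployments vary widely.

```python
BRAIN_WATTS = 20.0            # figure quoted in the text
CLUSTER_WATTS = 1_000_000.0   # assumption: a 1 MW cluster, for illustration only

def kwh_per_day(watts: float) -> float:
    """Energy used over 24 hours, in kilowatt-hours."""
    return watts * 24 / 1000

power_ratio = CLUSTER_WATTS / BRAIN_WATTS

print(power_ratio)                 # 50000.0
print(kwh_per_day(BRAIN_WATTS))    # 0.48
print(kwh_per_day(CLUSTER_WATTS))  # 24000.0
```

Under these assumptions the cluster draws 50,000 times the power of a brain, which is the gap that “algorithmic efficiency” work is trying to narrow.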

3. Technology as a Cognitive Multiplier: Raising the Functional IQ

The tech industry isn’t just measuring IQ; it is actively seeking to enhance it. We are entering an era of “Cognitive Augmentation,” where the average person’s effective intelligence is boosted by the tools they use.

The Concept of the “Second Brain”

Software applications like Notion, Obsidian, and AI-powered personal assistants act as an external neocortex. By offloading memory storage and information retrieval to digital tools, the average person can function at a cognitive level that would have historically required a much higher IQ. This “Exocortex” allows for the management of complex data sets and multi-project workflows that were once the sole domain of the cognitive elite.

AI Co-pilots and Real-time Problem Solving

The integration of AI co-pilots into coding environments (like GitHub Copilot) and creative suites (like Adobe Firefly) has effectively raised the floor of technical capability. A developer with average logical reasoning skills can now produce high-level code by leveraging the augmented intelligence of the software. In this context, the “average IQ” of a workforce is less important than the “Technical Quotient” (TQ) of the tools they employ.

Neural Interfaces and the Future of IQ

Looking toward the horizon, companies like Neuralink and Synchron are exploring Brain-Computer Interfaces (BCIs). The goal is to create a high-bandwidth link between the human brain and digital systems. If successful, this technology could render the traditional 100-IQ average obsolete, allowing humans to access the entirety of the internet’s information and processing power directly through thought.

4. Data Analytics and the Quantification of Cognitive Trends

In the world of Big Data, the “Average IQ” is a vital data point for understanding labor markets, UX design, and educational technology. Tech companies use cognitive demographic data to tailor products to the median user’s processing speed and comprehension levels.

UX Design and Cognitive Load

Software teams invest heavily in research informed by “Cognitive Load Theory.” Working from the premise that users can hold only a limited amount of information in mind at once, designers create interfaces that minimize friction. This involves “progressive disclosure” (showing only the information needed at a specific moment) so that the average user is not overwhelmed by the complexity of the software.
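Progressive disclosure can be sketched in a few lines: each control is assigned a tier, and the interface shows only the tiers the user has opened so far. The `Control` class, the three-tier scheme, and the sample labels below are all hypothetical, chosen only to illustrate the pattern.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Control:
    label: str
    tier: int  # hypothetical tiers: 0 = essential, 1 = common, 2 = advanced

CONTROLS = [
    Control("Save", 0),
    Control("Share", 0),
    Control("Export as PDF", 1),
    Control("Version history", 1),
    Control("Custom scripting", 2),
]

def visible_controls(controls, disclosed_tier):
    """Return labels of controls at or below the tier the user has opened."""
    return [c.label for c in controls if c.tier <= disclosed_tier]

print(visible_controls(CONTROLS, 0))  # ['Save', 'Share']
print(visible_controls(CONTROLS, 1))
```

A first-time user sees two controls instead of five, and the rest appear only when the user asks for more.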

Predictive Analytics in Education

EdTech platforms use data analytics to track the learning trajectories of millions of students. By mapping these trajectories against average cognitive benchmarks, these platforms can identify “outliers” (those significantly above or below the average IQ) and provide personalized, AI-driven curriculum adjustments. This data-driven approach aims to bridge the gap for those below the average while accelerating those above it.
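One simple way to flag such outliers is a z-score against the benchmark. The sketch below assumes, for illustration, that each student has a single score on an IQ-like scale (mean 100, standard deviation 15) and that “significantly” means two or more standard deviations from the mean; real platforms would work with richer trajectory data, and the function name and sample scores are made up.

```python
def flag_outliers(scores: dict[str, float], mean: float = 100.0,
                  sd: float = 15.0, threshold: float = 2.0) -> dict[str, float]:
    """Return {student: z-score} for scores at least `threshold` SDs from the mean."""
    flagged = {}
    for student, score in scores.items():
        z = (score - mean) / sd
        if abs(z) >= threshold:
            flagged[student] = round(z, 2)
    return flagged

# Made-up sample data on the assumed IQ-like scale.
sample = {"student_a": 102, "student_b": 68, "student_c": 133, "student_d": 115}
outliers = flag_outliers(sample)
print(outliers)  # {'student_b': -2.13, 'student_c': 2.2}
```

The sign of the z-score tells the platform which direction to adjust: a negative value suggests extra support, a positive one suggests acceleration.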

The Ethics of Cognitive Data

As we move toward a more quantified world, the tech industry faces ethical dilemmas regarding “Cognitive Privacy.” If software can accurately estimate a user’s IQ based on their typing speed, reaction time, and problem-solving patterns within an app, how should that data be protected? The tech sector is currently debating frameworks to ensure that cognitive profiling does not lead to digital discrimination.

5. The Future Roadmap: From IQ to AGI

The ultimate goal of the tech industry is the creation of Artificial General Intelligence (AGI)—a system that meets or exceeds the cognitive capabilities of a human across all domains. When AGI arrives, the concept of “Average IQ” will be fundamentally transformed.

AGI and the New Intelligence Standard

If an AI system with an effective IQ of 200 becomes widely available for a few cents per hour, the value of “Average IQ” in the workforce will change. We will move from a “Knowledge Economy” to an “Allocation Economy,” where the most valuable skill is not the ability to process information (IQ), but the ability to direct AI agents to solve the right problems.

The Democratization of High Intelligence

Technology is the great equalizer. Just as the calculator democratized complex arithmetic, AI is democratizing high-level synthesis, coding, and strategic analysis. The “average” person, equipped with the right tech stack, will soon possess the functional output of a genius from a previous era.

Conclusion: Redefining the Human-Tech Synergy

The question “What is average IQ?” is no longer a static query about a number on a test. In the tech landscape, it is a dynamic metric that represents the starting point of human-machine collaboration. As we continue to develop AI that challenges our cognitive limits and software that expands our mental horizons, the average intelligence of our species will no longer be measured by what we can do alone, but by what we can achieve in tandem with our tools. The future of intelligence is not a competition between biological and silicon minds; it is a synthesis that promises to elevate the “average” to heights previously unimagined.
