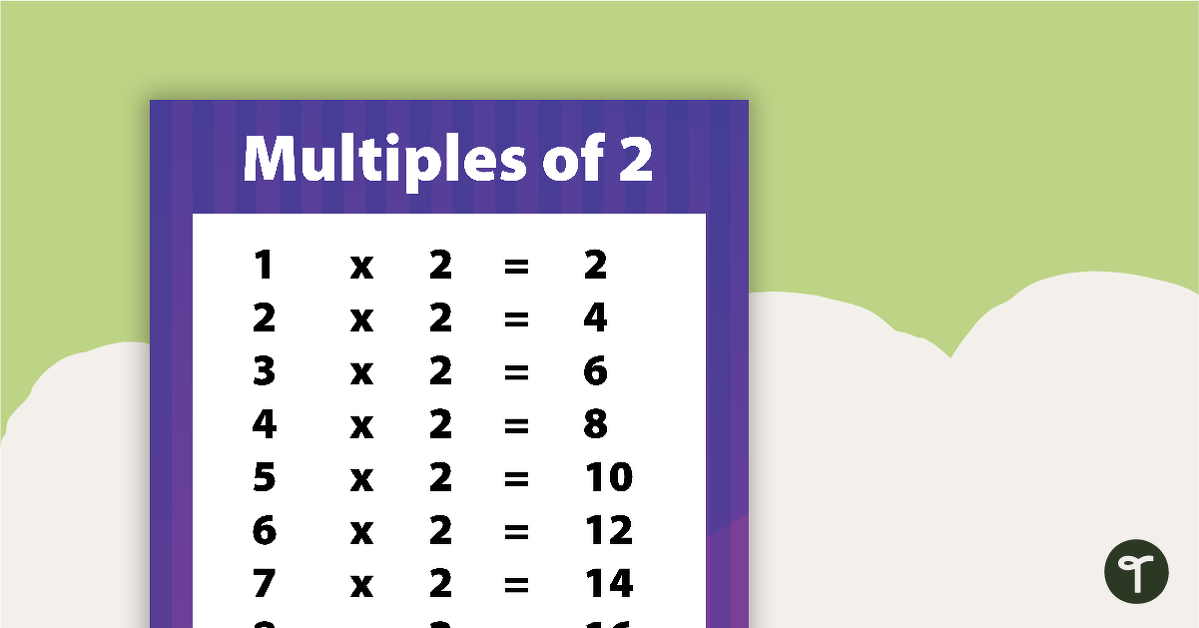

In mathematics, a multiple of 2 is any number that can be written as $2n$, where $n$ is an integer. These are the even numbers: 0, ±2, ±4, ±6, and so on, although everyday lists usually start at 2, 4, 6, 8, 10. While this may seem like a basic concept taught in primary school, in technology the number 2 underlies nearly all digital design. A closely related family, the powers of 2 ($2^n$: 1, 2, 4, 8, 16, and so on), does much of the heavy lifting, and this article covers both. From the smallest transistor on a silicon chip to the vast data centers powering global AI models, the logic of “2” shapes how we store, process, and transmit information.
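Because multiples of 2 and powers of 2 are easy to mix up, a small sketch may help tell them apart. The function names below are illustrative, not from any library; both tests use bitwise operations, which is how such checks are commonly written in low-level code.

```python
def is_multiple_of_two(n: int) -> bool:
    """True when n can be written as 2 * k for some integer k (n is even)."""
    # An even number always has its lowest binary digit set to 0.
    return (n & 1) == 0


def is_power_of_two(n: int) -> bool:
    """True when n is 1, 2, 4, 8, 16, ... (exactly one binary digit set)."""
    # Subtracting 1 flips the single set bit and every bit below it,
    # so the AND is zero only for powers of two.
    return n > 0 and (n & (n - 1)) == 0


for value in (6, 8, 12, 16):
    print(value, is_multiple_of_two(value), is_power_of_two(value))
```

Every power of 2 from 2 upward is also a multiple of 2, but not the other way around: 6 and 12 are even without being powers of 2.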

Understanding the significance of these multiples is essential for anyone looking to grasp how software interacts with hardware. In tech, we don’t just use multiples of 2; we live by them. This article explores how this mathematical sequence governs everything from memory allocation to network protocols and the future of quantum computing.

The Architecture of Digital Logic: Why 2 is the Magic Number

At the most fundamental level, computers do not understand the decimal system (base-10) that humans use. Instead, they operate on a binary system (base-2). This choice isn’t arbitrary; it is a result of the physical limitations and efficiencies of electronic hardware.

Transistors and the Binary State

The heart of every modern processor is the transistor, a tiny electronic switch. These switches have two primary states: “on” (representing 1) or “off” (representing 0). Because there are only two possible states, the entire logic of computing is built on powers and multiples of 2. When we group these switches together, the number of possible configurations grows exponentially, but always remains rooted in the base-2 system. This binary simplicity allows for high-speed processing with minimal error, as the hardware only needs to distinguish between a high voltage and a low voltage.
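The exponential growth described above can be checked directly. This is a minimal sketch that models each switch as a 0 or 1 and enumerates every pattern; `switch_configurations` is a name chosen for illustration.

```python
from itertools import product


def switch_configurations(n_switches: int) -> list[tuple[int, ...]]:
    """Enumerate every on/off pattern for a group of two-state switches."""
    return list(product((0, 1), repeat=n_switches))


for n in range(1, 5):
    configs = switch_configurations(n)
    # The number of patterns doubles with each added switch: 2 ** n.
    print(f"{n} switches -> {len(configs)} configurations")
```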

Bits, Bytes, and Word Sizes

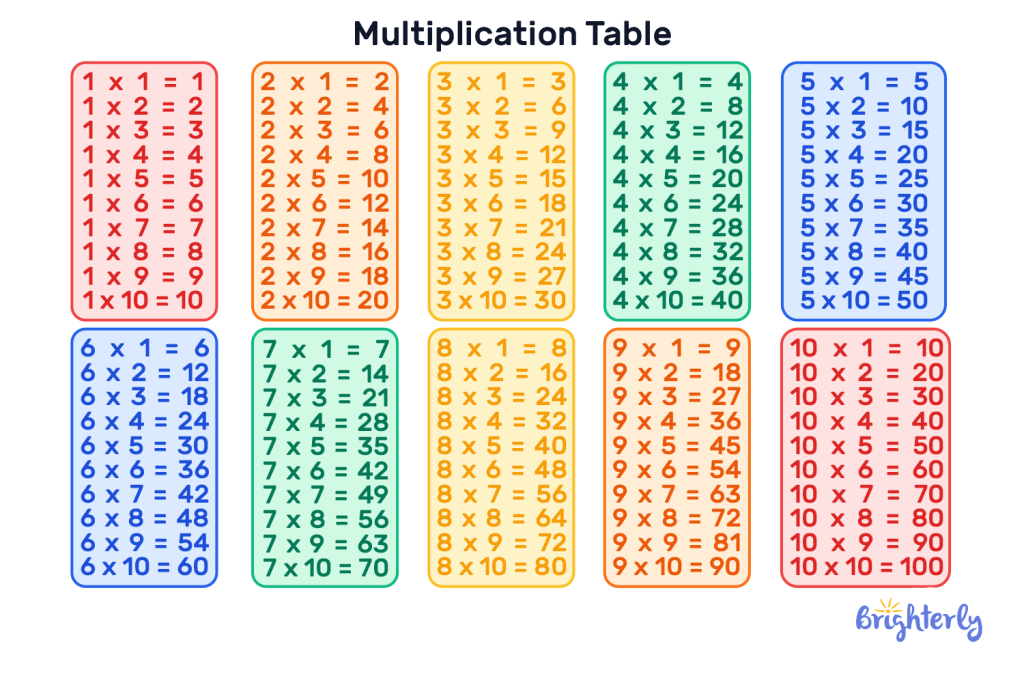

The “bit” is the smallest unit of data in computing, and we usually group bits in power-of-two bundles. A “byte” consists of 8 bits. Why 8? Seven bits were already enough for the 128 characters of ASCII (the English alphabet, digits, punctuation, and control characters), but 8 is itself a power of 2, and the extra bit doubles the range to $2^8 = 256$ values. Early machines used other byte sizes; the 8-bit byte became the norm after IBM's System/360 adopted it in the 1960s. From there, we see the progression of CPU architectures: 8-bit, 16-bit, 32-bit, and the modern 64-bit systems. A 64-bit processor works on wider values per instruction than a 32-bit one and can, in principle, address $2^{64}$ memory locations instead of $2^{32}$ (about 4 GiB when each location is one byte); real chips typically wire up fewer address bits than the full 64. This leap from 32 to 64 is a clear example of how powers of 2 set the performance ceilings of our software.
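To put the 32-bit versus 64-bit gap in concrete terms, here is a small sketch that assumes one byte per address (byte-addressable memory) and the full address width being usable, which real processors may not implement. The helper name is hypothetical.

```python
GIB = 1 << 30  # one gibibyte = 2**30 bytes
EIB = 1 << 60  # one exbibyte = 2**60 bytes


def addressable_bytes(address_bits: int) -> int:
    """Upper bound on byte-addressable memory for a given address width."""
    return 1 << address_bits


print(addressable_bytes(32) // GIB, "GiB with 32-bit addresses")  # 4
print(addressable_bytes(64) // EIB, "EiB with 64-bit addresses")  # 16
```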

Multiples of 2 in Hardware Engineering and Memory

If you have ever wondered why you can buy a 128GB smartphone or an 8GB RAM module, but rarely a 7GB or 13GB version, the answer lies in the efficiency of binary addressing. Hardware engineers design memory layouts to align with the binary logic of the processor’s address bus.

Memory Evolution: From Kilobytes to Terabytes

RAM and flash storage are almost always manufactured and sold in powers of 2 or small multiples of them. When a computer looks for a piece of data in its RAM, it uses a binary address. With $k$ address lines, a memory chip can select exactly $2^k$ cells, so a power-of-two capacity uses every possible address with none wasted. This is why we see a progression of 2GB, 4GB, 8GB, 16GB, 32GB, and so on.

In the software world, this creates a distinction between “decimal” gigabytes (used on product labels) and “binary” gibibytes (used by many operating systems, often still labeled “GB”). A manufacturer counts a 500GB drive as $500 \times 10^9$ bytes, but an operating system that divides by $2^{30}$ reports roughly 465 GiB, which explains the “missing” space users notice when they plug in a new drive.
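The gap between the two units is simple arithmetic. The sketch below converts a labeled capacity in decimal gigabytes ($10^9$ bytes) to binary gibibytes ($2^{30}$ bytes); the function name is illustrative, and real drives also lose a little space to formatting, which is not modeled here.

```python
def decimal_gb_to_gib(gigabytes: float) -> float:
    """Convert marketing gigabytes (10**9 bytes) to gibibytes (2**30 bytes)."""
    total_bytes = gigabytes * 10**9
    return total_bytes / 2**30


print(round(decimal_gb_to_gib(500), 2))  # 465.66
```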

Processor Cores and Parallel Processing

Modern hardware trends have shifted away from simply increasing clock speeds to adding more cores. Core counts are often multiples of 2: dual-core, quad-core, octa-core (8), and 16-core processors are common, as are 6- and 12-core parts, which are even without being powers of 2. When software is optimized for “multi-threading,” it performs best when the work can be divided evenly across these cores. An even split keeps every core busy and reduces the time spent waiting for the most heavily loaded core to finish.

Screen Resolution and Digital Visuals

The screens we stare at every day—from smartwatches to 8K televisions—are grids of pixels. The way these pixels are organized and the way colors are rendered is deeply tied to the logic of multiples of 2.

Aspect Ratios and Pixel Density

While aspect ratios like 16:9 are standard, the graphics processing unit (GPU) often works internally with binary-friendly sizes. When a GPU renders a frame, it commonly divides the screen into blocks (often 8×8 or 16×16 pixels) to process textures and lighting. Because these block sizes are powers of 2, the GPU can use “bit-shifting” operations, a very fast shortcut in binary, to compute positions: shifting a value right by 4 bits divides it by 16, which is cheaper than a general-purpose division.
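Here is a minimal sketch of that shortcut for 16-pixel tiles. It is written in Python for readability rather than speed, and the constant and function names are assumptions for illustration. The trick works only because 16 is a power of 2 ($2^4$); it would not work for an arbitrary even number such as 12.

```python
TILE_SHIFT = 4                      # tile width of 16 pixels = 2 ** 4
TILE_MASK = (1 << TILE_SHIFT) - 1   # 0b1111, keeps the low 4 bits


def tile_and_offset(pixel: int) -> tuple[int, int]:
    """Return (tile index, offset inside the tile) for a pixel coordinate."""
    tile = pixel >> TILE_SHIFT   # same result as pixel // 16
    offset = pixel & TILE_MASK   # same result as pixel % 16
    return tile, offset


print(tile_and_offset(37))  # (2, 5)
```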

The Logic of 4K and 8K Scaling

The transition from 1080p to 4K and eventually 8K is not just about “more pixels.” It is about scaling. 4K resolution (3840 x 2160) is exactly double the horizontal and vertical resolution of “Full HD” (1920 x 1080), which means four times as many pixels. This “2x” scaling factor is crucial for software developers. It allows UI elements to be scaled up cleanly: each original pixel maps to a 2 x 2 block of physical pixels, so no pixel has to be split in half (which is physically impossible) and no “interpolation” blur is introduced. By sticking to whole-number factors of 2, the digital image remains sharp and the transition between different hardware generations remains seamless for the end-user.
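Clean 2x scaling can be sketched as plain pixel duplication. The example below treats an image as a list of rows of integer pixel values, a simplification chosen for illustration; real UI toolkits work with richer image types.

```python
def upscale_2x(image: list[list[int]]) -> list[list[int]]:
    """Double width and height by copying each pixel into a 2 x 2 block."""
    scaled = []
    for row in image:
        doubled_row = [pixel for pixel in row for _ in range(2)]
        scaled.append(doubled_row)
        scaled.append(list(doubled_row))  # second, independent copy of the row
    return scaled


small = [[1, 2],
         [3, 4]]
for row in upscale_2x(small):
    print(row)
```

No new pixel values are invented, which is why integer scaling stays sharp. A non-integer factor such as 1.5x would force some output pixels to blend neighboring source pixels.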

Networking and Data Transmission

The internet is a vast web of interconnected devices, each identified by a unique address. The protocols that govern how data moves from point A to point B are also built on the foundation of multiples of 2.

IP Addressing and Subnetting

IPv4, still the most widely used internet protocol, uses 32-bit addresses. These are written as four “octets” of 8 bits each. Each octet can hold a value from 0 to 255 (256 possibilities, or $2^8$). When network engineers set up a corporate network, they use “subnets” to divide traffic. Because a subnet is defined by a fixed-length binary prefix, its size is always a power of 2 (e.g., 8, 16, 32, 64, or 128 addresses), of which two are conventionally reserved for the network and broadcast addresses. This regularity lets routers decide where to send a packet with a single bitwise AND against a binary mask, an operation hardware performs in nanoseconds.
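Python's standard `ipaddress` module makes the power-of-two structure of subnets easy to inspect. The network `192.168.1.0/26` and the helper `in_subnet` below are arbitrary examples chosen for illustration; the helper repeats by hand the mask comparison that the module already performs internally.

```python
import ipaddress

# A /26 prefix leaves 32 - 26 = 6 host bits, so the block holds 2 ** 6 addresses.
network = ipaddress.ip_network("192.168.1.0/26")
print(network.num_addresses)        # 64
print(network.netmask)              # 255.255.255.192
print(len(list(network.hosts())))   # 62 (network and broadcast excluded)


def in_subnet(address: str, net: ipaddress.IPv4Network) -> bool:
    """Check membership with a bitwise AND against the subnet mask."""
    addr_bits = int(ipaddress.ip_address(address))
    mask_bits = int(net.netmask)
    return (addr_bits & mask_bits) == int(net.network_address)


print(in_subnet("192.168.1.42", network))  # True
print(in_subnet("192.168.1.70", network))  # False
```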

Bandwidth and Throughput Efficiency

When we talk about internet speeds like 100 Mbps or 1 Gbps, we are measuring the number of bits sent per second. Under the hood, compression formats such as ZIP and JPEG shrink data by finding redundancy, and their working units are usually powers of 2. DEFLATE, the algorithm behind most ZIP files, searches for repeated byte sequences inside a “sliding window” of up to 32 KiB ($2^{15}$ bytes), while JPEG processes images in 8×8 pixel blocks. Power-of-two sizes keep buffer indexing cheap, so the software can quickly identify redundant information and “shrink” the file for faster transmission. The same kind of efficiency, applied by video codecs, is what allows us to stream high-definition video over bandwidth-limited wireless links.
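As one concrete instance of a power-of-two sliding window, DEFLATE (the algorithm behind most ZIP files) is exposed in Python's standard `zlib` module, where the `wbits` argument is the base-2 logarithm of the window size. The sketch below uses hypothetical helper names and highly repetitive sample data, so the compression ratio shown is not representative of real files.

```python
import zlib


def window_bytes(wbits: int) -> int:
    """Size of the DEFLATE history window for a given wbits setting."""
    return 1 << wbits


def compress_with_window(data: bytes, wbits: int = 15) -> bytes:
    """Compress data using a sliding window of 2 ** wbits bytes (9 to 15)."""
    compressor = zlib.compressobj(9, zlib.DEFLATED, wbits)
    return compressor.compress(data) + compressor.flush()


sample = b"multiples of 2 " * 2000
packed = compress_with_window(sample)

print(window_bytes(15))          # 32768, i.e. 32 KiB
print(len(sample), len(packed))  # original size vs. compressed size
```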

The Future of Computing: Beyond Base-2?

As we push the limits of silicon-based technology, the industry is looking toward new horizons. While our current world is defined by the multiples of 2, the next generation of tech—Quantum Computing—redefines this relationship.

Quantum Computing and Qubits

In a traditional computer, a bit is either 0 or 1. In a quantum computer, a “qubit” can exist in a superposition of both states simultaneously. However, even in this advanced field, the size of the machine is measured by its number of qubits, and the computational space grows by powers of 2. A 2-qubit system has 4 basis states; a 3-qubit system has 8; and a 50-qubit system has $2^{50}$, roughly 1.1 quadrillion. We are still tethered to the exponential growth of 2, even as we move into the realm of quantum physics.
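The growth in state count is easy to tabulate. The sketch below also estimates how much classical memory it would take just to store one amplitude per basis state, under the assumption of 16 bytes per amplitude (two 64-bit floats for a complex number); that figure is an illustrative choice, not a property of quantum hardware.

```python
BYTES_PER_AMPLITUDE = 16  # assumed: one complex number as two 64-bit floats
PIB = 1 << 50             # one pebibyte = 2**50 bytes


def basis_states(n_qubits: int) -> int:
    """Number of distinct basis states for n qubits: 2 ** n."""
    return 1 << n_qubits


def state_vector_bytes(n_qubits: int) -> int:
    """Classical memory needed to hold one amplitude per basis state."""
    return basis_states(n_qubits) * BYTES_PER_AMPLITUDE


for n in (2, 3, 50):
    print(n, basis_states(n))

print(state_vector_bytes(50) // PIB, "PiB to store a 50-qubit state vector")  # 16
```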

The Enduring Legacy of Binary

Despite the rise of AI and quantum research, the “multiple of 2” remains the foundation of mainstream digital hardware. Programs written in languages from Python to C++ are compiled or interpreted down to machine instructions that the processor ultimately handles as binary signals. As long as our hardware relies on the distinction between “on” and “off,” “high” and “low,” or “0” and “1,” the multiples of 2 will remain the most important numbers in the tech industry.

In conclusion, “what are multiples of 2” is more than a math query; it is a question about the DNA of our digital world. Whether you are upgrading your RAM, designing a website, or troubleshooting a network, you are interacting with a system that prizes the symmetry and efficiency of the number 2. It is the language of the machine, and understanding it is the first step toward mastering the technology that defines our era.
