In the rapidly evolving landscape of technology, from the complex algorithms powering artificial intelligence to the physics engines behind high-end gaming, there is a silent mathematical engine at work. At the heart of this engine lies a concept from linear algebra known as the “null space.” While it may sound like a term plucked from a science fiction novel, the null space (also referred to as the kernel) is one of the most practical concepts in modern computing. It is the set of all inputs that a linear system maps to zero, so it tells you exactly what the system ignores, which information it discards, and which changes to the input leave the output untouched.

To understand the null space is to understand the architecture of data itself. As we push the boundaries of machine learning, robotics, and digital security, mastering these hidden dimensions becomes not just a mathematical exercise, but a technical necessity for developers and engineers.

The Mathematical Foundation: Decoding Linear Transformations

Before diving into high-level tech applications, we must establish what the null space is within the framework of linear transformations. In tech, almost everything—from an image filter to a neural network layer—can be represented as a matrix.

Defining the Kernel: From Vectors to Zero

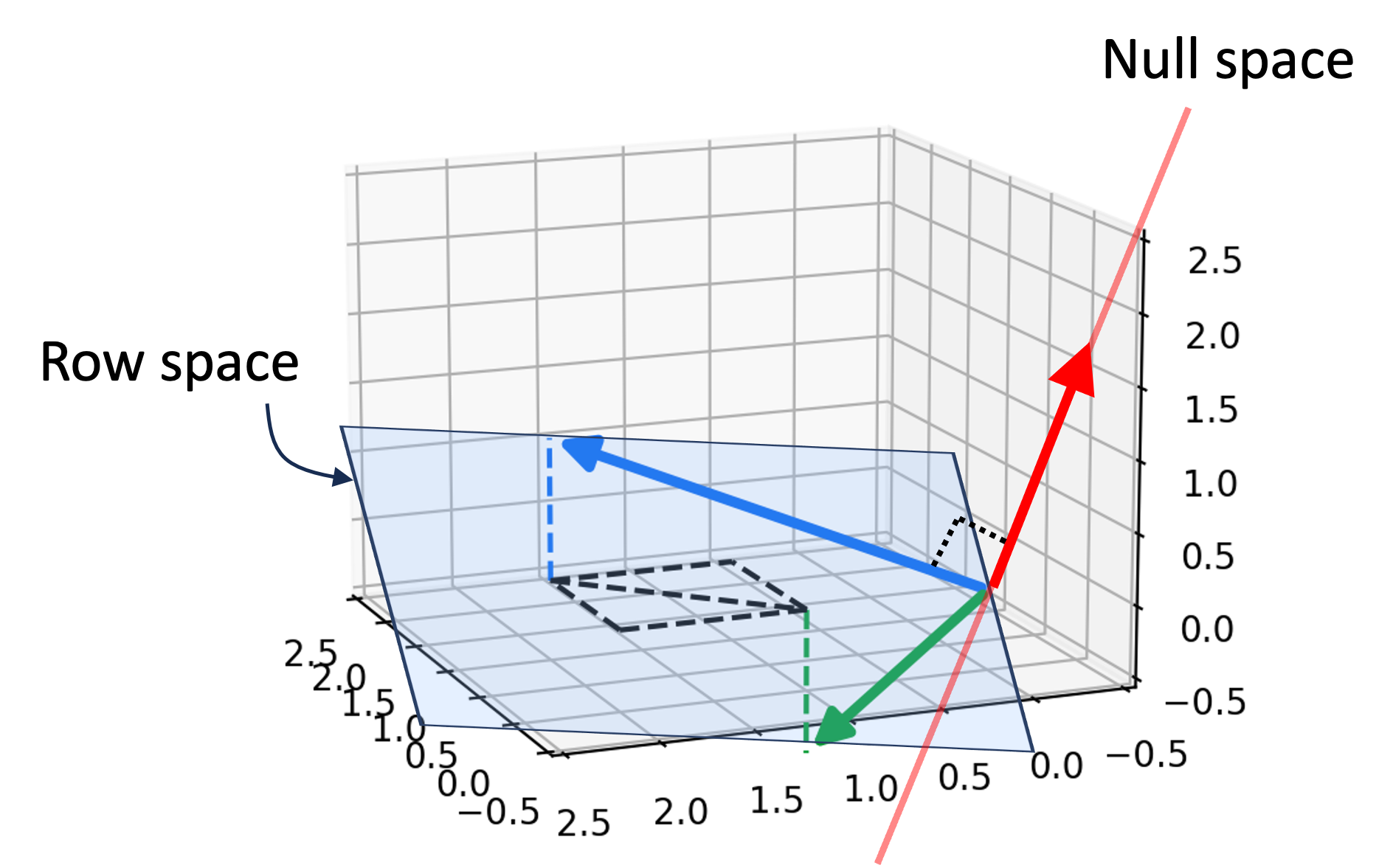

In technical terms, if you have a matrix $A$ representing a transformation, the null space consists of all vectors $x$ that satisfy the equation $Ax = 0$. In simpler terms, if you imagine the matrix as a machine that processes data, the null space contains all the inputs whose output is the zero vector. The zero vector itself always qualifies; the interesting case is when non-zero inputs do too. This doesn’t mean these inputs are “useless”; rather, they mark the “blind spots” or redundancies of that specific transformation, because adding any of them to an input leaves the output unchanged.
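The definition above can be checked numerically. The following is a minimal sketch using NumPy's singular value decomposition; the helper name `null_space_basis`, the tolerance, and the example matrix are illustrative assumptions, not something taken from a particular library.

```python
import numpy as np

def null_space_basis(A, tol=1e-10):
    """Return an orthonormal basis (as columns) for the null space of A.

    Hypothetical helper: `tol` is an assumed absolute cutoff below which
    a singular value is treated as zero.
    """
    A = np.atleast_2d(np.asarray(A, dtype=float))
    # Full SVD: the rows of Vt are the right singular vectors of A.
    _, s, Vt = np.linalg.svd(A)
    # The number of non-negligible singular values is the numerical rank.
    rank = int(np.sum(s > tol))
    # The remaining right singular vectors are mapped to (numerically) zero.
    return Vt[rank:].T

# Example matrix: the second row is twice the first, so the rank is 1.
A = np.array([[1.0, 2.0, 3.0],
              [2.0, 4.0, 6.0]])
N = null_space_basis(A)

print(N.shape)                # (3, 2): two independent "blind spot" directions
print(np.allclose(A @ N, 0))  # True: every column of N satisfies Ax = 0
```

In practice the cutoff is usually chosen relative to the largest singular value rather than as a fixed number; the fixed value here just keeps the sketch short.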

The Role of Matrices in Tech Environments

Matrices are the spreadsheets of the tech world, organizing vast amounts of data into rows and columns. When a software program processes a high-resolution image, it treats that image as a massive matrix. Many of the transformations applied, such as blurring or sharpening, are linear operations that can themselves be written as matrices. The null space of such an operation tells us which components of the data are collapsed to zero and cannot be recovered from the output. Understanding this allows engineers to predict what a transformation will lose before a single line of code is executed.

Dimensionality and the Rank-Nullity Theorem

One of the most important results for a data scientist is the Rank-Nullity Theorem. For a matrix $A$ with $n$ columns, it states that $n = \operatorname{rank}(A) + \operatorname{nullity}(A)$: the number of input dimensions equals the rank (the dimensions that “do something”) plus the nullity (the dimension of the null space). In the context of Big Data, this is vital. If a data matrix whose columns are features has high nullity, many of those columns are linear combinations of the others and add no new information. Identifying this allows for leaner, faster, and more efficient software.
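As a quick sanity check of the theorem, the sketch below counts the rank and the nullity of a small matrix in two separate ways and confirms that they add up to the number of columns. The matrix and the `1e-10` threshold are assumptions chosen for illustration.

```python
import numpy as np

# Hypothetical 3x4 matrix: the third row is the sum of the first two,
# so only two rows are independent.
A = np.array([[1.0, 0.0, 2.0, 1.0],
              [0.0, 1.0, 1.0, 3.0],
              [1.0, 1.0, 3.0, 4.0]])

n_inputs = A.shape[1]                    # dimension of the input space
rank = int(np.linalg.matrix_rank(A))     # dimensions that "do something"

# Count the nullity directly: right singular vectors that A sends to zero.
_, _, Vt = np.linalg.svd(A)
nullity = int(sum(np.linalg.norm(A @ v) < 1e-10 for v in Vt))

print(n_inputs, rank, nullity)           # 4 2 2
```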

Null Space in Artificial Intelligence and Machine Learning

The explosion of AI has turned the null space from an academic curiosity into a cornerstone of model optimization. When we train deep learning models, we are essentially navigating massive multi-dimensional spaces.

Feature Selection and Data Compression

In machine learning, “features” are the variables we use to train a model (e.g., age, income, and location for a credit score AI). However, not all features are unique. If two features are perfectly correlated, one is a linear function of the other, and the (centered) data matrix has a non-trivial null space; each null-space vector spells out one such dependency. By identifying that null space, AI engineers can drop the redundant columns, a simple form of “dimensionality reduction.” This keeps only the independent information and reduces the computation required to train a model; for exactly redundant features, a linear model loses no accuracy.
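To make the redundant-feature idea concrete, here is a small sketch with made-up data in which one column repeats another in different units. All names and values are hypothetical, and no centering is needed because the duplicate has no constant offset.

```python
import numpy as np

# Hypothetical data: 50 applicants with age (years) and income (thousands).
rng = np.random.default_rng(0)
age_years = rng.uniform(20, 70, size=50)
income = rng.uniform(20, 120, size=50)
age_months = 12.0 * age_years            # same information as age_years

X = np.column_stack([age_years, income, age_months])

# Right singular vectors whose singular value is (relatively) zero
# span the null space of the data matrix.
_, s, Vt = np.linalg.svd(X, full_matrices=False)
rank = int(np.sum(s > s[0] * 1e-10))
null_vectors = Vt[rank:]

# Rescale the single null vector so the age_months coefficient is -1.
# The result is close to [12, 0, -1], i.e. 12*age_years - age_months = 0.
dependency = -null_vectors[0] / null_vectors[0][2]
print(rank)
print(np.round(dependency, 6))
```

The non-zero entries of the null vector point at the columns involved in the dependency, so dropping either `age_years` or `age_months` removes the redundancy.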

Redundancy in Neural Networks

Modern neural networks, such as Large Language Models (LLMs), have billions of parameters. Research has shown that many of these parameters are redundant: loosely speaking, they sit in the “null space” of the network’s decision-making process. This observation underlies a technique called “pruning.” By identifying the weights and neurons that have zero (or near-zero) impact on the output, developers can “prune” the network, making it smaller and faster so it can run on edge devices like smartphones rather than massive server farms.

Solving Underdetermined Systems in Deep Learning

Often in AI, we encounter “underdetermined systems,” where we have more unknowns than equations. In these scenarios, a consistent system has not one right answer but infinitely many. The null space describes them all: if $x_p$ is any one solution of $Ax = b$, every other solution is $x_p$ plus a vector from the null space of $A$. By understanding the null space, researchers can “regularize” their models, steering the AI toward a preferred solution (for example, the one with the smallest norm) rather than one that has simply memorized the training data (a problem known as overfitting).
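The structure of the solution set can be seen in a tiny example. The sketch below uses an assumed system of two equations in three unknowns, takes the minimum-norm solution from the pseudoinverse, and then shifts it along the null space to obtain a different but equally valid solution.

```python
import numpy as np

# Hypothetical underdetermined system: 2 equations, 3 unknowns.
A = np.array([[1.0,  1.0, 1.0],
              [1.0, -1.0, 0.0]])
b = np.array([6.0, 0.0])

# The pseudoinverse picks the solution with the smallest Euclidean norm.
x_min = np.linalg.pinv(A) @ b

# Basis of the null space from the SVD (rows of Vt beyond the rank).
_, _, Vt = np.linalg.svd(A)
rank = int(np.linalg.matrix_rank(A))
N = Vt[rank:].T

# Any shift along the null space gives another exact solution.
x_other = x_min + N @ np.array([5.0])

print(np.allclose(A @ x_min, b), np.allclose(A @ x_other, b))
print(np.linalg.norm(x_min) < np.linalg.norm(x_other))
```

Choosing the smallest-norm solution is only one possible preference; it is shown here because it is the simplest to compute and is closely related to L2-style regularization.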

Applications in Computer Graphics and Robotics

While AI uses null space for data management, robotics and computer graphics use it to manage physical and virtual movement. This is where the concept becomes incredibly tangible.

Inverse Kinematics and Singularities

In robotics, “Inverse Kinematics” (IK) is the process of calculating the joint angles needed to move a robot’s hand to a specific point in space. If a robot arm has seven joints but only needs to reach a 3D coordinate, it has extra “degrees of freedom.” These extra movements live in the null space of the task Jacobian, the matrix that maps joint velocities to hand velocities. Engineers use this “null space motion” to let the robot perform secondary tasks, like avoiding an obstacle or minimizing energy consumption, while still keeping its hand exactly where it needs to be. It is the mathematical secret to fluid, human-like robotic motion.
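A common way to realize this kind of null space motion is to project a secondary joint motion through $I - J^{+}J$, where $J$ is the Jacobian mapping joint velocities to hand velocities and $J^{+}$ is its pseudoinverse. The sketch below assumes a planar three-joint arm reaching a 2D point (so one degree of freedom is spare); the link lengths, joint angles, and step sizes are made up for illustration.

```python
import numpy as np

def jacobian(q, lengths):
    """Jacobian of the (x, y) hand position of a planar serial arm."""
    cum = np.cumsum(q)                   # absolute angle of each link
    J = np.zeros((2, len(q)))
    for i in range(len(q)):
        # Rotating joint i moves every link from i to the tip.
        J[0, i] = -np.sum(lengths[i:] * np.sin(cum[i:]))
        J[1, i] = np.sum(lengths[i:] * np.cos(cum[i:]))
    return J

lengths = np.array([1.0, 1.0, 0.5])      # assumed link lengths
q = np.array([0.3, 0.5, -0.4])           # assumed joint angles (radians)

J = jacobian(q, lengths)
J_pinv = np.linalg.pinv(J)
P = np.eye(3) - J_pinv @ J               # projector onto the null space of J

dx = np.array([0.01, 0.0])               # primary task: small hand motion
dq_secondary = np.array([0.1, -0.2, 0.05])   # e.g. an obstacle-avoidance nudge

dq_null = P @ dq_secondary               # part of the nudge the hand cannot feel
dq = J_pinv @ dx + dq_null               # combined joint update

print(np.allclose(J @ dq_null, 0))       # True: no hand motion to first order
print(np.allclose(J @ dq, dx))           # True: primary task still satisfied
```

The guarantee is first-order only, and it assumes the arm is away from a singular configuration, where the Jacobian loses rank and the pseudoinverse becomes ill-conditioned.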

Image Processing: Noise Reduction and Filtering

Many “noise reduction” filters rely on the null space. Digital noise often sits in high-frequency components that contribute little to the actual “signal” or meaning of the image. A linear filter designed so that those components fall in its null space will “zero out” much of the graininess while leaving the lower-frequency content that carries the subject largely intact.
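As a simplified one-dimensional illustration, the sketch below builds a linear low-pass filter with the FFT. Every frequency above the cutoff lies in the filter's null space, so a purely high-frequency "noise" term vanishes. The signal, the noise, and the cutoff `keep=8` are assumptions; real image noise is spread across all frequencies, so a real filter removes only part of it.

```python
import numpy as np

def low_pass(signal, keep):
    """Linear filter that zeroes every frequency bin from `keep` upward."""
    spectrum = np.fft.rfft(signal)
    spectrum[keep:] = 0                  # these components map to zero
    return np.fft.irfft(spectrum, n=len(signal))

n = 64
t = np.arange(n)
clean = np.sin(2 * np.pi * 2 * t / n)          # low-frequency "subject"
noise = 0.3 * np.sin(2 * np.pi * 20 * t / n)   # high-frequency "grain"

filtered = low_pass(clean + noise, keep=8)

print(np.allclose(low_pass(noise, keep=8), 0))  # True: noise is in the null space
print(np.allclose(filtered, clean))             # True: the signal survives
```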

Optimization and Constraint Satisfaction

In 3D game engines like Unreal or Unity, developers often need to satisfy multiple constraints simultaneously—such as a character’s feet staying on the ground while their head looks at a moving target. The null space allows the engine to solve for the primary constraint (the feet) and then use the “leftover” mathematical space to solve for the secondary constraint (the head movement) without breaking the first one.

Digital Security and Cryptography: The Hidden Dimensions

The null space is also a critical tool in the world of cybersecurity, acting as both a shield and a sword in the realm of encryption and error correction.

Linear Cryptanalysis and Null Spaces

Cryptographers use linear algebra to build and break codes. In linear cryptanalysis, attackers look for linear approximations of a cipher. If part of an encryption algorithm behaves like a linear map with a predictable null space, an attacker can in principle find input changes that have no effect on the output, reducing the effort required to recover the key. Consequently, modern ciphers include non-linear components so that no such exploitable linear structure is available.

Error Correction Codes

Data transmission is never perfect; bits get flipped or lost due to interference. To fix this, tech protocols use “Error Correction Codes” (ECC). These codes add redundant data to a message in a very specific way. When the data is received, the system checks whether the incoming vector falls into the null space of a “parity-check matrix” $H$, that is, whether $Hr = 0$ in binary arithmetic for the received vector $r$. If it doesn’t, the system knows an error has occurred, and the non-zero result (the syndrome) lets it work out which bit went wrong and flip it back, provided the number of errors is within what the code can correct. This is why your Wi-Fi and hard drives work reliably even in “noisy” environments.
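The classic textbook instance of this check is the Hamming(7,4) code, which can correct any single flipped bit. The sketch below is illustrative only: the function name is hypothetical, and real Wi-Fi and storage systems use much larger codes built on the same null-space idea.

```python
import numpy as np

# Parity-check matrix of the Hamming(7,4) code. Column j (counting from 1)
# is the binary representation of j, most significant bit in the top row.
H = np.array([[0, 0, 0, 1, 1, 1, 1],
              [0, 1, 1, 0, 0, 1, 1],
              [1, 0, 1, 0, 1, 0, 1]])

def correct_single_error(received):
    """Return (corrected word, 1-based error position or 0 if none)."""
    r = np.array(received) % 2
    syndrome = (H @ r) % 2               # zero exactly when r is in the null space
    position = int(syndrome[0] * 4 + syndrome[1] * 2 + syndrome[2])
    if position != 0:
        r[position - 1] ^= 1             # flip the offending bit back
    return r, position

codeword = np.array([0, 1, 1, 0, 0, 1, 1])   # satisfies H @ c = 0 (mod 2)
received = codeword.copy()
received[4] ^= 1                             # simulate a flip in position 5

fixed, position = correct_single_error(received)
print(position)                              # 5
print(np.array_equal(fixed, codeword))       # True
```

With two or more flipped bits the syndrome points at the wrong position, which is why stronger codes are used on noisier channels.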

The Future of Computational Logic: Why Developers Must Understand Null Space

As we move toward a future defined by quantum computing and real-time autonomous systems, the importance of linear algebra concepts like the null space will only grow.

Beyond Simple Coding: The Rise of the Math-Centric Developer

The era of the “scripting-only” developer is shifting. As we integrate more AI and physics-based logic into standard applications, the most successful developers will be those who understand the underlying mathematical structures. Understanding the null space allows a developer to write code that isn’t just functional, but mathematically optimized. It provides a deeper insight into “algorithmic efficiency,” helping to identify where code is doing unnecessary work.

Building More Efficient Algorithms

In the push for “Green Tech” and sustainable computing, reducing the energy consumption of data centers is paramount. Some of the energy wasted in computing goes into processing “zero-impact” data: information that effectively lives in the null space of the desired output. By refining our algorithms to identify and bypass these null dimensions early in the pipeline, we can create software that requires less electricity and fewer hardware resources.

In conclusion, the null space is far from a void. It is a space of infinite potential and a vital tool for technical refinement. Whether it is helping a robot move gracefully, compressing a 4K video, or protecting your private data, the null space is the silent guardian of our digital world. By mastering this concept, tech professionals can unlock new levels of precision, efficiency, and innovation in their work.
