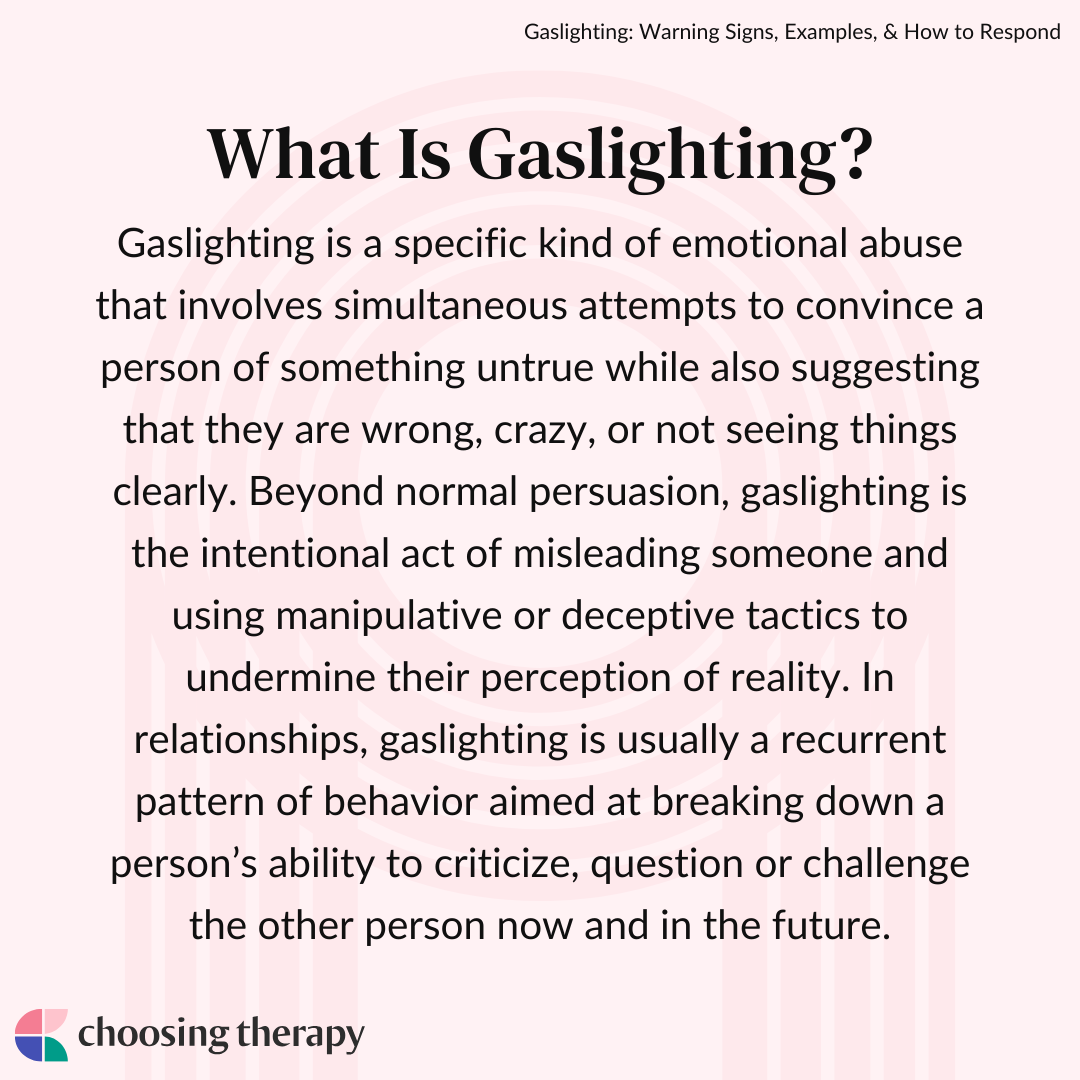

Gaslighting, a term for psychological manipulation that leads a person to doubt their own perception, has found a disturbing and increasingly prevalent application in technology. While its roots lie in interpersonal deception, its manifestation in digital spaces presents unique challenges and requires a specific understanding. This article examines what gaslighting means in the tech sphere: how it operates, how it affects users, and how to identify and mitigate it. The focus is on how manipulative tactics are employed through software, user interfaces, and digital information flows to undermine users’ perception of reality and their sense of control.

The Digital Facade: How Technology Facilitates Gaslighting

Technology, by its very nature, can create a disconnect between physical reality and perceived digital experience. This disconnect can be exploited to subtly manipulate users, leading them to doubt their own judgment or memory. The opaque nature of many digital systems, coupled with the sheer volume of information, creates fertile ground for such manipulation.

Algorithmic Orchestration and Information Control

At its core, algorithmic gaslighting involves the deliberate manipulation of the information presented to a user to shape their perception and decision-making. This is not merely about showing personalized ads; it extends to subtly altering search results, news feeds, or even the availability of certain information to create a desired narrative or impression.

Subtle Redirection and Omission

Algorithms are designed to curate user experiences, but this power can be weaponized. Imagine a scenario where search results for a particular product or service are consistently ranked lower for a user who has expressed dissatisfaction or is exploring alternatives. This subtle redirection, coupled with the omission of critical information or contrasting viewpoints, can lead a user to believe that their initial negative experience was an anomaly or that no viable alternatives exist. The user begins to doubt their own research or instincts, questioning if they “remembered” the information correctly or if they are somehow misinterpreting the available data.

The Illusion of Consensus or Dissent

Social media platforms, in particular, are susceptible to algorithmic manipulation that can create an artificial sense of consensus or dissent. By selectively amplifying certain posts or comments, or by downranking others, platforms can make a particular viewpoint appear far more widespread or fringe than it actually is. A user might find their feed dominated by posts supporting a specific political stance, leading them to believe this is the prevailing public opinion, even if independent analysis suggests otherwise. Conversely, dissenting voices might be systematically suppressed, making the user feel isolated or mistaken in their differing perspective. This manufactured reality undermines their confidence in their own assessment of public sentiment.
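The amplification effect described above can be illustrated with a toy ranking model. This is a minimal sketch and not any platform's actual algorithm: the `Post` type, the engagement scores, and the per-stance weight are all hypothetical, chosen only to show how a modest ranking boost can change the apparent balance of opinion in the top of a feed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    post_id: int
    stance: str        # "for" or "against" (hypothetical labels)
    engagement: float  # hypothetical base ranking score


def rank_feed(posts, stance_weights, top_k):
    """Return the top_k posts after scaling each score by a per-stance weight."""
    ranked = sorted(
        posts,
        key=lambda p: p.engagement * stance_weights.get(p.stance, 1.0),
        reverse=True,
    )
    return ranked[:top_k]


def stance_share(feed, stance):
    """Fraction of the visible feed that holds the given stance."""
    return sum(p.stance == stance for p in feed) / len(feed)


# An evenly split population: 10 posts "for", 10 posts "against".
POSTS = [
    Post(i, "for" if i % 2 == 0 else "against", 10.0 - i * 0.5)
    for i in range(20)
]

neutral_feed = rank_feed(POSTS, {}, top_k=10)
boosted_feed = rank_feed(POSTS, {"for": 2.0}, top_k=10)

print(stance_share(neutral_feed, "for"))  # 0.5
print(stance_share(boosted_feed, "for"))  # 0.7
```

In this toy setup the underlying population never changes. Only the weighting does, yet the visible feed shifts from an even split to a clear majority.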

User Interface (UI) Deception and Dark Patterns

Beyond algorithmic manipulation, the very design of user interfaces can be employed to subtly mislead and gaslight users. “Dark patterns” are design choices that trick users into doing things they might not otherwise do, often to the benefit of the platform or company. These patterns prey on human psychology and can erode a user’s sense of agency.

The “Opt-Out” Maze and Hidden Settings

Many digital services make it significantly easier to opt in to certain features, subscriptions, or data sharing agreements than to opt out. Users might find themselves inadvertently subscribed to a service after a free trial, with the cancellation process being deliberately convoluted, hidden deep within settings menus, or requiring multiple steps that are not clearly signposted. When the user finally discovers the charge or the unintended subscription, they might question their own attention to detail, thinking, “I must have missed something,” rather than recognizing a deliberate design choice intended to obscure their ability to control their settings. This erodes their trust in their own ability to manage their digital presence.

Ambiguous Language and Misleading Prompts

The language used in prompts, notifications, and terms of service can also be a tool for gaslighting. Vague phrasing, the use of double negatives, or the presentation of choices in a biased manner can trick users into agreeing to something they didn’t intend. For instance, a prompt might say, “Do not wish to miss out on updates?” followed by a button labeled “Yes.” A user focused on quickly proceeding might click “Yes” without fully processing the negative framing, only to find themselves bombarded with unwanted notifications later. They might then doubt their own comprehension, thinking they misread the question or misunderstood its implications.
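As a rough illustration of how such wording could be caught in a design review, the sketch below flags prompts that stack negative cues and then offer a bare “Yes” or “No” button. The cue list, the threshold of two cues, and the function names are assumptions made for this example. A real audit would need human judgment rather than a keyword heuristic.

```python
import re

# Hypothetical cue list: words and phrases that invert or negatively frame a question.
NEGATIVE_CUES = re.compile(r"\b(?:not|don't|never|unless|miss(?:ing)? out)\b")

# Button labels whose meaning depends entirely on how the question is phrased.
AMBIGUOUS_LABELS = {"yes", "no", "ok"}


def count_negative_cues(prompt):
    """Count negative cues in a prompt, treating curly apostrophes as straight ones."""
    text = prompt.lower().replace("\u2019", "'")
    return len(NEGATIVE_CUES.findall(text))


def looks_confusing(prompt, button_label, threshold=2):
    """Flag prompts with stacked negative framing and an ambiguous button label."""
    ambiguous = button_label.strip().lower() in AMBIGUOUS_LABELS
    return ambiguous and count_negative_cues(prompt) >= threshold


print(looks_confusing("Do not wish to miss out on updates?", "Yes"))  # True
print(looks_confusing("Send me product updates?", "Yes"))             # False
```

Self-describing button labels such as “Send me updates” and “No updates” avoid the problem entirely, which is why the heuristic only looks at ambiguous labels.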

The Psychological Impact: Undermining User Trust and Autonomy

The consistent exposure to gaslighting tactics within technology can have profound psychological effects on users. It erodes trust, fosters self-doubt, and ultimately diminishes a user’s sense of autonomy in the digital world.

Erosion of Trust in Digital Platforms and Information

When users repeatedly experience situations where their understanding of a digital interaction or piece of information is subtly undermined, their trust in the platforms they use begins to crumble. They become perpetually on guard, second-guessing every notification, every piece of content, and every decision presented to them. This constant vigilance is exhausting and breeds cynicism. The user may start to wonder if anything they see or experience online is truly as it appears, leading to a generalized distrust of digital information and services.

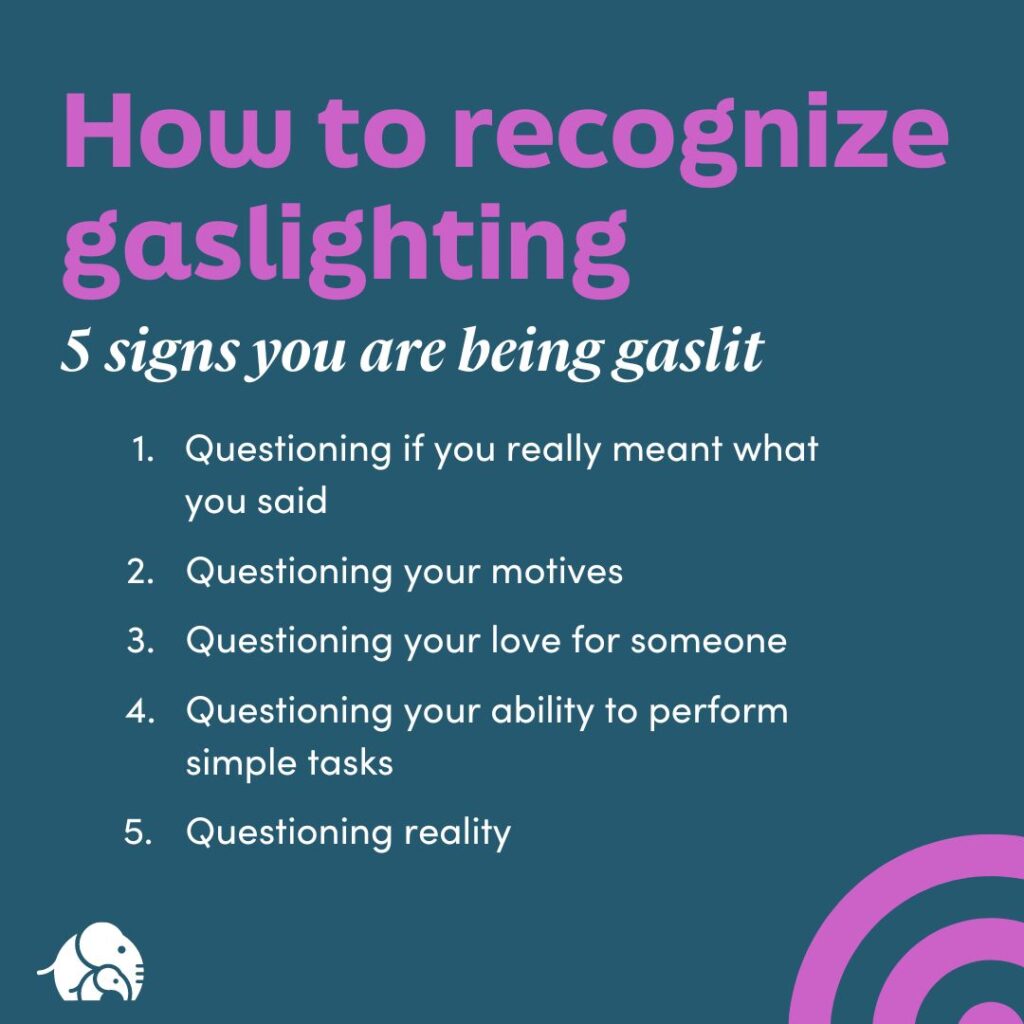

The “Am I Crazy?” Phenomenon: Self-Doubt and Cognitive Dissonance

One of the most insidious effects of technological gaslighting is the induction of self-doubt. When a user is repeatedly led to believe they have made a mistake, misunderstood something, or are misremembering, they begin to question their own cognitive abilities. This can manifest as the “Am I crazy?” phenomenon, where individuals doubt their own perception of reality. Cognitive dissonance, the mental discomfort of holding contradictory beliefs or of acting against one’s own beliefs or values, becomes a constant companion: the user remembers one thing while the system insists on another. The user may start to believe that the problem lies within themselves, rather than with the manipulative design or algorithms they are encountering.

Questioning Memory and Perception

Digital systems often record user actions, providing a factual, albeit sometimes incomplete, record. However, gaslighting occurs when the presentation of this information is skewed. For example, a user might receive a notification confirming a purchase, only to later find no record of that purchase in their transaction history. The system might then offer explanations that subtly blame the user for “not saving the confirmation” or “misplacing the email,” rather than acknowledging a glitch or a deliberate misrepresentation. This constant questioning of one’s own memory and perception is a hallmark of gaslighting.

Diminished User Agency and Control

Ultimately, technological gaslighting aims to reduce a user’s agency and control. When users feel they cannot trust their own judgment regarding digital interactions, their ability to make informed choices is severely hampered. They may become hesitant to engage with new technologies, fearful of being manipulated. This can lead to a passive acceptance of whatever the digital environment presents, a state of learned helplessness where the user feels powerless to influence their own digital experience.

Recognizing and Resisting Digital Gaslighting

Navigating the digital landscape requires a heightened awareness of manipulative tactics. By understanding the mechanisms of technological gaslighting, users can develop strategies to recognize and resist it, reclaiming their autonomy and trust.

Developing Digital Literacy and Critical Thinking

The first line of defense against digital gaslighting is robust digital literacy. This involves understanding how algorithms work, recognizing common dark patterns, and critically evaluating information presented online. Users need to move beyond passive consumption and actively question the sources and presentation of digital content and interactions.

Verifying Information and Cross-Referencing

A fundamental practice in combating digital gaslighting is to consistently verify information. This means cross-referencing data from multiple reputable sources, rather than relying on a single search result or a curated feed. If a platform or service presents information in a way that seems contradictory or questionable, users should seek independent confirmation. For instance, if a product review seems overly biased, a user should seek out reviews on different platforms or consult independent consumer watchdog sites.

Understanding Algorithms and UI Design

Educating oneself about how algorithms personalize content and how user interfaces are designed to encourage certain behaviors is crucial. Knowing that search results can be influenced by past behavior, that social media feeds are curated for engagement, and that certain UI elements are designed to trick users can help in disarming these tactics. This knowledge shifts the user’s perspective from “What did I do wrong?” to “How is this system trying to influence me?”

Practical Strategies for Defense

Beyond awareness, concrete actions can be taken to protect oneself from digital gaslighting. These strategies focus on maintaining control and reinforcing one’s own perception of reality.

Leveraging Privacy Settings and Tools

Actively managing privacy settings is paramount. Users should regularly review and adjust the permissions granted to apps and websites, opting out of unnecessary data collection. Browser extensions that block trackers and limit algorithmic personalization can also help create a more neutral digital environment. Granular privacy controls are sometimes hard to find, but seeking them out and using them gives users back a degree of autonomy.

Documenting and Reporting Suspected Manipulation

When encountering instances that feel like gaslighting, particularly those involving financial transactions, critical settings, or significant service changes, it is important to document them. Taking screenshots, saving emails, and noting specific dates and times can serve as evidence. Reporting these instances to platform providers, consumer protection agencies, or advocacy groups can help resolve individual issues and also contribute to broader awareness and potential changes in industry practices. The immediate emotional toll of doubt can be significant, but a factual record helps reinforce one’s own reality and serves as a tool for recourse.
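One way to keep such a record is a simple timestamped log that stores a cryptographic hash of each screenshot or saved email, so that the file can later be shown to be unchanged. The sketch below is one possible approach under stated assumptions: the record fields and the function names `make_evidence_record` and `append_record` are hypothetical, and the attachment is passed in as raw bytes rather than read from a real file.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_evidence_record(description, attachment, observed_at=None):
    """Build a log entry with a UTC timestamp and a SHA-256 digest of the attachment."""
    observed_at = observed_at or datetime.now(timezone.utc)
    return {
        "observed_at": observed_at.isoformat(),
        "description": description,
        "sha256": hashlib.sha256(attachment).hexdigest(),
        "size_bytes": len(attachment),
    }


def append_record(log_lines, record):
    """Append the record as one JSON line; earlier lines are never rewritten."""
    log_lines.append(json.dumps(record, sort_keys=True))
    return log_lines


# Usage: in practice the bytes would come from open(path, "rb").read().
screenshot = b"placeholder bytes standing in for a screenshot file"
record = make_evidence_record(
    "Purchase confirmation shown, but no entry in transaction history",
    screenshot,
    observed_at=datetime(2024, 1, 2, 3, 4, 5, tzinfo=timezone.utc),
)
log = append_record([], record)
print(log[0])
```

Recomputing the hash of the saved file later and comparing it with the logged value confirms the evidence has not been altered since it was recorded.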

Cultivating a Healthy Skepticism

A healthy dose of skepticism is an invaluable tool in the digital age. This doesn’t mean becoming overly paranoid, but rather adopting a mindset of questioning and critical evaluation. When something online feels “off,” or when a user finds themselves doubting their own understanding, it’s a sign to pause, investigate, and trust their instincts. The goal is to move from a position of passive acceptance to active engagement, where the user is an informed and empowered participant in their digital interactions, rather than a pawn to be manipulated. By understanding what gaslighting means in the context of technology, we can better equip ourselves to navigate the digital world with confidence and integrity.
