In the modern digital landscape, where billions of devices interact across every time zone, a standardized, unambiguous way of measuring time is essential. Humans rely on calendars and clocks, with all their complications: leap years, time zones, and daylight saving time. Computers need something more linear and predictable. This is where the Unix timestamp (also known as Epoch time or POSIX time) comes into play. It is the silent heartbeat of the internet, a simple integer that tracks the passage of time.

The Mechanics of Unix Time: Understanding the Epoch

At its core, a Unix timestamp is the number of seconds that have elapsed since the “Unix Epoch,” not counting leap seconds. To understand how a computer perceives time, one must first understand this specific starting point and the logic behind the counter.

The Starting Point: January 1, 1970

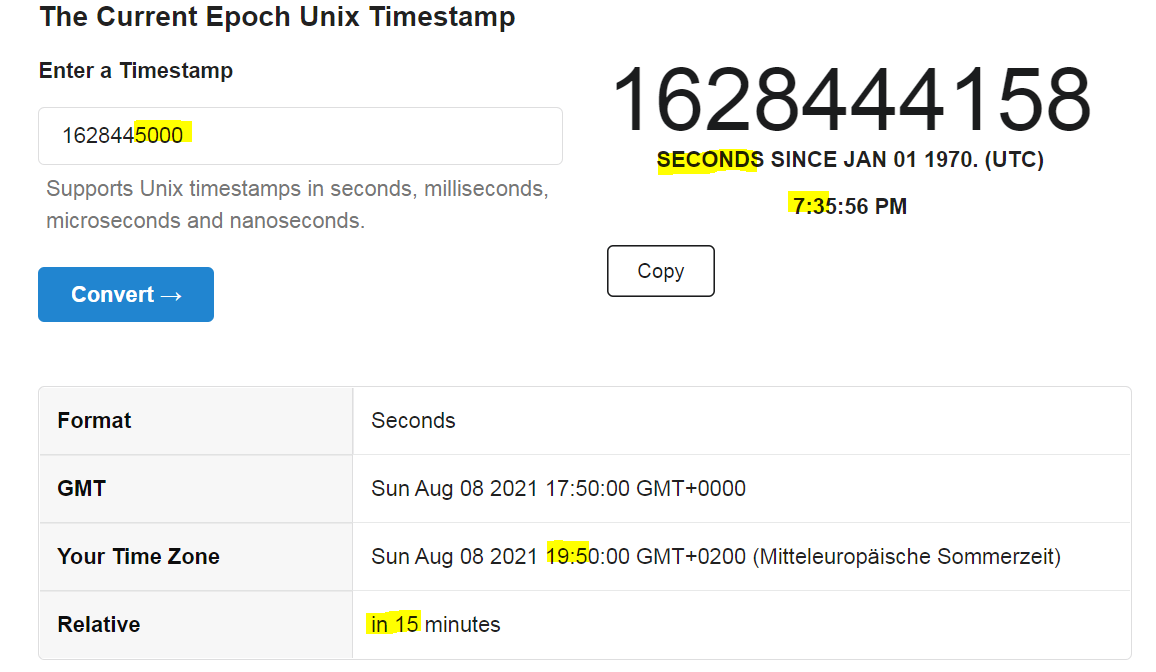

The Unix Epoch is defined as 00:00:00 Coordinated Universal Time (UTC) on Thursday, January 1, 1970. This date was chosen somewhat arbitrarily by the early developers of the Unix operating system at Bell Labs, but it has since become the industry standard for virtually all modern computing environments, including Linux, macOS, and even web-based APIs. When a Unix timestamp is “0,” it refers exactly to that moment in 1970. As each second passes, the counter increments by one.
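
As a small illustration of the definition above, the following Python sketch converts the timestamp 0 (and one day's worth of seconds) back into a calendar date. The variable names are only illustrative.

```python
from datetime import datetime, timezone

# Timestamp 0 is the Unix Epoch itself.
epoch = datetime.fromtimestamp(0, tz=timezone.utc)
print(epoch.isoformat())  # 1970-01-01T00:00:00+00:00

# Each elapsed second adds one, so 86,400 is exactly one day later.
one_day_later = datetime.fromtimestamp(86_400, tz=timezone.utc)
print(one_day_later.isoformat())  # 1970-01-02T00:00:00+00:00
```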

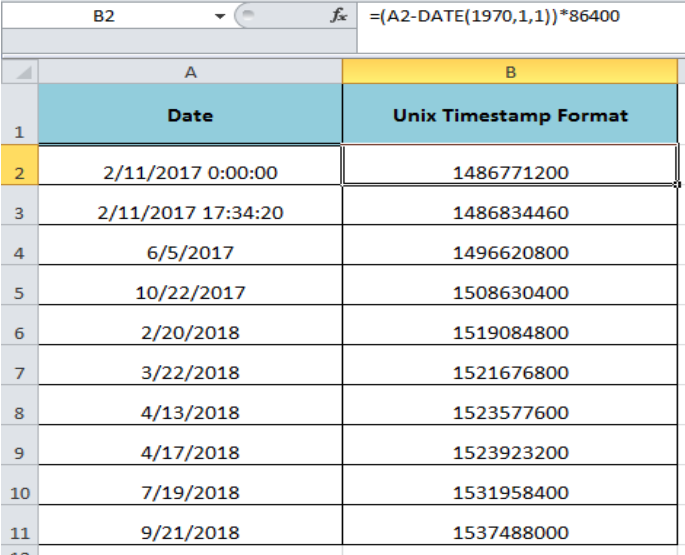

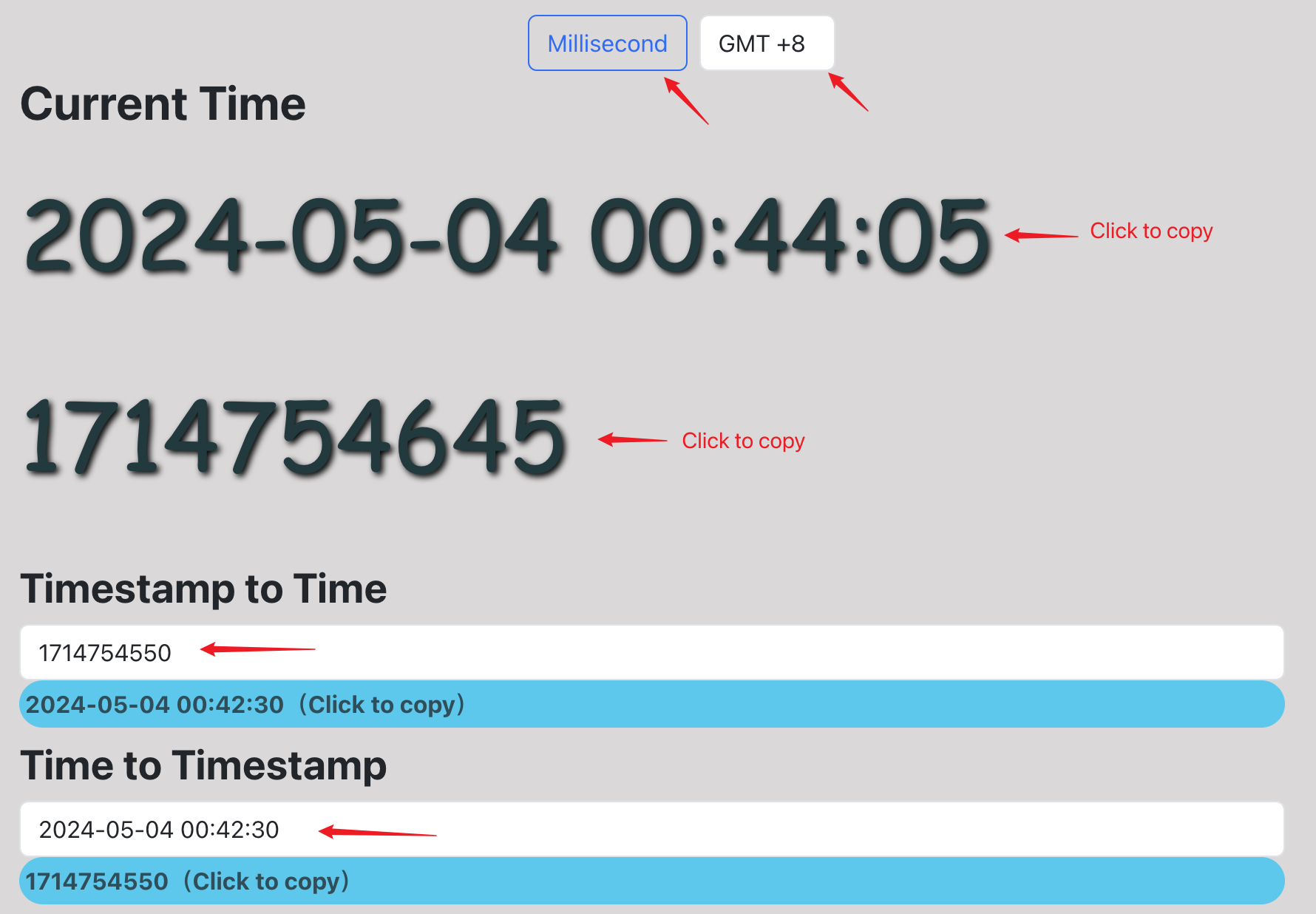

How the Counter Works: Seconds vs. Milliseconds

Standard Unix time is measured in seconds. For instance, a timestamp of 1672531200 corresponds to 00:00:00 UTC on January 1, 2023. However, as the need for higher precision arose, particularly in high-frequency trading or scientific simulations, many programming environments (such as JavaScript and Java) began using milliseconds. A millisecond timestamp is the same count expressed in thousandths of a second, so a whole-second Unix timestamp converts to milliseconds by multiplying by 1,000. Distinguishing between the two is a fundamental skill for developers: reading a millisecond value as seconds pushes a date tens of thousands of years into the future, while reading a seconds value as milliseconds lands it in January 1970.
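
The difference between the two units can be sketched in Python as follows. The helper normalize_to_seconds and its cutoff are hypothetical: guessing the unit from the magnitude of the number is a heuristic, not a standard, and a real system is better off recording the unit explicitly.

```python
from datetime import datetime, timezone

ts_seconds = 1672531200          # 2023-01-01 00:00:00 UTC, in seconds
ts_millis = ts_seconds * 1000    # the same instant, in milliseconds


def to_utc(seconds):
    """Convert a seconds-based Unix timestamp to an aware UTC datetime."""
    return datetime.fromtimestamp(seconds, tz=timezone.utc)


def normalize_to_seconds(ts):
    """Hypothetical heuristic: treat very large values as milliseconds.

    1e11 seconds is past the year 5000, so anything larger is assumed
    to be a millisecond count. This is an assumption, not a rule.
    """
    return ts // 1000 if ts > 100_000_000_000 else ts


print(to_utc(ts_seconds))                        # 2023-01-01 00:00:00+00:00
print(to_utc(normalize_to_seconds(ts_millis)))   # 2023-01-01 00:00:00+00:00

# The classic bug: dividing a seconds value by 1000 lands in January 1970.
print(to_utc(ts_seconds // 1000))                # 1970-01-20 08:35:31+00:00
```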

Leap Seconds and UTC Alignment

One of the most technical aspects of the Unix timestamp is how it handles “leap seconds.” UTC occasionally inserts an extra second to stay aligned with the Earth’s slightly irregular rotation, but Unix time treats every day as exactly 86,400 seconds long and has no value for that extra second. Systems cope in different ways: some repeat a timestamp or step the clock back by one second, while others “smear” the extra second across a longer period by running the clock slightly slow. Either way the timestamp stays aligned with UTC and the day arithmetic stays simple, at the cost that Unix time is not a strictly monotonic count of elapsed seconds around a leap second.
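
A quick way to see this is to measure a day that contained a leap second. UTC inserted one at the end of December 31, 2016, yet the Unix timestamps on either side of that day differ by the usual 86,400. The sketch below uses only the standard calendar module.

```python
import calendar

# Midnight UTC at the start of 2016-12-31 and of 2017-01-01.
# A leap second (23:59:60 UTC) was inserted between these two instants.
start_of_leap_day = calendar.timegm((2016, 12, 31, 0, 0, 0))
start_of_next_day = calendar.timegm((2017, 1, 1, 0, 0, 0))

# Unix time still counts the day as 86,400 seconds; the leap second
# has no timestamp of its own.
print(start_of_next_day - start_of_leap_day)  # 86400
```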

Why Developers and Systems Rely on Unix Timestamps

If humans find “October 12, 2023, at 4:30 PM” easier to read, why do systems insist on using long strings of digits? The answer lies in the inherent challenges of “Human Time” versus “System Time.”

Platform Independence and Universal Compatibility

One of the greatest hurdles in global software development is the “Time Zone Nightmare.” If a server in California logs an event at 2:00 PM and a server in London logs an event at 10:00 PM, determining which happened first means converting both local times to a common offset, and with the usual eight-hour difference between the two cities those readings can describe the very same instant. A Unix timestamp, however, is identical regardless of where the server is located. Because it is based on UTC, a timestamp of 1700000000 represents the exact same moment in history for every machine on Earth. This makes it the ultimate “Single Source of Truth” for distributed systems.
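
The California and London example can be checked with a short Python sketch. It assumes a winter date (January 15, 2024, chosen arbitrarily) and uses fixed offsets of UTC-8 and UTC+0 in place of full time zone rules, so it ignores daylight saving time.

```python
from datetime import datetime, timedelta, timezone

# Fixed offsets stand in for real time zone rules (an assumption
# that only holds while neither city is on daylight saving time).
PST = timezone(timedelta(hours=-8), "PST")
GMT = timezone(timedelta(hours=0), "GMT")

california_event = datetime(2024, 1, 15, 14, 0, tzinfo=PST)  # 2:00 PM
london_event = datetime(2024, 1, 15, 22, 0, tzinfo=GMT)      # 10:00 PM

# Different wall-clock readings, same Unix timestamp.
california_ts = int(california_event.timestamp())
london_ts = int(london_event.timestamp())
print(california_ts == london_ts)  # True
```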

Simplifying Date Calculations and Sorting

Computers are significantly faster at comparing integers than they are at parsing strings. To determine if one date is “later” than another using human-readable strings, a program must parse the month, day, year, and time, account for AM/PM, and then compare. With Unix timestamps, the computer simply checks if Integer A > Integer B. This makes sorting large datasets by time or calculating the duration between two events (e.g., Timestamp2 - Timestamp1) incredibly efficient and less prone to logic errors.
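
A minimal sketch of both operations, using made-up event names and timestamps:

```python
# Hypothetical events as (name, Unix timestamp in seconds) pairs.
events = [
    ("deploy", 1700003600),
    ("alert", 1700000000),
    ("rollback", 1700007200),
]

# Sorting by time is a plain integer sort on the timestamp.
ordered = sorted(events, key=lambda event: event[1])
print([name for name, _ in ordered])  # ['alert', 'deploy', 'rollback']

# Duration between two events is a single subtraction.
elapsed_seconds = ordered[-1][1] - ordered[0][1]
print(elapsed_seconds)  # 7200
```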

Database Efficiency and Storage Optimization

In large-scale databases handling millions of transactions per second, storage efficiency is critical. Storing a date as a string (e.g., “2023-10-12 16:30:00”) requires significantly more bytes than storing it as a 4-byte or 8-byte integer. By using Unix timestamps, developers can minimize the storage footprint of their logs and indexes, leading to faster query performance and lower infrastructure costs.
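
The size difference can be measured directly. The sketch below compares the example string with the same instant packed as a 4-byte and an 8-byte integer using Python's struct module. Actual on-disk sizes depend on the database engine and its column types, so treat the numbers as a rough guide only.

```python
import calendar
import struct

text_form = "2023-10-12 16:30:00"
timestamp = calendar.timegm((2023, 10, 12, 16, 30, 0))  # same instant, UTC

string_bytes = len(text_form.encode("ascii"))
int32_bytes = len(struct.pack("<i", timestamp))  # signed 32-bit integer
int64_bytes = len(struct.pack("<q", timestamp))  # signed 64-bit integer

print(string_bytes, int32_bytes, int64_bytes)  # 19 4 8
```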

Implementing Unix Time Across Programming Environments

Every major programming language provides built-in tools for working with Unix time. Understanding how to generate and convert these timestamps is a foundational element of backend and frontend engineering.

Usage in JavaScript and Web Development

In the world of web development, JavaScript’s Date object is the primary tool for time management. Calling Date.now() returns the current Unix timestamp in milliseconds. Because APIs often send data in seconds, developers frequently use Math.floor(Date.now() / 1000) to normalize the data. This conversion is crucial when building real-time dashboards or countdown timers that must remain consistent across different user browsers.

Python and Data Science Integration

Python, a favorite for data science and automation, handles timestamps via the time and datetime modules. Calling time.time() returns the current Unix timestamp as a floating-point number, allowing for sub-second precision. For data analysts, converting these values back into readable formats using datetime.fromtimestamp() is a common step in cleaning datasets for visualization or reporting. Note that datetime.fromtimestamp() returns local time unless a time zone is passed (for example tz=timezone.utc), which is a frequent source of off-by-hours errors.
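
The calls mentioned above fit together roughly like this. Passing tz=timezone.utc is a choice made for this sketch so that the output does not depend on the machine's local time zone.

```python
import time
from datetime import datetime, timezone

# Current Unix time as a float (seconds, with a fractional part).
now = time.time()
now_utc = datetime.fromtimestamp(now, tz=timezone.utc)
print(now, now_utc.isoformat())

# Converting a known timestamp to a readable UTC datetime and back.
moment = datetime.fromtimestamp(1700000000, tz=timezone.utc)
print(moment.isoformat())  # 2023-11-14T22:13:20+00:00

round_trip = int(moment.timestamp())
print(round_trip)  # 1700000000
```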

Unix Time in SQL and Cloud Databases

Relational databases offer native functions for converting to and from Unix time. MySQL provides UNIX_TIMESTAMP() and FROM_UNIXTIME(), while PostgreSQL uses EXTRACT(EPOCH FROM ...) and to_timestamp(). These allow developers to perform time-based arithmetic directly within a query. In the era of Cloud Computing, services like AWS DynamoDB use Unix timestamps for “Time to Live” (TTL) settings, where a record becomes eligible for automatic deletion once the current time exceeds the stored timestamp.
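
To keep the example self-contained, the sketch below uses SQLite through Python's built-in sqlite3 module rather than the databases named above. SQLite's datetime(..., 'unixepoch') plays the role of a "from Unix time" function here. The sessions table, its columns, and the fixed value of now are all hypothetical, and the query only imitates a TTL check, not any cloud service's actual deletion behavior.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sessions (id TEXT, expires_at INTEGER)")
conn.executemany(
    "INSERT INTO sessions VALUES (?, ?)",
    [("a", 1700000000), ("b", 1700003600)],
)

# Pretend "now" is halfway between the two expiry times.
now = 1700001800

# Integer comparison selects the rows that have not expired yet;
# datetime(..., 'unixepoch') renders the timestamp as UTC text.
live_sessions = conn.execute(
    "SELECT id, datetime(expires_at, 'unixepoch') "
    "FROM sessions WHERE expires_at > ?",
    (now,),
).fetchall()
print(live_sessions)  # [('b', '2023-11-14 23:13:20')]
conn.close()
```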

The “Year 2038 Problem” (Y2K38) and Future Challenges

Much like the famous Y2K bug at the turn of the millennium, the Unix timestamp faces its own “existential crisis” known as the Year 2038 problem. This is a significant concern for legacy systems and embedded hardware.

The 32-bit Integer Limitation

Many older systems store the Unix timestamp as a signed 32-bit integer. The maximum value a signed 32-bit integer can hold is 2,147,483,647. When the Unix timestamp reaches this number—which will happen at 03:14:07 UTC on January 19, 2038—the counter will “overflow.” Instead of incrementing to the next second, it will wrap around to the minimum negative value, causing the system to believe the date is December 13, 1901.
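
The wraparound can be reproduced in Python by forcing the counter into a signed 32-bit integer with ctypes. This is a sketch of the arithmetic only. How a particular legacy system behaves at the limit depends on its software.

```python
import ctypes
from datetime import datetime, timedelta, timezone

EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)
INT32_MAX = 2**31 - 1  # 2,147,483,647

# The last second a signed 32-bit counter can represent.
last_valid = EPOCH + timedelta(seconds=INT32_MAX)
print(last_valid.isoformat())  # 2038-01-19T03:14:07+00:00

# One more second, truncated to 32 bits, wraps to the minimum value.
wrapped = ctypes.c_int32(INT32_MAX + 1).value
print(wrapped)  # -2147483648

# Interpreted as a timestamp, that is a date in 1901.
after_wrap = EPOCH + timedelta(seconds=wrapped)
print(after_wrap.isoformat())  # 1901-12-13T20:45:52+00:00
```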

Potential Consequences for Legacy Systems

The implications of the Y2K38 bug are vast. Financial systems calculating long-term mortgages, critical infrastructure managing power grids, and embedded systems in transportation could all fail if they cannot handle dates beyond 2038. Unlike Y2K, which was largely a matter of how years were written (two digits instead of four), Y2K38 is a limitation of the underlying data type, one that is baked into binary interfaces, file formats, and firmware.

Modern Solutions: The Transition to 64-bit

The tech industry is already well underway in mitigating this risk. Most modern 64-bit operating systems use a signed 64-bit integer to store Unix time, and several 32-bit platforms have also moved to a 64-bit time type. A signed 64-bit count of seconds covers approximately 292 billion years, so it will effectively outlast the existence of the Earth itself. The challenge remains in updating legacy systems, such as industrial sensors and older networking equipment, whose software or firmware cannot easily be replaced.
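
The 292 billion year figure follows from simple arithmetic, sketched here with an average year of 365.25 days (an approximation chosen for this example):

```python
INT64_MAX = 2**63 - 1                    # largest signed 64-bit value
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # average year, in seconds

years_of_range = INT64_MAX / SECONDS_PER_YEAR
print(f"{years_of_range / 1e9:.0f} billion years")  # 292 billion years
```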

Unix Timestamp in Cybersecurity and Distributed Systems

Beyond simple time-tracking, Unix timestamps play a critical role in securing the digital world and ensuring that complex networks function in harmony.

Log Analysis and Forensic Investigation

In cybersecurity, the timeline of an attack is everything. When a security analyst examines server logs to trace a breach, they rely on Unix timestamps to correlate events across multiple machines. If a firewall in Tokyo logs a suspicious packet and a database in New York logs an unauthorized access attempt, the Unix timestamps allow the analyst to see how far apart those events occurred, down to the millisecond if the logs record that precision and the machines’ clocks are kept in sync (for example via NTP), facilitating the reconstruction of the attack vector.

Synchronizing Distributed Clusters

Modern applications often run on “clusters” of hundreds of servers. These servers must keep their clocks closely synchronized to manage distributed databases (like Cassandra or MongoDB). Systems that resolve conflicts by timestamp use it to determine which version of a piece of data is the “newest.” A shared time standard is necessary but not sufficient: if one server’s clock runs slightly fast, its older write can carry a later timestamp and overwrite newer data, leading to silent data corruption.
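
The failure mode can be sketched with a toy "last write wins" rule. Everything here (the Write class, the stamp and last_write_wins helpers, the ten-second skew) is hypothetical and far simpler than what a real distributed database does. It only shows why clock skew matters when timestamps decide the winner.

```python
from dataclasses import dataclass


@dataclass
class Write:
    value: str
    timestamp: int  # Unix seconds, as reported by the writing server


def stamp(value, true_time, clock_skew):
    """Record a write using a server clock that is off by clock_skew seconds."""
    return Write(value, true_time + clock_skew)


def last_write_wins(a, b):
    """Keep whichever write carries the larger timestamp."""
    return a if a.timestamp > b.timestamp else b


# Server A's clock runs 10 seconds fast; server B's clock is accurate.
older = stamp("old value", true_time=1700000000, clock_skew=10)
newer = stamp("new value", true_time=1700000003, clock_skew=0)

# The write that really happened first wins because of the skewed clock.
winner = last_write_wins(older, newer)
print(winner.value)  # old value
```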

Token Expiration and Session Management

When you log into a website, the server often issues a JSON Web Token (JWT). Inside this token are Unix timestamps, in seconds, representing the “Issued At” (iat) and “Expiration” (exp) times. Because a Unix timestamp means the same thing everywhere, any party holding the token can check whether it has expired by comparing exp with its own clock, without needing to communicate about time zones. Validators commonly allow a few seconds of leeway for clock drift. This is a cornerstone of modern stateless authentication.
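
As a rough sketch of the expiry check, the code below builds a fake token, reads its payload with the standard library, and compares exp against a supplied clock value. The helper names, the leeway parameter, and the token itself are made up for illustration. The sketch deliberately does not verify the signature, which a real application must do (typically through a JWT library) before trusting any claim.

```python
import base64
import json


def b64url(data):
    """Base64url-encode bytes without padding, as JWT segments are."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def decode_payload(token):
    """Read the claims from the middle segment. No signature check."""
    payload_segment = token.split(".")[1]
    padded = payload_segment + "=" * (-len(payload_segment) % 4)
    return json.loads(base64.urlsafe_b64decode(padded))


def is_expired(claims, now, leeway=0):
    """A token is expired once the clock reaches exp (plus any leeway)."""
    return now >= claims["exp"] + leeway


# A fake, unsigned token valid for one hour from iat.
header = b64url(json.dumps({"alg": "none", "typ": "JWT"}).encode())
payload = b64url(json.dumps({"iat": 1700000000, "exp": 1700003600}).encode())
token = f"{header}.{payload}.fake-signature"

claims = decode_payload(token)
print(is_expired(claims, now=1700001800))             # False
print(is_expired(claims, now=1700003600))             # True
print(is_expired(claims, now=1700003605, leeway=10))  # False
```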

Conclusion: The Permanent Legacy of Epoch Time

The Unix timestamp is a testament to the power of simplicity in engineering. By reducing the chaotic complexity of human calendars into a single, ever-growing integer, it has enabled the creation of global, high-performance digital systems. While the Year 2038 problem serves as a reminder of the limitations of older technology, the transition to 64-bit systems ensures that Unix time will remain the standard for centuries to come. Whether you are a developer debugging a database, a data scientist analyzing trends, or a security expert securing a network, the Unix timestamp is the invisible thread that holds the digital timeline together.
