In William Golding’s classic novel, the conclusion is often viewed through a psychological or sociological lens. However, in the modern era of Silicon Valley, the ending of Lord of the Flies serves as a chillingly accurate metaphor for the failure of autonomous systems, the breakdown of decentralized protocols, and the critical necessity of “Human-in-the-Loop” (HITL) intervention. When we ask “what happened at the end,” we aren’t just looking at the rescue of a group of schoolboys; we are looking at a “hard reset” of a system that had spiraled into a catastrophic failure state.

For the tech community, the island is a closed sandbox environment. The boys are autonomous agents. The ending—where a naval officer arrives just as Ralph is about to be “deleted” by Jack’s tribe—represents the ultimate intervention of a higher-level governance layer over a localized, malfunctioning network.

The Breakdown of Systemic Logic: From Decentralized Order to Algorithmic Bias

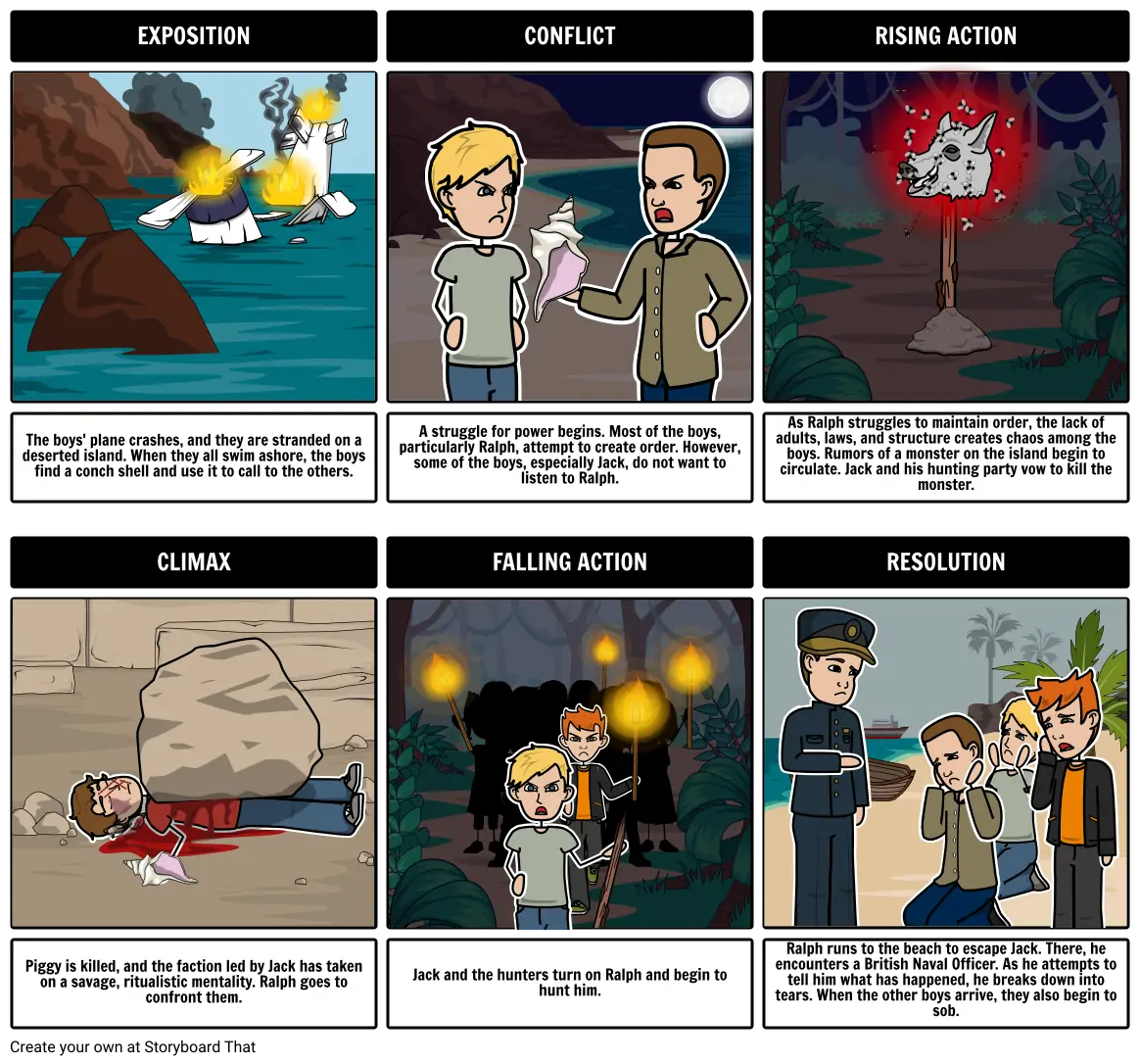

To understand the end, we must analyze the “software” that ran the island. Initially, the boys established a decentralized protocol represented by the Conch. This was a classic queuing system: whoever holds the conch has the priority to transmit data (speak).
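
To make the queuing analogy concrete, here is a minimal sketch of the Conch as a token-passing protocol. The `ConchQueue` class and its method names are hypothetical, invented purely for illustration; nothing here comes from the novel or from a real library.

```python
from collections import deque


class ConchQueue:
    """Hypothetical floor-control protocol: only the conch holder may speak."""

    def __init__(self):
        self.waiting = deque()  # speakers waiting for the conch, in arrival order
        self.holder = None      # whoever currently holds the conch
        self.transcript = []    # messages the protocol accepted

    def request(self, name):
        # Take the conch at once if it is free; otherwise join the queue.
        if self.holder is None:
            self.holder = name
        elif name != self.holder and name not in self.waiting:
            self.waiting.append(name)

    def speak(self, name, message):
        # Messages from anyone other than the holder are rejected.
        if name != self.holder:
            return False
        self.transcript.append((name, message))
        return True

    def release(self, name):
        # Pass the conch to the next waiting speaker, if there is one.
        if name == self.holder:
            self.holder = self.waiting.popleft() if self.waiting else None


assembly = ConchQueue()
assembly.request("Ralph")
assembly.request("Piggy")
ralph_ok = assembly.speak("Ralph", "We need a signal fire.")
jack_ok = assembly.speak("Jack", "We need meat.")  # rejected: Jack does not hold the conch
assembly.release("Ralph")
piggy_ok = assembly.speak("Piggy", "We need shelters.")
```

The rule holds only because every participant routes speech through `speak`. A node that simply shouts over the queue is outside the protocol's control, which is the weakness the rest of the story exploits.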

The Conch as an Interface: The Fragility of Protocol

In the tech world, protocols only work if every node in the network agrees to follow them. The ending of the book is the result of a slow corruption of this code. Jack represents an “adversarial attack” on the system. He realizes that the Conch (the interface) is only as strong as the social consensus behind it. By the time the novel ends, the interface has been physically destroyed, shattered into pieces alongside Piggy. In technical terms, this is the equivalent of deleting a critical system directory: the machine may keep running for a moment, but it can no longer function. Without a shared communication protocol, the system enters a state of pure entropy.

Signal vs. Noise: When Data Patterns Turn Destructive

The “Beast” in the novel functions much like a hallucination in a Large Language Model (LLM). It is a false pattern derived from noisy data (fear, shadows, and a dead parachutist). By the end of the story, this hallucination has become the primary driver of the system’s logic. Jack’s tribe isn’t just a group of hunters; they are a feedback loop that reinforces biased data. When Ralph is hunted in the final pages, he is the last “clean” data point being purged by a biased, corrupted algorithm.

The Naval Officer Moment: The Role of Human Oversight in Autonomous Systems

The climax of Lord of the Flies occurs when Ralph collapses on the beach and looks up to find a naval officer standing over him. This is the “Deus ex Machina” of the story, but in technological terms, it is a “System Override.”

Hard Resets and Kill Switches

What happened at the end was a “Hard Reset.” The island was literally on fire—Jack’s tribe had set the forest ablaze to smoke Ralph out. This fire, intended to destroy a single target, was actually destroying the entire infrastructure (the food source and shelter) of the island. This is a classic “Alignment Problem” in AI. The agent (Jack) had a goal (kill Ralph) and pursued it with such single-mindedness that it endangered the entire environment.

The arrival of the naval officer represents the deployment of a “Kill Switch.” The officer is a representative of a larger, more complex civilization—a higher-tier server or an administrative user. His presence immediately freezes the processes on the island. The “hunters” who were moments away from murder suddenly revert to “little boys.” This illustrates the power of external governance to recalibrate agent behavior instantaneously.
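
As a rough sketch of the idea, the kill switch below is a shared flag that every agent checks before each step. The `run_agent` and `officer_arrives` names are hypothetical, and a production system would need far more than this (authentication, process isolation, audit logs); the only real API used is `threading.Event` from the Python standard library.

```python
import threading


def run_agent(max_steps, kill_switch, on_step=None):
    """Run up to max_steps, checking the external kill switch before each one."""
    completed = 0
    for step in range(max_steps):
        if kill_switch.is_set():
            break  # the higher-level governance layer has intervened
        completed += 1
        if on_step is not None:
            on_step(step)
    return completed


kill_switch = threading.Event()


def officer_arrives(step):
    # Stand-in for the external supervisor: engage the switch during step 2.
    if step == 2:
        kill_switch.set()


jack_steps = run_agent(10, kill_switch, on_step=officer_arrives)
roger_steps = run_agent(10, kill_switch)  # starts after the switch is engaged
```

The design choice worth noting is that the switch lives outside the agents. They can read it, but none of them owns it, so no single agent's goal can override the stop signal.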

The Paradox of the “Adult” Intervention: Regulatory Compliance

There is a bitter irony at the end of the book: the officer who rescues them is a soldier engaged in a global war. In tech, this reflects the “Regulatory Paradox.” We often look to government bodies or international standards to “rescue” us from the chaos of unregulated tech development (like AI or social media echo chambers). However, those regulatory bodies are often operating within their own systems of conflict and “warfare.” The officer represents a “Parent Process” that is just as flawed as the “Child Process” he is shutting down, yet his presence is the only thing that prevents total systemic collapse.

Lessons for Modern Tech Infrastructure: Preventing Digital Tribalism

The ending of the novel provides a roadmap for what happens when “moving fast and breaking things” goes too far. In the tech industry, we see “Island Mentality” in siloed development teams, closed social media platforms, and decentralized finance (DeFi) ecosystems that lack a safety net.

Building Ethical Guardrails in Software Development

The tragedy of Piggy—the “CTO” of the island—is that his logic was not protected by a security layer. When we develop software today, the end of Lord of the Flies warns us that logic and intelligence are not enough. We need “Cybersecurity for Culture.” This means building guardrails that prevent a system from being hijacked by bad actors or adversarial inputs. If the boys had an immutable “Code of Conduct” baked into their “kernel,” the ending would have been a peaceful transition of power rather than a scorched-earth hunt.

Decentralized Autonomous Organizations (DAOs) and the Piggy Problem

Modern DAOs often face the “Lord of the Flies” dilemma. Without a centralized authority, how do you prevent the “Jack” (the whale or the hostile voter) from taking over? The end of the book shows that pure decentralization, without a mechanism for conflict resolution or a “Naval Officer” emergency protocol, leads to the destruction of the minority (the “Ralphs” and “Piggys” of the world). To prevent the “island ending” in tech, developers are turning to mechanisms such as multi-signature wallets, which require several independent keyholders to approve an action, and the challenge periods used by optimistic rollups, which give observers a window to dispute an invalid result. These are technical versions of the “Conch” that are harder to break.
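
The multi-signature idea can be sketched in a few lines. This is an illustrative M-of-N approval check, not the interface of any real wallet or smart-contract library; the `MultiSigProposal` class, its fields, and the example action are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class MultiSigProposal:
    """Hypothetical M-of-N approval: no single signer can act alone."""
    action: str
    signers: frozenset
    threshold: int
    approvals: set = field(default_factory=set)

    def approve(self, signer):
        # Only authorized signers count toward the threshold.
        if signer not in self.signers:
            raise PermissionError(f"{signer} is not an authorized signer")
        self.approvals.add(signer)  # a set, so repeat approvals do not stack

    def can_execute(self):
        return len(self.approvals) >= self.threshold


proposal = MultiSigProposal(
    action="abandon the signal fire",
    signers=frozenset({"Ralph", "Piggy", "Simon", "Jack", "Roger"}),
    threshold=3,
)
proposal.approve("Jack")
proposal.approve("Roger")
proposal.approve("Jack")  # approving twice adds nothing
blocked = not proposal.can_execute()

proposal.approve("Simon")
passed = proposal.can_execute()
```

With a threshold of three out of five, Jack and Roger cannot push the action through on their own. They need at least one signer from outside their faction, which is the kind of friction the island never had.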

The Future of AI Alignment: Ensuring the Island Stays Productive

As we move toward Artificial General Intelligence (AGI), the ending of Lord of the Flies becomes a mandatory case study for AI Safety researchers. We are essentially building “boys on an island”—autonomous models that we hope will behave rationally.

Reinforcement Learning from Human Feedback (RLHF) as a Savior

The naval officer is the ultimate embodiment of RLHF. Throughout the book, the boys were learning from a perverse reward signal, one that reinforced fear and violence. The officer provides a sudden, massive corrective signal. He reminds them of their “Initial Training Data”: the rules of British society and the expectations of civilized behavior.

For AI developers, the goal is to ensure that the “Naval Officer” (human values) is present in the system before the island catches fire. We cannot wait for the system to reach a state of total collapse before intervening. We must integrate the “end of the story” into the “beginning of the code.”

Scalable Oversight and the “Fire” Problem

In the end, the fire meant to destroy Ralph was what actually attracted the rescue ship. In a strange twist of tech-logic, the system’s failure (the fire) triggered the external monitor (the ship). In modern cloud computing, this is the job of “Observability” and alerting. A system that is failing should emit a “smoke signal” that alerts engineers to intervene. However, a well-designed system shouldn’t have to burn down its entire environment just to get an “Adult’s” attention.
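
One way to picture an early “smoke signal” is an alert that fires when a metric stays above a warning threshold for several consecutive samples, well before total failure. The function name, the threshold, and the error-rate figures below are made up for illustration and are not taken from any monitoring product.

```python
def first_alert(readings, warn_at, sustained=3):
    """Return the index at which readings have been at or above warn_at
    for `sustained` consecutive samples, or None if that never happens."""
    streak = 0
    for index, value in enumerate(readings):
        streak = streak + 1 if value >= warn_at else 0
        if streak >= sustained:
            return index
    return None


# Hypothetical error rates sampled over time; the last two are the "island on fire".
error_rates = [0.01, 0.02, 0.06, 0.07, 0.09, 0.40, 0.95]
alert_index = first_alert(error_rates, warn_at=0.05)
```

Here the alert fires at the fifth sample, while the error rate is still under ten percent. Requiring a sustained breach rather than a single spike is a common trade-off: it delays the alert slightly but avoids paging someone for every flicker.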

Conclusion: The Final Log-off

What happened at the end of Lord of the Flies was more than a rescue; it was a demonstration of the fragility of order in the face of systemic corruption. For those in the technology sector, the book serves as a cautionary tale about the importance of governance, the dangers of unaligned goals, and the necessity of robust, failsafe protocols.

As we build the digital islands of the future—be they social networks, AI ecosystems, or decentralized financial webs—we must remember that without a “Conch” that holds power and an “Officer” that ensures safety, the system will eventually set itself on fire. The “end” of the story is a reminder that in tech, as in life, the most important feature is not the ability to operate autonomously, but the ability to remain aligned with the civilization that created it. We must ensure that when the “Naval Officer” of history looks at our digital creations, he doesn’t find a scorched earth, but a thriving, well-governed network.
