Complex digital systems, from the silicon chips in our smartphones to the cloud servers powering artificial intelligence, rely on a shared language of symbols. One of the most widely used is “bar notation.” It looks like nothing more than a horizontal line placed over a variable, but it is the standard way to write logical negation in Boolean algebra and digital circuit design, the logical framework that allows computers to process binary information.

Understanding bar notation is essential for software engineers, hardware designers, and tech enthusiasts alike, as it represents the concept of logical inversion. This article explores the technical significance of bar notation, its application in modern hardware engineering, and its role in the documentation of complex digital systems.

The Fundamentals of Bar Notation in Computing

At its core, bar notation is a symbolic representation used to indicate the “complement” or the “NOT” operation in Boolean algebra. In a binary system, where every state is either a 1 (true) or a 0 (false), a bar over a value denotes the opposite state: the complement of 1 is 0, and the complement of 0 is 1.

Definition and Syntax

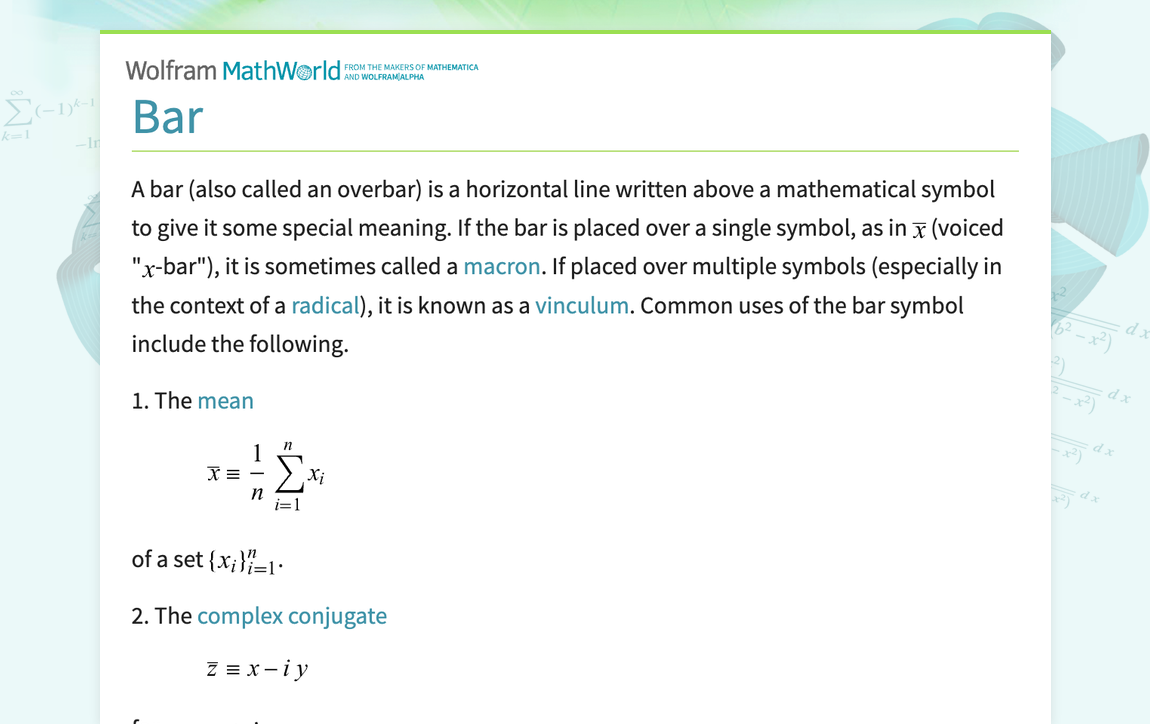

In technical documentation and mathematical logic, bar notation is expressed as a horizontal line drawn over a letter or a variable (e.g., $\bar{A}$). If the variable $A$ represents a high voltage or a “true” state, $\bar{A}$ (read as “A-bar” or “Not A”) represents a low voltage or a “false” state. This syntax is widely used across electrical engineering and computer science, alongside equivalent forms such as $\neg A$ and $A'$, and it provides a concise way to write the negation of a signal or of a logic gate’s output.

The Role of the Overline in Boolean Logic

Boolean logic is the mathematical foundation of all digital computing. Developed by George Boole in the mid-19th century, it was later applied to electronic switching circuits by Claude Shannon. In this framework, bar notation serves as one of the three primary operators, alongside the AND (conjunction) and OR (disjunction) operators. Without the ability to denote a logical inverse via bar notation, it would be impossible to build the complex decision-making trees required for modern software algorithms.

Applications in Digital Circuit Design and Hardware Engineering

In the realm of hardware, bar notation moves from the chalkboard to the silicon wafer. It is the primary tool used by engineers to map out how electrons should flow through transistors to perform calculations.

Logic Gates and Truth Tables

Every processor is built from logic gates, and a modern chip contains billions of the transistors that implement them. The most fundamental gate that utilizes bar notation is the NOT gate, also known as an inverter. When an engineer designs a circuit, they use truth tables to define the behavior of these gates. For a NOT gate, the truth table has only two rows: an input of $A = 0$ gives an output of $\bar{A} = 1$, and an input of $A = 1$ gives $\bar{A} = 0$.
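The inverter's truth table is small enough to generate directly. The following is a minimal Python sketch, assuming bits are modeled as the integers 0 and 1; the names `not_gate` and `truth_table` are illustrative and not taken from any library.

```python
def not_gate(a):
    """Return the complement (A-bar) of a single bit."""
    if a not in (0, 1):
        raise ValueError("input must be 0 or 1")
    return 1 - a


# Each row pairs an input A with its complement A-bar.
truth_table = [(a, not_gate(a)) for a in (0, 1)]

for a, a_bar in truth_table:
    print(f"A={a}  NOT A={a_bar}")
```

Applying the gate twice returns the original bit, which is the double-negation rule: the complement of $\bar{A}$ is $A$.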

However, the utility of bar notation expands significantly when applied to universal gates like NAND (Not-AND) and NOR (Not-OR). A NAND gate is written as $\overline{A \cdot B}$, which indicates that the output is “false” only if both inputs are “true.” These gates are called universal because any Boolean function can be built from NAND gates alone (or from NOR gates alone). The name also appears in NAND flash memory, whose cells are wired in series in an arrangement that resembles a NAND gate.
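To see what “universal” means in practice, the sketch below rebuilds NOT, AND, and OR using nothing but a NAND function. It is an illustration in Python with bits modeled as 0 and 1; the helper names are hypothetical, and real designs would express this in a hardware description language rather than software.

```python
def nand(a, b):
    """NAND: the complement of (A AND B)."""
    return 1 - (a & b)


def not_from_nand(a):
    # Tying both inputs together turns NAND into an inverter.
    return nand(a, a)


def and_from_nand(a, b):
    # Inverting a NAND output recovers AND.
    return not_from_nand(nand(a, b))


def or_from_nand(a, b):
    # By De Morgan's law, NAND of the two complements equals A OR B.
    return nand(not_from_nand(a), not_from_nand(b))


for a in (0, 1):
    for b in (0, 1):
        print(a, b, nand(a, b), and_from_nand(a, b), or_from_nand(a, b))
```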

De Morgan’s Laws and Circuit Optimization

One of the most powerful applications of bar notation in technology is its role in De Morgan’s Laws. These mathematical rules allow engineers to simplify complex logical expressions, which directly translates to reducing the number of transistors needed on a chip. The laws state:

- The complement of a product is equal to the sum of the complements: $\overline{A \cdot B} = \bar{A} + \bar{B}$

- The complement of a sum is equal to the product of the complements: $\overline{A + B} = \bar{A} \cdot \bar{B}$

By using bar notation to manipulate these equations, hardware designers can rewrite a circuit in terms of the gates that are cheapest to build, often reducing gate count, delay, and power consumption. For example, $\overline{A \cdot B}$ can be implemented as a single NAND gate or as two inverters feeding an OR gate, and the designer picks whichever form suits the target technology.
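Because each law involves only two binary variables, both can be confirmed by checking all four input combinations. The sketch below does this in Python; `bar` and `de_morgan_holds` are names chosen for this example, with `&` standing in for the Boolean product and `|` for the Boolean sum.

```python
from itertools import product


def bar(x):
    """Complement of a single bit, i.e. the overline."""
    return 1 - x


def de_morgan_holds():
    """Check both laws for every combination of A and B."""
    for a, b in product((0, 1), repeat=2):
        # Complement of a product equals the sum of the complements.
        if bar(a & b) != (bar(a) | bar(b)):
            return False
        # Complement of a sum equals the product of the complements.
        if bar(a | b) != (bar(a) & bar(b)):
            return False
    return True


print(de_morgan_holds())
```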

Bar Notation in Software Documentation and Programming

While bar notation is a physical reality in hardware, it remains a vital communication tool in the software layer, particularly in technical writing, digital security, and high-level programming documentation.

Using Bar Notation in LaTeX and Technical Writing

For developers and researchers writing technical papers or documentation for AI tools, representing bar notation accurately is crucial. The standard tool for this is LaTeX, a typesetting system used widely in academia and tech. Using the command \overline{A} (or \bar{A} for a single symbol), a researcher can clearly communicate a logical negation in a white paper. This clarity is vital when documenting “active-low” signals, which perform their function when the voltage is low. Schematics and datasheets often mark these signals with a bar over the name, and misreading the polarity can cause serious errors in hardware-software integration.
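As a short illustration of the typesetting, the LaTeX fragment below shows the two standard commands side by side. The signal name RESET is a hypothetical example of an active-low pin, not one taken from a specific datasheet.

```latex
% \bar suits a single symbol; \overline stretches across a whole expression.
$\bar{A}$ \qquad $\overline{A \cdot B} = \bar{A} + \bar{B}$

% A hypothetical active-low reset signal, as it might be labeled in documentation.
$\overline{\mathrm{RESET}}$
```

In plain-text contexts where an overline cannot be drawn, the same signal is often written with a suffix or prefix instead, such as RESET_N or nRESET, though conventions vary between vendors.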

Representation in Modern Programming Languages

In modern coding, an overline is difficult to type on a standard keyboard, so other symbols substitute for bar notation. In C-family languages (like C++, Java, and JavaScript), the exclamation point (!) serves as the logical NOT. In Python, the keyword not is used. For low-level systems work and bitwise operations, these languages use the tilde (~) as the bitwise NOT, which inverts every bit of an integer rather than a single truth value. Despite the change in symbol, the logical principle remains identical to the classical bar notation: invert the binary data.
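The difference between logical and bitwise inversion is easy to miss, so here is a small Python sketch of both. The variable names are illustrative. One detail that comes up in practice: Python integers have no fixed width, so `~` yields a negative number unless the result is masked to the width you care about (8 bits is assumed here).

```python
flag = True
inverted_flag = not flag        # logical NOT on a truth value -> False

value = 0b00001111              # 15
raw_inverse = ~value            # -16, because Python ints are unbounded
byte_inverse = ~value & 0xFF    # mask to 8 bits -> 0b11110000 (240)

print(inverted_flag, raw_inverse, format(byte_inverse, "08b"))
```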

Digital Security, Error Detection, and Data Integrity

The reach of bar notation extends into how we secure and transmit data across the internet. It plays a silent but essential role in ensuring that the files you download are not corrupted.

Checksums and Parity Bits

In data transmission, bar notation appears in the logic behind parity bits and checksums. These are extra bits added to a transmission to detect errors. A parity bit records whether the number of 1s in the data is even or odd, and some checksums, such as the 16-bit Internet checksum used by IP, TCP, and UDP, are stored as the ones' complement (the bitwise inverse) of a sum over the data. The receiver repeats the calculation, and if the result does not match what was sent, it knows that bits were flipped in transit, for example by electromagnetic interference. These checks catch accidental corruption; protecting data against deliberate tampering requires cryptographic methods.
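The sketch below shows both ideas in Python: an even-parity bit, and a 16-bit ones' complement checksum in the style of the Internet checksum, where the final step is a bitwise inversion. The function names and sample words are made up for illustration, and this is a simplified model rather than a complete protocol implementation.

```python
def even_parity_bit(bits):
    """Return the bit that makes the total number of 1s even."""
    return sum(bits) % 2


def parity_ok(bits_with_parity):
    """A frame passes the check if it contains an even number of 1s."""
    return sum(bits_with_parity) % 2 == 0


def ones_complement_checksum(words):
    """Sum 16-bit words with end-around carry, then invert the result."""
    total = 0
    for word in words:
        total += word
        total = (total & 0xFFFF) + (total >> 16)  # fold the carry back in
    return ~total & 0xFFFF                        # the "bar" step


data = [1, 0, 1, 1]
frame = data + [even_parity_bit(data)]
print(parity_ok(frame))

words = [0x1234, 0xABCD]
checksum = ones_complement_checksum(words)
# Including the checksum in the sum makes the receiver's result zero.
print(ones_complement_checksum(words + [checksum]) == 0)
```

A single flipped bit changes the parity and is detected, while two flipped bits cancel out, which is why parity is paired with stronger checks in practice.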

Cryptographic Logic

Encryption algorithms, which protect everything from personal messages to global financial transactions, rely heavily on XOR (Exclusive OR) operations, and bar notation gives cryptographers a compact way to write complements such as XNOR, $\overline{A \oplus B}$, when mapping out these logic paths. XOR is useful because it is its own inverse: applying the same key a second time restores the original data. Inversion alone provides no secrecy, however. The strength of a cipher comes from the secrecy of the key and the design of the algorithm, which make an attack computationally infeasible rather than strictly impossible.
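The two operations mentioned here, XOR and its complement, can be sketched in a few lines of Python. This is a toy illustration only and should not be used to protect real data; the function names, the message, and the key are all made up for the example.

```python
def xor_bytes(data, key):
    """XOR each data byte with the key byte at the same position."""
    if len(key) < len(data):
        raise ValueError("key must be at least as long as the data")
    return bytes(d ^ k for d, k in zip(data, key))


def xnor_bit(a, b):
    """XNOR: the complement of (A XOR B) for single bits."""
    return 1 - (a ^ b)


message = b"bar"
key = bytes([0x5A, 0xC3, 0x0F])      # illustrative key, not a secure one

scrambled = xor_bytes(message, key)
restored = xor_bytes(scrambled, key)  # the same operation undoes itself

print(restored == message)
```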

Future Trends: The Evolution of Notation in Quantum Computing

As we move toward the era of quantum computing, the way we use and represent logical notation is evolving, yet the foundation remains rooted in the principles of bar notation.

Expanding Notations for Qubits

In classical computing, a bit is either 0 or 1. In quantum computing, a qubit can exist in a superposition of both states. While traditional bar notation is used for binary inversion, quantum logic uses “Bra-Ket” notation (Dirac notation), and the quantum counterpart of the NOT gate is the Pauli-X gate, which swaps the amplitudes of $|0\rangle$ and $|1\rangle$. The overline does still appear in the underlying mathematics, but with a different meaning: $\bar{z}$ denotes the complex conjugate of a number $z$, and the conjugate transpose of a matrix, usually written with a dagger, is built from it. Tech professionals transitioning to quantum systems should treat this as a related but distinct use of the symbol rather than a logical complement.
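To make the comparison with classical inversion concrete, the sketch below models a single qubit as a list of two amplitudes and applies the Pauli-X gate, which is commonly described as the quantum analogue of NOT. It also computes a conjugate transpose by hand. This is plain Python with no quantum library; the helper names and the sample matrix are assumptions made for the example.

```python
def apply_gate(gate, state):
    """Multiply a square matrix (list of rows) by a state vector."""
    size = len(state)
    return [sum(gate[r][c] * state[c] for c in range(size))
            for r in range(len(gate))]


def conjugate_transpose(matrix):
    """Swap rows with columns and conjugate every entry."""
    rows, cols = len(matrix), len(matrix[0])
    return [[complex(matrix[r][c]).conjugate() for r in range(rows)]
            for c in range(cols)]


PAULI_X = [[0, 1],
           [1, 0]]
KET_0 = [1, 0]   # amplitudes for |0> and |1>
KET_1 = [0, 1]

print(apply_gate(PAULI_X, KET_0))          # the amplitudes trade places

sample = [[1 + 2j, 3j],
          [4, 5 - 1j]]
print(conjugate_transpose(sample))
```

Unlike a classical NOT, the gate acts on amplitudes, so a superposition such as `[0.6, 0.8]` simply has its two entries swapped.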

Symbolic Logic in AI and Machine Learning

In the development of neural networks, a bar also appears in the mathematical models that define “cost functions” and “backpropagation,” but here it usually denotes an average rather than a negation. When an AI tool “learns,” it is essentially measuring the difference between its current output and the desired result and adjusting its parameters to shrink that difference. Engineers write $\bar{x}$ for the mean of a set of values, for instance when normalizing the data sets that train these models. As AI continues to integrate into every gadget and app, it is worth reading the bar in context: a complement in logic, a mean in statistics.
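In statistical notation the bar most often marks a mean, written $\bar{x}$. The sketch below computes a mean, uses it to center a small data set, and evaluates a mean squared error as one common example of a cost function. The function names and sample numbers are illustrative and do not come from any particular framework.

```python
def mean(values):
    """x-bar: the arithmetic mean of a non-empty sequence."""
    return sum(values) / len(values)


def center(values):
    """Subtract the mean from every value, a basic normalization step."""
    x_bar = mean(values)
    return [v - x_bar for v in values]


def mean_squared_error(predicted, target):
    """Average squared difference between outputs and desired results."""
    if len(predicted) != len(target):
        raise ValueError("sequences must have the same length")
    return mean([(p - t) ** 2 for p, t in zip(predicted, target)])


samples = [2.0, 4.0, 6.0]
print(mean(samples))
print(center(samples))
print(mean_squared_error([1.0, 2.0, 3.0], [1.0, 2.0, 5.0]))
```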

Conclusion

Bar notation is far more than a relic of high school mathematics; it is the fundamental “switch” that enables the digital age. From the gate-level optimization of a CPU to the cryptographic protocols that secure the global web, the overline represents a critical logical operation: the power of negation.

For those working in the technology sector, mastering bar notation provides a deeper insight into how hardware and software interact. It allows for the simplification of complex circuits, the accurate documentation of technical systems, and the development of more efficient algorithms. As we push the boundaries of what is possible with silicon and move toward quantum frontiers, the humble bar notation will continue to be the shorthand for the logic that defines our digital world.
