Arithmetic is the branch of mathematics concerned with numbers and the basic operations on them. It’s the bedrock upon which more complex mathematical disciplines are built, and its principles are woven into our daily lives, even if we don’t always recognize them. Although arithmetic is often perceived as a basic subject taught in early schooling, a closer look reveals how much modern technology depends on it. Far from being a static concept, arithmetic continues to be a critical enabler of the digital world we inhabit.

The Enduring Pillars of Arithmetic

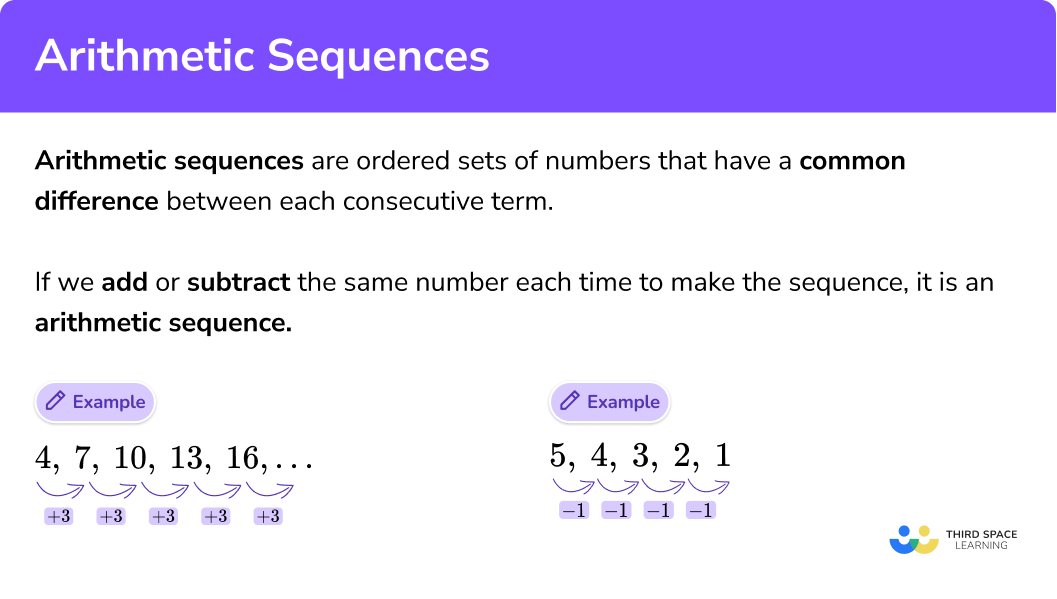

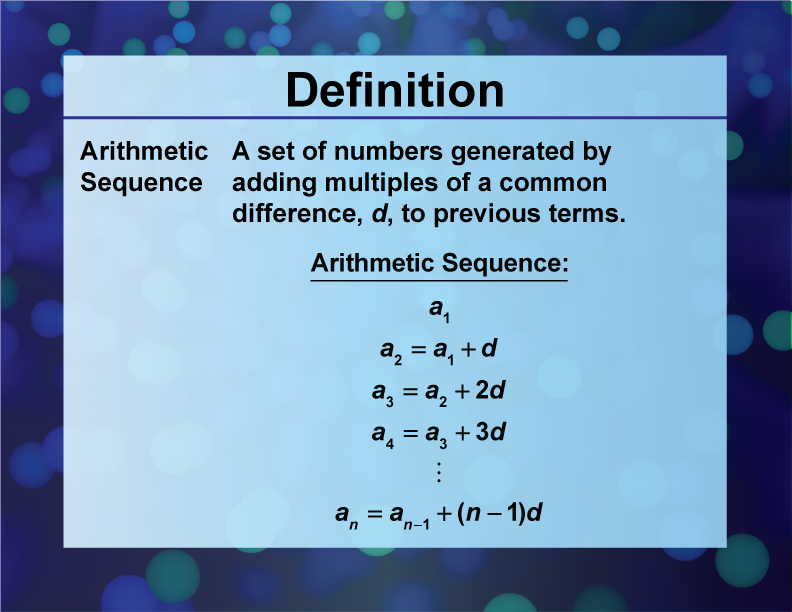

The foundational elements of arithmetic are the operations that allow us to combine and compare numbers. These operations, seemingly simple, are the building blocks for all numerical computation.

Addition: The Art of Combining

Addition is the process of finding the sum of two or more numbers. It’s the most intuitive of the arithmetic operations, representing the act of bringing quantities together. In the digital realm, addition is the literal act of aggregating data points, counting events, or summing up values in a ledger. Every time a server processes a request and increments a counter, or a database query returns a sum of records, addition is at play. This fundamental operation underlies the tracking of website traffic, the calculation of financial transactions within an application, and the aggregation of sensor data in an IoT device.

Subtraction: The Science of Difference

Subtraction is the inverse of addition, representing the act of taking away one quantity from another. It’s crucial for determining differences, calculating remaining quantities, and accounting for losses. In technology, subtraction is vital for tasks like calculating remaining battery life, determining the time elapsed between two events, or deducting stock from an inventory system. When a user makes a purchase, subtraction is used to update the available stock levels. Similarly, in network traffic analysis, subtracting an earlier byte-counter reading from a later one gives the amount of data transferred during that interval.

Multiplication: The Power of Repeated Addition

Multiplication is a shortcut for repeated addition. Instead of adding a number to itself multiple times, we multiply. This operation is indispensable for scaling operations, calculating areas, and determining compound effects. In technology, multiplication is frequently used in algorithms that deal with scaling factors, such as rendering graphics at different resolutions, calculating the total storage needed for a dataset of a certain size, or determining the total processing power required for a complex task. It’s also fundamental in algorithms that deal with rates, like calculating the total distance traveled given a constant speed over time.

Division: The Principle of Equitable Distribution

Division is the inverse of multiplication, representing the act of splitting a quantity into equal parts or determining how many times one number is contained within another. It’s essential for sharing, calculating averages, and determining ratios. In technology, division is used extensively for calculating averages (e.g., average response time of a server), determining data transfer rates (data divided by time), and in algorithms that distribute workloads across multiple processors. When a streaming service calculates how much data to buffer per second, or an operating system determines how to allocate CPU time, division plays a critical role.
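As a minimal sketch of the workload-distribution idea above, the following Python function splits a number of tasks across workers using integer division and the remainder. The function name `split_evenly` and the sample numbers are illustrative assumptions, not taken from any particular scheduler.

```python
def split_evenly(total_items, workers):
    """Split total_items across workers as evenly as whole numbers allow."""
    # divmod returns the quotient and the remainder in one step.
    base, extra = divmod(total_items, workers)
    # The first `extra` workers take one additional item each,
    # so no item is lost to rounding.
    return [base + 1 if i < extra else base for i in range(workers)]


shares = split_evenly(10, 3)
print(shares)       # [4, 3, 3]
print(sum(shares))  # 10
```

The remainder matters here: dividing 10 by 3 and rounding down would silently drop one task, while tracking the remainder keeps the total intact.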

Arithmetic in the Algorithmic Age

The true power of arithmetic in the modern technological landscape lies in its integration into algorithms. Algorithms are sets of rules or instructions that a computer follows to solve a problem or perform a task. Arithmetic operations are the fundamental commands within these algorithms, enabling everything from simple calculations to complex data analysis.

Data Processing and Analysis

At the heart of every data-driven application is arithmetic. Whether it’s a social media platform analyzing user engagement, a financial institution processing transactions, or a scientific research project crunching experimental data, arithmetic is the engine. Basic operations are used to clean, transform, and aggregate data. For instance, the mean is a sum divided by a count, the median comes from ordering the values and taking the middle one, and the mode comes from counting how often each value occurs. Standard deviation, a measure of data dispersion, is calculated using subtraction, squaring, addition, division, and a final square root. These statistical measures are crucial for understanding trends, identifying anomalies, and making informed decisions.
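To make the statistics above concrete, here is a small Python sketch that builds the mean, median, mode, and population standard deviation from basic operations. The function names and the sample dataset are assumptions chosen for illustration; Python's standard `statistics` module offers ready-made equivalents.

```python
import math
from collections import Counter


def mean(values):
    # Sum of the values divided by how many there are.
    return sum(values) / len(values)


def median(values):
    # Middle value of the sorted data (average of the two middle
    # values when the count is even).
    ordered = sorted(values)
    mid = len(ordered) // 2
    if len(ordered) % 2 == 1:
        return ordered[mid]
    return (ordered[mid - 1] + ordered[mid]) / 2


def mode(values):
    # Most frequent value; ties go to the value seen first.
    return Counter(values).most_common(1)[0][0]


def population_std_dev(values):
    # Subtract the mean, square, add up, divide, then take the square root.
    mu = mean(values)
    squared_diffs = [(v - mu) ** 2 for v in values]
    return math.sqrt(sum(squared_diffs) / len(values))


data = [2, 4, 4, 4, 5, 5, 7, 9]
print(mean(data))                # 5.0
print(median(data))              # 4.5
print(mode(data))                # 4
print(population_std_dev(data))  # 2.0
```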

Performance Metrics and Optimization

Understanding and optimizing the performance of technological systems relies heavily on arithmetic. Developers and engineers constantly measure metrics like response times, throughput, and error rates. Calculating these metrics involves arithmetic operations. For example, determining the average response time of a web server requires summing up individual response times and dividing by the number of requests. Optimizing algorithms often involves analyzing their computational complexity, which describes how the number of basic operations grows with the size of the input. An algorithm that needs fewer operations for the same input generally runs faster and consumes fewer resources.
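The point about operation counts can be illustrated with a small Python sketch that computes the sum 1 + 2 + ... + n in two ways and reports how many arithmetic operations each approach used. The function names and the hand-maintained counts are assumptions made for this example; they are not a profiling technique.

```python
def sum_by_loop(n):
    """Add the numbers 1..n one at a time; returns (total, additions used)."""
    total = 0
    additions = 0
    for k in range(1, n + 1):
        total += k
        additions += 1
    return total, additions


def sum_by_formula(n):
    """Use the closed form n * (n + 1) / 2; returns (total, operations used)."""
    # One addition, one multiplication, one division, regardless of n.
    return n * (n + 1) // 2, 3


print(sum_by_loop(1000))     # (500500, 1000)
print(sum_by_formula(1000))  # (500500, 3)
```

Both functions return the same total, but the loop's operation count grows in step with n while the formula's stays constant.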

Resource Management

Effective management of computational resources – such as CPU cycles, memory, and network bandwidth – is dependent on arithmetic. Operating systems use arithmetic to track resource allocation, monitor usage, and make scheduling decisions. For instance, when multiple applications are running concurrently, the operating system uses arithmetic to divide CPU time fairly among them. Similarly, network routers use arithmetic to calculate the best paths for data packets, considering factors like bandwidth and latency. The efficient utilization of these resources, driven by arithmetic calculations, directly impacts the speed, responsiveness, and overall stability of any digital system.
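As a rough illustration of dividing CPU time among applications, the Python sketch below hands out a scheduling period in proportion to per-application weights. This is a deliberately simplified model: the function name `cpu_shares`, the weights, and the 100 ms period are all assumptions, and real operating system schedulers are considerably more involved.

```python
def cpu_shares(weights, period_ms):
    """Divide period_ms of CPU time among tasks in proportion to their weights."""
    total_weight = sum(weights.values())
    # Each task receives period * (its weight / total weight).
    return {name: period_ms * w / total_weight for name, w in weights.items()}


# Hypothetical applications and weights, for illustration only.
shares = cpu_shares({"editor": 1, "browser": 2, "compiler": 1}, 100)
print(shares)  # {'editor': 25.0, 'browser': 50.0, 'compiler': 25.0}
```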

Advanced Arithmetic: The Foundation of Complexity

While basic arithmetic operations form the bedrock, more advanced arithmetic concepts are equally vital in the technological sphere, enabling sophisticated computations and intricate systems.

Number Systems and Representation

Computers operate using binary numbers (base-2), which consist of only 0s and 1s. Understanding how decimal numbers (base-10), the numbers we use daily, are converted to and from binary is a fundamental aspect of computer science. This conversion relies on place value: converting binary to decimal multiplies each digit by a power of two and adds the results, while converting decimal to binary repeatedly divides by two and collects the remainders. Other number systems, such as hexadecimal (base-16), are used in computing because each hexadecimal digit stands for exactly four binary digits, which makes long binary values far more compact to read and write. Manipulating numbers across these different bases requires a solid grasp of arithmetic.
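The conversions described above can be sketched in a few lines of Python. The helper names `to_binary` and `from_binary` are assumptions for this example and handle non-negative integers only; in practice Python's built-in `bin`, `int(text, 2)`, and `format` do the same job.

```python
def to_binary(n):
    """Convert a non-negative integer to a binary string by repeated division."""
    if n == 0:
        return "0"
    digits = []
    while n > 0:
        # The remainder is the next binary digit, least significant first.
        n, remainder = divmod(n, 2)
        digits.append(str(remainder))
    return "".join(reversed(digits))


def from_binary(bits):
    """Convert a binary string to an integer using place value."""
    value = 0
    for bit in bits:
        # Shifting one place left doubles the value; then add the new digit.
        value = value * 2 + int(bit)
    return value


print(to_binary(13))        # 1101
print(from_binary("1101"))  # 13
print(format(255, "x"))     # ff (hexadecimal, via the built-in formatter)
```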

Boolean Algebra and Logic Gates

Boolean algebra, a system of algebra in which the variables take on only two values, typically denoted as “true” and “false” (or 1 and 0), is fundamental to digital electronics and computer logic. The operations in Boolean algebra – AND, OR, NOT – have direct physical implementations in the form of logic gates within microprocessors. While not strictly arithmetic in the traditional sense of numbers, these operations are the digital equivalent of calculations. The combination of these logic gates, built upon Boolean algebra and underpinned by binary arithmetic, forms the foundation of all digital computation. Every decision made by a CPU, every piece of data processed, originates from these fundamental logical operations.
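To show how logic gates add up to arithmetic, the following Python sketch models AND, OR, and NOT as functions on the values 0 and 1, builds XOR and a full adder from them, and chains full adders into a ripple-carry adder. The function names and the least-significant-bit-first list layout are assumptions made for this illustration; it models the logic only, not real hardware.

```python
def AND(a, b):
    return a & b


def OR(a, b):
    return a | b


def NOT(a):
    return 1 - a


def XOR(a, b):
    # Built only from AND, OR, and NOT: true when exactly one input is 1.
    return OR(AND(a, NOT(b)), AND(NOT(a), b))


def full_adder(a, b, carry_in):
    """Add three bits; returns (sum_bit, carry_out)."""
    partial = XOR(a, b)
    sum_bit = XOR(partial, carry_in)
    carry_out = OR(AND(a, b), AND(partial, carry_in))
    return sum_bit, carry_out


def add_bits(x_bits, y_bits):
    """Ripple-carry add two equal-length bit lists, least significant bit first."""
    carry = 0
    result = []
    for a, b in zip(x_bits, y_bits):
        sum_bit, carry = full_adder(a, b, carry)
        result.append(sum_bit)
    result.append(carry)  # final carry becomes the top bit
    return result


# 5 is [1, 0, 1] and 3 is [1, 1, 0] when written least significant bit first.
print(add_bits([1, 0, 1], [1, 1, 0]))  # [0, 0, 0, 1], which is 8
```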

Cryptography and Security

Modern digital security and encryption techniques rely heavily on sophisticated arithmetic, particularly number theory. Algorithms like RSA, which help secure online transactions and communications, are based on the properties of prime numbers and modular arithmetic. Operations such as modular exponentiation, which raises a number to a power and keeps only the remainder after division by a modulus, are computationally intensive and require efficient arithmetic implementations. The security of RSA rests on the presumed difficulty of factoring the product of two large primes: multiplying the primes is easy, but no efficient method is known for recovering them from the product. A deep understanding of advanced arithmetic is therefore crucial both for designing secure systems and for analyzing how they might be broken.
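The sketch below shows modular exponentiation by the square-and-multiply method, followed by a toy RSA round trip. The function name `mod_exp` and the tiny textbook primes 61 and 53 are assumptions chosen so the numbers stay readable; real keys use primes hundreds of digits long, along with padding schemes that are omitted here. The call `pow(e, -1, phi)` for the modular inverse requires Python 3.8 or later.

```python
def mod_exp(base, exponent, modulus):
    """Compute (base ** exponent) % modulus by square-and-multiply."""
    result = 1 % modulus
    base %= modulus
    while exponent > 0:
        if exponent & 1:              # lowest bit set: multiply this power in
            result = (result * base) % modulus
        base = (base * base) % modulus  # square for the next bit
        exponent >>= 1
    return result


# Toy RSA parameters (far too small for real use).
p, q = 61, 53
n = p * q                  # 3233, the public modulus
phi = (p - 1) * (q - 1)    # 3120
e = 17                     # public exponent, coprime with phi
d = pow(e, -1, phi)        # private exponent: inverse of e modulo phi

message = 65
ciphertext = mod_exp(message, e, n)
recovered = mod_exp(ciphertext, d, n)
print(ciphertext)  # 2790
print(recovered)   # 65
```

Python's built-in three-argument `pow(base, exponent, modulus)` performs the same computation and would normally be used instead of a hand-written loop.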

The Ubiquitous Influence of Arithmetic in Technology

From the most basic smartphone app to the most advanced supercomputer, arithmetic is an invisible yet indispensable force. Its principles are embedded in the silicon chips that power our devices, the software that orchestrates their functions, and the networks that connect them.

Software Development and Engineering

Nearly every program a software engineer writes engages with arithmetic in some form. Whether it’s calculating loop iterations, managing memory addresses, or implementing complex algorithms, arithmetic operations are among the core instructions. Compilers and interpreters translate human-readable code into machine code, where arithmetic operations are precisely defined and executed by the hardware. The efficiency and correctness of software are directly tied to how effectively arithmetic is applied.

Hardware Design and Performance

The design of computer hardware itself is intrinsically linked to arithmetic. Microprocessors, the brains of our computers, contain circuits designed to perform arithmetic and logic operations at incredible speeds. Processor performance on numerical workloads is often quoted in FLOPS (floating-point operations per second), a metric that directly counts arithmetic throughput; clock frequency and instructions per second are other common measures. Engineers meticulously design these circuits to perform addition, subtraction, multiplication, and division with minimal latency and maximum efficiency.
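As a back-of-the-envelope illustration of the FLOPS metric, a processor's theoretical peak is commonly estimated by multiplying its core count, its clock frequency, and the number of floating-point operations each core can complete per cycle. The Python sketch below does exactly that; the function name and all three input figures are hypothetical, and sustained real-world throughput is typically well below such a peak.

```python
def peak_flops(cores, clock_hz, flops_per_cycle):
    """Theoretical peak floating-point operations per second."""
    return cores * clock_hz * flops_per_cycle


# Hypothetical processor: 8 cores at 3.0 GHz, 16 operations per cycle per core.
peak = peak_flops(cores=8, clock_hz=3.0e9, flops_per_cycle=16)
print(peak / 1e9)  # 384.0 (GFLOPS)
```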

Artificial Intelligence and Machine Learning

The burgeoning fields of Artificial Intelligence (AI) and Machine Learning (ML) are heavily reliant on arithmetic. Training sophisticated neural networks involves vast amounts of matrix multiplication and vector addition. Algorithms for pattern recognition, prediction, and decision-making are built upon complex mathematical models that leverage arithmetic at their core. The ability of AI systems to learn and adapt is a direct consequence of their capacity to perform an enormous number of arithmetic operations on massive datasets.
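The matrix multiplication and vector addition mentioned above can be seen in miniature in a single neural-network layer, which multiplies a weight matrix by an input vector and adds a bias vector. The Python sketch below uses plain lists and made-up weights purely for illustration; the function names are assumptions, activation functions are left out, and real systems rely on optimized numerical libraries and specialized hardware.

```python
def mat_vec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    # Each output entry is a row's entries times the inputs, summed.
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def vec_add(a, b):
    """Add two vectors element by element."""
    return [x + y for x, y in zip(a, b)]


def dense_layer(weights, bias, inputs):
    """One linear layer: weights times inputs, plus bias."""
    return vec_add(mat_vec(weights, inputs), bias)


# Hypothetical 3-by-2 weight matrix, bias, and 2-element input.
weights = [[1, 2], [3, 4], [0, -1]]
bias = [1, 0, 2]
inputs = [2, 1]
print(dense_layer(weights, bias, inputs))  # [5, 10, 1]
```

Even this tiny layer needs six multiplications and six additions; large networks repeat the same pattern billions of times, which is why arithmetic throughput dominates training cost.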

In conclusion, arithmetic is far more than just the elementary school subject we first encounter. It is the fundamental language of computation, the essential tool that empowers the entire technological ecosystem. Understanding its principles, from the basic operations to its advanced applications in cryptography and AI, provides crucial insight into how our digital world functions and continues to evolve. As technology advances, the role of arithmetic, though often unseen, will remain central to innovation and progress.
