In the realm of mathematics, the conversion of a simple fraction like 1/6 into a decimal seems like a middle-school exercise. However, when we transition this query into the world of technology, software engineering, and computational theory, it transforms into a fascinating study of precision, binary representation, and the limitations of digital hardware. To answer the question “What is 1/6 in decimal form?” we must first look at the raw mathematical output: 0.1666… (recurring).

But for a software developer, a data scientist, or an AI architect, this infinite string of sixes represents a challenge. How does a computer, a machine built on finite bits, store a number whose digits never end? How do modern apps ensure that 1/6 doesn’t lead to a catastrophic rounding error in a financial algorithm or a glitch in a 3D rendering engine? This article explores the technical nuances of converting 1/6 to decimal form and how modern technology manages the complexities of non-terminating numbers.

The Mathematical Foundation: Converting 1/6 to a Decimal

Before diving into the high-tech applications, we must establish the baseline mathematical truth. Converting a fraction to a decimal is achieved through long division, dividing the numerator (1) by the denominator (6).

The Division Process

When you divide 1 by 6, the process begins by recognizing that 6 does not go into 1. We add a decimal point and a zero, making it 10. Six goes into 10 once, leaving a remainder of 4. We add another zero, making it 40. Six goes into 40 six times (36), leaving a remainder of 4 again. This creates an infinite loop.

The resulting decimal is 0.16666666666…, where the digit 6 repeats indefinitely. In mathematical notation, this is often represented as 0.16 with a bar (vinculum) over the 6, indicating it is a recurring decimal.
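The long-division steps can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `long_division` is hypothetical, and it assumes a non-negative numerator and a positive denominator. It records each remainder it sees, so the moment a remainder repeats (the second 4 in the case of 1/6) it knows where the recurring block begins.

```python
def long_division(numerator: int, denominator: int) -> tuple[str, str, str]:
    """Return (integer part, non-repeating digits, repeating digits).

    Hypothetical helper; assumes numerator >= 0 and denominator > 0.
    """
    integer_part, remainder = divmod(numerator, denominator)
    digits = []
    seen = {}  # remainder -> position in `digits` where it first appeared

    while remainder != 0 and remainder not in seen:
        seen[remainder] = len(digits)
        remainder *= 10  # "add a zero"
        digit, remainder = divmod(remainder, denominator)
        digits.append(str(digit))

    if remainder == 0:  # the division ended: a terminating decimal
        return str(integer_part), "".join(digits), ""

    start = seen[remainder]  # the loop begins where this remainder first occurred
    return str(integer_part), "".join(digits[:start]), "".join(digits[start:])


whole, prefix, cycle = long_division(1, 6)
print(f"{whole}.{prefix}({cycle})")  # 0.1(6)
```

The parentheses in the printed result play the role of the vinculum: the digits inside them repeat forever.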

Identifying Repeating vs. Terminating Decimals

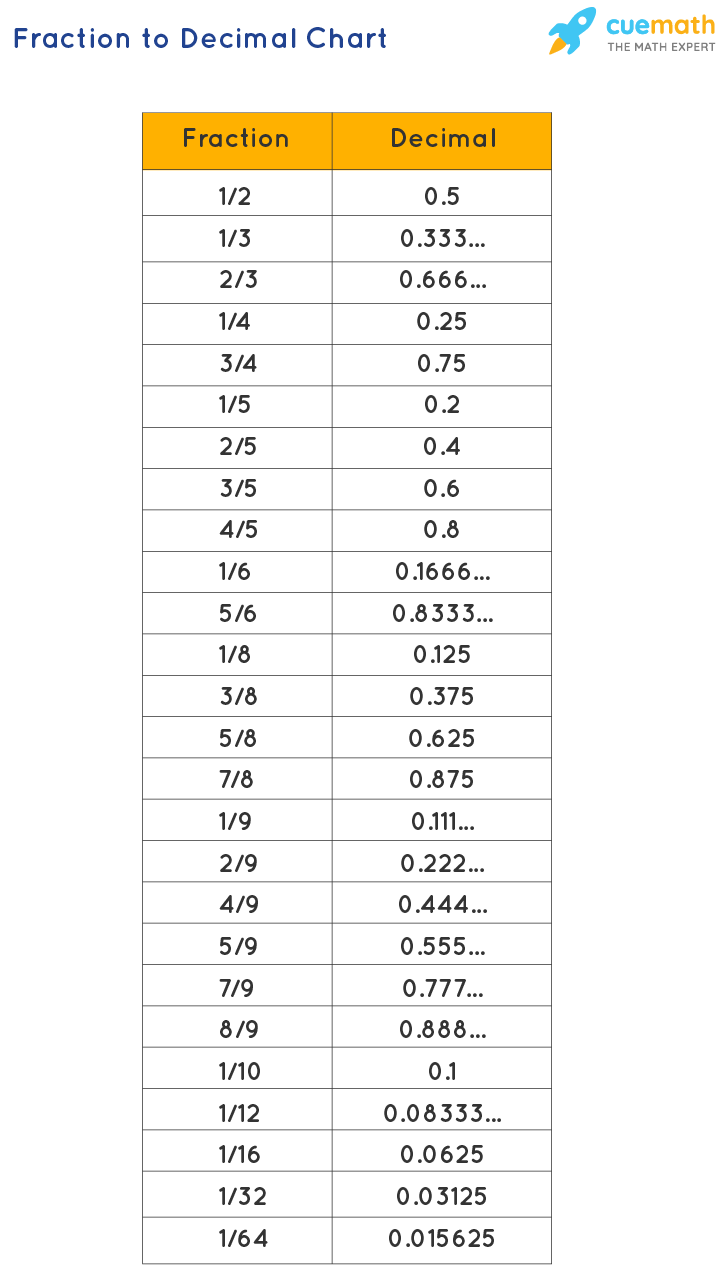

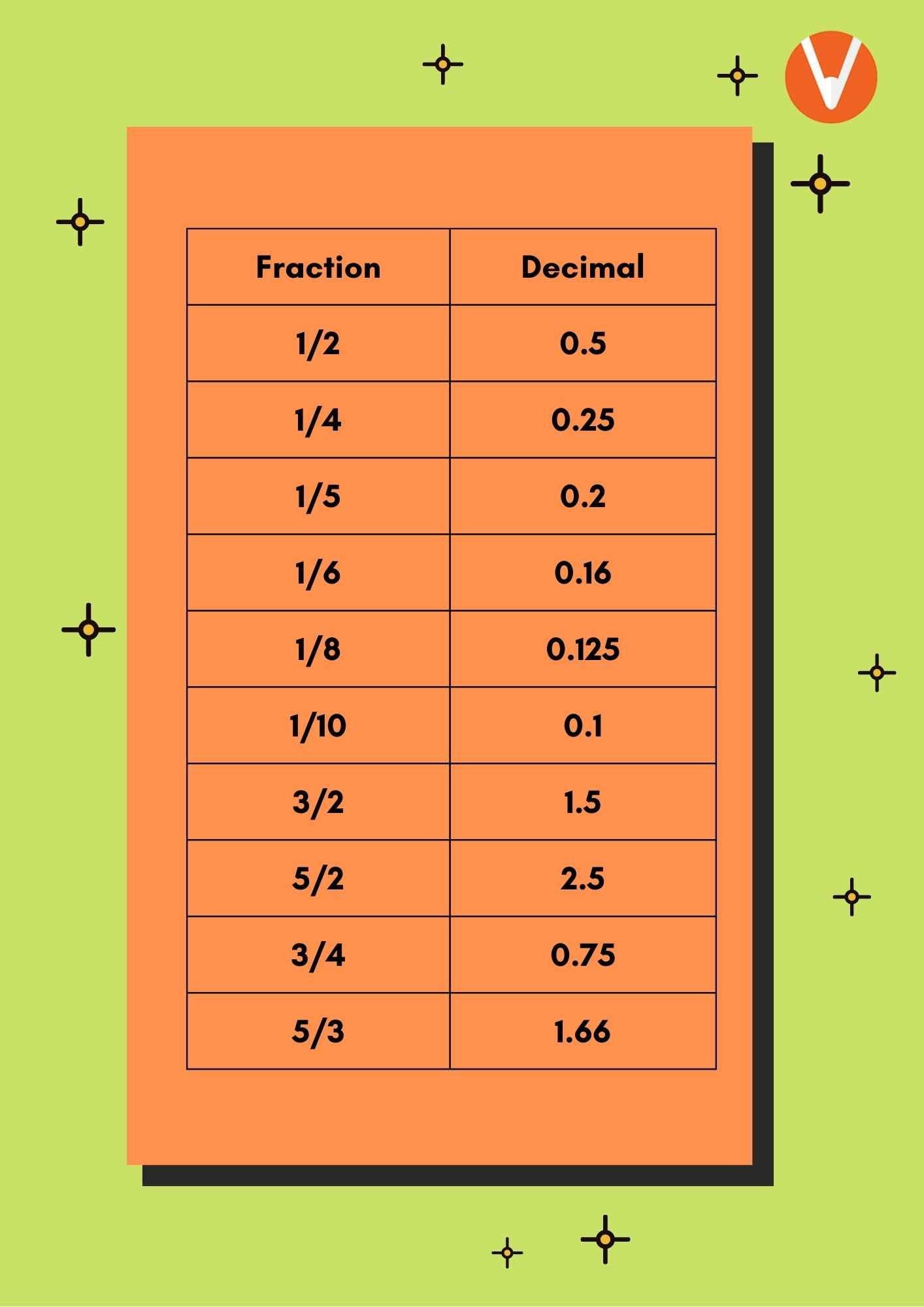

In technology, the distinction between a terminating decimal (like 1/5 = 0.2) and a repeating decimal (like 1/6) is critical. A fraction in lowest terms terminates in decimal only when its denominator has no prime factors other than 2 and 5; the factor of 3 in 6 is what makes 1/6 repeat. A terminating decimal can be written out in full, whereas a repeating decimal forces the software to make a decision: where do we cut it off? This “truncation” or “rounding” is the point where pure mathematics meets the practical constraints of computer science.
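Whether a fraction terminates can be checked without performing the division at all. The sketch below reduces the fraction, then strips from the denominator every prime factor it shares with the base; if nothing is left, the expansion terminates. The function name `terminates_in_base` and its `base` parameter are assumptions made for this illustration.

```python
from math import gcd


def terminates_in_base(numerator: int, denominator: int, base: int = 10) -> bool:
    """True if numerator/denominator has a finite expansion in `base`.

    Hypothetical helper; assumes denominator > 0 and base >= 2.
    """
    denominator //= gcd(numerator, denominator)  # reduce to lowest terms
    shared = gcd(denominator, base)
    while shared > 1:
        # Remove every factor the denominator has in common with the base.
        while denominator % shared == 0:
            denominator //= shared
        shared = gcd(denominator, base)
    return denominator == 1


print(terminates_in_base(1, 5))  # True:  1/5 = 0.2
print(terminates_in_base(1, 6))  # False: the factor 3 is not a factor of 10
```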

The Technology of Precision: How Software Handles Non-Terminating Decimals

Computers do not think in base-10 (decimal); they think in base-2 (binary). This creates a secondary layer of complexity when dealing with a fraction like 1/6. Even if a number terminates in decimal form, it might not terminate in binary, leading to what tech professionals call “floating-point errors.”

Floating-Point Arithmetic (IEEE 754)

Most modern software uses the IEEE 754 standard for floating-point arithmetic. This is the method by which processors (CPUs and GPUs) represent real numbers. When you input “1/6” into a JavaScript console or a Python script, the computer stores it as a “double-precision float.”

A double-precision float allocates 64 bits to a number: 1 bit for the sign, 11 bits for the exponent, and 52 bits for the mantissa (the significant digits; an implicit leading bit brings the effective precision to 53 bits). Because the binary expansion of 1/6 never terminates, the computer must round it to fit those bits. As a result, the value actually stored for 1/6 is 0.16666666666666665741…, slightly below the true value. This tiny discrepancy is the ghost in the machine that engineers must account for.
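The stored value can be inspected directly from Python using only the standard library. In this sketch, `Decimal(stored)` and `Fraction(stored)` both convert the double exactly, with no further rounding, so they reveal what is really in memory; the variable names are illustrative.

```python
from decimal import Decimal
from fractions import Fraction

stored = 1 / 6  # rounded to the nearest double-precision value

exact_decimal = Decimal(stored)         # exact decimal expansion of the stored bits
exact_ratio = Fraction(stored)          # the same value as an integer ratio
error = Fraction(1, 6) - exact_ratio    # how far the double falls short of 1/6

print(exact_decimal)   # begins 0.16666666666666665741...
print(stored.hex())    # 0x1.5555555555555p-3
print(error)           # 1/108086391056891904, i.e. 1 / (3 * 2**55)
```

The hexadecimal form shows the repeating binary pattern (each hex digit 5 is the bits 0101) cut off after 52 stored bits.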

The Binary Challenge: Why Computers Struggle with 1/6

In binary, a fraction has a terminating expansion only if its denominator, once the fraction is reduced to lowest terms, is a power of 2 (2, 4, 8, 16, etc.). Because the denominator of 1/6 is 6 (which has a prime factor of 3), it becomes a repeating sequence in binary as well.
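The same long-division idea works in base 2: instead of appending a zero (multiplying the remainder by 10), the remainder is doubled at each step. The helper below, whose name `fraction_digits` is hypothetical, lists the first few digits after the radix point and assumes a proper fraction (0 <= numerator < denominator).

```python
def fraction_digits(numerator: int, denominator: int, base: int, count: int) -> list[int]:
    """First `count` digits after the radix point of numerator/denominator in `base`.

    Hypothetical helper; assumes 0 <= numerator < denominator.
    """
    digits = []
    remainder = numerator
    for _ in range(count):
        remainder *= base  # shift one place to the left in the chosen base
        digit, remainder = divmod(remainder, denominator)
        digits.append(digit)
    return digits


print(fraction_digits(1, 6, 2, 8))  # [0, 0, 1, 0, 1, 0, 1, 0]  -> 0.00101010... repeats
print(fraction_digits(1, 8, 2, 4))  # [0, 0, 1, 0]              -> 0.001 terminates
```

For 1/6 the pair of bits 01 repeats forever after the first zero, which is why no finite number of bits can hold it exactly.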

This means that every time a piece of software performs a calculation with 1/6, it is technically working with an approximation. While this doesn’t matter when calculating a discount on a pair of shoes, it matters immensely in high-frequency trading, aerospace engineering, and cryptographic systems where precision is paramount.

Practical Applications in Modern Software Development

Technology doesn’t exist in a vacuum; it is applied to solve real-world problems. The way 1/6 is handled changes depending on the “stack” or the specific industry application.

Financial Tech (FinTech) and Rounding Errors

In the world of FinTech, 0.1666… can be a liability. If a bank is calculating interest that involves a divisor of six and they use standard floating-point numbers, those tiny rounding errors (often called “round-off noise”) can accumulate over millions of transactions.
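The accumulation effect can be measured by running the same sum twice, once in floating point and once in exact rational arithmetic. This is only a toy illustration: the count of 6,000 additions is an arbitrary stand-in for a stream of transactions, and real systems would see different magnitudes.

```python
from fractions import Fraction

N = 6000  # arbitrary number of additions, chosen for illustration

float_total = 0.0
exact_total = Fraction(0)
for _ in range(N):
    float_total += 1 / 6           # each addition may round
    exact_total += Fraction(1, 6)  # exact rational arithmetic, never rounds

# Fraction(float_total) converts the float exactly, so `drift` is the true error.
drift = Fraction(float_total) - exact_total

print(exact_total)   # 1000
print(float_total)   # close to 1000, but not guaranteed to equal it
print(float(drift))  # size of the accumulated rounding error
```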

To solve this, developers use specialized “Decimal” or “BigNum” libraries. For instance, Python’s decimal module provides decimal arithmetic with user-configurable precision, so the software can carry 1/6 to as many digits as the user specifies. Another option is to keep the value as an exact fraction (1/6) for as long as possible, for example with Python’s fractions module, and convert it to a decimal only at the very last step.
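Both approaches are available in Python’s standard library. The sketch below first computes 1/6 to 30 significant digits with `decimal`, then splits a hypothetical amount of 100 six ways using `fractions.Fraction` and rounds only at the end. The amount, the 30-digit precision, and the choice of banker’s rounding (`ROUND_HALF_EVEN`) are assumptions for illustration, not a recommendation for any particular financial system.

```python
from decimal import Decimal, localcontext, ROUND_HALF_EVEN
from fractions import Fraction

# Approach 1: decimal arithmetic with a chosen precision.
with localcontext() as ctx:
    ctx.prec = 30  # 30 significant digits
    sixth = Decimal(1) / Decimal(6)
print(sixth)  # 0.166666666666666666666666666667

# Approach 2: stay exact as a fraction, convert at the very last step.
share = Fraction(100, 6)  # hypothetical amount split six ways; stored exactly as 50/3
as_decimal = Decimal(share.numerator) / Decimal(share.denominator)
amount = as_decimal.quantize(Decimal("0.01"), rounding=ROUND_HALF_EVEN)
print(amount)  # 16.67
```

Deferring the conversion means only one rounding step occurs, and the rounding rule is stated explicitly instead of being left to the binary float format.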

Graphics, CAD, and Digital Design

In 3D modeling and Computer-Aided Design (CAD), precision is the difference between a functional machine part and a digital error. If a software tool needs to divide a circle into six equal segments, it relies on a floating-point approximation of 1/6.

Graphics cards (GPUs) are optimized for speed over absolute precision, often using “single-precision” (32-bit) floats to render frames at 60 or 120 frames per second. Engineers in this space must balance the visual “smoothness” of a 0.1666… slope with the hardware’s ability to calculate that position in real-time.
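The cost of dropping to single precision can be seen without a GPU. Python floats are doubles, so this sketch uses the standard `struct` module to round 1/6 to a 32-bit float and back; the helper name `to_float32` is hypothetical.

```python
import struct


def to_float32(x: float) -> float:
    """Round a Python float (a double) to the nearest 32-bit float."""
    return struct.unpack("<f", struct.pack("<f", x))[0]


double_value = 1 / 6
single_value = to_float32(double_value)

print(struct.pack(">f", double_value).hex())  # 3e2aaaab: the raw 32-bit pattern
print(single_value)                           # about 0.1666666716, off from the 9th decimal
print(single_value - double_value)            # about 5e-9, versus roughly 1e-17 for a double
```

With only 24 bits of precision, the single-precision value is good to about seven significant decimal digits, which is usually ample for a pixel position but not for a long chain of accumulated transforms.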

AI and Large Language Models (LLMs): How Algorithms Interpret Mathematical Queries

As we enter the era of Artificial Intelligence, the way machines “understand” the question “What is 1/6 in decimal form?” has shifted from simple calculation to neural processing.

Tokenization of Numbers

When you ask a Large Language Model (like the one generating this text) for the decimal of 1/6, it doesn’t necessarily pull out a calculator immediately. Instead, it processes the query through “tokens.” It recognizes the pattern of the question and retrieves the mathematical fact from its training data.

However, advanced AI models now use “Chain of Thought” reasoning or integrated code interpreters. This means the AI realizes that 1/6 is a recurring value and may actually run a hidden Python script to ensure the decimal it provides is accurate to a specific number of places, rather than just “guessing” based on language patterns.

Symbolic Math vs. Neural Processing

The next frontier in AI technology is the integration of symbolic AI with neural networks. Symbolic AI treats 1/6 as an object with rules, rather than just a string of digits. By treating 1/6 as a fraction first and a decimal second, AI tools can provide more “insightful” answers—explaining why the 6 repeats and how that affects further calculations in a complex equation.

Tools and Calculators for High-Accuracy Conversions

For users who require more than a simple “0.166,” several categories of digital tools exist to handle these conversions with professional-grade accuracy.

Arbitrary-Precision Arithmetic Libraries

Software libraries such as GMP (GNU Multiple Precision Arithmetic Library) are the gold standard for tech professionals. These tools allow for calculations to be performed to thousands of decimal places. If a scientist needs 1/6 calculated to 10,000 digits for a physics simulation, these libraries bypass the standard 64-bit limits of a traditional CPU.
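GMP itself is a C library, so as a stand-in this sketch uses Python’s built-in `decimal` module to show the same idea of software-defined precision. It is not GMP’s API. The precision is set to 10,000 significant digits and rounding is set to truncate (`ROUND_DOWN`), so every digit after the leading 1 is a 6.

```python
from decimal import Decimal, localcontext, ROUND_DOWN

DIGITS = 10_000  # number of significant digits requested

with localcontext() as ctx:
    ctx.prec = DIGITS
    ctx.rounding = ROUND_DOWN  # truncate instead of rounding the last digit up to 7
    sixth = Decimal(1) / Decimal(6)

text = str(sixth)
print(text[:12], "...", text[-4:])  # 0.1666666666 ... 6666
print(len(text) - 2)                # 10000 digits after the decimal point
```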

The Future of Digital Calculation: Quantum and Beyond

As we look toward the future, quantum computing offers a different perspective on numerical representation. While classical computers are binary, quantum bits (qubits) can exist in superpositions. While this doesn’t “change” the value of 1/6, it could fundamentally change how we run simulations involving irrational and repeating numbers, potentially allowing for simulations of infinite series that are currently too computationally expensive for standard hardware.

Conclusion: The Depth of a Simple Fraction

The journey from “1/6” to “0.1666…” is a testament to the complexity of the digital age. What starts as a simple division becomes a deep dive into the IEEE 754 standard, binary limitations, software library choices, and the cognitive architecture of AI.

In tech, 1/6 is more than just a number; it is a reminder that we live in a world of approximations. Whether you are a developer writing a line of code, an architect designing a skyscraper in CAD, or an investor tracking a fractional share, understanding how 1/6 translates into the decimal world is essential for maintaining precision in a digital landscape. The next time you see that repeating 6, remember that beneath the screen, your device is performing a complex balancing act between the infinite nature of math and the finite reality of silicon.
