In modern technology, where artificial intelligence and complex algorithms shape our daily digital interactions, it is easy to forget that these systems rest on basic mathematical principles. One such principle, the division of a fraction by another fraction, might seem like a relic of middle-school arithmetic, but in software development, data science, and computational logic it remains a useful operation. Understanding how to divide a fraction by a fraction is not just about moving numbers on a page; it is about understanding reciprocals, a concept that appears in areas ranging from graphics scaling to financial calculations.

As we move toward more sophisticated AI-driven tools, the ability to translate these manual mathematical steps into programmatic logic is what separates a basic user from a tech-savvy innovator. This guide explores the mechanics of fraction division through the lens of technology, software logic, and digital tools.

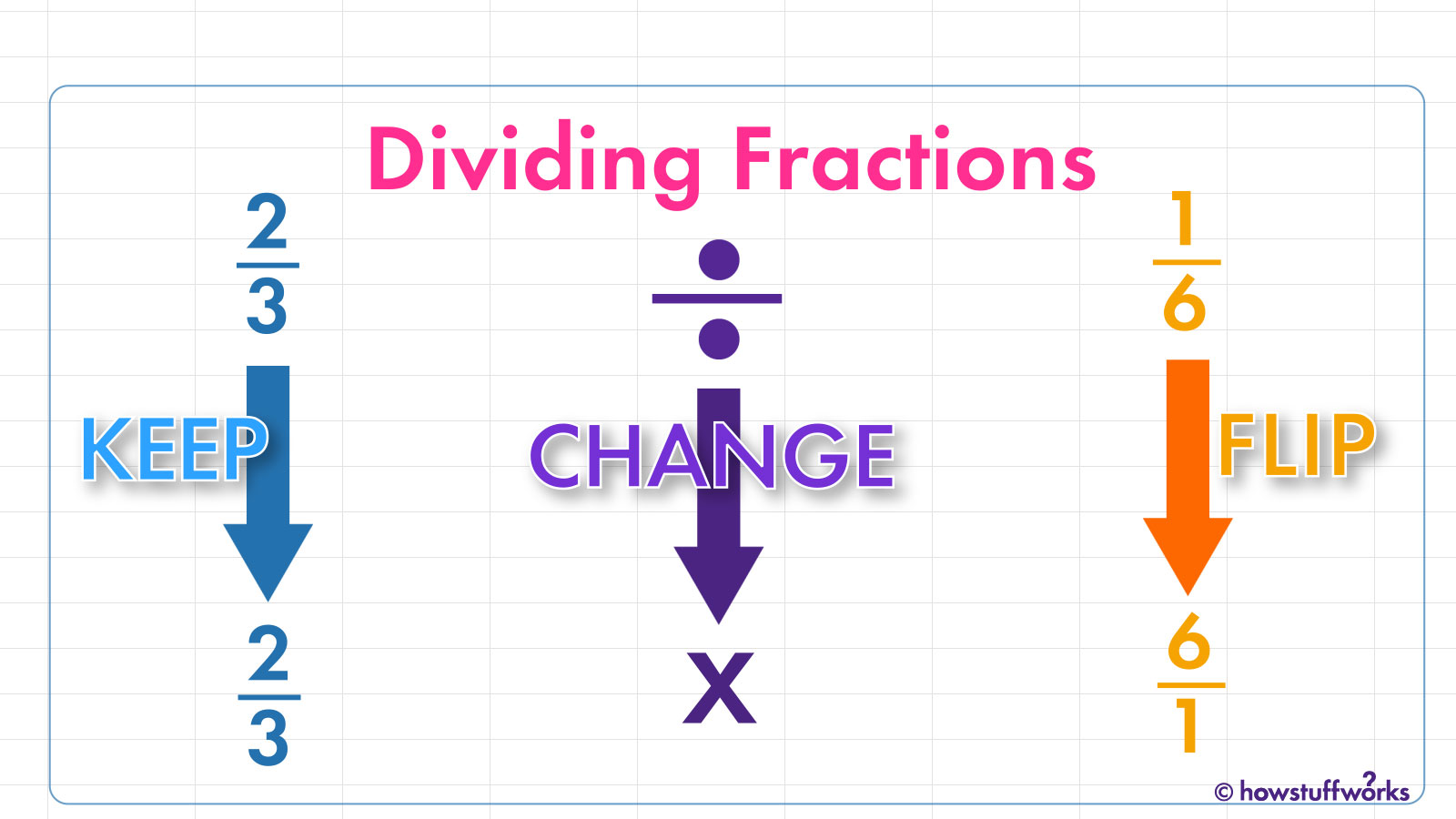

The Algorithmic Foundation: The “Keep-Change-Flip” Logic

To understand how software handles the division of fractions, we must first look at the underlying algorithm. In mathematics, this is commonly referred to as the “Keep-Change-Flip” (KCF) method. While a human sees this as a mnemonic device, a developer sees it as a sequence of logical operations.

The Mechanics of the Reciprocal

The core of dividing fractions lies in the concept of the reciprocal. To divide one fraction (the dividend) by another (the divisor), you multiply the dividend by the reciprocal of the divisor. In technical terms, the reciprocal is the multiplicative inverse. For any fraction $a/b$ with $a \neq 0$, its reciprocal is $b/a$; a zero fraction has no reciprocal, which is why division by zero is undefined.
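As a worked instance of the rule, here is the general identity followed by the same values used in the Python example later in this guide ($1/2$ divided by $3/4$). The symbols $c$ and $d$ are introduced here only to name the divisor's numerator and denominator.

$$
\frac{a}{b} \div \frac{c}{d} = \frac{a}{b} \times \frac{d}{c} = \frac{ad}{bc}, \qquad b, c, d \neq 0
$$

$$
\frac{1}{2} \div \frac{3}{4} = \frac{1}{2} \times \frac{4}{3} = \frac{4}{6} = \frac{2}{3}
$$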

When software works with fractions stored as pairs of integers, it does not need to “divide” in the traditional sense. Flipping the divisor is just a swap of two integers, after which the whole operation reduces to two integer multiplications. Division instructions are typically slower than multiplication on most processors, so avoiding them is a modest bonus; the main benefit is that the result stays exact instead of being rounded to a decimal approximation.

Step-by-Step Computational Logic

- Keep: The first fraction (the dividend) is left unchanged.

- Change: The operation symbol is switched from a division operator to a multiplication operator.

- Flip: The second fraction (the divisor) is inverted. The numerator becomes the denominator, and the denominator becomes the numerator.

- Process: The system performs two multiplication operations: numerator times numerator and denominator times denominator.

- Simplify: The resulting fraction is passed through a Greatest Common Divisor (GCD) algorithm to reach its simplest form.
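The five steps above can be sketched as a short Python function. This is a minimal illustration, not a reference implementation: the function name `divide_fractions` and the choice to represent each fraction as a `(numerator, denominator)` tuple are assumptions made for this example.

```python
from math import gcd


def divide_fractions(dividend, divisor):
    """Divide two fractions given as (numerator, denominator) tuples."""
    a, b = dividend          # Keep: the dividend a/b is used as-is
    c, d = divisor
    if b == 0 or d == 0:
        raise ValueError("denominators must be non-zero")
    if c == 0:
        raise ZeroDivisionError("cannot divide by a zero fraction")
    # Change + Flip: a/b divided by c/d becomes a/b times d/c
    # Process: multiply numerators together and denominators together
    numerator = a * d
    denominator = b * c
    # Simplify: divide both parts by their greatest common divisor
    common = gcd(numerator, denominator)
    numerator //= common
    denominator //= common
    # Normalise the sign so the denominator is always positive
    if denominator < 0:
        numerator, denominator = -numerator, -denominator
    return numerator, denominator


print(divide_fractions((1, 2), (3, 4)))  # (2, 3)
```

Checking for a zero numerator in the divisor before flipping matters: a zero fraction has no reciprocal, so the function raises an error instead of producing a zero denominator.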

Software Tools and AI-Driven Math Engines

We no longer have to rely solely on mental math. A range of software tools can now divide fractions instantly, and the technology behind them is considerably more involved than the arithmetic itself.

Natural Language Processing in WolframAlpha

WolframAlpha is perhaps the most prominent example of a computational intelligence engine. Unlike a standard calculator, it uses Natural Language Processing (NLP) to interpret a plain-English query about dividing one fraction by another. The engine parses the query, identifies the operands, and applies symbolic mathematics rather than just numerical approximation. This allows the software to provide not just the answer but also a step-by-step breakdown of the logic, which is useful for checking work or for teaching.

AI-Powered Visual Recognition Apps

Apps like Photomath and Google Lens have changed how we interact with mathematical problems. These tools use computer vision and neural networks to “see” a printed or handwritten fraction. The software identifies the characters, recognizes the division symbol, and maps the relationship between the numbers. Once the image has been converted into a digital expression, the app can apply the KCF logic described earlier, turning ink on paper into a worked solution.

The Role of LLMs in Mathematical Logic

Large Language Models (LLMs) like GPT-4 and specialized math models can now work through fraction problems using “Chain of Thought” reasoning. Instead of just outputting a result, these tools write out the intermediate steps, explaining the inversion of the divisor and the subsequent multiplication. This is a significant step for tech-based tutoring, as it provides context-aware assistance to developers and students alike.

Coding Fractions: Handling Rational Numbers in Modern Languages

For software engineers, simply knowing the rule isn’t enough; one must know how to implement it in code. Most programming languages represent numbers by default as integers or binary floating-point values. However, floating-point representation can lead to rounding errors: minuscule inaccuracies that can accumulate and undermine a high-precision project. To divide a fraction by a fraction exactly, developers use dedicated rational-number libraries.
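To make the floating-point issue concrete, the short sketch below uses only plain Python floats. The variable names are illustrative; the point is that a value such as 0.1 is stored as a nearby binary fraction, not as exactly one tenth.

```python
# Binary floating point cannot represent most decimal fractions exactly.
total = 0.1 + 0.2
print(total == 0.3)   # False
print(total)          # 0.30000000000000004

# as_integer_ratio() reveals the exact fraction the float 0.1 really stores:
# the denominator is a power of two, so the value is only close to 1/10.
numerator, denominator = (0.1).as_integer_ratio()
print(numerator, denominator)
```

Because the stored denominator is a power of two, no amount of extra precision makes the float equal to $1/10$; only a representation that keeps numerator and denominator as integers can do that.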

Python’s fractions Module

Python’s standard library includes a module specifically for rational number arithmetic. Instead of converting $1/3$ to $0.33333333$, which is only an approximation, Python’s fractions.Fraction class stores the numerator and denominator as distinct integers and keeps the fraction in lowest terms.

```python
from fractions import Fraction

# Dividing 1/2 by 3/4
dividend = Fraction(1, 2)
divisor = Fraction(3, 4)

result = dividend / divisor  # The language handles the "flip" internally
print(result)  # Output: 2/3
```

Here the mechanics of the division are abstracted away, allowing developers to focus on higher-level logic while the results remain exact rational numbers.
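A few edge cases are worth seeing alongside the basic example. The sketch below uses only the standard `fractions.Fraction` class; the specific values are chosen for illustration.

```python
from fractions import Fraction

# Fractions can be built from strings, and results stay exact.
result = Fraction("5/6") / Fraction("10/9")
print(result)  # 3/4

# Dividing by a whole number works too: the integer 2 is treated as 2/1.
half_of_result = result / 2
print(half_of_result)  # 3/8

# A zero divisor has no reciprocal, so Python raises ZeroDivisionError.
try:
    Fraction(1, 2) / Fraction(0, 1)
except ZeroDivisionError:
    print("division by a zero fraction is undefined")
```

Catching `ZeroDivisionError` explicitly is usually preferable to checking the divisor by hand, since it covers every path by which the divisor could end up as zero.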

JavaScript and BigInt for Precise Math

In web development, JavaScript has traditionally struggled with precision because its standard Number type is a double-precision float. With BigInt, which provides arbitrary-precision integers, and libraries like Fraction.js, developers can build financial and scientific calculators that represent fractions as integer pairs and divide them without floating-point rounding errors. This matters for “EdTech” platforms that need exact answers when teaching users how to divide fractions.

The Importance of the GCD Algorithm

When a developer writes a function to divide fractions, they should also include a “simplification” step. This is commonly done using the Euclidean algorithm to find the Greatest Common Divisor (GCD) of the numerator and denominator, and then dividing both by it. If the raw output is $4/8$, the GCD is $4$, so the software reduces the result to $1/2$ before presenting it to the user. This kind of automated refinement is a hallmark of high-quality software.
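The simplification step can be sketched in a few lines. The function names `euclid_gcd` and `simplify` are hypothetical, chosen for this example; in production Python code, `math.gcd` from the standard library does the same job.

```python
def euclid_gcd(a, b):
    """Greatest common divisor via the Euclidean algorithm."""
    a, b = abs(a), abs(b)
    while b:
        a, b = b, a % b   # replace (a, b) with (b, a mod b) until b is 0
    return a


def simplify(numerator, denominator):
    """Reduce numerator/denominator to lowest terms."""
    if denominator == 0:
        raise ZeroDivisionError("denominator must be non-zero")
    common = euclid_gcd(numerator, denominator)
    return numerator // common, denominator // common


print(euclid_gcd(4, 8))  # 4
print(simplify(4, 8))    # (1, 2)
```

The loop works because any common divisor of `a` and `b` also divides `a % b`, so each iteration shrinks the numbers without changing their GCD.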

Real-World Applications in High-Tech Industries

Why does the tech world care so much about dividing fractions? It isn’t just for classroom exercises. Precise fractional division is a cornerstone of several high-stakes industries.

Computer Graphics and CAD Software

In Computer-Aided Design (CAD) and 3D rendering, objects are often scaled using fractional ratios. If a designer needs to scale a component by a fraction relative to another fractional measurement, the software effectively performs “fraction by fraction” division, often many times per second. Most rendering pipelines do this in floating point for speed, but the logic is the same, and an error in the reciprocal step could produce a visible “glitch” or a misalignment in a digital blueprint.
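As a small illustration of scaling by fractional ratios, the sketch below computes a scale factor from two fractional measurements. The measurements and variable names are hypothetical, and it uses exact `Fraction` arithmetic for clarity even though real CAD engines generally work in floating point.

```python
from fractions import Fraction

# Hypothetical measurements, in inches, chosen only for illustration.
current_width = Fraction(3, 4)
target_width = Fraction(5, 8)

# The scale factor is one fractional measurement divided by another.
scale = target_width / current_width
print(scale)  # 5/6

# Applying the factor reproduces the target exactly, with no drift.
print(current_width * scale == target_width)  # True
```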

Data Science and Probability

Data scientists frequently work with probabilities, which are essentially fractions. Calculating a conditional probability means dividing one probability by another: $P(A \mid B) = P(A \cap B) / P(B)$, the relationship that underlies Bayes’ Theorem. In a machine learning context, these calculations help determine the likelihood of an outcome. The software must perform them quickly and with well-controlled numerical error for the model to remain reliable.
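A conditional probability is a direct case of dividing one fraction by another: the probability of A given B is the probability of both occurring divided by the probability of B. The sketch below uses made-up counts for a toy spam filter; the numbers and variable names are assumptions for illustration only.

```python
from fractions import Fraction

# Hypothetical counts: 200 emails, 50 contain the word "offer",
# and 40 are both spam and contain "offer".
total_emails = 200
p_offer = Fraction(50, total_emails)            # 1/4
p_spam_and_offer = Fraction(40, total_emails)   # 1/5

# P(spam | offer) = P(spam and offer) / P(offer)
p_spam_given_offer = p_spam_and_offer / p_offer
print(p_spam_given_offer)         # 4/5
print(float(p_spam_given_offer))  # 0.8
```

Keeping the probabilities as fractions until the final step avoids rounding in the intermediate values; converting to a float is only needed for display or for feeding a numerical library.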

Cryptography and Digital Security

Much of modern cryptography relies on modular arithmetic. While the math used in encryption is far more advanced than simple fractions, the idea of a multiplicative inverse carries over directly: “dividing” by a number modulo $n$ means multiplying by its modular inverse, just as dividing by a fraction means multiplying by its reciprocal. Security protocols build on operations like these to ensure that data can be encrypted and decrypted only by those with the correct “key.”
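The parallel between reciprocals and modular inverses can be seen in a few lines. This is a toy sketch with a small modulus chosen for readability, not a cryptographic routine; it assumes Python 3.8 or later, where the built-in `pow` accepts a negative exponent together with a modulus.

```python
modulus = 17
value = 5

# pow(value, -1, modulus) returns the modular inverse (Python 3.8+).
inverse = pow(value, -1, modulus)
print(inverse)                       # 7
print((value * inverse) % modulus)   # 1

# "Dividing" 3 by 5 modulo 17 means multiplying 3 by the inverse of 5.
quotient = (3 * inverse) % modulus
print(quotient)                      # 4
```

Just as $a/b$ has a reciprocal only when $a \neq 0$, a value has a modular inverse only when it shares no common factor with the modulus; `pow` raises `ValueError` otherwise.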

The Future of Computational Mathematics

As we look toward the future, the way we divide fractions will continue to evolve alongside our technology. We are moving away from manual calculation and toward “intent-based” computing.

Quantum Computing and Rational Logic

Quantum computers operate on qubits, which can represent superpositions of states. Classical binary computers handle fractions through software libraries; whether quantum algorithms will offer new ways to process rational numbers remains speculative, though they may eventually help with problems that involve very large numbers of fractional divisions.

The Integration of AR and Math

Augmented Reality (AR) is set to change how we learn and apply math. Imagine wearing a pair of smart glasses while working on a mechanical engineering project. As you look at two different gear ratios (expressed as fractions), the AR software could overlay the division calculation in real-time, showing you exactly how one fraction relates to the other.

Conclusion: The Tech Behind the Math

Understanding how to divide a fraction by a fraction is a journey from simple classroom rules to the complex world of software architecture. Whether it is through the “Keep-Change-Flip” algorithm, Python’s fractions module, or AI-driven computational engines, the technology remains rooted in the same logical truth: dividing by a fraction means multiplying by its reciprocal. For the modern professional, mastering this logic is not just about getting the right answer; it is about understanding the digital language that builds our world. As software continues to eat the world, the fractions that make up its code must be divided, multiplied, and simplified with care.