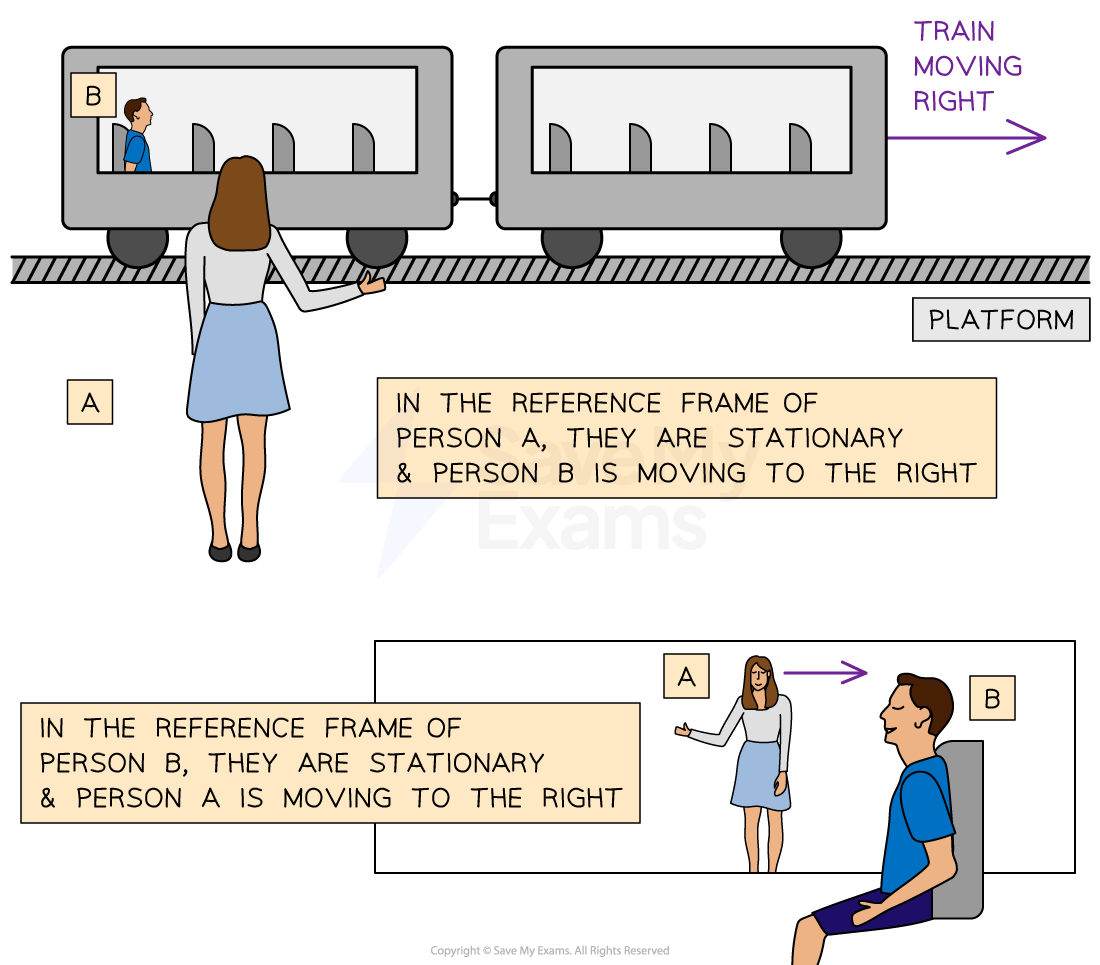

In the realm of classical physics, an inertial frame of reference is a coordinate system that is not accelerating. It is a frame in which Newton’s First Law, the law of inertia, holds: an object at rest stays at rest, and an object in motion keeps moving in a straight line at constant speed unless acted upon by a net external force. While this might sound like a theoretical concept reserved for textbooks, it has become the invisible backbone of the 21st-century tech stack. From the smartphone in your pocket to the autonomous drones patrolling agricultural fields and the guidance systems of SpaceX rockets, the inertial frame is the fundamental mathematical anchor that allows technology to interact with the physical world.

Understanding the inertial frame of reference in a technological context is no longer just about understanding gravity or velocity; it is about “sensor fusion,” digital precision, and the ability of artificial intelligence to map its own existence within a three-dimensional space.

The Hardware Perspective: MEMS and the Digitalization of Motion

To translate the abstract concept of an inertial frame into functional technology, engineers rely on Inertial Measurement Units (IMUs). In consumer devices these are usually built from micro-electromechanical systems (MEMS), tiny sensors fabricated in silicon that act as the sensory organs for hardware. Without a stable frame of reference, a device has no way of knowing where “up” is or how fast it is turning.

Defining the Baseline for Motion Sensors

In consumer tech, the device’s reference frame is established by combining accelerometers and gyroscopes. A three-axis accelerometer measures acceleration along the X, Y, and Z axes, but what it actually senses is specific force, that is, acceleration relative to free fall. A phone lying still on a table therefore registers about 9.81 m/s² along the axis facing up, opposite to gravity. The device uses this measured gravity vector as its reference for “down,” and by checking how that vector is split across its own axes, your smartphone knows when you’ve rotated it from portrait to landscape mode. Strictly speaking, a frame fixed to the Earth’s surface is not inertial, since it rotates with the planet, but for a handheld device the approximation is good enough that the software effectively builds a local reference frame around the gravity vector.
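As a minimal sketch of that idea, assume the accelerometer reports the gravity reaction in m/s² with +Z pointing out of the screen, +Y toward the top edge, and +X toward the right edge (a common but not universal convention). The screen’s tilt and a portrait/landscape decision can then be derived from the gravity vector alone. The function names and the “larger in-screen axis wins” rule are hypothetical illustrations, not any platform’s actual API.

```python
import math

def screen_tilt_deg(ax: float, ay: float, az: float) -> float:
    """Angle (degrees) between the screen normal (+Z) and the measured 'up' direction."""
    g = math.sqrt(ax * ax + ay * ay + az * az)
    if g < 1e-6:
        # In free fall the accelerometer reads ~0, so there is no gravity reference.
        raise ValueError("no gravity reference (device may be in free fall)")
    cos_tilt = max(-1.0, min(1.0, az / g))  # clamp against rounding error
    return math.degrees(math.acos(cos_tilt))

def screen_orientation(ax: float, ay: float) -> str:
    """Hypothetical rule: whichever in-screen axis carries more of gravity decides."""
    return "portrait" if abs(ay) >= abs(ax) else "landscape"

print(screen_tilt_deg(0.0, 0.0, 9.81))  # lying flat, screen up: about 0 degrees
print(screen_tilt_deg(0.0, 9.81, 0.0))  # standing upright: about 90 degrees
print(screen_orientation(0.3, 9.7))     # held upright
print(screen_orientation(9.7, 0.4))     # turned on its side
```

The free-fall guard reflects a real limitation: with no gravity to measure, the accelerometer alone cannot tell which way is down.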

The Role of Gyroscopes and Magnetometers

While accelerometers sense linear acceleration, gyroscopes measure angular velocity, the rate of rotation. Orientation is obtained by integrating that rate over time, so even a tiny gyroscope bias accumulates into a steadily growing error known as “drift.” The accelerometer’s gravity vector can correct drift in pitch and roll, but not in heading (yaw), because rotating about the vertical axis does not change the direction of gravity. To lock the heading as well, high-end tech like VR headsets or professional cinematography gimbals adds a third component: the magnetometer. By measuring the Earth’s magnetic field, the magnetometer provides a North-referenced heading. Because each of the three sensors measures along three axes, combining them is known as “9-axis sensor fusion.” The fused estimate stays stable even when the user moves erratically, limiting the drift that often plagues lower-quality motion tracking.
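A full 9-axis fusion filter is too long for a short example, so the sketch below swaps in a simpler, well-known technique: a one-axis complementary filter that blends the integrated gyro rate (trusted over short intervals) with the accelerometer’s tilt angle (trusted over long intervals). It covers roll only, ignores the magnetometer, and the blend weight `alpha = 0.98` and the simulated gyro bias are illustrative assumptions rather than tuned values.

```python
import math

def complementary_roll(gyro_rates, accel_samples, dt, alpha=0.98):
    """Estimate roll (rad) from gyro rates (rad/s) and accelerometer (ax, ay, az) samples."""
    roll = 0.0
    history = []
    for rate, (ax, ay, az) in zip(gyro_rates, accel_samples):
        gyro_roll = roll + rate * dt        # short term: integrate angular velocity
        accel_roll = math.atan2(ay, az)     # long term: tilt implied by gravity
        roll = alpha * gyro_roll + (1.0 - alpha) * accel_roll
        history.append(roll)
    return history

# Stationary, level device whose gyro has an assumed constant 0.01 rad/s bias.
dt, steps, bias = 0.01, 10_000, 0.01
gyro = [bias] * steps
accel = [(0.0, 0.0, 9.81)] * steps

naive = sum(rate * dt for rate in gyro)          # gyro-only integration
fused = complementary_roll(gyro, accel, dt)[-1]  # complementary filter
print(f"gyro only: {naive:.3f} rad, fused: {fused:.4f} rad")
```

After 100 simulated seconds the gyro-only angle has drifted by about 1 rad (roughly 57 degrees), while the fused estimate settles near 0.005 rad, because the accelerometer term keeps pulling it back toward the gravity-defined level.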

Autonomous Systems and the Quest for Absolute Positioning

For autonomous vehicles (AVs) and robotics, the inertial frame of reference is a matter of safety and operational viability. A self-driving car must distinguish between its own movement and the movement of the world around it. If the car’s internal “frame” is slightly misaligned with the “global frame” of the road, the result could be catastrophic.

SLAM: Simultaneous Localization and Mapping

One of the most significant breakthroughs in robotics is SLAM. In this process, a robot enters an unknown environment and must build a map of that environment while simultaneously tracking its own location within it. Here the reference frame is not given in advance: it is typically anchored to the robot’s starting pose and refined as the map grows. The robot uses LIDAR and cameras to identify “landmarks,” but it relies on its internal inertial sensors to fill the gaps between visual frames. If the cameras are blinded by glare or the LIDAR is obscured by heavy rain, the robot falls back on its inertial sensors, estimating its position from its last known position, velocity, and measured acceleration. This is often referred to as “dead reckoning,” and because it integrates sensor errors along with the motion, its accuracy degrades the longer it runs without an external fix.
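The dead-reckoning step can be sketched as repeated integration: acceleration into velocity, then velocity into position. This minimal 2D version assumes the accelerations have already been rotated into a fixed world frame with gravity removed, which a real system would do using its orientation estimate; the function name and the simulated bias are illustrative.

```python
def dead_reckon(position, velocity, accels, dt):
    """Propagate 2D position (m) and velocity (m/s) from world-frame accelerations (m/s^2)."""
    x, y = position
    vx, vy = velocity
    for ax, ay in accels:
        vx += ax * dt  # integrate acceleration into velocity
        vy += ay * dt
        x += vx * dt   # integrate the updated velocity into position (semi-implicit Euler)
        y += vy * dt
    return (x, y), (vx, vy)

dt = 0.1
# Coasting at 2 m/s for 5 s with no measured acceleration.
pos, vel = dead_reckon((0.0, 0.0), (2.0, 0.0), [(0.0, 0.0)] * 50, dt)
print(pos, vel)

# Standing still for 10 s, but the accelerometer has an assumed 0.05 m/s^2 bias on Y.
pos_b, _ = dead_reckon((0.0, 0.0), (0.0, 0.0), [(0.0, 0.05)] * 100, dt)
print(f"false displacement after 10 s: {pos_b[1]:.2f} m")
```

The second call shows why dead reckoning needs periodic correction: a constant acceleration bias produces a position error that grows with the square of time (about ½·b·t², roughly 2.5 m in this case).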

Correcting for Non-Inertial Noise in Real-World Environments

The real world is rarely a perfect inertial frame. Roads have bumps, the Earth rotates, and engine vibrations add “noise” to the data. Modern automotive tech uses estimation algorithms, such as the Kalman filter, to suppress this noise. A Kalman filter is a recursive algorithm that predicts the next state of a system (for example, its position and velocity) from the current estimate and a motion model, then corrects that prediction with new sensor measurements, weighting each according to its estimated uncertainty. This gives the vehicle a smooth, jitter-free estimate of its trajectory, allowing for the micro-adjustments in steering and braking that make autonomous transit possible.
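The predict-then-correct cycle is easiest to see in one dimension. In this minimal sketch, assumed for illustration, the state is a single position, the prediction uses a velocity supplied by the inertial sensors, and a noisy position fix (think GPS) provides the correction. The noise values `q` and `r` and the simulated data are made up; a real vehicle filter tracks a multi-dimensional state using matrices.

```python
import math
import random

def kalman_1d(measurements, velocities, dt, q, r, x0=0.0, p0=1.0):
    """Scalar Kalman filter: predict with a velocity input, correct with position fixes."""
    x, p = x0, p0
    estimates = []
    for z, v in zip(measurements, velocities):
        # Predict: move the estimate forward using the inertially derived velocity.
        x = x + v * dt
        p = p + q                 # process noise q inflates the uncertainty
        # Correct: blend in the measurement according to the Kalman gain.
        k = p / (p + r)           # r is the measurement noise variance
        x = x + k * (z - x)
        p = (1.0 - k) * p
        estimates.append(x)
    return estimates

def rms(errors):
    return math.sqrt(sum(e * e for e in errors) / len(errors))

rng = random.Random(42)
dt, steps, speed, sigma = 0.1, 200, 1.0, 0.5
truth = [speed * dt * (i + 1) for i in range(steps)]
noisy = [t + rng.gauss(0.0, sigma) for t in truth]
est = kalman_1d(noisy, [speed] * steps, dt, q=0.01, r=sigma**2)

raw_err = rms([z - t for z, t in zip(noisy, truth)])
kf_err = rms([e - t for e, t in zip(est, truth)])
print(f"raw RMS error: {raw_err:.3f} m, filtered RMS error: {kf_err:.3f} m")
```

The gain `k` is what makes the filter adaptive: when the prediction is uncertain (large `p`) it leans on the measurement, and when the measurement is noisy (large `r`) it leans on the prediction.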

Spatial Computing and the User’s Inertial Experience

With the rise of the Metaverse, Apple’s Vision Pro, and high-end gaming, the “inertial frame of reference” has moved from the vehicle to the human head. Spatial computing is essentially the technology of aligning a digital world with the user’s physical frame of reference so precisely that the brain cannot tell the difference.

Reducing Latency through Frame Synchronization

In Virtual Reality (VR), “motion-to-photon” latency is the enemy. This is the delay between your head moving (a change in your physical frame of reference) and the display showing the updated image. If the digital frame lags by even about 20 milliseconds, the mismatch between the inner ear’s vestibular system and the eyes can cause motion sickness. Headset makers such as Meta and Apple attack this with a combination of techniques: sampling inertial data at high rates (on the order of 1,000 Hz), predicting where the head will be at the moment the frame is displayed, and adjusting the rendered image at the last moment to match the latest sensor reading. The result is an image that tracks the head closely enough for the brain to accept it as stable.
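The value of prediction is easy to quantify with a constant-angular-velocity model. The sketch below assumes yaw-only head motion, a fixed display latency, and a made-up deceleration for the “true” motion (all simplifications). It extrapolates the latest gyro reading to the expected display time and compares that with simply rendering the last measured pose.

```python
def predict_yaw(yaw_deg: float, yaw_rate_dps: float, latency_s: float) -> float:
    """Extrapolate yaw to display time assuming the head keeps turning at a constant rate."""
    return yaw_deg + yaw_rate_dps * latency_s

# Head turning at 100 deg/s; the image reaches the eyes 20 ms after the latest IMU sample.
measured_yaw, rate, latency = 30.0, 100.0, 0.020
decel = 500.0  # deg/s^2, assumed: the head is starting to slow down
true_yaw = measured_yaw + rate * latency - 0.5 * decel * latency**2

lag_error = true_yaw - measured_yaw                                # render last measured pose
pred_error = true_yaw - predict_yaw(measured_yaw, rate, latency)  # render predicted pose
print(f"no prediction: {lag_error:.2f} deg, with prediction: {pred_error:.2f} deg")
```

Under these assumptions, rendering the stale pose is off by about 1.9 degrees, while the prediction leaves a residual of about 0.1 degree caused by the head’s deceleration, which the late image adjustment can then reduce further.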

Haptic Feedback and Spatial Audio

The application of the inertial frame extends beyond visuals into sound and touch. Spatial audio uses head-tracking data to “pin” a sound source to a specific coordinate in 3D space. As you turn your head, the software re-renders the audio in real time, adjusting the timing and level differences between your ears so the sound stays fixed in the “room” rather than moving with your head. This creates an immersive experience in which the technology disappears, leaving a seamless blend of the digital and physical worlds.
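The core bookkeeping is a change of frame: subtract the head’s yaw from the source’s world-fixed azimuth. Production spatial audio uses head-related transfer functions (HRTFs); the constant-power stereo pan below is a deliberately simplified stand-in, and the angle convention (positive azimuth to the listener’s right) is an assumption.

```python
import math

def wrap_deg(angle: float) -> float:
    """Wrap an angle to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0

def stereo_gains(source_azimuth_deg: float, head_yaw_deg: float) -> tuple[float, float]:
    """Left/right gains for a world-fixed source, given the listener's head yaw."""
    relative = wrap_deg(source_azimuth_deg - head_yaw_deg)  # world frame -> head frame
    pan = math.sin(math.radians(relative))                  # -1 = hard left, +1 = hard right
    theta = (pan + 1.0) * math.pi / 4.0                     # constant-power pan law
    return math.cos(theta), math.sin(theta)

# A source fixed 90 degrees to the right in the room.
print(stereo_gains(90.0, 0.0))   # facing forward: nearly all in the right ear
print(stereo_gains(90.0, 90.0))  # turned to face it: equal in both ears
```

Note that a plain stereo pan cannot distinguish a source in front from one behind, which is one reason real systems rely on HRTFs instead.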

Aerospace and Satellite Tech: Managing Interplanetary Frames

In aerospace, arguably the most demanding field of all, the concept of an inertial frame of reference becomes far more complex. Unlike a car on a road, a satellite or a deep-space probe has no “ground” to use as a reference.

GNSS and GPS Data Processing

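Global Navigation Satellite Systems (GNSS) rely on highly precise atomic clocks and on carefully defined reference frames. Satellite orbits and user positions are expressed in an Earth-centered, Earth-fixed frame such as the International Terrestrial Reference System (ITRS), or its close relative WGS 84 in the case of GPS. Because such a frame rotates with the planet, it is not itself inertial; the corresponding quasi-inertial frame is a celestial one, defined by the International Celestial Reference System (ICRS), and moving between the two requires accounting for Earth’s rotation. Relativity adds another layer (a nod to Einstein). GPS satellites travel at roughly 3.9 km/s and sit higher in Earth’s gravitational potential than receivers on the ground, so their clocks run slow because of their speed but fast because of the weaker gravity, for a net gain of about 38 microseconds per day. The satellite clocks are deliberately offset to compensate, and the software in a GPS receiver must still account for the rotation of the Earth during signal travel and for a small relativistic clock correction caused by each satellite’s slightly elliptical orbit.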
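The 38-microsecond figure can be checked with a back-of-the-envelope calculation. This sketch assumes a circular orbit with a radius of about 26,560 km, a spherical Earth of mean radius 6,371 km, and a receiver at rest on the ground (ignoring Earth’s own rotation speed), and it uses the weak-field approximations for time dilation.

```python
GM = 3.986004418e14      # Earth's gravitational parameter, m^3/s^2
C = 299_792_458.0        # speed of light, m/s
R_EARTH = 6.371e6        # mean Earth radius, m (spherical-Earth assumption)
R_ORBIT = 2.656175e7     # approximate GPS orbit radius, m (circular-orbit assumption)
SECONDS_PER_DAY = 86_400

def gps_clock_offsets_us_per_day():
    """Return (velocity term, gravity term, net) clock rate offsets in microseconds/day."""
    v_squared = GM / R_ORBIT                 # circular orbital speed squared
    # Special relativity: the moving satellite clock runs slow.
    velocity_rate = -v_squared / (2 * C**2)
    # General relativity: the clock higher in the gravity well runs fast.
    gravity_rate = (GM / C**2) * (1 / R_EARTH - 1 / R_ORBIT)
    to_us = SECONDS_PER_DAY * 1e6
    return velocity_rate * to_us, gravity_rate * to_us, (velocity_rate + gravity_rate) * to_us

sr, gr, net = gps_clock_offsets_us_per_day()
print(f"velocity: {sr:.1f} us/day, gravity: {gr:+.1f} us/day, net: {net:+.1f} us/day")
```

Multiplied by the speed of light, a clock error of that size left uncorrected would translate into more than 10 km of accumulated range error per day, which is why the compensation is built into the system rather than left to chance.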

Deep Space Navigation and Star Trackers

When spacecraft travel beyond Earth’s orbit, they can no longer rely on GPS. To establish an inertial frame of reference in the void of space, they use star trackers. These are cameras that photograph the star field and compare the pattern of stars against an onboard catalog of known stars. By recognizing these patterns, the spacecraft can determine its orientation relative to the distant stars, which form a very nearly inertial backdrop. Star trackers provide the coarse attitude reference; for its finest pointing, the James Webb Space Telescope hands off to its Fine Guidance Sensor, which locks onto a guide star and holds a distant galaxy steady to a small fraction of an arcsecond.
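Once the stars in an image have been matched to catalog entries (a hard step this sketch assumes is already done), turning two matched directions into an attitude is a classic problem. The example below uses the TRIAD method, one of the oldest and simplest solutions; flight software often uses least-squares methods such as QUEST that weigh many stars at once. Vectors are plain tuples, and the function names are illustrative.

```python
import math

Vec = tuple[float, float, float]

def cross(a: Vec, b: Vec) -> Vec:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(a: Vec) -> Vec:
    n = math.sqrt(sum(c * c for c in a))
    return (a[0] / n, a[1] / n, a[2] / n)

def triad_frame(v1: Vec, v2: Vec) -> tuple[Vec, Vec, Vec]:
    """Orthonormal triad built from two non-parallel directions."""
    t1 = normalize(v1)
    t2 = normalize(cross(v1, v2))
    t3 = cross(t1, t2)
    return t1, t2, t3

def triad_attitude(body1: Vec, body2: Vec, cat1: Vec, cat2: Vec) -> list[list[float]]:
    """Rotation matrix A such that body_vector = A @ catalog_vector (noise-free case)."""
    tb = triad_frame(body1, body2)   # star directions as seen by the camera
    tc = triad_frame(cat1, cat2)     # the same stars from the catalog (inertial frame)
    # A = sum_k tb_k tc_k^T maps each catalog triad vector onto its body counterpart.
    return [[sum(tb[k][i] * tc[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

# Two catalog stars, and how they appear to a spacecraft yawed 90 degrees about Z.
cat_a, cat_b = (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)
seen_a, seen_b = (0.0, 1.0, 0.0), (-1.0, 0.0, 0.0)
for row in triad_attitude(seen_a, seen_b, cat_a, cat_b):
    print([round(x, 6) for x in row])
```

The recovered matrix is the 90-degree rotation about Z that was used to generate the simulated observations; with real, noisy measurements TRIAD trusts the first star more than the second, which is one reason multi-star least-squares methods are preferred.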

The Future of Inertial Technology: Quantum Sensors

As we look toward the future of tech, the limitations of current MEMS sensors are becoming apparent. Bias instability, temperature sensitivity, noise, and long-term aging introduce small errors that accumulate into “drift,” so the device’s estimated inertial frame gradually becomes inaccurate. The next frontier in this niche is quantum inertial sensing.
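A rough open-loop estimate shows why even small biases matter. Assuming an uncorrected accelerometer bias of 1 milli-g (about 0.00981 m/s²) that is simply double-integrated, and ignoring the feedback dynamics of a full navigation system, the position error grows with the square of time:

```latex
\[
\Delta p(t) = \tfrac{1}{2}\, b_a\, t^{2}, \qquad b_a \approx 9.81\times10^{-3}\ \mathrm{m/s^{2}}
\]
\[
\Delta p(60\ \mathrm{s}) \approx \tfrac{1}{2}\,(9.81\times10^{-3})\,(60)^{2} \approx 17.7\ \mathrm{m},
\qquad
\Delta p(3600\ \mathrm{s}) \approx \tfrac{1}{2}\,(9.81\times10^{-3})\,(3600)^{2} \approx 63.6\ \mathrm{km}.
\]
```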

Atom Interferometry and the Absolute Frame

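Quantum sensors use atoms cooled to near absolute zero. In an atom interferometer, laser pulses split each atom’s matter wave, send the two parts along slightly different paths, and recombine them; any acceleration or rotation during the measurement shifts the resulting interference pattern. Because the “proof mass” is a cloud of identical atoms rather than a manufactured structure, such sensors could in principle detect changes in motion with precision orders of magnitude beyond today’s best silicon chips, with far less long-term drift. This technology could enable GPS-independent navigation: a submarine or an aircraft navigating for extended periods without any satellite signal while accumulating only minimal error. In this scenario, the tech would maintain a nearly perfect inertial frame of reference by measuring motion against the behavior of the atoms themselves.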
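The sensitivity of a light-pulse atom interferometer follows from its phase shift, which for the standard three-pulse sequence is approximately Δφ = k_eff · a · T², where k_eff is the effective wavenumber of the laser pulses and T is the time between pulses. The sketch below plugs in assumed, illustrative values: rubidium’s 780 nm transition with counter-propagating beams (so k_eff ≈ 4π/λ), T = 0.1 s, and a phase resolution of 1 milliradian.

```python
import math

def interferometer_phase(accel: float, wavelength: float, pulse_sep: float) -> float:
    """Phase shift (rad) of a three-pulse atom interferometer: k_eff * a * T^2."""
    k_eff = 4 * math.pi / wavelength   # two counter-propagating photons (assumed geometry)
    return k_eff * accel * pulse_sep**2

def accel_resolution(phase_res: float, wavelength: float, pulse_sep: float) -> float:
    """Smallest resolvable acceleration (m/s^2) for a given phase resolution (rad)."""
    k_eff = 4 * math.pi / wavelength
    return phase_res / (k_eff * pulse_sep**2)

WAVELENGTH = 780e-9   # m, rubidium D2 line (assumed)
T = 0.1               # s, time between laser pulses (assumed)

phase_g = interferometer_phase(9.81, WAVELENGTH, T)
res = accel_resolution(1e-3, WAVELENGTH, T)
print(f"phase from Earth's gravity: {phase_g:.3e} rad")
print(f"acceleration resolution: {res:.1e} m/s^2 ({res / 9.81:.1e} g)")
```

The T² term explains a key design trade-off: doubling the time between pulses quadruples the sensitivity, but it also requires a longer free-fall path, which is one reason compact, field-ready versions are challenging to build.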

Integration with AI and Predictive Modeling

The final piece of the puzzle is the integration of AI. Future tech may not just react to inertial changes but predict them. By training neural networks on large volumes of inertial sensor data, AI systems can learn to distinguish intentional movement from environmental interference. Whether it’s an AI-powered exoskeleton helping a patient walk or a high-speed delivery drone navigating a wind-swept city, the ability to define, maintain, and predict the inertial frame of reference will be what separates “smart” tech from truly intelligent, autonomous systems.

In summary, the inertial frame of reference is the silent director of the modern technological symphony. It provides the “where” and “how” for every digital interaction involving motion. As our devices become more autonomous and our virtual experiences more immersive, our reliance on the precision of these digital frames will only grow, cementing the marriage between classical physics and the cutting edge of technological innovation.

aViewFromTheCave is a participant in the Amazon Services LLC Associates Program, an affiliate advertising program designed to provide a means for sites to earn advertising fees by advertising and linking to Amazon.com. Amazon, the Amazon logo, AmazonSupply, and the AmazonSupply logo are trademarks of Amazon.com, Inc. or its affiliates. As an Amazon Associate we earn affiliate commissions from qualifying purchases.