The traditional classroom has long been dominated by visual stimuli—whiteboards, textbooks, and written examinations. However, as the digital landscape evolves, technology is pulling us back toward an ancient method of knowledge acquisition: sound. To understand what an auditory learner is in the modern context, we must look beyond the simple definition of “someone who learns by hearing” and instead examine how software, artificial intelligence, and specialized hardware are transforming the auditory experience into a high-performance cognitive tool.

In the realm of educational technology (EdTech), the auditory learner is no longer a student who simply thrives on lectures; they are a power user of digital ecosystems designed to convert data into soundscapes. This article explores the intersection of cognitive science and technology, detailing how the auditory learning style is being optimized through cutting-edge innovations.

Defining the Auditory Learner in the Era of High-Fidelity Data

At its core, an auditory learner is an individual who processes information most effectively when it is spoken or heard. In psychological terms, these individuals have a high aptitude for phonological processing, allowing them to retain sequences of sounds, recognize nuances in tone, and synthesize complex ideas through verbal dialogue. While visual learners might remember the layout of a page, auditory learners remember the cadence of a speaker’s voice or the specific rhythm of an explanation.

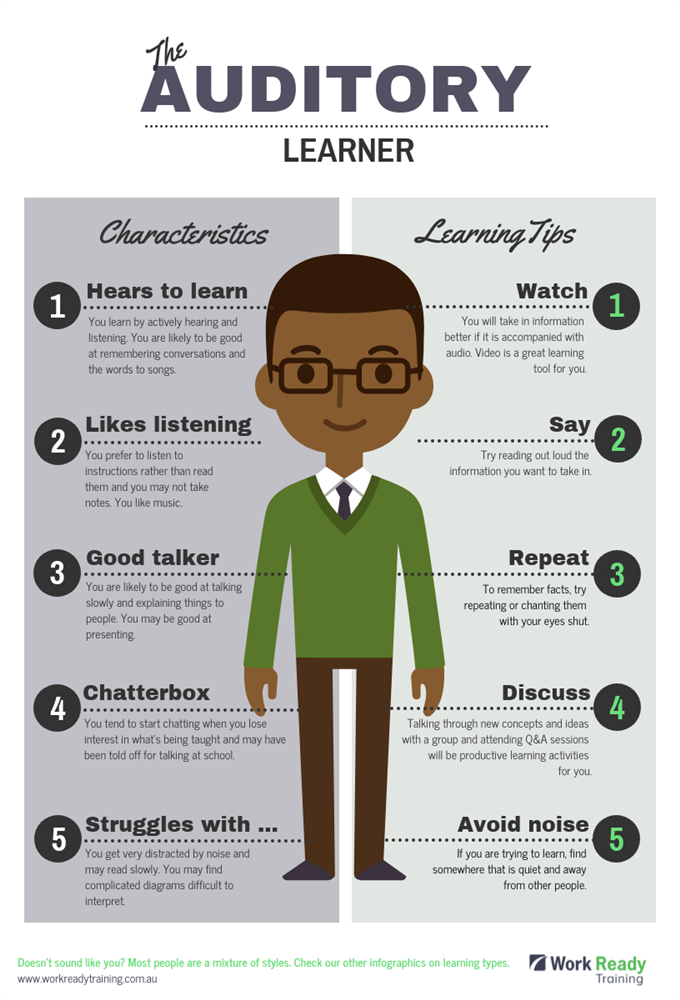

The Core Characteristics of Auditory Processing

Auditory learners typically exhibit a set of recognizable traits that have significant implications for how they interact with technology. They often prefer oral instructions over written manuals, tend to talk through problems out loud to reach a solution, and are highly sensitive to the emotional subtext provided by a speaker’s pitch and volume. In a professional tech environment, these are the individuals who gravitate toward voice memos, recorded meetings, and collaborative brainstorming sessions rather than long-form email threads.

The Shift from Analog to Digital Soundscapes

Historically, the auditory learner was limited by the availability of a live speaker. Today, the “digital auditory learner” utilizes a vast array of asynchronous tools. The rise of the “audio-first” internet—driven by podcasts, audiobooks, and social audio platforms—has shifted the focus from static text to dynamic sound. This transition has turned what used to be a passive learning style into an active, tech-driven pursuit where information is consumed during commutes, workouts, and multi-tasking windows, effectively expanding the time available for professional development.

The Rise of Assistive EdTech: Specialized Tools for the Modern Ear

For the auditory learner, the modern software stack is a goldmine of productivity. The barrier between “reading” and “listening” has been largely dismantled by software that can read most on-screen text aloud.

Text-to-Speech (TTS) and AI Voice Synthesis

The most significant technological leap for auditory learners is the evolution of Text-to-Speech (TTS) software. Early iterations were robotic and difficult to follow, often leading to cognitive fatigue. However, modern AI-driven synthesis, such as that offered by Speechify or ElevenLabs, employs neural networks to mimic human prosody, the natural rhythm and intonation of speech. These tools allow auditory learners to convert dense technical documentation, coding tutorials, or legal contracts into high-quality audio files. By adjusting the playback speed (often to 2x or 3x), these learners can cover material at a rate that can match or exceed typical reading speeds, although how much is retained at the highest speeds depends on the listener and the material.
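To make the speed trade-off concrete, here is a minimal sketch of the arithmetic behind playback multipliers. The 150 words-per-minute base narration rate and the 9,000-word document are illustrative assumptions, not figures from any particular TTS product, and the function name `listening_minutes` is hypothetical.

```python
def listening_minutes(word_count: int, base_wpm: float = 150.0, speed: float = 1.0) -> float:
    """Estimate how many minutes it takes to listen to a text.

    base_wpm is an assumed narration rate at 1x; speed is the playback multiplier.
    """
    if word_count < 0 or base_wpm <= 0 or speed <= 0:
        raise ValueError("word_count must be >= 0; base_wpm and speed must be > 0")
    return word_count / (base_wpm * speed)


# Hypothetical 9,000-word technical document at three playback speeds.
doc_words = 9000
for speed in (1.0, 2.0, 3.0):
    minutes = listening_minutes(doc_words, speed=speed)
    print(f"{speed:.0f}x: {minutes:.0f} min")
# prints:
# 1x: 60 min
# 2x: 30 min
# 3x: 20 min
```

The estimate only covers time spent listening. It says nothing about comprehension, which is why the right multiplier is something each listener has to find by trial.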

Specialized Apps for Spoken Organization

Beyond simple consumption, auditory learners require tools to organize their thoughts. Software like Otter.ai or Fireflies.ai uses automatic speech recognition (ASR) to transcribe meetings in real time. For an auditory learner, the value here is twofold: they can stay fully engaged in the conversation without the distraction of manual note-taking, and they are provided with a searchable record afterward. Furthermore, mind-mapping software that integrates voice commands allows these individuals to build complex project architectures using only their voice, aligning the workflow with their natural cognitive strengths.

AI and the Hyper-Personalization of Auditory Learning

Artificial Intelligence is not just replicating human speech; it is fundamentally changing how auditory learners interact with data. We are entering an era of “interactive audio,” where the learner doesn’t just listen to a recording but engages in a bidirectional dialogue with an intelligent system.

Neural Networks and Natural Language Processing

Assistants built on modern Large Language Models (LLMs) like GPT-4 and Gemini now offer voice modes that allow for “Socratic” learning. An auditory learner can ask a complex question about quantum computing or software architecture and receive a spoken explanation. If a concept is unclear, the learner can interrupt, ask for a metaphor, or request a deeper dive into a specific sub-topic. This mimics the experience of a one-on-one tutor, providing a personalized feedback loop that can suit auditory processors better than reading a static Wikipedia entry.

Real-Time Translation and Global Accessibility

Technology is also breaking down linguistic barriers for auditory learners. Real-time AI translation tools can now take a live stream or a video lecture in one language and output a high-fidelity audio translation in another. This allows auditory learners to access global experts and niche tech conferences without being limited by their native language. For a tech professional, this means the ability to “listen” to a developer conference in Tokyo or a cybersecurity seminar in Berlin with the same ease as a local podcast.

Hardware Evolution: Enhancing the Auditory Experience

The software is only as good as the hardware that delivers it. The tech industry has seen a massive surge in investment in “hearables,” smart wearable devices designed specifically to optimize the auditory environment.

Spatial Audio and Noise-Cancellation Technology

For an auditory learner, the environment is often the biggest distraction. Active Noise Cancellation (ANC) technology, popularized in consumer headphones by brands like Sony and Bose, is a critical “productivity tool” for this niche. By attenuating ambient noise, most effectively steady low-frequency sound such as engine hum, ANC helps the learner achieve a state of “flow” where the main input is the educational content. Furthermore, the advent of Spatial Audio (3D audio) creates a sense of presence in virtual environments. When an auditory learner attends a virtual reality (VR) lecture, spatial audio allows them to perceive where speakers are standing, making the experience more immersive and easier for the brain to categorize and remember.

Wearable Tech and Bone Conduction

Not all auditory learning happens in a vacuum. Bone conduction headphones, such as those from Shokz, represent a unique tech solution for learners who need to remain aware of their surroundings. By sending vibrations through the cheekbones to the inner ear, these devices allow for the consumption of audiobooks or podcasts while leaving the ear canal open. This is a game-changer for professionals who learn while navigating urban environments or for those who find traditional earbuds uncomfortable during long study sessions.

Future Trends: The Convergence of Voice UI and Education

As we look toward the future of technology, the reliance on screens is likely to diminish in favor of Voice User Interfaces (VUI). This shift will place auditory learners at the forefront of the next digital revolution.

Voice Assistants as Interactive Tutors

We are moving past the era of “Siri, play music” into the era of specialized educational agents. Future iterations of voice assistants will be integrated into Learning Management Systems (LMS). Imagine an auditory learner asking their smart home, “Review the key takeaways from yesterday’s Python workshop,” and the AI providing a curated summary while the learner prepares breakfast. This seamless integration of learning into the “internet of things” (IoT) turns every moment into a potential educational opportunity.

The Metadata of Sound: Searching Audio Content

One of the historical disadvantages of auditory learning was the difficulty of “skimming” or searching through audio compared to text. However, new AI indexing technologies are making audio nearly as searchable as a Google Doc. By using timestamped metadata and semantic search, an auditory learner can now search for a specific keyword across 500 hours of a podcast series and jump directly to the relevant segment. This goes a long way toward solving the “linear problem” of audio, giving it much of the flexibility and utility of written data.
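The timestamp lookup described above can be sketched in a few lines. This is a simplified illustration, not how any specific product works: it assumes transcripts have already been produced and split into segments with start times, and it uses plain case-insensitive keyword matching rather than true semantic search. The `Segment` type, the function names, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    """One transcribed chunk of audio with the time at which it starts."""
    episode: str
    start_seconds: float
    text: str


def format_timestamp(seconds: float) -> str:
    """Render a second offset as HH:MM:SS (fractions of a second are dropped)."""
    hours, remainder = divmod(int(seconds), 3600)
    minutes, secs = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def find_keyword(segments: list[Segment], keyword: str) -> list[tuple[str, str]]:
    """Return (episode, timestamp) pairs for segments whose text contains the keyword."""
    needle = keyword.casefold()
    return [
        (seg.episode, format_timestamp(seg.start_seconds))
        for seg in segments
        if needle in seg.text.casefold()
    ]


# Hypothetical transcript data for two podcast episodes.
segments = [
    Segment("ep01", 12.0, "Welcome to the show."),
    Segment("ep01", 754.5, "Today we cover Python decorators."),
    Segment("ep02", 3725.0, "Back to decorators and closures."),
]

print(find_keyword(segments, "Decorators"))
# prints: [('ep01', '00:12:34'), ('ep02', '01:02:05')]
```

A player can then seek straight to the returned timestamp. A semantic search layer would replace the substring test with a similarity comparison, but the timestamped segment structure would stay the same.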

In conclusion, the auditory learner is no longer a niche category in educational psychology; they are the primary beneficiary of a massive technological shift toward voice, sound, and AI-driven interaction. By leveraging the latest in TTS software, AI-powered tutors, and high-fidelity hearables, these individuals can transform their natural inclination for sound into a significant competitive advantage in the fast-paced world of technology and digital innovation. The future of learning isn’t just something we will see—it’s something we will hear.
