In an increasingly digitized world, anticipating and preparing for critical events is a necessity rather than a luxury. From cybersecurity breaches and system outages to customer churn and market shifts, every industry faces recurring challenges that peak at specific times or under particular conditions. The question “what month has the most [critical events]” is, at its heart, a data science problem: understanding temporal patterns, quantifying risk, and ultimately building more resilient systems and strategies. This deep dive explores how data analytics and artificial intelligence help organizations move beyond reactive measures and turn insights into foresight and proactive defense.

The Predictive Power of Data in Tech

At the core of understanding and mitigating critical events lies the disciplined collection, processing, and analysis of vast datasets. Every interaction, every log entry, every transaction leaves a digital footprint that, when aggregated, can reveal powerful underlying trends. The tech industry, by its very nature, is both a generator and a primary consumer of this data, leveraging it to optimize everything from user experience to infrastructure reliability.

Unpacking Historical Data for Seasonal Trends

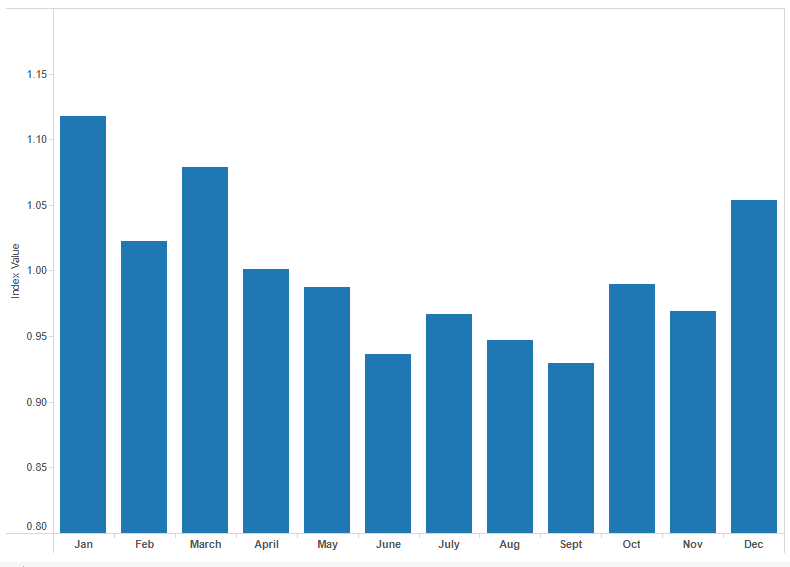

Historical data is the bedrock of predictive analytics. Just as epidemiological studies might identify seasonal variations in public health outcomes, tech companies analyze historical performance logs, incident reports, user activity metrics, and even external factors to unearth recurring patterns. This could involve identifying months with historically high rates of denial-of-service attacks, quarters with increased software vulnerabilities due to release cycles, or periods of peak hardware failure influenced by environmental conditions or user stress.

Sophisticated data warehousing and lakehouse architectures enable organizations to store petabytes of this longitudinal data efficiently. Tools for data exploration and visualization, such as Tableau, Power BI, or open-source libraries like Matplotlib and Seaborn in Python, then allow data scientists to identify anomalies, correlations, and cyclical patterns that might otherwise remain hidden. For instance, a cloud provider might discover that resource contention consistently peaks during specific business quarters, correlating with global financial reporting cycles or seasonal e-commerce surges. By analyzing years of such data, they can build a baseline expectation for “normal” peak activity and flag deviations that signify emergent issues. This historical perspective is crucial for establishing benchmarks and understanding the natural ebb and flow of digital ecosystems. Without a clear understanding of past trends, any attempt at future prediction is merely guesswork.
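As a rough illustration of the baseline idea above, the sketch below counts incidents per calendar month across several years and reports the month with the highest average. It assumes incident dates arrive as ISO `YYYY-MM-DD` strings and that every year present in the data was observed in full. The function names `average_by_month` and `peak_month` and the sample dates are hypothetical.

```python
from collections import Counter
from datetime import date


def average_by_month(incident_dates):
    """Average number of incidents per calendar month (1-12) across years.

    Assumption: every year that appears in the data was fully observed,
    so a month with no incidents in a given year counts as zero.
    """
    if not incident_dates:
        raise ValueError("need at least one incident date")
    totals = Counter()
    years = set()
    for text in incident_dates:
        d = date.fromisoformat(text)
        totals[d.month] += 1
        years.add(d.year)
    return {month: totals[month] / len(years) for month in range(1, 13)}


def peak_month(incident_dates):
    """Calendar month with the highest average count (earliest month wins ties)."""
    averages = average_by_month(incident_dates)
    return max(averages, key=averages.get)


# Hypothetical sample data, not real incident records.
sample = ["2022-11-03", "2022-11-20", "2023-11-05", "2023-03-01", "2022-07-14"]
print(peak_month(sample))
```

A real pipeline would more likely run this aggregation in a warehouse query or a dataframe library. The point is only that the "baseline" is a per-month average against which later months can be compared.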

The Role of Real-time Analytics in Anomaly Detection

While historical data provides the macro trends, real-time analytics offers the micro-level vigilance necessary to catch critical events as they unfold or even before they fully manifest. In a world where minutes of downtime can cost millions and data breaches have catastrophic consequences, the speed of insight is paramount. Real-time analytics platforms ingest streaming data from various sources—network traffic, server logs, application performance monitors (APM), security information and event management (SIEM) systems, and user behavior streams—and process it with minimal latency.

This continuous stream of information is then fed into algorithms designed for anomaly detection. These algorithms are trained on historical data to understand normal system behavior and user patterns. Any significant deviation from this established norm, such as an unusual spike in login attempts from an unfamiliar geographical region, an unprecedented surge in API calls, or a sudden drop in transaction success rates, can trigger an alert. The challenge with real-time anomaly detection is to minimize false positives while keeping missed critical events as rare as possible. This often involves dynamic thresholds, contextual analysis, and machine learning models that adapt to evolving system behaviors. Tools like Apache Kafka for streaming data, Apache Flink or Spark Streaming for processing, and specialized monitoring solutions like Datadog or Splunk provide the backbone for these real-time operational intelligence systems. The synergy between historical context and real-time vigilance forms a powerful defense, allowing tech teams to detect the precursors to a major incident and intervene before it escalates into a full-blown crisis. In effect, “the month with the most incidents” is treated not as a fixed calendar date but as a dynamic risk window.
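One simple way to picture a dynamic threshold is a rolling z-score: each new value is compared with the mean and standard deviation of a trailing window rather than with a fixed limit. The sketch below is a minimal example under that assumption, not a description of how any of the tools named above work internally. The function name, window size, threshold, and sample values are all hypothetical.

```python
from collections import deque
from statistics import mean, pstdev


def rolling_zscore_alerts(values, window=5, threshold=3.0, min_sigma=1e-9):
    """Return indices of values that deviate strongly from the trailing window.

    A value is flagged when it lies more than `threshold` standard
    deviations from the mean of the previous `window` values.
    `min_sigma` (an assumed floor) avoids division by zero on flat history.
    """
    history = deque(maxlen=window)
    alerts = []
    for i, x in enumerate(values):
        if len(history) == window:  # wait until the window is full
            mu = mean(history)
            sigma = max(pstdev(history), min_sigma)
            if abs(x - mu) / sigma > threshold:
                alerts.append(i)
        # Design choice: flagged values still enter the window, so one large
        # spike temporarily widens the threshold for the values that follow.
        history.append(x)
    return alerts


# Hypothetical requests-per-second samples with one obvious spike.
print(rolling_zscore_alerts([100, 102, 98, 101, 99, 100, 500, 101]))  # [6]
```

Raising `threshold` trades missed events for fewer false positives, which is the tension described above.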

AI and Machine Learning: From Insight to Foresight

Beyond mere detection, artificial intelligence and machine learning propel organizations into the realm of true foresight. These advanced computational techniques can not only identify patterns but also build predictive models that forecast future states, assess probabilities, and even suggest optimal interventions. This moves the organization from asking “what happened?” to “what will happen?” and “what should we do about it?”.

Predictive Models for System Stability and Security

Machine learning algorithms, particularly those in the realm of supervised and unsupervised learning, are adept at building predictive models. For system stability, models can be trained on vast amounts of operational data, including CPU utilization, memory consumption, network latency, error rates, and historical incident logs. These models learn the complex interplay of factors that precede system degradation or failure. For instance, a recurrent neural network (RNN) might identify a subtle, gradual increase in disk I/O coupled with a specific pattern of application errors that consistently leads to a server crash within a 24-hour window. By flagging these precursors, operations teams can perform preventative maintenance, scale resources, or initiate failovers before a user ever experiences an outage.
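Before any supervised model can learn which conditions precede a failure, the historical samples need labels. The sketch below shows one possible labeling step, assuming timestamps are expressed as hours since some reference point: a sample is marked positive if a failure occurs within the following 24 hours. The function name `label_precursors` and the sample timestamps are hypothetical, and the choice of model to train on these labels is left open.

```python
from bisect import bisect_right


def label_precursors(sample_hours, failure_hours, horizon=24):
    """Label each sample 1 if a failure occurs within `horizon` hours after it.

    Samples taken at or after the last failure get label 0, because no
    later failure is known for them.
    """
    failures = sorted(failure_hours)
    labels = []
    for t in sample_hours:
        i = bisect_right(failures, t)  # index of first failure strictly after t
        upcoming = i < len(failures) and failures[i] - t <= horizon
        labels.append(1 if upcoming else 0)
    return labels


# Hypothetical metric samples (hours) and a single crash at hour 48.
print(label_precursors([0, 10, 30, 47, 48, 60], [48]))  # [0, 0, 1, 1, 0, 0]
```

Each label would then be paired with the metrics observed at that time (CPU, memory, disk I/O, error rates) to form a training set.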

In cybersecurity, predictive models are even more critical. They can analyze threat intelligence feeds, network traffic, user behavior, and vulnerability databases to forecast potential attack vectors, identify emerging malware strains, and predict which systems are most likely to be targeted next. Graph neural networks, for example, can model network topologies and identify propagation paths for sophisticated attacks. By learning from millions of past attacks and security events, these models can assess the likelihood of a security incident peaking in a given month or under specific conditions, allowing security teams to reinforce defenses where they are most needed. The goal is to shift from detecting known threats to predicting and neutralizing unknown or evolving threats before they cause significant damage.

Automating Risk Assessment and Resource Allocation

One of the most powerful applications of AI in predicting critical periods is the automation of risk assessment and dynamic resource allocation. Traditionally, these processes have been manual, time-consuming, and prone to human bias or oversight. AI models, however, can continuously evaluate multiple risk factors simultaneously, providing a real-time risk score for various components of an IT infrastructure or a business process.

For instance, an AI-powered system could assess the combined risk of a specific microservice based on its recent error rate, the number of open bugs, the current load, the age of its dependencies, and external threat intelligence. If the risk score crosses a predefined threshold, the system could automatically trigger actions: spinning up additional compute instances, rerouting traffic, initiating a security patch deployment, or escalating an alert to a human operator. This dynamic allocation is crucial for managing the fluctuating demands of digital services, ensuring that resources are available when critical usage peaks—or when unexpected surges in activity, such as a DDoS attack, begin to strain infrastructure. By automating these responses, organizations can drastically reduce the mean time to detect (MTTD) and mean time to respond (MTTR) to incidents, transforming potential crises into manageable events.
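The microservice example above can be pictured as a weighted score mapped to an action. The sketch below is purely illustrative: the weights, normalization caps, thresholds, and action names are invented for the example and would need calibration against real incident data. It also assumes all inputs are non-negative.

```python
from dataclasses import dataclass


@dataclass
class ServiceSignals:
    error_rate: float         # fraction of failed requests, 0..1
    open_bugs: int            # currently open bug reports
    load: float               # utilization as a fraction, 0..1
    dependency_age_days: int  # age of the oldest dependency
    threat_level: float       # external threat score, 0..1


# Hypothetical weights; they sum to 1 so the score stays within 0..1.
WEIGHTS = {
    "error_rate": 0.35,
    "open_bugs": 0.15,
    "load": 0.20,
    "dependency_age": 0.10,
    "threat_level": 0.20,
}


def risk_score(s):
    """Combine normalized signals into one score between 0 and 1."""
    normalized = {
        "error_rate": min(s.error_rate / 0.05, 1.0),  # 5% errors counts as maximal
        "open_bugs": min(s.open_bugs / 20, 1.0),      # 20 bugs counts as maximal
        "load": min(s.load, 1.0),
        "dependency_age": min(s.dependency_age_days / 365, 1.0),
        "threat_level": min(s.threat_level, 1.0),
    }
    return sum(WEIGHTS[name] * value for name, value in normalized.items())


def choose_action(score, scale_at=0.5, escalate_at=0.8):
    """Map a risk score to one of three hypothetical actions."""
    if score >= escalate_at:
        return "escalate_to_operator"
    if score >= scale_at:
        return "scale_out"
    return "no_action"


busy = ServiceSignals(error_rate=0.05, open_bugs=0, load=0.9,
                      dependency_age_days=0, threat_level=0.0)
print(choose_action(risk_score(busy)))  # scale_out
```

Keeping the highest tier as an escalation to a human, rather than a fully automatic action, reflects the same caution about unattended automation that applies to any self-acting system.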

Strategic Application: Fortifying Digital Infrastructures

The insights generated by data analytics and AI are only as valuable as their application. Translating predictive intelligence into actionable strategies is where true resilience is built. This involves not just fixing problems but fundamentally strengthening the digital foundations of an organization.

Proactive Cybersecurity Measures and Threat Intelligence

In the context of predicting “what month has the most cyberattacks,” AI-driven threat intelligence plays a pivotal role. Predictive models can analyze global threat landscapes, identify emerging attack campaigns, and correlate them with an organization’s specific vulnerabilities and assets. This enables security teams to move from a reactive posture to a highly proactive one.

Instead of waiting for an attack to occur, organizations can deploy advanced phishing detection technologies ahead of predicted social engineering campaigns, harden specific endpoints known to be vulnerable during certain periods, or conduct targeted security awareness training when intelligence suggests a heightened risk of insider threats. Integrating AI into Security Orchestration, Automation, and Response (SOAR) platforms allows for automated responses to predicted threats, such as isolating compromised systems, updating firewall rules, or blocking malicious IP addresses before a full-scale breach. This proactive hardening minimizes the attack surface and significantly reduces the impact of inevitable security incidents. The ultimate goal is to identify and mitigate the factors that lead to peak vulnerability, effectively flattening the curve of monthly security incidents.

Enhancing System Uptime and Performance Management

For infrastructure and operations teams, predicting peak loads and potential failure points is paramount to maintaining high availability and optimal performance. AI-driven performance management tools can analyze application and infrastructure metrics to predict bottlenecks, resource starvation, and potential service degradation hours or even days in advance.

For example, an AI model might predict that a specific database cluster will hit its maximum connection limit during a major marketing campaign scheduled for next month. This allows operations teams to proactively scale up the database, optimize queries, or implement caching strategies well in advance, preventing a performance meltdown. Similarly, AI can predict the “end-of-life” for hardware components based on their historical performance and usage patterns, allowing for scheduled replacements that minimize unplanned downtime. By optimizing resource allocation, intelligently load balancing traffic, and even predicting user experience degradation, organizations can ensure that their digital services remain robust and responsive, even during periods of extreme demand or unexpected challenges. This strategic application of predictive tech transforms system management from an art into a precise, data-driven science.
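The database example can be approximated, very crudely, by fitting a straight line to recent daily peak connection counts and extrapolating to the configured limit. The sketch below uses ordinary least squares and assumes the trend is roughly linear, which a marketing campaign may well violate. The function names and numbers are hypothetical.

```python
def fit_line(ys):
    """Least-squares fit of y = slope * x + intercept for x = 0, 1, ..., n-1."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    x_mean = (n - 1) / 2
    y_mean = sum(ys) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(ys))
    slope = sxy / sxx
    return slope, y_mean - slope * x_mean


def days_until_limit(daily_peaks, limit):
    """Days after the last observation until the fitted trend reaches `limit`.

    Returns None when the trend is flat or falling, and 0.0 when the
    fitted line is already at or above the limit.
    """
    slope, intercept = fit_line(daily_peaks)
    if slope <= 0:
        return None
    last_x = len(daily_peaks) - 1
    crossing_x = (limit - intercept) / slope
    return max(0.0, crossing_x - last_x)


# Hypothetical daily peak connection counts against a limit of 200.
print(days_until_limit([100, 110, 120, 130, 140], limit=200))  # 6.0
```

A production forecast would typically account for seasonality and known events, but even this estimate gives an operations team a lead time to act on.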

The Human Element: Ethical Considerations and Skill Development

While AI and data are powerful tools, their effective deployment always hinges on human oversight, ethical considerations, and a skilled workforce. The technology is an enabler, but people remain the critical decision-makers and strategists.

Balancing Automation with Human Oversight

The allure of fully autonomous systems capable of predicting and responding to critical events is strong, but a complete hands-off approach carries inherent risks. AI models, while powerful, can be prone to bias (inherited from the data they are trained on), may lack common-sense reasoning, and can occasionally produce “hallucinations” or unexpected outputs. Therefore, a judicious balance between automation and human oversight is essential.

Automated systems are excellent for quickly identifying anomalies, performing routine tasks, and escalating critical alerts. However, complex decision-making, contextual understanding, and strategic planning require human intelligence. Human operators provide the ethical compass, the nuanced judgment, and the ability to interpret data in the broader organizational and societal context. They can override erroneous automated actions, refine predictive models based on new information, and make critical judgment calls that an algorithm cannot. This collaboration, where AI augments human capabilities rather than replaces them, is the most robust model for managing critical events, especially those that might peak unexpectedly.

Cultivating Data Literacy Across Organizations

The power of predictive analytics and AI extends far beyond the data science team. For an organization to truly leverage these capabilities, data literacy must become a widespread competency. Every department, from marketing to finance, and every role, from executive leadership to frontline staff, benefits from understanding how data is collected, interpreted, and used to inform decisions.

Training programs focused on data fundamentals, statistical thinking, and the ethical implications of AI can empower employees to better understand the insights generated by predictive models. This includes knowing the limitations of the data, the assumptions behind the algorithms, and how to critically evaluate the predictions. When more people within an organization can interpret the output of a system predicting “what month has the most [X]” (be it customer complaints, sales opportunities, or system outages), they are better equipped to contribute to the organization’s resilience. This democratization of data intelligence fosters a culture of proactive problem-solving and innovation, moving the entire organization toward a more data-driven and resilient future.

Future Horizons: The Evolution of Predictive Analytics

The journey of predictive analytics and AI is far from over. Continuous advancements promise even more sophisticated methods for anticipating and managing critical events. The next wave of innovation will further refine our ability to derive foresight from data, making systems even more intelligent and resilient.

Edge Computing and Decentralized Prediction

As the Internet of Things (IoT) proliferates, generating data at an unprecedented scale from countless decentralized sources, the paradigm of sending all data to a central cloud for processing becomes inefficient and sometimes impractical. Edge computing brings processing capabilities closer to the data source, allowing for real-time analytics and predictive model execution directly on devices or local gateways.

This decentralized approach will enable faster anomaly detection and proactive responses at the source of potential critical events. Imagine smart factories where machines predict their own maintenance needs or identify manufacturing defects in real-time without latency. In the realm of cybersecurity, edge AI could detect and neutralize localized threats before they ever reach the central network. This shift will enhance the granularity and speed of predictive capabilities, allowing for more precise interventions against localized peak challenges, making the concept of predicting “what month has the most issues” granular down to specific devices or micro-locations, rather than just large-scale systems.
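On a constrained edge device, storing a long history of readings may not be practical. One technique that fits this setting is Welford's online algorithm, which maintains a running mean and variance in constant memory. The sketch below pairs it with a simple deviation check. The class and function names, the warm-up length, and the threshold are assumptions chosen for illustration.

```python
import math


class RunningStats:
    """Welford's online algorithm: running mean and variance in O(1) memory."""

    def __init__(self):
        self.n = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the current mean

    def update(self, x):
        self.n += 1
        delta = x - self.mean
        self.mean += delta / self.n
        self._m2 += delta * (x - self.mean)

    @property
    def stddev(self):
        """Population standard deviation of the values seen so far."""
        return math.sqrt(self._m2 / self.n) if self.n > 1 else 0.0


def is_anomalous(stats, x, threshold=3.0, warmup=10):
    """Flag x if it lies more than `threshold` deviations from the running mean.

    Nothing is flagged until `warmup` readings have been seen.
    """
    if stats.n < warmup or stats.stddev == 0:
        return False
    return abs(x - stats.mean) / stats.stddev > threshold


# Hypothetical sensor readings processed one at a time on the device.
stats = RunningStats()
for reading in [2, 4, 4, 4, 5, 5, 7, 9]:
    stats.update(reading)
print(is_anomalous(stats, 20, warmup=5))  # True
```

Only three numbers are kept per sensor, so the check can run locally and forward just the flagged readings upstream.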

Explainable AI (XAI) for Critical Decision-Making

A major challenge with complex AI models, particularly deep neural networks, is their “black box” nature. It can be difficult to understand why a model made a particular prediction, which is a significant hurdle when those predictions relate to critical events like system failures or security breaches. Explainable AI (XAI) is an emerging field dedicated to making AI models more transparent and interpretable.

XAI techniques aim to provide human-understandable explanations for AI predictions, detailing which input features contributed most to a particular outcome. For predicting critical events, XAI is invaluable. If an AI system predicts a high likelihood of a system outage next month, XAI could explain that the prediction is based on an unusual correlation between a recent software update, a specific increase in database queries, and a pattern of memory leaks observed in historical data. This transparency builds trust in AI systems, allows human experts to validate or challenge predictions, and provides actionable insights for remediation. It transforms AI from a mere predictor into a collaborative partner, enhancing our collective ability to understand, anticipate, and strategically address the periods of greatest risk and challenge in our digital world.
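For the simplest case, a linear scoring model, feature attribution can be computed directly: each feature's contribution is its weight times its deviation from a baseline value, and these terms add up exactly to the difference between the prediction and the baseline prediction. The sketch below illustrates that case only. Deep networks require more general attribution methods. The feature names, weights, and values are invented for the example.

```python
def explain_linear_prediction(weights, baseline, features):
    """Rank features by contribution to a linear score.

    contribution_i = weight_i * (feature_i - baseline_i)
    Results are sorted by absolute contribution, largest first.
    """
    contributions = {
        name: weights[name] * (features[name] - baseline[name])
        for name in weights
    }
    return sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)


# Hypothetical outage-risk model: weights, typical values, and current values.
weights = {"db_queries_per_s": 0.002, "memory_growth_mb_per_h": 0.01,
           "days_since_update": -0.05}
baseline = {"db_queries_per_s": 500, "memory_growth_mb_per_h": 5,
            "days_since_update": 30}
current = {"db_queries_per_s": 900, "memory_growth_mb_per_h": 40,
           "days_since_update": 2}

for name, value in explain_linear_prediction(weights, baseline, current):
    print(f"{name}: {value:+.2f}")
```

In this made-up case the recent update ranks first, followed by the query increase and then memory growth, which mirrors the kind of explanation described above.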

In conclusion, the fundamental human desire to understand “what month has the most [critical events]” (or, more broadly, what period carries the greatest risk) finds its modern answer in the integration of data analytics and artificial intelligence within the tech domain. By moving from reactive problem-solving to proactive prediction, organizations can fortify their digital infrastructures, safeguard their assets, and reduce service interruptions, building a future where resilience is not just a goal but a continuously optimized reality.
