Energy is the lifeblood of the technological age. From the smartphone in your pocket to the colossal data centers powering the cloud, every innovation, every computation, and every digital interaction relies on a precise understanding and management of energy. Yet, for many, the array of units used to quantify energy – joules, watts, watt-hours, kilowatts, and more – can be a source of confusion. Demystifying these measurements is not just an academic exercise; it’s crucial for understanding device specifications, evaluating efficiency, driving sustainable innovation, and shaping the future of technology.

This article delves into the fundamental units of energy measurement, dissecting their relevance and application within the dynamic landscape of technology. We will explore how these metrics empower engineers, inform consumers, and guide the industry towards a more efficient and sustainable digital future.

The Fundamental Building Blocks: Joules, Watts, and Watt-hours

At the core of all energy discussions, especially in technology, are a few essential units. Understanding their individual definitions and interrelationships is the first step toward grasping the bigger picture of tech’s energy consumption.

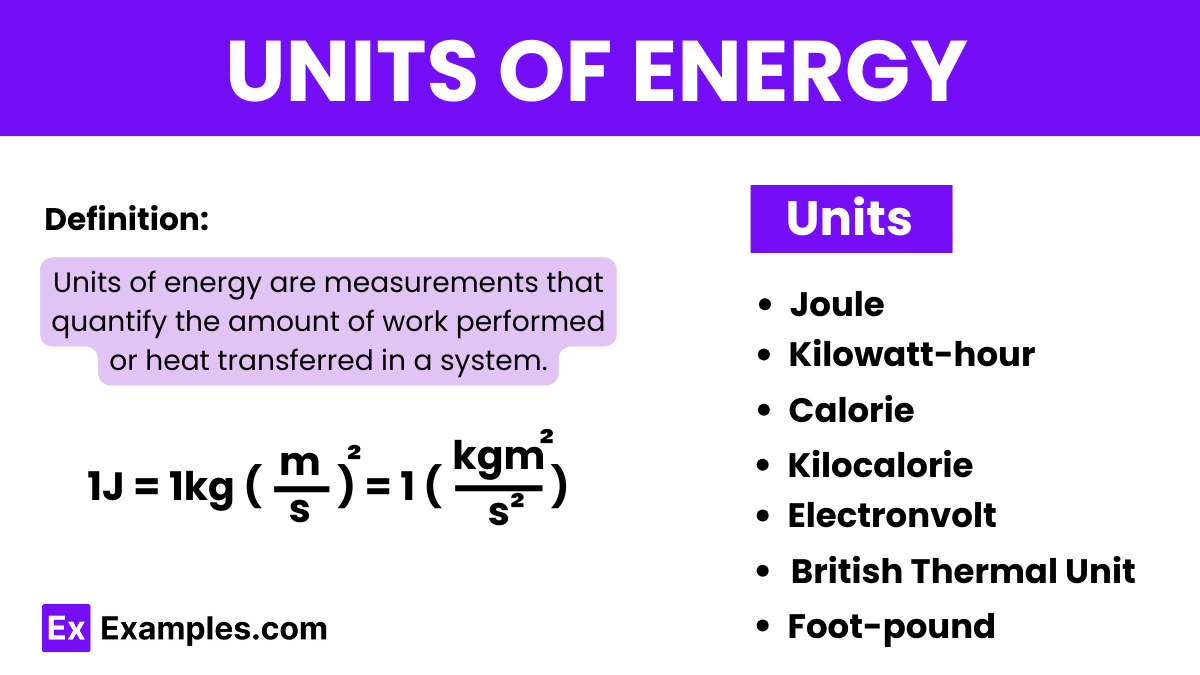

The Joule: Energy’s SI Standard

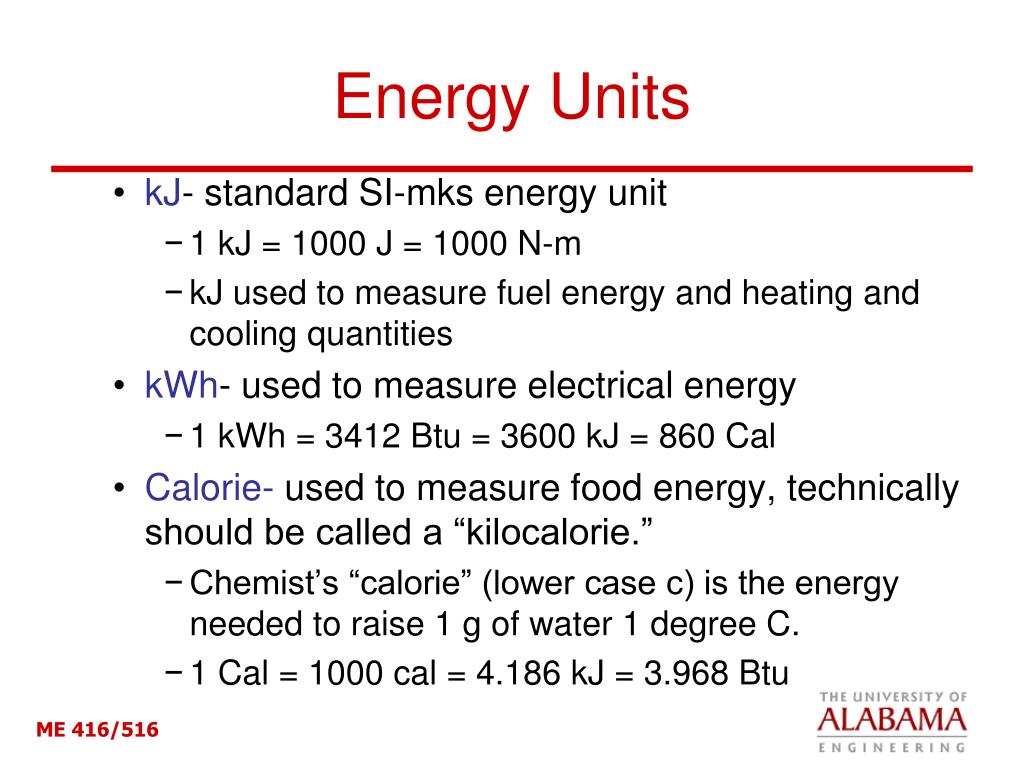

The joule (J) is the SI unit of energy, named after the English physicist James Prescott Joule. One joule is the work done when a force of one newton moves an object one meter in the direction of the force. While foundational in physics, the joule is rarely a convenient size for practical, everyday tech measurements, which leads to the use of its multiples and submultiples or of more specialized units. For instance, the energy consumed by a single CPU operation might be measured in picojoules, while the energy stored in a battery or billed by a utility runs to thousands or millions of joules and is usually quoted in watt-hours instead (1 Wh = 3,600 J). Though not commonly seen on consumer labels, the joule underpins calculations for everything from semiconductor physics to the efficiency of power converters. It serves as the bedrock upon which other, more immediately recognizable tech energy units are built.

Watts: Powering Performance and Consumption

Often confused with energy, the watt (W) is actually a unit of power, representing the rate at which energy is produced or consumed. One watt is defined as one joule per second (1 W = 1 J/s). This distinction is vital in technology. A high-performance graphics card, for example, might have a peak power consumption of several hundred watts, indicating how quickly it draws energy from the power supply. A device's wattage rating describes the rate at which it draws energy, usually at or near its maximum, rather than how much energy it uses in total. That figure is critical for sizing power supplies, designing cooling systems, and understanding the thermal output of electronic components. From the power output of a USB charger (e.g., 5W, 10W) to the thermal design power (TDP) of a CPU, watts are ubiquitous in describing the operational intensity of tech hardware.

Watt-hours: Quantifying Energy Storage and Usage

While watts describe the rate, watt-hours (Wh) measure the total amount of energy consumed or stored over a period. It is simply power multiplied by time. If a 100-watt light bulb runs for one hour, it consumes 100 Wh of energy. This unit, and its common multiples like kilowatt-hours (kWh) and megawatt-hours (MWh), is arguably the most practical for everyday tech applications involving duration. Watt-hours are fundamental for understanding battery capacities in laptops, smartphones, electric vehicles, and uninterruptible power supplies (UPS). A laptop battery might be rated at 60 Wh, telling you how much energy it can store, and consequently, how long it can power the device given its average power consumption. Kilowatt-hours are the units you typically see on your electricity bill, indicating your total energy consumption over a billing cycle.
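The relationship between the three units can be checked with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, the 100 W bulb and 60 Wh battery come from the text above, and the 15 W average laptop draw is an assumed figure chosen for the example.

```python
JOULES_PER_WATT_HOUR = 3600  # 1 Wh = 1 W sustained for 3600 s = 3600 J


def energy_wh(power_w: float, hours: float) -> float:
    """Energy in watt-hours: power (W) multiplied by time (h)."""
    return power_w * hours


def wh_to_joules(wh: float) -> float:
    """Convert watt-hours to joules."""
    return wh * JOULES_PER_WATT_HOUR


def runtime_hours(capacity_wh: float, average_power_w: float) -> float:
    """Estimated runtime of a battery at a constant average draw."""
    if average_power_w <= 0:
        raise ValueError("average power must be positive")
    return capacity_wh / average_power_w


bulb_wh = energy_wh(100, 1)            # the 100 W bulb running for one hour
print(bulb_wh, "Wh")                   # 100 Wh
print(wh_to_joules(bulb_wh), "J")      # 360000 J
print(runtime_hours(60, 15), "hours")  # 60 Wh battery, assumed 15 W draw
```

Real runtime will be shorter than this estimate suggests, since power draw varies with workload and no battery delivers its full rated capacity.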

Energy Measurement in Modern Computing and Devices

The choice and interpretation of energy units are critical for both the design and the user experience of modern technological devices, from tiny wearables to massive data centers.

Decoding Device Power Specifications

When you look at the technical specifications of a gadget, you’ll encounter various power-related figures. Understanding these helps in making informed choices and ensuring proper operation. For instance, a power adapter might list input voltage (e.g., 100-240V AC), output voltage (e.g., 5V DC), and output current (e.g., 2A). Multiplying the output voltage by the output current gives you the output power in watts (5V * 2A = 10W). This tells you the maximum rate at which the charger can supply energy to your device. For larger systems like desktop PCs, the power supply unit (PSU) is rated in watts (e.g., 750W), indicating its maximum sustained power output to all internal components. A PSU rated below the combined draw of those components can lead to instability or hardware damage, highlighting the practical importance of these wattage metrics.
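As a rough sketch of the two calculations above, the following computes a charger's output power and checks whether a PSU rating covers a set of components. The component wattages and the 20% headroom margin are assumptions made up for this example, not figures from any specification, and the function names are hypothetical.

```python
def output_power_w(voltage_v: float, current_a: float) -> float:
    """DC output power in watts: volts multiplied by amps."""
    return voltage_v * current_a


def psu_is_sufficient(psu_rating_w: float, component_draws_w: list[float],
                      headroom: float = 0.2) -> bool:
    """True if the PSU rating covers the summed draw plus a safety margin.

    The default 20% headroom is an assumed rule of thumb, not a standard.
    """
    required_w = sum(component_draws_w) * (1 + headroom)
    return required_w <= psu_rating_w


print(output_power_w(5, 2))  # the 5 V, 2 A charger from the text: 10 W

# Hypothetical peak draws in watts: CPU, graphics card, everything else.
components = [125, 320, 80]
print(psu_is_sufficient(750, components))
print(psu_is_sufficient(500, components))
```

With these assumed figures the 750 W unit passes the check and a 500 W unit does not.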

Battery Life and Capacity: More Than Just mAh

For portable devices, battery life is a paramount concern. While many small devices, especially smartphones, market their batteries in milliamp-hours (mAh), this can be misleading when comparing devices with different operating voltages. Milliamp-hours denote the capacity in terms of electric charge, not energy. A 4000 mAh battery at 3.7V (common for phones) stores approximately 14.8 Wh (4000 mAh * 3.7V = 14800 mWh = 14.8 Wh). A laptop battery with a lower mAh rating but a higher voltage (e.g., 2000 mAh at 11.1V) would actually store more energy (2000 mAh * 11.1V = 22200 mWh = 22.2 Wh). Therefore, comparing batteries by their watt-hour capacity gives a much more accurate picture of their energy storage capability and, consequently, their potential runtime across different devices and voltages.
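The conversion used in the two examples above is short enough to write as a helper. This is a minimal sketch with a hypothetical function name; it assumes the nominal voltage printed on the battery, which is an approximation because cell voltage falls as the battery discharges.

```python
def mah_to_wh(capacity_mah: float, nominal_voltage_v: float) -> float:
    """Convert a charge rating (mAh) to energy (Wh) at a nominal voltage."""
    return capacity_mah * nominal_voltage_v / 1000


phone_wh = mah_to_wh(4000, 3.7)    # phone battery from the text
laptop_wh = mah_to_wh(2000, 11.1)  # laptop battery from the text

print(f"phone:  {phone_wh:.1f} Wh")
print(f"laptop: {laptop_wh:.1f} Wh")
print("laptop stores more energy:", laptop_wh > phone_wh)
```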

The Energy Footprint of Data Centers

At the industrial scale, particularly within cloud computing and data centers, the quantities involved grow by orders of magnitude. These facilities consume enormous amounts of electricity, often measured in megawatt-hours (MWh) or even gigawatt-hours (GWh) annually. Understanding and optimizing this consumption is not just an economic imperative but also an environmental one. Metrics like Power Usage Effectiveness (PUE) have become standard for evaluating data center efficiency. PUE is calculated by dividing the total facility power by the IT equipment power, so it can never be lower than 1.0. A PUE of 1.0 means all power goes to IT equipment (ideal but practically impossible), while a PUE of 2.0 means that for every watt consumed by IT equipment, an additional watt is consumed by cooling, lighting, and other infrastructure. Constant measurement of energy at various points within the data center, from individual servers to cooling units, drives continuous improvement in PUE and overall sustainability.
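The PUE ratio described above can be sketched as follows. The function names and the facility figures are hypothetical values picked to reproduce the 2.0 case from the text and one more efficient case for contrast.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power / IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw


def overhead_kw(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power used by cooling, lighting, and other non-IT infrastructure."""
    return total_facility_kw - it_equipment_kw


# Hypothetical facility: 1000 kW of IT load, 2000 kW drawn in total.
print(pue(2000, 1000))          # 2.0: one extra watt of overhead per IT watt
print(overhead_kw(2000, 1000))  # 1000 kW of overhead

# The same IT load in a more efficient hypothetical facility.
print(pue(1200, 1000))          # 1.2
```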

Driving Green Tech: Renewable Energy and Efficiency

The escalating demand for computing power, coupled with growing environmental concerns, places energy measurement at the forefront of the green tech movement. Accurate metrics are essential for both harnessing renewable energy and maximizing the efficiency of existing technologies.

Measuring Renewable Energy Output

Renewable energy technologies like solar and wind power rely heavily on precise energy measurement. Solar panels are rated by their peak power output in watts (e.g., a 400W panel), indicating the maximum electrical power they can produce under ideal sunlight conditions. Wind turbines are rated in kilowatts (kW) or megawatts (MW), signifying their maximum power generation capacity. However, because these sources are intermittent, the more practical measurement for actual energy contribution to the grid is in kilowatt-hours (kWh) or megawatt-hours (MWh) over time. For instance, a solar farm’s annual output will be measured in GWh. Energy storage systems, crucial for grid stability with renewables, are likewise rated by their total capacity in kWh or MWh, indicating how much energy they can store and release. These measurements are vital for grid planning, investment decisions, and tracking progress toward renewable energy targets.
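One common way to bridge rated power and delivered energy is a capacity factor: the fraction of the theoretical maximum output that a plant actually produces over a period. The sketch below assumes that approach. The 50 MW farm size and the 25% capacity factor are invented for illustration and are not taken from any real installation; the function name is hypothetical.

```python
HOURS_PER_YEAR = 8760  # 365 days * 24 hours, ignoring leap years


def annual_energy_mwh(rated_power_mw: float, capacity_factor: float) -> float:
    """Estimated yearly energy output for a given rated power.

    capacity_factor is the assumed fraction (0 to 1) of rated output
    actually delivered once intermittency is averaged over the year.
    """
    if not 0 <= capacity_factor <= 1:
        raise ValueError("capacity factor must be between 0 and 1")
    return rated_power_mw * capacity_factor * HOURS_PER_YEAR


# Hypothetical 50 MW solar farm with an assumed 25% capacity factor.
energy_mwh = annual_energy_mwh(50, 0.25)
print(energy_mwh, "MWh")         # 109500.0 MWh
print(energy_mwh / 1000, "GWh")  # 109.5 GWh
```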

Energy Efficiency Standards and Innovation

Precise energy measurement is the bedrock upon which energy efficiency standards are built. Programs like Energy Star and regional energy labeling initiatives (e.g., EU energy labels) rely on standardized tests to quantify the energy consumption of devices like monitors, servers, and home appliances. These standards push manufacturers to innovate, driving the development of more efficient processors, low-power displays, intelligent power management firmware, and more efficient power delivery systems. For consumers, these labels provide clear, comparable data, allowing them to choose products that minimize their energy footprint and operating costs. Without consistent and accurate energy measurement protocols, such standards would be impossible to implement or enforce, stalling progress towards more sustainable technology.

The Future of Energy Measurement in Tech: Smart Grids and Beyond

As technology continues its rapid evolution, so too does the sophistication of how we measure, monitor, and manage energy. The future promises even more granular control and intelligent optimization.

Smart Homes and IoT: Granular Energy Monitoring

The proliferation of smart home devices and the Internet of Things (IoT) is ushering in an era of unprecedented granularity in energy monitoring. Smart plugs can measure the real-time power consumption of individual appliances in watts and track cumulative usage in watt-hours. Smart thermostats learn household patterns to optimize heating and cooling, reducing overall energy consumption. Home energy management systems aggregate data from smart meters and individual devices, providing residents with actionable insights into their energy usage patterns. This hyper-aware ecosystem allows for dynamic optimization, helping users save money and reduce their environmental impact by making informed decisions about when and how to use energy-intensive devices.
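To illustrate how a smart plug might turn periodic power readings into a cumulative watt-hour figure, the sketch below integrates timestamped samples with the trapezoidal rule. This is an assumed approach for illustration, not a description of how any particular product works; the function name and the sample data are made up.

```python
def cumulative_wh(samples: list[tuple[float, float]]) -> float:
    """Integrate (time_s, power_w) samples, sorted by time, into watt-hours.

    Uses the trapezoidal rule: each interval contributes the average of
    its two endpoint readings multiplied by the interval length.
    """
    total_joules = 0.0
    for (t0, p0), (t1, p1) in zip(samples, samples[1:]):
        total_joules += (p0 + p1) / 2 * (t1 - t0)
    return total_joules / 3600  # 3600 J per Wh


# A steady 100 W load sampled every 30 minutes for one hour.
steady = [(0, 100), (1800, 100), (3600, 100)]
print(cumulative_wh(steady))  # 100.0 Wh

# A load ramping linearly from 0 W to 200 W over one hour.
ramp = [(0, 0), (3600, 200)]
print(cumulative_wh(ramp))    # 100.0 Wh
```

Shorter sampling intervals give a closer estimate when the load changes quickly between readings.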

AI and Machine Learning for Energy Optimization

The vast streams of energy data collected by smart sensors and IoT devices are ripe for analysis by artificial intelligence (AI) and machine learning (ML) algorithms. In data centers, AI can dynamically adjust server loads, cooling systems, and power distribution based on real-time demand and predicted usage patterns, leading to significant energy savings. In smart cities, AI-powered systems can optimize public lighting, traffic signals, and waste management based on energy consumption data. AI can even predict equipment failures by detecting anomalous power draws, enabling predictive maintenance that prevents costly downtime and wasted energy. These intelligent systems transform raw energy measurements into proactive strategies for efficiency and sustainability.
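Detecting an anomalous power draw does not have to start with a trained model. The sketch below uses a plain z-score test as a deliberately simple stand-in for the ML techniques mentioned above: it flags readings that sit far from a baseline average. The function name, the threshold of 3 standard deviations, and all readings are assumptions for illustration.

```python
from statistics import mean, stdev


def anomalous_readings(baseline_w: list[float], new_readings_w: list[float],
                       threshold: float = 3.0) -> list[float]:
    """Return new readings more than `threshold` standard deviations
    away from the mean of the baseline readings."""
    mu = mean(baseline_w)
    sigma = stdev(baseline_w)  # sample standard deviation; needs >= 2 points
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return [r for r in new_readings_w if r != mu]
    return [r for r in new_readings_w if abs(r - mu) / sigma > threshold]


# Hypothetical server power readings in watts under normal operation.
baseline = [200, 205, 198, 202, 201, 199, 203, 200]
incoming = [202, 260, 199]

print(anomalous_readings(baseline, incoming))  # [260]
```

A production system would also need to account for legitimate load changes, such as scheduled jobs, which a fixed baseline like this one would misreport as faults.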

The Metaverse and Digital Sustainability

As concepts like the metaverse gain traction, the energy implications of immersive, persistent digital environments become a critical consideration. Rendering vast virtual worlds, supporting countless concurrent users, and powering complex AI interactions will demand colossal amounts of computing power, and by extension, energy. The development of the metaverse and other future digital frontiers will necessitate a renewed focus on energy efficiency across all layers of the tech stack, from fundamental algorithms to hardware architecture. Precise energy measurement will be paramount to understanding the true environmental cost of these new digital realms and to guiding the innovation required to make them sustainable.

Conclusion

Understanding which units are used to measure energy is far more than a technicality; it’s a foundational pillar supporting the entire edifice of modern technology. From the joule, the scientific standard, to the watts that define power, and the watt-hours that quantify usage and storage, these units provide the language through which we comprehend, design, and optimize our digital world.

In the tech realm, accurate energy measurement fuels innovation, drives the quest for greater efficiency, and enables the transition towards a greener, more sustainable future. Whether evaluating the battery life of a smartphone, designing a hyper-efficient data center, or harnessing the power of renewable sources, these metrics empower engineers, inform consumers, and guide industry leaders. As technology continues to advance into ever more complex and energy-intensive frontiers, the precise understanding and diligent application of energy units will remain absolutely critical to shaping a future that is both technologically brilliant and environmentally responsible.